Wearable Sensors for Social Behavior Analysis and Cognitive Decline: From Biomarker Discovery to Clinical Validation

This article synthesizes current research and methodological approaches for using wearable sensors to quantify social behavior and motor patterns as digital biomarkers for cognitive decline.

Abstract

This article synthesizes current research and methodological approaches for using wearable sensors to quantify social behavior and motor patterns as digital biomarkers for cognitive decline. Targeting researchers, scientists, and drug development professionals, it explores the foundational science linking behavior to neurodegeneration, details data collection and analysis methodologies, addresses key technical and adoption challenges, and reviews validation frameworks. By integrating evidence from studies on Parkinson's disease and aging, this review aims to provide a comprehensive roadmap for developing clinically valid tools that can enable early detection, objective monitoring, and more efficient evaluation of therapeutic interventions in cognitive health.

The Neurobehavioral Bridge: Linking Social and Motor Patterns to Cognitive Health

Application Notes

Quantitative Evidence from Recent Studies

Wearable sensors are emerging as powerful tools for quantifying subtle motor and cognitive changes that often precede overt clinical symptoms of cognitive decline. The following tables summarize key quantitative findings from recent research.

Table 1: Wearable Sensor Gait Parameters Predictive of Cognitive Impairment in Parkinson's Disease [1]

| Gait Parameter | Association with Cognitive Impairment | Notes |

|---|---|---|

| Step Length | Shorter step length associated with impairment | One of the most influential predictors in the model |

| Walk Speed | Slower speed associated with impairment | - |

| Stride Time | Increased stride time variability associated with impairment | - |

| Peak Arm Angular Velocity | Reduced velocity associated with impairment | Measured during swing phase |

| Peak Angular Velocity (Steering) | Reduced velocity during turning associated with impairment | - |

Table 2: Cognitive and Physical Outcomes from Interactive Cognitive-Motor Training (ICMT) in Older Adults [2]

| Outcome Measure | ICMT Group (Change) | Control Group (Change) | Significance |

|---|---|---|---|

| Global Cognitive Function | +1.94 points (8.60% increase) | Not specified | p < 0.05 |

| 6-Minute Walk Distance | +18.0 meters (4.65% farther) | Not specified | p < 0.05 |

| Balance (Postural Sway) | Significant improvement | Not specified | p < 0.05 |

| Grip Strength | Significant improvement | Not specified | p < 0.05 |

Table 3: Scalable Biomarkers for Early Detection of Cognitive Decline [3]

| Biomarker | Biological Fluid | Association with Cognitive Decline | Predictive Accuracy (AROC) |

|---|---|---|---|

| Aβ42/40 Ratio | Blood Plasma | Reduced ratio in Alzheimer's Disease continuum | Up to 0.90 (when combined with age & APOE-ε4) |

| p-tau181 | Blood Plasma | Increased levels predict future decline in CU individuals | 0.94 - 0.98 |

| p-tau231 | Blood Plasma | Increases early in the disease process, before Aβ PET positivity | - |

| p-tau217 | Blood Plasma | Associated with the spread of neocortical tangles in AD | - |

Experimental Protocols

Protocol 1: Gait Analysis for Predicting Cognitive Impairment in Parkinson's Disease

This protocol is adapted from a cross-sectional study investigating the link between gait parameters and cognitive impairment in PD patients [1].

Equipment and Setup

- Wearable Sensor System: MATRIX wearable motion and gait analysis system (or equivalent IMU-based system) with NMPA/FDA/CE certifications [1].

- Sensor Configuration: Ten Inertial Measurement Unit (IMU) sensors.

- Sensor Placement:

- Feet: Dorsum of each foot (metatarsal region).

- Thighs: Bilaterally, ~2 cm above the knees.

- Lower Legs: Bilaterally, ~2 cm above the ankle joints.

- Hands: Dorsal side of each wrist.

- Torso: One sensor on the sternum (chest) and one at the fifth lumbar vertebra (lumbar).

- Data Acquisition: Sample at 100 Hz. Synchronize all sensors to a centralized clock and stream the data over Bluetooth, keeping inter-sensor synchronization accuracy within ±2 ms [1].

Participant Preparation and Data Collection

- Participant Onboarding: Recruit patients diagnosed with PD according to MDS criteria and assess them during their medication "on" phase. Obtain informed consent.

- Sensor Attachment: Securely fasten all ten sensors using adjustable straps as described in the setup.

- Walking Task: Instruct the participant to complete a straight-line walking trial on a flat, unobstructed surface. The trial should consist of an out-and-back course for a total distance of 16 meters (8 meters in one direction) at their self-selected comfortable speed.

- Data Recording: Initiate and monitor data collection via the central system. Raw motion signals (accelerometer and gyroscope data) are transmitted in real-time via Bluetooth.

Data Processing and Analysis

- Data Extraction: Process raw sensor data to extract spatiotemporal gait parameters, including but not limited to: Step Length, Walk Speed, Stride Time, Peak Arm Angular Velocity, and Peak Angular Velocity during steering [1].

- Cognitive Assessment: Administer standard cognitive assessments (e.g., MoCA, MMSE) to classify patients as cognitively impaired or unimpaired based on defined cut-offs [1].

- Statistical Modeling:

- Perform baseline comparisons and logistic regression analyses to identify independent risk factors.

- Train machine learning models (e.g., Logistic Regression, Random Forest) using the gait parameters and clinical variables to predict cognitive impairment status.

- Evaluate model performance using AUC-ROC curves, decision curve analysis, and calibration plots.

- Apply SHAP analysis to interpret the model and identify the most influential predictors.
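The AUC-ROC evaluation step listed above can be illustrated with a small, dependency-free sketch. It computes AUC as the probability that a randomly chosen impaired participant receives a higher predicted score than a randomly chosen unimpaired one. The function name `roc_auc` and the six example scores are hypothetical and are not taken from the cited study [1].

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties between a positive and a negative score count as half a win.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative label")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical predicted probabilities of cognitive impairment (1 = impaired).
labels = [1, 1, 1, 0, 0, 0]
scores = [0.9, 0.7, 0.4, 0.5, 0.3, 0.2]
auc = roc_auc(labels, scores)
print(f"AUC = {auc:.3f}")
```

The pairwise form is quadratic in sample size, which is acceptable for cohort-sized data; a library routine would normally be preferred in a full pipeline.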

Protocol 2: Interactive Cognitive-Motor Training (ICMT) for Older Adults

This protocol details a single-blind, randomized controlled trial designed to improve cognitive and physical function in community-dwelling older adults using a wearable sensor-based system [2].

Equipment and Setup

- Core Device: A custom wearable sensor-based ICMT device developed using Arduino and RFID technology [2].

- Components: The system includes a wearable sensor unit and RFID tags/reader for interactive tasks.

- Cognitive Tasks: The system is programmed with five core cognitive tasks:

- Number Sequence

- Number-Word Sequence

- Card Matching

- Number Memorization

- Route-Finding

Participant Preparation and Intervention

- Screening: Recruit community-dwelling adults aged ≥65 with an MMSE score ≥18 and no diagnosis of dementia or mobility-impairing conditions. Obtain informed consent [2].

- Randomization: Randomly assign participants to the ICMT group or an active control group (e.g., seated tablet-based cognitive training).

- Intervention Sessions (ICMT Group):

- Frequency/Duration: Conduct sessions twice a week for 6 weeks, 50 minutes per session.

- Aerobic Warm-up: Begin with 5 minutes of stepping exercises (forward, backward, left, right) to music at 126 bpm.

- Device Setup: Participants attach the wearable device to their dominant upper extremity.

- Task Execution: Participants perform the five cognitive tasks while engaging in free physical movements. These include transitions from sitting to standing, walking, pivot turns, and reaching with their arms. The cognitive task display is placed at least 3 meters away from the chair to encourage movement.

Outcome Measures and Data Analysis

- Pre- and Post-Testing: Assess the following domains before and after the 6-week intervention:

- Cognitive Function: Using standardized cognitive batteries.

- Physical Function: Balance (e.g., postural sway), muscle strength (e.g., grip strength), and endurance (6-minute walk test).

- Prefrontal Cortex Activity: Hemodynamic response using functional near-infrared spectroscopy (fNIRS).

- Instrumental Activities of Daily Living (IADLs).

- Data Analysis: Use repeated-measures ANOVA or similar statistical methods to compare within-group and between-group changes in outcome measures.

Visualization of Workflows

Gait Analysis Protocol for Cognitive Impairment Prediction

Interactive Cognitive-Motor Training Study Design

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for Wearable Sensor-Based Cognitive Decline Research

| Item / Solution | Specification / Example | Primary Function in Research |

|---|---|---|

| Multi-Sensor IMU System | MATRIX System (Gyenno Science); 10 sensors, 100 Hz sampling [1] | Captures high-fidelity kinematic data (acceleration, angular velocity) for quantitative gait and movement analysis. |

| Validated Cognitive Assessments | MoCA (Montreal Cognitive Assessment), MMSE (Mini-Mental State Examination) [1] [2] | Provides standardized, clinical benchmarks for classifying cognitive status and validating digital biomarkers. |

| Custom ICMT Platform | Arduino-based wearable with RFID tasks [2] | Enables integrated cognitive-motor training and assessment, facilitating dual-task paradigm studies. |

| Data Processing Pipeline | Custom software for signal processing & feature extraction (e.g., in Python/MATLAB) [1] | Converts raw sensor data into analyzable spatiotemporal gait parameters and movement features. |

| Machine Learning Libraries | Scikit-learn, SHAP (SHapley Additive exPlanations) [1] | Used to build and interpret predictive models that identify individuals at risk of cognitive decline. |

| Blood-Based Biomarker Assays | SIMOA, IP-MS for Aβ42/40, p-tau181/217/231 [3] | Provides pathophysiological correlates of Alzheimer's disease for validating digital biomarkers. |

The early detection of neurodegenerative diseases is a critical public health imperative, given the projected exponential increase in global dementia prevalence [4]. The current diagnostic paradigm often relies on invasive techniques or clinical assessments administered only after significant cognitive decline has occurred. However, the progression of neurodegenerative diseases like Alzheimer's disease (AD) and Parkinson's disease (PD) is characterized by extended preclinical and prodromal phases where subtle behavioral and motor changes manifest long before traditional diagnosis [5]. This application note synthesizes current research on two promising early behavioral indicators—social withdrawal and gait changes—and details how wearable sensor technology can capture these digital biomarkers for early detection and monitoring within real-world environments.

Quantitative Evidence: Linking Gait, Social Behavior, and Neurodegeneration

Table 1: Key Gait Digital Mobility Outcomes (DMOs) in Neurodegenerative Disease Detection

| Digital Mobility Outcome (DMO) | Association with Neurodegeneration | Detection Capability | Relevant Condition(s) |

|---|---|---|---|

| Walking Speed | Most common DMO; significantly different in real-world vs. supervised settings [6]. | Differentiates patients from controls [6]. | PD, general neurodegeneration |

| Step Length | Reduced in real-world environments compared to clinical settings [6]. | - | PD |

| Stride Time | Commonly measured gait characteristic; shows variability in neurodegenerative populations [6]. | - | PD |

| Real-world vs. Supervised Gait | Consistent pattern of lower values (e.g., slower speeds, reduced step length) in real-world conditions [6]. | - | PD, general neurodegeneration |

| Gait, Balance, & Fall Risk | - | Wearables show good diagnostic accuracy for assessing these composite outcomes [7]. | PD, Stroke, AD, MS |

Table 2: Wearable Device Acceptability and Feasibility for Continuous Monitoring

| Wearable Form Factor | Key Acceptability Findings | Primary User Concerns | Target User Population |

|---|---|---|---|

| Wrist-worn (Smartwatch) | Preferred due to familiarity and minimal impact on appearance; higher long-term usage [4]. | Accuracy for medical purposes; data disclosure [4]. | Broad, including SCD, MCI [4]. |

| Head-worn (EEG Headband) | Less preferred due to comfort and impact on appearance [4]. | Wearability, aesthetics [4]. | Specialized sleep monitoring [4]. |

| Smartphone Apps (Passive) | Acceptable for continuous, background data collection on fine motor movements [4]. | Lack of transparency on data collected [4]. | Broad population [4]. |

| Smartphone Apps (Active) | Cognitive testing games can cause frustration and increased awareness of impairment in MCI [4]. | Digital anxiety, cognitive burden [4]. | Populations with minimal cognitive impairment [4]. |

Experimental Protocols for Digital Biomarker Capture

Protocol: Multi-Day Real-World Gait Assessment

Objective: To capture ecologically valid digital mobility outcomes (DMOs) from individuals in their everyday environment to identify early signs of motor impairment.

Materials:

- One or more inertial measurement unit (IMU) sensors

- Adhesive pads or hypoallergenic bandages for sensor attachment

- Data docking station or charging equipment

- Secure cloud storage and processing platform

Procedure:

- Sensor Configuration: Initialize and calibrate the wearable sensors according to manufacturer specifications.

- Sensor Placement: Attach a single sensor at the lower back (L5 vertebra) using a medical-grade adhesive pad. This is the most common placement for gait analysis [6]. For more detailed analysis, an alternative configuration places sensors on both feet in addition to the lower back.

- Assessment Duration: Instruct the participant to wear the device during all waking hours for a continuous 7-day period to capture weekday and weekend activity variations [6].

- Data Collection: Sensors passively collect tri-axial accelerometer, gyroscope, and magnetometer data at a sampling frequency ≥ 100 Hz.

- Data Processing:

- Bout Detection: Identify "Walking Bouts" (WBs) from the continuous data stream. A WB is typically defined as a sequence containing at least two consecutive strides from both feet, bookended by non-walking periods [6].

- DMO Extraction: From each valid WB, extract key DMOs such as walking speed, step length, and stride time.

- Data Aggregation: Generate summary statistics (e.g., mean, variance, distribution) for each DMO across all WBs over the assessment period.
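The bout detection and aggregation steps above can be sketched as follows, assuming step events have already been detected and timestamped. The gap threshold (`max_gap_s`), the minimum step count (`min_steps`), and the example timestamps are illustrative assumptions; the protocol itself only specifies that a walking bout contains at least two consecutive strides from both feet [6].

```python
from statistics import mean


def detect_walking_bouts(step_times, max_gap_s=3.0, min_steps=4):
    """Group step timestamps (seconds) into walking bouts.

    A new bout starts whenever the gap between consecutive steps exceeds
    max_gap_s; bouts with fewer than min_steps steps are discarded.
    Both thresholds are assumptions made for this sketch.
    """
    bouts, current = [], []
    for t in sorted(step_times):
        if current and t - current[-1] > max_gap_s:
            if len(current) >= min_steps:
                bouts.append(current)
            current = []
        current.append(t)
    if len(current) >= min_steps:
        bouts.append(current)
    return bouts


def mean_stride_time(bout):
    """Stride time is the interval between every second step (same foot)."""
    strides = [bout[i + 2] - bout[i] for i in range(len(bout) - 2)]
    return mean(strides)


# Hypothetical step timestamps: two valid bouts and one two-step fragment.
step_times = [0.0, 0.5, 1.0, 1.5, 2.0, 10.0, 10.6, 30.0, 30.5, 31.0, 31.5]
bouts = detect_walking_bouts(step_times)
per_bout = [mean_stride_time(b) for b in bouts]
summary = {"n_bouts": len(bouts), "mean_stride_time_s": mean(per_bout)}
print(summary)
```

Aggregating per bout first and then across bouts keeps short and long bouts equally weighted; weighting by bout duration is an equally defensible choice and should be reported either way.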

Protocol: Remote Assessment of Cognition and Social Engagement

Objective: To classify mild cognitive impairment (MCI) and detect patterns of social withdrawal through unsupervised remote interaction.

Materials:

- Consumer smartphone and/or smartwatch (e.g., iPhone, Apple Watch)

- Custom research application with gamified cognitive assessments and passive data collection capabilities [8]

Procedure:

- Enrollment & Consent: Obtain electronic informed consent. Onboard participants remotely, providing clear instructions and support for device setup [4] [8].

- Passive Data Collection:

- Smartphone: Deploy a passive app that runs in the background to collect metrics on fine motor movements, reaction times, keystroke dynamics (with the typed characters redacted), and device usage patterns (e.g., screen-on time, app usage frequency) as a proxy for social and cognitive engagement [4] [8].

- Smartwatch: Continuously collect data on activity levels, heart rate, and sleep [4] [8].

- Active Cognitive Assessment: The research application should prompt participants to complete brief, gamified cognitive tasks randomly scheduled throughout the day. These should assess domains such as episodic memory, executive function, and language [4] [8]. Each session should last 5-10 minutes.

- Self-Reported Scales: Integrate short digital surveys on mood, sleep, and subjective cognitive function.

- Data Integration and Analysis: Transmit encrypted data to a secure analysis platform. Apply machine learning models to the multimodal data stream (passive digital phenotyping, active cognitive tasks, self-report) to classify MCI and identify longitudinal trends indicating social withdrawal or cognitive decline [8].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Analytical Tools for Digital Biomarker Research

| Category | Item | Function/Application |

|---|---|---|

| Hardware | Inertial Measurement Unit (IMU) Sensor | Core component of wearables for capturing kinematic gait data (acceleration, angular velocity) [7] [6]. |

| Hardware | Consumer Smartwatch & Smartphone | Ubiquitous platforms for deploying active tests and passive sensing in real-world settings [8]. |

| Software & Data | protViz R Package | A specialized tool for visualizing and analyzing mass spectrometry data in proteomics, useful for correlating digital findings with fluid biomarkers [9]. |

| Software & Data | Digital Biomarker Classification Models | Machine learning algorithms to classify cognitive status (e.g., MCI) from multimodal digital data streams [8]. |

| Biomarker Assays | Plasma/CSF Proteomic Panels | Multiplexed assays (e.g., SomaScan, Olink) to measure proteins like Aβ42, p-tau, NFL, and α-synuclein for biological validation of digital findings [10] [5]. |

Visualizing the Integrated Assessment Workflow

Diagram 1: Integrated digital assessment workflow for detecting early signs of neurodegeneration, combining passive and active data collection in real-world settings.

Diagram 2: Logical relationship showing how sensor data is transformed into an integrated risk profile for early neurodegeneration through the analysis of key behavioral red flags.

Wearable sensors have emerged as powerful tools for continuous, objective data collection in real-world settings, offering significant potential for research on social behavior and cognitive decline. These devices, encompassing technologies from Inertial Measurement Units (IMUs) to Photoplethysmography (PPG), enable researchers to move beyond traditional laboratory assessments and subjective reporting to capture fine-grained behavioral and physiological data in ecological environments [11] [12]. For researchers and drug development professionals, this technological evolution provides unprecedented opportunities to quantify novel digital biomarkers, monitor intervention efficacy, and understand the complex relationships between behavior, physiology, and cognitive health across the lifespan.

The integration of wearable sensor data is particularly valuable for studying aging populations, where early detection of cognitive decline and social isolation can enable timely interventions. Recent research demonstrates that sensor-derived metrics can predict cognitive performance [13] and quantify social behavior patterns [14] [15] with clinical relevance. This application note provides a comprehensive framework for utilizing wearable sensor technologies in research investigating the relationships between social behavior and cognitive decline, including standardized protocols for data collection, processing, and analysis.

Wearable Sensor Technologies and Their Applications

Core Sensor Types and Measurable Parameters

Wearable sensors encompass multiple technological approaches for monitoring physiological, kinematic, biochemical, and behavioral parameters. Table 1 summarizes the primary sensor types, their applications, and key considerations for research on social behavior and cognitive decline.

Table 1: Wearable Sensor Technologies for Social Behavior and Cognitive Decline Research

| Sensor Type | Measured Parameters | Research Applications | Limitations & Considerations |

|---|---|---|---|

| Inertial Measurement Units (IMUs) | Acceleration, angular velocity, orientation [11] | Physical activity quantification, gait analysis, movement patterns [16] | Soft-tissue artifacts, integration drift, placement sensitivity [11] |

| Photoplethysmography (PPG) | Heart rate, heart rate variability, blood volume changes [17] | Stress response, autonomic nervous system activity, cardiovascular function [11] [18] | Motion artifacts, skin tone/perfusion effects, signal quality variability [11] [17] |

| Electrophysiological Sensors | Electrocardiogram (ECG), electromyogram (EMG), electrodermal activity (EDA) [11] [12] | Cardiac function, muscle activity, sympathetic nervous system arousal [11] | Contact quality dependency, environmental interference |

| Biochemical Sensors | Lactate, cortisol, glucose, electrolytes in sweat or interstitial fluid [11] | Metabolic stress, energy homeostasis, physiological stress response [11] | Calibration stability, sweat-blood correlation variability, limited analyte selection [11] |

| Acoustic Sensors | Conversation time, ambient sound analysis [14] | Social interaction quantification, communication patterns [14] | Privacy considerations, content/text distinction needed |

Sensor Selection Framework for Research Objectives

The selection of appropriate wearable sensors should align with specific research questions in social behavior and cognitive decline. Table 2 provides a decision matrix linking common research objectives to recommended sensor configurations and validation approaches.

Table 2: Sensor Selection Guide for Research Objectives

| Research Objective | Primary Sensor(s) | Complementary Sensor(s) | Validation Approach |

|---|---|---|---|

| Social interaction quantification | Acoustic sensors (conversation time) [14] | IMUs (proximity/movement), PPG (stress response) | Self-report questionnaires, behavioral coding [14] |

| Cognitive function assessment | IMUs (activity patterns, gait) [13] | PPG (heart rate variability), sleep parameters | Standardized cognitive tests (DSST, CERAD-WL, AFT) [13] |

| Early neurodegenerative disease detection | IMUs (tremor, bradykinesia) [16] | PPG (autonomic function), dynamic monitoring | Clinical diagnosis (MDS-PD criteria), specialist assessment [16] |

| Intervention response monitoring | PPG (stress physiology), IMUs (activity) [11] | Biochemical sensors (cortisol, lactate), self-report measures | Pre-post intervention assessment, control group comparison |

Experimental Protocols and Methodologies

Protocol for Measuring Social Interaction and Cognitive Function in Older Adults

Background: This protocol outlines procedures for investigating relationships between social behavior (measured via wearable sensors) and cognitive function in older adult populations, based on validated methodologies [14] [15].

Materials:

- Wrist-worn wearable sensors with microphone (e.g., Silmee W20 type device) [14]

- Validated cognitive assessment tools (e.g., MMSE, DSST, CERAD-WL, AFT) [13]

- Social behavior questionnaires (community engagement, outing frequency, social contact) [14]

- Data processing infrastructure for sensor data analysis

Procedure:

- Participant Screening and Recruitment:

- Recruit adults aged ≥60 years without dementia diagnosis [14]

- Obtain informed consent following institutional ethics approval

- Collect baseline demographics (age, sex, education, medical history)

- Sensor Deployment and Data Collection:

- Instruct participants to wear wristband sensor continuously except when bathing

- Minimum monitoring period: 7-14 consecutive days quarterly to account for seasonal variations [14]

- Define a valid monitoring period as one with ≥4 valid sensing days, and require at least two valid periods per year

- Process acoustic data to calculate conversation time (defined as minutes with ≥4 speech frames/minute) [14]

- Validate sensor-measured conversation time against video observation (target correlation: r ≥ 0.85) [14]

- Cognitive and Social Assessment:

- Administer cognitive tests (MMSE for global function, DSST for processing speed/working memory, CERAD-WL for memory, AFT for verbal fluency) [13]

- Collect self-reported social behavior data:

- Frequency of community activities (continuous scale)

- Outing frequency (4-point scale: none to ≥5 days/week)

- Lesson/class participation (5-point scale: none to ≥5 days/week)

- Contact with friends/relatives (5-point scale: none to ≥5 days/week) [14]

- Data Analysis:

- Calculate mean daily conversation time across monitoring period

- Perform correlation analysis between conversation time and social behavior metrics

- Conduct multivariate regression adjusting for age, sex, education

- For longitudinal designs, employ cross-lagged panel models to examine temporal relationships [15]
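As an illustration of the conversation-time and correlation steps in this procedure, the sketch below counts a minute as conversation when it contains at least four speech frames, averages the daily totals, and computes Pearson's r between sensor-derived and observed values. The function names and all example numbers are hypothetical; only the four-frames-per-minute rule comes from the protocol [14].

```python
from math import sqrt


def conversation_minutes(frames_per_minute, min_frames=4):
    """Count minutes whose speech-frame count meets the threshold."""
    return sum(1 for n in frames_per_minute if n >= min_frames)


def mean_daily_conversation(days):
    """Average conversation minutes across monitored days."""
    totals = [conversation_minutes(day) for day in days]
    return sum(totals) / len(totals)


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length series."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


# Hypothetical per-minute speech-frame counts for two short recordings.
days = [[0, 5, 4, 3, 10], [4, 0, 0]]
mean_minutes = mean_daily_conversation(days)

# Hypothetical validation data: sensor vs. video-coded conversation minutes.
sensor = [12, 30, 45, 8]
video = [10, 33, 44, 9]
r = pearson_r(sensor, video)
print(mean_minutes, round(r, 3))
```

In practice the validation correlation would be computed over many more sessions than shown here, and compared against the r ≥ 0.85 target named in the protocol.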

Quality Control:

- Implement regular sensor synchronization and data offloading

- Monitor participant compliance through wear time validation

- Train assessors on standardized cognitive test administration

- Apply data quality filters for acoustic signals (remove non-speech sounds)

Protocol for Cognitive Status Prediction Using Wearable Sensor Data

Background: This protocol describes methodology for developing machine learning models to differentiate cognitive status using wearable-derived features, based on validated approaches [13].

Materials:

- Hip-worn accelerometers (e.g., ActiGraph GT3X+)

- Light sensors with calibrated lux measurement

- Cognitive test materials (DSST, CERAD-WL, AFT)

- Machine learning infrastructure (Python/R with relevant libraries)

Procedure:

- Data Collection:

- Collect ≥7 days of accelerometer data with waking-hour wear requirement

- Administer cognitive tests within temporal proximity to sensor monitoring

- Define poor cognition as scores in bottom quartile on each cognitive test [13]

- Feature Extraction:

- Activity parameters: sedentary time, light/moderate/vigorous activity, activity variability

- Sleep parameters: sleep efficiency, sleep duration, sleep efficiency variability

- Circadian rhythm metrics: relative amplitude, intradaily variability

- Light exposure: mean lux, timing of light exposure

- Model Development:

- Employ machine learning algorithms (CatBoost, XGBoost, Random Forest)

- Include core demographic features (age, education) in all models

- Perform repeated cross-validation (e.g., 100 repeats of 5-fold CV)

- Evaluate performance using AUC, AUPRC, sensitivity, specificity

- Model Interpretation:

- Apply SHapley Additive exPlanations (SHAP) for feature importance

- Identify strongest predictors across cognitive domains

- Validate model robustness across demographic subgroups
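The repeated cross-validation step in this procedure can be sketched with a plain index generator. This is a minimal, unstratified version under stated assumptions: the name `repeated_kfold`, the seed handling, and the sample size are illustrative, and the cited study [13] may have used a different splitting scheme.

```python
import random


def repeated_kfold(n_samples, n_splits=5, n_repeats=100, seed=0):
    """Yield (train_indices, test_indices) for repeated k-fold CV.

    Each repeat reshuffles the indices, then deals them into n_splits
    folds so that every sample is tested exactly once per repeat.
    """
    rng = random.Random(seed)
    for _ in range(n_repeats):
        idx = list(range(n_samples))
        rng.shuffle(idx)
        for fold in range(n_splits):
            test = idx[fold::n_splits]
            held_out = set(test)
            train = [i for i in idx if i not in held_out]
            yield train, test


# Hypothetical cohort of 20 participants, 3 repeats of 5-fold CV.
splits = list(repeated_kfold(20, n_splits=5, n_repeats=3, seed=1))
print(len(splits))
```

Because poor cognition is defined as the bottom quartile, the classes are imbalanced, so a stratified splitter (for example scikit-learn's `RepeatedStratifiedKFold`) is likely a better fit for real analyses than this unstratified sketch.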

Expected Outcomes:

- Highest predictive performance for processing speed/working memory (DSST; AUC ≥0.82)

- Moderate performance for memory (CERAD-WL; AUC ≥0.72)

- Lower performance for verbal fluency (AFT; AUC ≥0.68) [13]
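The circadian rhythm metrics named under Feature Extraction have standard nonparametric definitions, sketched below. Relative amplitude is (M10 - L5) / (M10 + L5), where M10 and L5 are the mean activity of the most active 10 consecutive hours and the least active 5 consecutive hours of the 24-hour profile; intradaily variability compares hour-to-hour changes with overall variance. The hourly activity profile is hypothetical, and whether the cited study [13] used exactly these window definitions is an assumption.

```python
def window_means(profile, width):
    """Mean of every circular window of `width` consecutive hours."""
    n = len(profile)
    return [
        sum(profile[(start + k) % n] for k in range(width)) / width
        for start in range(n)
    ]


def relative_amplitude(profile_24h):
    """(M10 - L5) / (M10 + L5) on a 24-value average daily profile."""
    m10 = max(window_means(profile_24h, 10))
    l5 = min(window_means(profile_24h, 5))
    if m10 + l5 == 0:
        raise ValueError("profile contains no activity")
    return (m10 - l5) / (m10 + l5)


def intradaily_variability(hourly):
    """Ratio of mean squared successive difference to overall variance."""
    n = len(hourly)
    mu = sum(hourly) / n
    num = n * sum((hourly[i] - hourly[i - 1]) ** 2 for i in range(1, n))
    den = (n - 1) * sum((x - mu) ** 2 for x in hourly)
    return num / den


# Hypothetical profile: inactive overnight, uniformly active for 12 hours.
profile = [0] * 8 + [100] * 12 + [0] * 4
ra = relative_amplitude(profile)
iv = intradaily_variability(profile)
print(ra, round(iv, 3))
```

Higher relative amplitude indicates a stronger rest-activity contrast, while higher intradaily variability indicates a more fragmented rhythm.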

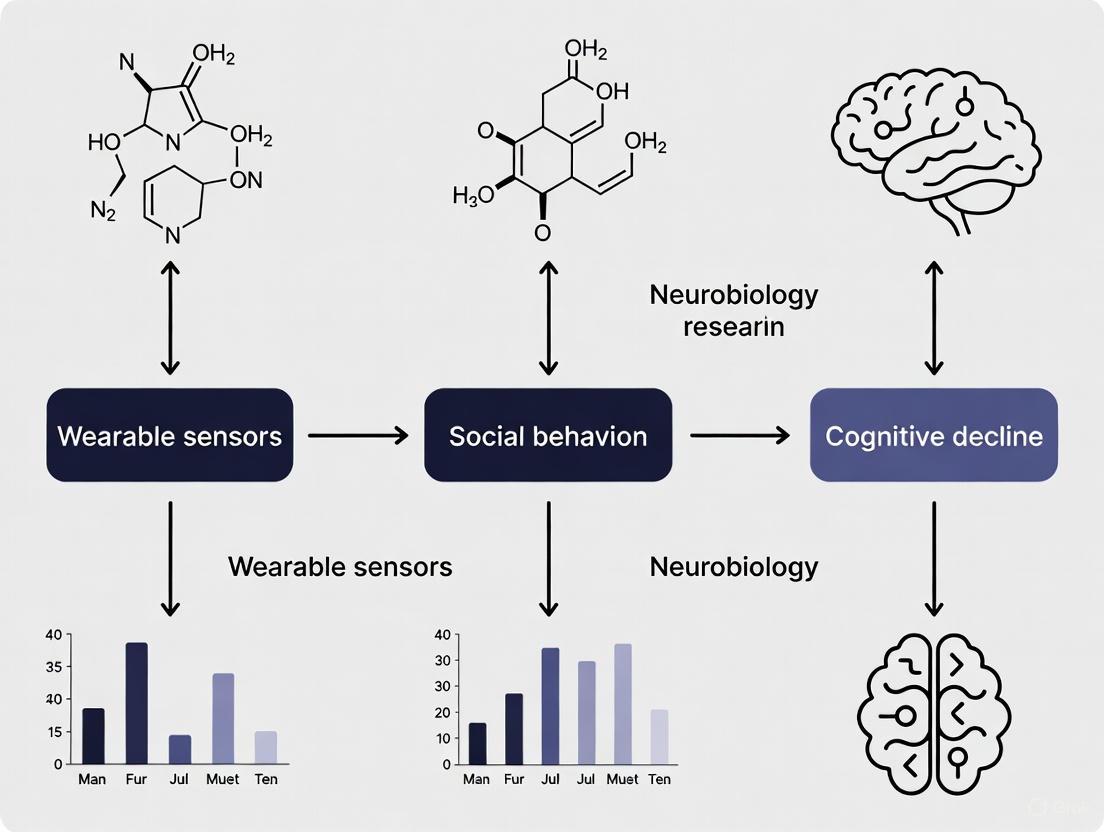

Signaling Pathways and Workflow Diagrams

Diagram 1: Comprehensive Workflow for Wearable Sensor Research in Social Behavior and Cognitive Decline

The Researcher's Toolkit

Essential Research Reagents and Solutions

Table 3: Essential Research Materials and Tools for Wearable Sensor Studies

| Category | Specific Items | Purpose/Function | Example Products/Protocols |

|---|---|---|---|

| Wearable Sensor Platforms | Research-grade accelerometers, IMU sensors, PPG devices | High-frequency raw data capture, research SDK access | ActiGraph, Axivity, Empatica E4, Fitbit Charge 5 [19] [13] |

| Sensor Calibration Tools | Shake tables, optical heart rate simulators, standardized motion tasks | Device validation, measurement accuracy assessment | NIST-traceable calibration equipment, six-minute walk test protocol |

| Data Processing Software | Signal processing toolboxes, machine learning libraries, statistical packages | Feature extraction, model development, data analysis | MATLAB Toolboxes, Python (scikit-learn, TensorFlow), R packages |

| Cognitive Assessment Tools | Standardized cognitive tests, administration guides | Ground truth cognitive status assessment | DSST, CERAD-WL, AFT, MMSE [14] [13] |

| Data Quality Tools | Signal quality indices, compliance algorithms, wear time validation | Data quality assurance, outlier detection | Choi algorithm for wear time, PPG SQI algorithms [11] [17] |

Data Analysis and Interpretation Framework

Quantitative Data Standards and Performance Metrics

For research utilizing PPG sensors, standardized performance evaluation is essential. Table 4 outlines recommended metrics for assessing PPG-based algorithm performance based on recent consensus recommendations [17].

Table 4: Standardized Performance Metrics for PPG-Based Algorithms

| Metric Category | Specific Metrics | Calculation Method | Acceptance Thresholds |

|---|---|---|---|

| Absolute Error Metrics | Mean Absolute Error (MAE), Root Mean Square Error (RMSE) | MAE: mean of the absolute differences between measured and reference values; RMSE: square root of the mean squared difference | Device- and parameter-specific; should be compared to clinical standards |

| Relative Error Metrics | Mean Absolute Percentage Error (MAPE), Coefficient of variation | Error expressed relative to the reference value (MAPE) or spread expressed relative to the mean (coefficient of variation) | ≤10-15% for most physiological parameters |

| Correlation Metrics | Pearson's r, Spearman's ρ, R² | Strength and direction of the relationship between measures (linear for Pearson's r, monotonic for Spearman's ρ) | r ≥ 0.8 for strong association |

| Bland-Altman Analysis | Bias, Limits of Agreement (LOA) | Mean difference and spread between measurement methods | Bias should be minimal with narrow LOA |

| Clinical Accuracy | Grade A/B/C/D per IEEE/BHS standards | Percentage of measurements within error thresholds | ≥85% within ±10 mmHg for BP [17] |
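A compact way to see how the error and agreement metrics in Table 4 relate is to compute them on one set of paired readings. The sketch below assumes paired device and reference heart-rate values; the function name `agreement_metrics` and the four example readings are hypothetical, and the 1.96 multiplier gives the conventional 95% limits of agreement.

```python
from math import sqrt
from statistics import mean, stdev


def agreement_metrics(measured, reference):
    """MAE, RMSE, MAPE and Bland-Altman bias with 95% limits of agreement."""
    diffs = [m - r for m, r in zip(measured, reference)]
    mae = mean([abs(d) for d in diffs])
    rmse = sqrt(mean([d * d for d in diffs]))
    mape = 100.0 * mean([abs(d) / abs(r) for d, r in zip(diffs, reference)])
    bias = mean(diffs)
    sd = stdev(diffs)  # sample standard deviation of the differences
    return {
        "mae": mae,
        "rmse": rmse,
        "mape_percent": mape,
        "bias": bias,
        "loa": (bias - 1.96 * sd, bias + 1.96 * sd),
    }


# Hypothetical PPG heart rate (bpm) against an ECG reference.
measured = [62, 71, 80, 90]
reference = [60, 70, 82, 88]
metrics = agreement_metrics(measured, reference)
print(metrics)
```

Reporting bias and limits of agreement alongside MAE matters because a device can show a small average error while still disagreeing widely on individual readings.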

Methodological Considerations for Robust Research

Dataset Requirements:

- Ensure sufficient sample size (N≥100 for model development)

- Include diverse demographic representation (age, sex, skin tone for PPG) [17]

- Balance dataset across cognitive status categories (normal, mild impairment, dementia)

- Include appropriate clinical reference standards for validation

Signal Quality Management:

- Implement signal quality indices (SQI) for automated data quality assessment

- Apply motion artifact correction algorithms (adaptive filtering, template matching)

- Establish minimum wear time requirements (e.g., ≥4 valid days including weekend) [14]

- Document and report data loss and reasons for exclusion
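The minimum wear time requirement above can be expressed as a simple compliance check. The sketch assumes daily wear hours have already been derived from a wear-time algorithm; the 10-hour threshold for a valid day and the function name `meets_wear_requirement` are assumptions introduced for illustration, while the rule of at least four valid days including a weekend day follows the text [14].

```python
from datetime import date


def meets_wear_requirement(wear_hours_by_day, min_hours=10.0, min_valid_days=4):
    """Return True if enough valid days exist and one falls on a weekend.

    A day is valid when wear time reaches min_hours (assumed threshold).
    """
    valid = [day for day, hours in wear_hours_by_day.items() if hours >= min_hours]
    has_weekend = any(day.weekday() >= 5 for day in valid)  # 5 = Sat, 6 = Sun
    return len(valid) >= min_valid_days and has_weekend


# Hypothetical week: 1 January 2024 is a Monday, 6 January a Saturday.
week = {
    date(2024, 1, 1): 12.0,
    date(2024, 1, 2): 11.0,
    date(2024, 1, 3): 6.0,   # too short, not a valid day
    date(2024, 1, 4): 13.0,
    date(2024, 1, 6): 10.5,
}
compliant = meets_wear_requirement(week)
print(compliant)
```

Participants failing the check would be flagged for exclusion or re-monitoring, with the reason recorded as part of the data-loss report.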

Analytical Considerations:

- Address multiple comparison issues in association analyses (Benjamini-Hochberg FDR control)

- Account for repeated measures in longitudinal designs

- Validate models in held-out test sets not used for training

- Report performance metrics with confidence intervals
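The Benjamini-Hochberg procedure mentioned under Analytical Considerations can be sketched in a few lines. P-values are ranked, each is compared with its rank-scaled threshold (rank / m) × α, and every hypothesis up to the largest passing rank is rejected. The function name and the example p-values are hypothetical.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return a list of booleans marking hypotheses rejected at FDR alpha."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank k with p_(k) <= (k / m) * alpha.
    max_rank = 0
    for rank, i in enumerate(order, start=1):
        if p_values[i] <= rank / m * alpha:
            max_rank = rank
    # Reject every hypothesis ranked at or below max_rank.
    rejected = [False] * m
    for rank, i in enumerate(order, start=1):
        if rank <= max_rank:
            rejected[i] = True
    return rejected


# Hypothetical p-values from eight sensor-feature association tests.
p_values = [0.041, 0.001, 0.205, 0.008, 0.039, 0.06, 0.042, 0.074]
rejected = benjamini_hochberg(p_values)
print(rejected)
```

Note that three of these p-values fall below 0.05 yet are not rejected, which is the intended effect of controlling the false discovery rate across many sensor-derived features.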

Wearable sensors, from IMUs to PPG, represent a transformative approach for data collection in social behavior and cognitive decline research. The protocols and frameworks presented herein provide researchers with standardized methodologies for generating robust, reproducible evidence. As the field advances, key challenges remain in ensuring data quality, addressing privacy concerns, and validating digital biomarkers against clinical endpoints. Nevertheless, the integration of multimodal sensor data with advanced analytics offers unprecedented opportunities to understand the complex interplay between social behavior, physiological function, and cognitive health, ultimately supporting earlier detection and more personalized interventions for cognitive decline.

The early detection of cognitive decline is a critical challenge in neurogeriatric research and therapeutic development. Wearable sensors offer a transformative approach by enabling continuous, objective, and high-resolution monitoring of digital biomarkers in free-living environments. This document frames the assessment of key physiological targets—gait parameters, activity levels, and social interaction metrics—within a broader research thesis on wearable sensors for social behavior and cognitive decline. It provides detailed application notes and standardized protocols to facilitate robust, reproducible research for scientists and drug development professionals. By quantifying subtle changes in motor function, daily activity patterns, and social engagement, these biomarkers can provide critical insights into underlying neurological integrity and the efficacy of novel therapeutic interventions.

Quantitative Data on Key Physiological Targets

The following tables synthesize key quantitative parameters derived from wearable sensor data, which serve as potential biomarkers for cognitive health and functional decline.

Table 1: Gait Parameters as Biomarkers for Neurological and Orthopedic Conditions (from a multi-pathology dataset) [20]

| Gait Domain | Specific Parameter | Measurement Method | Relevance to Cognitive & Motor Function |

|---|---|---|---|

| Temporal | Gait cycle time, Stance phase, Swing phase | IMU on dorsal foot; event detection algorithms | Reflects motor control efficiency; prolonged cycle time indicates gait deterioration [20]. |

| Spatial | Stride length, Step width, Cadence | Fused accelerometer & gyroscope data from foot IMUs | Assesses locomotor stability and balance; reduced stride length correlates with fear of falling [20] [21]. |

| Rhythmicity | Step time variability, Stride length variability | Statistical analysis (e.g., Coefficient of Variation) over multiple gait cycles | High variability is a strong indicator of impaired motor automaticity and elevated fall risk [20] [22]. |

| Turning | Turning duration, Number of steps to turn, Peak turning velocity | IMU on lower back and head during 180° turn | Turning kinematics are highly sensitive to Parkinson's disease and other neurodegenerative disorders [20]. |

Table 2: Activity Level Metrics for Community-Dwelling Older Adults (Evidence from Meta-Analyses) [23]

| Metric Category | Specific Metric | Typical Device | Association with Health Outcomes |

|---|---|---|---|

| Volume | Daily step count, Physical activity time (minutes/day) | Wrist-worn activity trackers (e.g., Fitbit, Garmin) | Significantly increased by wearable activity tracker (WAT)-based interventions vs. usual care (SMD=0.58, 95% CI 0.33-0.83) [23]. |

| Intensity | Time in moderate-to-vigorous physical activity (MVPA), Sedentary time | Triaxial accelerometers (e.g., ActiGraph) | MVPA is associated with better physical function; sedentary behavior correlates with functional decline [23] [21]. |

| Pattern | Bout length of sedentary behavior, Activity fragmentation | Algorithm-derived from accelerometer data | Patterns of activity (e.g., prolonged sedentary bouts) may be more informative than total volume alone for predicting cognitive decline. |
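The pattern metrics in the last row of the table can be illustrated with a short sketch that extracts sedentary bouts from per-minute activity counts. The 100 counts-per-minute threshold, the 30-minute "prolonged" cutoff, and the fragmentation definition (bouts per sedentary minute) are assumptions chosen for illustration; studies differ in how they define each of these.

```python
def sedentary_bouts(counts_per_min, threshold=100):
    """Return the lengths (in minutes) of consecutive runs below threshold."""
    bouts, run = [], 0
    for c in counts_per_min:
        if c < threshold:
            run += 1          # still inside a sedentary bout
        elif run:
            bouts.append(run)  # an active minute closes the bout
            run = 0
    if run:
        bouts.append(run)      # close a bout that reaches the end of the record
    return bouts


def summarize_bouts(bouts, prolonged_min=30):
    """Summarize bout lengths; 'fragmentation' is bouts per sedentary minute."""
    total = sum(bouts)
    return {
        "total_sedentary_min": total,
        "n_bouts": len(bouts),
        "mean_bout_min": total / len(bouts) if bouts else 0.0,
        "n_prolonged": sum(1 for b in bouts if b >= prolonged_min),
        "fragmentation": len(bouts) / total if total else 0.0,
    }


# Hypothetical eleven-minute record of activity counts
counts = [20, 50, 10, 300, 900, 40, 40, 40, 40, 1500, 0]
bouts = sedentary_bouts(counts)
summary = summarize_bouts(bouts, prolonged_min=4)
print(bouts, summary)
```

A higher fragmentation value here means sedentary time is broken up more often, which is the kind of pattern information that total volume alone does not capture.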

Table 3: Sensor-Derived Metrics for Intrinsic Capacity and Social Behavior [21] [24] [25]

| Intrinsic Capacity Dimension | Sensor-Measurable Proxy | Typical Sensor Modality | Research Application |

|---|---|---|---|

| Locomotion | Gait speed, Rhythm, Stability during brisk walking | IMU, Plantar pressure sensor | Primary indicator of functional reserve; closely linked to cognitive load [21]. |

| Vitality | Heart rate, Heart rate variability (HRV) during exercise | PPG, ECG chest strap | HRV, especially during physical activity, indicates autonomic nervous system health [21] [26]. |

| Cognition | Dual-task cost on gait parameters | IMU + cognitive task | Gait stability under dual-task conditions is a marker of cognitive-motor interference [21]. |

| Psychological | Electrodermal Activity (EDA), Skin temperature | EDA sensor (e.g., Empatica E4) | Indicates emotional arousal and stress, which can affect social motivation [21] [24]. |

| Sensory | N/A | N/A | (Typically assessed directly; less commonly proxied by motor sensors) |

| Social Interaction | Mobility radius, Location entropy, Phone usage data | GPS from smartphone, Bluetooth proximity, Call logs | Proxies for social engagement; reduced mobility and interaction are linked to social withdrawal and depression [25]. |
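The dual-task cost listed under the Cognition dimension is commonly expressed as the percentage change in a gait parameter between single-task and dual-task walking. The sketch below assumes a parameter for which higher values are better (such as gait speed); the function name and the example speeds are hypothetical.

```python
def dual_task_cost(single_task, dual_task):
    """Percentage decline from single-task to dual-task performance.

    Assumes higher values indicate better performance (e.g., gait speed).
    For parameters where higher is worse (e.g., stride time variability),
    the sign of the result should be interpreted in reverse.
    """
    if single_task == 0:
        raise ValueError("single-task value must be non-zero")
    return 100.0 * (single_task - dual_task) / single_task


# Hypothetical gait speeds in m/s: walking alone vs. walking while counting backwards
dtc_speed = dual_task_cost(single_task=1.20, dual_task=0.96)
print(f"Dual-task cost: {dtc_speed:.1f}%")
```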

Experimental Protocols

Protocol for Instrumented Gait Analysis

This protocol is adapted from a standardized clinical gait assessment with inertial sensors [20].

- Objective: To quantitatively assess spatiotemporal gait parameters and turning kinematics in a controlled setting to identify markers of motor impairment and cognitive decline.

- Equipment:

- Four synchronized Inertial Measurement Units (IMUs), e.g., XSens or Technoconcept models [20].

- Adhesive straps or hypoallergenic tape for sensor attachment.

- A measuring tape and markers for a 10-meter walkway.

- A data acquisition laptop/tablet with relevant software.

- Sensor Placement:

- Head (HE): Centered on the parietal bone.

- Lower Back (LB): Over the L4/L5 vertebrae.

- Left Foot (LF) and Right Foot (RF): On the dorsal surface of the foot.

- Procedure:

- Participant Preparation: Explain the protocol and obtain informed consent. Attach sensors securely to the specified body locations.

- Calibration: Record a 10-second static standing trial with the participant looking straight ahead. This serves as a baseline for sensor orientation.

- Gait Trial:

- Instruct the participant to: "Stand still until I give the signal. Then, walk at your comfortable, normal pace for 10 meters, make a 180° turn within the designated area, walk back 10 meters, and stand still again until I tell you to stop."

- Initiate data recording.

- Signal the participant to begin.

- After the trial, stop the recording.

- Repetition: Perform a minimum of 3 trials to ensure data reliability and account for natural variability.

- Data Processing and Output:

- Event Detection: Use validated algorithms to detect initial contact (heel strike) and final contact (toe off) for each foot from the foot-mounted IMU signals [20] [22].

- Parameter Calculation: From these events, calculate for each gait cycle: stride length, stride time, cadence, stance phase duration, swing phase duration, and their respective variabilities (Coefficient of Variation).

- Turn Analysis: From the lower back and head IMUs, identify the turn phase and extract metrics like turning velocity, number of steps, and turn duration.
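Once gait events have been detected, the temporal parameters in the processing steps above follow from simple arithmetic on event timestamps. The sketch below assumes heel-strike times (in seconds) are already available per foot; the function names and timestamps are hypothetical, and stride length is omitted because it requires integrating the IMU signals.

```python
from statistics import mean, stdev


def stride_times(heel_strikes_s):
    """Stride time: interval between consecutive heel strikes of the same foot."""
    return [t1 - t0 for t0, t1 in zip(heel_strikes_s, heel_strikes_s[1:])]


def coefficient_of_variation(values):
    """Variability as a percentage: sample standard deviation over the mean."""
    return 100.0 * stdev(values) / mean(values)


def cadence_steps_per_min(left_hs, right_hs):
    """Cadence from all heel strikes of both feet, in steps per minute."""
    all_hs = sorted(left_hs + right_hs)
    duration = all_hs[-1] - all_hs[0]
    return 60.0 * (len(all_hs) - 1) / duration


# Hypothetical heel-strike timestamps (seconds) for one straight-walking segment
left_hs = [0.0, 1.0, 2.1, 3.1, 4.2]
right_hs = [0.5, 1.55, 2.6, 3.65]

left_strides = stride_times(left_hs)
stride_cv = coefficient_of_variation(left_strides)
cadence = cadence_steps_per_min(left_hs, right_hs)
print(f"mean stride time {mean(left_strides):.2f} s, CV {stride_cv:.1f}%, cadence {cadence:.0f} steps/min")
```

Turn phases would typically be excluded before computing variability, since strides taken during the 180° turn are not comparable with straight-line strides.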

The workflow for this protocol is summarized in the diagram below:

Protocol for Continuous Activity and Social Metric Monitoring

This protocol outlines a framework for longer-term, free-living data collection.

- Objective: To monitor real-world physical activity levels, sleep patterns, and proxy measures of social interaction over an extended period (e.g., 7-14 days).

- Equipment:

- A wrist-worn wearable device (e.g., research-grade ActiGraph, or consumer-grade Fitbit/Garmin with research permissions) with accelerometry and PPG.

- A smartphone with a dedicated research app for GPS and communication log data collection.

- Procedure:

- Device Setup: Configure devices to collect data at a specified sampling frequency (e.g., 30-100 Hz for accelerometry). Ensure that informed consent covering continuous monitoring and data privacy is obtained.

- Device Distribution: Instruct participants to wear the wrist device continuously (24/7) for the study duration, removing only for charging as needed. Ensure the smartphone app is running in the background.

- Compliance Check: Monitor data streams for compliance (e.g., periods of non-wear). Use automated alerts or daily diaries to prompt participants if needed.

- Data Processing and Output:

- Activity Levels: Process accelerometer data using validated algorithms (e.g., Freedson et al.) to estimate daily step count and time spent in sedentary, light, and moderate-to-vigorous physical activity [23].

- Sleep: Use device-native or open-source algorithms (e.g., Cole-Kripke) to estimate total sleep time, sleep efficiency, and wake-after-sleep-onset from accelerometry and PPG [27].

- Social Proxies:

- Mobility: From GPS data, calculate the daily mobility radius or location entropy (a measure of routine) [25].

- Interaction: From smartphone logs (with privacy safeguards), aggregate metrics like the number of outgoing calls/messages or Bluetooth proximity events to other devices.
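The two mobility proxies named above can be sketched as follows. This is one plausible formulation, not the method of the cited work: the mobility radius is computed as a radius of gyration around the arithmetic centroid of the GPS fixes (a reasonable approximation only over small areas), and location entropy is the Shannon entropy of time spent across place labels. All names and coordinates are hypothetical.

```python
import math
from collections import Counter

EARTH_RADIUS_KM = 6371.0


def haversine_km(p, q):
    """Great-circle distance in km between two (lat, lon) points in degrees."""
    lat1, lon1, lat2, lon2 = map(math.radians, (p[0], p[1], q[0], q[1]))
    a = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def radius_of_gyration_km(points):
    """Root-mean-square distance of GPS fixes from their centroid."""
    centroid = (sum(p[0] for p in points) / len(points),
                sum(p[1] for p in points) / len(points))
    return math.sqrt(sum(haversine_km(p, centroid) ** 2 for p in points) / len(points))


def location_entropy(place_labels):
    """Shannon entropy (nats) of the distribution of samples across places."""
    n = len(place_labels)
    return -sum((c / n) * math.log(c / n) for c in Counter(place_labels).values())


# Hypothetical day: equally spaced samples, each labelled with a visited place
labels = ["home", "home", "cafe", "cafe"]
fixes = [(48.100, 11.500), (48.100, 11.500), (48.110, 11.520), (48.110, 11.520)]
print(radius_of_gyration_km(fixes), location_entropy(labels))
```

An entropy of zero corresponds to a day spent in a single place, while higher values indicate time spread across more locations.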

The data processing pipeline for free-living monitoring is illustrated below:

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials and Tools for Wearable Sensor Research

| Category / Item | Specific Examples | Function & Application Note |

|---|---|---|

| Wearable Sensors | XSens IMUs, Technoconcept I4 Motion [20]; Shimmer3 GSR+ [24]; Empatica E4 [24] [25] | Function: Capture raw inertial data (acceleration, angular velocity), electrodermal activity, heart rate, etc. Note: Select based on sampling rate, accuracy, and synchronization capabilities required for your protocol. |

| Research Software & Toolkits | Gaitmap [20]; OpenSense [11]; Machine Learning Libraries (Python, R) | Function: Standardized algorithms for gait event detection, sensor-to-segment calibration, and feature extraction. Note: Gaitmap provides a verified directory of algorithms for processing IMU gait data. |

| Data Processing Algorithms | Finite Impulse Response Neural Network (FIRNN), Long Short-Term Memory (LSTM) networks [22] | Function: Model temporal dependencies in sensor data for high-accuracy Human Activity Recognition (HAR). Note: LSTM achieved the highest accuracy (98.76%) in HAR tasks, while FIRNN offers lower computational cost [22]. |

| Explainable AI (XAI) Methods | Layer-wise Relevance Propagation (LRP), SHAP [22] | Function: Interpret model predictions to identify which sensor inputs (e.g., gyroscope Y-axis) drive activity classification. Note: Critical for building trust and understanding digital biomarkers in clinical and research settings [22]. |

| Analytical Assays | N/A (Biospecimen analysis is typically a separate parallel assessment in multimodal studies) | Note: While wearable sensors capture functional/behavioral data, correlative assays (e.g., plasma biomarkers like NfL) can be collected to validate against pathophysiological processes. |

Cognitive impairment is a prevalent and debilitating non-motor symptom in Parkinson's disease (PD), significantly affecting patients' quality of life and disease progression. Understanding the connection between motor and cognitive dysfunction provides a critical pathway for early intervention strategies. Recent research demonstrates that gait parameters, quantified through wearable sensors, serve as sensitive biomarkers for predicting cognitive decline in PD patients. This application note synthesizes current evidence and methodologies, positioning gait analysis within a broader research context that integrates wearable sensor technology, machine learning analytics, and social behavior monitoring to combat cognitive decline.

Experimental Design and Key Findings

A recent cross-sectional study provides compelling evidence for gait parameters as independent predictors of cognitive impairment in PD. The research involved 177 early-to-mid-stage PD patients, with approximately 28.8% diagnosed with cognitive impairment based on education-adjusted Mini-Mental State Examination (MMSE) scores [1]. The study collected 38 clinical variables, including demographic data, medical history, cognitive scale scores, and gait parameters captured using a wearable sensor system.

Independent Predictors of Cognitive Impairment

Multivariate logistic regression analysis identified seven independent risk factors for cognitive impairment in PD, with several representing quantifiable gait parameters [28] [1].

Table 1: Independent Predictors of Cognitive Impairment in Parkinson's Disease

| Predictor Variable | Category | Clinical Significance |

|---|---|---|

| Duration of PD | Clinical | Indicates disease progression burden |

| UPDRS-III Score | Clinical | Measures motor symptom severity |

| Step Length | Gait Parameter | Reflects stride integrity and coordination |

| Walk Speed | Gait Parameter | Indicates overall motor function and confidence |

| Stride Time | Gait Parameter | Reveals gait rhythm and timing control |

| Peak Arm Angular Velocity | Gait Parameter | Measures upper body movement fluidity |

| Peak Angular Velocity During Steering | Gait Parameter | Assesses dynamic balance and adaptation |

Predictive Model Performance

The study employed six different machine learning models to predict cognitive impairment. The logistic regression model demonstrated superior performance, achieving an area under the curve (AUC) of 0.957 on the test set, outperforming other algorithms [1]. SHapley Additive exPlanations (SHAP) analysis further identified Step Length, UPDRS-III score, Duration of PD, and Peak angular velocity during steering as the most influential predictors within the model [28] [1].
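For readers who want to see how an AUC of this kind is obtained, the sketch below computes it from its rank-based definition (the probability that a randomly chosen impaired case scores higher than a randomly chosen unimpaired case) and adds a percentile bootstrap confidence interval. The labels and scores are hypothetical and unrelated to the cited study's data; the function names are illustrative.

```python
import random


def roc_auc(labels, scores):
    """AUC as the fraction of positive/negative pairs ranked correctly (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("both classes must be present")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


def bootstrap_auc_ci(labels, scores, n_boot=1000, level=0.95, seed=0):
    """Percentile bootstrap interval; resamples lacking one class are redrawn."""
    rng = random.Random(seed)
    n = len(labels)
    stats = []
    while len(stats) < n_boot:
        idx = [rng.randrange(n) for _ in range(n)]
        ys = [labels[i] for i in idx]
        if 0 < sum(ys) < n:  # need at least one case of each class
            stats.append(roc_auc(ys, [scores[i] for i in idx]))
    stats.sort()
    lo = stats[int((1 - level) / 2 * n_boot)]
    hi = stats[int((1 + level) / 2 * n_boot) - 1]
    return lo, hi


# Hypothetical test-set labels (1 = impaired) and predicted probabilities
labels = [1, 1, 0, 0, 1, 0]
scores = [0.9, 0.7, 0.4, 0.6, 0.5, 0.2]
auc = roc_auc(labels, scores)
ci_low, ci_high = bootstrap_auc_ci(labels, scores)
print(f"AUC = {auc:.3f} (95% CI {ci_low:.3f} to {ci_high:.3f})")
```

With a sample this small the interval is very wide, which illustrates why reporting it alongside the point estimate matters.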

Experimental Protocols

Participant Recruitment and Assessment

Inclusion Criteria:

- Diagnosis of PD confirmed by two movement disorder specialists per International Parkinson and Movement Disorder Society (MDS) criteria [1].

- Age ≥ 18 years.

- Ability to walk independently without assistance.

- Availability of complete and accurate clinical data.

- Capacity to provide autonomous informed consent.

Exclusion Criteria:

- History of neurological or psychiatric disorders interfering with PD diagnosis or treatment.

- History of deep brain stimulation (DBS) or other invasive brain surgeries.

- Recent (< 4 weeks) initiation or dose adjustment of antiparkinsonian drugs, or use of medications affecting cognition or gait (e.g., anticholinergics, cholinesterase inhibitors, memantine, sedatives) [1].

- Presence of severe systemic diseases (cardiac, hepatic, renal), symptomatic orthostatic hypotension, or other conditions impairing gait.

- History of substance abuse or alcoholism.

Wearable Sensor-Based Gait Analysis Protocol

Equipment: MATRIX wearable motion and gait analysis system (Gyenno Science Co., Ltd.), certified by NMPA, FDA, and CE [1]. The system uses ten inertial measurement unit (IMU) sensors.

Sensor Placement: Sensors were attached to the following locations, sampling at 100 Hz [1]:

- Feet: Dorsum of each foot (metatarsal region)

- Thighs: Bilaterally, approximately 2 cm above the knees

- Lower Legs: Bilaterally, approximately 2 cm above the ankle joints

- Hands: Dorsal side of each wrist

- Torso: One sensor on the sternum (chest) and one at the fifth lumbar vertebra (lumbar)

Data Collection Procedure:

- Patients performed tests during their medication "on" phase.

- Participants completed a straight-line walking trial consisting of an out-and-back course (16 m total distance, 8 m in one direction) at a self-selected comfortable speed.

- Raw motion signals from the 10 sensors were captured in real-time and transmitted via Bluetooth to a central system for analysis [1].

Data Quality Assurance and Analysis

Implementing rigorous data quality assurance is fundamental prior to statistical analysis [29]. Key steps include:

- Checking for Duplications: Identifying and removing identical copies of data, ensuring only unique participant data remains.

- Managing Missing Data: Establishing percentage thresholds for questionnaire completion and using statistical tests (e.g., Little's Missing Completely at Random test) to analyze the pattern of missingness.

- Checking for Anomalies: Running descriptive statistics to identify data points that deviate from expected patterns or fall outside scoring ranges.

Following data cleaning, analysis proceeds in waves:

- Descriptive Analysis: Summarizing the dataset using frequencies, means, medians, and modes.

- Assessing Normality: Testing whether the data come from a normal distribution, using skewness and kurtosis measures or the Kolmogorov–Smirnov or Shapiro–Wilk test, to inform the choice of statistical tests [29].

- Inferential Analysis: Employing statistical tests to compare groups, analyze relationships, and build predictive models.
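The quality-assurance and normality steps above can be sketched with the standard library alone. The record layout, the field names (`participant_id`, `step_length_m`), and the choice to treat a repeated participant ID as a duplicate are all assumptions made for illustration.

```python
from statistics import mean


def drop_duplicates(records, key="participant_id"):
    """Keep the first record for each participant ID."""
    seen, unique = set(), []
    for r in records:
        if r[key] not in seen:
            seen.add(r[key])
            unique.append(r)
    return unique


def missing_fraction(records, field):
    """Share of records in which the field is absent or None."""
    return sum(1 for r in records if r.get(field) is None) / len(records)


def skewness_and_excess_kurtosis(values):
    """Moment-based (population) skewness and excess kurtosis."""
    n, mu = len(values), mean(values)
    m2 = sum((v - mu) ** 2 for v in values) / n
    m3 = sum((v - mu) ** 3 for v in values) / n
    m4 = sum((v - mu) ** 4 for v in values) / n
    return m3 / m2 ** 1.5, m4 / m2 ** 2 - 3.0


# Hypothetical records with one duplicate and one missing gait value
records = [
    {"participant_id": "P01", "step_length_m": 0.62},
    {"participant_id": "P02", "step_length_m": None},
    {"participant_id": "P01", "step_length_m": 0.62},
    {"participant_id": "P03", "step_length_m": 0.55},
]
clean = drop_duplicates(records)
frac_missing = missing_fraction(clean, "step_length_m")
skew, kurt = skewness_and_excess_kurtosis([1, 2, 3, 4, 5])
print(len(clean), frac_missing, skew, kurt)
```

Skewness and excess kurtosis near zero are consistent with normality; formal tests such as Shapiro–Wilk would be run with a statistics package rather than reimplemented.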

Visualization of Research Workflow

The following diagram illustrates the integrated research workflow, from data acquisition to clinical application, incorporating both the cited study on gait analysis and the broader context of social robot-driven interventions for cognitive decline.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagents and Materials for Wearable Sensor-Based Gait and Cognitive Research

| Item Name | Specification / Model Example | Primary Function in Research |

|---|---|---|

| Wearable Gait Sensor System | MATRIX System (Gyenno Science) with 10 IMU sensors [1] | Captures high-frequency (100 Hz) kinematic data (acceleration, angular velocity) for quantitative gait analysis. |

| Inertial Measurement Unit (IMU) | Triaxial accelerometer (±16 g) & gyroscope (±2000 dps) [1] | Provides raw motion data for calculating specific gait parameters like step length and peak angular velocity. |

| Cognitive Assessment Tools | MMSE, Montreal Cognitive Assessment (MoCA) [1] | Standardized instruments for classifying cognitive impairment and validating predictive models. |

| Motor Symptom Scale | Unified Parkinson's Disease Rating Scale Part III (UPDRS-III) [1] | Quantifies motor symptom severity, a key clinical covariate in predictive models. |

| Social Robot Platform | Pepper Robot (engAGE Project) [30] [31] | Provides cognitive therapy and engagement; part of broader digital health ecosystems for intervention. |

| Activity Tracker | Fitbit (engAGE Project) [30] [31] | Monitors physical activity and sleep patterns, contributing to holistic lifestyle management in longitudinal studies. |

| Data Analysis Software | Machine Learning Libraries (e.g., for Logistic Regression, SHAP) | Used to develop, validate, and interpret predictive models of cognitive decline. |

Application Note: The SOR Framework in Wearable Sensor Research

The Stimulus-Organism-Response (SOR) model provides a robust theoretical framework for understanding how wearable sensors influence user behavior and generate valuable data, particularly in research on social behavior and cognitive decline. Originating from psychological and consumer behavior studies, the SOR model explains how external stimuli (S) affect an individual's internal state (O), leading to observable behaviors or responses (R) [32]. This framework is exceptionally well-suited for structuring research on how data from wearables can predict and monitor cognitive health.

- Stimulus (S): In the context of wearable sensors, the stimulus comprises the continuous, multi-modal data collection from devices. This includes tracked metrics such as gait, posture, head motion, heart rate variability, respiration, location, orientation, and movement [33]. The sheer volume and temporal nature of this data constitute a persistent environmental stimulus.

- Organism (O): The organism represents the internal cognitive and physiological state of the user. For cognitive decline research, this encompasses constructs like mild cognitive impairment (MCI), memory function, processing speed, and working memory [34] [13]. The organism is the "black box" where raw sensor data is translated into meaningful digital phenotypes related to cognitive health.

- Response (R): The response includes the observable behavioral and psychological outcomes. This can be a direct health behavior, such as changes in physical activity or sleep patterns monitored by the wearable [30]. In a research context, the response can also be a clinically measurable cognitive outcome, such as performance on the Digit Symbol Substitution Test (DSST) or the Montreal Cognitive Assessment (MoCA) [13] [30].

The diagram below illustrates the application of the SOR model to wearable sensor research for cognitive decline.

Quantitative Data on Wearable Performance in Cognitive Assessment

Recent large-scale studies demonstrate the strong predictive value of wearable data for cognitive assessment. The tables below summarize key performance metrics from recent research.

Table 1: Performance of Machine Learning Models in Predicting Poor Cognition from Wearable Data (n=2,479 older adults) [13]

| Cognitive Test | Cognitive Domains Assessed | Best-Performing Model | Median AUC | Key Predictive Wearable Features |

|---|---|---|---|---|

| Digit Symbol Substitution Test (DSST) | Processing Speed, Working Memory, Attention | CatBoost | 0.84 | Lower activity variability, Less time in moderate-vigorous activity, Greater sleep efficiency variability |

| CERAD Word-Learning (CERAD-WL) | Immediate/Delayed Memory | CatBoost | 0.73 | Higher sedentary activity levels, Lower total activity variability |

| Animal Fluency Test (AFT) | Categorical Verbal Fluency | CatBoost | 0.71 | Activity levels, Sleep parameters |

Table 2: Performance of Foundation Models on Behavioral Data from Wearables [35] [36]

| Model / Approach | Data Volume | Number of Tasks | Key Strengths | Notable Results |

|---|---|---|---|---|

| Behavioral Foundation Model (Apple) | 2.5B hours from 162K individuals | 57 health-related tasks | Excels in behavior-driven tasks (e.g., sleep prediction); improves when combined with raw sensor data | Strong performance across individual-level classification and time-varying health state prediction |

| LSM-2 with AIM (Google) | 40 million hours from 60K participants | Health condition classification, activity recognition, data imputation | Robust to missing data; achieves high performance even with sensor gaps | Outperformed LSM-1 on hypertension/anxiety classification, activity recognition, and BMI regression |

Experimental Protocols for SOR-Based Cognitive Decline Research

Protocol 1: Kitchen Activity Assessment for MCI Detection

Objective: To quantify mild cognitive impairment (MCI) in older adults using multi-modal wearable sensor data during instrumental activities of daily living (IADL) in a kitchen environment [34].

Background: Deficits in visuospatial navigation affect functional independence in individuals with MCI, with kitchen activities serving as a sensitive indicator of cognitive decline.

Materials & Methods:

- Participants: 19 older adults (11 with MCI, 8 with normal cognition) diagnosed via standardized clinical assessment.

- Wearable Sensors: Wrist-worn activity sensors and eye-tracking glasses.

- Protocol:

- Task: Participants prepare a standardized yogurt bowl in a controlled kitchen environment.

- Data Collection: Simultaneously collect upper limb movement data from wrist sensors and visual attention data from eye-tracking.

- Feature Extraction: Analyze motor function features (movement smoothness, efficiency) and oculomotor features (fixation duration, saccadic patterns).

- Modeling: Apply multimodal machine learning analysis to classify MCI status.

Outcomes: The model achieved 74% F1 score in discriminating older adults with MCI from normal cognition. Feature importance analysis confirmed association of weaker upper limb motor function and delayed eye movements with cognitive decline [34].
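For reference, the F1 score reported above is the harmonic mean of precision and recall for the positive (MCI) class. The sketch below shows the calculation on hypothetical labels that are not taken from the cited study.

```python
def f1_score(y_true, y_pred, positive=1):
    """F1 for the positive class: harmonic mean of precision and recall."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p == positive)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t != positive and p == positive)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p != positive)
    if tp == 0:
        return 0.0  # no true positives: precision and recall are both zero or undefined
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Hypothetical ground truth (1 = MCI) and classifier output
y_true = [1, 1, 1, 1, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
f1 = f1_score(y_true, y_pred)
print(f"F1 = {f1:.2f}")
```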

Protocol 2: Social Robot-Driven Intervention (engAGE)

Objective: To counteract cognitive decline in older adults with MCI through a technology-based multidomain intervention combining social robot-driven cognitive therapy with wearable-enabled lifestyle monitoring [30].

Background: Cognitive interventions, physical activity, and reminiscence therapy can improve outcomes in MCI, but require consistent implementation that technology can facilitate.

Materials & Methods:

- Study Design: 6-month randomized controlled trial (RCT) with 49 older adults with MCI (40 experimental, 9 control).

- Technology Platform:

- Social Robot: Pepper robot for weekly cognitive therapy sessions at healthcare facilities.

- Wearable: Fitbit activity tracker for daily sleep and physical activity monitoring.

- Mobile App: Cognitive games for daily home use.

- Measures:

- Primary: Cognitive capacity (Montreal Cognitive Assessment, Memory Assessment Clinic Questionnaire).

- Secondary: Social engagement (UCLA Loneliness Scale), quality of life (Warwick-Edinburgh Mental Well-Being Scale), acceptability (System Usability Scale).

Procedure:

- Weekly Sessions: Participants engage in social robot-driven cognitive therapy supervised by a psychologist/therapist.

- Daily Monitoring: Participants wear activity tracker and use mobile app for cognitive games at home.

- Data Integration: Combine robot interaction data, wearable sensor data, and app usage data.

- Assessment: Evaluate pre-post changes in cognitive and psychosocial measures.

Applications: This protocol exemplifies the SOR model by using technology-generated stimuli (robot interactions, wearable data) to influence internal cognitive state (organism), resulting in improved cognitive outcomes (response) [30].

The workflow below details the implementation of an SOR-informed digital phenotyping study.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Tools for Wearable Sensor Studies in Cognitive Decline

| Tool / Technology | Type | Function in Research | Example Use Cases |

|---|---|---|---|

| Foundation Models for Behavioral Data [35] [36] | Algorithm | Pre-trained models that learn general representations from massive-scale wearable data; fine-tunable for specific cognitive tasks. | Transfer learning for cognitive classification tasks; generating behavioral embeddings for predictive models. |

| LSM-2 with Adaptive and Inherited Masking (AIM) [37] | Algorithm | Self-supervised learning approach that handles incomplete wearable data without imputation, improving real-world robustness. | Maintaining data integrity in longitudinal studies where sensor data has inevitable gaps; sensor imputation. |

| Social Robot Platform (Pepper) [30] | Hardware/Software | Provides standardized, engaging cognitive therapy sessions; integrates with wearable data for personalized interventions. | Delivering cognitive stimulation therapy in RCT settings; engaging older adults with MCI in regular cognitive exercises. |

| Multi-modal Sensor Suite [34] [33] | Hardware | Collects complementary data streams (wrist movement, eye-tracking, physiology) to capture diverse aspects of behavior and cognition. | Quantifying kitchen task performance in MCI; monitoring gait, posture, and head motion for motor-cognitive coupling. |

| Digital Phenotyping Frameworks [38] | Methodology | Standardized protocols for moment-by-moment quantification of human behavior using personal digital devices. | Developing reliable digital biomarkers for cognitive decline; ensuring reproducibility across studies and populations. |

Technical and Methodological Considerations

Handling Data Incompleteness

A fundamental challenge in wearable sensor research is the inevitable missingness of data due to device removal, charging, motion artifacts, or signal noise [38] [37]. Traditional approaches of imputation or aggressive filtering introduce bias or discard valuable data. The Adaptive and Inherited Masking (AIM) approach in LSM-2 represents a significant advancement by treating missingness as a natural artifact rather than an error [37]. During self-supervised learning, AIM combines artificially masked tokens (for reconstruction objectives) with naturally missing tokens, using both token dropout and attention masking to handle variable fragmentation. This results in foundation models that are more robust to the realities of incomplete wearable data.
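The masking idea described above can be illustrated with a deliberately simplified sketch. This is not the LSM-2 implementation: it only shows how a mask inherited from naturally missing tokens might be combined with an artificial mask drawn from the observed tokens, so that reconstruction targets always have ground truth. Token dropout and attention masking are not modelled, and the function name, the 30% ratio, and the toy sequence are assumptions.

```python
import random


def build_aim_style_masks(tokens, artificial_ratio=0.3, seed=0):
    """Return (inherited, artificial, combined) boolean masks over a token sequence.

    inherited  : True where the token is naturally missing (None)
    artificial : True where an observed token is hidden for reconstruction
    combined   : True where the model should not see the token
    """
    rng = random.Random(seed)
    inherited = [t is None for t in tokens]
    observed_idx = [i for i, missing in enumerate(inherited) if not missing]
    # Artificial masking is drawn only from observed tokens (assumption of this sketch)
    n_artificial = int(round(artificial_ratio * len(observed_idx)))
    chosen = set(rng.sample(observed_idx, n_artificial))
    artificial = [i in chosen for i in range(len(tokens))]
    combined = [a or b for a, b in zip(inherited, artificial)]
    return inherited, artificial, combined


# Toy sequence of sensor tokens; None marks gaps from device removal or charging
tokens = [0.1, None, 0.3, 0.4, None, 0.6, 0.7, 0.8]
inherited, artificial, combined = build_aim_style_masks(tokens)
# Reconstruction loss would be computed only at artificially masked positions
targets = [i for i, masked in enumerate(artificial) if masked]
print(inherited, artificial, combined, targets)
```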

Standardization and Interoperability

The lack of standardization in digital phenotyping methodologies limits reproducibility and generalizability across studies [38]. Proposed solutions include:

- Universal Frameworks: Developing standardized protocols for data collection, processing, and feature extraction.

- Open-Source APIs: Promoting seamless data integration across devices and platforms (e.g., Apple HealthKit, Google Fit).

- Cross-Platform Interoperability: Ensuring wearable data from different manufacturers can be combined and analyzed consistently.

- Industry-Academia Collaboration: Aligning technology development with research needs through partnerships.

These strategies enhance data reliability, promote scalability, and maximize the potential of wearable sensors in cognitive health research and clinical applications [38].

From Raw Data to Digital Biomarkers: Methodologies for Sensor Data Acquisition and Analysis

The accurate capture of physiological and behavioral data through wearable sensors is foundational to modern research into social behavior and cognitive decline. The selection of sensor modality and its precise placement on the body directly influences the quality, reliability, and validity of the data collected, ultimately determining the success of longitudinal studies and interventional trials. In the context of cognitive decline research, multimodal sensing approaches that combine inertial, cardiac, and neural activity data are increasingly critical for developing digital biomarkers that can detect subtle, early changes in cognitive function [39] [8]. Furthermore, the strategic positioning of these sensors is not merely a technical consideration but a fundamental aspect of participant compliance in long-term studies; uncomfortable or obtrusive placement can significantly impact adherence and data integrity, particularly in older adult populations with mild cognitive impairment (MCI) [30] [31]. This document provides detailed application notes and experimental protocols to guide researchers in optimizing sensor deployment for studies investigating the complex interplay between physiology, behavior, and cognitive health.

Sensor Modalities: Technical Specifications and Research Applications

Inertial Measurement Units (IMUs)

IMUs, typically comprising accelerometers, gyroscopes, and sometimes magnetometers, are the cornerstone for quantifying physical activity and motor behavior in unsupervised, free-living environments. Their data is crucial for human activity recognition (HAR), which can reveal patterns of activity and rest, gait quality, and overall mobility—all of which are potential indicators of cognitive and functional decline [39] [40].

Table 1: Key Specifications and Applications of Inertial Measurement Units (IMUs)

| Parameter | Typical Specifications | Primary Research Applications | Considerations for Cognitive Studies |

|---|---|---|---|

| Accelerometer | Range: ±2g to ±16g; Sampling: 10-100 Hz [40] | Activity classification (walking, sitting), gait analysis, fall risk assessment [39] | Correlate activity intensity and patterns with cognitive test performance [8]. |

| Gyroscope | Range: ±250 to ±2000 dps [40] | Quantifying rotational movements, posture transitions, and complex motions [40] | Detect slowing or hesitancy in movement as a potential proxy for psychomotor slowing. |

| Placement Efficacy | Wrist, lower back, ankle, thigh [40] | Recognition of activities of daily living (ADLs) | The back is optimal for full-body movement assessment with a single sensor [40]. |

| Performance Data | Accuracy up to 91.77% for 54 activity classes using a single sensor on the back [40] | Unsupervised HAR in real-world settings | High accuracy is vital for reliable behavioral phenotyping in decentralized trials [8]. |
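As a small illustration of how raw accelerometer samples become an activity-intensity signal, the sketch below computes the Euclidean norm minus one g (often abbreviated ENMO) per sample and averages it over fixed-length epochs. It assumes the accelerometer output is already calibrated in units of g; the epoch length and sample values are hypothetical.

```python
import math


def enmo(sample_g):
    """Euclidean norm of a triaxial sample minus 1 g, clipped at zero."""
    ax, ay, az = sample_g
    return max(0.0, math.sqrt(ax * ax + ay * ay + az * az) - 1.0)


def epoch_means(values, epoch_len):
    """Average consecutive, non-overlapping epochs; a trailing partial epoch is dropped."""
    return [sum(values[i:i + epoch_len]) / epoch_len
            for i in range(0, len(values) - epoch_len + 1, epoch_len)]


# Hypothetical samples in g: two at rest, then two during movement
samples = [(0.0, 0.0, 1.0), (0.0, 0.0, 1.0), (0.0, 0.0, 2.0), (0.0, 0.0, 1.5)]
per_sample = [enmo(s) for s in samples]
per_epoch = epoch_means(per_sample, epoch_len=2)
print(per_sample, per_epoch)
```

Epoch-level values like these are the usual input to the activity classification and intensity features discussed in this section.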

Electrocardiography (ECG)

ECG sensors measure the heart's electrical activity, providing insights into autonomic nervous system function through metrics like heart rate variability (HRV). In cognitive research, cardiac dynamics are studied because they correlate with cognitive load and stress and may offer early signs of neurocognitive disorders [41] [24].

Table 2: Key Specifications and Applications of Electrocardiography (ECG)

| Parameter | Typical Specifications | Primary Research Applications | Considerations for Cognitive Studies |

|---|---|---|---|

| Standard Placement | Chest (e.g., 12-lead, 5-lead systems) [41] | Clinical-grade heart rate and HRV monitoring for stress and workload [24] | Can be obtrusive for continuous, long-term monitoring. |

| Non-Standard Placement | Thoracic area, single arm (wrist, upper arm) [41] | Enables compact, wearable form factors suitable for continuous monitoring | Signal strength decreases with distance from the heart; waveform morphology changes [41]. |

| Signal Quality | R-wave detection sensitivity can be as low as 27.3% for non-standard placements [41] | Feasibility of regional ECG monitoring for joint acquisition with other signals (e.g., ICG) | Requires robust signal quality assessment (SQA) algorithms for post-processing [41]. |

| Biometric Utility | Higher discriminative power for subject identification (NMI=0.641) than for activity recognition [39] | User verification in longitudinal studies while collecting physiological data | - |

Electroencephalography (EEG)

EEG measures electrical activity in the brain, providing direct insight into neural correlates of cognitive processes. It is a critical tool for assessing cognitive workload, mental state, and neurophysiological changes associated with cognitive decline [24].

Table 3: Key Specifications and Applications of Electroencephalography (EEG)

| Parameter | Typical Specifications | Primary Research Applications | Considerations for Cognitive Studies |

|---|---|---|---|

| Common Devices | EPOC X EEG headset [24] | Monitoring cognitive workload, engagement, and emotional state during tasks [24] | Consumer-grade headsets improve accessibility but may have lower resolution than clinical systems. |

| Placement | Scalp, according to the 10-20 international system | Assessing neural oscillations (alpha, beta, theta bands) related to various cognitive functions. | Setup can be challenging for self-administration by older adults or those with cognitive impairment. |

| Research Context | Used in human-robot collaboration studies to measure human factors [24] | Evaluating a user's cognitive state during interactions with assistive technologies or cognitive tests. | Provides a direct neural metric to complement behavioral data from IMUs and self-reports. |
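
As one illustration of how the oscillatory bands listed in the table can be quantified, the sketch below estimates relative theta, alpha, and beta power for a single EEG channel. It is a dependency-free example built on assumptions: the band limits are commonly used values (exact limits vary between studies), the function name `band_powers` is hypothetical, and a plain DFT periodogram stands in for the Welch-type estimators normally used in practice.

```python
import cmath
import math

# Commonly used band limits in Hz (an assumption; conventions differ).
BANDS_HZ = {"theta": (4.0, 8.0), "alpha": (8.0, 13.0), "beta": (13.0, 30.0)}


def band_powers(samples, fs_hz, bands=BANDS_HZ):
    """Relative power per band, normalized to the sum over the listed bands."""
    n = len(samples)
    mean = sum(samples) / n
    x = [s - mean for s in samples]  # remove the DC offset
    spectrum = []
    for k in range(1, n // 2 + 1):
        freq = k * fs_hz / n
        coeff = sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        spectrum.append((freq, abs(coeff) ** 2))
    raw = {
        name: sum(p for f, p in spectrum if lo <= f < hi)
        for name, (lo, hi) in bands.items()
    }
    total = sum(raw.values())
    return {name: (p / total if total > 0 else 0.0) for name, p in raw.items()}


# Synthetic 10 Hz sine (2 s at a hypothetical 128 Hz sampling rate).
fs = 128
signal = [math.sin(2 * math.pi * 10 * t / fs) for t in range(2 * fs)]
powers = band_powers(signal, fs)
```

For the synthetic 10 Hz input, almost all of the power falls in the alpha band; real recordings would first need artifact rejection and re-referencing.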

Strategic Sensor Positioning on the Body

Optimal sensor placement is a trade-off between data accuracy, participant comfort, and the specific behaviors or physiological signals of interest. The following diagram summarizes the decision workflow for selecting and positioning sensors in a cognitive research context.

Key findings from recent research on sensor placement include:

- Back Placement for IMUs: A single IMU on the back can accurately classify a wide range of activities. Because this site shows low variability in movement, it is a strong compromise for capturing whole-body motion without requiring multiple sensors [40].

- Non-Standard ECG Locations: For wearable devices, the thoracic area and single arm (including wrist and upper arm) are feasible for ECG acquisition, enabling compact form factors. The trade-off is reduced waveform detection sensitivity: R-wave sensitivity (27.3%) was lower than T-wave (49%) and S-wave (44.9%) sensitivity [41].

- Comfort and Compliance: For populations such as older adults with MCI, comfort and simplicity are paramount for adherence. Devices like the Empatica E4 wristband are widely used because they balance the capture of key physiological parameters with a socially acceptable and comfortable form factor [24].

Application in Social Behavior and Cognitive Decline Research

The integration of multimodal sensor data is pivotal for creating a holistic picture of an individual's health and cognitive status. This is exemplified by several recent research initiatives:

- The engAGE Project: This project uses a social robot (Pepper) for cognitive therapy, integrated with a wearable fitness tracker (Fitbit) to monitor sleep and physical activity at home. The combination of in-facility robot-led sessions and continuous at-home monitoring aims to counteract cognitive decline in older adults with MCI [30] [31].

- The Intuition Brain Health Study: This large-scale remote study uses iPhones and Apple Watches to capture multimodal data for classifying MCI. It leverages both passive sensing (e.g., activity, sleep) and interactive cognitive assessments on the devices to track cognitive health trajectories in a diverse, aging population [8].

- Unsupervised Activity and Identity Recognition: Research shows that while accelerometer data is superior for activity recognition (NMI=0.728, accuracy=0.817), ECG data has higher discriminative power for subject identification (NMI=0.641). This dual utility is valuable for ensuring data integrity and understanding individual behavioral patterns in longitudinal cognitive studies [39].
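
The normalized mutual information (NMI) scores quoted above compare cluster assignments with ground-truth labels. The sketch below shows one way to compute NMI; it assumes arithmetic-mean normalization of the two entropies, which is one of several conventions and may not be the variant used in [39]. The function name is illustrative.

```python
import math
from collections import Counter


def normalized_mutual_info(labels_true, labels_pred):
    """NMI between two labelings, normalized by the mean of their entropies."""
    n = len(labels_true)
    if n == 0 or n != len(labels_pred):
        raise ValueError("label lists must be non-empty and of equal length")
    count_true = Counter(labels_true)
    count_pred = Counter(labels_pred)
    joint = Counter(zip(labels_true, labels_pred))

    # Mutual information from the joint and marginal label counts.
    mi = 0.0
    for (t, p), c in joint.items():
        mi += (c / n) * math.log(c * n / (count_true[t] * count_pred[p]))

    def entropy(counts):
        return -sum((c / n) * math.log(c / n) for c in counts.values())

    denom = (entropy(count_true) + entropy(count_pred)) / 2.0
    # Both labelings consist of a single group: treat as perfect agreement.
    return mi / denom if denom > 0 else 1.0


# Cluster IDs are arbitrary, so a relabeled but identical partition scores 1.0.
score = normalized_mutual_info([0, 0, 1, 1], [1, 1, 0, 0])
```

Because NMI ignores the names of the clusters, it suits unsupervised settings where cluster indices carry no inherent meaning.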

Detailed Experimental Protocols

Protocol for Validating Non-Standard ECG Placements

Objective: To evaluate the feasibility of acquiring clinically usable ECG signals from non-standard locations (thorax, upper arm, wrist) for long-term monitoring of participants in cognitive decline studies.

Materials:

- Commercial wet gel Ag/AgCl electrodes or integrated dry-electrode systems.

- A data acquisition system capable of simultaneous recording from multiple channels.

- Signal processing software (e.g., MATLAB, Python with SciPy/NumPy).

Methodology:

- Participant Preparation: Clean the skin with alcohol wipes at all electrode sites to reduce impedance.

- Electrode Placement: Apply electrodes to:

  - Standard Lead I: Right and left wrists (for reference).

  - Experimental Locations: Thoracic area (targeting proximity to the heart) and the upper arm and wrist of a single limb.

- Data Acquisition: Record ECG signals simultaneously from all locations for a minimum of 10 minutes at rest and, if possible, during light activity (e.g., walking).

- Signal Processing:

  - Apply a bandpass filter (e.g., 0.5-40 Hz) to remove baseline wander and high-frequency noise.

  - Implement an R-peak detection algorithm (e.g., Pan-Tompkins).

- Signal Quality Assessment (SQA):

  - Calculate the sensitivity of R, S, and T wave detection for each non-standard lead compared to the standard lead.

  - Apply a custom algorithm to distinguish R waves in cases of large T-wave amplitudes [41].

- Data Analysis: Compare the median sensitivity of waveform detection across locations. A location is deemed feasible if key waveform features are recognizable and allow for reliable RR-interval calculation.
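
The sensitivity comparison and RR-interval calculation in this protocol can be sketched as follows. This is a minimal illustration, not the algorithm of the cited study: it assumes R-peak times (in seconds) have already been detected on both the standard and the non-standard lead, and the function names and the 50 ms matching tolerance are hypothetical choices.

```python
def detection_sensitivity(reference_peaks_s, detected_peaks_s, tolerance_s=0.05):
    """Fraction of reference beats matched by a detection within +/- tolerance_s.

    Each detected peak can be matched to at most one reference beat.
    """
    if not reference_peaks_s:
        raise ValueError("reference peak list is empty")
    detected = sorted(detected_peaks_s)
    used = set()
    true_positives = 0
    for ref in sorted(reference_peaks_s):
        best = None
        for i, det in enumerate(detected):
            if i in used or abs(det - ref) > tolerance_s:
                continue
            if best is None or abs(det - ref) < abs(detected[best] - ref):
                best = i
        if best is not None:
            used.add(best)
            true_positives += 1
    return true_positives / len(reference_peaks_s)


def rr_intervals_ms(peaks_s):
    """Successive RR intervals in milliseconds from R-peak times in seconds."""
    peaks = sorted(peaks_s)
    return [(b - a) * 1000.0 for a, b in zip(peaks, peaks[1:])]


# Hypothetical peak times: standard lead vs. a wrist-only lead.
standard = [0.8, 1.6, 2.4, 3.2]
wrist = [0.81, 2.41, 3.5]
sensitivity = detection_sensitivity(standard, wrist)  # 2 of 4 beats matched
```

Running the same function on S- and T-wave annotations would give the per-waveform sensitivities that the data analysis step compares across locations.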

Protocol for Human Activity Recognition (HAR) with a Single IMU

Objective: To accurately classify Activities of Daily Living (ADLs) using a single IMU sensor placed on the lower back for studies on mobility and functional decline.

Materials:

- A single IMU sensor with tri-axial accelerometer and gyroscope.

- A data logger or Bluetooth transmitter for data streaming.

- A computing system with a hybrid 2D CNN-BiLSTM model for analysis [40].

Methodology:

- Sensor Configuration: Secure the IMU sensor to the participant's lower back using an adhesive patch or an elastic belt.

- Activity Protocol: Instruct participants to perform a series of scripted activities:

  - Basic motions: Sitting, standing, lying down.

  - Ambulation: Walking on a flat surface, climbing stairs, descending stairs.

  - Complex tasks: Cleaning the floor (bending, stretching), lifting objects.

- Data Collection: Record IMU data at a minimum of 50 Hz. Label the data for each activity segment.

- Data Pre-processing:

  - Normalize the sensor data per participant.

  - Segment the data into fixed-length windows (e.g., 2-5 seconds) with 50% overlap.

- Model Training:

  - Train a hybrid 2D CNN-BiLSTM model. The CNN extracts spatial features from the sensor data within each window, while the BiLSTM layer captures temporal dependencies between consecutive windows.

  - Use the collected labeled data to train the model for multi-class activity classification.

- Validation: Evaluate model performance using leave-one-subject-out cross-validation and report the overall accuracy and per-class F1 scores. The cited study achieved 91.77% accuracy for 54 activity classes using this methodology [40].
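
The pre-processing and validation steps of this protocol can be sketched in plain Python. The example below is an assumption-laden illustration rather than the pipeline from [40]: it handles a single sensor axis, uses z-scoring as the per-participant normalization, and all function names are hypothetical. Model training itself is omitted.

```python
import statistics


def zscore_per_participant(samples):
    """Z-score one participant's single-axis signal (population std)."""
    mean = statistics.fmean(samples)
    std = statistics.pstdev(samples)
    return [(s - mean) / std if std > 0 else 0.0 for s in samples]


def sliding_windows(samples, fs_hz, window_s=2.0, overlap=0.5):
    """Fixed-length windows; overlap=0.5 gives the 50% overlap in the protocol."""
    size = int(round(window_s * fs_hz))
    step = max(1, int(round(size * (1.0 - overlap))))
    return [samples[i:i + size] for i in range(0, len(samples) - size + 1, step)]


def leave_one_subject_out(subject_ids):
    """Yield (held_out_subject, train_indices, test_indices) for each subject."""
    for held_out in sorted(set(subject_ids)):
        train = [i for i, s in enumerate(subject_ids) if s != held_out]
        test = [i for i, s in enumerate(subject_ids) if s == held_out]
        yield held_out, train, test


# 10 s of a hypothetical signal at 50 Hz -> 2 s windows with 1 s step.
windows = sliding_windows(list(range(500)), fs_hz=50)
folds = list(leave_one_subject_out(["p1", "p1", "p2", "p3"]))
```

Splitting by subject rather than by window keeps overlapping windows from the same participant out of both the training and test sets, which would otherwise inflate the reported accuracy.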

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Key Materials and Reagents for Wearable Sensor Research

| Item Name | Function/Application | Example Use Case | Specific Examples |

|---|---|---|---|

| Empatica E4 Wristband | A research-grade wearable capturing EDA, PPG-based HR/HRV, skin temperature, and motion. | Measuring psychophysiological responses (stress, cognitive workload) in real-world settings [24]. | Used in human-robot collaboration studies to capture physiological parameters for human factors analysis [24]. |

| Shimmer3 GSR+ Unit | A versatile sensing platform for electrodermal activity (GSR), ECG, and EMG. | Investigating sympathetic nervous system arousal as an indicator of emotional or cognitive state. | A common choice for laboratory and field research requiring multi-modal physiological data capture [24]. |

| EPOC X EEG Headset | A consumer-grade electroencephalography (EEG) device with 14 channels. | Assessing cognitive workload, attention, and engagement during cognitive tasks or therapy sessions. | Provides direct neural metrics to complement behavioral and physiological data in cognitive studies [24]. |

| Ag/AgCl Electrodes | Standard disposable electrodes with wet gel electrolyte for superior signal quality. | Acquiring high-fidelity ECG and EEG signals in controlled or clinical settings. | Essential for validation studies comparing standard and non-standard ECG placements [41]. |

| Hybrid 2D CNN-BiLSTM Model | A deep learning architecture for time-series classification. | Human Activity Recognition (HAR) from IMU data streams. | Achieved 91.77% accuracy in classifying 54 activity classes from a single back-placed IMU [40]. |

The proliferation of consumer-grade wearable devices presents an unprecedented opportunity to monitor human behavior and cognitive health in real-world settings. The Activity Recognition Chain (ARC) provides the essential computational framework for transforming raw sensor data into meaningful insights about an individual's activities and cognitive status. Within research on social behavior and cognitive decline, ARC serves as a critical methodological backbone, enabling the development of digital biomarkers for conditions like Mild Cognitive Impairment (MCI) through continuous, unobtrusive monitoring [8]. Technological advances now allow researchers to capture rich, multimodal data streams from wearable sensors, creating new paradigms for early detection and monitoring of neurodegenerative conditions [30] [8]. This protocol outlines standardized methodologies for the preprocessing, segmentation, and feature extraction stages of ARC, with specific application to cognitive health research for scientific and drug development professionals.

The Activity Recognition Chain Framework

The ARC represents a structured pipeline for converting raw sensor data into identifiable activity classes. This framework is particularly vital for healthcare applications, where it enables the recognition of Activities of Daily Living (ADLs), which are crucial indicators for supporting independence and quality of life in older adults [42]. In cognitive decline research, deviations from normal activity patterns captured through this chain can serve as early warning signs of MCI progression [8]. The ARC follows a sequential protocol comprising data acquisition, preprocessing, segmentation, feature extraction, and model classification, with the output facilitating remote monitoring, accident prevention, and rehabilitation support [42].

Table 1: Core Components of the Activity Recognition Chain

| Chain Component | Primary Function | Output | Significance in Cognitive Health Research |

|---|---|---|---|

| Data Acquisition | Collection of raw sensor signals | Time-series data from accelerometers, gyroscopes, magnetometers | Provides foundational behavioral data for cognitive assessment |

| Preprocessing | Noise reduction and data cleaning | Cleaned, normalized sensor data | Ensures data quality for reliable digital biomarker development |

| Segmentation | Division of data streams into analyzable units | Fixed or variable-length data windows | Enables pattern analysis for activity-specific cognitive demands |

| Feature Extraction | Calculation of informative descriptors | Time-domain, frequency-domain, and statistical features | Facilitates identification of discriminative patterns related to cognitive status |

| Model Classification | Activity recognition and pattern identification | Activity labels or cognitive health classifications | Supports MCI detection and cognitive trajectory mapping [8] |
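
The chain in the table can be pictured as a sequence of small functions, each consuming the previous stage's output. The sketch below is a toy illustration under stated assumptions: it processes a single accelerometer axis, uses moving-average smoothing as the preprocessing step, and replaces the trained classifier with a hypothetical variability threshold. None of the names or parameter values come from the cited work.

```python
import statistics


def preprocess(signal, kernel=5):
    """Moving-average smoothing as a stand-in for noise reduction."""
    half = kernel // 2
    smoothed = []
    for i in range(len(signal)):
        chunk = signal[max(0, i - half): i + half + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed


def segment(signal, size, step):
    """Fixed-length windows advanced by `step` samples."""
    return [signal[i:i + size] for i in range(0, len(signal) - size + 1, step)]


def extract_features(window):
    """A few time-domain descriptors per window."""
    return {
        "mean": statistics.fmean(window),
        "std": statistics.pstdev(window),
        "range": max(window) - min(window),
    }


def classify(features, std_threshold=0.1):
    """Hypothetical rule; a trained model would replace this in practice."""
    return "active" if features["std"] > std_threshold else "sedentary"


def activity_recognition_chain(raw_signal, size=100, step=50):
    cleaned = preprocess(raw_signal)
    return [classify(extract_features(w)) for w in segment(cleaned, size, step)]


# Synthetic signal: 200 still samples followed by 200 oscillating samples.
still = [1.0] * 200
moving = [1.0 + (0.5 if i % 20 < 10 else -0.5) for i in range(200)]
labels = activity_recognition_chain(still + moving)
```

Keeping each stage as a separate function makes it straightforward to swap in a different filter, window length, or classifier without touching the rest of the chain.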

Preprocessing Protocols

Data Cleaning and Imputation