Visualizing the Nervous System: A Comprehensive Guide to Microscopy Applications in Neuroscience Research and Drug Development

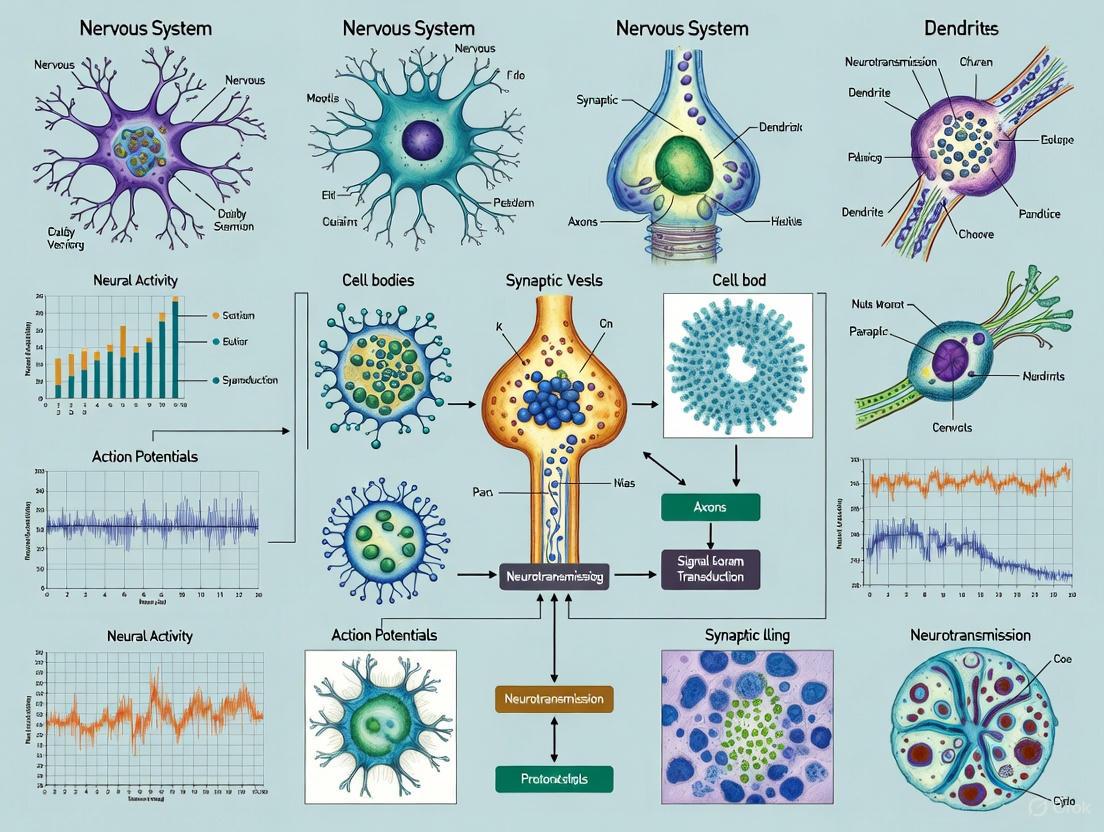


Abstract

This article provides a comprehensive overview of modern microscopy techniques essential for visualizing the nervous system, from the central and peripheral networks to the enteric nervous system. It explores foundational principles of light and electron microscopy and delves into advanced methodologies like multiphoton and light-sheet imaging that enable deep-tissue and live-cell analysis. The content addresses common challenges such as imaging thick tissues and fast dynamic processes, offering practical solutions. Furthermore, it covers validation protocols and comparative analyses of techniques, providing researchers and drug development professionals with the knowledge to select the optimal imaging strategies for studying neural circuitry, neurodegenerative diseases, and evaluating therapeutic interventions.

The Microscopist's Lens: Core Principles and Evolving Techniques for Nervous System Visualization

Light microscopy serves as a cornerstone in biological research, enabling the visualization of cells, their substructures, and molecular components within the nervous system [1]. The progression from fundamental techniques like brightfield to advanced fluorescence methods has profoundly accelerated our understanding of neural architecture and function. This application note details core methodologies, providing structured protocols and data to support researchers and drug development professionals in visualizing the nervous system. The content is framed within a broader research context, emphasizing practical application and quantitative outcomes relevant to the study of neural tissues.

Core Microscopy Modalities: Principles and Applications

The selection of an appropriate microscopy modality is dictated by the research question, the nature of the specimen, and the required resolution. The following table summarizes key characteristics of prevalent techniques in neural imaging.

Table 1: Comparison of Light Microscopy Techniques in Neural Imaging

| Microscopy Technique | Primary Principle | Typical Resolution | Key Applications in Neural Research | Labeling Requirement |
|---|---|---|---|---|
| Brightfield | Transmitted light absorption | ~200 nm [1] | Histology of neural tissues; visualization of stained cell bodies [1]. | Histochemical stains (e.g., NADPH-diaphorase) [1]. |
| Structured Illumination Microscopy (SIM) | Moiré patterns from grid illumination | ~100 nm [2] | Live imaging of synaptic proteins; organelle dynamics in neurons [2]. | Fluorescent proteins or dyes. |
| Two-Photon Fluorescence | Simultaneous absorption of two photons | Sub-micrometer [3] | In vivo deep-tissue imaging of neural activity (e.g., calcium imaging); monitoring dendritic spines [3]. | Genetically encoded calcium indicators (GECIs) [3]. |
| Expansion Microscopy (ExM) | Physical specimen enlargement | ~25-70 nm (post-expansion) [2] [1] | Nanoscale mapping of synaptic proteins; ultrastructural analysis of neural circuits [2] [1]. | Fluorescent antibodies or stains, anchored to a gel [1]. |
| STED Microscopy | Stimulated emission depletion | Nanoscale [2] | Live imaging of functional neuroanatomy; dynamics of presynaptic vesicles [2]. | Fluorescent labels. |

The following workflow diagram illustrates the logical decision-making process for selecting and applying these microscopy techniques in a neural imaging research context.

Detailed Experimental Protocols

Protocol: Expansion Microscopy (ExM) of the Enteric Nervous System

Expansion Microscopy (ExM) is a powerful technique that bypasses the diffraction limit of light by physically enlarging the biological specimen in a swellable hydrogel, allowing for nanoscale resolution on a conventional light microscope [1]. The following workflow and protocol detail its application for the enteric nervous system (ENS).

Objective: To achieve high-resolution structural analysis of the myenteric plexus in mouse colon using ExM, enabling clear visualization of neuronal somata, fibers, and glial cell processes [1].

Materials and Reagents:

- Animals: Adult Balb/c mice (3–5 months old) [1].

- Fixative: 4% Paraformaldehyde (PFA) in PBS.

- Staining Reagents: Primary antibody against Glial Fibrillary Acidic Protein (GFAP) for glial cells, and appropriate secondary antibody. For neurons, NADPH-diaphorase histochemistry reagents [1].

- Anchoring Solution: Acryloyl-X SE (0.1 mg/mL in 1x PBS) [1].

- Monomer Solution for Gel:

  - Sodium acrylate (86 mg/mL)

  - Acrylamide (25 mg/mL)

  - N,N'-Methylenebisacrylamide (1.5 mg/mL)

  - Sodium chloride (117 mg/mL) in 1x PBS [1].

- Gelling Initiators: Tetramethylethylenediamine (TEMED, 2 mg/mL) and Ammonium persulfate (APS, 2 mg/mL) [1].

- Digestion Buffer: 50 mM Tris, 0.5% Triton X-100, 0.29 mg/mL EDTA, pH 8 [1].

- Digestion Enzyme: Proteinase K (8 U/mL) [1].

- Expansion Bath: Deionized water.
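When preparing a batch of monomer solution, the listed concentrations convert directly into masses to weigh out. The following is a minimal sketch of that arithmetic; the concentrations come from the list above, while the function name and the 10 mL batch volume are assumptions chosen for illustration.

```python
# Concentrations (mg/mL) copied from the materials list above.
MONOMER_RECIPE_MG_PER_ML = {
    "sodium acrylate": 86.0,
    "acrylamide": 25.0,
    "N,N'-methylenebisacrylamide": 1.5,
    "sodium chloride": 117.0,
}


def masses_for_volume(recipe_mg_per_ml, volume_ml):
    """Return the mass in mg of each component for a batch of volume_ml."""
    if volume_ml <= 0:
        raise ValueError("volume_ml must be positive")
    return {name: conc * volume_ml for name, conc in recipe_mg_per_ml.items()}


if __name__ == "__main__":
    # 10 mL is a hypothetical batch size, not a value from the protocol.
    for name, mass_mg in masses_for_volume(MONOMER_RECIPE_MG_PER_ML, 10.0).items():
        print(f"{name}: {mass_mg:.1f} mg")
```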

Step-by-Step Procedure:

Tissue Preparation and Staining:

- Excise the mouse colon, open along the mesenteric border, and pin flat in a dissection dish.

- Carefully remove the mucosa and most of the submucosa, leaving the external muscle layers with the intact myenteric plexus.

- Fix the tissue preparation with 4% PFA for 1 hour at room temperature.

- Perform immunostaining for GFAP to label enteric glial cells and/or NADPH-diaphorase histochemistry to label nitrergic neurons [1].

Biomolecule Anchoring:

- Incubate the stained tissue in anchoring solution (Acryloyl-X SE) overnight at room temperature. This step covalently links fluorescent labels and biomolecules to the polymer gel that will form [1].

Gelation:

- Prepare the gelling solution by mixing the monomer solution with TEMED and APS initiators.

- Place the tissue in the gelling solution and incubate at 37°C for 2 hours to allow for complete polymerization into a hydrogel [1].

Proteinase K Digestion:

- Transfer the gel-embedded tissue to digestion buffer containing Proteinase K (8 U/mL).

- Incubate overnight at 37°C. This step digests proteins, allowing the gel to expand isotropically by breaking the mechanical integrity of the tissue while the anchored labels remain in place [1].

Isotropic Expansion:

- Carefully transfer the digested gel to a large volume of deionized water.

- Allow the gel to expand fully, replacing the water 3-4 times over the course of 1-2 hours. A 3–5-fold linear expansion (approximately 4x) is typical with this protocol; a 4x linear expansion corresponds to a ~64x increase in volume [1].

Image Acquisition:

- Image the expanded gel using a standard brightfield or fluorescence microscope. The effective resolution improves by a factor equal to the linear expansion factor, allowing visualization of features otherwise obscured by the diffraction limit [1].

Validation and Troubleshooting:

- Expansion Factor: Measure the dimensions of the gel before and after expansion in water to calculate the linear expansion factor.

- Distortion: This protocol reports a distortion in the X-Y plane of about 7%, which is acceptable for most structural analyses [1].

- Non-uniform Expansion: If expansion is uneven, ensure complete digestion by checking Proteinase K concentration and incubation time.
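The expansion-factor check above, and its effect on resolution, reduce to a few lines of arithmetic. This is a minimal sketch: the function names and the example gel dimensions (5 mm before, 20 mm after) are hypothetical, and the 200 nm starting resolution is the brightfield value from Table 1.

```python
def linear_expansion_factor(pre_expansion_mm, post_expansion_mm):
    """Ratio of gel edge length after expansion to edge length before."""
    if pre_expansion_mm <= 0 or post_expansion_mm <= 0:
        raise ValueError("gel dimensions must be positive")
    return post_expansion_mm / pre_expansion_mm


def volume_expansion_factor(linear_factor):
    """Isotropic expansion scales volume with the cube of the linear factor."""
    return linear_factor ** 3


def effective_resolution_nm(optical_resolution_nm, linear_factor):
    """Resolution referred back to pre-expansion tissue dimensions."""
    return optical_resolution_nm / linear_factor


if __name__ == "__main__":
    factor = linear_expansion_factor(5.0, 20.0)  # hypothetical measurements
    print(f"linear factor: {factor:.1f}x")
    print(f"volume factor: {volume_expansion_factor(factor):.0f}x")
    print(f"effective resolution: {effective_resolution_nm(200.0, factor):.0f} nm")
```

Measuring the gel along more than one axis and comparing the resulting factors is a simple way to spot the non-uniform expansion mentioned above.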

Protocol: Two-Photon Calcium Imaging for Neural Decoding

Two-photon fluorescence imaging, particularly two-photon calcium imaging (2PCI), is an indispensable tool for recording neural activities in living animals with single-cell resolution [3].

Objective: To decode neural activity related to behavior, sensory input, or cognitive processes by recording changes in intracellular calcium concentration using two-photon microscopy [3].

Materials and Reagents:

- Animals: Suitable animal model (e.g., mouse) expressing a genetically encoded calcium indicator (GECI, e.g., GCaMP) in the neuronal population of interest, or prepared with a chemical indicator (e.g., Fluo-4, Fura-2) [3].

- Surgical Supplies for cranial window implantation.

- Two-Photon Microscope with a pulsed infrared laser and high-sensitivity detectors.

- Data Acquisition Software for recording fluorescence time series and synchronizing with behavioral data.

Step-by-Step Procedure:

Animal Preparation:

- Implant a cranial window over the brain region of interest to provide optical access for the microscope objective.

- Ensure robust expression of the calcium indicator in neurons, either via viral injection or in transgenic animal lines [3].

Microscope Setup:

- Set the two-photon laser to the appropriate wavelength for exciting the chosen calcium indicator (e.g., ~920-1000 nm for GCaMP).

- Define the imaging field of view and depth within the tissue.

Data Acquisition:

- Simultaneously record the fluorescence video (movie) of the neuronal population and the relevant behavioral data (e.g., running speed, lever presses, or visual stimuli).

- Collect data over multiple trials to ensure statistical robustness [3].

Data Preprocessing:

- Motion Correction: Align video frames to correct for movement artifacts from breathing or animal motion.

- Source Extraction: Use algorithms (e.g., independent component analysis) to identify and extract the fluorescence signals from individual neurons within the recorded field of view [3].
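As an illustration of the motion-correction step, the sketch below estimates a rigid, whole-pixel shift between each frame and a reference image by phase correlation, then shifts the frame back. This is one common approach and is not necessarily the method used in [3]; the function names are hypothetical, and real pipelines usually add sub-pixel or non-rigid registration.

```python
import numpy as np


def estimate_correction(reference, frame):
    """Return the integer (row, col) shift that re-aligns frame to reference."""
    cross_power = np.fft.fft2(reference) * np.conj(np.fft.fft2(frame))
    cross_power /= np.abs(cross_power) + 1e-12  # keep phase information only
    correlation = np.fft.ifft2(cross_power).real
    peak = np.unravel_index(np.argmax(correlation), correlation.shape)
    # Peaks beyond the midpoint correspond to negative shifts (FFT wrap-around).
    return tuple(
        int(p) if p <= s // 2 else int(p) - s
        for p, s in zip(peak, correlation.shape)
    )


def correct_movie(movie, reference=None):
    """Rigidly align every frame of a (frames, rows, cols) movie."""
    movie = np.asarray(movie, dtype=float)
    if reference is None:
        reference = movie.mean(axis=0)  # simple default template (assumption)
    corrected = np.empty_like(movie)
    for i, frame in enumerate(movie):
        shift = estimate_correction(reference, frame)
        # np.roll wraps pixels around the edges; acceptable for a sketch only.
        corrected[i] = np.roll(frame, shift, axis=(0, 1))
    return corrected
```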

Neural Decoding Analysis:

- Model the relationship between the extracted neural activity (e.g., spike rates, fluorescence transients) and the recorded behavior.

- Apply linear (e.g., linear regression) or nonlinear (e.g., support vector machines, random forests) mathematical models to decode the behavioral state from the neural activity patterns [3].
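The decoding step can be sketched with a ΔF/F normalization followed by an ordinary least-squares linear decoder. This is an illustrative outline, not the analysis from [3]: the function names, the 10th-percentile baseline, and the array layout (neurons × frames) are assumptions, and a real analysis would evaluate the decoder on held-out trials.

```python
import numpy as np


def delta_f_over_f(traces, baseline_percentile=10.0):
    """Normalize raw fluorescence (neurons x frames) to dF/F.

    The baseline F0 is taken as a low percentile of each trace, which is
    one common convention among several.
    """
    traces = np.asarray(traces, dtype=float)
    f0 = np.percentile(traces, baseline_percentile, axis=1, keepdims=True)
    return (traces - f0) / f0


def _design_matrix(activity):
    """Frames x (neurons + 1) matrix with a trailing intercept column."""
    activity = np.asarray(activity, dtype=float)
    return np.column_stack([activity.T, np.ones(activity.shape[1])])


def fit_linear_decoder(activity, behavior):
    """Least-squares weights mapping neural activity to a behavioral variable."""
    weights, *_ = np.linalg.lstsq(_design_matrix(activity), behavior, rcond=None)
    return weights


def predict(activity, weights):
    """Decode the behavioral variable from activity using fitted weights."""
    return _design_matrix(activity) @ weights
```

Nonlinear decoders such as support vector machines or random forests would replace `fit_linear_decoder` and `predict` while keeping the same inputs.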

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for Advanced Neural Imaging Protocols

| Reagent / Material | Function / Application | Example Use Case |
|---|---|---|
| Acryloyl-X SE | Anchoring agent that covalently links biomolecules (proteins, labels) to the polyelectrolyte gel matrix in ExM [1]. | Prevents labeled biomarkers from diffusing away during the expansion process in ExM [1]. |
| Sodium Acrylate Monomer | Primary component of the swellable polyelectrolyte gel used in ExM [1]. | Forms the expandable hydrogel network that physically enlarges the biological specimen [1]. |
| Proteinase K | Broad-spectrum serine protease used to digest proteins in gel-embedded samples [1]. | Enables isotropic hydrogel expansion by breaking down the native protein structure of the tissue after gelation [1]. |
| Genetically Encoded Calcium Indicators (GECIs) | Fluorescent proteins (e.g., the GCaMP series) whose brightness changes with intracellular calcium concentration [3]. | Reporting neural activity (action potentials) in vivo via two-photon calcium imaging for neural decoding studies [3]. |
| Glial Fibrillary Acidic Protein (GFAP) Antibodies | Immunohistochemical markers for astrocytes and enteric glial cells [1]. | Labeling and visualizing glial cell morphology and distribution in the central and enteric nervous systems [1]. |
| NADPH-diaphorase Histochemistry Reagents | Enzymatic staining method that selectively labels nitrergic neurons [1]. | Visualizing specific subpopulations of neurons in the enteric nervous system and brain [1]. |

Volume Electron Microscopy (VEM) has established itself as an indispensable tool in neuroscience research, providing unprecedented, nanometer-resolution insight into the intricate architecture of neurons and synapses. By enabling the detailed reconstruction of neural circuits in three dimensions, VEM techniques allow researchers to infer synaptic function from ultrastructural features and map the complex connectivity patterns that underlie brain function [4]. This application note details the protocols and key findings from contemporary VEM studies, with a specific focus on its application in analyzing human postmortem brain tissue. The ability to perform such detailed analysis on human tissue provides a critical bridge between experimental animal models and human neurobiology, offering direct insights into the microanatomical foundations of human cognition and the pathological changes associated with neurological and psychiatric disorders [4] [5].

Application Notes: Key Insights from Recent Studies

Validation of Postmortem Human Brain Tissue for Ultrastructural Analysis

A significant concern in human neuroscience has been whether the ultrastructural correlates of synaptic function observed in experimental models are preserved in postmortem human brain tissue. Recent VEM studies have convincingly demonstrated that fundamental synaptic relationships remain intact despite postmortem processes and long-term tissue storage [4].

- Preserved Functional Correlates: Quantitative analysis of human dorsolateral prefrontal cortex (DLPFC) tissue using Focused Ion Beam-Scanning Electron Microscopy (FIB-SEM) revealed that key ultrastructural features predictive of synaptic function in experimental models were maintained. These include the correlation between presynaptic active zone size and neurotransmitter release probability, and the relationship between postsynaptic density (PSD) size and AMPA receptor abundance [4].

- Tissue Integrity: Studies of human medial entorhinal cortex (MEC) showed excellent preservation of cellular and organelle plasma membranes with minimal signs of autolysis, confirming that postmortem tissue is suitable for detailed ultrastructural analysis when proper preservation protocols are followed [5].

Unique Synaptic Characteristics of Human Cortical Regions

VEM analysis of different human brain regions has revealed distinct synaptic organizational patterns that may underlie their specialized functional roles.

- Entorhinal Cortex Specialization: A comprehensive 3D analysis of all layers of the human medial entorhinal cortex (MEC) reconstructed 12,974 synapses at the ultrastructural level, revealing a distinct set of synaptic features that differentiate this region from other human cortical areas [5]. While layers I and VI exhibited several unique synaptic characteristics, the overall ultrastructural organization throughout the MEC was predominantly similar, suggesting a consistent computational architecture across layers with specialized input and output layers [5].

- Laminar Variations: Within the MEC, specific layers showed specialized features. For instance, pyramidal neuron dendritic spines often contained a spine apparatus or smooth endoplasmic reticulum, and were frequently observed to receive dual innervation from both Type 1 (excitatory) and Type 2 (inhibitory) synapses, indicating complex integration capabilities [4] [5].

Table 1: Synaptic Characteristics Across Human Cortical Regions Based on VEM Analysis

| Cortical Region | Synaptic Density | Excitatory:Inhibitory Ratio | Unique Features | Postsynaptic Targets |
|---|---|---|---|---|
| DLPFC Layer 3 | High | Not specified | Dually innervated spines receiving both Type 1 and Type 2 synapses | Dendritic spines, dendritic shafts, neuronal somata |
| MEC (all layers) | 12,974 synapses in sampled volume | Varied by layer | Distinct synaptic features differentiating from other cortical areas | Dendritic shafts (spiny and aspiny), spines, somata |
| MEC Layer I | Distinct from other layers | Distinct from other layers | Unique synaptic characteristics | Not specified |
| MEC Layer VI | Distinct from other layers | Distinct from other layers | Unique synaptic characteristics | Not specified |

Correlation Between Synaptic Ultrastructure and Function

VEM enables the quantification of ultrastructural features that directly reflect synaptic function and metabolic capacity.

- Functional Inference: The size of the postsynaptic density (PSD) strongly correlates with excitatory postsynaptic potential amplitude and AMPA receptor abundance, allowing researchers to infer synaptic strength from ultrastructural measurements [4].

- Energetic Capacity: Mitochondrial abundance, size, and morphology within presynaptic boutons reflect the energy demands of synaptic transmission, with larger mitochondria associated with higher metabolic requirements [4].

- Coordinated Pre- and Postsynaptic Specialization: VEM analysis consistently demonstrates coordinated scaling of pre- and postsynaptic elements, reflecting their functional interdependence. Presynaptic active zone size correlates with PSD size, and presynaptic mitochondrial abundance relates to PSD size, indicating matched functional capacity [4].
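Relationships of this kind are typically quantified by correlating paired per-synapse measurements extracted from the 3D reconstructions. The sketch below computes a Pearson correlation coefficient; the function name is hypothetical and the numbers in the usage example are placeholders, not data from [4].

```python
import numpy as np


def pearson_r(x, y):
    """Pearson correlation between two paired measurement vectors."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    if x.shape != y.shape or x.size < 2:
        raise ValueError("x and y must be paired vectors with at least 2 values")
    return float(np.corrcoef(x, y)[0, 1])


if __name__ == "__main__":
    # Placeholder values for illustration only (arbitrary units).
    active_zone_size = [0.02, 0.05, 0.04, 0.09, 0.07]
    psd_size = [0.03, 0.06, 0.04, 0.10, 0.08]
    print(f"r = {pearson_r(active_zone_size, psd_size):.2f}")
```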

Table 2: Ultrastructural-Functional Relationships in Synapses Revealed by VEM

| Ultrastructural Feature | Functional Correlation | Biological Significance | Measurement Approach |
|---|---|---|---|
| Postsynaptic Density (PSD) Size | Correlates with excitatory postsynaptic potential amplitude and AMPA receptor abundance [4] | Indicator of synaptic strength and receptor content | 3D volumetric analysis from VEM data |
| Presynaptic Active Zone Size | Reflects glutamate release probability [4] | Indicator of neurotransmitter release capacity | 3D reconstruction of presynaptic specializations |
| Mitochondrial Volume & Abundance | Reflects ATP production capacity for synaptic transmission [4] | Indicator of metabolic support and synaptic endurance | Volumetric analysis of organelles in presynaptic boutons |
| Spine Apparatus Presence | Associated with synaptic plasticity and calcium regulation | Indicator of postsynaptic computational capability | Identification of intracellular organelles in spines |

Experimental Protocols

FIB-SEM for Synaptic Analysis in Human Postmortem Tissue

Tissue Preparation and Preservation

The following protocol has been optimized for human postmortem brain tissue, incorporating modifications to address the challenges of autolysis and preservation:

- Tissue Acquisition and Fixation: Obtain postmortem human brain samples from the middle frontal gyrus (DLPFC) or medial temporal lobe (entorhinal cortex) within the postmortem interval. Dissect tissue samples containing all cortical layers and underlying white matter. Immersion-fix in 4% paraformaldehyde/0.2% glutaraldehyde for 48 hours [4].

- Cryoprotection and Storage: Section fixed tissue at 50-μm intervals using a vibratome. Transfer sections to cryoprotectant solution (30% ethylene glycol/30% glycerol) and store at -30°C for extended periods (protocol validated for up to 8 years) [4].

- EM Sample Preparation: Modify the approach developed by Hua et al. (2015) to optimize preservation, staining, and contrast of postmortem human brain tissue sections from long-term storage. Key modifications include adjusted staining times and concentrations to account for tissue characteristics [4].

- Heavy Metal Staining: Enhance contrast using osmium tetroxide, uranyl acetate, and lead aspartate to ensure sufficient signal for FIB-SEM imaging throughout the tissue depth [4].

FIB-SEM Data Acquisition

- Sample Mounting: Mount stained samples on SEM stubs using conductive adhesive.

- Parameter Optimization: Optimize SEM parameters for neural tissue:

  - Beam Current: Use lower beam currents (e.g., 3.1 pA) to resolve fine features while compensating for potential signal-to-noise issues with longer pixel dwell times [6].

  - Beam Voltage: Balance between penetration depth and surface detail resolution (typically 1-5 kV for neural tissue).

  - Pixel Dwell Time: Adjust dwell time (e.g., 10-30 μs) to achieve sufficient signal-to-noise ratio without excessive charging or prohibitively long acquisition times [6].

- Sequential Milling and Imaging: Use a focused ion beam to mill tissue at 5 nm step-sizes, followed by SEM imaging of each newly exposed surface. Iterate through these steps until the entire tissue block is imaged [4].

- Data Collection: Acquire serial images with ultrafine Z-resolution (5 nm) to generate comprehensive 3D volumes of neuropil for subsequent analysis.
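The trade-off between dwell time and acquisition time can be estimated before committing to a run. The following rough sketch uses the 5 nm milling step and a 10 μs dwell time from the parameters above; the 10 μm block depth and 2048 × 2048 pixel frame are hypothetical, and milling, stage, and autofocus overheads are ignored.

```python
import math


def n_slices(depth_nm, step_nm=5.0):
    """Number of milling/imaging cycles needed to cover a given depth."""
    return math.ceil(depth_nm / step_nm)


def seconds_per_image(width_px, height_px, dwell_us):
    """SEM imaging time for one frame (beam dwell only, no overhead)."""
    return width_px * height_px * dwell_us * 1e-6


def imaging_hours(depth_nm, width_px, height_px, dwell_us, step_nm=5.0):
    """Total SEM imaging time in hours, excluding ion-beam milling time."""
    total_s = n_slices(depth_nm, step_nm) * seconds_per_image(
        width_px, height_px, dwell_us
    )
    return total_s / 3600.0


if __name__ == "__main__":
    # Hypothetical run: 10 um deep block, 2048 x 2048 px frames, 10 us dwell.
    print(n_slices(10_000))
    print(f"{imaging_hours(10_000, 2048, 2048, 10.0):.1f} h")
```

Because imaging time scales linearly with dwell time, moving from 10 μs to 30 μs triples the estimate, which is why dwell time is tuned against signal-to-noise rather than simply maximized.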

LICONN: Light-Microscopy-Based Connectomics

A groundbreaking methodological advancement, LICONN combines iterative hydrogel expansion with diffraction-limited light microscopy to achieve synapse-level reconstruction while incorporating molecular information.

Iterative Hydrogel Expansion Protocol

- Perfusion and Initial Fixation: Perfuse mice transcardially with a fixative solution containing hydrogel monomer (10% acrylamide, AA) to equip cellular molecules with vinyl residues for subsequent hydrogel incorporation [7].

- Epoxide Functionalization: Collect and slice brains, then treat with multi-functional epoxide compounds (glycidyl methacrylate, GMA, and glycerol triglycidyl ether, TGE) to functionalize proteins with acrylate groups for enhanced hydrogel anchoring and tissue stabilization [7].

- First Hydrogel Polymerization: Polymerize an expandable acrylamide-sodium acrylate hydrogel, integrating functionalized cellular molecules into the network. Disrupt mechanical cohesiveness using heat and chemical denaturation, achieving approximately 4× expansion [7].

- Optional Immunolabelling: Apply immunolabelling at this stage to visualize specific proteins while maintaining structural context [7].

- Stabilization and Second Hydrogel: Apply a non-expandable stabilizing hydrogel to prevent shrinkage, then introduce a second swellable hydrogel that intercalates with the first network [7].

- Chemical Neutralization: Optimize hydrogel composition and neutralize unreacted groups after each polymerization step to prevent cross-links between hydrogels and ensure independent expansion [7].

- Protein-Density Staining: Comprehensively visualize cellular structures using fluorophore NHS esters to map primary amines abundant on proteins [7].

- Final Expansion: Achieve approximately 16× linear expansion (15.44 ± 1.68, mean ± s.d.) with triple-hydrogel-sample hybrids, translating to effective resolutions of approximately 20 nm laterally and 50 nm axially when imaged with high-NA water-immersion objectives [7].

Imaging and Reconstruction

- Spinning-Disc Confocal Imaging: Image expanded samples using high-numerical-aperture (NA = 1.15) water-immersion objective lenses with effective voxel sizes of approximately 10 × 10 × 25 nm³ (native tissue scale) [7].

- Automated Volume Fusion: Acquire partially overlapping subvolumes arranged on a grid pattern and implement scalable optical flow-based image montaging and alignment (SOFIMA) for seamless volume fusion [7].

- Deep-Learning-Based Segmentation: Apply flood-filling networks and other machine learning algorithms for automated reconstruction of neuronal structures through multiple tissue slices, achieving traceability of the finest neuronal structures including axons and dendritic spines [7] [8].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Volume EM and Expansion Microscopy

| Reagent/Material | Function | Application |
|---|---|---|
| Glycidyl Methacrylate (GMA) | Multi-functional epoxide compound for protein functionalization with acrylate groups [7] | LICONN: Hydrogel anchoring of cellular molecules |
| Glycerol Triglycidyl Ether (TGE) | Triple-epoxide compound for enhanced biomolecule fixation and stabilization [7] | LICONN: Tissue stabilization and hydrogel incorporation |
| Acrylamide-Sodium Acrylate Hydrogel | Expandable polymer network for physical tissue expansion [7] | LICONN: Iterative expansion to achieve ~16× linear enlargement |
| Fluorophore NHS Esters | Amine-reactive dyes for comprehensive protein-density staining [7] | LICONN: Pan-protein labeling for structural visualization |
| Osmium Tetroxide | Heavy metal fixative and contrast agent for membrane preservation [4] | FIB-SEM: Lipid membrane stabilization and electron density |
| Uranyl Acetate | Heavy metal stain for nucleic acids and proteins [4] | FIB-SEM: Enhanced contrast of cellular structures |
| Lead Aspartate | Aqueous lead stain for enhanced tissue contrast [4] | FIB-SEM: Additional electron density for visualization |

Volume electron microscopy, particularly FIB-SEM, together with the emerging light-microscopy-based LICONN method, has transformed our ability to analyze the nanoscale architecture of neurons and synapses in both animal models and human postmortem tissue. The protocols detailed in this application note provide researchers with robust methodologies for extracting quantitative ultrastructural data that reflects synaptic function, connectivity, and metabolic capacity. The validation of human postmortem tissue for such analyses opens new avenues for directly investigating the synaptic underpinnings of human cognition and the pathological changes in neurological and psychiatric disorders. As these technologies continue to evolve, particularly with the integration of molecular information in approaches like LICONN, neuroscience research stands to gain increasingly comprehensive insights into the structural and functional organization of the nervous system.

The nervous system's complex architecture, spanning from nanoscopic synapses to macroscopic organ-scale networks, presents a unique challenge for comprehensive visualization. Understanding brain function and the mechanisms of neurological diseases requires tools that can bridge these spatial scales, providing insights into molecular composition, cellular connectivity, and system-wide organization. Recent advances in microscopy, tissue preparation, and computational analysis now allow researchers to image all three divisions of the nervous system, the Central (CNS), Peripheral (PNS), and Enteric (ENS), at resolutions and scales that were previously out of reach. This article details imaging applications and protocols across these neural domains, giving researchers and drug development professionals methodologies to visualize the nervous system in its full complexity.

Advanced Imaging Modalities for Nervous System Visualization

Performance Comparison of Modern Neural Imaging Techniques

The following table summarizes key performance metrics for several advanced imaging modalities discussed in this article, highlighting their respective advantages for different nervous system applications.

Table 1: Performance Metrics of Advanced Imaging Technologies for Nervous System Visualization

| Imaging Technology | Effective Resolution | Imaging Depth / Volume | Imaging Speed | Primary Applications in Nervous System |
|---|---|---|---|---|
| LICONN [7] | ~20 nm lateral, ~50 nm axial (after 16x expansion) | ~1 × 10⁶ µm³ volumes | 17 MHz (voxel rate); 0.47 Teravoxels in 6.5 hours | Dense connectomic reconstruction of brain tissue; synaptic-level circuit mapping |
| ExA-SPIM [9] | 375 nm lateral, 750 nm axial (after 4x expansion) | Centimeter-scale samples (entire mouse brains) | Up to 946 Megavoxels/second | Brain-wide imaging at cellular and subcellular resolution; single neuron reconstruction across entire mouse brain |
| Blockface-VISoR [10] | Subcellular resolution | Entire adult mouse body | 40 hours for full mouse body (70 TB/data channel) | Whole-body mapping of peripheral nerve architecture; single-fiber projection tracing |
| LF-MP-PAM [11] | Single-cell resolution | >1.1 mm in living tissue | Not specified | Label-free metabolic imaging of NAD(P)H in living brain; potential for human intraoperative use |
| Expansion Microscopy (ENS) [12] [1] | Nanoscale (after 3-5x expansion) | Tissue sections (mouse colon) | Compatible with standard microscopy | Nanoscale visualization of enteric neuronal and glial architecture |
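The LICONN figures in this table can be sanity-checked against the effective voxel size quoted earlier (approximately 10 × 10 × 25 nm³ at native tissue scale). The sketch below is a back-of-the-envelope estimate with a hypothetical function name; it ignores tile overlap and rounding in the reported values.

```python
def voxel_count(volume_um3, voxel_size_nm):
    """Number of voxels needed to sample a tissue volume.

    volume_um3    -- tissue volume in cubic micrometres (native scale)
    voxel_size_nm -- (x, y, z) voxel edge lengths in nanometres (native scale)
    """
    vx, vy, vz = voxel_size_nm
    voxel_volume_nm3 = vx * vy * vz
    return volume_um3 * 1e9 / voxel_volume_nm3  # 1 um^3 = 1e9 nm^3


if __name__ == "__main__":
    n = voxel_count(1e6, (10.0, 10.0, 25.0))
    print(f"{n / 1e12:.2f} teravoxels")
```

The estimate of roughly 0.4 teravoxels is the same order of magnitude as the 0.47 teravoxels listed in the table; the difference may reflect overlapping tiles or the approximate nature of the quoted numbers, which is an assumption rather than something stated in [7].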

Technology Workflows and Relationships

The following diagram illustrates the decision-making workflow for selecting the appropriate imaging modality based on the target nervous system division and research objective.

Application Notes and Experimental Protocols

Central Nervous System (CNS): Synapse-Level Connectomic Reconstruction

Protocol: LICONN (Light-Microscopy-Based Connectomics) for Dense Cortical Circuit Reconstruction [7]

The LICONN method enables dense reconstruction of brain circuitry with synaptic resolution by integrating iterative hydrogel expansion with deep-learning-based segmentation, directly incorporating molecular information into connectomic maps.

Step 1: Perfusion and Initial Fixation

- Perfuse mice transcardially with a hydrogel monomer-containing fixative solution (10% acrylamide).

- This step equips cellular molecules with vinyl residues for subsequent co-polymerization.

Step 2: Tissue Processing and Epoxide-Based Anchoring

- Collect and slice brains into sections.

- Incubate slices with multi-functional epoxide compounds (Glycidyl Methacrylate, GMA; Glycerol Triglycidyl Ether, TGE) to functionalize proteins broadly with acrylate groups for hydrogel anchoring and to further stabilize biomolecules.

Step 3: First Hydrogel Polymerization and Expansion

- Polymerize an expandable acrylamide-sodium acrylate hydrogel around the tissue, integrating functionalized cellular molecules into the network.

- Disrupt mechanical cohesiveness using heat and chemical denaturation.

- Achieve approximately 4-fold linear expansion.

Step 4: Optional Immunolabeling

- Apply immunolabeling at this stage to visualize specific proteins of interest in the expanded tissue.

Step 5: Second Hydrogel Application and Expansion

- Apply a non-expandable stabilizing hydrogel to prevent shrinkage.

- Intercalate a second swellable hydrogel with the first network.

- Chemically neutralize unreacted groups after each polymerization to prevent cross-links and ensure independent hydrogel expansion.

- Achieve a final expansion factor of approximately 16-fold.

Step 6: Pan-Protein Staining and Imaging

- Perform protein-density staining using fluorophore-conjugated N-hydroxysuccinimidyl (NHS) esters to comprehensively visualize cellular structures.

- Image using a high-numerical-aperture (NA = 1.15) water-immersion spinning-disk confocal microscope.

- Acquire large volumes by tiling (e.g., 132 subvolumes), then fuse using automated algorithms like SOFIMA.

Research Reagent Solutions for LICONN [7]

- Acrylamide Monomer (10%): Primary component of the swellable hydrogel matrix.

- Glycidyl Methacrylate (GMA): Multi-functional epoxide for broad protein functionalization with acrylate groups.

- Glycerol Triglycidyl Ether (TGE): Triple-epoxide compound for biomolecule fixation and stabilization.

- Fluorophore NHS Esters: For pan-protein staining of amine groups on cellular proteins.

- Primary & Secondary Antibodies: For specific molecular labeling post-expansion.

Peripheral Nervous System (PNS): Whole-Body Nerve Mapping

Protocol: Blockface-VISoR for System-Wide PNS Architecture Mapping [10]

This protocol enables high-definition panoramic imaging of the entire mouse body to map peripheral nerves at subcellular resolution, revealing single-fiber projection paths.

Step 1: Whole-Body Clearing and Hydrogel Embedding

- Utilize the ARCHmap protocol for whole-body clearing and hydrogel embedding of adult mouse specimens.

- This process renders large, heterogeneous biological samples optically transparent and structurally stabilized.

Step 2: In Situ Sectioning and 3D Blockface Imaging

- Mount the prepared sample in the Blockface-VISoR imaging system, which integrates a precision vibratome.

- For each imaging cycle:

  - Capture a ~600 μm-depth 3D surface image using the VISoR (Volumetric Imaging with Synchronized on-the-fly scan and Readout) technology.

  - Automatically remove a 400-μm-thick tissue layer with the vibratome.

- Repeat the cycle until the entire sample is processed.

Step 3: Automated Image Stitching and 3D Reconstruction

- Use automated inter-section stitching algorithms to perform seamless 3D alignment.

- Utilize ~200-μm overlapping regions between adjacent sections for accurate registration.

- This generates a unified, massive-scale dataset (e.g., ~70 terabytes per fluorescence channel for an entire mouse).
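
The relationship between imaging depth, cut thickness, and overlap in Steps 2 and 3 is simple arithmetic, and a minimal sketch may help when planning a run. The function name and the 20 mm specimen thickness below are hypothetical; only the ~600 μm imaging depth and 400 μm cut thickness come from the protocol.

```python
import math

def blockface_cycles(sample_thickness_um, imaged_depth_um=600.0, cut_thickness_um=400.0):
    """Return (number of image/cut cycles, overlap between consecutive slabs in um)."""
    if cut_thickness_um <= 0 or imaged_depth_um < cut_thickness_um:
        raise ValueError("imaged depth must be at least the cut thickness")
    # Tissue imaged twice at each section boundary, used for registration.
    overlap_um = imaged_depth_um - cut_thickness_um
    # The first cycle covers imaged_depth_um; every later cycle exposes
    # cut_thickness_um of previously unimaged tissue.
    remaining_um = max(sample_thickness_um - imaged_depth_um, 0.0)
    cycles = 1 + math.ceil(remaining_um / cut_thickness_um)
    return cycles, overlap_um

# Hypothetical 20 mm thick specimen (illustrative value, not from the protocol).
cycles, overlap = blockface_cycles(20_000.0)
print(cycles, overlap)
```

With the protocol's default values the computed overlap is 200 μm, which matches the overlap used for inter-section registration in Step 3.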

Step 4: Nerve Tracing and Analysis

- Combine with various labeling methods (transgenic, viral, immunostaining) to visualize different nerve types.

- Employ computational tools to trace single-fiber projection paths, map vascular distribution of sympathetic nerves, and resolve the overall architecture of complex nerves like the vagus.

Research Reagent Solutions for Blockface-VISoR [10]

- ARCHmap Clearing Reagents: For whole-body tissue clearing and hydrogel embedding.

- Transgenic Mouse Models: For cell-type-specific labeling of neuronal populations.

- Viral Vectors (e.g., AAV): For targeted delivery of fluorescent reporters to specific neural circuits.

- Primary Antibodies (e.g., anti-beta III tubulin): For immunostaining of neuronal structures.

Enteric Nervous System (ENS): Nanoscale Visualization in the Gut

Protocol: Expansion Microscopy for Mouse Enteric Nervous System [12] [1]

This protocol provides a detailed and reproducible method for applying ExM to mouse colonic ENS tissue, enabling nanoscale resolution of neuronal and glial structures using conventional microscopes.

Step 1: Tissue Preparation

- Dissect the mouse colon, open along the mesenteric border, and pin it flat.

- Remove the mucosa and most of the submucosa, creating a preparation of the external muscle layers with the exposed myenteric plexus.

Step 2: Staining

- For neurons: Perform NADPH-diaphorase histochemistry to selectively stain nitrergic neurons (suitable for brightfield microscopy).

- For glial cells: Perform immunofluorescence staining for Glial Fibrillary Acidic Protein (GFAP).

Step 3: Anchoring

- Incubate stained tissues overnight in Acryloyl-X, SE (AcX) to ensure covalent linkage of biomolecules to the subsequent hydrogel.

Step 4: Gelation

- Embed samples in a polyacrylamide-based swellable hydrogel.

- Prepare the gelling solution on ice, containing monomers, crosslinkers, 4-hydroxy-TEMPO (4HT), TEMED, and Ammonium Persulfate (APS).

- Flatten small tissue fragments under a coverslip during polymerization to prevent distortion.

Step 5: Digestion

- Trim excess gel and incubate tissues in a digestion buffer containing Proteinase K at 50°C overnight.

- Critical Parameter: For ENS tissue with relatively low collagen content in the ganglia, Proteinase K digestion alone is sufficient, avoiding the need for collagenase, which minimizes the risk of over-digestion and structural distortion.

Step 6: Expansion

- Immerse gels in deionized water and allow them to swell through three sequential 15-minute washes.

- This typically yields a 3–5-fold linear expansion, allowing clear visualization of neuronal somata, fibers, and fine glial processes.
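
Because the expansion factor varies between gels, distances measured after expansion need to be rescaled before they are reported in biological units. The sketch below shows one way to do this, assuming the gel width is measured before and after swelling; the function names and the example measurements are hypothetical.

```python
def linear_expansion_factor(pre_expansion_mm, post_expansion_mm):
    """Linear expansion factor from gel width measured before and after swelling."""
    if pre_expansion_mm <= 0 or post_expansion_mm <= 0:
        raise ValueError("gel dimensions must be positive")
    return post_expansion_mm / pre_expansion_mm

def to_pre_expansion_um(measured_um, factor):
    """Convert a distance measured in the expanded gel to pre-expansion units."""
    return measured_um / factor

# Hypothetical measurements: a 5 mm gel that swells to 20 mm.
factor = linear_expansion_factor(pre_expansion_mm=5.0, post_expansion_mm=20.0)
print(factor)                             # 4.0
print(to_pre_expansion_um(40.0, factor))  # 10.0
```

A factor outside the 3–5-fold range quoted above may indicate incomplete digestion or insufficient washing, and is worth checking before quantification.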

Integrated AI-Powered 3D Pathology for GI Disease Diagnosis

Protocol: AI-Enhanced 3D Analysis of Human Colon Tissues [13]

This protocol integrates 3D imaging with artificial intelligence to improve the diagnosis of gastrointestinal diseases like ulcerative colitis and Hirschsprung's disease by providing quantitative analysis of the ENS and tissue microenvironment.

Step 1: Tissue Acquisition and Fixation

- Obtain human colon biopsy or surgical specimens.

- Fix samples overnight in 4% Paraformaldehyde (PFA) at 4°C.

Step 2: Tissue Clearing

- For Biopsy Samples: Use a rapid protocol involving decolorization, followed by electrophoretic tissue clearing (1.5 mA, 35°C, 4 hours) for rapid lipid removal.

- For Surgical Samples: Use a longer electrophoretic clearing step (1.5 mA, 35°C, 16 hours) to effectively clear larger tissue pieces.

Step 3: Immunostaining

- Incubate cleared tissues with primary antibodies (e.g., rabbit anti-beta III tubulin for neurons; mouse anti-E-Cadherin for epithelium) diluted in a specialized staining solution for 1-3 days.

Step 4: 3D Imaging

- Image the cleared and stained samples using appropriate 3D microscopy techniques (e.g., light-sheet, confocal).

Step 5: AI-Powered Analysis

- Process the 3D image data using machine learning algorithms trained for specific tasks.

- This enables automated segmentation, quantification, and classification of crypt structures, neural networks, and other histological features in 3D, which can improve diagnostic precision and efficiency relative to traditional 2D histology.

Table 2: Key Reagents for Enteric Nervous System Expansion Microscopy and 3D Pathology

| Reagent Category | Specific Example | Function in Protocol |

|---|---|---|

| Anchoring Agent | Acryloyl-X, SE (AcX) | Covalently links biomolecules to the swellable hydrogel matrix. |

| Hydrogel Monomers | Sodium Acrylate, Acrylamide, N,N'-Methylenebisacrylamide | Forms the expandable polyacrylamide-based hydrogel scaffold. |

| Digestion Enzyme | Proteinase K | Digests proteins to disrupt tissue mechanical cohesiveness for uniform expansion. |

| Polymerization Initiator/Catalyst | Ammonium Persulfate (APS), TEMED | Initiates and catalyzes the free-radical polymerization of hydrogel monomers. |

| Neuronal Marker | NADPH-diaphorase | Histochemical stain for nitrergic neurons in the ENS. |

| Glial Marker | Anti-GFAP Antibody | Immunofluorescence label for enteric glial cells. |

| Pan-Neuronal Marker | Anti-beta III tubulin Antibody | General immunohistochemical marker for neurons in 3D pathology. |

| Clearing Reagents | CHAPS, N-Methyldiethanolamine | Forms decolorization and clearing solutions for lipid removal and tissue transparency. |

Discussion and Future Perspectives

The integration of advanced imaging modalities with sophisticated computational analysis is fundamentally transforming our ability to visualize and quantify the structure of the entire nervous system. Techniques like LICONN bring molecular phenotyping to synapse-level connectomics, while methods like Blockface-VISoR and ExA-SPIM break through previous barriers in imaging scale and speed, enabling system-level exploration of neural networks from the central brain to the peripheral extremities. In the ENS, once a technically challenging frontier, methods like expansion microscopy and AI-powered 3D pathology are now revealing the intricate architecture underlying gastrointestinal function and disease with unprecedented detail.

These technologies are not merely incremental improvements but represent a paradigm shift towards holistic, multi-scale neuroscience. They enable researchers to pose and answer questions about neural development, plasticity, degeneration, and repair that were previously inaccessible. As these protocols become more refined and accessible, they are expected to accelerate both basic neuroscience research and the drug discovery pipeline, providing deeper insights into the pathological mechanisms of neurological and neurogastrointestinal disorders and facilitating the development of targeted therapeutic interventions.

Within the context of microscopy applications in nervous system visualization research, the precise targeting and visualization of specific neuronal populations and circuits represent a fundamental objective. Understanding the brain's intricate wiring and functional architecture requires tools that can delineate these relationships with high molecular and cellular specificity. Genetic and reporter tools have emerged as indispensable assets for this purpose, bridging the gap between anatomical connectivity and functional circuit analysis. These technologies enable researchers to mark, monitor, and manipulate defined neural ensembles based on their activity, connectivity, or molecular identity, thereby transforming our ability to decipher the nervous system's complexity [14]. This application note details key methodologies and protocols that leverage these tools for advanced neuroscience investigation and drug development.

A Toolkit of Genetic and Reporter Strategies

Multiple, complementary strategies exist for visualizing neuronal populations, each with distinct mechanisms, temporal profiles, and applications. The choice of tool depends on the experimental goals, such as the need for temporal control, permanence of labeling, or compatibility with other techniques.

Table 1: Comparison of Major Genetic and Reporter Tool Strategies

| Tool Strategy | Mechanism | Temporal Control | Label Permanence | Key Applications |

|---|---|---|---|---|

| Activity-Dependent Tagging (e.g., TRAP2) | Tamoxifen-inducible Cre recombinase (CreERT2) expressed from an immediate early gene promoter (e.g., c-Fos) in recently active neurons; recombination occurs only while 4-OHT is present [15]. | High (hours). Captures ensembles active during a specific time window. | Permanent. Once recombination occurs, label is persistently expressed. | Mapping ensembles encoding specific memories or behaviors [15]. |

| Viral Vectors with Synthetic Promoters (e.g., AAV-RAM) | AAV-delivered gene construct under a synthetic Robust Activity Marker (RAM) promoter, which is silenced by doxycycline and expressed upon its removal [15]. | Moderate (days). Labels neurons active during the doxycycline-free period. | Transient (without integration). Lasts for the lifespan of the AAV episome. | Tagging neuronal populations active during distinct learning phases [15]. |

| Endogenous Protein Visualization (e.g., cFos IHC) | Immunohistochemical detection of the endogenous c-Fos protein, which is rapidly upregulated after neuronal activation [15]. | Low. Captures a snapshot of recent activity (typically 1-2 hours post-stimulus). | Transient. Protein degrades after several hours. | Validating activity patterns and confirming specificity of other tagging methods [15]. |

| Transsynaptic Tracers | Engineered viruses (e.g., rabies) or proteins that travel across synapses, labeling neurons pre- or post-synaptic to a starter population [14]. | Varies. Can be controlled by the timing of tracer injection and use of genetically defined starter cells. | Permanent or transient, depending on the system. | Mapping direct input (retrograde) or output (anterograde) connectivity of a defined cell population [14]. |

The following diagram illustrates the logical workflow for selecting an appropriate genetic or reporter tool based on primary experimental objectives.
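
As a rough textual stand-in for that selection logic, the sketch below encodes the rows of Table 1 as a small decision function. It is a simplification under stated assumptions: the function name, argument names, and the reduction of each row to a single rule are illustrative and not part of the cited protocols.

```python
def suggest_tool(goal, tagging_window="hours", permanent=True):
    """Suggest a Table 1 strategy.

    goal: 'connectivity' or 'activity'
    tagging_window: 'snapshot' (about 1-2 h before fixation), 'hours', or 'days'
    permanent: whether the label must persist indefinitely
    """
    if goal == "connectivity":
        return "transsynaptic tracer"
    if goal != "activity":
        raise ValueError("goal must be 'connectivity' or 'activity'")
    if tagging_window == "snapshot":
        return "c-Fos immunohistochemistry"
    if tagging_window == "days" and not permanent:
        return "AAV-RAM"
    if tagging_window == "hours" and permanent:
        return "TRAP2"
    # Combinations not covered by a single row of Table 1.
    return "no single match in Table 1"

print(suggest_tool("activity"))                            # TRAP2
print(suggest_tool("activity", "days", permanent=False))   # AAV-RAM
```

In practice the strategies are often combined rather than chosen exclusively, as the triple-tagging protocol below illustrates.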

Detailed Experimental Protocol: Triple Activity Tagging

This protocol describes a powerful method for visualizing three distinct neuronal ensembles activated during different events within the same animal, combining transgenic, viral, and immunohistochemical approaches [15].

Before You Begin

- Animal Preparation: Generate TRAP2 x Ai14 (tdTomato reporter) double-positive offspring and verify genotypes via PCR. House mice in a controlled environment (12-h light/dark cycle, 20°C–22°C) [15].

- Viral Preparation: Produce and titrate the AAV(DJ)-RAM-GFP viral construct. The RAM promoter drives GFP expression in a doxycycline-off manner [15].

- Behavioral Design: Optimize the behavioral paradigm to clearly separate three distinct learning and memory phases for tagging.

- Reagents: Ensure availability of 4-hydroxytamoxifen (4-OHT), doxycycline-containing food (40 mg DOX per kg chow), and primary antibodies for cFos immunohistochemistry.

- Permissions: Obtain all necessary institutional approvals for animal experiments.

Step-by-Step Procedure

Table 2: Timeline and Key Steps for Triple Activity Tagging

| Time (Relative to Start) | Procedure Step | Key Parameters & Notes |

|---|---|---|

| Week -10 to -8 | Mouse Breeding & Genotyping | Breed TRAP2 mice (Jax #030323) with Ai14-TdT mice (Jax #007914). Genotype pups using ear punches and specified PCR protocols [15]. |

| Week -2 | Viral Microinjection | Stereotaxically inject AAV-RAM-GFP into the brain region of interest (e.g., lateral amygdala). Allow 2 weeks for recovery and viral expression. |

| Day -7 to Day 0 | Doxycycline Diet | Feed animals DOX food to suppress baseline RAM-GFP expression. |

| Event 1 (e.g., Day 1) | Tag Ensemble 1 (TdT) | Administer 4-OHT to permanently label neurons active during Event 1 with tdTomato via the TRAP2 system. |

| Event 2 (e.g., Day 3) | Tag Ensemble 2 (GFP) | Temporarily remove DOX food 24h before Event 2. Neurons active during Event 2 will express GFP from the AAV-RAM construct. |

| Event 3 (e.g., Day 5) | Tag Ensemble 3 (cFos) | Perfuse and fix animals 90 min after Event 3. This timing captures peak cFos protein expression from recent neuronal activity. |

| Post-perfusion | Tissue Processing & Imaging | Prepare frozen or vibratome sections. Perform immunohistochemistry for cFos, detecting it with a fluorophore (e.g., Cy5) that is spectrally distinct from TdT and GFP. |

The integrated experimental workflow, from animal preparation to final analysis, is summarized below.

Data Analysis and Quantification

Following imaging, quantify the overlap and distribution of labeled neurons using image analysis software (e.g., ImageJ, Imaris).

- Cell Counting: Manually or automatically count TdT+, GFP+, and cFos+ cells within the region of interest.

- Colocalization Analysis: Determine the percentage of neurons that are positive for one, two, or all three tags to assess ensemble overlap or segregation.

- Statistical Testing: Apply appropriate tests (e.g., ANOVA) to compare cell counts and colocalization across experimental groups.
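
The colocalization step can be sketched as a set operation, assuming each segmented cell has already been assigned a shared identifier across the three channels (for example, by matching ROIs in ImageJ or Imaris). The function name and the cell IDs below are hypothetical.

```python
from collections import Counter

def ensemble_overlap(tdt_ids, gfp_ids, cfos_ids):
    """Percentage of labeled cells carrying exactly one, two, or three tags."""
    tags = {"TdT": set(tdt_ids), "GFP": set(gfp_ids), "cFos": set(cfos_ids)}
    all_cells = set().union(*tags.values())
    if not all_cells:
        raise ValueError("no labeled cells supplied")
    # For every labeled cell, count how many of the three tags it carries.
    n_tags = Counter(sum(cell in ids for ids in tags.values()) for cell in all_cells)
    return {k: 100.0 * n_tags.get(k, 0) / len(all_cells) for k in (1, 2, 3)}

# Hypothetical cell IDs from one region of interest.
result = ensemble_overlap(tdt_ids=[1, 2, 3, 4], gfp_ids=[3, 4, 5, 6], cfos_ids=[4, 6, 7, 8])
print(result)  # {1: 62.5, 2: 25.0, 3: 12.5}
```

The percentages here are relative to all labeled cells; normalizing to one ensemble (for example, the fraction of TdT+ cells that are also cFos+) is an equally valid choice and depends on the experimental question.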

Advanced and Emerging Methodologies

Beyond the core protocol, several advanced tools are enhancing the resolution and scope of neural circuit visualization.

Light-Microscopy-Based Connectomics (LICONN)

A groundbreaking technology, LICONN, integrates hydrogel embedding and expansion with deep-learning-based segmentation to achieve synapse-level circuit reconstruction using light microscopy [7]. This method overcomes the traditional resolution limits of light microscopy by physically expanding the tissue by approximately 16-fold, achieving effective resolutions of around 20 nm laterally and 50 nm axially. This allows for the dense reconstruction of axons, dendrites, and spines, and the identification of putative synaptic sites, all while preserving the tissue's molecular information for multiplexed immunolabeling [7].
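
The link between expansion factor and effective resolution is a simple division, sketched below. The figures of 16-fold expansion, 20 nm, and 50 nm are taken from the text; the implied post-expansion optical resolutions are back-calculated from them and are not values reported in the source.

```python
def effective_resolution_nm(optical_resolution_nm, expansion_factor):
    """Resolution referred to pre-expansion tissue = optical resolution / expansion factor."""
    if expansion_factor <= 0:
        raise ValueError("expansion factor must be positive")
    return optical_resolution_nm / expansion_factor

EXPANSION = 16.0

# Back-calculate the optical resolution (in the expanded gel) implied by the
# effective values quoted above.
implied_lateral_nm = 20.0 * EXPANSION  # 320.0
implied_axial_nm = 50.0 * EXPANSION    # 800.0

print(effective_resolution_nm(implied_lateral_nm, EXPANSION))  # 20.0
print(effective_resolution_nm(implied_axial_nm, EXPANSION))    # 50.0
```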

Non-Invasive Imaging with PET and MRI

Medical imaging modalities provide a vital bridge to translational research and drug development by enabling non-invasive, whole-brain visualization of neural circuits.

- Positron Emission Tomography (PET): Using radiolabeled probes (e.g., [¹⁸F]FDG for glucose metabolism), PET can capture brain-wide activity patterns and functional connectivity. Furthermore, genetically encoded chemogenetic receptors (e.g., DREADDs) can be tracked with specific PET ligands, allowing longitudinal assessment of transgene expression and functional engagement in deep brain circuits [14].

- Magnetic Resonance Imaging (MRI): Diffusion tensor imaging maps white matter pathways, while functional MRI (fMRI) reveals real-time neural circuit dynamics through blood-oxygen-level-dependent (BOLD) signals. Manganese-enhanced MRI (MEMRI) can trace neural pathways based on the transsynaptic transport of paramagnetic manganese ions [14].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents for Genetic Visualization of Neural Circuits

| Reagent / Material | Function & Role in Experiment | Example Sources / Identifiers |

|---|---|---|

| TRAP2 Mouse Line | Provides the inducible CreERT2 driver under the Fos promoter for permanent genetic access to active neurons. | Jackson Labs, Stock #030323 [15] |

| Ai14 (tdTomato) Reporter Mouse | Contains a loxP-flanked STOP cassette preceding a CAG-driven tdTomato fluorescent protein. Cross with TRAP2 to generate fate-mapping offspring. | Jackson Labs, Stock #007914 [15] |

| AAV-RAM-GFP Vector | A viral vector for delivering the activity-dependent RAM promoter driving GFP. Expression is "off" in the presence of doxycycline. | Addgene, Plasmid #84469 [15] |

| 4-Hydroxytamoxifen (4-OHT) | The inducer drug that crosses the blood-brain barrier to activate CreERT2, leading to permanent TdT expression in recently active neurons. | Sigma-Aldrich, T-176 [15] |

| Doxycycline (DOX) Food | A diet containing doxycycline used to suppress expression from the RAM promoter until the desired tagging window. | 40 mg/kg chow, Bio-Serv [15] |

| c-Fos Primary Antibody | Validated antibody for immunohistochemical detection of endogenous c-Fos protein to label a third, acutely active neuronal population. | MilliporeSigma, ABE457 [15] |

| Allen Brain Atlas - Genetic Tools | A public database to identify and access characterized transgenic mouse lines and AAVs for targeting specific brain regions and cell types. | portal.brain-map.org [16] |

The integration of genetic, viral, and reporter tools provides an unparalleled capacity to visualize and dissect the functional and structural organization of specific neuronal populations and circuits. From precise activity-dependent tagging in behaving animals to non-invasive whole-brain imaging and nanoscale connectomics, these methods form a comprehensive toolkit for modern neuroscience research. The protocols and resources detailed herein offer a roadmap for researchers and drug development professionals to apply these powerful technologies, driving forward our understanding of the nervous system in health and disease.

The Role of Microscopy in Understanding Neurodegenerative Diseases like Alzheimer's and Parkinson's

Microscopy serves as a fundamental tool in neuroscience research, providing the spatial resolution necessary to visualize the pathological hallmarks and synaptic alterations associated with neurodegenerative diseases. For Alzheimer's disease (AD) and Parkinson's disease (PD), histopathological examination of nervous system tissue remains the diagnostic gold standard [17]. Recent advancements in digital pathology and artificial intelligence (AI) are transforming how researchers quantify and analyze these microscopic features [17] [18]. This document outlines specific applications and detailed protocols for using microscopy to investigate AD and PD pathology, providing a practical resource for researchers and drug development professionals.

Application Notes: Visualizing Pathological Hallmarks

Alzheimer's Disease Pathology

In Alzheimer's Disease, microscopy is critical for identifying and quantifying the two primary pathological hallmarks: amyloid-β plaques and neurofibrillary tangles [19]. Whole slide imaging (WSI) technology now allows for the digitization of entire histologic sections, enabling sophisticated quantitative analysis and cross-institutional collaboration [17].

Table: Key Microscopy Applications in Alzheimer's Disease Research

| Pathological Feature | Microscopy Modalities | Key Staining/Imaging Targets | Research Insights |

|---|---|---|---|

| Amyloid-β Plaques | Brightfield microscopy (WSI), Fluorescence microscopy, Super-resolution microscopy | Thioflavin-S, Amyloid-β immunofluorescence | Core component of senile plaques; derived from amyloid precursor protein (APP) [19]. |

| Neurofibrillary Tangles | Brightfield microscopy (WSI), Electron microscopy | Phospho-Tau immunofluorescence, Silver stains (e.g., Bielschowsky) | Composed of hyperphosphorylated tau protein; distribution correlates with cognitive decline [19]. |

| Synaptic Loss | Electron microscopy, Immunofluorescence | Synaptophysin, PSD95, VAMP2 | Synaptic density reduction is a major correlate of cognitive impairment [20]. |

| Retinal Pathology | Fluorescence microscopy, Electroretinogram (ERG) functional assessment | Amyloid-β, Phospho-Tau | Retina exhibits Aβ plaques and p-Tau, mirroring brain pathology; linked to visual impairments [21]. |

Parkinson's Disease Pathology

In Parkinson's Disease, microscopy focuses on the vulnerability of dopaminergic (DA) neurons and the characterization of Lewy bodies, which are primarily composed of α-synuclein. Recent studies using induced pluripotent stem cell (iPSC)-derived DA neurons have revealed unique structural features of their synaptic vesicles [20].

Table: Key Microscopy Applications in Parkinson's Disease Research

| Pathological Feature | Microscopy Modalities | Key Staining/Imaging Targets | Research Insights |

|---|---|---|---|

| Lewy Bodies | Brightfield microscopy, Immunofluorescence | α-synuclein, Ubiquitin | Eosinophilic cytoplasmic inclusions; primary pathological hallmark of PD [20]. |

| Dopaminergic Neuron Loss | Brightfield microscopy, Immunofluorescence | Tyrosine Hydroxylase (TH) | Selective degeneration in substantia nigra pars compacta [20]. |

| Synaptic Vesicle Alterations | Transmission Electron Microscopy (TEM), Immunofluorescence | VMAT2, VGLUT, Synapsin | DA neurons contain pleiomorphic vesicles (small clear, large clear, and dense core) distinct from classical synapses [20]. |

| Striatal Innervation | Immunofluorescence, Confocal microscopy | DAT, TH | Loss of dopaminergic terminals in the striatum [20]. |

Experimental Protocols

Protocol 1: Digital Histopathology for Alzheimer's Disease Classification

This protocol details the workflow for digitizing and analyzing human brain tissue to quantify Alzheimer's disease pathology, compatible with the standards of the National Alzheimer's Coordinating Center (NACC) [17].

1. Tissue Preparation and Staining:

- Fixation: Fix brain tissue samples (e.g., from hippocampus or cortex) in 10% neutral buffered formalin.

- Embedding and Sectioning: Process fixed tissue through graded alcohols and xylene, embed in paraffin, and section at 5-8 µm thickness using a microtome.

- Staining: Employ standardized staining protocols for key pathologies:

- Amyloid-β Plaques: Immunohistochemistry using validated anti-Aβ antibodies (e.g., 6E10) or thioflavin-S fluorescence staining.

- Neurofibrillary Tangles: Immunohistochemistry for hyperphosphorylated Tau (e.g., AT8 antibody) or traditional silver impregnation stains (e.g., Bielschowsky).

2. Whole Slide Imaging (WSI):

- Slide Scanning: Use an FDA-approved or research-grade slide scanner (e.g., Leica Aperio, Hamamatsu Nanozoomer).

- Image Acquisition: Scan slides at 20x or 40x magnification to create high-resolution whole slide images (WSIs). A standard neurodegenerative workup can generate over 500 GB of data per case [17].

- File Format: Save images in proprietary (e.g., .svs, .ndpi) or open-standard (OME-TIFF) formats for downstream analysis [17].

3. Digital Image Analysis:

- AI-Assisted Quantification: Utilize digital pathology software (commercial platforms like Indica Labs HALO or open-source tools like QuPath) with integrated machine learning.

- Train a classifier to identify and segment regions of interest (e.g., gray matter).

- Apply a second algorithm to detect, count, and measure the area of plaques and tangles.

- Data Output: Export quantitative data for statistical analysis, including plaque and tangle counts per mm², and percentage area covered.
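
The exported metrics can be derived from per-object measurements, as in the minimal sketch below. It assumes the analysis software exports the area of each detected plaque or tangle in µm² along with the annotated region area in mm²; the function name and example values are hypothetical.

```python
def pathology_burden(object_areas_um2, roi_area_mm2):
    """Object density (per mm^2) and percentage of ROI area covered."""
    if roi_area_mm2 <= 0:
        raise ValueError("ROI area must be positive")
    covered_mm2 = sum(object_areas_um2) / 1e6  # 1 mm^2 = 1e6 um^2
    return {
        "count_per_mm2": len(object_areas_um2) / roi_area_mm2,
        "percent_area": 100.0 * covered_mm2 / roi_area_mm2,
    }

# Hypothetical export: three plaques detected in a 2 mm^2 gray-matter annotation.
burden = pathology_burden([500.0, 1500.0, 2000.0], roi_area_mm2=2.0)
print(burden)
```

Reporting both metrics is useful because a few large plaques and many small ones can give the same percentage area but very different densities.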

Protocol 2: Electron Microscopy for Synaptic Vesicle Characterization in Parkinson's Disease

This protocol uses transmission electron microscopy (TEM) to characterize the unique synaptic vesicle pools in dopaminergic neurons, which is critical for understanding synaptic dysfunction in PD [20].

1. Sample Preparation (in vitro iPSC-derived DA neurons):

- Culture: Maintain human iPSC-derived dopaminergic neurons (≥50 days in culture to ensure mature synaptic marker expression) [20].

- Fixation: Fix cell cultures in a solution of 2.5% glutaraldehyde and 2% paraformaldehyde in 0.1 M sodium cacodylate buffer (pH 7.4) for at least 1 hour at room temperature.

- Post-fixation and Staining: Post-fix in 1% osmium tetroxide, followed by en bloc staining with 2% uranyl acetate.

- Dehydration and Embedding: Dehydrate samples through a graded ethanol series and embed in EPON resin. Polymerize at 60°C for 48 hours.

- Sectioning: Use an ultramicrotome to cut 70-nm thin sections and collect them on copper grids.

2. Imaging and Vesicle Analysis:

- TEM Imaging: Examine sections using a transmission electron microscope operating at 80-100 kV. Capture micrographs of axonal varicosities at high magnification (e.g., 20,000x - 40,000x).

- Vesicle Identification and Measurement:

- Identify three distinct vesicle populations based on morphology [20]:

- Classical Small Synaptic Vesicles (SSVs): ~40-50 nm, clear, round.

- Large Clear Vesicles: 60-100 nm, pleiomorphic (irregularly shaped), clear.

- Dense Core Vesicles (DCVs): 60-100 nm, round/oval, electron-dense core.

- Morphometry: Use image analysis software (e.g., ImageJ/Fiji) to measure the diameter of at least 100 vesicles from each population per condition.
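
The size and core criteria above can be turned into a simple first-pass classifier, sketched here under stated assumptions: each vesicle is represented by a measured diameter and a manual dense-core annotation, the function names are hypothetical, and shape (round versus pleiomorphic) is not captured, so borderline vesicles should still be reviewed by eye.

```python
from statistics import mean

def classify_vesicle(diameter_nm, dense_core):
    """Assign a vesicle to one of the three populations by diameter and core density."""
    if dense_core and 60 <= diameter_nm <= 100:
        return "DCV"
    if not dense_core and 40 <= diameter_nm <= 50:
        return "SSV"
    if not dense_core and 60 <= diameter_nm <= 100:
        return "large_clear"
    return "unclassified"  # outside the stated size ranges

def summarize(vesicles, min_n=100):
    """Group (diameter_nm, dense_core) measurements and report n, mean, and whether n >= min_n."""
    groups = {}
    for diameter_nm, dense_core in vesicles:
        groups.setdefault(classify_vesicle(diameter_nm, dense_core), []).append(diameter_nm)
    return {
        name: {"n": len(d), "mean_nm": mean(d), "enough": len(d) >= min_n}
        for name, d in groups.items()
    }

# Hypothetical measurements exported from ImageJ/Fiji.
summary = summarize([(45, False), (47, False), (80, False), (90, True)])
print(summary)
```

The `enough` flag reflects the minimum of 100 vesicles per population recommended in the morphometry step.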

Protocol 3: Assessing Functional Visual Deficits in Alzheimer's Disease Models

This protocol employs a behavioral apparatus to assess contrast sensitivity and color vision deficits in mouse models of AD, which reflect retinal pathology and functional visual impairments observed in patients [21].

1. Apparatus Setup (Visual-stimuli Four-Arm Maze - ViS4M):

- Equipment: Construct a maze with four arms, each illuminated by separate, intensity-controlled LED emitters (Red [λ peak 628 nm], Green [λ peak 517 nm], Blue [λ peak 452 nm], White) [21].

- Calibration: Predefine illumination conditions (e.g., Low, Medium, High) and measure photometric units for each stimulus. Ensure the gradient of S- and M-opsin activation matches experimental requirements [21].

2. Behavioral Testing:

- Subjects: Use transgenic AD-model mice (e.g., APPSWE/PS1∆E9) and age-matched wild-type controls.

- Procedure: Place a single mouse in the central area of the ViS4M and allow it to freely explore all four arms for a set duration (e.g., 10 minutes). No training or rewards are required, relying on innate exploratory behavior.

- Data Collection: Record the session. Analyze the percentage of entries into and time spent in each colored arm, as well as transition patterns and alternation between arms.

3. Data Analysis:

- Color Discrimination: Impaired discrimination in AD+ mice may manifest as a lack of preference for specific colors (e.g., blue), reminiscent of tritanomaly (blue-yellow color deficit) documented in AD patients [21].

- Contrast Sensitivity: Deficits are identified by testing arms with varying luminance contrasts and analyzing the ability of mice to distinguish between them.
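
The entry, dwell-time, and transition measures from the data collection step can be computed from an ordered visit log, as in the sketch below. It assumes the tracking software yields a list of (arm, seconds) visits per session; the function name and the example session are hypothetical, and no particular alternation index from the cited study is implied.

```python
from collections import Counter

def arm_preferences(visits):
    """visits: ordered list of (arm, seconds_in_arm) tuples for one session."""
    if not visits:
        raise ValueError("no arm visits recorded")
    entries = Counter(arm for arm, _ in visits)
    time_in = Counter()
    for arm, seconds in visits:
        time_in[arm] += seconds
    total_time = sum(time_in.values())
    if total_time <= 0:
        raise ValueError("total time in arms must be positive")
    # Count ordered arm-to-arm transitions between consecutive visits.
    transitions = Counter((a[0], b[0]) for a, b in zip(visits, visits[1:]))
    return {
        "percent_entries": {arm: 100.0 * n / len(visits) for arm, n in entries.items()},
        "percent_time": {arm: 100.0 * t / total_time for arm, t in time_in.items()},
        "transitions": dict(transitions),
    }

# Hypothetical session log.
session = [("red", 20.0), ("green", 30.0), ("blue", 10.0), ("green", 40.0)]
prefs = arm_preferences(session)
print(prefs["percent_entries"])
print(prefs["percent_time"])
```

Comparing these per-arm percentages between AD-model and wild-type groups is one way to detect the loss of color preference described above.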

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Reagents and Materials for Neurodegenerative Disease Microscopy

| Item Name | Function/Application | Example Use Case |

|---|---|---|

| Anti-Amyloid-β Antibody | Immunohistochemical detection of amyloid plaques in brain tissue. | Staining of human AD brain sections for WSI and quantification [19]. |

| Anti-Phospho-Tau Antibody | Immunohistochemical detection of neurofibrillary tangles. | Staining of human AD brain sections for WSI and quantification [19]. |

| Anti-Tyrosine Hydroxylase (TH) Antibody | Marker for dopaminergic neurons. | Identifying DA neuron loss in PD models and human post-mortem tissue [20]. |

| Anti-VMAT2 Antibody | Marker for dopamine-loaded synaptic vesicles. | Characterizing vesicle pools in iPSC-derived DA neurons via immuno-EM [20]. |

| iPSC-Derived Dopaminergic Neurons | Human-relevant in vitro model for PD. | Studying synaptic vesicle biology and screening neuroprotective compounds [20]. |

| Bevonescein (ALM-488) | Nerve-specific fluorescent imaging agent. | Intraoperative fluorescence-guided surgery to preserve nerves in head and neck procedures [22]. |

| Whole Slide Scanner | Digitizes entire glass slides for computational analysis. | Creating digital archives of neuropathological samples for AI-based analysis [17]. |

Technical Considerations and Best Practices

Accessible Scientific Figure Creation

To ensure research is accessible to all colleagues, including the 8% of males and 0.5% of females with color vision deficiency, follow these guidelines for microscopy images and graphs [23] [24]:

- Avoid Red-Green Combinations: Red/green is the classic two-channel combination, yet it is the hardest to distinguish for people with the most common forms of color blindness. Use alternatives like green/magenta, yellow/blue, or red/cyan [23] [24].

- Show Grayscale Channels: Best practice is to display individual grayscale channels alongside any merged color image. The human eye is better at detecting contrast changes in grayscale [23] [24].

- Use Accessible Color Palettes: For multi-channel images, suitable three-color combinations include magenta/yellow/cyan [23]. Tools like ColorBrewer and Paul Tol's schemes provide ready-to-use, colorblind-safe palettes [23].

- Simulate Color Blindness: Use software tools (ImageJ's Image > Color > Dichromacy, Adobe Photoshop's View > Proof Setup > Color Blindness, or Color Oracle) to check the readability of your figures [23] [24].
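
The green/magenta alternative recommended above can be sketched as a pixel-wise merge of two grayscale channels. This is a minimal illustration using plain nested lists of 8-bit values; the function name is hypothetical, and real pipelines would typically do the same with array libraries or the lookup tables in ImageJ.

```python
def merge_green_magenta(channel_a, channel_b):
    """Build an RGB composite: channel_a -> green, channel_b -> magenta (red + blue).

    Both inputs are 2D lists of 0-255 intensities with identical shape.
    """
    if len(channel_a) != len(channel_b) or any(
        len(row_a) != len(row_b) for row_a, row_b in zip(channel_a, channel_b)
    ):
        raise ValueError("channels must have the same shape")
    return [
        [(b, a, b) for a, b in zip(row_a, row_b)]
        for row_a, row_b in zip(channel_a, channel_b)
    ]

# One-row example: a pixel with only channel A, only channel B, and both.
rgb = merge_green_magenta([[255, 0, 255]], [[0, 255, 255]])
print(rgb)  # [[(0, 255, 0), (255, 0, 255), (255, 255, 255)]]
```

Pixels where both channels are bright appear white, so colocalization remains visible without relying on a red/green distinction.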

Data Management and AI Integration

The large and complex imaging data generated, particularly from WSIs, requires careful management and can be powerfully analyzed with modern computational tools [17] [18].

- Data Volume: A standard neurodegenerative digital pathology case can generate over 500 GB of data, far exceeding a typical MRI brain study (~500 MB) [17].

- AI-Assisted Workflows: Machine learning (ML) and deep learning (DL) are transforming the analysis of neuropathological images. AI can assist with tasks such as noise reduction, automated feature extraction (e.g., plaque counting), spectral unmixing, and pattern recognition, significantly enhancing throughput and objectivity [17] [18].

- Collaboration and Standardization: The use of open-source WSI formats (e.g., OME-TIFF) and software (e.g., QuPath, Bio-Formats) facilitates data sharing and collaborative analysis across institutions [17].

Advanced Imaging Modalities: From Deep Tissue to Functional Analysis in Neuroscience

For generations, researchers have observed dynamic life processes through microscopes. However, standard fluorescence microscopy techniques face significant challenges when applied to intact biological systems, particularly reduced signal strength and signal-to-noise ratios at deeper imaging depths [25]. Multiphoton microscopy, primarily two-photon and three-photon excitation microscopy, has emerged as the gold standard for deep-tissue and intravital imaging by providing exceptional resolution while minimizing phototoxic effects on living samples [25] [26]. This application note details the fundamental principles, technical advantages, and practical methodologies for implementing multiphoton microscopy in nervous system visualization research, with specific protocols for imaging cerebral organoids, deep brain structures, and label-free nervous tissue assessment.

Fundamental Principles and Technical Advantages

Multiphoton excitation microscopy operates on the principle of simultaneous absorption of multiple long-wavelength photons to excite fluorophores that normally require single shorter-wavelength photons [26]. In two-photon excitation, a fluorophore absorbs two photons of approximately double the wavelength (half the energy) required for one-photon excitation within a single quantized event lasting approximately 1 femtosecond [25] [27]. This non-linear process depends on the square of the excitation intensity, functionally confining excitation to the microscope's focal plane without significant out-of-focus absorption [25] [26].
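
The confinement of excitation to the focal region follows from this nonlinearity and can be illustrated numerically. The sketch below assumes an ideal Gaussian beam with no scattering, absorption, or fluorophore saturation: the total fluorescence generated in a transverse plane then scales as w(z)^(2 - 2n) for n-photon excitation, where w(z) is the beam radius. The function names are hypothetical.

```python
def relative_plane_signal(z_over_rayleigh, n_photons):
    """Fluorescence generated in the plane at axial offset z, relative to the focal plane.

    z_over_rayleigh: axial distance from focus in units of the Rayleigh range.
    For a Gaussian beam, w(z)^2 / w0^2 = 1 + (z / zR)^2, and the plane-integrated
    I^n scales as w(z)^(2 - 2n).
    """
    return (1.0 + z_over_rayleigh ** 2) ** (1 - n_photons)

def signal_gain(intensity_ratio, n_photons):
    """Change in signal when the excitation intensity is scaled by intensity_ratio."""
    return intensity_ratio ** n_photons

for n in (1, 2, 3):
    # Three Rayleigh ranges away from focus; doubling the excitation intensity.
    print(n, relative_plane_signal(3.0, n), signal_gain(2.0, n))
```

Under these assumptions, one-photon excitation generates the same fluorescence in every plane along the beam, whereas two-photon excitation three Rayleigh ranges from focus drops to about a tenth of the focal-plane value, and three-photon excitation to about a hundredth.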

Table 1: Key Advantages of Multiphoton Microscopy for Live Tissue Imaging

| Feature | Confocal Microscopy | Multiphoton Microscopy | Biological Benefit |
|---|---|---|---|
| Excitation Volume | Entire beam path | Focal plane only | Minimal photobleaching outside focal plane [26] [27] |
| Optical Sectioning | Pinhole required | Intrinsic; no pinhole | Efficient scattered emission collection [25] [26] |
| Excitation Wavelength | Visible light | Infrared light | Reduced scattering, deeper penetration [25] [26] |
| Imaging Depth | Limited (<100 μm in scattering tissue) | Enhanced (up to 1.4 mm with 3PM) | Access to deep brain structures [28] [29] |
| Phototoxicity | Throughout illuminated volume | Localized to focal plane | Enhanced long-term viability of live tissue [26] [30] |
| Background Fluorescence | Rejected by pinhole | Minimized by localized excitation | Improved signal-to-background ratio [26] [27] |

The localization of excitation provides multiphoton microscopy with distinct advantages for imaging living systems. Because fluorescence excitation occurs only at the focal point, photobleaching and photodamage are dramatically reduced throughout the rest of the sample [26]. Additionally, the use of infrared excitation wavelengths rather than visible light significantly reduces light scattering in biological tissues, enabling deeper penetration [25]. The combination of these factors makes multiphoton microscopy particularly suitable for long-term, repeated imaging of living specimens with minimal impact on viability and function.
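
The confinement of excitation can be illustrated with an idealized Gaussian-beam model. The sketch below assumes a uniform fluorophore distribution, no scattering, and no saturation; the function name and the 1 μm Rayleigh range are illustrative assumptions. Under those assumptions the beam area grows as 1 + (z/zR)², so the signal generated in a whole plane at axial offset z scales as that area raised to the power (1 − n) for n-photon excitation.

```python
import numpy as np


def plane_signal(z_um, rayleigh_um, photon_order):
    """Relative fluorescence generated in the plane at axial offset z.

    Intensity falls as 1/area, n-photon excitation scales as intensity**n,
    and integrating over the plane multiplies by the area once, which gives
    area**(1 - n).
    """
    area = 1.0 + (np.asarray(z_um, dtype=float) / rayleigh_um) ** 2
    return area ** (1 - photon_order)


z = np.array([0.0, 1.0, 5.0, 20.0])   # axial offsets from focus, in um
for n, label in [(1, "one-photon"), (2, "two-photon"), (3, "three-photon")]:
    print(label, np.round(plane_signal(z, rayleigh_um=1.0, photon_order=n), 4))
```

In this model every plane contributes equally under one-photon excitation, which is why a confocal pinhole is needed to reject out-of-focus light, whereas two- and three-photon signals fall off rapidly away from the focus.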

Figure 1: Comparison of Excitation Modalities in Fluorescence Microscopy. Multiphoton techniques utilize longer wavelengths and nonlinear excitation to achieve superior depth penetration and reduced out-of-plane phototoxicity compared to single-photon methods [25] [26] [29].

Quantitative Performance Metrics

Table 2: Performance Characteristics of Multiphoton Imaging Modalities

| Parameter | Two-Photon Microscopy (2PM) | Three-Photon Microscopy (3PM) | Measurement Conditions |
|---|---|---|---|
| Maximum Imaging Depth | ~500-800 μm [29] | ~1.4 mm in mouse brain [29] | Through chronic glass window |
| Excitation Wavelength | 700-1100 nm [25] | 1300 nm or 1700 nm [29] | Optimized for tissue penetration |
| Laser Power | mW range [25] | 0.5-22 mW average power [29] | Below damage thresholds |
| Signal Dependency | Square of excitation intensity [25] | Cube of excitation intensity [26] | Nonlinear optical relationship |
| Axial Resolution | ~1-2 μm | ~1 μm with AO correction [29] | With high NA objective |
| Signal-to-Background Improvement | 15-fold over sLFM [30] | 12 dB over sLFM [30] | In tissue-mimicking phantoms |
| Photobleaching Reduction | Confined to focal plane [26] | 700-fold reduction [31] | Compared to confocal microscopy |

Recent advances in three-photon microscopy (3PM) have pushed imaging depths beyond the limitations of conventional two-photon systems. By utilizing longer excitation wavelengths (typically 1300 nm or 1700 nm) and exploiting the cubic dependence of signal on excitation intensity, 3PM achieves superior signal-to-background ratios at depth, enabling visualization of hippocampal structures at depths exceeding 1.4 mm in the mouse brain [29]. The implementation of adaptive optics (AO) further enhances performance by correcting tissue-induced aberrations, restoring near-diffraction-limited resolution even in deep scattering tissues [29].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Multiphoton Imaging of Nervous Tissue

| Reagent/Material | Function/Application | Examples/Specifications |
|---|---|---|
| Genetically Encoded Calcium Indicators (GECIs) | Monitoring neural activity via Ca²⁺ transients | GCaMP6, RCaMP2; enables long-term observation of neurodynamics [32] |
| Chemical Calcium Indicators | Bulk loading of neuronal networks | Oregon Green BAPTA-1; used in multicell bolus loading technique [32] |
| Fluorescent Proteins | Labeling specific cell types or structures | Thy1-EGFP for neuronal morphology; ETV2 for vascular induction [28] [29] |
| Viral Vectors | Targeted expression in specific cell populations | AAVs for cell-type specific GECI expression [32] |
| Caged Neurotransmitters | Precise temporal activation of receptors | Caged glutamate for studying synaptic connectivity [27] |
| Channelrhodopsin Variants | Optogenetic control of neural activity | Enables circuit mapping with temporal precision [27] |
| Tissue Clearing Agents | Enhancing optical accessibility | Various aqueous solutions; improves depth penetration in fixed tissue [28] |
| Fiducial Markers | Registration for longitudinal studies | Fluorescent beads; enables tracking of same cells over time |

Experimental Protocols

Protocol 1: Imaging Cerebral Organoids for Neurodevelopmental Studies

Background: Cerebral organoids are self-organizing 3D structures with increased cellular diversity and longevity that better mimic human brain complexity compared to 2D cultures. However, their millimeter size, cellular density, and light-scattering properties present challenges for conventional microscopy [28]. Multiphoton microscopy excels in this application due to its superior penetration and minimal phototoxicity.

Materials:

- Cerebral organoids (guided or unguided differentiation protocols)

- Spinning bioreactor or agitation system for enhanced nutrient diffusion

- Optional: Vascularized organoids (via HUVEC co-culture or ETV2 expression)

- Fluorescent labels (immunolabeling, chemical indicators, or genetically encoded reporters)

- Multiphoton microscope with tunable IR laser (690-1080 nm)

- Long-working-distance water immersion objectives (≥20 mm)

Procedure:

Organoid Preparation and Labeling:

- For fixed organoids: Employ tissue clearing protocols (e.g., CLARITY, CUBIC) to enhance optical accessibility [28].

- For live imaging: Use genetically encoded indicators (e.g., GCaMP for calcium activity) or chemical dyes loaded via multicell bolus loading.

- For vascularization studies: Employ ETV2-expressing iPSCs to induce endothelial network formation [28].

Microscope Setup:

- Configure laser wavelength according to fluorophore two-photon excitation spectra (note: these often differ from single-photon spectra).

- Set laser power to 1-50 mW (measured at the sample) to balance signal and viability.

- Use non-descanned detectors for efficient collection of scattered emission photons.

- Implement resonant scanners for high-speed imaging (≥30 Hz) when capturing dynamic processes.

Image Acquisition:

- Begin with a low-magnification overview to identify regions of interest.

- Acquire z-stacks with 2-5 μm step size for 3D reconstruction.

- For time-lapse imaging, minimize laser power and acquisition frequency to reduce phototoxicity.

- For calcium imaging, frame rates of 4-10 Hz typically suffice for capturing cellular dynamics.

Data Analysis:

- Employ motion correction algorithms to compensate for sample drift.

- Use 3D reconstruction software for visualization and morphological analysis.

- For functional data, extract ΔF/F traces and identify statistically significant transients.
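
The ΔF/F extraction step can be sketched as follows. This is a minimal illustration on synthetic data, not the analysis pipeline of the cited studies: the 10th-percentile baseline, the 3-sigma threshold based on the median absolute deviation, and both function names are assumptions chosen for the example.

```python
import numpy as np


def delta_f_over_f(trace, baseline_percentile=10.0):
    """Return (F - F0) / F0, with F0 taken as a low percentile of the trace."""
    trace = np.asarray(trace, dtype=float)
    f0 = np.percentile(trace, baseline_percentile)
    return (trace - f0) / f0


def detect_transients(dff, n_sigma=3.0):
    """Flag samples above median + n_sigma * robust SD (MAD-based estimate)."""
    med = np.median(dff)
    robust_sd = 1.4826 * np.median(np.abs(dff - med))
    return dff > med + n_sigma * robust_sd


# Synthetic fluorescence trace: noisy baseline plus one 20-sample transient.
rng = np.random.default_rng(0)
trace = 100.0 + rng.normal(0.0, 1.0, 500)
trace[200:220] += 50.0

dff = delta_f_over_f(trace)
mask = detect_transients(dff)
print(f"{mask.sum()} of {mask.size} samples flagged as transient")
```

A fixed threshold of this kind is only a heuristic; a formal significance test would need a noise model appropriate to the detector and indicator in use.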

Figure 2: Cerebral Organoid Imaging Workflow. Multiphoton microscopy enables both structural and functional imaging of intact cerebral organoids, bypassing the need for physical sectioning and enabling longitudinal studies of neurodevelopmental processes [28].

Protocol 2: High-Resolution Deep Brain Imaging with Three-Photon Microscopy

Background: Imaging subcellular structures in deep brain regions (>800 μm) requires three-photon excitation combined with adaptive optics to overcome tissue scattering and aberrations. This protocol enables visualization of dendritic spines and calcium transients in hippocampal layers previously inaccessible with two-photon microscopy [29].

Materials:

- Transgenic mice expressing fluorescent reporters in neurons (e.g., Thy1-EGFP-M) or astrocytes

- Chronic cranial window installation supplies

- Three-photon microscope with 1300 nm excitation capability

- Adaptive optics system with deformable mirror

- Electrocardiogram (ECG) monitoring setup

- High numerical aperture objective (≥1.0 NA)

- Laser pulse compressor for maintaining <50 fs pulse width at sample

Procedure:

Animal Preparation:

- Install chronic cranial window over the region of interest using standard surgical protocols.

- Allow at least 2 weeks for recovery and tissue stabilization before imaging.

- For functional studies, use viral vectors to express GECIs in target cell populations.

ECG Gating Setup:

- Implement prospective ECG-gated image acquisition synchronized to the cardiac cycle.

- Connect an FPGA-based gating system between the ECG monitor and the microscope scanners.

- Pause scanning during the peaks of the ECG recording to minimize motion artifacts.
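
The gating logic above can be sketched offline in Python. The real system runs on an FPGA in real time; this illustration only shows the decision rule, and the threshold, blanking window, function name, and synthetic ECG trace are all assumptions.

```python
import numpy as np


def scan_enable_mask(ecg, threshold, blank_samples):
    """Return True where scanning may proceed.

    Scanning is disabled for `blank_samples` samples starting at each upward
    threshold crossing, a simple stand-in for ECG peak detection.
    """
    ecg = np.asarray(ecg, dtype=float)
    above = ecg > threshold
    # A rising edge is a sample above threshold whose predecessor was not.
    rising = np.flatnonzero(above[1:] & ~above[:-1]) + 1
    enable = np.ones(ecg.size, dtype=bool)
    for idx in rising:
        enable[idx: idx + blank_samples] = False
    return enable


# Synthetic ECG: flat baseline with four unit-height spikes.
ecg = np.zeros(1000)
ecg[[100, 350, 600, 850]] = 1.0

enable = scan_enable_mask(ecg, threshold=0.5, blank_samples=50)
print(f"scanning enabled for {enable.mean():.0%} of samples")
```

The blanking window trades acquisition duty cycle against residual motion, so in practice it would be tuned to the animal's heart rate and the observed tissue displacement.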

Adaptive Optics Calibration:

- Employ a modal, sensorless AO approach using a deformable mirror.

- Use image-based optimization metrics (e.g., sharpness, intensity) to determine aberration correction.

- Apply Zernike polynomial modes to correct tissue-induced aberrations.

- Perform AO correction at multiple depths to account for depth-dependent aberrations.
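
One common way to realize modal, sensorless correction is to bias each mode by a small positive and negative amount, measure an image metric at each setting, and fit a parabola to locate the optimum. The sketch below demonstrates this on a simulated metric. It is an illustration under stated assumptions, not the cited implementation: the Gaussian sharpness model, the three hypothetical mode coefficients, and the bias size are invented for the example, and a real system would compute the metric from acquired images.

```python
import numpy as np


def optimize_modes(measure_metric, n_modes, bias=0.3, n_rounds=3):
    """Estimate correction coefficients by per-mode three-point parabolic fits."""
    coeffs = np.zeros(n_modes)
    for _ in range(n_rounds):
        for mode in range(n_modes):
            trial = coeffs.copy()
            values = []
            for offset in (-bias, 0.0, bias):
                trial[mode] = coeffs[mode] + offset
                values.append(measure_metric(trial))
            m_minus, m_zero, m_plus = values
            curvature = m_plus - 2.0 * m_zero + m_minus
            if curvature < 0:
                # Concave parabola: step to its vertex, limited to the sampled range.
                step = -bias * (m_plus - m_minus) / (2.0 * curvature)
                step = float(np.clip(step, -bias, bias))
            else:
                # No maximum in the fit: move to the best sampled point instead.
                step = (-bias, 0.0, bias)[int(np.argmax(values))]
            coeffs[mode] += step
    return coeffs


# Hypothetical tissue aberration expressed as three mode coefficients.
true_aberration = np.array([0.3, -0.2, 0.1])


def simulated_sharpness(correction):
    """Toy metric that peaks when the correction cancels the aberration."""
    residual = true_aberration + correction
    return float(np.exp(-np.sum(residual ** 2)))


found = optimize_modes(simulated_sharpness, n_modes=3)
print("estimated correction:", np.round(found, 3))
```

Each round costs three metric evaluations per mode, which is why sensorless schemes typically correct only a modest number of low-order modes and repeat the procedure at selected depths.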

Three-Photon Image Acquisition:

- Set 1300 nm excitation wavelength for optimal penetration and signal generation.

- Maintain laser power below 22 mW average power and focal energies <2 nJ to avoid tissue damage.

- For structural imaging, acquire high-resolution z-stacks with 0.5-1 μm steps.

- For functional calcium imaging, frame rates of 5-10 Hz suffice for capturing dendritic signals.
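
The relationship between average power, pulse energy, and energy at the focus can be estimated with a short calculation. The 1 MHz repetition rate, the 300 μm effective attenuation length, and the 1.2 mm depth are illustrative assumptions that do not come from the protocol and should be replaced with values measured for the actual laser and tissue. The sketch also assumes that the 22 mW limit refers to power at the tissue surface.

```python
import math


def pulse_energy_nj(avg_power_mw: float, rep_rate_mhz: float) -> float:
    """Pulse energy in nJ; 1 mW at 1 MHz corresponds to 1 nJ per pulse."""
    return avg_power_mw / rep_rate_mhz


def focal_energy_nj(surface_energy_nj: float, depth_um: float,
                    attenuation_length_um: float) -> float:
    """Pulse energy reaching the focus, assuming exponential attenuation."""
    return surface_energy_nj * math.exp(-depth_um / attenuation_length_um)


avg_power_mw = 22.0            # upper limit stated in the protocol
rep_rate_mhz = 1.0             # assumed laser repetition rate
depth_um = 1200.0              # assumed imaging depth
attenuation_length_um = 300.0  # assumed effective attenuation length at 1300 nm

surface_nj = pulse_energy_nj(avg_power_mw, rep_rate_mhz)
focus_nj = focal_energy_nj(surface_nj, depth_um, attenuation_length_um)
within_limits = avg_power_mw <= 22.0 and focus_nj < 2.0

print(f"pulse energy at surface: {surface_nj:.1f} nJ")
print(f"pulse energy at focus:   {focus_nj:.2f} nJ")
print("within stated limits:", within_limits)
```

Because attenuation is exponential in depth, the same surface power delivers much higher focal energy at shallow depths, so power should be reduced when imaging closer to the surface.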

Data Processing:

- Apply motion correction algorithms to compensate for residual tissue movement.

- Use deconvolution algorithms to enhance resolution when needed.

- For functional data, extract calcium transients from regions of interest using standard ΔF/F calculations.
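
Rigid motion correction is often performed by phase correlation between each frame and a reference image. The following sketch uses only NumPy FFTs and recovers integer-pixel translations. It is a simplified illustration: the function name is hypothetical, and production pipelines usually add subpixel refinement and non-rigid correction.

```python
import numpy as np


def estimate_shift(reference, frame):
    """Return the integer (dy, dx) roll that aligns `frame` to `reference`."""
    f_ref = np.fft.fft2(reference)
    f_frm = np.fft.fft2(frame)
    cross_power = f_ref * np.conj(f_frm)
    cross_power /= np.abs(cross_power) + 1e-12   # keep phase information only
    corr = np.fft.ifft2(cross_power).real
    peak = np.unravel_index(np.argmax(corr), corr.shape)
    # Peaks past the midpoint correspond to negative shifts (circular wrap).
    shift = [p if p <= s // 2 else p - s for p, s in zip(peak, corr.shape)]
    return int(shift[0]), int(shift[1])


# Synthetic test: displace a random image by a known amount and recover it.
rng = np.random.default_rng(1)
reference = rng.random((64, 64))
frame = np.roll(reference, shift=(3, -2), axis=(0, 1))

shift = estimate_shift(reference, frame)
corrected = np.roll(frame, shift=shift, axis=(0, 1))
print("correction to apply:", shift)
```

The returned value is the correction to apply to the frame, so it has the opposite sign to the displacement that was introduced.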

Protocol 3: Label-Free Visualization of Myelin and Nervous Tissue

Background: Label-free multiphoton techniques, including coherent Raman scattering (stimulated Raman scattering, SRS, and coherent anti-Stokes Raman scattering, CARS), second and third harmonic generation (SHG, THG), and two-photon excited autofluorescence (TPEF), enable visualization of nervous tissue without exogenous labels, providing insights into myelin integrity, degeneration, and regeneration [33].

Materials:

- Multiphoton microscope with multiple non-linear detection channels

- Pulsed laser source capable of simultaneous multi-modal imaging

- Spinal cord or peripheral nerve preparations (fixed or live)

- Vibratome for tissue sectioning (optional for fixed tissue)

- Custom-built chambers for in vivo imaging of exposed nerves

Procedure:

Sample Preparation:

- For in vivo imaging, surgically expose the region of interest or use implanted window chambers.

- For peripheral nerves, consider using intervertebral windows with biocompatible clearing methods.

- No staining or labeling required for intrinsic contrast imaging.

Microscope Configuration:

- Set excitation wavelength to optimize multiple non-linear signals simultaneously (typically 800-1300 nm).

- Configure detection channels for:

- CARS/SRS: Myelin visualization via CH₂ vibration at 2845 cm⁻¹

- THG: Myelin sheaths and tissue interfaces

- TPEF: Cellular autofluorescence from NADH, flavoproteins

- SHG: Collagen in connective tissue sheaths
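
Choosing laser wavelengths and detection bands for these channels comes down to simple wavenumber arithmetic. The sketch below computes the pump wavelength that targets the 2845 cm⁻¹ CH₂ vibration for a given Stokes beam, and the emission wavelengths of the harmonic signals. The 1064 nm Stokes wavelength and the 1200 nm excitation wavelength are illustrative assumptions, not values from the protocol.

```python
def raman_shift_cm1(pump_nm: float, stokes_nm: float) -> float:
    """Raman shift probed by a pump/Stokes pair, in cm^-1."""
    return 1e7 / pump_nm - 1e7 / stokes_nm


def pump_for_shift_nm(stokes_nm: float, shift_cm1: float) -> float:
    """Pump wavelength needed to reach a target Raman shift with a fixed Stokes beam."""
    return 1e7 / (1e7 / stokes_nm + shift_cm1)


def harmonic_emission_nm(excitation_nm: float, order: int) -> float:
    """Emission wavelength of the n-th harmonic (order 2 for SHG, 3 for THG)."""
    return excitation_nm / order


stokes_nm = 1064.0   # assumed Stokes wavelength
pump_nm = pump_for_shift_nm(stokes_nm, 2845.0)
print(f"pump wavelength for 2845 cm^-1: {pump_nm:.1f} nm")

excitation_nm = 1200.0   # assumed excitation wavelength for harmonic imaging
print("SHG emission:", harmonic_emission_nm(excitation_nm, 2), "nm")
print("THG emission:", harmonic_emission_nm(excitation_nm, 3), "nm")
```

Because harmonic signals appear at exact fractions of the excitation wavelength, narrow bandpass filters can separate them from the broader autofluorescence collected in the TPEF channel.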

Multi-Modal Image Acquisition:

- Acquire simultaneous multi-channel images to capture complementary information.

- Adjust laser power and detection gains to balance signals across modalities.

- Acquire z-stacks for 3D reconstruction of myelin architecture.

Data Analysis:

- Quantify myelin integrity through CARS/SRS signal intensity and continuity.

- Assess degeneration/regeneration through changes in myelin organization.

- Correlate multi-modal signals for comprehensive tissue assessment.

Advanced Technical Implementations

Active Illumination for Ultrahigh Dynamic Range Imaging

Conventional multiphoton microscopy suffers from limited dynamic range and often cannot capture bright somata and dim dendritic structures in the same image. Active illumination technology addresses this limitation by implementing real-time negative feedback to regulate laser power pixel-by-pixel [34]. This approach combines simultaneous detection of signal and illumination power with logarithmic representation of sample strength to accommodate ultrahigh dynamic range without information loss [34].

Implementation:

- Integrate FPGA-based control system between detectors and laser modulation input

- Use electro-optic modulator (EOM) for rapid laser power control

- Implement dual detection of fluorescence signal and illumination power

- Apply logarithmic compression to maintain precision across brightness ranges

This technique enables accurate quantification of sample strengths spanning a dynamic range of approximately 10⁸:1, which is particularly beneficial for imaging both large somata and fine dendritic spines in neuronal tissue [34].
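
The feedback principle can be illustrated with a noiseless, single-pixel simulation. This is a conceptual sketch only: the loop gain, power limits, target signal, and function name are assumptions, and the hardware described in [34] performs this regulation in real time with an FPGA and an EOM rather than in software.

```python
import math


def active_illumination_pixel(strength, target_signal=1.0, p_min=1e-3,
                              p_max=1.0, n_iter=20, gain=0.5):
    """Regulate laser power on one pixel so the two-photon signal approaches a target.

    Returns the final power, the detected signal, and log10 of the recovered
    sample strength (signal divided by power squared).
    """
    power = p_max
    for _ in range(n_iter):
        signal = strength * power ** 2
        # Negative feedback in the log domain: too much signal lowers the power.
        error = math.log(signal / target_signal)
        power = power * math.exp(-gain * error / 2.0)
        power = min(p_max, max(p_min, power))
    signal = strength * power ** 2
    log_strength = math.log10(signal / power ** 2)
    return power, signal, log_strength


for strength in (1e-2, 1.0, 1e4, 1e6):
    power, signal, log_strength = active_illumination_pixel(strength)
    print(f"strength={strength:g}  power={power:.4f}  "
          f"signal={signal:.4f}  log10(strength)={log_strength:.2f}")
```

Bright pixels drive the power down and dim pixels leave it at its maximum, so the detector stays within its working range while the ratio of signal to squared power still reports the underlying sample strength.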

Adaptive Optics for Aberration Correction

Optical aberrations caused by tissue heterogeneities and refractive index mismatches degrade image resolution at depth. Incorporating adaptive optics (AO) with multiphoton microscopy restores near-diffraction-limited performance [29]. Modal-based, sensorless AO approaches prove particularly robust for deep imaging where signal-to-noise ratios are low [29].

Implementation:

- Use continuous membrane deformable mirror for wavefront correction

- Employ image-based optimization metrics (sharpness, intensity)