Unbiased Cell Identity: A Guide to High-Content Imaging in Complex Neural Cultures

Abstract

This article explores the transformative role of high-content imaging (HCI) in identifying and characterizing diverse cell types within mixed neural cultures, a critical challenge in neuroscience research and drug development. We cover the foundational principles of HCI and Cell Painting assays that generate unique morphological fingerprints for different neural cells. The piece delves into advanced methodologies, including the implementation of convolutional neural networks (CNNs) like CellSighter for automated classification, and their application in both 2D and 3D culture systems. We also address key troubleshooting and optimization strategies for overcoming hurdles such as high culture density and segmentation errors. Finally, the article provides a rigorous validation and comparative analysis, benchmarking HCI performance against traditional methods and highlighting its superior accuracy and throughput for quality control in iPSC-derived models and preclinical screening.

The Need for Unbiased Identification: From Cellular Heterogeneity to Morphological Fingerprints

The Challenge of Variability in iPSC-Derived Neural Models

The use of induced pluripotent stem cell (iPSC)-derived neural models has revolutionized the study of neurological disorders and drug development by providing unprecedented access to human cell types that are otherwise difficult to obtain [1] [2]. However, a significant challenge impedes the reliability and reproducibility of these models: inherent variability. This variability stems from multiple sources, including genetic background of donors, differences in reprogramming techniques, and inconsistencies in differentiation protocols [2]. For researchers using high-content imaging to identify cell types in mixed neural cultures, this heterogeneity can confound results, leading to misleading conclusions and failed drug screens. This application note details the sources of this variability, provides quantitative methods for its assessment, and outlines standardized protocols to mitigate its impact, ensuring more robust and reproducible research outcomes.

The variability in iPSC-derived neural models is not random but originates from specific, identifiable stages of the cell culture process. Recognizing these sources is the first step toward implementing effective quality control.

- Genetic Background: Inter-individual genetic differences are a major contributor, accounting for 5-46% of the variation in iPSC cellular phenotypes [2]. Lines derived from the same individual are consistently more similar to each other than lines from different donors.

- Reprogramming and Culture Artifacts: The processes of reprogramming somatic cells and subsequent cell culture can introduce somatic mutations and epigenetic variations. The retention of tissue-specific DNA methylation marks from the cell of origin can influence a line's differentiation propensity [2].

- Differentiation Protocol Inconsistencies: The efficiency and outcome of neural differentiation are highly sensitive to minor variations in protocol execution. The use of diverse differentiation protocols (e.g., small molecule-based vs. transcription factor-based) generates neural cells with differing identities, maturity, and purity [1] [2]. This is particularly critical for high-content imaging, as a mixed or impure cellular population can severely skew morphometric analyses.

Table 1: Key Sources of Variability in iPSC-Derived Neural Models

| Source Category | Specific Examples | Impact on Model |
|---|---|---|
| Genetic Background | Donor-specific genetic variation; Expression Quantitative Trait Loci (eQTLs) | Drives 5-46% of phenotypic variation; affects gene expression, differentiation potency [2] |
| Reprogramming & Culture | Somatic mutations; Epigenetic memory of cell of origin; Passage number | Alters genetic stability; influences lineage differentiation bias [2] |
| Differentiation Protocol | Protocol type (e.g., small molecules vs. NGN2 overexpression); Reagent batch variability | Impacts neuronal subtype identity, maturity, and culture purity; can mask disease phenotypes [1] [2] |

Quantitative Assessment of Variability

To control for variability, it must first be quantified. High-content imaging, combined with robust image analysis, provides unbiased metrics to assess the composition and morphology of neural cultures.

High-Content Imaging and Cell Type Identification

Traditional validation methods like flow cytometry are destructive and low-throughput. An advanced alternative employs high-content imaging based on the Cell Painting (CP) assay, which uses fluorescent dyes to label multiple cellular compartments [3]. When combined with Convolutional Neural Networks (CNNs), this approach can identify and classify different cell types (e.g., neural progenitors, postmitotic neurons, microglia) in dense, mixed cultures with an accuracy above 96% [3]. This method is non-destructive, scalable, and provides a powerful tool for quality control before proceeding to more specialized functional assays.

Morphometric Analysis for Neuronal Health and Phenotype

Quantifying neurite outgrowth and branching is a fundamental readout for neuronal development and health. Spatial Light Interference Microscopy (SLIM) is a label-free, quantitative phase imaging technique that allows for long-term, non-destructive measurement of neurite dynamics [4]. The resulting images can be analyzed with semi-automated tracing software like NeuronJ (an ImageJ plugin) to quantify parameters such as total neurite length, number of branches, and growth rates over time [4]. Studies using SLIM have demonstrated that neurite growth rates are highly dependent on cell confluence, with neurons in low-confluence conditions exhibiting significantly higher growth rates than those in medium- or high-confluence conditions [4].

Table 2: Quantitative Metrics for Assessing Neural Cultures

| Assessment Method | Key Measurable Parameters | Significance in Model Validation |
|---|---|---|
| Cell Painting + CNN Classification [3] | Cell type classification accuracy; Proportion of neurons vs. progenitors | Ensures culture composition and purity; critical for reproducible phenotyping |
| SLIM + NeuronJ Tracing [4] | Neurite length (μm); Number of branch points; Growth rate over time | Unbiased, label-free readout of neuronal health, maturation, and network formation |
| Network Science Analysis [5] | Degree centrality; Assortativity coefficient; Clustering coefficient | Reveals self-optimizing topology and information flow capacity of the neuronal network |
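
As an illustration of the network metrics listed in the table, the sketch below computes degree centrality and the local clustering coefficient for a small undirected graph using only the Python standard library. The graph itself (`network`, with nodes `n1` to `n4`) is a hypothetical example, not data from the cited study, and the assortativity coefficient is omitted for brevity.

```python
from itertools import combinations


def degree_centrality(adjacency):
    """Fraction of the other nodes that each node is directly connected to."""
    n = len(adjacency)
    if n < 2:
        return {node: 0.0 for node in adjacency}
    return {node: len(neighbors) / (n - 1) for node, neighbors in adjacency.items()}


def clustering_coefficient(adjacency, node):
    """Fraction of a node's neighbor pairs that are themselves connected."""
    neighbors = adjacency[node]
    k = len(neighbors)
    if k < 2:
        return 0.0
    links = sum(1 for a, b in combinations(neighbors, 2) if b in adjacency[a])
    return 2 * links / (k * (k - 1))


# Hypothetical undirected network: neurons as nodes, traced connections as edges.
network = {
    "n1": {"n2", "n3", "n4"},
    "n2": {"n1", "n3"},
    "n3": {"n1", "n2"},
    "n4": {"n1"},
}

centrality = degree_centrality(network)
cc_n1 = clustering_coefficient(network, "n1")
```

In this toy graph, `n1` touches every other node, so its degree centrality is 1.0, while only one of its three neighbor pairs is connected, giving a clustering coefficient of 1/3.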

Protocols for Mitigating Variability

The following protocols are designed to standardize the generation and analysis of iPSC-derived neural cultures, thereby reducing unwanted variability.

Protocol: Quality Control via Cell Painting and CNN Classification

This protocol provides a workflow for non-destructively validating the composition of a mixed neural culture prior to a dedicated experiment [3].

Workflow Diagram: Cell Type Identification

Materials:

- Research Reagent Solutions:

- Cell Painting Dyes: Mixture of fluorescent dyes staining nuclei, nucleoli, cytoskeleton, Golgi, and endoplasmic reticulum [3].

- Imaging Medium: Phenol-red free culture medium to reduce background fluorescence.

- Fixed Cell Preparation: Cells are typically fixed for compatibility with subsequent assays.

Method:

- Cell Seeding: Plate dissociated neural cells at a defined density in a 384-well microplate suitable for high-content imaging.

- Staining: Follow the standard Cell Painting protocol to stain the cells with the panel of fluorescent dyes.

- Image Acquisition: Use an automated confocal screening microscope to acquire 4-channel images from multiple sites per well.

- Image Analysis:

  - Use a deep learning-based algorithm (e.g., a ResNet CNN) for robust cell segmentation, even in dense cultures.

  - Train the CNN classifier on a reference set of images with known cell identities.

  - Apply the trained model to new images to classify each cell (e.g., neuron, progenitor, microglia).

- Quality Control Decision: Calculate the percentage of the desired cell type. Proceed with the experiment only if the purity meets a pre-defined threshold (e.g., >80%).
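
The quality control decision in the final step can be sketched as follows. This is a minimal example that assumes the classifier output is available as one predicted label per cell; the function names, the label strings, and the example well are hypothetical, and the 80% threshold simply mirrors the example value given above.

```python
from collections import Counter


def culture_composition(predicted_labels):
    """Return the fraction of cells assigned to each predicted class."""
    counts = Counter(predicted_labels)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no classified cells in this well")
    return {label: count / total for label, count in counts.items()}


def passes_qc(predicted_labels, target="neuron", threshold=0.80):
    """True when the target cell type exceeds the purity threshold."""
    return culture_composition(predicted_labels).get(target, 0.0) > threshold


# Hypothetical per-cell predictions for one well.
well_labels = ["neuron"] * 85 + ["progenitor"] * 10 + ["microglia"] * 5

composition = culture_composition(well_labels)
decision = passes_qc(well_labels)
```

The comparison is strict (`>`), matching the ">80%" wording; a well at exactly 80% purity would be rejected under this assumption.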

Protocol: Label-Free Neurite Outgrowth Quantification

This protocol uses SLIM to non-invasively track the development of neuronal processes over time, providing unbiased morphometric data [4].

Workflow Diagram: Neurite Outgrowth Analysis

Materials:

- Research Reagent Solutions:

- Primary Neurons: Cortical neurons from postnatal (P0-P1) mice [4].

- Coating Reagent: Poly-D-lysine to promote neuronal adhesion.

- Culture Media: Plating and maintenance media as specified in the method.

Method:

- Cell Culture: Plate primary cortical neurons (or iPSC-derived neurons) at defined, low-to-medium confluence on poly-D-lysine-coated glass-bottom dishes. High confluence leads to "shingling" (overlapping neurites), which complicates accurate tracing [4].

- SLIM Imaging: Place the culture dish on the SLIM microscope stage within an environmental chamber (37°C, 5% CO₂). Acquire quantitative phase images every few hours over several days.

- Neurite Tracing with NeuronJ:

  - Import the SLIM image sequences into ImageJ with the NeuronJ plugin.

  - Manually trace each neurite emerging from the soma, designating them as axons or dendrites based on morphology (axons are longer and of constant diameter; dendrites are thicker and taper).

  - Use the batch processing function to extract length measurements for all traced neurites.

- Data Analysis: Calculate the average neurite length per neuron and per neurite over time. Compare growth rates under different experimental conditions. As a reference, neurons in low-confluence conditions show a steady and higher growth rate compared to those in medium-confluence conditions [4].
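
One way to turn the traced lengths into a growth rate is a least-squares slope of mean neurite length against time. The sketch below assumes the NeuronJ measurements have already been exported and grouped by time point; the data structure, the time points, and all length values are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def mean(values):
    """Arithmetic mean of a non-empty sequence."""
    return sum(values) / len(values)


def growth_rate(hours, mean_lengths_um):
    """Least-squares slope of mean neurite length vs. time (um per hour)."""
    t_bar, l_bar = mean(hours), mean(mean_lengths_um)
    numerator = sum((t - t_bar) * (l - l_bar) for t, l in zip(hours, mean_lengths_um))
    denominator = sum((t - t_bar) ** 2 for t in hours)
    return numerator / denominator


# Hypothetical traced neurite lengths (um) at each imaging time point.
hours = [0, 6, 12, 18]
traces = {
    "low_confluence": [[20, 24], [32, 36], [44, 48], [56, 60]],
    "medium_confluence": [[20, 22], [26, 28], [32, 34], [38, 40]],
}

rates = {
    condition: growth_rate(hours, [mean(lengths) for lengths in per_timepoint])
    for condition, per_timepoint in traces.items()
}
```

With these illustrative numbers, the low-confluence condition grows at 2 μm/h and the medium-confluence condition at 1 μm/h; real data would need replicate cultures and an appropriate statistical comparison.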

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Reagents for iPSC Neural Differentiation and Imaging

| Reagent / Tool | Function | Application Note |
|---|---|---|
| LDN193189 & SB431542 | Small molecule inhibitors for dual SMAD inhibition; drive neural induction [1] | Foundational step in most small molecule-based differentiation protocols. |
| Retinoic Acid (RA) & SHH Agonists | Promote caudal and ventral patterning toward motor neuron fate [1] | Critical for obtaining specific neuronal subtypes. |
| Cell Painting Dye Panel [3] | Fluorescent dyes for staining multiple organelles to create a morphological fingerprint | Enables high-content screening and unbiased cell classification via CNN. |
| MitoTracker Dyes [6] | Cell-permeant dyes that accumulate in mitochondria based on membrane potential | Allows live-cell imaging of mitochondrial health and dynamics, key in neurodegeneration models. |
| Adeno-Associated Viral (AAV) Vectors [6] | Efficient viral transduction for stable expression of fluorescent reporters (e.g., Mito-GFP) in neurons | Provides specific, high signal-to-noise labeling for long-term studies. |

Variability in iPSC-derived neural models is a formidable but surmountable challenge. By understanding its sources and implementing rigorous quantitative assessments—such as Cell Painting with CNN classification for purity and SLIM imaging for neurite outgrowth—researchers can significantly enhance the reliability of their models. The protocols and tools detailed herein provide a practical framework for standardizing cultures. Adopting these strategies is crucial for generating biologically relevant, reproducible data that can accelerate the discovery of therapeutics for neurological diseases.

Why Traditional Cell Validation Methods Fall Short

In the rapidly advancing field of mixed neural culture research, the limitations of traditional cell validation methods have become a critical bottleneck. Techniques such as sequencing, flow cytometry, and immunocytochemistry, while valuable, are often low in throughput, costly, and destructive, hindering their utility for comprehensive quality control in complex, heterogeneous cellular systems [3]. This application note details these shortcomings and presents high-content imaging (HCI) and morphological profiling as transformative solutions, providing researchers with robust, scalable, and information-rich alternatives for characterizing cell identity and state in physiologically relevant models.

The Critical Shortcomings of Traditional Validation Methods

Current standards for validating cell culture composition, particularly in induced pluripotent stem cell (iPSC)-derived neural models, rely on a combination of methods. However, these approaches present significant challenges for modern, dense, and mixed culture systems.

Table 1: Limitations of Traditional Cell Validation Methods

| Method | Key Limitations | Impact on Research and Development |
|---|---|---|
| Sequencing [3] | Lacks spatial and morphological context; destructive, preventing longitudinal studies; moderate throughput | Incomplete picture of cellular state; unable to track temporal changes in the same culture. |
| Flow Cytometry [3] [7] | Requires cell dissociation, losing 2D/3D architectural context; limited number of simultaneous markers due to spectral overlap; destructive | Loss of critical information on cell-cell interactions and spatial organization of cell types. |
| Immunocytochemistry (ICC) [7] | Typically low-throughput and labor-intensive; subjective or semi-quantitative analysis; limited multiplexing capability | Low scalability for screening applications; introduces user bias; difficult to profile many targets at once. |

These limitations are particularly problematic for iPSC-derived neural cultures, where genetic drift, clonal variation, and differentiation protocol inconsistencies lead to significant variability in the final cellular composition [3]. The inability to perform rapid, non-destructive quality control hinders experimental reproducibility and the reliable use of these models in systematic drug screening pipelines [3] [7].

High-Content Imaging as a Transformative Solution

High-content imaging (HCI) overcomes these barriers by combining automated microscopy with multi-parametric image analysis to quantify cellular and subcellular features in a high-throughput manner [8] [9]. Unlike traditional methods, HCI preserves the spatial context of cells and can be applied to the same sample over time for live-cell imaging.

Key Technological Advantages

- Multiplexed Morphological Profiling: Assays like Cell Painting use a panel of fluorescent dyes to label multiple organelles (e.g., nucleus, endoplasmic reticulum, mitochondria, Golgi apparatus, cytoskeleton), generating thousands of quantitative morphological features per cell [3] [10]. This creates a unique "morphotextural fingerprint" for different cell types and states.

- Single-Cell Resolution in Complex Cultures: Advanced image analysis software (e.g., CellProfiler) and machine learning models, particularly Convolutional Neural Networks (CNNs), can segment and classify individual cells even in dense, mixed cultures with high accuracy (exceeding 96% in benchmark studies) [3].

- Scalability and Automation: Fully integrated high-content screening (HCS) platforms enable the automated acquisition and analysis of images from 384-well or 1536-well microplates, making it feasible to profile hundreds of conditions in a single experiment [11] [12].

Table 2: Quantitative Performance of HCI vs. Traditional Methods in Neural Cultures

| Application Context | HCI Approach | Reported Performance | Traditional Method Comparison |
|---|---|---|---|
| Cell Line Classification [3] | Cell Painting + Random Forest | F-score: 0.75 ± 0.01 | N/A - Baseline |
| Cell Line Classification [3] | Cell Painting + Convolutional Neural Network | Accuracy > 96% | Significant improvement over RF classifier |
| iPSC Neural Culture QC [3] | Regionally-restricted morphological profiling | 96% prediction accuracy | Outperformed population-level classification (86% accuracy) |
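
The table reports both F-scores and accuracies, which are related but distinct metrics. The sketch below shows how each can be computed from per-cell ground-truth and predicted labels. The label vectors are invented for illustration, and the macro-averaging of per-class F-scores is an assumption, since the averaging scheme used in the cited benchmark is not stated here.

```python
def accuracy(y_true, y_pred):
    """Fraction of cells whose predicted label matches the ground truth."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def f_score(y_true, y_pred, label):
    """Harmonic mean of precision and recall for a single class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == label and p == label for t, p in pairs)
    fp = sum(t != label and p == label for t, p in pairs)
    fn = sum(t == label and p != label for t, p in pairs)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def macro_f_score(y_true, y_pred):
    """Unweighted mean of per-class F-scores (assumed averaging scheme)."""
    labels = sorted(set(y_true))
    return sum(f_score(y_true, y_pred, label) for label in labels) / len(labels)


# Hypothetical labels for eight cells from two cell lines.
y_true = ["1321N1"] * 4 + ["SH-SY5Y"] * 4
y_pred = ["1321N1", "1321N1", "1321N1", "SH-SY5Y",
          "SH-SY5Y", "SH-SY5Y", "SH-SY5Y", "1321N1"]

acc = accuracy(y_true, y_pred)
macro_f = macro_f_score(y_true, y_pred)
```

For this balanced toy example both metrics equal 0.75; with imbalanced classes they can diverge considerably, which is worth keeping in mind when comparing rows of the table.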

The following workflow diagram illustrates a typical high-content imaging and analysis pipeline for cell validation in mixed neural cultures:

Detailed Experimental Protocol: Cell Type Identification in Mixed Neural Cultures via NeuroPainting

This protocol, adapted from the NeuroPainting assay, is optimized for the morphological profiling of human iPSC-derived neural cell types, including neurons, progenitors, and astrocytes [10].

Research Reagent Solutions

Table 3: Essential Reagents and Materials for NeuroPainting

| Item | Function / Description | Example Catalog Numbers |
|---|---|---|
| CellCarrier-96 Ultra Microplates [7] | 96-well, low-skirted, SBS-footprint plates for imaging | PerkinElmer (6055300) |
| Hoechst 33342 [9] | Stains DNA; labels nuclei for segmentation and analysis. | Thermo Fisher Scientific (H3570) |
| Concanavalin A, Alexa Fluor 488 Conjugate [10] | Labels endoplasmic reticulum (ER). | Thermo Fisher Scientific (C11252) |
| Wheat Germ Agglutinin (WGA), Alexa Fluor 555 Conjugate [10] | Labels plasma membrane and Golgi apparatus. | Thermo Fisher Scientific (W32464) |
| Phalloidin, Alexa Fluor 568 Conjugate [10] | Labels F-actin in the cytoskeleton. | Thermo Fisher Scientific (A12380) |
| SYTO 14 Green Fluorescent Nucleic Acid Stain [10] | Labels nucleoli and cytoplasmic RNA. | Thermo Fisher Scientific (S7576) |
| MitoTracker Deep Red [10] | Labels mitochondria. | Thermo Fisher Scientific (M22426) |
| Automated Imaging System | Confocal, high-content microscope with environmental control. | PerkinElmer Opera Phenix [7] [10] |
| Image Analysis Software | Open-source software for creating custom analysis pipelines. | CellProfiler [6] [10] |

Step-by-Step Procedure

Part 1: Cell Seeding and Fixation

- Plate Preparation: Coat 96-well microplates with an appropriate extracellular matrix (e.g., Poly-L-ornithine/Laminin for neurons) [7].

- Cell Seeding: Seed dissociated iPSC-derived neural cells at optimized densities to ensure healthy morphology without overcrowding [10].

  - Neurons: 2,500 cells/well, fix 25 days post-plating.

  - Astrocytes: 3,000 cells/well, fix 48 hours post-plating.

  - Neural Progenitor Cells (NPCs): 15,000 cells/well, fix 24 hours post-plating [10].

- Fixation: At the desired time point, aspirate the medium and add 4% formaldehyde in PBS for 20 minutes at room temperature.

- Permeabilization and Washing: Wash wells twice with PBS, then permeabilize cells with 0.1% Triton X-100 in PBS for 15 minutes. Wash twice more with PBS.

Part 2: NeuroPainting Staining

- Prepare the staining cocktail in PBS containing 1% BSA with the following dyes [10]:

  - Hoechst 33342 (1:2000)

  - Concanavalin A, Alexa Fluor 488 (1:200)

  - Wheat Germ Agglutinin, Alexa Fluor 555 (1:200)

  - Phalloidin, Alexa Fluor 568 (1:200)

  - SYTO 14 (1:200)

  - MitoTracker Deep Red (1:200)

- Add the staining cocktail to each well and incubate for 30 minutes at room temperature, protected from light.

- Aspirate the cocktail and wash the wells three times with PBS.

- Leave a small volume of PBS in the wells to prevent drying. Seal the plate and proceed to imaging or store at 4°C in the dark.
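
The dilutions listed in Part 2 translate into pipetting volumes once a per-well staining volume is chosen. The sketch below works through that arithmetic under several assumptions that are not specified in the protocol: each ratio is read as one part stock per stated number of parts final volume, the staining volume is 50 μL per well, and 10% overage is prepared to cover pipetting losses.

```python
# Dilution factors taken from the staining list above (1:N read as 1 part in N total).
DILUTION_FACTORS = {
    "Hoechst 33342": 2000,
    "Concanavalin A, Alexa Fluor 488": 200,
    "Wheat Germ Agglutinin, Alexa Fluor 555": 200,
    "Phalloidin, Alexa Fluor 568": 200,
    "SYTO 14": 200,
    "MitoTracker Deep Red": 200,
}


def cocktail_volumes(n_wells, ul_per_well, overage=1.1):
    """Return total cocktail volume, stock volume per dye, and buffer volume (all in uL)."""
    total_ul = n_wells * ul_per_well * overage
    stock_ul = {dye: total_ul / factor for dye, factor in DILUTION_FACTORS.items()}
    buffer_ul = total_ul - sum(stock_ul.values())
    return total_ul, stock_ul, buffer_ul


# Assumed example: one full 96-well plate at 50 uL per well with 10% overage.
total_ul, stock_ul, buffer_ul = cocktail_volumes(n_wells=96, ul_per_well=50)
```

For this assumed plate, the cocktail totals 5,280 μL, of which 2.64 μL is Hoechst stock, 26.4 μL is each of the other five stocks, and the remainder is PBS with 1% BSA. Stock concentrations vary by supplier, so the ratios should be checked against the product sheets actually in use.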

Part 3: Automated Image Acquisition

- Use a high-content confocal imaging system (e.g., PerkinElmer Opera Phenix) with a 20x or 40x objective [10].

- Define the imaging fields per well to capture a statistically significant number of cells (e.g., 10-20 fields/well for a 96-well plate).

- Acquire images in all fluorescent channels corresponding to the dyes used. Ensure consistent exposure times and laser powers across all plates in a study.

Part 4: Image Analysis and Feature Extraction

- Cell Segmentation: Use a customized CellProfiler pipeline to identify individual cells and subcellular compartments [10].

  - Identify nuclei using the Hoechst channel.

  - Identify whole-cell regions using the combined membrane (WGA) and cytoskeletal (Phalloidin) signals.

- Feature Extraction: For each segmented cell, extract ~4,000 morphological features describing the size, shape, intensity, and texture of each stained compartment [10].

- Data Preprocessing: Perform well-level averaging and preprocess the data (low-variance filtering, robust standardization, correlation-based feature selection) to yield a final set of ~700 informative morphological features [10].
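
The three preprocessing steps named above (low-variance filtering, robust standardization, correlation-based feature selection) can be sketched in plain Python as follows. This is not the pipeline used in the cited work: the thresholds `min_variance` and `max_abs_corr`, the greedy selection order, the MAD scaling constant, and the example feature names and values are all assumptions made for illustration.

```python
from statistics import median, pvariance


def robust_standardize(values):
    """Center on the median and scale by the median absolute deviation (MAD)."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return [0.0 for _ in values]
    return [(v - med) / (1.4826 * mad) for v in values]


def pearson(x, y):
    """Pearson correlation; returns 0.0 if either vector is constant."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy) if sx and sy else 0.0


def preprocess(features, min_variance=1e-6, max_abs_corr=0.9):
    """features maps a feature name to its well-level values."""
    # 1) Drop features that barely vary across wells.
    kept = {name: vals for name, vals in features.items() if pvariance(vals) > min_variance}
    # 2) Robustly standardize each remaining feature.
    scaled = {name: robust_standardize(vals) for name, vals in kept.items()}
    # 3) Greedily keep a feature only if it is not highly correlated with one already kept.
    selected = []
    for name in scaled:
        if all(abs(pearson(scaled[name], scaled[s])) < max_abs_corr for s in selected):
            selected.append(name)
    return {name: scaled[name] for name in selected}


# Hypothetical well-level features for five wells.
features = {
    "nucleus_area": [100, 120, 140, 160, 180],
    "nucleus_area_px": [200, 240, 280, 320, 360],  # redundant with nucleus_area
    "constant_feature": [1, 1, 1, 1, 1],           # no variance
    "er_texture": [0.5, 0.1, 0.9, 0.2, 0.4],
}

profile = preprocess(features)
```

Here the constant feature is removed by the variance filter and the redundant area feature by the correlation filter, leaving `nucleus_area` and `er_texture`.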

The following diagram illustrates the logical relationship between the assay output and the analytical steps that lead to biological insight:

Concluding Remarks

Traditional cell validation methods, while foundational, are no longer sufficient for the demands of modern, complex neural culture systems. Their destructive nature, low throughput, and lack of spatial context impede progress in disease modeling and drug discovery. High-content imaging and morphological profiling, as exemplified by the NeuroPainting assay, provide a robust, scalable, and information-rich framework for quantitative cell validation. By adopting these advanced techniques, researchers can achieve unprecedented resolution in characterizing cellular identity and state, ultimately enhancing the reliability and translational potential of their iPSC-based neural models.

High-content imaging (HCI) is a powerful phenotypic screening method that uses automated microscopy to extract quantitative data from cellular images [13]. Unlike conventional assays that measure only one or two features, HCI captures vast amounts of morphological information, making it particularly valuable for detecting subtle phenotypes in complex systems like mixed neural cultures [13] [14].

Cell Painting is a specific, highly multiplexed morphological profiling assay that employs a suite of fluorescent dyes to visualize multiple cellular components simultaneously [13] [15]. By "painting" different organelles and structures, it generates a rich, high-dimensional readout of cellular state. When applied to mixed neural cultures derived from induced pluripotent stem cells (iPSCs), this approach provides a powerful tool for quantifying cell composition and identifying cell types based on their intrinsic "morphotextural fingerprint," even in dense co-cultures [14].

Core Principles of the Technologies

Fundamental Concepts of Morphological Profiling

Morphological profiling involves quantifying hundreds to thousands of morphological features from microscopy images to create a unique fingerprint for each sample or perturbation [13]. This approach is fundamentally unbiased, as it does not target specific pathways, allowing for discoveries unconstrained by prior biological assumptions [13]. The core principle is that biological perturbations—whether chemical, genetic, or disease-related—induce specific, detectable changes in cellular architecture.

- Feature Extraction: Automated image analysis software identifies individual cells and measures approximately 1,500 morphological features, including various measures of size, shape, texture, and intensity [13] [15].

- Profile Comparison: Profiles of cell populations treated with different experimental perturbations are compared to identify biologically relevant similarities and differences [13].

- Single-Cell Resolution: Unlike some profiling methods, morphological profiling with Cell Painting enables analysis at single-cell resolution, allowing detection of perturbations even in subsets of cells within a population [13].
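
The profile comparison step can be illustrated with a minimal sketch: single-cell feature vectors are collapsed into one profile per sample, and profiles are then compared by correlation. Median aggregation and Pearson correlation are common choices but are assumptions here, and the three tiny samples (two control replicates and one treated sample) are invented for the example.

```python
from statistics import median


def aggregate_profile(cells):
    """Collapse single-cell feature vectors into one median profile per sample."""
    return [median(column) for column in zip(*cells)]


def pearson(x, y):
    """Pearson correlation between two equally long profiles."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sum((a - mx) ** 2 for a in x) ** 0.5
    sy = sum((b - my) ** 2 for b in y) ** 0.5
    return cov / (sx * sy)


# Hypothetical standardized features (3 features) for 3 cells per sample.
control_a = [[0.1, -0.2, 0.0], [0.3, 0.0, 0.2], [0.2, -0.1, 0.1]]
control_b = [[0.2, -0.2, 0.1], [0.4, 0.0, 0.1], [0.3, -0.1, 0.2]]
treated = [[-1.0, 2.0, 0.5], [-1.2, 1.8, 0.7], [-0.8, 2.2, 0.6]]

profile_a = aggregate_profile(control_a)
profile_b = aggregate_profile(control_b)
profile_t = aggregate_profile(treated)

similarity_controls = pearson(profile_a, profile_b)
similarity_treated = pearson(profile_a, profile_t)
```

In this toy case the two control profiles are strongly positively correlated, whereas the treated profile is anti-correlated with the control, which is the kind of contrast profile comparison is meant to expose. Real profiles contain hundreds of features, and three features are used only to keep the example readable.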

The Cell Painting Assay Mechanism

Cell Painting uses six fluorescent stains imaged in five channels to reveal eight broadly relevant cellular components or organelles [13] [15]. This comprehensive labeling strategy provides a holistic view of cellular morphology.

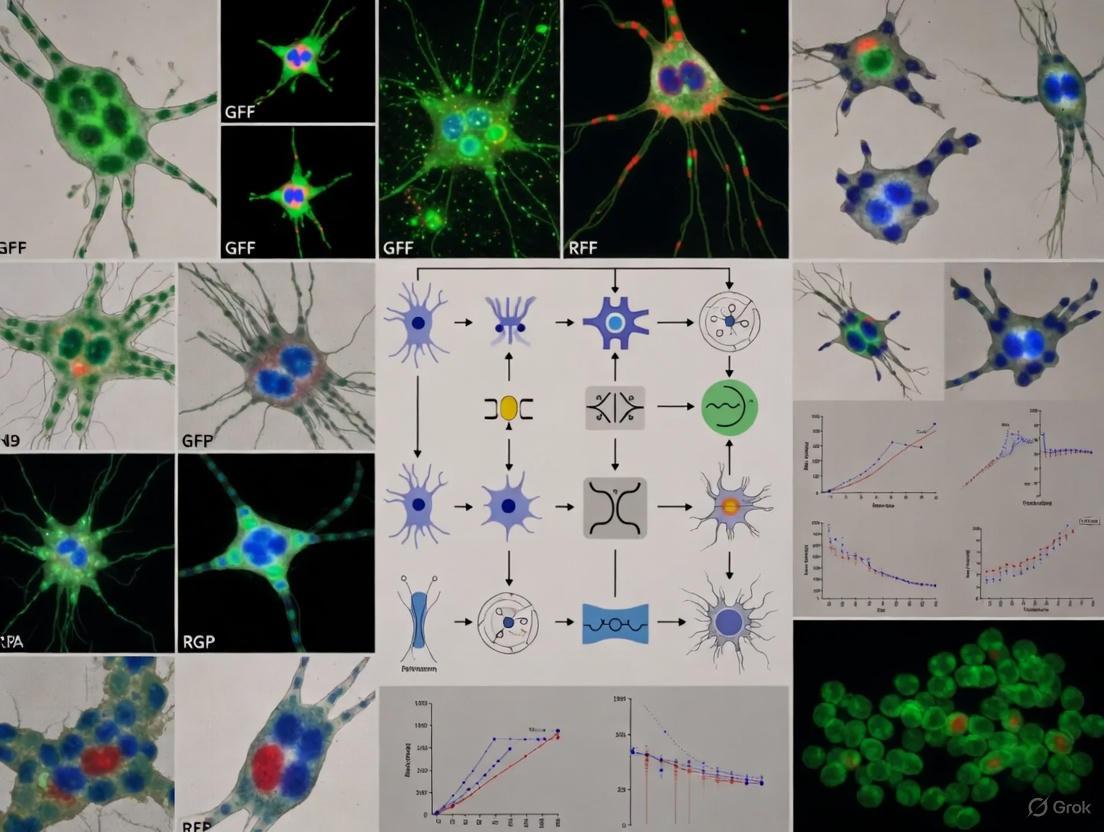

Figure 1: Cell Painting staining targets and experimental workflow from sample preparation to data analysis.

Application in Mixed Neural Culture Research

Challenges in Neural Culture Characterization

iPSC technology has revolutionized neuroscience by enabling generation of human brain-resident cell types, including neurons, astrocytes, microglia, and oligodendrocytes [14]. However, genetic drift and batch-to-batch heterogeneity cause significant variability in reprogramming and differentiation efficiency, hindering the use of iPSC-derived systems in systematic drug screening or cell therapy pipelines [14]. Traditional validation methods like sequencing, flow cytometry, and immunocytochemistry are often low-throughput, costly, and/or destructive [14].

Cell Painting for Cell Type Identification

Research has demonstrated that Cell Painting can distinguish neural cell types with high accuracy based on their morphological profiles [14]. In one study, traditional morphotextural feature extraction from cells and their nuclei provided sufficient distinctive power to separate astrocyte-derived 1321N1 astrocytoma cells from neural crest-derived SH-SY5Y neuroblastoma cells without replicate bias [14]. The study found that both texture (e.g., energy, homogeneity) and shape (e.g., nuclear area, cellular area) metrics contributed to this separation [14].
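
To make the texture metrics mentioned above more concrete, the sketch below computes energy and homogeneity from a gray-level co-occurrence matrix (GLCM) for horizontally adjacent pixels. The definitions used (energy as the sum of squared co-occurrence probabilities, homogeneity as the inverse difference moment) follow one common convention; image analysis tools differ in the exact formulas, so this should not be read as the implementation used in the cited study. The two tiny images are hypothetical.

```python
def glcm(image, levels):
    """Normalized, symmetric co-occurrence matrix for horizontally adjacent pixels."""
    counts = [[0] * levels for _ in range(levels)]
    for row in image:
        for a, b in zip(row, row[1:]):
            counts[a][b] += 1
            counts[b][a] += 1
    total = sum(map(sum, counts))
    return [[c / total for c in r] for r in counts]


def energy(p):
    """Sum of squared co-occurrence probabilities (high for uniform textures)."""
    return sum(v * v for row in p for v in row)


def homogeneity(p):
    """Inverse difference moment (high when neighboring pixels have similar levels)."""
    return sum(v / (1 + (i - j) ** 2) for i, row in enumerate(p) for j, v in enumerate(row))


# Hypothetical images quantized to two gray levels.
uniform = [[1, 1, 1], [1, 1, 1]]
checkerboard = [[0, 1, 0], [1, 0, 1]]

uniform_energy = energy(glcm(uniform, levels=2))
checker_energy = energy(glcm(checkerboard, levels=2))
checker_homogeneity = homogeneity(glcm(checkerboard, levels=2))
```

The uniform patch yields an energy of 1.0, while the checkerboard yields 0.5 for both energy and homogeneity, reflecting its alternating gray levels.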

Convolutional Neural Networks (CNNs) have proven particularly effective for this application, significantly outperforming random forest classification (96.0% accuracy vs. 71.0%) in cell type prediction [14]. This approach uses image crops centered around individual cells as input rather than relying on extraction of features from segmented cell objects [14]. Gradient-weighted Class Activation Mapping (Grad-CAM) revealed that cell borders, nuclear, and nucleolar signals were the most distinctive features for classification [14].

Robustness in Dense Cultures

A significant advantage for neural culture applications is that nucleocentric morphological profiling maintains accuracy even in very dense cultures [14]. While classification accuracy decreased slightly at 95-100% confluency (92.0% vs. >96% at lower densities), performance remained remarkably robust [14]. This is particularly valuable for iPSC-derived cultures that often reach high densities and form complex cellular networks.

Experimental Protocols

Cell Painting Protocol for Morphological Profiling

The following protocol outlines the standard Cell Painting procedure, with specific considerations for neural culture applications:

- Cell Plating: Plate cells in 96- or 384-well multi-well plates at the desired confluency. For iPSC-derived neural cultures, optimize plating density to account for differentiation time and expected proliferation rates [15].

- Treatment/Perturbation: Apply experimental perturbations via chemical treatments (small molecules at 1-100 μM final concentrations) or genetic modifications. For neural differentiation studies, treatment typically occurs after plating and may extend for 48 hours or longer depending on the biological question [15].

- Fixation and Staining:

  - Fix cells with appropriate fixative (e.g., 4% formaldehyde)

  - Permeabilize cells (e.g., with 0.1% Triton X-100)

  - Stain with the Cell Painting dye cocktail [15]

- Image Acquisition: Acquire images on a high-content screening system. For 5-channel Cell Painting, image acquisition time varies based on samples per well, brightness, and z-dimension sampling [15]. Confocal capabilities may be necessary for thick samples or maximum sensitivity [15].

- Analysis: Use automated software to extract ~1,500 morphological features from each cell. For neural cultures, employ deep learning approaches (CNNs) for optimal cell classification accuracy [14].

Table 1: Cell Painting Staining Protocol Components and Specifications

| Dye Target | Specific Dye Examples | Cellular Compartment | Staining Purpose |
|---|---|---|---|
| Nuclei & Nucleoli | Hoechst 33342 | DNA in nucleus & nucleoli | Segmentation anchor; nuclear morphology & cell cycle [13] [15] |
| Endoplasmic Reticulum | Concanavalin A, Alexa Fluor conjugates | ER membrane | ER organization, distribution & structure [13] |
| Golgi Apparatus & Plasma Membrane | Wheat Germ Agglutinin, Alexa Fluor conjugates | Golgi complex & plasma membrane | Golgi integrity, cell surface features & shape [13] [15] |
| Actin Cytoskeleton | Phalloidin, Alexa Fluor conjugates | Filamentous actin | Cytoskeletal organization, cell shape & motility [15] |
| Nucleoli & Cytoplasmic RNA | SYTO 14 | Nucleoli & cytoplasmic RNA | Nucleolar morphology & RNA distribution [13] |
| Mitochondria | MitoTracker Deep Red | Mitochondria | Mitochondrial morphology, distribution & metabolic state [13] |

Image Analysis and Feature Extraction Workflow

The image analysis pipeline transforms raw images into quantitative morphological profiles suitable for cell type identification and characterization.

Figure 2: Image analysis workflow from raw data to biological insights in mixed neural cultures.

Quantitative Profiling and Machine Learning Classification

For mixed neural cultures, the analytical approach shifts from population-level profiling to single-cell classification. The process involves:

- Traditional Feature-Based Analysis: Extract standardized morphotextural features from cells and nuclei, then visualize using UMAP for population separation [14].

- Deep Learning Implementation: Use ResNet convolutional neural networks with image crops centered around individual cells as input [14].

- Model Training: Train CNNs with sufficient biological replicates to ensure generalizability. Models trained on multiple replicates outperform those trained on single replicates when predicting instances from a third unseen replicate [14].

- Nucleocentric Approach: For dense neural cultures, use the nuclear region of interest and its immediate environment as input, which maintains accuracy even when whole-cell segmentation becomes challenging [14].
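
The nucleocentric input described in the last item amounts to cutting a fixed-size patch around each nucleus centroid. The sketch below shows this on a plain nested-list image, padding with a fill value where the patch extends past the image border. The patch size, the padding strategy, and the function name are assumptions; the source does not specify how crops were generated, and a real pipeline would typically operate on multi-channel arrays.

```python
def nucleocentric_crop(image, center, size, fill=0):
    """Return a size x size patch centered on a nucleus centroid.

    Pixels that fall outside the image are replaced with `fill`.
    """
    row_c, col_c = center
    half = size // 2
    height, width = len(image), len(image[0])
    patch = []
    for r in range(row_c - half, row_c - half + size):
        patch_row = []
        for c in range(col_c - half, col_c - half + size):
            inside = 0 <= r < height and 0 <= c < width
            patch_row.append(image[r][c] if inside else fill)
        patch.append(patch_row)
    return patch


# Hypothetical 5 x 5 single-channel image whose pixel value encodes its position.
image = [[10 * r + c for c in range(5)] for r in range(5)]

center_patch = nucleocentric_crop(image, center=(2, 2), size=3)
corner_patch = nucleocentric_crop(image, center=(0, 0), size=3)
```

Because each patch depends only on the nucleus position, this input can still be generated when whole-cell boundaries are ambiguous, which is the property the nucleocentric approach relies on in dense cultures.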

Table 2: Performance Comparison of Cell Analysis Methods in Neural Cultures

| Methodological Aspect | Traditional Feature Extraction + Random Forest | CNN with Whole-Cell Input | Nucleocentric CNN |
|---|---|---|---|
| Overall Classification Accuracy | 71.0±1.0% [14] | 96.0±1.8% [14] | >96% at low-moderate density, 92.0±1.7% at 95-100% confluency [14] |
| Key Differentiating Features | Nuclear texture energy, cellular area, DAPI contrast [14] | Cell borders, nuclear & nucleolar signals [14] | Nuclear & perinuclear morphology |
| Performance in Dense Cultures | Poor (segmentation challenges) | Decreased accuracy | Maintains high accuracy [14] |
| Implementation Complexity | Moderate | High | High |
| Interpretability | High (direct feature analysis) | Low (requires Grad-CAM) | Low (requires Grad-CAM) |

Research Reagent Solutions

Essential materials and reagents for implementing Cell Painting in neural culture research:

Table 3: Essential Research Reagents for Cell Painting Applications

| Reagent Category | Specific Examples | Function in Assay |
|---|---|---|
| Cell Painting Kits | Image-iT Cell Painting Kit [15] | Pre-optimized reagent set containing all necessary fluorescent dyes for standardized implementation |
| Individual Fluorescent Dyes | Hoechst 33342, Concanavalin A Alexa Fluor conjugates, Phalloidin Alexa Fluor conjugates, Wheat Germ Agglutinin Alexa Fluor conjugates, SYTO 14 [13] [15] | Individual stains for specific cellular compartments; allow custom panel configuration |
| Cell Lines | 1321N1 astrocytoma cells, SH-SY5Y neuroblastoma cells [14] | Validation and optimization models for neural cell type identification |
| High-Content Imaging Systems | CellInsight CX7 LZR Pro system, Cellomics systems [15] | Automated microscopy platforms for high-throughput image acquisition of multi-well plates |
| Analysis Software | CellProfiler, Deep learning frameworks (ResNet, CNN) [14] | Open-source and commercial software for image analysis, feature extraction, and classification |

High-content imaging combined with Cell Painting provides a powerful framework for quantitative morphological analysis of complex cellular systems. When applied to mixed neural cultures, this approach enables robust, single-cell classification of neural cell types based on their intrinsic morphotextural fingerprints, even in dense co-cultures where traditional segmentation methods fail. The ability to perform non-destructive, high-throughput quality control of iPSC-derived neural cultures addresses a critical bottleneck in neuroscience research and drug discovery. As deep learning methodologies continue to advance alongside improved staining protocols, morphological profiling promises to become an increasingly valuable tool for characterizing cellular heterogeneity in complex neural systems.

Decoding the Morphotextural Fingerprint of Neural Cells

Application Note

High-content analysis (HCA) represents an advanced technological platform that combines automated microscopy with multi-parametric imaging and analysis to extract quantitative data from cell populations [16] [9]. In the context of neural research, the ability to accurately identify and characterize distinct cell types within mixed neural cultures is crucial for advancing our understanding of neural development, function, and disease mechanisms. Traditional methods for cell type validation, including sequencing, flow cytometry, and immunocytochemistry, are often low in throughput, costly, and destructive [3] [14]. This application note details a robust methodology using high-content image-based morphological profiling to quantitatively and systematically characterize induced pluripotent stem cell (iPSC)-derived mixed neural cultures, achieving exceptional classification accuracy above 96% [3].

The Morphotextural Fingerprint Concept

The term "morphotextural fingerprint" refers to the unique combination of morphological and textural features that can be quantitatively extracted from cellular images to define a specific cell type identity. In neural cell lines, including astrocyte-derived 1321N1 astrocytoma and neural crest-derived SH-SY5Y neuroblastoma cells, this fingerprint manifests as a distinct profile of shape, intensity, and texture metrics across different cellular regions [3]. Representation of standardized feature sets in UMAP space reveals clear separation of neural cell types without replicate bias, demonstrating that cell types can be distinguished across biological replicates based on their unique morphotextural signatures [3] [14]. These fingerprints remain sufficiently distinct even in dense, mixed cultures, enabling reliable cell identity discrimination.

Quantitative Performance Data

Table 1: Performance Comparison of Classification Methods for Neural Cell Identification

| Classification Method | Accuracy | Precision | Recall | Key Advantages | Limitations |

|---|---|---|---|---|---|

| Convolutional Neural Network (CNN) | 96.0 ± 1.8% [14] | High and balanced [14] | High and balanced [14] | Superior accuracy; handles raw image data directly; robust to density variations | "Black box" nature complicates model interpretation |

| Random Forest (RF) | 71.0 ± 1.0% [14] | Imbalanced [14] | Imbalanced (46% misclassification of 1321N1 cells) [14] | Allows feature importance analysis | Poor performance with high-dimensional data; biased feature selection |

Table 2: Culture Density Impact on CNN Classification Accuracy

| Culture Confluency Range | Classification Accuracy | Notes |

|---|---|---|

| 0-80% | No significant decrease [14] | Robust performance across low to high densities |

| 80-95% | Maintained high accuracy [14] | Nucleocentric approach preserves accuracy |

| 95-100% | 92.0 ± 1.7% [14] | Slight decrease due to segmentation challenges |

Table 3: Feature Contributions to Morphotextural Fingerprinting

| Feature Category | Examples | Contribution to Cell Type Separation |

|---|---|---|

| Texture Metrics | Nucleus Channel 3 Energy, Homogeneity [3] [14] | High contribution to UMAP separation |

| Shape Metrics | Cellular Area, Nuclear Area [3] [14] | High contribution to UMAP separation |

| Intensity-related Features | Channel 3 Intensity, Mean/Max/Min Intensity [3] [14] | Less pronounced; more correlated with biological replicate |
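The texture metrics in the table above (energy, homogeneity) are usually derived from a gray-level co-occurrence matrix (GLCM). The following is a minimal sketch of that calculation, not the pipeline used in the cited studies. It assumes an image that has already been quantized to a small number of integer gray levels, and the function names (`glcm`, `angular_second_moment`, `homogeneity`) are illustrative. Conventions differ between tools: some report "energy" as the square root of the angular second moment computed here.

```python
from collections import Counter


def glcm(image, dx=1, dy=0, symmetric=True):
    """Normalized gray-level co-occurrence matrix as {(level_a, level_b): probability}.

    `image` is a list of rows of integer gray levels; (dx, dy) is the pixel offset.
    """
    counts = Counter()
    rows, cols = len(image), len(image[0])
    for r in range(rows):
        for c in range(cols):
            r2, c2 = r + dy, c + dx
            if 0 <= r2 < rows and 0 <= c2 < cols:
                a, b = image[r][c], image[r2][c2]
                counts[(a, b)] += 1
                if symmetric:
                    counts[(b, a)] += 1  # count the pair in both directions
    total = sum(counts.values())
    if total == 0:
        raise ValueError("offset does not fit inside the image")
    return {pair: n / total for pair, n in counts.items()}


def angular_second_moment(p):
    """Sum of squared co-occurrence probabilities (high for uniform texture)."""
    return sum(v * v for v in p.values())


def homogeneity(p):
    """Weights each pair by its closeness to the GLCM diagonal."""
    return sum(v / (1 + (i - j) ** 2) for (i, j), v in p.items())


if __name__ == "__main__":
    # Toy 4-level patch standing in for a quantized nuclear ROI (hypothetical data).
    patch = [
        [0, 0, 1, 1],
        [0, 0, 1, 1],
        [2, 2, 3, 3],
        [2, 2, 3, 3],
    ]
    p = glcm(patch)
    print("ASM:", angular_second_moment(p), "homogeneity:", homogeneity(p))
```

In practice these values would be computed per ROI and per channel, then combined with shape and intensity features into the fingerprint.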

Experimental Workflow

Signaling Pathways in Neural Differentiation

Protocols

Protocol 1: Cell Painting and Staining for Mixed Neural Cultures

Purpose: To fluorescently label multiple cellular compartments for comprehensive morphotextural analysis.

Reagents and Materials:

- Cell painting dyes (various fluorophore-conjugated probes)

- Mixed neural cultures grown on coverslips

- 4% paraformaldehyde (fixative)

- Permeabilization buffer (0.1% Triton X-100 in PBS)

- Blocking solution (1-5% BSA in PBS)

- Mounting medium with DAPI

- Wash buffer (PBS)

Procedure:

- Fixation and Permeabilization: Fix cultures with 4% paraformaldehyde for 15 minutes at room temperature. Permeabilize with 0.1% Triton X-100 in PBS for 10 minutes.

- Blocking: Incubate with blocking solution (1-5% BSA in PBS) for 30-60 minutes to reduce non-specific binding.

- Staining Cocktail Preparation: Prepare cell painting dye cocktail according to manufacturer's instructions. A typical 4-channel confocal imaging setup includes:

- Nuclear stain (e.g., DAPI)

- Cytoplasmic stain (e.g., Phalloidin for F-actin)

- Mitochondrial stain

- Golgi apparatus and endoplasmic reticulum stains

- Staining Incubation: Apply staining cocktail to fixed cultures and incubate overnight at 4°C or for 1-2 hours at room temperature protected from light.

- Washing: Perform three washes with PBS, 5 minutes each with gentle agitation.

- Mounting: Mount coverslips using anti-fade mounting medium. Seal edges with clear nail polish.

- Storage: Store slides at 4°C in the dark until imaging.

Technical Notes: Consistent staining conditions across all samples are critical for comparative analysis. Include appropriate controls for autofluorescence and staining specificity.

Protocol 2: High-content Imaging and Analysis Workflow

Purpose: To acquire and analyze high-content images for morphotextural fingerprint extraction and cell type classification.

Equipment and Software:

- High-content analysis platform (e.g., Thermo Scientific CellInsight CX7 HCA Platform or ArrayScan systems) [16]

- Confocal microscope with automated stage

- Image analysis software (e.g., HCS Studio Cell Analysis Software) [16]

- Computational resources for deep learning (GPU-enabled)

Procedure:

- Instrument Calibration: Calibrate the HCA instrument according to manufacturer specifications. Ensure consistent lighting and focus across all imaging sessions.

- Image Acquisition Setup:

- Configure automated acquisition settings including exposure times, z-stack parameters (if applicable), and field selection.

- Set up plate mapping for systematic sampling across culture conditions.

- Define imaging areas ensuring adequate cell numbers (minimum 500-1000 cells per condition recommended).

- Automated Image Acquisition: Run automated imaging protocol. The CellInsight CX7 platform allows interrogation of multiple sample types with techniques including laser autofocus and confocal acquisition [16].

- Image Pre-processing:

- Perform flat-field correction to account for illumination irregularities.

- Apply background subtraction to enhance signal-to-noise ratio.

- Cell Segmentation:

- Use nuclear markers (DAPI) for primary object identification.

- Apply cytoplasm-based segmentation for whole-cell identification.

- For dense cultures, implement specialized algorithms to separate touching cells.

- Feature Extraction: Extract morphotextural features for each cell across all channels. Key feature categories include:

- Shape features: Area, perimeter, eccentricity, form factor

- Intensity features: Mean, maximum, minimum, standard deviation of pixel intensities

- Texture features: Contrast, correlation, energy, homogeneity (calculated from gray-level co-occurrence matrices)

- Model Training and Classification:

- For the CNN approach: Use image crops centered on individual cells as input to a ResNet architecture.

- Train with equal sampling of cell numbers per class to avoid bias.

- For optimal performance, include at least 5000 training instances per class [14].

- Validate model performance on independent test sets.
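The recommendation to train with equal cell numbers per class can be sketched as a simple downsampling step. This is one possible approach, assuming a flat list of per-cell labels; the function name `balanced_sample` and its parameters are hypothetical, and the cited work may have balanced classes differently.

```python
import random
from collections import Counter, defaultdict


def balanced_sample(labels, per_class=None, seed=0):
    """Return shuffled indices containing the same number of cells for every class.

    If `per_class` is None, every class is downsampled to the size of the
    smallest class.
    """
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    smallest = min(len(indices) for indices in by_class.values())
    n = smallest if per_class is None else per_class
    if n > smallest:
        raise ValueError(f"requested {n} cells per class, smallest class has {smallest}")
    rng = random.Random(seed)  # fixed seed keeps the selection reproducible
    chosen = []
    for label in sorted(by_class):
        chosen.extend(rng.sample(by_class[label], n))
    rng.shuffle(chosen)
    return chosen


if __name__ == "__main__":
    # Hypothetical, deliberately imbalanced label list.
    labels = ["SH-SY5Y"] * 10 + ["1321N1"] * 4
    picked = balanced_sample(labels)
    print(Counter(labels[i] for i in picked))
```

Passing `per_class=5000` would enforce the training-set size suggested above, and raises an error when a class has too few cells.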

Technical Notes: For dense cultures (>80% confluency), employ nucleocentric profiling by using nuclear ROI and immediate periphery as input to maintain classification accuracy [3] [14].
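A nucleocentric input can be illustrated as a crop centered on the nuclear centroid in which everything beyond a small margin around the nucleus is blanked. The sketch below works under stated assumptions: a single-channel image stored as nested lists, a boolean nuclear mask from a prior segmentation step, and hypothetical parameters `half_size` and `margin` (both in pixels). It is not the implementation used in the cited studies.

```python
def nucleocentric_crop(image, nuclear_mask, half_size, margin):
    """Square crop around the nucleus; pixels farther than `margin` from it are set to 0.

    The crop has side length 2 * half_size + 1 and is zero-padded at image borders.
    """
    rows, cols = len(image), len(image[0])
    nucleus = {(r, c) for r in range(rows) for c in range(cols) if nuclear_mask[r][c]}
    if not nucleus:
        raise ValueError("nuclear mask is empty")
    # Centroid of the nuclear ROI, rounded to the nearest pixel.
    center_r = round(sum(r for r, _ in nucleus) / len(nucleus))
    center_c = round(sum(c for _, c in nucleus) / len(nucleus))

    def near_nucleus(r, c):
        # True if any nuclear pixel lies within `margin` pixels (square neighborhood).
        return any(
            (r + dr, c + dc) in nucleus
            for dr in range(-margin, margin + 1)
            for dc in range(-margin, margin + 1)
        )

    crop = []
    for r in range(center_r - half_size, center_r + half_size + 1):
        row = []
        for c in range(center_c - half_size, center_c + half_size + 1):
            inside = 0 <= r < rows and 0 <= c < cols
            row.append(image[r][c] if inside and near_nucleus(r, c) else 0)
        crop.append(row)
    return crop


if __name__ == "__main__":
    # Toy 5x5 image with a one-pixel "nucleus" in the middle (hypothetical data).
    image = [[7] * 5 for _ in range(5)]
    mask = [[r == 2 and c == 2 for c in range(5)] for r in range(5)]
    for row in nucleocentric_crop(image, mask, half_size=2, margin=1):
        print(row)
```

For multi-channel data, the same mask and crop window would be applied to every channel so that the channels stay registered.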

Protocol 3: Differentiation of hiPSCs into Mixed Neural Cultures

Purpose: To generate mixed cultures of neuronal and glial cells from human induced pluripotent stem cells for morphotextural analysis.

Reagents and Materials:

- hiPSCs (e.g., IMR90-derived hiPSCs)

- mTeSR1 medium with supplements

- hESC-qualified basement membrane matrix

- Neural induction medium (NRI)

- Neuronal differentiation medium (ND)

- Laminin for coating

- Accutase or collagenase for dissociation

Procedure:

- hiPSC Maintenance:

- Culture hiPSCs on qualified matrix-coated dishes in mTeSR1 medium.

- Passage colonies when they reach appropriate size (approximately 1 mm diameter) using manual cutting or enzymatic dissociation.

- Embryoid Body (EB) Formation (Days 0-1):

- Cut undifferentiated hiPSC colonies into fragments of approximately 200 × 200 µm using a syringe with 30G needle.

- Transfer fragments to low-attachment plates to allow EB formation in neural induction medium.

- Neuroectodermal Differentiation (Days 1-7):

- Plate EBs on laminin-coated dishes in neuroepithelial induction (NRI) medium.

- Culture for 7 days, monitoring rosette formation (nestin+, β-III-tubulin+ structures).

- Neural Stem Cell (NSC) Expansion (Days 7-14):

- Mechanically isolate rosettes and dissociate into single cells.

- Replate on laminin-coated dishes in neural induction medium for NSC expansion.

- Terminal Differentiation (Days 14-28):

- Switch to neuronal differentiation medium to promote maturation into mixed neuronal and glial cultures.

- Culture for an additional 14 days, with medium changes every 2-3 days.

- Characterization:

- Validate culture composition using immunocytochemistry for neuronal (NF200) and glial (GFAP) markers.

- Perform functional characterization through calcium imaging or other functional assays.

Technical Notes: The entire differentiation process takes approximately 28 days to establish mature mixed neural cultures suitable for morphotextural fingerprint analysis [17].

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Reagents and Materials for Morphotextural Analysis

| Reagent/Material | Function | Example Applications |

|---|---|---|

| Cell Painting Dye Cocktail | Multi-compartment cellular staining | Simultaneous labeling of nucleus, cytoplasm, mitochondria, Golgi, and ER [3] |

| HCS NuclearMask Stains | Nuclear segmentation and identification | Primary object identification in high-content analysis [9] |

| CellROX Reagents | Oxidative stress measurement | Detection of reactive oxygen species in neural cells [16] |

| Click-iT EdU HCS Assays | Cell proliferation analysis | S-phase identification and cell cycle analysis [9] |

| Alexa Fluor Conjugates | High-quality fluorescence labeling | Immunofluorescence and specific protein detection [9] |

| LIVE/DEAD Staining Kits | Cell viability assessment | Viability quantification in high-content screens [9] |

| BacMam Gene Delivery | Targeted fluorescent protein expression | Organelle-specific labeling with Organelle Lights reagents [9] |

| FluxOR Assay Kits | Ion channel screening | Potassium ion channel function analysis [9] |

The application of morphotextural fingerprinting for neural cell identification represents a significant advancement in high-content analysis, enabling unbiased, quantitative classification of cell types in complex mixed cultures. The methodology outlined in this application note, combining cell painting with convolutional neural networks, achieves exceptional classification accuracy above 96% [3] [14], significantly outperforming traditional machine learning approaches. This approach maintains robust performance even in dense cultures through nucleocentric profiling and provides a cost-effective, scalable solution for quality control in iPSC-derived neural culture models. As the field progresses, the integration of these methodologies with advanced 3D culture systems and organoid models will further enhance their relevance for neurodevelopmental studies, disease modeling, and drug discovery applications.

From Pixels to Predictions: Implementing Deep Learning for Automated Cell Classification

High-content imaging represents a powerful paradigm for quantifying cell type and state in complex biological systems. For researchers working with dense, mixed neural cultures derived from induced pluripotent stem cells (iPSCs), this approach is particularly valuable for quality control, as traditional methods like flow cytometry and immunocytochemistry are often low-throughput, costly, and destructive [18]. The integration of specific staining protocols with multi-channel imaging and advanced computational analysis creates a robust framework for unbiased cell identification, achieving classification accuracies exceeding 96% in validation studies [18] [3]. This application note details the essential staining techniques, dye selection, and imaging workflows that underpin successful high-content imaging for neural cell identification.

Staining and Dye Selection for High-Content Imaging

The foundation of effective image-based profiling lies in the strategic selection of fluorescent stains that highlight distinct subcellular compartments. The table below summarizes key dyes and their applications.

Table 1: Essential Stains and Dyes for High-Content Imaging of Neural Cultures

| Reagent | Staining Target | Excitation/Emission (nm) | Key Applications | Notes and Considerations |

|---|---|---|---|---|

| Hoechst 33342 [19] | dsDNA (Nucleus) | ~350/461 | Nuclear counterstain; identification of apoptotic cells (condensed nuclei); cell cycle studies. | Cell-permeant. Known mutagen; handle with care. Fluorescence is quenched by BrdU. |

| Acridine Orange (AO) [20] | Nucleic acids (DNA/RNA) and acidic compartments | Varies by complex | Live-cell imaging; phenotypic profiling; visualization of nuclei and cytoplasmic organelles. | Metachromatic dye; offers a two-channel readout. Enables dynamic, real-time measurements. |

| Cell Painting Dyes [18] | Multiple compartments (e.g., nucleus, cytoplasm, mitochondria) | Multi-channel | Creating a morphological fingerprint for cell type/state identification. | Typically a 4-6 channel assay. Used to distinguish cell types with high fidelity. |

| GCaMP6f [21] | Intracellular Calcium (Ca²⁺) | ~488/510 (GFP-based) | Monitoring functional neuronal activity and maturation in live cells. | Genetically Encoded Calcium Indicator (GECI). Use with neuron-specific promoters (e.g., hSyn) for specificity. |

| LNA/DNA Imaging Probes [22] | Specific proteins (via antibody conjugation) | Varies by fluorophore | Highly multiplexed protein imaging (confocal and super-resolution). | Enables sequential multiplexing of dozens of targets (e.g., synaptic proteins) in the same sample. |

Detailed Experimental Protocols

Protocol 1: Nuclear Staining with Hoechst 33342 for Fixed Cells

This protocol is ideal for providing a fundamental nuclear counterstain in fixed-cell imaging workflows [19].

You will need:

- Cells cultured appropriately for microscopy

- Hoechst 33342, trihydrochloride, trihydrate

- Phosphate-buffered saline (PBS)

- Fluorescence microscope with DAPI filter set

Staining Procedure:

- Prepare Hoechst Stock Solution: Dissolve Hoechst 33342 in deionized water to a final concentration of 10 mg/mL (16.23 mM). Sonicate if necessary to dissolve completely. Aliquot and store at 2–6°C for up to 6 months or at ≤ –20°C for longer storage [19].

- Prepare Staining Solution: Dilute the Hoechst stock solution 1:2,000 in PBS. For example, add 5 µL of stock to 10 mL of PBS.

- Stain Cells: Remove culture medium from cells and add sufficient staining solution to cover them completely.

- Incubate: Protect from light and incubate for 5–10 minutes at room temperature.

- Wash and Image: Remove the staining solution. Wash the cells 3 times with PBS. Image the cells using a microscope equipped with a DAPI filter set.

Protocol Tips:

- Hoechst is a known mutagen and should be handled with appropriate care.

- Dissolving the dye directly in PBS for the stock solution is not recommended; use deionized water instead.

- Over-staining can result in a green haze (emission ~510-540 nm) from unbound dye; optimize concentration for your system [19].
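The dilution arithmetic in this protocol can be checked with a few lines of code. The sketch below assumes a molecular weight of 615.99 g/mol for Hoechst 33342 trihydrochloride trihydrate, a value not stated in this protocol but consistent with the 16.23 mM quoted for the 10 mg/mL stock. The function names are illustrative.

```python
# Assumption: molecular weight of Hoechst 33342 trihydrochloride trihydrate.
HOECHST_MW_G_PER_MOL = 615.99


def stock_volume_ul(final_volume_ml, dilution_factor):
    """Volume of stock (µL) needed for a 1:dilution_factor dilution."""
    return final_volume_ml * 1000 / dilution_factor


def working_concentration_ug_per_ml(stock_mg_per_ml, dilution_factor):
    """Concentration of the staining solution (µg/mL) after dilution."""
    return stock_mg_per_ml * 1000 / dilution_factor


def molarity_mm(mg_per_ml, mw_g_per_mol):
    """Convert a mass concentration (mg/mL, i.e. g/L) to millimolar."""
    return mg_per_ml / mw_g_per_mol * 1000


if __name__ == "__main__":
    print("Stock per 10 mL PBS (µL):", stock_volume_ul(10, 2000))
    print("Working concentration (µg/mL):", working_concentration_ug_per_ml(10, 2000))
    print("Stock molarity (mM):", round(molarity_mm(10, HOECHST_MW_G_PER_MOL), 2))
```

With the values from the protocol, this reproduces the 5 µL of stock per 10 mL of PBS and the 16.23 mM stock molarity, and gives a working concentration of 5 µg/mL.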

Protocol 2: Live-Cell Morphological Profiling with Acridine Orange

This protocol enables image-based phenotypic profiling in live cells, allowing for the assessment of dynamic processes [20].

You will need:

- Live cells in an appropriate culture medium and vessel

- Acridine Orange (AO) stock solution

- Live-cell imaging compatible microscope with environmental control

Staining and Imaging Procedure:

- Prepare Staining Solution: Dilute Acridine Orange in pre-warmed culture medium or buffer to the working concentration (specific concentration should be optimized for the cell type, e.g., 1-5 µg/mL).

- Stain Cells: Replace the culture medium with the AO staining solution.

- Incubate: Incubate for 15-30 minutes at 37°C and 5% CO₂, protected from light.

- Wash (Optional): For reduced background, the staining solution can be replaced with fresh, pre-warmed medium. However, the dye can also be imaged directly in the staining solution.

- Image: Immediately image live cells using a fluorescence microscope. AO stains nuclei and cytoplasmic organelles, providing a two-channel readout.

Protocol Tips:

- This method is compatible with high-throughput screening and dose-response analyses.

- It is particularly useful for detecting subtle, sublethal phenotypic changes in toxicology and drug discovery [20].

Workflow for Multiplexed Cell Type Identification

The following diagram illustrates the integrated workflow for staining, imaging, and computational analysis for cell identity determination, synthesizing the protocols from the cited research.

The Scientist's Toolkit: Research Reagent Solutions

Successful implementation of these protocols relies on a core set of reagents and tools.

Table 2: Essential Research Reagent Solutions for High-Content Imaging Assays

| Item | Function/Description | Example Use Case |

|---|---|---|

| Hoechst 33342 [19] | Cell-permeant nuclear counterstain for fixed or live cells. | Distinguishing individual cells in dense cultures; identifying condensed apoptotic nuclei. |

| Acridine Orange [20] | Live-cell dye for nucleic acids and acidic compartments. | Phenotypic profiling and dose-response analysis in viable neural cultures. |

| Cell Painting Kit [18] | A standardized panel of dyes targeting multiple organelles to generate a morphological "fingerprint." | Unbiased identification of cell types (e.g., neurons vs. progenitors) in mixed cultures. |

| GCaMP6f AAV (hSyn promoter) [21] | Genetically encoded calcium indicator for monitoring neuronal activity. | Specific measurement of functional maturation in human iPSC-derived neurons over multiple time points. |

| LNA/DNA-PRISM Probes [22] | Diffusible nucleic acid imaging probes for highly multiplexed protein imaging. | Sequential imaging of dozens of synaptic and cytoskeletal proteins in the same neuronal sample. |

| Convolutional Neural Network (CNN) [18] [3] | Deep learning model for high-accuracy cell classification based on raw image crops. | Achieving >96% accuracy in distinguishing neuroblastoma from astrocytoma cells in mixed cultures. |

Data Analysis and Validation

Quantitative Analysis of Staining and Classification

The efficacy of staining and imaging protocols is ultimately quantified through downstream analytical outputs.

Table 3: Quantitative Outcomes from Featured Imaging and Staining Approaches

| Method / Reagent | Key Quantitative Output | Reported Performance | Technical Notes |

|---|---|---|---|

| Hoechst 33342 [19] | Nuclear count, morphology (area, roundness), intensity. | Standard for nuclear segmentation. | Fluorescence intensity can be used for ploidy and cell cycle analysis. |

| Cell Painting + CNN [18] [3] | Single-cell classification accuracy. | >96% accuracy distinguishing cell types in mixed neural cultures. | Outperforms Random Forest classifiers (F-score: 0.75), which rely on hand-crafted features. |

| LNA-PRISM [22] | Number of protein targets imaged in a single sample. | Up to 13-channel confocal imaging of synaptic and cytoskeletal proteins. | Enables correlation analysis of 66 protein co-expression profiles from thousands of synapses. |

| GCaMP6f (AAV2/retro-hSyn) [21] | Specific neuronal transduction and calcium event detection. | Efficient for multi-time point imaging; specific to neurons in mixed cultures. | Allows for functional assessment of network activity during neurodifferentiation. |

Computational Analysis Workflow

The transition from raw images to cell identity involves a critical computational pipeline, the logic of which is shown below.

Convolutional Neural Networks (CNNs) have proven superior to traditional methods like Random Forest classification, which achieved an F-score of only 0.75, largely due to misclassification of one cell type [18]. CNNs use isotropic image crops centered on individual cell nuclei, blanking out the immediate surroundings, to achieve F-scores of 0.96 [18] [3]. Techniques like Grad-CAM can be applied to visualize the morphological features—such as cell borders, nuclear, and nucleolar signals—that the network uses for classification, adding a layer of interpretability [18].
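To see how misclassifying one cell type pulls down the F-score, precision, recall, and F-score can be computed per class from a confusion matrix. The sketch below uses illustrative counts: only the 46% misclassification rate of 1321N1 cells comes from the text, while the remaining numbers are hypothetical and do not reproduce the published confusion matrix.

```python
def per_class_metrics(confusion):
    """Precision, recall and F1 per class from confusion[true_label][predicted_label]."""
    classes = list(confusion)
    metrics = {}
    for cls in classes:
        tp = confusion[cls].get(cls, 0)
        fn = sum(n for pred, n in confusion[cls].items() if pred != cls)
        fp = sum(confusion[other].get(cls, 0) for other in classes if other != cls)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        metrics[cls] = {"precision": precision, "recall": recall, "f1": f1}
    return metrics


def macro_f1(metrics):
    """Unweighted mean of the per-class F1 scores."""
    return sum(m["f1"] for m in metrics.values()) / len(metrics)


def accuracy(confusion):
    """Fraction of all cells assigned to their true class."""
    correct = sum(confusion[cls].get(cls, 0) for cls in confusion)
    total = sum(sum(row.values()) for row in confusion.values())
    return correct / total


if __name__ == "__main__":
    # Hypothetical counts: 46 of 100 1321N1 cells are called SH-SY5Y.
    confusion = {
        "1321N1": {"1321N1": 54, "SH-SY5Y": 46},
        "SH-SY5Y": {"1321N1": 4, "SH-SY5Y": 96},
    }
    metrics = per_class_metrics(confusion)
    for cls, m in metrics.items():
        print(cls, {k: round(v, 3) for k, v in m.items()})
    print("macro F1:", round(macro_f1(metrics), 3), "accuracy:", accuracy(confusion))
```

Reporting per-class values alongside the aggregate score makes this kind of imbalance visible, which a single accuracy figure can hide.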

Convolutional Neural Networks (CNNs) vs. Traditional Machine Learning

For researchers in cell type identification, the choice between Convolutional Neural Networks (CNNs) and traditional machine learning is pivotal. Evidence from high-content imaging studies demonstrates that CNNs consistently achieve superior classification accuracy, exceeding 96% in distinguishing neural cell types in dense, mixed cultures [3] [23]. Traditional methods, relying on handcrafted morphotextural features, typically achieve lower performance (e.g., an F-score of ~0.75) and struggle with generalization [3]. The primary trade-off involves data requirements: CNNs require large, varied training datasets to perform optimally, whereas traditional methods with handcrafted features can be more effective with limited data [24]. This application note provides a structured comparison and detailed protocols to guide the selection and implementation of these methods for robust, automated quality control in neural culture research.

Quantitative Performance Comparison

The table below summarizes key performance metrics from relevant studies, highlighting the comparative effectiveness of CNNs and traditional machine learning in biological image analysis.

Table 1: Performance Comparison of CNNs vs. Traditional Machine Learning in Image-Based Classification

| Application Context | Traditional ML (Algorithm, Accuracy/Score) | CNN (Architecture, Accuracy/Score) | Reference |

|---|---|---|---|

| Cell Type Identification in Mixed Neural Cultures | Random Forest (F-score: 0.75) | ResNet-based CNN (F-score: 0.96) | [3] [23] |

| Deep Vein Thrombosis on CT Venography | Extreme Gradient Boost (AUC: 0.975) | VGG16 (AUC: 0.982) | [25] |

| Liver MR Image Adequacy Assessment | Random Forest with Handcrafted Features (Performance superior with small sample sizes) | CNN (Performance superior with large sample sizes; combined approach best) | [24] |

| Ultrasound Breast Lesion Classification | Multiple Traditional Classifiers with Handcrafted Features (Performance lower than deep learning) | Pre-trained CNNs (e.g., ResNet, Inception; Accuracy: ~85-88%) | [26] |

Detailed Experimental Protocols

Protocol 1: Cell Type Identification via CNN-Based Morphological Profiling

This protocol leverages a Cell Painting assay and a ResNet-based CNN for high-accuracy cell classification in dense neural cultures [3] [23].

Sample Preparation and Staining (Cell Painting Assay)

- Culture Setup: Prepare pure and mixed cultures of the target cell types (e.g., iPSC-derived neurons, progenitors, microglia) as well as benchmark cell lines (e.g., SH-SY5Y, 1321N1).

- Staining: Label cells with a standard 4- or 6-channel Cell Painting cocktail. A typical setup includes:

- Hoechst 33342: Nuclei.

- Phalloidin: F-actin (cytoskeleton).

- Concanavalin A: Glycoproteins, primarily marking the endoplasmic reticulum.

- Wheat Germ Agglutinin: Golgi and plasma membrane.

- SYTO 14: Nucleoli and cytoplasmic RNA.

High-Content Image Acquisition

- Image the stained cultures using a high-content confocal microscope.

- Acquire images from all relevant channels with consistent exposure settings across all samples and replicates to ensure reproducibility.

Image Preprocessing and Single-Cell Isolation

- Isotropic Crop Generation: For each cell, generate a standardized image crop (e.g., 60 µm x 60 µm) centered on the nucleus [23].

- Data Augmentation: Apply random transformations (rotations, flips, minor intensity variations) to the training dataset to improve model robustness and prevent overfitting.
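The augmentation step can be sketched without any imaging library. The example below assumes a single-channel, square crop stored as nested lists with 8-bit intensities. The function names and the ±10% intensity range are illustrative choices, not values from the cited studies.

```python
import random


def rotate90(crop):
    """Rotate a 2D crop by 90 degrees clockwise."""
    return [list(row) for row in zip(*crop[::-1])]


def flip_horizontal(crop):
    """Mirror a 2D crop left to right."""
    return [row[::-1] for row in crop]


def scale_intensity(crop, factor, max_value=255):
    """Multiply intensities by `factor`, clipping to the valid range."""
    return [[min(max_value, max(0, round(v * factor))) for v in row] for row in crop]


def augment(crop, rng, max_intensity_change=0.1):
    """Apply a random rotation (multiple of 90°), an optional flip, and a small intensity change."""
    out = [list(row) for row in crop]
    for _ in range(rng.randrange(4)):
        out = rotate90(out)
    if rng.random() < 0.5:
        out = flip_horizontal(out)
    factor = 1 + rng.uniform(-max_intensity_change, max_intensity_change)
    return scale_intensity(out, factor)


if __name__ == "__main__":
    rng = random.Random(42)  # seeded for reproducibility
    crop = [[10, 20], [30, 40]]  # hypothetical toy crop
    for _ in range(3):
        print(augment(crop, rng))
```

For multi-channel crops, the same geometric transform should be applied to every channel so that the channels stay aligned. Augmentation is applied to the training set only.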

Convolutional Neural Network Training & Classification

- Architecture Selection: Implement a standard deep learning architecture such as ResNet-50 or ResNet-152 [3] [25].

- Model Training: Train the network using the preprocessed image crops. Use a standard 80/10/10 split for training, validation, and testing.

- Performance Validation: Assess the model using metrics like F-score, precision, and recall on the held-out test set. Use Gradient-weighted Class Activation Mapping (Grad-CAM) to visualize regions of the image most influential for the classification decision, adding interpretability [23].
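An 80/10/10 split of single-cell crops can be sketched as a shuffled partition of indices. This is a minimal example with a hypothetical function name. It splits at the level of individual crops, whereas splitting by well or biological replicate may be preferable when crops from the same well are strongly correlated.

```python
import random


def split_indices(n_items, fractions=(0.8, 0.1, 0.1), seed=0):
    """Shuffle indices 0..n_items-1 and partition them into train/validation/test lists."""
    if abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("fractions must sum to 1")
    indices = list(range(n_items))
    random.Random(seed).shuffle(indices)  # fixed seed for a reproducible split
    n_train = int(n_items * fractions[0])
    n_val = int(n_items * fractions[1])
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]  # remainder goes to the test set
    return train, val, test


if __name__ == "__main__":
    train, val, test = split_indices(1000)
    print(len(train), len(val), len(test))
```

The test indices should be set aside before any augmentation or hyperparameter tuning so that the reported metrics reflect unseen cells.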

Protocol 2: Cell Classification Using Traditional Machine Learning with Handcrafted Features

This protocol is suitable for scenarios with limited training data, where handcrafted features can provide a strong baseline performance [3] [24].

Sample Preparation, Staining, and Image Acquisition

- Follow the "Sample Preparation and Staining" and "High-Content Image Acquisition" steps of Protocol 1.

Cell Segmentation and Feature Extraction

- Cell Segmentation: Use a segmentation algorithm (e.g., marker-controlled watershed) on the nuclear stain channel to identify individual cells [27].

- Region of Interest (ROI) Definition: For each segmented cell, define key ROIs: Nucleus, Cytoplasm, and Whole Cell.

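- Handcrafted Feature Calculation: For each ROI and imaging channel, calculate a suite of morphotextural features. These typically include shape descriptors (e.g., area, perimeter, eccentricity), intensity statistics (mean, maximum, minimum, standard deviation), and texture metrics (e.g., contrast, energy, homogeneity derived from gray-level co-occurrence matrices).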

Classifier Training and Validation

- Data Preparation: Compile the extracted features into a structured data matrix. Normalize the feature values to have zero mean and unit variance.

- Model Training: Train a traditional machine learning classifier, such as a Random Forest, on the normalized feature matrix.

- Performance Assessment: Validate the classifier performance on a separate test set using standard metrics.
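The normalization to zero mean and unit variance can be sketched as a z-score transform whose parameters are estimated on the training features only and then reused for the test set. The function names below are illustrative. A library scaler would normally be used in practice.

```python
from statistics import mean, pstdev


def fit_scaler(rows):
    """Estimate per-feature mean and standard deviation from training rows."""
    columns = list(zip(*rows))
    means = [mean(col) for col in columns]
    # Constant features have zero spread; use 1.0 to avoid division by zero.
    stds = [pstdev(col) or 1.0 for col in columns]
    return means, stds


def transform(rows, means, stds):
    """Apply the z-score transform using previously fitted parameters."""
    return [[(v - m) / s for v, m, s in zip(row, means, stds)] for row in rows]


if __name__ == "__main__":
    # Hypothetical feature matrix: columns could be nuclear area and texture energy.
    train = [[120.0, 0.30], [150.0, 0.50], [180.0, 0.40]]
    test = [[165.0, 0.45]]
    means, stds = fit_scaler(train)
    print(transform(train, means, stds))
    print(transform(test, means, stds))
```

Fitting the scaler on the training split alone keeps information from the test cells out of the model. Tree-based classifiers such as Random Forest are largely insensitive to feature scaling, so this step matters more for distance-based or linear models.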

Workflow Visualization

The following diagram illustrates the logical and procedural relationship between the two protocols for cell type identification.

The Scientist's Toolkit: Research Reagent Solutions

The table below details essential materials and reagents for implementing the imaging and analysis workflows described in this note.

Table 2: Key Research Reagents and Materials for High-Content Imaging and Analysis

| Item | Function/Application | Example Use Case |

|---|---|---|

| Cell Painting Assay Kit | A standardized set of fluorescent dyes for multiplexed morphological profiling. | Staining mixed neural cultures to generate rich morphological data for CNN or feature-based classification [3] [23]. |

| Induced Pluripotent Stem Cells (iPSCs) | Patient-specific source material for generating relevant human neural cell types. | Differentiating into neurons, astrocytes, and microglia to create physiologically relevant mixed culture models [3] [23]. |

| High-Content Confocal Microscope | Automated imaging system for acquiring high-resolution, multi-channel z-stack images. | Capturing the detailed morphology of individual cells in dense, mixed cultures for downstream analysis [3] [27]. |

| Marker-Controlled Watershed Algorithm | Image processing technique for segmenting touching cells in an image. | Delineating individual cells in dense cultures based on nuclear staining prior to feature extraction [27]. |

| Pre-trained CNN Models (ResNet, VGG) | Deep learning models with pre-learned feature detectors, adaptable via transfer learning. | Accelerating and improving the training of cell classification models, especially with datasets of moderate size [25] [26]. |

Within the field of neuroscience research, particularly in the study of human neurological disorders and the development of novel therapeutics, the adoption of induced pluripotent stem cell (iPSC)-derived neural cultures has become pivotal. These models recapitulate the cellular heterogeneity of the human brain, but this same complexity presents a significant challenge: the need for precise and reliable identification of constituent cell types, such as neurons, astrocytes, and microglia [3]. Traditional validation methods like flow cytometry or immunocytochemistry are often low-throughput, costly, and destructive, hindering rapid and routine quality control [3].

This application note details a case study demonstrating how high-content imaging and morphological profiling can overcome these limitations. By implementing a method based on cell painting and convolutional neural networks (CNNs), researchers achieved exceptional classification accuracy exceeding 96% for identifying individual cell types within dense, mixed neural cultures [3] [28]. This approach provides a fast, affordable, and scalable solution for quantifying cell composition, thereby enhancing experimental reproducibility and supporting more reliable preclinical screening [3].

Experimental Workflow & Key Findings

The study established an unbiased workflow for cell type identification by combining multiplexed fluorescent imaging with advanced computational analysis. The process begins with the labeling of cultured cells using a modified cell painting (CP) assay, which employs a panel of simple organic dyes to reveal a wealth of morphological information [3]. After high-content confocal imaging, the resulting data is processed through a deep learning pipeline. This involves cell segmentation to identify individual cells in dense cultures, followed by cell type classification using a ResNet-based convolutional neural network (CNN) [3]. This tiered strategy allows for the precise discrimination of not only broad cell types but also distinct cell states, such as activated versus non-activated microglia [3].

Key Quantitative Findings

The implemented methodology was rigorously benchmarked and validated, yielding several key findings with compelling quantitative results, as summarized in the table below.

Table 1: Summary of Key Experimental Findings and Performance Metrics

| Experimental Scenario | Methodology | Key Finding | Reported Accuracy |
|---|---|---|---|
| Benchmarking on Cell Lines [3] | Cell Painting + CNN on SH-SY5Y (neuroblastoma) and 1321N1 (astrocytoma) co-cultures. | Unequivocal discrimination of two distinct neural cell lineages. | >96% classification accuracy |
| Analysis of Dense Cultures [3] | Iterative data erosion, focusing on the nuclear region and its immediate environment. | Regional analysis preserved high prediction accuracy even in very dense cultures. | Equally high accuracy vs. whole-cell analysis |
| iPSC-Differentiation Status [3] | Cell-based profiling of postmitotic neurons vs. neural progenitors. | Significantly outperformed classification based on population-level time in culture. | 96% (cell-based) vs. 86% (time-based) |
| Identification of Microglia [3] | Tiered classification strategy in mixed iPSC-derived neuronal cultures. | Unequivocal discrimination of microglia from neurons; further distinction of microglial reactivity state. | Unequivocal discrimination (high accuracy), lower accuracy for activation state |

A critical insight from the study was that a regionally restricted cell profiling approach, which uses inputs containing the nucleus and its immediate surroundings, achieved classification accuracy as high as an analysis of the whole cell in semi-confluent cultures. Furthermore, this restricted input preserved prediction accuracy exceptionally well in very dense cultures where whole-cell segmentation is challenging [3]. When applied to iPSC-derived neural cultures, this morphological single-cell profiling significantly outperformed a simpler classification based on the time the population had spent in culture, achieving a 96% accuracy versus 86%, respectively [3]. This underscores the power of a single-cell resolution approach over population-level assumptions.
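The regionally restricted input described above can be sketched as a fixed-size crop centered on each nucleus. This is a minimal illustration, not the study's implementation: the function name `nucleus_centered_crop`, the crop size, and the number of channels are assumptions chosen for the example, and the centroid is assumed to come from an upstream segmentation step.

```python
import numpy as np

def nucleus_centered_crop(image, centroid, size):
    """Return a (channels, size, size) crop centered on a nucleus centroid.

    image    : array of shape (channels, height, width)
    centroid : (row, col) of the nucleus, e.g. taken from a segmentation mask
    size     : side length of the square crop in pixels (hypothetical value)
    """
    half = size // 2
    row, col = int(round(centroid[0])), int(round(centroid[1]))
    # Zero-pad the spatial axes so crops near the image border keep a fixed shape.
    padded = np.pad(image, ((0, 0), (half, half), (half, half)), mode="constant")
    # After padding, original pixel (row, col) sits at (row + half, col + half),
    # so a window starting at (row, col) in padded coordinates is centered on it.
    return padded[:, row:row + size, col:col + size]

# Hypothetical 4-channel field of view with one bright pixel marking a nucleus.
image = np.zeros((4, 100, 100), dtype=np.float32)
image[:, 10, 95] = 1.0
crop = nucleus_centered_crop(image, centroid=(10, 95), size=32)
```

Because every crop has the same shape regardless of how crowded the field is, inputs like this can be batched directly for a CNN without depending on accurate whole-cell boundaries.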

Furthermore, the CNN-based classifier demonstrated superior performance compared to traditional machine learning models. In benchmark tests, a Random Forest (RF) classifier using hand-crafted morphotextural features achieved a comparatively poor F-score of 0.75, largely due to a 46% misclassification rate of one cell type. In contrast, the ResNet CNN surpassed this, enabling the high classification accuracy central to this case study's findings [3].
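To make the link between a per-class misclassification rate and the F-score concrete, the sketch below computes precision, recall, and F-score from confusion counts. The counts are hypothetical and are not taken from the study; they only illustrate how heavy misclassification of one class lowers the F-scores of both classes in a two-class problem.

```python
def f_score(tp, fp, fn):
    """F-score (harmonic mean of precision and recall) from confusion counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

# Hypothetical two-class example with 100 cells per class:
# class A: 54 predicted correctly, 46 misclassified as B
# class B: 95 predicted correctly, 5 misclassified as A
f_a = f_score(tp=54, fp=5, fn=46)   # errors on A count as false negatives for A
f_b = f_score(tp=95, fp=46, fn=5)   # the same errors count as false positives for B
macro_f = (f_a + f_b) / 2
print(f"F(A)={f_a:.3f}  F(B)={f_b:.3f}  macro={macro_f:.3f}")
```

The same misclassified cells reduce recall for one class and precision for the other, which is why a single poorly separated cell type can pull down the overall score.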

Detailed Protocols

Cell Painting Assay for Mixed Neural Cultures

This protocol describes the process for staining mixed neural cultures to generate rich morphological data for subsequent image analysis and cell classification [3].

Materials and Reagents

Table 2: Key Research Reagent Solutions for Cell Painting Assay

| Item | Function / Explanation |
|---|---|
| Neural Culture Medium | Supports the survival and health of mixed neural cultures during the assay. Typically based on Neurobasal-A or similar, supplemented with B-27 [29] [30]. |
| Cell Painting Dye Kit | A multiplexed set of fluorescent dyes that target specific cellular compartments (e.g., nuclei, endoplasmic reticulum, Golgi apparatus, cytoskeleton, mitochondria) to generate a morphological "fingerprint" [3]. |
| Formaldehyde (4%) | Fixes the cells, preserving cellular structures and morphology at the time of fixation for subsequent staining and imaging. |
| Triton X-100 | A detergent used to permeabilize the cell membrane, allowing fluorescent dyes to access intracellular targets. |
| Phosphate-Buffered Saline (PBS) | Used for washing steps to remove excess reagents and reduce background fluorescence. |
| Glass-Bottom Culture Plates | Optimal for high-resolution confocal microscopy, providing superior optical clarity for image acquisition. |

Step-by-Step Procedure

- Culture Preparation: Plate mixed neural cultures (e.g., iPSC-derived neurons, astrocytes, and microglia) in glass-bottom multi-well plates. Allow cells to adhere and mature under appropriate culture conditions.

- Fixation:
  - Aspirate the culture medium.
  - Gently wash the cells once with pre-warmed PBS.
  - Add 4% formaldehyde in PBS and incubate for 15-20 minutes at room temperature.
  - Aspirate the fixative and wash the cells twice with PBS.

- Permeabilization and Blocking:
  - Incubate the fixed cells with a PBS solution containing 0.1% Triton X-100 for 15 minutes.
  - Aspirate the permeabilization solution and wash once with PBS.
  - (Optional) Incubate with a blocking solution (e.g., 1-2% normal goat serum in PBS) for 30 minutes to reduce non-specific staining.

- Cell Painting Staining:
  - Prepare the cocktail of fluorescent dyes according to the established Cell Painting protocol [3].
  - Aspirate the PBS (or blocking solution) from the wells and add the dye cocktail.
  - Incubate in the dark for 30 minutes at room temperature.
  - Aspirate the dye solution and wash the cells thoroughly three times with PBS, ensuring all unbound dye is removed.

- Storage and Imaging: Add a small volume of PBS to prevent the cells from drying out. Seal the plate to protect from light. Image the plates as soon as possible using a high-content or confocal microscope, acquiring images in all relevant fluorescence channels [3].

Image Analysis and Cell Classification Pipeline

This protocol covers the computational workflow for segmenting cells and classifying cell types based on the acquired Cell Painting images.

Computational Tools and Environment

- Software Environment: A standard environment like Python (with Anaconda distribution) is used for running the analysis pipeline [3].

- Deep Learning Framework: Use a framework such as PyTorch or TensorFlow to implement the ResNet architecture for classification.

- Image Analysis Libraries: Libraries like CellProfiler or DeepCell can be used for initial image preprocessing and segmentation.

- High-Performance Computing: Access to a GPU cluster is highly recommended to reduce the time required for training the CNN model.

Step-by-Step Procedure

- Image Preprocessing: Apply standard preprocessing steps to the multi-channel images, such as flat-field correction to compensate for uneven illumination, and background subtraction.

- Cell Segmentation:
  - Use a deep learning-based segmentation model (e.g., a pre-trained U-Net or a model from DeepCell) to identify individual cell boundaries, even in dense regions.
  - The model generates a mask that outlines each cell (or nuclear region, per the regional restriction finding).

- Feature Extraction & Dataset Creation:
  - For a CNN approach, the input is typically the multi-channel image crops of individual cells, as defined by the segmentation masks.
  - The dataset is split into training, validation, and test sets, ensuring that cells from the same biological replicate are kept together to prevent data leakage.

- CNN Model Training:
  - A ResNet architecture (e.g., ResNet50) is adapted for the multi-channel input.
  - The model is trained on the training set using the cell type labels as the ground truth. The validation set is used to monitor for overfitting.
  - Data augmentation techniques (e.g., rotations, flips, minor contrast changes) are applied to improve model robustness.

- Cell Type Classification and Validation:
  - The trained model is used to predict cell types on the held-out test set.
  - Performance is evaluated by calculating accuracy, precision, recall, and F-score.
  - Predictions can be validated against known markers via immunofluorescence on a separate set of cultures [3].
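The leakage-safe split mentioned in the dataset creation step can be sketched as follows. This is one possible approach under stated assumptions, not the study's code: the function name `split_by_replicate`, the 70/15/15 fractions, and the replicate labels are all hypothetical. The idea is to assign whole biological replicates to a split, then collect the indices of the cells belonging to each.

```python
import random

def split_by_replicate(replicate_ids, fractions=(0.7, 0.15, 0.15), seed=0):
    """Assign whole replicates to train/val/test and return cell indices per split.

    replicate_ids : one replicate label per cell crop
    fractions     : hypothetical train/val/test fractions, applied to replicates
    """
    unique = sorted(set(replicate_ids))
    rng = random.Random(seed)          # fixed seed keeps the split reproducible
    rng.shuffle(unique)
    n_train = max(1, round(fractions[0] * len(unique)))
    n_val = max(1, round(fractions[1] * len(unique)))
    groups = {
        "train": set(unique[:n_train]),
        "val": set(unique[n_train:n_train + n_val]),
        "test": set(unique[n_train + n_val:]),   # whatever replicates remain
    }
    return {
        name: [i for i, rep in enumerate(replicate_ids) if rep in members]
        for name, members in groups.items()
    }

# Hypothetical dataset: 200 cell crops drawn from 10 biological replicates.
replicate_ids = [f"rep{i % 10}" for i in range(200)]
splits = split_by_replicate(replicate_ids)
```

Splitting at the replicate level rather than the cell level means test accuracy reflects generalization to unseen cultures. With very few replicates the test group can end up empty, so the fractions should be checked against the actual replicate count.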

Discussion and Application

The high classification accuracy (>96%) achieved through this cell painting and CNN pipeline underscores its significant potential for quality control in iPSC-derived neural culture models [3] [28]. This method provides an unbiased, quantitative, and scalable alternative to traditional, more variable validation techniques.

The primary application of this technology is in preclinical drug screening, where consistent and well-characterized cellular models are crucial for generating reproducible and translatable data. By accurately quantifying the ratio of neurons to progenitors or detecting the presence and activation state of microglia, researchers can better standardize their assays and interpret compound effects [3]. Furthermore, this approach holds promise for cell therapy development, where robust quality control is a prerequisite for safety and regulatory compliance. The ability to perform this analysis without destroying the cultures is a key advantage, allowing for longitudinal studies or subsequent molecular analyses on the same sample [3].

Future directions for this work include extending the classification capabilities to a wider range of neural cell types, such as oligodendrocytes and different neuronal subtypes, and further refining the discrimination of functional states like microglial activation. Integration with other omics data layers could also provide deeper insights into the relationship between cell morphology and molecular function.

The adoption of three-dimensional (3D) neural cell culture models, such as neurospheroids, represents a significant advancement in neuroscience research, as they more accurately replicate the complex architecture, cell organization, and multicellular interactions characteristic of native neural tissue compared to traditional two-dimensional (2D) cultures [31]. However, the complexity of these 3D structures presents distinct challenges for monitoring and analysis. Traditional microelectrode arrays (MEAs) used for electrophysiological recording require external amplification and reference electrodes, limiting system miniaturization [31]. Concurrently, the variability in differentiation outcomes and cellular heterogeneity in induced pluripotent stem cell (iPSC)-derived models necessitates robust quality control methods to ensure experimental reproducibility [3]. This application note details integrated protocols for the functional electrophysiological assessment and high-content morphological analysis of 3D neurospheroids, providing a framework for comprehensive characterization within research and drug development pipelines.

Electrophysiological Monitoring using Organic Charge-Modulated Field Effect Transistors (OCMFETs)

Background and Principle

Organic Charge-Modulated Field Effect Transistors (OCMFETs) present a promising alternative to standard MEAs for monitoring electrical activity in 3D cellular aggregates. Their operation is based on the modulation of transistor channel conductivity induced by the presence of charge on the surface of a sensing area, which is read out as a variation of the device's threshold voltage [31]. A key advantage of this architecture is the physical separation of the sensing area from the organic semiconductor channel, which allows for effective encapsulation and protects the semiconductor from degradation in humid biological environments—a critical feature for long-term cell culture monitoring [31].

Device Fabrication Protocol

Objective: To fabricate ultra-sensitive, flexible OCMFET sensors on plastic substrates for interfacing with neurospheroids.

Materials:

- Substrate: Polyethylene terephthalate (PET, 175 µm thickness)

- Metallic Layers: Gold (for floating gate, source, drain, and control gate capacitor)

- Dielectric Layer: Parylene C (200 nm, deposited via Chemical Vapor Deposition - CVD)

- Organic Semiconductor: 6,13-bis(triisopropylsilylethynyl)pentacene (TIPS pentacene) in anisole (1% w/v)

- Equipment: Thermal evaporator, CVD system (e.g., Labcoater 2 SCS PDS 2010), oxygen plasma etcher (e.g., Tucano)

Procedure:

- Substrate Preparation: Clean the PET substrate thoroughly.

- Floating Gate Deposition: Thermally evaporate the first gold layer onto the substrate and pattern it using a low-resolution photolithographic process. This layer serves as the floating gate.

- Dielectric Coating: Deposit a 200 nm Parylene C film across the entire substrate via CVD.

- Source/Drain Electrode Patterning: Evaporate and pattern a second gold layer using a self-alignment process to form interdigitated source and drain electrodes (channel width W = 25 mm, length L = 40 µm, yielding W/L = 625), the upper plate of the control gate capacitor, and connection pads.

- Sensing Area Exposure: Create a via (200 µm x 200 µm) in the Parylene C layer using oxygen plasma etching to expose the sensing area of each OCMFET.

- Semiconductor Application: Drop-cast a 2 µL droplet of TIPS pentacene solution over the transistor channel.

- Device Encapsulation: Cover the channel area with a thick (1.2 µm) layer of Parylene C to enhance stability in humid environments during incubation.

OCMFET Coupling and Neurospheroid Recording

Neurospheroid Generation [31]:

- Cell Line: Utilize an rtTA/Ngn2-positive human induced pluripotent stem cell (hiPSC) line (e.g., GM25256 from the Coriell Institute).

- Differentiation: Differentiate hiPSCs into early-stage excitatory cortical neurons (iNeurons) via doxycycline treatment for 3 days.

- Aggregate Formation: Employ the hanging-drop method to create scaffold-free spherical aggregates. Generate neurospheroids composed of 50,000 iNeurons and astrocytes in a 1:1 ratio.

Recording Setup:

- Couple the prepared neurospheroid directly to the exposed sensing area of the OCMFET device.

- Record the spontaneous electrical activity without the need for a reference electrode in the culture medium.

- The electrical signals from the neurospheroid modulate the charge on the OCMFET's floating gate, leading to a measurable shift in the drain current (I_ds).
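As a rough illustration of how a threshold-voltage shift appears in the drain current, the sketch below uses the textbook square-law model of a field-effect transistor in saturation. The model is an assumption introduced here, not the readout model of [31]. Only the channel geometry (W = 25 mm, L = 40 µm) comes from the fabrication protocol; the mobility, gate capacitance, bias voltages, and size of the shift are hypothetical placeholders. Voltages are treated as magnitudes, so p-type sign conventions are ignored.

```python
def saturation_current(w_over_l, mobility, c_i, v_gs, v_th):
    """Square-law saturation current magnitude in amperes (textbook FET model).

    w_over_l : channel width-to-length ratio (dimensionless)
    mobility : carrier mobility in m^2/(V*s)
    c_i      : gate capacitance per unit area in F/m^2
    v_gs     : gate-source voltage magnitude in V
    v_th     : threshold voltage magnitude in V
    """
    overdrive = v_gs - v_th
    return 0.5 * w_over_l * mobility * c_i * overdrive ** 2

W_OVER_L = 25e-3 / 40e-6   # geometry from the protocol: 25 mm / 40 um, about 625

# Hypothetical device parameters, chosen only to make the example concrete.
MOBILITY = 1e-5            # m^2/(V*s), i.e. 0.1 cm^2/(V*s)
C_I = 1e-4                 # F/m^2
V_GS, V_TH = 2.0, 0.5      # V (magnitudes)
DELTA_V_TH = 1e-3          # V, hypothetical shift caused by charge on the sensing area

baseline = saturation_current(W_OVER_L, MOBILITY, C_I, V_GS, V_TH)
shifted = saturation_current(W_OVER_L, MOBILITY, C_I, V_GS, V_TH + DELTA_V_TH)
delta_i = shifted - baseline   # change in drain current caused by the shift
print(f"baseline = {baseline:.3e} A, change = {delta_i:.3e} A")
```

In this simplified model the current change scales with W/L, which is one reason a wide interdigitated channel helps turn small threshold shifts into measurable current variations.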