Overcoming Digital Literacy Barriers in Older Adults: Evidence-Based Interventions for Research and Clinical Practice


Abstract

This article synthesizes current evidence on digital literacy barriers faced by older adults and evaluates intervention strategies relevant to biomedical research and clinical practice. It explores the multifaceted nature of digital exclusion, examining foundational barriers including capability, opportunity, and motivation factors. The review assesses methodological approaches for improving digital health literacy, analyzes optimization strategies for technology design and implementation, and validates intervention effectiveness through comparative outcomes. For researchers and healthcare professionals, this analysis provides critical insights for developing equitable digital health strategies that accommodate aging populations, particularly those with chronic conditions who stand to benefit most from telehealth and remote monitoring technologies.

Understanding the Digital Divide: Multidimensional Barriers Facing Older Adults

The Capability-Opportunity-Motivation (COM-B) Framework for Digital Exclusion

Frequently Asked Questions (FAQs)

1. What is the COM-B Framework and why is it relevant for studying digital exclusion in older adults? The COM-B Framework is a behavior change model positing that, for any behavior (B) to occur, individuals must have the Capability (C), Opportunity (O), and Motivation (M) to perform it [1]. It is highly relevant to digital exclusion research because it provides a structured way to analyze the multiple, intertwined barriers that prevent older adults from engaging with digital technologies [1]. It moves beyond simplistic explanations and lets researchers design targeted interventions that address specific deficits in capability, opportunity, or motivation.

2. What are the most common barriers to digital engagement identified through the COM-B lens? Research has identified a range of barriers mapped to the COM-B components [1]:

- Capability: Physical and psychological changes related to aging (e.g., vision, memory, dexterity) and a fundamental lack of digital skills [1] [2].

- Opportunity: Lack of access to affordable devices and internet connectivity, technologies with poor usability for older adults, and a lack of social or environmental support [1] [3].

- Motivation: Low confidence, anxiety, and pessimism about one's ability to learn, as well as not perceiving digital tools as useful or relevant to their lives [1].

3. How can researchers effectively co-design digital inclusion interventions with older adults? Co-design is a critical methodology for ensuring interventions are relevant. Best practices include [4] [3]:

- Partner with established community organizations (e.g., VCSE organisations, libraries) to connect with hard-to-reach older adults, including those who are non-users.

- Use diverse engagement methods such as focus groups and partnerships with patient participation groups to identify specific barriers.

- Ensure diversity and inclusivity in recruitment to include people with varying levels of confidence, device access, and connectivity. If an intervention works for those facing the most barriers, it will likely work for most.

4. What constitutes "basic" digital skills for older adults, and why is this important for research? For older adults with no prior experience, even tasks considered "basic" by framework developers can be major hurdles [2]. These include:

- Understanding ICT jargon and terminology.

- Operating hardware (e.g., turning on a device, using a touchscreen).

- Navigating software and the internet (e.g., downloading an app).

Researchers must not assume foundational knowledge and should design studies and interventions that start from a truly basic level, acknowledging that these skills are not trivial for this population [2].

Troubleshooting Guide: Common Research Implementation Challenges

Problem 1: High Drop-Out Rates in Longitudinal Studies on Digital Skill Acquisition

- Potential Cause: Interventions may be progressing too quickly, failing to account for the significant time and repetition older adults need to master foundational skills [2].

- Solution:

- Pilot Test Skill Progression: Conduct thorough pilot studies to map a realistic learning curve, breaking down skills into micro-tasks.

- Provide Ongoing Support: Ensure participants have access to continuous, patient support from dedicated staff or "digital champions" who are permitted to spend sufficient time with them [4].

Problem 2: Failure to Recruit Digitally Excluded Older Adults, Leading to Survivorship Bias

- Potential Cause: Relying solely on digital channels (e.g., online ads, practice websites) for recruitment will miss the target population [3].

- Solution:

Problem 3: Intervention Fails to Improve Sustained Digital Engagement

- Potential Cause: The intervention may focus only on initial adoption, neglecting factors that promote long-term use, such as perceived relevance and ongoing motivation [1].

- Solution:

- Focus on Personal Utility: Connect digital skills to tasks that are immediately and personally beneficial to the older adult, such as viewing family photos or managing prescriptions [1].

- Build "Warm Expert" Networks: Facilitate opportunities for participants to identify and rely on a supportive social network (family, friends, peers) for ongoing informal support [1].

Quantitative Data on Digital Exclusion and the COM-B Framework

The table below summarizes key quantitative findings from the literature to inform research design and hypothesis generation.

| Metric | Reported Figure | Population / Context | Relevant COM-B Component |
|---|---|---|---|
| Lacking Basic Digital Skills [4] | 10 million adults | UK, 2022 | Capability (Psychological) |
| No Internet Access [4] | 1 in 20 households | UK, 2022 | Opportunity (Environmental) |
| Associated Economic Deprivation [4] | 4x more likely to be from low-income households | UK, 2022 | Opportunity (Environmental) |
| Workforce Skill Gap Projection [3] | 5 million workers under-skilled by 2030 | UK | Capability (Psychological) |
| Impact of Co-designed Support [4] | Improved patient experience and service use | NHS England case studies | Motivation & Opportunity |

Experimental Protocol: Mapping Barriers Using the COM-B and TDF

This protocol provides a methodology for systematically identifying barriers to digital engagement in a specific older adult population.

1. Research Design:

- A qualitative study involving semi-structured interviews and/or focus groups.

2. Participant Recruitment:

- Use the strategies outlined in the troubleshooting guide (Problem 2) to recruit a diverse sample, ensuring inclusion of current non-users and those with low skills.

3. Data Collection:

- Conduct sessions exploring participants' experiences with a specific digital technology (e.g., NHS app, video calls). Probe into past attempts, challenges, and reasons for non-use.

4. Data Analysis - Thematic Mapping to COM-B/TDF:

- Transcribe and code the data.

- Map emergent themes to the components of the COM-B model and its more detailed Theoretical Domains Framework (TDF) [1]. For example:

- A theme about "forgetting steps" maps to Capability (Psychological) > Memory.

- A theme about "can't afford data" maps to Opportunity (Environmental) > Resources.

- A theme about "fear of being scammed" maps to Motivation (Reflective) > Beliefs about Consequences.

5. Output:

- A synthesized report detailing the predominant barriers within each COM-B component, which can directly inform the design of a targeted intervention.
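The mapping and tallying step of this protocol can be sketched in a few lines of Python. This is a minimal illustration, not the method used in [1]: the `CODEBOOK` dictionary and the `tally_barriers` helper are hypothetical names, and the three codebook entries simply reuse the example themes from step 4. A real analysis would build a much larger codebook during coding.

```python
from collections import Counter

# Hypothetical codebook: theme label -> (COM-B component, TDF domain).
# The entries reuse the three example themes from step 4 of the protocol.
CODEBOOK = {
    "forgetting steps": ("Capability (Psychological)", "Memory"),
    "can't afford data": ("Opportunity (Environmental)", "Resources"),
    "fear of being scammed": ("Motivation (Reflective)", "Beliefs about Consequences"),
}


def tally_barriers(coded_segments):
    """Count coded excerpts per COM-B component.

    coded_segments: iterable of theme labels, one per coded excerpt.
    Returns (counts, unmapped), where unmapped lists themes that are
    not yet in the codebook and need a manual mapping decision.
    """
    counts = Counter()
    unmapped = []
    for theme in coded_segments:
        mapping = CODEBOOK.get(theme)
        if mapping is None:
            unmapped.append(theme)
        else:
            component, _tdf_domain = mapping
            counts[component] += 1
    return counts, unmapped


# Illustrative input only; these are not study data.
segments = ["forgetting steps", "can't afford data", "forgetting steps", "small text"]
counts, unmapped = tally_barriers(segments)
print(counts.most_common())
print(unmapped)
```

Returning unmapped themes separately keeps the analyst in the loop: a theme that fits no existing code is flagged for review rather than silently dropped.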

Logical Workflow for Intervention Design

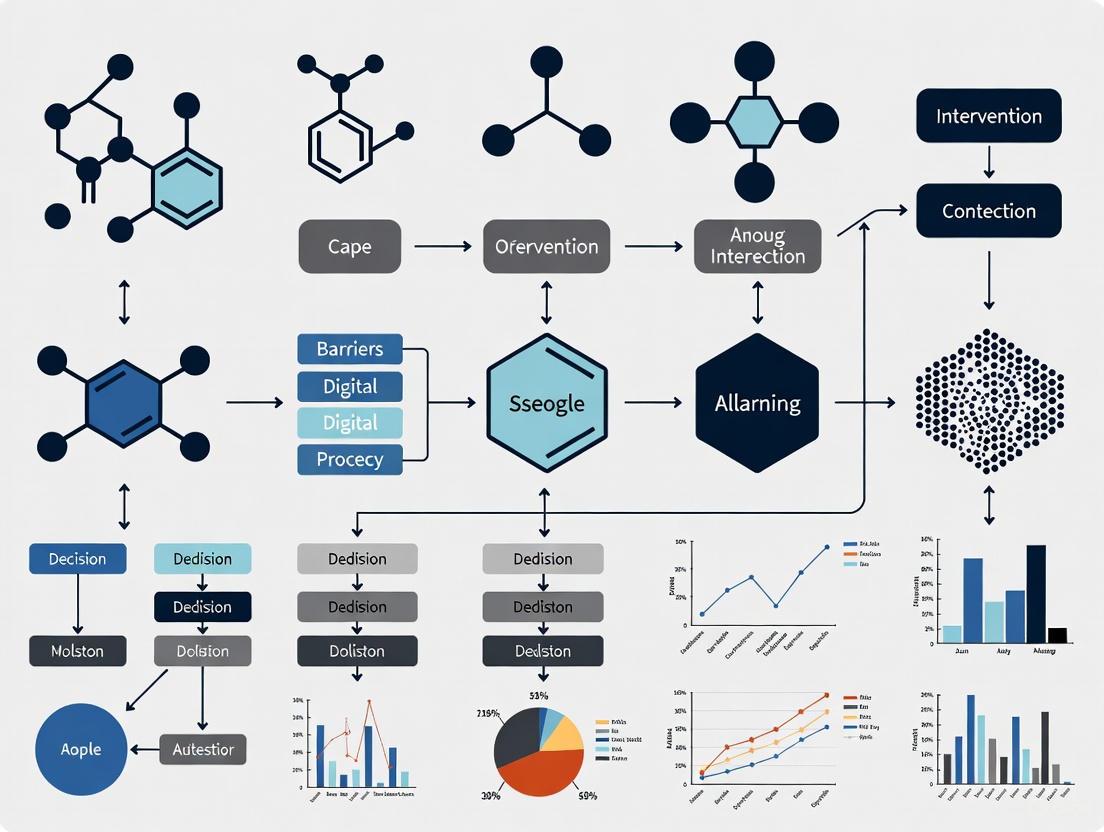

The diagram below outlines a logical pathway for designing a digital inclusion intervention based on the COM-B diagnosis.

The table below lists key "reagents" or resources essential for conducting research on digital exclusion in older adults.

| Resource Category | Specific Examples & Functions |
|---|---|
| Validated Assessment Tools | Digital Exclusion Risk Index: Identifies populations most at risk of digital exclusion based on demographic and geographic data [4]. COM-B Interview Schedule: A semi-structured interview guide based on the TDF to systematically identify barriers [1]. |
| Recruitment & Outreach Channels | National Digital Inclusion Network (Good Things Foundation): A network of community organizations that can facilitate access to digitally excluded groups [4] [3]. Local VCSE Organizations & Libraries: Key partners for reaching participants in trusted, local environments [4] [3]. |
| Training & Implementation Aids | Skills for Life Platform: Helps identify free, local courses for teaching essential digital skills [4]. Digital Health Champions Network: Provides resources for training staff or volunteers to become digital inclusion champions [4]. |
| Usability & Accessibility Benchmarks | NHS England GP Website Benchmarking Tool: Allows auditing and benchmarking of the usability and accessibility of digital services, a key consideration for intervention design [4]. |

For researchers and drug development professionals working on digital health interventions, understanding the specific physical and cognitive challenges that older adults face is crucial for designing effective products. These age-related barriers significantly impact technology adoption and can determine the success or failure of clinical trials and therapeutic digital tools. This technical support center provides evidence-based troubleshooting guides to address these challenges within the context of digital literacy barrier research.

Troubleshooting Guides: Addressing Physical & Cognitive Barriers

Vision-Related Challenges

Reported Issue: Older adult study participants report eye strain, difficulty reading on-screen text, and inability to distinguish interface elements.

Root Cause: Age-related vision changes include presbyopia (reduced ability to focus on near objects), reduced contrast sensitivity, and decreased adaptability to glare [5]. These changes are exacerbated by prolonged screen exposure in digital work environments [5].

Evidence-Based Solutions:

- Implement high-contrast color schemes (black on white or white on dark blue) rather than similar hues

- Provide text scaling options up to 200% without breaking interface layout

- Ensure all critical information is conveyed through multiple sensory channels (visual + auditory)

- Implement consistent layout patterns to reduce visual search demands

Research Support Protocol: When participants report vision-related difficulties, recommend the following assessment protocol:

- Conduct contrast sensitivity testing using the Mars Letter Contrast Sensitivity Test (Mars Perceptrix) or similar

- Document font size preferences and reading distance requirements

- Test interface under various lighting conditions (low, medium, bright)

Motor Control and Dexterity Challenges

Reported Issue: Users experience difficulty with precise mouse control, touchscreen gestures, or rapid interface interactions.

Root Cause: Age-related conditions such as arthritis, essential tremor, or reduced fine motor coordination can make standard interface interactions challenging [6]. These barriers are particularly pronounced in older adults with chronic diseases who are key beneficiaries of digital health technologies [6].

Evidence-Based Solutions:

- Increase target size for touch interfaces (minimum 9.6mm according to accessibility standards)

- Provide extended timeouts for tasks requiring rapid responses

- Implement gesture tolerance to accommodate less precise movements

- Offer alternative input methods (voice commands, switch devices)

Validation Methodology: To test motor accessibility:

- Use Fitts' Law testing to measure pointing efficiency

- Conduct task completion rate analysis with timed components

- Implement error rate monitoring for precise touch targets
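As a rough illustration of the Fitts' Law testing mentioned above, the sketch below computes the index of difficulty with the widely used Shannon formulation, ID = log2(D/W + 1), and a simple per-condition throughput. The function names and the example trial are assumptions made for illustration. Formal pointing-device evaluations usually replace the nominal target width with an effective width derived from the observed spread of touch points, which this sketch omits.

```python
import math


def index_of_difficulty(distance_mm, width_mm):
    """Shannon formulation of Fitts' index of difficulty, in bits."""
    if distance_mm < 0 or width_mm <= 0:
        raise ValueError("distance must be >= 0 and width must be > 0")
    return math.log2(distance_mm / width_mm + 1)


def throughput(distance_mm, width_mm, movement_time_s):
    """Simplified throughput (bits per second) for one pointing condition."""
    if movement_time_s <= 0:
        raise ValueError("movement time must be positive")
    return index_of_difficulty(distance_mm, width_mm) / movement_time_s


# Hypothetical trial: a 70 mm reach to a 10 mm target, completed in 1.5 s.
id_bits = index_of_difficulty(70, 10)
tp = throughput(70, 10, 1.5)
print(id_bits, tp)
```

Comparing throughput across target sizes for the same participant gives a quick check on whether enlarging touch targets actually improves pointing efficiency for that person.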

Cognitive Load and Memory Challenges

Reported Issue: Study participants struggle to remember navigation paths, interface workflows, or authentication credentials.

Root Cause: Normal age-related cognitive changes affect working memory, processing speed, and executive function [7] [6]. Cognitive overload occurs when interface demands exceed these capacities, leading to abandonment of digital health tools [6].

Evidence-Based Solutions:

- Implement progressive disclosure - show only essential information initially

- Maintain consistent navigation patterns across all application sections

- Provide clear feedback for all actions to reinforce learning

- Allow personalization of frequently used functions

- Incorporate cognitive offloading tools (notes, reminders, favorites)

Assessment Protocol: For cognitive load evaluation:

- Administer NASA-TLX (Task Load Index) after key tasks

- Track error recovery paths and time-to-resolution

- Monitor working memory demands through task interruption tests
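For the NASA-TLX step, a common simplification is the unweighted "Raw TLX" score: the mean of the six subscale ratings, each on a 0 to 100 scale. The sketch below assumes that variant. The full instrument also includes a pairwise weighting procedure that is not shown here, and the dictionary keys and example ratings are hypothetical.

```python
# The six NASA-TLX subscales; key names are illustrative.
SUBSCALES = (
    "mental_demand",
    "physical_demand",
    "temporal_demand",
    "performance",
    "effort",
    "frustration",
)


def raw_tlx(ratings):
    """Return the unweighted (Raw TLX) workload score for one task.

    ratings: dict mapping each subscale name to a 0-100 rating.
    """
    missing = [s for s in SUBSCALES if s not in ratings]
    if missing:
        raise ValueError(f"missing subscales: {missing}")
    for name in SUBSCALES:
        if not 0 <= ratings[name] <= 100:
            raise ValueError(f"{name} must be between 0 and 100")
    return sum(ratings[name] for name in SUBSCALES) / len(SUBSCALES)


# Illustrative ratings for a single participant and task.
example = {
    "mental_demand": 70,
    "physical_demand": 30,
    "temporal_demand": 50,
    "performance": 40,
    "effort": 60,
    "frustration": 50,
}
print(raw_tlx(example))
```

Rejecting incomplete questionnaires explicitly, rather than averaging whatever is present, avoids scores that look comparable but rest on different subscales.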

Technology Familiarity and Digital Literacy

Reported Issue: Participants lack foundational digital literacy skills, including terminology understanding, basic navigation concepts, and troubleshooting instincts.

Root Cause: Many digital health technologies are developed without full consideration of older adults' physical, cognitive, or cultural needs, creating unintended barriers to adoption [7]. Limited prior exposure to digital interfaces throughout life course contributes to this challenge [6].

Evidence-Based Solutions:

- Use familiar, non-technical language consistently

- Provide contextual help that appears automatically during challenging tasks

- Implement guided tutorials with hands-on practice for core functions

- Create analogies relating digital actions to familiar real-world activities

Training Support Framework: Effective digital literacy building requires:

- Pre-assessment of existing digital capability levels

- Tiered learning paths based on initial assessment

- Just-in-time learning support integrated into the interface

- Practice environments without consequence for errors

Frequently Asked Questions: Researcher Guidance

Q: What co-design methodologies effectively engage older adults with varying cognitive abilities?

A: Participatory co-design that continuously involves older adults uncovers hidden usability failures and ensures cultural fit [7]. Successful approaches include:

- Flexible design processes that adapt to participant needs and capabilities [8]

- Supportive environments that reduce power imbalances between researchers and participants [8]

- Participant-led documentation to reduce academic bias and empower contributions [8]

- Structured methodology for rigorous co-design, such as the PRODUCES framework from Health CASCADE [8]

Q: How can we objectively measure technology adoption barriers in older adult populations?

A: Use multidimensional assessment capturing capability, opportunity, and motivation factors [6]. Standardized metrics include:

Table: Core Metrics for Technology Adoption Barriers

| Domain | Specific Metrics | Assessment Method |
|---|---|---|
| Physical Capability | Handgrip strength, visual acuity, tremor assessment | Standardized clinical assessments, performance testing |
| Cognitive Load | NASA-TLX, error rates, task completion time | Controlled usability testing with think-aloud protocol |
| Motivational Factors | Perceived usefulness, trust, privacy concerns | Likert-scale surveys, qualitative interviews |
| Opportunity Barriers | Social support, access to technology, training availability | Demographic questionnaires, environmental assessments |

Q: What implementation strategies successfully address adoption barriers in real-world settings?

A: Effective implementation requires multilevel approaches [6] [9]. Key strategies include:

- Health care provider endorsement and training to build trust and provide support [6]

- Hybrid care models that combine digital tools with human contact [8]

- Tailored training programs that address specific literacy gaps [6]

- Strategic navigation of organizational approval processes and alignment with existing initiatives [9]

Q: What evidence exists for the effectiveness of digital interventions addressing physical function in older adults?

A: Recent systematic reviews show digital-based interventions for healthy older adults can significantly improve physical functions relevant to sarcopenia prevention, though evidence certainty varies [10]:

Table: Effects of Digital Interventions on Physical Function in Older Adults

| Outcome Measure | Reported Effect | Certainty of Evidence | Clinical Significance |
|---|---|---|---|
| Handgrip Strength | Significant enhancement | Low certainty | Maintains functional independence |
| Usual Walking Speed | Significant improvement | Low certainty | Reduces fall risk |
| Five Times Sit-to-Stand | Significant enhancement | Low certainty | Indicates lower body strength |
| 30-Second Chair Stand | Significant improvement | Low certainty | Measures functional endurance |
| Appendicular Muscle Mass | No significant effect | Low certainty | Limited impact on muscle morphology |

Experimental Protocols for Barrier Assessment

Comprehensive Usability Testing Protocol

Objective: Systematically identify physical and cognitive barriers to technology adoption in older adult populations.

Materials:

- Test device with screen recording capability

- Eye-tracking equipment (optional but recommended)

- NASA-TLX questionnaire for cognitive load assessment

- Audio recording equipment for think-aloud protocol

- Performance metrics logging system

Procedure:

- Recruitment: Purposefully sample for diversity in age (65+), gender, prior technology experience, and cognitive status

- Pre-test assessment: Document visual acuity, hand dexterity, and technology familiarity

- Task development: Create realistic scenarios reflecting core application functions

- Testing session:

- Record initial impressions and navigation instincts

- Document task completion success, time, and error rates

- Capture subjective feedback during think-aloud protocol

- Post-test assessment:

- Administer NASA-TLX for cognitive load evaluation

- Conduct semi-structured interview about specific challenges

- Data analysis:

- Quantitative: Success rates, time-on-task, error counts

- Qualitative: Thematic analysis of verbalized difficulties

Validation: Protocol should detect at least 80% of critical usability barriers as confirmed through iterative design testing.
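The quantitative part of the data analysis step (success rates, time-on-task, error counts) can be summarized per task with a short helper. This is a sketch under assumed data structures: the `summarize_task` function and the trial dictionary keys are hypothetical, and time-on-task is reported as a median over completed trials only, which is one reasonable convention among several.

```python
from statistics import median


def summarize_task(trials):
    """Summarize one task across participants.

    trials: list of dicts with keys 'completed' (bool),
    'seconds' (time on task) and 'errors' (error count).
    """
    if not trials:
        raise ValueError("at least one trial is required")
    completed = [t for t in trials if t["completed"]]
    return {
        # Share of participants who finished the task.
        "success_rate": len(completed) / len(trials),
        # Median time for successful attempts only; None if nobody finished.
        "median_time_s": median([t["seconds"] for t in completed]) if completed else None,
        # Errors are averaged over all attempts, including failed ones.
        "mean_errors": sum(t["errors"] for t in trials) / len(trials),
    }


# Illustrative trials only; these are not study data.
trials = [
    {"completed": True, "seconds": 60, "errors": 1},
    {"completed": True, "seconds": 90, "errors": 0},
    {"completed": False, "seconds": 180, "errors": 4},
    {"completed": True, "seconds": 120, "errors": 1},
]
summary = summarize_task(trials)
print(summary)
```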

Co-Design Workshop Framework for Older Adults

Objective: Engage older adults as equal partners in designing digital health interventions that address their specific capabilities and constraints.

Materials:

- Low-fidelity prototyping materials (paper, markers)

- Digital prototyping tools with adjustable interface parameters

- Audio recording equipment for workshop documentation

- Comfortable seating with adequate lighting and acoustics

Procedure (based on successful implementation [8]):

- Participant recruitment: 10-12 participants including older adults and allied health professionals using purposive convenience sampling

- Workshop structure: Six two-hour sessions following the Double Diamond design process (Discover, Define, Develop, Deliver)

- Activities:

- Discover: Understand experiences and attitudes toward healthy behaviors and technology

- Define: Identify desired features of interventions

- Develop: Create intervention concepts and interface designs

- Deliver: Test prototypes and refine based on feedback

- Power balance mitigation:

- Use participant-led documentation to reduce academic bias

- Implement structured member checking to ensure accuracy

- Empower participants to guide discussion topics

Outcome Measures:

- Number of participant-generated design modifications implemented

- Participant satisfaction with workshop process and outcomes

- Usability testing results comparing co-designed vs. expert-designed interfaces

Visualization: Relationship Mapping

COM-B Framework for Technology Adoption

(COM-B Model of Technology Adoption Barriers and Facilitators)

Iterative Co-Design Process

(Iterative Co-Design Process with Older Adults)

Research Reagent Solutions

Table: Essential Methodological Tools for Age-Inclusive Digital Health Research

| Research Tool | Function | Application Context |
|---|---|---|
| System Usability Scale (SUS) | Standardized usability assessment | Quantifies perceived usability across diverse user groups |
| NASA-TLX | Multidimensional cognitive load rating | Measures mental demand during technology interactions |
| Health CASCADE Framework | Co-design methodology | Provides rigorous structure for participatory design |
| Double Diamond Design Process | Design thinking framework | Guides Discover, Define, Develop, Deliver phases |
| PROGRESS-Plus Equity Framework | Equity assessment tool | Ensures consideration of place of residence, race/ethnicity, occupation, gender, religion, education, socioeconomic status, and social capital, plus further characteristics such as age and disability |
| COM-B Model | Behavior analysis framework | Identifies Capability, Opportunity, and Motivation barriers |
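For readers who have not scored the System Usability Scale before, the standard procedure is compact enough to sketch. Each of the ten items is answered on a 1 to 5 scale. Odd-numbered items contribute the response minus 1, even-numbered items contribute 5 minus the response, and the sum is multiplied by 2.5 to give a 0 to 100 score. The function name and the example responses below are illustrative.

```python
def sus_score(responses):
    """Score one System Usability Scale questionnaire.

    responses: list of ten integers in 1..5, item 1 first.
    Returns a score between 0 and 100.
    """
    if len(responses) != 10:
        raise ValueError("SUS requires exactly 10 item responses")
    total = 0
    for item_number, response in enumerate(responses, start=1):
        if not 1 <= response <= 5:
            raise ValueError("each response must be between 1 and 5")
        if item_number % 2 == 1:
            # Odd items are positively worded.
            total += response - 1
        else:
            # Even items are negatively worded, so the scale is reversed.
            total += 5 - response
    return total * 2.5


# Illustrative responses from a single hypothetical participant.
print(sus_score([4, 2, 4, 2, 4, 2, 4, 2, 4, 2]))
```

Because the alternating wording is easy to misread, studies with older adults may want to check for response patterns (for example, all 5s) that suggest an item was misunderstood before interpreting the score.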

Addressing physical and cognitive challenges in technology adoption requires rigorous, systematic approaches that prioritize the capabilities and constraints of older adult populations. By implementing these troubleshooting guides, assessment protocols, and co-design methodologies, researchers and drug development professionals can create digital health interventions that are both accessible and effective for diverse older adult populations. The continued refinement of these approaches through rigorous evaluation will advance both the science and practice of inclusive digital health innovation.

Frequently Asked Questions (FAQs): Core Concepts and Mechanisms

Q1: What is the established relationship between self-efficacy and anxiety symptoms in adolescents, and how might this inform interventions for older adults? Research demonstrates a strong, negative association between self-efficacy and anxiety. In a study of 1,705 adolescents, both emotional and social self-efficacy were found to have a predictive effect on anxiety symptoms, suggesting that higher self-efficacy can lead to a reduction in anxiety [11]. This relationship is well-grounded in social cognitive theory, which posits that individuals only experience anxiety when they believe themselves to be incapable of managing potentially detrimental events [12]. For older adults, this implies that interventions designed to boost digital self-efficacy could similarly reduce technology-related anxiety.

Q2: What quantitative evidence links specific domains of self-efficacy to mental health outcomes? A study of 549 high school students quantified the negative relationships between different self-efficacy domains and specific symptoms [12]. The key findings are summarized in the table below.

Table 1: Relationships Between Self-Efficacy Domains and Mental Health Symptoms

| Self-Efficacy Domain | Mental Health Symptom | Relationship Strength | Statistical Significance |
|---|---|---|---|
| Total Self-Efficacy | Depression | Significant & Negative | p < 0.05 [12] |
| Physical Self-Efficacy | Depression | Significant & Negative | p < 0.05 [12] |
| Academic Self-Efficacy | Depression | Significant & Negative | p < 0.05 [12] |
| Total Self-Efficacy | Anxiety | Significant & Negative | p < 0.05 [12] |
| Physical Self-Efficacy | Anxiety | Significant & Negative | p < 0.05 [12] |
| Emotional Self-Efficacy | Anxiety | Significant & Negative | p < 0.05 [12] |
| Emotional Self-Efficacy | Worry | Significant & Negative | p < 0.05 [12] |
| Physical Self-Efficacy | Worry | Significant & Negative | p < 0.05 [12] |
| Social Self-Efficacy | Social Avoidance | Significant & Negative | p < 0.05 [12] |
| Physical Self-Efficacy | Social Avoidance | Significant & Negative | p < 0.05 [12] |

Q3: According to recent models, what are the primary barriers to digital health technology adoption among older adults with chronic diseases? An updated 2025 systematic review mapped barriers using the Capability, Opportunity, Motivation–Behavior (COM-B) model [13]. These barriers create a "psychological hurdle" that impedes the adoption of digital health interventions.

Table 2: Barriers to DHT Adoption Among Older Adults (COM-B Framework)

| COM-B Component | Specific Barrier | Manifestation in Older Adults |
|---|---|---|
| Capability | Limited Digital Literacy | Lack of skills to understand and use information from digital formats [13]. |
| Capability | Physical & Cognitive Challenges | Age-related declines that make using technology difficult [13]. |
| Opportunity | Infrastructural Deficits | Lack of reliable internet, especially in rural areas [13]. |
| Opportunity | Usability Challenges | Poorly designed interfaces that are not age-friendly [13]. |
| Motivation | Privacy Concerns & Mistrust | Apprehension about data security and skepticism of technology's benefits [13]. |
| Motivation | High Satisfaction with Existing Care | A preference for traditional, in-person care methods [13]. |

Q4: How does improved digital literacy functionally reduce reliance on formal care services among older adults? Empirical analysis from the 2020 China Longitudinal Aging Social Survey identifies three key mechanisms. Improved digital literacy reduces the use of Community-based Home Care Services (CHCS) by enabling alternative consumption expenditures (e.g., using e-commerce for shopping), strengthening social and family support through communication tools, and enhancing self-efficacy for independent health management [14]. Notably, different digital literacy dimensions have divergent effects; while digital application literacy increases service use, device operation and information acquisition literacy decrease it [14].

Troubleshooting Guides: Experimental Protocols and Diagnostics

Guide 1: Diagnosing and Mitigating Low Self-Efficacy in Intervention Cohorts

Objective: To identify participants with low self-efficacy and implement targeted boosting protocols.

Experimental Protocol (Methodology):

- Baseline Assessment:

- Tool: Administer the Self-Efficacy Questionnaire for Children (SEQ-C) or an age-adapted equivalent. This 24-item tool measures three domains on a 5-point scale: Social Self-Efficacy (peer relationships, assertiveness), Academic Self-Efficacy (managing learning), and Emotional Self-Efficacy (coping with negative emotions) [12].

- Procedure: Conduct the assessment pre-intervention to establish a baseline for total self-efficacy and subscale scores [12].

- Intervention (Self-Efficacy Boosting):

- Mastery Experiences: Structure tasks to ensure participants experience small, successive successes with digital health technologies (DHTs). Break down complex processes like using a telemedicine app into simple, achievable steps [14].

- Vicarious Learning: Facilitate observation of peers (of similar age and background) successfully using DHTs. This can be done through group training sessions or video demonstrations [13].

- Verbal Persuasion: Provide direct, positive encouragement from researchers, healthcare providers, and tech support staff. Use phrases that affirm capability and past successes [15].

- Post-Intervention Assessment:

- Re-administer the self-efficacy scale and compare scores to baseline to quantify change.
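The baseline versus post-intervention comparison can be sketched as a per-participant change score. The `change_scores` helper, the participant IDs, and the totals below are hypothetical. The sketch assumes total scale scores have already been computed for each participant, and it only includes participants with both measurements so that drop-outs do not distort the mean change.

```python
from statistics import mean


def change_scores(baseline, follow_up):
    """Return follow-up minus baseline for participants measured twice.

    baseline, follow_up: dicts mapping participant ID to a total
    self-efficacy score. Participants missing either score are skipped.
    """
    shared_ids = sorted(set(baseline) & set(follow_up))
    return {pid: follow_up[pid] - baseline[pid] for pid in shared_ids}


# Illustrative scores only; these are not study data.
baseline = {"P01": 60, "P02": 72, "P03": 55}
follow_up = {"P01": 68, "P02": 70, "P04": 80}

changes = change_scores(baseline, follow_up)
mean_change = mean(list(changes.values()))
print(changes, mean_change)
```

A positive mean change indicates higher self-efficacy after the intervention. Whether that difference is statistically meaningful would need a paired test chosen to suit the sample size and score distribution.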

Diagnostic Flowchart: The following diagram illustrates the logical workflow for diagnosing and addressing low self-efficacy in a research cohort.

Guide 2: Troubleshooting Barriers to Digital Health Technology (DHT) Adoption

Objective: To systematically identify and overcome capability, opportunity, and motivation barriers in a research setting.

Experimental Protocol (Methodology): This protocol uses a structured, phased approach based on the COM-B model of behavior change [13].

- Phase 1: Capability Building (Knowledge & Skills)

- Action: Conduct tailored, hands-on training sessions. Focus on the specific DHTs used in the research (e.g., wearable devices, health apps). Training should be accessible, account for physical and cognitive challenges, and include practical exercises [13].

- Measurement: Use pre- and post-training quizzes and observed competency checks.

- Phase 2: Opportunity Enhancement (Environment & Resources)

- Action: Provide participants with necessary hardware (e.g., tablets, monitors) and ensure reliable internet access. Engage healthcare providers to endorse and demonstrate the DHTs. Implement hybrid (online-offline) support models to assist with usability challenges [13].

- Measurement: Track device usage logs and support ticket requests.

- Phase 3: Motivation Fortification (Mindset & Beliefs)

- Action: Address privacy concerns with clear, transparent data policies. Use co-design methods, involving older adults and community stakeholders in the intervention design to build trust and recognize the benefits of DHTs [13].

- Measurement: Administer surveys on technology acceptance and perceived usefulness.

Diagnostic Flowchart: The following diagram maps the troubleshooting process for DHT non-adoption to its root cause and proposed solution.

The Scientist's Toolkit: Research Reagent Solutions

This table details key methodological "reagents" and their functions for research on digital literacy interventions for older adults.

Table 3: Essential Materials and Methodologies for Intervention Research

| Research Reagent / Tool | Function / Explanation |
|---|---|
| Self-Efficacy Questionnaire for Children (SEQ-C) | A validated 24-item instrument to measure social, academic, and emotional self-efficacy domains. Can be adapted for older adult populations to establish baseline and post-intervention metrics [12]. |
| COM-B Model (Capability, Opportunity, Motivation–Behavior) | A theoretical framework used to systematically categorize and address barriers (e.g., digital literacy, infrastructure, privacy concerns) to digital health technology adoption [13]. |
| Penn State Worry Questionnaire (PSWQ) | A self-report scale measuring the trait of worry (14 items in the child version, PSWQ-C; 16 in the standard adult version). Useful for quantifying one aspect of anxiety that is negatively correlated with emotional self-efficacy [12]. |
| Co-Design Methodology | A participatory research approach that involves older adults, healthcare providers, and community stakeholders in the design of interventions. This enhances adoption by building trust and ensuring usability [13]. |
| Heckman's Two-Stage Model | An advanced statistical model used to correct for selection bias in survey data. It is employed to generate robust empirical evidence on the impact of digital literacy, such as its negative effect on the use of community-based home care services [14]. |
| PROGRESS-Plus Equity Framework | A tool for ensuring equitable research. It guides the analysis of how factors like Place of residence, Race, Occupation, Gender, Religion, Education, Socioeconomic status, and Social capital (PROGRESS) influence digital health adoption and outcomes [13]. |

For older adults, digital literacy is a crucial determinant of health and equity, particularly as essential services rapidly shift to digital platforms [16]. This transition has significant implications for inclusion and well-being, affecting older adults' ability to access essential services and information, especially during emergencies [16]. The COVID-19 pandemic starkly revealed these challenges when public computer labs closed, leaving many older adults stranded at home without access to shared computers, the internet, or digital skills training [17].

Research reveals that media frequently depicts older adults as needing significant help with digital technologies, reinforcing digital ageism—a systemic exclusion of older adults from digital environments through both technology design and societal perception [16]. This bias fosters stereotypes that assume older adults lack digital skills, despite evidence showing they possess diverse digital competencies [16].

Key Barriers to Digital Inclusion for Older Adults

Older adults face multiple, interconnected barriers that hinder their digital inclusion and ability to sustain digital literacy skills.

Sustained Digital Literacy Challenges

Research from the Home Connect program reveals distinct patterns in how older adults maintain digital literacy skills, with more than 63% showing a growing pattern of skill utilization, largely due to ongoing support like Q&A sessions [17]. However, significant challenges remain for other learners, as outlined in the table below.

Table: Patterns of Digital Literacy Skill Utilization Among Older Adults

| Usage Pattern | Percentage of Learners | Key Characteristics |
|---|---|---|
| Growing | >63% | Skills continue to develop with ongoing support and practice |
| Initially growing but not sustaining | Not specified | Initial progress is made but not maintained over time |
| Nonchanging | Not specified | Skills remain static despite training efforts |
| Decreasing | Not specified | Skills deteriorate after initial acquisition |
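One way to make the four usage patterns in the table operational is to classify each learner's sequence of usage measurements. The sketch below is illustrative only: the function name `classify_usage_pattern`, the tolerance `tol`, and the decision rules are assumptions, not the coding scheme used in the Home Connect study [17].

```python
from typing import Sequence


def classify_usage_pattern(usage: Sequence[float], tol: float = 0.0) -> str:
    """Label a learner's usage trajectory with one of the four patterns.

    `usage` holds one usage measure per observation period (hypothetical,
    e.g. number of digital skills used that month). `tol` is the smallest
    change treated as real. Both are assumptions made for illustration.
    """
    if len(usage) < 3:
        raise ValueError("need at least three observation periods")

    first, peak, last = usage[0], max(usage), usage[-1]

    if peak - first > tol and peak - last > tol:
        # Rose above the starting level, then fell back from the peak.
        return "initially growing but not sustaining"
    if last - first > tol:
        return "growing"
    if first - last > tol:
        return "decreasing"
    return "nonchanging"


print(classify_usage_pattern([2, 4, 6, 8]))   # growing
print(classify_usage_pattern([2, 6, 3, 2]))   # initially growing but not sustaining
```

Raising `tol` makes the classification less sensitive to small fluctuations, which may matter when usage is self-reported.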

Specific Barriers to Skill Sustainability

- Physical Challenges: Memory issues significantly impact the ability to retain and apply digital skills [17]. As one learner expressed, "I don't even remember how to turn on/off my device. I always ask my son for help" [17].

- Technical Troubles: Consistent technical issues, such as disappearing buttons and unstable Wi-Fi connections, create ongoing frustration [17].

- Rapidly Changing Systems: Frequent system updates and changing interfaces prove challenging for older adults, leading to confusion and functional issues [17].

- Lack of Ongoing Support: The perceived absence of continuous technical assistance hinders independent exploration and problem-solving [17].

Infrastructure and Affordability Concerns

Structural barriers, chiefly infrastructure deficits and affordability constraints, create accessibility challenges that affect digital inclusion outcomes for older adults.

Infrastructure Deficits

- Digital Ageism in Technology Design: At the organizational level, digital ageism is perpetuated by a lack of training and awareness among technology developers, including website designers and service providers, leading to digital platforms that are not accessible or inclusive of older adult users [16].

- Exclusion from Digital Spaces: Negative portrayals of older adults' digital skills and their exclusion from digital spaces underscore the need for more inclusive media representations and technology design [16].

- Inadequate Training Infrastructure: The closure of public computer labs during the pandemic eliminated crucial access points for many older adults, highlighting the dependency on physical infrastructure for digital inclusion [17].

Affordability Considerations

The concept of affordability encompasses both user and provider perspectives. From the user perspective, affordability relates to the ability to pay for tariffs or user charges associated with infrastructure services without being excluded from access—a particular concern for low-income groups [18]. For older adults on fixed incomes, this includes:

- Device Acquisition Costs: Initial purchase of smartphones, tablets, or computers

- Internet Service Expenses: Monthly connectivity fees for reliable home internet

- Ongoing Support Costs: Expenses associated with maintaining devices and skills

- Training Program Costs: Access to formal digital literacy programs

Financial assistance can take the form of government subsidies for providers and/or end users, with the aim of promoting economic and social policy objectives [18]. A gap may exist between what users can pay and the revenues required to meet project costs, requiring mechanisms like cross-subsidy structures, government subsidies, and ancillary revenue arrangements [18].
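The gap described above reduces to simple arithmetic. The following sketch uses made-up figures and hypothetical names (`funding_gap`, `required_revenue`, `user_payments`, `cross_subsidy`, `ancillary_revenue`) to show how the shortfall left for government subsidy could be computed. It is not a costing method taken from [18].

```python
def funding_gap(required_revenue: float,
                user_payments: float,
                cross_subsidy: float = 0.0,
                ancillary_revenue: float = 0.0) -> float:
    """Return the shortfall left for government subsidy (never negative)."""
    covered = user_payments + cross_subsidy + ancillary_revenue
    return max(0.0, float(required_revenue - covered))


# Hypothetical annual figures for a small training-and-connectivity program.
gap = funding_gap(required_revenue=120_000,
                  user_payments=45_000,
                  cross_subsidy=20_000,
                  ancillary_revenue=5_000)
print(gap)  # 50000.0
```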

Research Reagent Solutions: Digital Intervention Tools

Table: Essential Research Components for Digital Literacy Interventions

| Research Component | Function | Application Example |
|---|---|---|
| UTAUT2 Framework | Evaluates technology acceptance and use through performance expectancy, effort expectancy, and facilitating conditions [16]. | Analyzing media representations of older adults' digital literacy [16]. |
| Personalized Virtual Learning | One-on-one virtual learning available in multiple languages for homebound older adults [17]. | CTN's Home Connect program engaging individuals aged 60+ in their homes [17]. |
| Ongoing Q&A Sessions | Provides continuous support beyond initial training to sustain skill development [17]. | Virtual sessions helping learners troubleshoot problems and maintain skills [17]. |
| Critical Discourse Analysis | Examines how language and media representations perpetuate or challenge digital ageism [16]. | Analyzing Canadian news media portrayals of older adults' digital skills [16]. |

Troubleshooting Guides: Addressing Common Digital Literacy Barriers

Guide: "I don't remember how to use this feature I learned previously"

Research Context: Memory issues emerged as a significant physical challenge for older learners, directly impacting their ability to retain and apply digital skills [17].

Methodology for Support Providers:

- Understanding the Problem: Acknowledge that memory challenges are a common barrier, not a personal failing. Ask: "Which specific steps are you having trouble remembering?" [17]

- Isolating the Issue: Determine if this is a retention issue (forgetting learned skills) or a transfer issue (unable to apply skills in new contexts).

- Finding a Fix or Workaround:

  - Create personalized quick-reference guides with screenshots

  - Establish consistent routines for frequently performed tasks

  - Implement spaced repetition practice to reinforce learning

  - Provide easy access to ongoing support through dedicated Q&A sessions [17]

Experimental Protocol for Researchers:

- Measurement Tools: Pre- and post-intervention skill assessments, frequency of support requests, skill retention rates over 3, 6, and 12 months

- Control Variables: Type and frequency of support interventions, individual cognitive baseline, previous digital experience

- Data Collection: Mixed-methods approach combining quantitative skill assessments with qualitative interviews about confidence and frustration levels
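As a minimal sketch of the retention measure listed above, the code below computes the share of assessed participants who still perform a skill at each follow-up wave. The data layout (one dict per participant, keyed by follow-up month) and the name `retention_rates` are assumptions chosen for illustration; participants who miss a wave are excluded from that wave's denominator.

```python
from typing import Dict, List, Optional

FOLLOW_UP_MONTHS = (3, 6, 12)


def retention_rates(
    participants: List[Dict[int, Optional[bool]]],
) -> Dict[int, Optional[float]]:
    """Share of assessed participants who still perform the skill per wave.

    Each participant is a dict mapping follow-up month -> True/False, with
    None (or a missing key) meaning the assessment was not completed.
    """
    rates: Dict[int, Optional[float]] = {}
    for month in FOLLOW_UP_MONTHS:
        outcomes = [p.get(month) for p in participants]
        assessed = [o for o in outcomes if o is not None]
        # Report None rather than 0 when nobody was assessed at this wave.
        rates[month] = sum(assessed) / len(assessed) if assessed else None
    return rates


# Hypothetical data for four participants.
sample = [
    {3: True, 6: True, 12: True},
    {3: True, 6: False, 12: False},
    {3: True, 6: True},              # lost to follow-up before month 12
    {3: False, 6: False, 12: None},  # month-12 assessment not completed
]
print(retention_rates(sample))  # {3: 0.75, 6: 0.5, 12: 0.5}
```

Reporting the number assessed alongside each rate would make attrition visible, since a stable rate can hide a shrinking denominator.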

Guide: "The website/interface changed and I can't find anything anymore"

Research Context: Keeping up with system updates and changing interfaces proved challenging for older adults, leading to confusion and functional issues [17].

Methodology for Support Providers:

- Understanding the Problem: Recognize that rapidly changing systems disproportionately affect older adults. Ask: "What specifically looks different now compared to before?"

- Isolating the Issue: Identify whether the changes are cosmetic (moved buttons) or functional (new processes required).

- Finding a Fix or Workaround:

  - Provide change-specific guides highlighting key differences

  - Offer "what's new" orientation sessions after major updates

  - Teach general navigation principles rather than specific button locations

  - Advocate for consistent design patterns and gradual interface changes

Experimental Protocol for Researchers:

- Measurement Tools: Time-to-task-completion metrics, error rates, user frustration scales, adaptation speed measurements

- Control Variables: Magnitude of interface changes, consistency of design patterns, availability of transition support

- Data Collection: Usability testing sessions with think-aloud protocols, longitudinal adaptation tracking
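The measurement tools above can be summarized per testing condition with a few lines of code. This is a sketch under stated assumptions: the `TaskAttempt` record, its fields, and the `summarize` helper are hypothetical, and the observations are invented to show the shape of a before/after comparison.

```python
from statistics import median
from typing import Dict, List, NamedTuple, Optional


class TaskAttempt(NamedTuple):
    seconds: float    # time until the task was completed or abandoned
    errors: int       # wrong taps or clicks observed during the attempt
    completed: bool   # whether the participant finished the task


def summarize(attempts: List[TaskAttempt]) -> Dict[str, Optional[float]]:
    """Summarize one testing condition (e.g. before or after a redesign)."""
    if not attempts:
        raise ValueError("no attempts to summarize")
    finished = [a.seconds for a in attempts if a.completed]
    return {
        "completion_rate": len(finished) / len(attempts),
        # Time is summarized over completed attempts only.
        "median_seconds": median(finished) if finished else None,
        "errors_per_attempt": sum(a.errors for a in attempts) / len(attempts),
    }


# Hypothetical observations for the same task on the old and new interface.
old_ui = [TaskAttempt(40, 0, True), TaskAttempt(50, 1, True)]
new_ui = [TaskAttempt(90, 3, True), TaskAttempt(120, 4, False)]
print(summarize(old_ui))
print(summarize(new_ui))
```

The median is used instead of the mean because task times in usability sessions tend to be skewed by a few very slow attempts.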

Guide: "I'm worried about scams and don't trust this technology"

Research Context: Media frequently highlights older adults' susceptibility to digital scams and fraud, reinforcing digital ageism while acknowledging legitimate security concerns [16].

Methodology for Support Providers:

- Understanding the Problem: Validate security concerns while building confidence. Ask: "What specific concerns do you have about using this technology safely?"

- Isolating the Issue: Determine whether this is based on previous negative experiences, media reports, or general anxiety.

- Finding a Fix or Workaround:

  - Provide clear, specific safety guidelines rather than general warnings

  - Teach concrete skills for identifying common scam tactics

  - Establish trusted channels for verifying suspicious communications

  - Balance security education with empowerment messaging

Experimental Protocol for Researchers:

- Measurement Tools: Security behavior checklists, confidence scales, susceptibility to simulated phishing attempts

- Control Variables: Previous negative experiences, media consumption patterns, general anxiety levels

- Data Collection: Pre- and post-intervention security knowledge tests, observed security practices, qualitative feedback on confidence levels

Experimental Framework for Digital Literacy Research

A comprehensive methodology for implementing and evaluating digital literacy interventions with older adult populations comprises three stages: participant recruitment and screening, intervention implementation, and data collection and analysis.

Key Experimental Protocols

Participant Recruitment and Screening: Target adults aged 60+ with varying levels of prior digital experience. Include assessment of cognitive baseline, physical limitations affecting device use, and previous technology exposure. Ensure representation across socioeconomic backgrounds to properly assess affordability barriers.

Intervention Implementation Protocol: Deploy personalized one-on-one virtual learning programs available in multiple languages [17]. Combine initial intensive training with structured ongoing support through regular Q&A sessions. Document intervention fidelity, dosage, and adaptation requirements.

Data Collection and Analysis Methods: Employ mixed-methods approaches combining quantitative metrics (skill acquisition rates, retention measures, usage frequency) with qualitative data (participant interviews, support session transcripts, researcher observations). Use pre-post designs with longitudinal follow-up at 3, 6, and 12 months to assess skill sustainability.

FAQ: Addressing Researcher Questions

What theoretical frameworks are most appropriate for studying digital literacy interventions with older adults? The Unified Theory of Acceptance and Use of Technology 2 (UTAUT2) provides a comprehensive framework for evaluating technology acceptance and use through factors including performance expectancy, effort expectancy, social influence, facilitating conditions, and hedonic motivation [16]. This model helps explain behavior and intentions related to digital technology adoption in this population.

How can researchers effectively address the sustainability of digital literacy skills beyond initial training? Research indicates that ongoing support is critical for skill sustainability. The Home Connect program demonstrated that virtual Q&A sessions allowing continued digital skills education beyond initial classes were crucial for maintaining skills, with over 63% of learners showing a growing pattern of skill utilization when this support was available [17].

What are the most significant methodological challenges in this research area, and how can they be addressed? Key challenges include accounting for the diversity of older adults' digital competencies despite stereotypes of technological incompetence [16], addressing physical barriers like memory issues that impact skill retention [17], and designing studies that can track long-term skill sustainability beyond short-term intervention effects.

How can affordability concerns be properly incorporated into intervention research? Affordability must be evaluated from both user and provider perspectives [18]. Research should assess Ability to Pay (ATP) and Willingness to Pay (WTP) among older adult populations, considering that vulnerable groups with the lowest income levels are particularly price-sensitive. Studies should document both direct costs (devices, internet service) and indirect costs (ongoing support, training).

The digital transformation of healthcare and social services presents a complex paradox for aging populations. While digital literacy is widely promoted as a key to accessing modern care systems, evidence suggests it may simultaneously reduce older adults' reliance on formal support structures. This phenomenon represents a significant shift in traditional care utilization models, with substantial implications for service planning and policy development in an increasingly digitalized world.

Research conducted in China, which has entered a stage of moderate aging characterized by a "90-7-3" eldercare pattern (90% home-based care, 7% community-based care, 3% institutional care), reveals a significant negative relationship between digital literacy and the utilization of Community-based Home Care Services (CHCS). This indicates that higher digital literacy is associated with a lower propensity to use formal CHCS [14]. This counterintuitive finding challenges conventional assumptions that digital proficiency primarily facilitates access to services and suggests more complex behavioral mechanisms at play.

Empirical Evidence: Quantifying the Digital Literacy Effect

Key Statistical Relationships from Longitudinal Research

Table 1: Digital Literacy Dimensions and Their Impact on Service Utilization

| Digital Literacy Dimension | Impact on CHCS Utilization | Statistical Significance | Proposed Mechanism |
|---|---|---|---|
| Digital Application Literacy | Positive association | Significant | Enhances ability to navigate formal digital service platforms |
| Device Operation Literacy | Negative correlation | Significant | Increases self-reliance and reduces perceived need for formal services |
| Information Acquisition Literacy | Negative correlation | Significant | Enables independent problem-solving through information access |
| Digital Social Literacy | Negative correlation | Significant | Strengthens informal support networks as service alternatives |

Analysis of the 2020 China Longitudinal Aging Social Survey (CLASS 2020) data using factor analysis and probit regression confirms these multidimensional relationships. Heckman's two-stage model further validated that digital literacy reduces older adults' reliance on CHCS through multiple pathways, including increased alternative consumption expenditures, strengthened social and family support, and improved self-efficacy [14].

Assessment Frameworks in Current Research

Table 2: Digital Literacy Assessment Tools and Methodologies

| Assessment Tool | Methodology | Target Population | Key Metrics |
|---|---|---|---|
| eHealth Literacy Scale (eHEALS) | 8-item survey measuring ability to find, evaluate, and apply electronic health information | Originally developed for young people, now adapted for older adults | Skills, access, confidence in using digital tools for health [19] [20] |
| Conversational Health Literacy Assessment Tool (CHAT) | 10-question dialogue-based approach | Patients in clinical settings | Promotes open communication, identifies strengths and challenges [20] |
| Digital Health Readiness Questionnaire (DHRQ) | Brief questionnaire for routine clinical settings | Patients across age groups | Measures digital readiness in healthcare contexts [20] |
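To illustrate how an 8-item instrument such as eHEALS is typically scored, the sketch below sums eight responses into a total. The 1 to 5 Likert response format and the resulting 8 to 40 range follow the originally published instrument and are not stated in this article; the function name `score_eheals` is hypothetical, and adapted versions for older adults may use different response options.

```python
from typing import Sequence

EHEALS_ITEMS = 8
LIKERT_MIN, LIKERT_MAX = 1, 5  # assumed response format, see lead-in


def score_eheals(responses: Sequence[int]) -> int:
    """Sum eight 1-5 Likert responses into a total score (range 8-40)."""
    if len(responses) != EHEALS_ITEMS:
        raise ValueError(f"expected {EHEALS_ITEMS} responses, got {len(responses)}")
    if any(not LIKERT_MIN <= r <= LIKERT_MAX for r in responses):
        raise ValueError("each response must be between 1 and 5")
    return sum(responses)


print(score_eheals([3, 4, 2, 5, 3, 3, 4, 2]))  # 26
```

Rejecting incomplete or out-of-range responses, rather than silently summing them, keeps paper-entered data from inflating or deflating totals.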

Methodological Framework: Experimental Protocols for Digital Literacy Research

Protocol 1: Quantitative Analysis of Service Utilization Patterns

Objective: To examine the impact of digital literacy on older adults' utilization of community-based home care services.

Methodology:

- Data Collection: Utilize nationally representative longitudinal survey data (e.g., CLASS 2020) with large sample sizes (n=1100+)

- Digital Literacy Measurement: Employ factor analysis to construct comprehensive digital literacy metrics across multiple dimensions

- Statistical Analysis: Apply probit regression models to establish relationships while controlling for covariates

- Selection Bias Correction: Implement Heckman's two-stage model to address potential self-selection biases

- Mechanism Testing: Conduct pathway analysis to identify mediating factors in the relationship between digital literacy and service utilization [14]

Key Covariates: Age, gender, education, socioeconomic status, health conditions, social support networks, geographical location, and prior technology experience.
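For reference, the estimation steps above can be written out as follows. This is the textbook form of a probit model with a Heckman-type selection correction. The notation is assumed here ($DL_i$ for the digital literacy score, $X_i$ for the covariates, $Z_i$ for the selection-equation regressors, $S_i$ for the selection indicator), and the exact specification used in [14] may differ.

```latex
\begin{align}
\Pr(\mathrm{CHCS}_i = 1 \mid DL_i, X_i)
  &= \Phi\!\left(\beta_0 + \beta_1 DL_i + X_i^{\top}\gamma\right) \\
\Pr(S_i = 1 \mid Z_i)
  &= \Phi\!\left(Z_i^{\top}\delta\right) \\
\hat{\lambda}_i
  &= \frac{\phi\!\left(Z_i^{\top}\hat{\delta}\right)}{\Phi\!\left(Z_i^{\top}\hat{\delta}\right)} \\
y_i
  &= \alpha_0 + \alpha_1 DL_i + X_i^{\top}\theta + \rho\,\hat{\lambda}_i + \varepsilon_i
  \quad \text{for } S_i = 1
\end{align}
```

The first line is the baseline probit, where $\Phi$ is the standard normal distribution function and a negative $\beta_1$ corresponds to the reported negative association. The second line is the first-stage selection equation, the third is the inverse Mills ratio computed from its fitted values (with $\phi$ the standard normal density), and the fourth is the second-stage outcome equation, in which a non-zero $\rho$ signals selection bias.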

Protocol 2: Preferences and Needs Regarding Digital Health and Social Services

Objective: To understand older adults' preferences and needs regarding digital health and social services.

Methodology:

- Participant Recruitment: Target population aged 75+ through senior organizations, elderly councils, and community networks

- Mixed-Mode Survey Administration: Combine electronic and paper-based questionnaires to avoid digital exclusion bias

- Open-Ended Data Collection: Include structured and open-ended questions to capture nuanced preferences

- Qualitative Content Analysis: Employ inductive coding techniques to identify emerging themes from respondent feedback

- Stakeholder Validation: Involve older adults in questionnaire development to ensure comprehensibility of digital terminology [21]

Analytical Focus: Identify key preference categories including usability, training needs, security concerns, device compatibility, and service personalization.

Protocol 3: Digital Literacy Intervention Efficacy

Objective: To evaluate the effectiveness of digital health literacy interventions on healthcare access and outcomes.

Methodology:

- Systematic Review Framework: Follow PRISMA guidelines for comprehensive literature synthesis

- Database Searching: Query multiple scientific databases (PubMed, Scopus, Web of Science) using structured keyword strategies

- Study Selection: Apply inclusion/exclusion criteria focused on experimental studies with defined outcomes

- Quality Assessment: Evaluate methodological rigor of included studies

- Qualitative Synthesis: Analyze thematic patterns and insights across interventions [19]

Outcome Measures: Health literacy improvement, medication adherence, self-confidence, healthcare access, and specific clinical outcomes.
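A structured keyword strategy usually combines synonyms with OR inside each concept and joins the concepts with AND. The sketch below builds such a string. The concept groups and terms are hypothetical examples, not the search strategy used in [19], and each database has its own field tags and controlled vocabulary that would need to be added by hand.

```python
from typing import Dict, List


def build_query(concepts: Dict[str, List[str]]) -> str:
    """OR the synonyms within each concept, then AND the concepts together."""
    groups = []
    for terms in concepts.values():
        # Quote multi-word phrases so they are searched as exact phrases.
        quoted = [f'"{t}"' if " " in t else t for t in terms]
        groups.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(groups)


# Hypothetical concept groups for illustration.
concepts = {
    "population": ["older adults", "elderly", "aged"],
    "topic": ["digital health literacy", "eHealth literacy"],
    "design": ["intervention", "trial"],
}
print(build_query(concepts))
```

Keeping the concept groups in one data structure makes it easier to report the full strategy in the review and to rerun it unchanged across databases.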

Technical Support Center: Troubleshooting Digital Literacy Research

Frequently Asked Questions: Methodological Challenges

Q: How can researchers accurately measure digital literacy among older adults with limited technological experience? A: Traditional digital literacy assessments often assume baseline knowledge that may be absent in older populations. Implement staged assessments that begin with very fundamental concepts. Consider using the eHEALS framework but supplement with observational components to capture practical competencies beyond self-reported abilities. Incorporate familiar analogies to bridge knowledge gaps [2] [20].

Q: What strategies can address recruitment challenges when studying digital literacy in older populations? A: Employ mixed-mode recruitment approaches that include non-digital channels (community centers, printed materials, telephone outreach) to avoid selection bias toward digitally proficient seniors. Partner with established senior organizations and utilize peer recruiters to build trust. Offer multiple participation formats (in-person, paper surveys, telephone interviews) alongside digital options [21].

Q: How can researchers distinguish between different dimensions of digital literacy in intervention studies? A: Develop multidimensional assessment frameworks that separately measure technical operation skills, information evaluation capabilities, application proficiency, and social communication competencies. Use factor analysis to validate these dimensions statistically. Track each dimension's relationship with specific outcomes to identify which competencies drive particular behaviors [14].

Q: What ethical considerations are unique to digital literacy research with older adults? A: Special attention must be paid to informed consent processes that ensure comprehension of digital terminology. Implement data security measures that address potential vulnerabilities. Consider privacy implications when introducing unfamiliar digital tools. Provide adequate post-study support to prevent abandonment frustration [2] [21].

Technical Issue Resolution: Common Experimental Problems

Problem: High attrition rates in digital literacy intervention studies

- Solution: Implement scaffolded learning approaches that break complex digital tasks into manageable steps. Provide ongoing technical support throughout the study period. Establish personal connections between researchers and participants to maintain engagement. Consider intergenerational mentoring models that combine technical instruction with social interaction [22] [2].

Problem: Standardized measures insufficiently sensitive to detect incremental progress

- Solution: Develop study-specific assessment tools that align with intervention content. Incorporate qualitative measures that capture nuanced improvements not reflected in quantitative scores. Use video recording of digital tasks to enable micro-analysis of skill development. Create personalized learning milestones rather than relying exclusively on normative comparisons [2].

Problem: Technological heterogeneity complicates intervention standardization

- Solution: Establish device lending libraries to ensure consistent technological platforms across participants. Develop platform-agnostic skill assessments that focus on functional competencies rather than specific interface knowledge. Create modular intervention content that can adapt to different devices while maintaining core learning objectives [22].

Visualization: Theoretical Framework and Pathways

Diagram 1: Digital Literacy Impact Pathways on Service Utilization. This visualization illustrates the paradoxical relationship where most digital literacy dimensions negatively impact formal service use through mediating mechanisms, while application literacy shows a positive relationship.

Table 3: Digital Literacy Research Reagents and Solutions

| Research Tool | Function | Application Context | Implementation Considerations |
|---|---|---|---|
| CLASS Survey Data | Provides longitudinal aging data with digital literacy components | Quantitative analysis of service utilization patterns | Requires specialized authorization; Chinese population focus [14] |
| eHEALS Framework | Standardized eHealth literacy assessment | Pre/post intervention measurement | May need modification for older adult populations [19] [20] |
| PRISMA Guidelines | Systematic review methodology framework | Literature synthesis and meta-analysis | Essential for rigorous review of intervention studies [19] |
| Hybrid Survey Administration | Mixed digital and paper-based data collection | Inclusive participant recruitment | Critical for avoiding digital selection bias in older populations [21] |
| Factor Analysis | Statistical dimension reduction technique | Identifying digital literacy constructs | Validates theoretical dimensions of digital literacy [14] |
| Heckman's Two-Stage Model | Statistical correction for selection bias | Addressing non-random utilization patterns | Important for causal inference in observational studies [14] |

The paradoxical relationship between digital literacy and formal service utilization presents both challenges and opportunities for aging societies. Research indicates that comprehensive digital literacy does not uniformly increase dependence on digitalized formal services but rather creates a complex ecosystem where empowered older adults may choose alternative support mechanisms.

Future research should prioritize longitudinal designs that track how these relationships evolve as digital natives age into older adulthood. Additionally, intervention studies must develop more nuanced theoretical frameworks that account for the multidimensional nature of digital literacy and its varied impacts on service utilization patterns. Understanding these dynamics is crucial for designing balanced care systems that leverage digital tools while maintaining appropriate formal support structures for vulnerable older adults.

Within digital literacy intervention research for older adults, equity considerations are paramount. The rapid digitalization of essential services, including healthcare, banking, and social connectivity, has made digital literacy a critical social determinant of health and well-being in later life [23]. However, significant disparities in digital access, skills, and adoption persist along geographic and gender dimensions. Older adults in rural areas face compounded barriers due to infrastructural deficits and fewer support resources [13] [24], while older women experience unique gendered challenges that can further limit their digital participation [13]. This technical guide synthesizes current evidence and methodologies to help researchers effectively identify, measure, and address these equity considerations in intervention studies, ensuring that digital literacy programs do not inadvertently widen existing social inequalities.

Troubleshooting Guides and FAQs: Addressing Common Research Challenges

Frequently Asked Questions

Q1: What are the primary rural-specific barriers to digital health technology (DHT) adoption among older adults? A1: Research identifies a constellation of rural-specific barriers spanning multiple domains:

- Infrastructure: Deficits in broadband availability and reliability create a fundamental access barrier [13].

- Geographic Isolation: Longer travel distances to in-person support services and limited transportation options compound access issues [24].

- Workforce Shortages: Scarcity of healthcare clinicians and digital literacy instructors in rural areas restricts access to both formal training and contextualized support for using health technologies [13] [24].

- Attitudinal Factors: Some evidence suggests rural older adults may express higher satisfaction with existing local care, potentially reducing motivation for digital uptake [13].

Q2: How do gender-specific challenges manifest in older women's digital literacy and technology adoption? A2: Gender-specific challenges are rooted in a combination of socio-economic and psychosocial factors:

- Lower Digital Confidence: Older women often report lower self-efficacy and confidence in learning and using new technologies compared to men [13].

- Differing Outcome Priorities: Studies indicate that older women may prioritize different outcomes from DHTs, which may not be adequately addressed by standard technology designs [13].

- Heightened Privacy Concerns: A greater sensitivity to privacy and data security risks can act as a barrier to adoption [13].

- Economic Disparities: Lower income and retirement savings among older women can limit their ability to purchase devices or data plans, creating a financial barrier [25].

Q3: What is the observed relationship between an older adult's digital literacy and their use of community-based home care services (CHCS)? A3: Evidence from large-scale surveys reveals a counterintuitive relationship. Higher overall digital literacy is significantly associated with a lower propensity to use CHCS [23]. This appears to operate through several mechanisms:

- Substitution with Market Services: Digitally literate older adults use e-commerce, food delivery apps, and other online services to meet needs otherwise provided by CHCS [23].

- Enhanced Social & Family Support: Digital communication tools help strengthen informal support networks, reducing reliance on formal services [23].

- Improved Self-Efficacy: Greater confidence in managing their own lives and health reduces perceived need for formal care services [23].

Q4: Which validated scale is recommended for measuring comprehensive digital literacy in older adults? A4: The Mobile Device Proficiency Questionnaire (MDPQ) is a strong candidate, as it is one of the few instruments validated with older adults that measures all five competence areas of the European Digital Competence (DigComp) Framework, including the often-neglected areas of "digital content creation" and "safety" [26]. For research focused on the Chinese context, a newly developed and validated four-factor scale measuring Basic Technology Literacy, Communication Literacy, Problem-Solving Literacy, and Security Literacy offers a culturally tailored alternative [27].

Troubleshooting Common Intervention Challenges

Challenge: High Attrition Rates in Rural Digital Literacy Programs.

- Diagnosis: Potential causes include lack of sustained support, perceived irrelevance of training content, or insurmountable infrastructural barriers (e.g., poor home internet).

- Solution: Implement a hybrid support model that combines initial in-person training with ongoing remote assistance [13]. Co-design the curriculum with rural older adults to ensure it addresses their specific life needs, such as connecting with distant family or managing agricultural subsidies, rather than offering generic digital skills [2].

Challenge: Older Female Participants Show Resistance or Anxiety Toward Technology.

- Diagnosis: This may stem from lower prior exposure, fear of making mistakes, or technology designs that do not align with their usability preferences.

- Solution: Create single-gender, small-group learning environments facilitated by female trainers to foster psychological safety [13]. Integrate principles of trauma-informed care and universal design into training protocols to reduce anxiety and build confidence. Actively involve older women in the design and testing of interventions to ensure their needs are met [24].

Challenge: An Intervention Successfully Improves Digital Skills, But Fails to Change Health Behaviors.

- Diagnosis: The intervention may have targeted general digital literacy without a specific focus on health-related application (e-health literacy).

- Solution: Move beyond basic skills to develop digital problem-solving literacy [27]. Training should include hands-on modules for specific tasks like online health information verification, accessing telemedicine platforms, using medication management apps, and protecting personal health data online.

Quantitative Data Synthesis

Table 1: Key Quantitative Findings on Digital Literacy and Service Utilization from CLASS 2020 Data

| Metric | Finding | Source/Context |
|---|---|---|
| Overall effect of Digital Literacy on CHCS Use | Significant negative relationship | [23] |
| Disparate Impact by Literacy Dimension | | |
| - Digital Application Literacy | Positive association with use | [23] |
| - Device Operation, Information Acquisition, & Digital Social Literacy | Significant negative correlation with use | [23] |
| Older Adults' Share of Internet Users in China | 15.6% (170 million of 1.092 billion internet users) | China Internet Network Information Center (2024) [27] |
| Maternal Mortality Risk (U.S. Context) | Rural women 60% more likely to die from pregnancy-related causes vs. urban | Centers for Disease Control and Prevention (CDC) [24] |

Table 2: Research Reagent Solutions: Essential Tools for Equity-Focused Digital Literacy Research

| Tool / Reagent | Function/Description | Key Application in Equity Research |
|---|---|---|
| Mobile Device Proficiency Questionnaire (MDPQ) | Validated instrument measuring comprehensive digital skills in older adults. | Assesses all 5 DigComp areas; useful for establishing baseline disparities and measuring intervention impact across different subgroups. [26] |
| PROGRESS-Plus Equity Framework | A framework for identifying equity-relevant factors (Place of residence, Race, Occupation, Gender, etc.). | Ensures systematic collection and analysis of data on key social determinants that shape digital inclusion. Critical for studying rural-urban and gender disparities. [13] |
| DigComp Framework | European Commission's Digital Competence Framework defining 5 key areas. | Provides a standardized structure for defining digital literacy outcomes (Information, Communication, Content Creation, Safety, Problem-solving). [27] [26] |
| Four-Factor Digital Literacy Scale (China) | A culturally tailored 19-item scale for older Chinese adults. | Measures: Basic Technology, Communication, Problem-Solving, and Security Literacy. Ideal for context-specific research in China. [27] |
| Co-Design Methodologies | Participatory approaches that involve end-users in the design process. | Engages older adults, including rural and female populations, in designing interventions, ensuring relevance and addressing specific barriers. [13] [28] |

Experimental Protocols & Methodologies

Protocol for Measuring Digital Literacy with Equity Variables

Objective: To quantitatively assess digital literacy levels among a diverse sample of older adults, analyzing variation by rural/urban residence and gender.

Methodology:

- Sampling: Employ stratified random sampling to ensure adequate representation of older adults (e.g., aged ≥60) from both rural and urban areas, with balanced gender representation.

- Instrument Administration: Administer a validated scale such as the MDPQ [26] or the Four-Factor Scale for the Chinese context [27]. The mode of administration (in-person, telephone, online) should be adapted to participants' capabilities to avoid bias.

- Data Collection on PROGRESS-Plus Factors: Systematically collect equity-relevant data aligned with the PROGRESS-Plus framework [13]:

  - Place of residence: Rural/Urban classification (e.g., using zip codes or standardized definitions).

  - Gender and Sex: Self-identified gender.

  - Education: Highest educational attainment.

  - Socioeconomic Status: Income level, occupation before retirement.

  - Social Capital: Marital status, living arrangements, frequency of social contact.

- Data Analysis:

  - Calculate overall and sub-scale digital literacy scores.

  - Conduct multivariate regression analyses to determine the independent effect of rural residence and gender on digital literacy scores, while controlling for confounding variables like age, education, and socioeconomic status.

  - Report disaggregated results by place of residence and gender.
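
The analysis steps above can be sketched in Python using only the standard library. Everything in this example is an assumption made for illustration: the field names (`rural`, `female`, `age`, `edu_years`, `score`), the eight records, and the scores, which were generated from a known linear rule so that the regression output is easy to check. A real analysis would use a dedicated statistics package, include the study's own covariates, and report standard errors and confidence intervals.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical, synthetic records (1 = rural / female, 0 = urban / male).
records = [
    {"rural": 1, "female": 1, "age": 72, "edu_years": 6,  "score": 23.6},
    {"rural": 1, "female": 0, "age": 68, "edu_years": 9,  "score": 30.4},
    {"rural": 0, "female": 1, "age": 75, "edu_years": 12, "score": 34.0},
    {"rural": 0, "female": 0, "age": 65, "edu_years": 16, "score": 43.0},
    {"rural": 1, "female": 1, "age": 80, "edu_years": 3,  "score": 19.0},
    {"rural": 1, "female": 0, "age": 77, "edu_years": 6,  "score": 25.6},
    {"rural": 0, "female": 1, "age": 66, "edu_years": 9,  "score": 32.8},
    {"rural": 0, "female": 0, "age": 71, "edu_years": 12, "score": 37.8},
]


def disaggregate(rows):
    """Mean score for each (residence, gender) cell."""
    cells = defaultdict(list)
    for row in rows:
        key = ("rural" if row["rural"] else "urban",
               "female" if row["female"] else "male")
        cells[key].append(row["score"])
    return {key: mean(values) for key, values in cells.items()}


def ols(rows, predictors, outcome="score"):
    """Ordinary least squares via the normal equations (X'X) b = X'y,
    solved with Gauss-Jordan elimination and partial pivoting."""
    X = [[1.0] + [float(row[p]) for p in predictors] for row in rows]
    y = [float(row[outcome]) for row in rows]
    k = len(X[0])
    # Augmented matrix [X'X | X'y].
    A = [[sum(x[i] * x[j] for x in X) for j in range(k)]
         + [sum(x[i] * yi for x, yi in zip(X, y))]
         for i in range(k)]
    for col in range(k):
        pivot = max(range(col, k), key=lambda idx: abs(A[idx][col]))
        A[col], A[pivot] = A[pivot], A[col]
        scale = A[col][col]
        A[col] = [value / scale for value in A[col]]
        for other in range(k):
            if other != col:
                factor = A[other][col]
                A[other] = [a - factor * b for a, b in zip(A[other], A[col])]
    return dict(zip(["intercept"] + predictors, (row[k] for row in A)))


cell_means = disaggregate(records)
coefficients = ols(records, ["rural", "female", "age", "edu_years"])
for name, value in coefficients.items():
    print(f"{name}: {value:.2f}")
```

The `rural` and `female` coefficients are the adjusted differences the protocol asks for: the gap in score associated with rural residence or female gender after holding age and education constant. The cell means from `disaggregate` are the unadjusted figures to report alongside them.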

Protocol for a Co-Designed Digital Literacy Intervention

Objective: To develop and pilot a digital literacy training program tailored to the specific needs of older rural women.

Methodology:

- Formative Research (Needs Assessment):

  - Conduct focus group discussions and in-depth interviews with the target population to understand their daily challenges, current technology use, and learning preferences.

  - Identify "warm experts" (e.g., family members, community health workers) who can provide support [2].

- Co-Design Workshop:

  - Recruit a panel of 8-10 older rural women, along with 2-3 healthcare providers or community leaders.

  - Use participatory methods to map key life domains (health, social, finance) and brainstorm how digital tools could address specific pain points (e.g., booking medical appointments online, using video calls with family).

  - Collaboratively outline the training curriculum and key features of any supporting toolkits.

- Intervention Development: Translate the co-design outputs into a structured training program with accessible materials (large print, simple language, step-by-step pictorial guides). The program should prioritize skills identified as most relevant.

- Pilot Implementation and Evaluation:

  - Deliver the program in a convenient, trusted community setting.

  - Use a mixed-methods pre-post evaluation: quantitative surveys (using a tool from Table 2) to measure skill changes, and qualitative interviews to assess changes in confidence, self-efficacy, and practical application.
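
For the quantitative half of the pre-post evaluation, one common choice is a paired comparison of each participant's score before and after training. The sketch below assumes matched pre/post totals on the same instrument. The function name `paired_change` and the scores are hypothetical, and with a small pilot sample the result is better read as descriptive than confirmatory.

```python
from math import sqrt
from statistics import mean, stdev


def paired_change(pre, post):
    """Mean pre-to-post change and the paired t statistic,
    t = mean(d) / (sd(d) / sqrt(n)), with n - 1 degrees of freedom."""
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need matched pre/post scores for at least two participants")
    diffs = [after - before for before, after in zip(pre, post)]
    n = len(diffs)
    standard_error = stdev(diffs) / sqrt(n)
    if standard_error == 0:
        raise ValueError("all participants changed by the same amount; t is undefined")
    mean_change = mean(diffs)
    return {"n": n, "mean_change": mean_change,
            "t": mean_change / standard_error, "df": n - 1}


# Hypothetical pilot scores for eight participants.
pre_scores = [12, 15, 9, 20, 14, 11, 17, 13]
post_scores = [16, 18, 14, 22, 19, 13, 21, 18]
result = paired_change(pre_scores, post_scores)
print(result)
```

The t statistic would then be compared against a t distribution with `df` degrees of freedom; a nonparametric alternative such as the Wilcoxon signed-rank test may suit ordinal questionnaire data better.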

Visualizations of Conceptual Pathways

[Figure: Rural Disparity Pathways]

[Figure: Gender-Specific Barrier Pathways]

Intervention Frameworks and Implementation Strategies for Digital Inclusion

For researchers designing interventions to overcome digital literacy barriers in older adults, the choice between face-to-face instruction and digital self-guided programs is a critical methodological consideration. This section outlines the specific advantages, challenges, and effective applications of each modality, providing a structured framework for developing and troubleshooting research protocols. It starts from the understanding that digital literacy is not merely a technical skill but a complex competency shaped by social-cognitive factors, technological self-efficacy, and age-related barriers such as anxiety, fear of online dangers, and difficulty with rapidly evolving interfaces [29].

The shift of essential health and social services to digital platforms has made digital literacy a key determinant of health and equity for older adults [16]. Consequently, the design of educational interventions requires careful deliberation of modality to ensure both efficacy and inclusion. This guide provides the foundational tools for such decision-making.

Troubleshooting Guides and FAQs for Research Design

This section addresses common experimental and implementation challenges in a question-and-answer format, providing actionable guidance for researchers.

Frequently Asked Questions

Q: What are the primary socio-technical barriers that affect modality choice for older adults?

- A: Research identifies several key barriers. Anxiety often stifles exploration, with users fearing they will "break" their devices [29]. Perceived danger online, such as fear of fraud and identity theft, can create resistance to independent digital learning [29]. Furthermore, rapidly changing systems and interfaces lead to confusion, while physical challenges, such as memory issues, can impact the retention of new skills [17]. These barriers often make initial, high-touch interventions more effective.

Q: When is face-to-face instruction the most effective modality?

- A: Instructor-led training (ILT) is superior when the learning requires high levels of personalization, immediate feedback, and hands-on practice [30]. It is particularly crucial for complex topics where strong knowledge retention is required for the intervention to have impact [30]. Furthermore, the social context of ILT can directly combat the isolation some older adults experience, thereby increasing motivation and engagement [31] [30].

Q: What are the main challenges of deploying self-guided digital programs?

- A: The most significant challenges include low completion rates, with studies showing only 5% to 15% of learners finish self-paced courses [30]. Learners also risk misinterpreting content without immediate instructor clarification and may experience a feeling of isolation due to a lack of peer support [30]. These programs also require learners to possess a greater degree of self-motivation and time-management skills [31].
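
The completion figures above have a direct consequence for recruitment planning. As a rough sketch, the enrollment needed to end up with a target number of completers is the target divided by the expected completion rate, rounded up. The function name and the target of 30 completers are hypothetical, and the 5% to 15% range describes self-paced courses generally, so a supported study cohort may behave differently.

```python
def required_enrollment(target_completers, completion_pct):
    """Smallest enrollment expected to yield `target_completers` finishers.

    `completion_pct` is a whole-number percentage (e.g. 15 for 15%), which
    keeps the arithmetic in integers and avoids floating-point rounding.
    """
    if target_completers < 0 or not 0 < completion_pct <= 100:
        raise ValueError("completion_pct must be in (0, 100] and target non-negative")
    # Integer ceiling of target_completers * 100 / completion_pct.
    return (target_completers * 100 + completion_pct - 1) // completion_pct


# Hypothetical target of 30 completers at both ends of the cited range.
for pct in (5, 15):
    print(f"{pct}% completion -> enroll {required_enrollment(30, pct)}")
```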

Q: How can we support skill retention and sustainability after the initial intervention?

- A: Sustaining digital literacy requires ongoing support. Research from the Home Connect program indicates that virtual Q&A sessions are a highly effective model for providing continued assistance, allowing learners to resolve new challenges as they arise [17]. This approach helps transition learners from a "script-based" learning style to developing more flexible problem-solving skills and technological self-efficacy [29].

Troubleshooting Common Research Implementation Issues

Problem: High Attrition Rates in Self-Guided Program Cohort

- Investigation: Check participant engagement metrics. Are there drop-off points at specific technical tasks?

- Solution: Implement the intervention using a hybrid approach. Supplement self-guided modules with structured, periodic check-ins (virtual or in-person) to answer questions and provide encouragement. Break content into bite-sized modules of 5-15 minutes and use automated reminders to encourage progress [30].
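
The investigation step can be made concrete by counting how many participants completed each module in sequence and flagging the step with the steepest loss. The module names and counts below are invented for illustration; real engagement logs would come from whatever learning platform the study uses.

```python
def dropoff_by_module(completed_counts):
    """Given ordered (module_name, participants_completed) pairs, return the
    participants lost on entering each module and the proportional drop
    relative to the previous module."""
    drops = []
    for (_, prev_n), (name, n) in zip(completed_counts, completed_counts[1:]):
        lost = prev_n - n
        drops.append({
            "module": name,
            "lost": lost,
            "drop_rate": lost / prev_n if prev_n else 0.0,
        })
    return drops


# Hypothetical completion counts for a four-module self-guided program.
modules = [
    ("Turning on the tablet", 40),
    ("Connecting to Wi-Fi", 36),
    ("Creating an account", 22),
    ("Joining a video visit", 19),
]
drops = dropoff_by_module(modules)
worst = max(drops, key=lambda d: d["drop_rate"])
print(f"Largest drop-off at: {worst['module']} ({worst['drop_rate']:.0%} lost)")
```

A module flagged this way is a natural place to schedule one of the periodic check-ins, or to split the content into smaller steps.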

Problem: Participants Struggle with Generalizing Skills Across Different Devices/Platforms

- Investigation: Assess the study's instructional design. Is it focused on procedural steps for one device, or does it teach abstract concepts?

- Solution: Ground instruction in Social Cognitive Theory. Tutors should model the process of exploration and problem-solving rather than simply supplying rote answers. Encourage learners to "drive" their own devices during sessions, and demonstrate how the same cloud-based service (e.g., email) functions across a PC, tablet, and smartphone to build conceptual understanding [29].

Problem: Participant Anxiety is Impeding Willingness to Explore

- Investigation: Use pre-intervention surveys to gauge baseline computer self-efficacy and anxiety levels.

- Solution: In face-to-face settings, tutors should explicitly model coping behaviors when they encounter an unknown issue, showing that it is normal to not have all the answers and demonstrating safe recovery strategies [29]. For self-guided programs, include reassuring, simple instructions on how to "undo" actions or reset to a known state.

Quantitative Data Comparison of Instructional Modalities

The tables below summarize key quantitative and qualitative findings from the literature to inform experimental design.

Table 1: Comparative Analysis of Modality Effectiveness

| Metric | Face-to-Face Instruction | Digital Self-Guided Programs |
|---|---|---|
| Completion Rates | Typically high due to structured schedule and social accountability [30]. | Generally lower; one source reports online course completion rates of only 5-15% [30]. |
| Skill Retention & Digital Literacy Gains | Effective for complex skill retention due to immediate feedback [30]. | Enables repetition, which can improve retention [30]. One study showed statistically significant improvements (p < 0.001) with AI-driven tools [32]. |
| Participant Engagement | High cognitive, emotional, and behavioral engagement facilitated by instructor adaptation [30]. | Can be high with interactive, AI-driven tools (e.g., engagement metrics significant at p < 0.01), but requires self-discipline [32] [31]. |
| Best-Suited Content Type | Complex, hands-on topics; practical skills training [31] [30]. | Primarily theoretical knowledge; compliance and policy training [30]. |
| Scalability & Cost | Higher cost due to instructor time, venues, and materials; scales poorly [30]. | Highly scalable and cost-efficient after initial development [31] [30]. |

Table 2: Quantified Barriers and Enablers for Older Adults' Digital Literacy

| Factor | Quantitative/Qualitative Evidence | Impact on Modality Choice |
|---|---|---|
| Sustained Skill Utilization | 63% of learners showed a growing pattern of use with ongoing Q&A support; others showed decreasing or non-sustained use without it [17]. | Highlights the critical need for ongoing support mechanisms in any modality. |
| Technical Troubles | A primary barrier cited by learners, including unstable Wi-Fi and confusing interface changes [17]. | Supports the initial use of face-to-face support to build foundational confidence for later self-guided learning. |
| Physical Challenges | Memory issues are a significant hurdle for skill retention [17]. | Favors modalities that offer repetition and easy reference materials, and where instructors can patiently adapt pacing. |
| Anxiety & Self-Efficacy | A common concern is "breaking" devices, stifling exploration [29]. | Face-to-face tutoring is optimal for initial confidence-building through direct modeling and reassurance [29]. |
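
The guidance in the two tables above can be condensed into a rough screening heuristic for early protocol planning. The sketch below is an illustrative simplification, not a validated decision rule: the function name, the three yes/no inputs, and the way they are combined are all assumptions, and ongoing support remains necessary whichever modality is chosen.

```python
def suggest_modality(high_anxiety, complex_hands_on_content, needs_scale):
    """Rule-of-thumb starting point for modality choice.

    Returns "face-to-face", "self-guided", or "hybrid".
    """
    # Anxiety and complex, hands-on content both favor high-touch delivery.
    needs_high_touch = high_anxiety or complex_hands_on_content
    if needs_high_touch and needs_scale:
        # Begin in person to build confidence, then shift to self-guided modules.
        return "hybrid"
    if needs_high_touch:
        return "face-to-face"
    return "self-guided"


# Hypothetical scenario: anxious learners in a program that must reach many sites.
print(suggest_modality(high_anxiety=True, complex_hands_on_content=False, needs_scale=True))
```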

Experimental Protocol and Workflow for Intervention Design

The following diagram outlines a structured methodology for developing and testing digital literacy interventions, based on established research frameworks.

[Diagram: Workflow for Digital Literacy Intervention Design]

The Scientist's Toolkit: Key Research Reagent Solutions

This table details essential conceptual "reagents" and methodological tools for designing robust digital literacy interventions for older adults.

Table 3: Essential Research Reagents and Methodologies

| Research "Reagent" | Function & Explanation in Experimental Design |
|---|---|
| Social Cognitive Theory (SCT) | A theoretical framework that posits learning occurs in a social context through observation and modeling. It is crucial for designing interventions that boost self-efficacy and problem-solving skills in older learners, moving beyond rote memorization [29]. |
| Unified Theory of Acceptance and Use of Technology 2 (UTAUT2) | A model used to evaluate technology adoption and use. Its factors (e.g., Performance Expectancy, Effort Expectancy, Social Influence) provide a structured way to analyze media portrayals of older adults' digital literacy and design targeted interventions [16]. |