Mobile Ecological Momentary Assessment (mHealth) for Cognitive Monitoring: A Comprehensive Guide for Researchers and Clinicians

Abstract

Ecological Momentary Assessment (EMA) delivered via mobile health (mHealth) platforms is revolutionizing cognitive monitoring by enabling real-time, ecologically valid data collection in naturalistic environments. This article provides a comprehensive overview for researchers and drug development professionals, exploring the foundations of cognitive EMA and its application across diverse populations, including older adults at risk for dementia and breast cancer survivors. It details methodological considerations for designing robust studies, addresses critical challenges such as participant compliance and data missingness, and synthesizes evidence on the feasibility, reliability, and validity of these digital tools. The discussion extends to the integration of wearable sensors and artificial intelligence, offering insights into future directions for validating digital biomarkers and integrating mHealth into large-scale clinical and biomedical research.

Understanding Mobile Cognitive EMA: Foundations and Core Principles for Real-World Assessment

Definition and Conceptual Framework

Cognitive Ecological Momentary Assessment (EMA) is a methodology that uses mobile health (mHealth) technologies to collect real-time cognitive data from individuals in their natural environments. This approach involves repeated, brief sampling of cognitive performance and related contextual factors through smartphone applications, enabling researchers to capture dynamic cognitive processes as they unfold in daily life [1] [2].

Unlike traditional neuropsychological assessments conducted in clinical settings, cognitive EMA leverages the ubiquity of mobile devices to assess cognitive functioning with enhanced ecological validity while minimizing recall bias and the contextual limitations of laboratory-based testing [2] [3]. The approach is particularly valuable for detecting subtle cognitive fluctuations in aging populations and in conditions such as neurodegenerative disease and cancer-related cognitive impairment [1] [3].

Key Quantitative Evidence and Validation Studies

Table 1: Feasibility and Adherence Metrics Across Cognitive EMA Studies

| Population | Sample Size | Protocol Duration | Adherence Rate | Primary Cognitive Domains Assessed | Key Findings | Citation |

|---|---|---|---|---|---|---|

| Older Adults (Cognitively Normal vs. Very Mild Dementia) | 417 (380 CN, 37 VMD) | Up to 4x/day for 1 week | Not specified | Processing speed, Working memory, Associative memory | Minimal environmental distraction effects overall; location/social context had small, domain-specific impacts, more apparent in VMD | [1] |

| Breast Cancer Survivors | 105 | Once every other day for 8 weeks (28 sessions) | 87.3% | Working memory, Executive functioning, Processing speed, Memory | Strong test-retest reliability (ICC>0.73); moderate-strong convergent validity (\|r\| = 0.23-0.61) with traditional measures | [3] |

| Metastatic Breast Cancer Patients | 51 | Once daily for 4 weeks (28 sessions) | High (exact rate not specified) | Working memory, Executive functioning, Processing speed, Memory | Demonstrated feasibility, reliability, and validity in metastatic cancer population | [3] |

Table 2: Impact of Environmental Factors on Cognitive EMA Performance in Older Adults

| Environmental Factor | Cognitive Domain | Effect on Cognitively Normal | Effect on Very Mild Dementia | Statistical Significance |

|---|---|---|---|---|

| Testing Location (Away vs. Home) | Visuospatial Working Memory | Worse performance when away (P=.001) | No significant effect (P=.36) | Differs by cognitive status |

| Testing Location (Away vs. Home) | Processing Speed | No difference (P=.88) | Slightly faster when not at home (P=.04) | Differs by cognitive status |

| Social Context (With Others vs. Alone) | Processing Speed Variability | Minimal effect | Increased variability (P=.04) | Significant for VMD only |

| Most Distracting Environment (Away + With Others) | Visuospatial Working Memory | Minimal effect | Larger performance differences | Significant for VMD only |

| Self-Reported Interruptions | Overall Cognitive Performance | Minimal residual effects after removing interrupted sessions | More apparent effects after removing interrupted sessions (12.4% of sessions) | Effects remain after exclusion |

Experimental Protocols and Methodologies

Protocol 1: Ambulatory Research in Cognition (ARC) for Aging Populations

Objective: To examine the impact of environmental distractions on unsupervised digital cognitive assessments in older adults with normal cognition and very mild dementia [1].

Population: Adults classified as cognitively normal (CDR 0) or as having very mild dementia (CDR 0.5) on the Clinical Dementia Rating (CDR) scale [1].

EMA Protocol:

- Platform: Custom-built smartphone application (iOS/Android)

- Frequency: Up to 4 assessments daily for one week

- Assessment Window: 2-hour completion window per assessment

- Cognitive Measures:

  - Symbols Task: Processing speed measure with 12 trials assessing abstract shape matching (20-60 seconds)

  - Prices Task: Associative memory with learning and recognition phases for item-price pairs (approximately 60 seconds)

  - Grids Task: Spatial working memory assessment

- Contextual Data Collection:

  - Current location (home/not home)

  - Social context (alone/with others)

  - Self-reported interruptions post-assessment

- Statistical Analysis: Mixed-effect modeling to test interactions between location, social context, and clinical status
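One way to write down a mixed-effects model of this kind is sketched below. The notation is an illustrative assumption and is not taken from the cited study: $y_{ij}$ is the task score of participant $i$ at session $j$; $\mathrm{Away}_{ij}$ and $\mathrm{Others}_{ij}$ are 0/1 indicators for testing away from home and in the presence of others; $\mathrm{VMD}_i$ is a 0/1 indicator for very mild dementia; and $u_i$ is a participant-level random intercept.

$$
\begin{aligned}
y_{ij} ={}& \beta_0 + \beta_1\,\mathrm{Away}_{ij} + \beta_2\,\mathrm{Others}_{ij} + \beta_3\,\mathrm{VMD}_i \\
&+ \beta_4\,(\mathrm{Away}_{ij} \times \mathrm{VMD}_i) + \beta_5\,(\mathrm{Others}_{ij} \times \mathrm{VMD}_i) + \beta_6\,(\mathrm{Away}_{ij} \times \mathrm{Others}_{ij}) \\
&+ \beta_7\,(\mathrm{Away}_{ij} \times \mathrm{Others}_{ij} \times \mathrm{VMD}_i) + u_i + \varepsilon_{ij}, \\
& u_i \sim \mathcal{N}(0, \sigma_u^2), \qquad \varepsilon_{ij} \sim \mathcal{N}(0, \sigma^2)
\end{aligned}
$$

Under this parameterization, $\beta_4$ and $\beta_5$ express how much the location and social-context effects differ between clinical groups, and $\beta_7$ captures the combined "away and with others" condition by group.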

Protocol 2: NeuroUX for Cancer-Related Cognitive Impairment

Objective: To establish feasibility, reliability, and validity of smartphone-administered cognitive EMA in breast cancer survivors [3].

Population: Breast cancer survivors (stage 0-III) who completed primary treatment within the previous 6 years.

EMA Protocol:

- Platform: NeuroUX smartphone application

- Frequency: Once every other day for 8 weeks (28 total assessments)

- Assessment Duration: Approximately 10 minutes per assessment

- Notification System: Weblinks sent by text message, with reminders at 3 h and 5 h if the assessment remained incomplete

- Cognitive Measures:

  - Self-Report Items:

    - Cognitive symptoms severity (0-7 scale)

    - Confidence in cognitive abilities (0-7 scale)

  - Objective Cognitive Tests:

    - Working Memory: N-Back (2-back, 12 trials) and CopyKat tests

    - Executive Functioning: Color Trick and Hand Swype tests

    - Additional tests alternating throughout protocol

- Baseline Assessments:

  - FACT-Cog for self-reported cognitive function

  - BrainCheck computerized neuropsychological battery

- Post-Study Evaluation: Satisfaction and feedback surveys

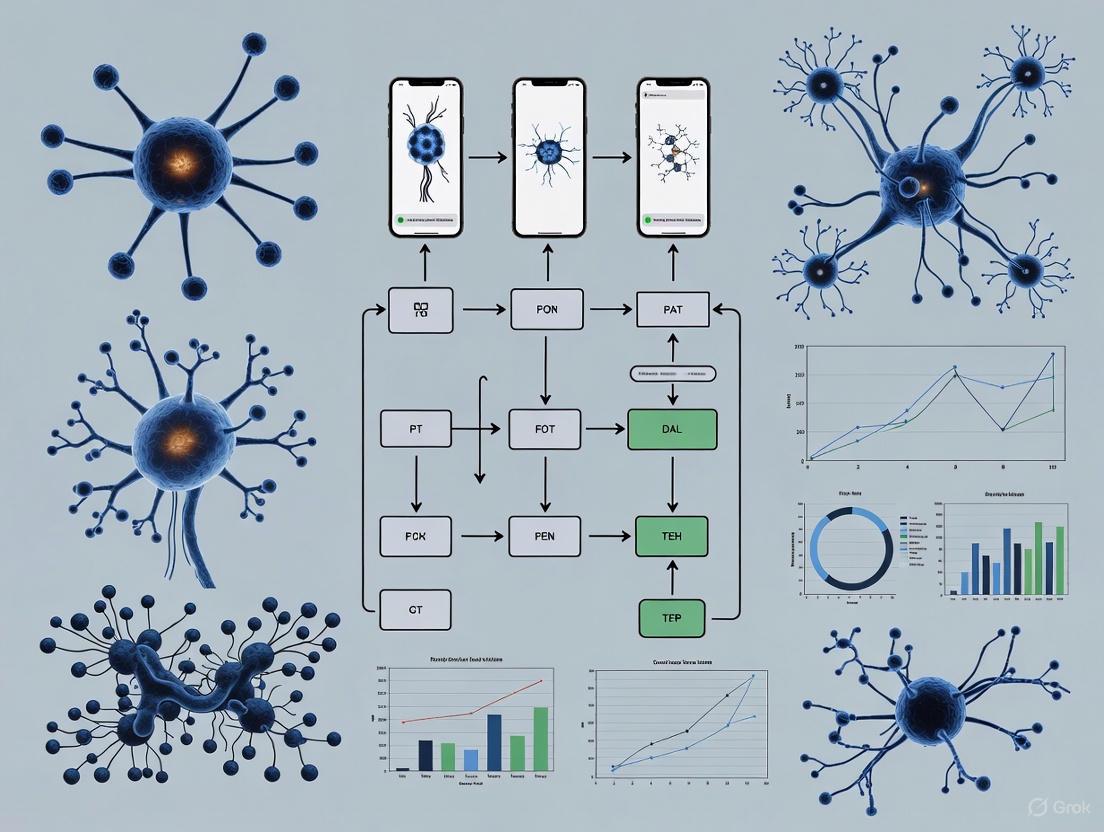

Signaling Pathways and Workflow Diagrams

Cognitive EMA Implementation and Analysis Workflow

This diagram illustrates the comprehensive workflow for implementing and analyzing cognitive EMA studies, from initial design through final validation.

Statistical Analysis Framework for EMA Data

Key Considerations:

- Multilevel Data Structure: EMA data are nested within individuals, requiring specialized statistical approaches [4]

- Missing Data: Non-response is inevitable in EMA research and should be accounted for analytically [5]

- Auto-correlation: Observations are typically correlated with delayed copies of themselves [5]
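To make the auto-correlation point concrete, the sketch below computes the sample autocorrelation of one participant's session scores at a given lag. It is a minimal illustration using only the Python standard library; the function name and the example reaction times are hypothetical, and the calculation assumes the sessions are roughly evenly spaced with no missing observations.

```python
from statistics import fmean


def lag_autocorrelation(series, lag=1):
    """Sample autocorrelation of a numeric series at the given lag."""
    n = len(series)
    if lag < 1 or n <= lag:
        raise ValueError("series must be longer than the lag")
    mean = fmean(series)
    denom = sum((x - mean) ** 2 for x in series)
    if denom == 0:
        raise ValueError("series has zero variance")
    # Cross-products between each observation and the one `lag` sessions earlier.
    numer = sum((series[t] - mean) * (series[t - lag] - mean) for t in range(lag, n))
    return numer / denom


# Hypothetical median reaction times (ms) from eight consecutive sessions.
rts = [910, 905, 880, 870, 860, 850, 845, 830]
r1 = lag_autocorrelation(rts, lag=1)
print(f"lag-1 autocorrelation: {r1:.2f}")
```

A value well above zero, as in this drifting series, indicates that adjacent sessions are not independent, which is why models that ignore the within-person correlation tend to understate uncertainty.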

Recommended Analytical Approaches:

- Linear Mixed Models (LMM): For continuous outcome variables, accounting for within-person correlations [4]

- Generalized Linear Mixed Models (GLMM): For categorical, ordinal, or count data [4]

- Feature Extraction Techniques:

- Central tendency and variability measures

- Trend analysis using regression approaches

- Periodicity analysis via Fourier analysis

- Rolling statistics to identify patterns [5]
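The feature-extraction ideas listed above can be sketched for a single participant's score series as follows. This is an illustrative standard-library example, not the implementation of any cited package; the function names, the window length, and the example scores are assumptions, and periodicity (Fourier) features are omitted for brevity.

```python
from statistics import fmean, stdev


def linear_trend(values):
    """Ordinary least-squares slope of values against session index 0..n-1."""
    n = len(values)
    x_mean = (n - 1) / 2
    y_mean = fmean(values)
    sxx = sum((i - x_mean) ** 2 for i in range(n))
    sxy = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values))
    return sxy / sxx


def rolling_mean(values, window):
    """Mean of each consecutive run of `window` observations."""
    return [fmean(values[i - window + 1 : i + 1]) for i in range(window - 1, len(values))]


def extract_features(values, window=3):
    """Central tendency, variability, trend, and rolling statistics for one series."""
    if len(values) < max(2, window):
        raise ValueError("not enough observations for the requested features")
    return {
        "mean": fmean(values),
        "sd": stdev(values),
        "slope_per_session": linear_trend(values),
        "rolling_mean": rolling_mean(values, window),
    }


# Hypothetical accuracy scores from six consecutive sessions.
scores = [10, 12, 11, 13, 15, 14]
features = extract_features(scores)
print(features)
```

Each participant's feature dictionary can then serve as a row in a between-person analysis, for example comparing slopes across clinical groups.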

Sample Size Considerations: Increasing the number of participants generally contributes more to statistical power than increasing the number of responses per participant [4].
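One common way to see why is the design-effect approximation for clustered data, shown here as an illustration rather than as the method of the cited source. It assumes $N$ participants who each contribute $k$ responses, with an intraclass correlation $\rho$ between responses from the same person, and it applies to between-person comparisons:

$$
n_{\text{eff}} = \frac{N k}{1 + (k - 1)\,\rho}
$$

For example, with $\rho = 0.7$, a design with $N = 50$ and $k = 28$ yields $n_{\text{eff}} = 1400 / 19.9 \approx 70$, whereas $N = 100$ and $k = 14$ yields $n_{\text{eff}} = 1400 / 10.1 \approx 139$, even though both designs collect 1400 responses in total.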

Research Reagent Solutions and Essential Materials

Table 3: Essential Research Tools for Cognitive EMA Implementation

| Tool Category | Specific Examples | Function/Purpose | Key Features |

|---|---|---|---|

| EMA Platforms | NeuroUX, Ambulatory Research in Cognition (ARC) | Delivery of cognitive tests and collection of momentary data | Customizable sampling schedules, integrated cognitive tasks, real-time data capture |

| Cognitive Task Batteries | Symbols Task, Prices Task, Grids Task, N-Back, CopyKat | Assessment of specific cognitive domains | Brief administration time, alternate forms, sensitivity to fluctuation |

| Clinical Characterization Tools | Clinical Dementia Rating (CDR), FACT-Cog, BrainCheck | Participant characterization and validation | Gold-standard clinical assessment, comparison with traditional measures |

| Statistical Analysis Tools | R, Python, specialized packages (emaph) | Analysis of intensive longitudinal data | Multilevel modeling capabilities, time-series analysis, feature extraction |

| Data Collection Infrastructure | REDCap, IRIS platform | Secure data management and regulatory compliance | Electronic data capture, participant management, regulatory submission support |

Implementation Considerations for Regulatory Applications

For Drug Development Professionals:

- Early Regulatory Engagement: Utilize scientific advice procedures and qualification of novel methodologies pathways [6]

- Psychometric Validation: Establish reliability, validity, and sensitivity of cognitive EMA measures for specific populations [3]

- Contextual Factor Control: Account for environmental distractions and testing conditions that may influence cognitive performance [1]

- Compliance with Regulatory Standards: Follow established guidelines for electronic data collection and patient-reported outcomes

Cognitive EMA represents a transformative methodology for capturing real-world cognitive functioning in clinical research and drug development. When properly validated and implemented, it offers enhanced ecological validity, reduced recall bias, and the ability to detect subtle cognitive fluctuations that may be missed by traditional assessment methods.

Mobile Ecological Momentary Assessment (mHealth EMA) represents a paradigm shift in cognitive and health monitoring, moving assessments from artificial laboratory settings into the natural flow of participants' daily lives. This methodology involves repeated sampling of participants' cognitive performance, behaviors, and experiences in real-time within their natural environments [1] [7]. By leveraging ubiquitous smartphone technology, researchers can capture dynamic fluctuations in cognitive function with unprecedented ecological validity while minimizing the distortions of retrospective recall [8]. This approach is particularly valuable for tracking subtle cognitive changes in aging populations and those with neurodegenerative conditions, providing rich datasets that reveal both within-day and between-day fluctuations [1] [9]. The integration of mHealth EMA into clinical research and drug development offers powerful advantages for measuring intervention effects and understanding real-world cognitive functioning.

Quantitative Evidence: Validating the mHealth EMA Advantage

Feasibility and Compliance Metrics Across Populations

Table 1: Compliance and Feasibility Metrics in mHealth EMA Studies

| Study Population | Sample Size | Study Duration | Compliance/Response Rate | Key Feasibility Findings |

|---|---|---|---|---|

| Older Adults (Cognitively Normal & Very Mild Dementia) [1] | 417 total participants | 1 week (up to 4x daily) | Adherence rate not specified; 87.6% of sessions had no self-reported interruptions (12.4% were interrupted) | Minimal effects of environmental distractions on performance; suitable for unsupervised testing |

| Transdiagnostic Dementia Sample [9] | 12 participants | 10 days (7x daily + end-of-day survey) | 80% compliance rate | No dropouts; low burden reported; mean completion time: 2 min 10 sec for momentary questionnaires |

| Cross-Study Analysis (Multiple Clinical Studies) [8] | 454 participants across 9 studies | 2 weeks to 16 months | 79.95% average response rate | 88.37% of prompted sessions fully completed; higher responsiveness evenings (82.31%) and weekdays (80.43%) |

Cognitive Performance Data Across Testing Environments

Table 2: Environmental Effects on Cognitive Performance in Unsupervised Digital Assessments [1]

| Cognitive Domain | Participant Group | Testing Environment | Performance Impact | Statistical Significance |

|---|---|---|---|---|

| Visuospatial Working Memory | Cognitively Normal Older Adults | Home vs. Away | Better performance at home | P=.001 |

| Visuospatial Working Memory | Very Mild Dementia | Home vs. Away | No significant effect of location | P=.36 |

| Processing Speed | Cognitively Normal Older Adults | Home vs. Away | No difference between locations | P=.88 |

| Processing Speed | Very Mild Dementia | Not at Home | Slightly faster when not at home | P=.04 |

| Processing Speed | All Participants | Presence of Others | Increased variability in processing speed | P=.04 |

Experimental Protocols and Methodologies

Protocol: Ambulatory Research in Cognition (ARC) Smartphone Assessment

Platform Development: The ARC platform is a custom-built smartphone application available for both iOS and Android devices. Participants use either their personal smartphones or study-provided devices [1].

Assessment Schedule:

- Notifications sent via native iOS or Android systems pseudorandomly

- Participants complete assessments within 2-hour response windows

- Testing occurs up to 4 times daily for one week

- Three cognitive tasks administered per session [1]

Cognitive Task Battery:

Symbols Task (Processing Speed)

- Procedure: Participants view 3 pairs of abstract shapes and select which of 2 possible responses matches 1 of the 3 targets

- Trials: 12 trials per assessment

- Duration: 20-60 seconds

- Measures: Median reaction time (RT) for correct trials, coefficient of variation (CoV) for RT variability [1]
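The two Symbols measures can be computed from trial-level data along the following lines. This is a sketch under stated assumptions: the trial format, the function name, and the choice to compute the coefficient of variation on correct trials only are illustrative and are not confirmed by the source.

```python
from statistics import fmean, median, stdev


def symbols_summary(trials):
    """Summarize one Symbols session.

    trials: list of (rt_ms, correct) tuples, one per trial.
    Returns None when fewer than two correct trials are available.
    """
    correct_rts = [rt for rt, correct in trials if correct]
    if len(correct_rts) < 2:
        return None
    return {
        "median_rt_ms": median(correct_rts),
        # Coefficient of variation: SD of RT divided by mean RT (assumed: correct trials only).
        "rt_cov": stdev(correct_rts) / fmean(correct_rts),
        "n_correct": len(correct_rts),
    }


# Hypothetical session with three correct trials and one error.
session = [(1000, True), (1200, True), (800, True), (5000, False)]
summary = symbols_summary(session)
print(summary)
```

Using the median rather than the mean limits the influence of occasional very slow responses, which are common in unsupervised testing.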

Prices Task (Associative Memory)

- Learning Phase: 10 item-price pairs presented for 3 seconds each

- Recognition Phase: Participants select correct price from two options when presented with items

- Duration: Approximately 60 seconds

- Measures: Error rate during recognition phase [1]

Grids Task (Spatial Working Memory)

- Procedure: Detailed protocol not fully described in available sources

- Context: Part of comprehensive cognitive assessment battery [1]

Environmental Context Assessment:

- Pre-assessment: Participants report current location and social surroundings

- Post-assessment: Participants report any interruptions during testing

- Location classification: Home vs. not home

- Social context: Alone vs. with others [1]

Protocol: High-Intensity Experience Sampling in Dementia

Co-Design Methodology:

- Protocol developed in collaboration with dementia stakeholders

- Personalized adaptations implemented based on individual capabilities [9]

Assessment Structure:

- Duration: 10-day intensive sampling period

- Frequency: 7 daily notifications assessing thoughts and affect

- Additional Measures: End-of-day questionnaire on daily life satisfaction and meaning

- Extensions: Data collection period extended by 1-2 days for some participants to ensure adequate sampling [9]

Compliance Support Strategies:

- Partner notification system: For one participant, their partner received synchronized notifications to provide reminders

- Flexible scheduling: Adaptations made to accommodate individual needs and capabilities [9]

Feasibility Assessment:

- Participation rates tracked from initial contact through study completion

- Compliance rates calculated as completed measurements out of total possible

- Subjective participation experiences assessed through post-study evaluations

- Completion times recorded for each assessment type [9]
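The compliance calculation described above (completed measurements out of total possible) can be sketched as follows. The function name, participant identifiers, and counts are hypothetical; the example assumes 7 momentary prompts per day over 10 days (70 possible), with a larger denominator for a participant whose sampling period was extended.

```python
def compliance_summary(counts):
    """Per-participant and pooled compliance.

    counts: dict mapping participant id -> (completed, scheduled).
    """
    per_participant = {}
    total_completed = 0
    total_scheduled = 0
    for pid, (completed, scheduled) in counts.items():
        if scheduled <= 0 or not 0 <= completed <= scheduled:
            raise ValueError(f"invalid counts for {pid}")
        per_participant[pid] = completed / scheduled
        total_completed += completed
        total_scheduled += scheduled
    return {
        "per_participant": per_participant,
        "pooled": total_completed / total_scheduled,
    }


# Hypothetical counts: p2 had the sampling period extended by one day (77 prompts).
example = compliance_summary({"p1": (56, 70), "p2": (63, 77)})
print(example)
```

Reporting both the pooled rate and the per-participant distribution helps reveal whether a high average hides a few participants with very low compliance.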

Visualizing mHealth EMA Workflows

Research Study Implementation Workflow

Cognitive EMA Data Collection Process

Table 3: Essential Resources for mHealth EMA Cognitive Monitoring Research

| Resource Category | Specific Tool/Platform | Primary Function | Key Features/Applications |

|---|---|---|---|

| mHealth Platforms | m-Path [9] | User-friendly ESM platform and app | Flexible ESM implementation; suitable for clinical populations including dementia |

| Cognitive Assessment | ARC Platform [1] | Custom smartphone cognitive assessment | Processing speed, working memory, and associative memory tasks; designed for older adults |

| Usability Assessment | mHealth App Usability Questionnaire (MAUQ) [10] | Standardized usability evaluation | Measures ease of use, interface satisfaction, and usefulness; 21-item scale |

| Clinical Characterization | Clinical Dementia Rating (CDR) [1] | Dementia staging and participant classification | Standardized clinical assessment; essential for participant stratification in cognitive studies |

| Compliance Monitoring | Smartphone Notification Systems [8] | Participant prompting and response tracking | Native iOS/Android integration; configurable reminder schedules; response timing metadata |

| Data Analysis | Mixed-Effects Modeling [1] | Statistical analysis of longitudinal EMA data | Handles nested data structure; accounts for within-person and between-person variability |

The implementation of mobile Ecological Momentary Assessment in cognitive monitoring research offers substantial methodological advantages through enhanced ecological validity, reduced recall bias, and rich high-frequency data collection. Evidence from recent studies demonstrates that this approach is feasible across diverse populations, including older adults with cognitive impairment [1] [9]. The structured protocols and resources outlined provide researchers with practical frameworks for implementing robust mHealth EMA studies. As technology continues to evolve, these methods offer increasingly powerful tools for capturing real-world cognitive functioning and assessing intervention effectiveness in natural environments, ultimately bridging the gap between laboratory findings and everyday cognitive performance.

This application note details protocols for the mobile Ecological Momentary Assessment (mEMA) of three core cognitive domains—Processing Speed, Working Memory, and Associative Memory—which are foundational to higher-order executive function and general cognitive ability (g) [11]. The escalating global prevalence of age-related cognitive impairment and dementia underscores the critical need for scalable, ecologically valid monitoring tools [12]. mHealth platforms, particularly mEMA, enable high-frequency, real-world cognitive assessment, overcoming the limitations of traditional lab-based testing by capturing data within an individual's natural environment [13] [14]. This approach facilitates the detection of subtle, day-to-day fluctuations in cognitive performance, providing sensitive metrics for tracking disease progression or intervention efficacy in clinical research and drug development [15].

The scientific rationale for focusing on these domains is robust. Research confirms that Processing Speed, Working Memory, and Associative Learning each contribute significant unique variance to models of general intelligence, indicating they are separable mechanistic substrates of g [11]. Furthermore, task-specific learning—gains in performance from repeated practice on a task even when the to-be-learned material changes—is a crucial mechanism in cognitive training and is predicted by individual differences in processing speed and working memory in older adults [16]. Assessing these domains via mEMA allows researchers to capture both baseline ability and dynamic learning effects over time.

Core Cognitive Domain Assessments & Protocols

The following section outlines the standardized protocols for assessing each target cognitive domain. Adherence to these protocols ensures data consistency and reliability for longitudinal monitoring and multi-site trials.

Table 1: Core Cognitive Domains and Their mEMA Assessment Protocols

| Cognitive Domain | mEMA Task Prototype | Key Independent Variable(s) | Primary Dependent Variable(s) | mEMA Sampling Cadence |

|---|---|---|---|---|

| Processing Speed | Pattern Comparison / Symbol Matching | Stimulus complexity; Number of items | Correct responses per minute; Mean reaction time for correct items [11] | 2-3 times daily, randomized within 4-hour blocks [14] |

| Working Memory | N-back Task (N=1,2) | Load level (1-back vs. 2-back) | d-prime (sensitivity index); Correct trial reaction time [11] | 1-2 times daily, >4 hours apart to minimize fatigue |

| Associative Memory | Paired Associates (PA) Learning | Number of word pairs; Semantic relatedness | Trials to criterion; Correct recalls per trial [16] [11] | Once daily (to measure task-specific learning) [16] |

Protocol for Processing Speed Assessment

Objective: To measure the speed at which an individual can perform a simple cognitive operation, a foundational ability that declines with age and in various cognitive pathologies.

- Task Description (Pattern Comparison): Participants are presented with two abstract patterns or symbol strings side-by-side. They must indicate as quickly and accurately as possible whether the two items are identical or different.

- Stimuli: Use non-verbal, culturally neutral stimuli (e.g., abstract line patterns, simple geometric shapes) to minimize educational and cultural bias [15].

- Trial Structure: Each trial presents a new pair of stimuli. The task consists of a block of 20-30 trials.

- Procedure:

- Instructions screen: "You will see two patterns. Are they the SAME or DIFFERENT? Respond as QUICKLY and as ACCURATELY as you can."

- A fixation cross is displayed for 500ms.

- The stimulus pair is displayed until a response is made or a timeout (e.g., 5000ms) occurs.

- Insert a neutral inter-trial interval (e.g., a blank screen for 200 ms) before the next trial. Do not give positive or negative performance feedback, which could confound results through motivation effects.

- Data Output: The primary metrics are (1) the number of correct responses per minute, and (2) the mean reaction time for correct trials only.
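The two output metrics can be derived from trial records roughly as sketched below. The record format and function name are hypothetical, and the time base is an assumption: time on task is taken as the sum of response times, with timed-out trials counted at the timeout duration and fixation or inter-trial intervals excluded.

```python
from statistics import fmean


def score_pattern_comparison(trials, timeout_ms=5000):
    """Score one block of pattern-comparison trials.

    trials: list of dicts with 'rt_ms' (None when the trial timed out)
            and 'correct' (bool; False for timeouts).
    """
    total_ms = 0
    correct_rts = []
    for trial in trials:
        rt = trial["rt_ms"]
        # Timed-out trials contribute the full timeout to time on task.
        total_ms += timeout_ms if rt is None else rt
        if rt is not None and trial["correct"]:
            correct_rts.append(rt)
    if total_ms == 0:
        raise ValueError("no trial time recorded")
    return {
        "correct_per_minute": len(correct_rts) * 60000 / total_ms,
        "mean_correct_rt_ms": fmean(correct_rts) if correct_rts else None,
    }


# Hypothetical block: three correct responses, one error, one timeout.
block = [
    {"rt_ms": 1000, "correct": True},
    {"rt_ms": 1000, "correct": True},
    {"rt_ms": 1000, "correct": True},
    {"rt_ms": 1000, "correct": False},
    {"rt_ms": None, "correct": False},
]
result = score_pattern_comparison(block)
print(result)
```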

Protocol for Working Memory Assessment

Objective: To assess the capacity to actively maintain and manipulate information over short intervals, a key predictor of fluid intelligence.

- Task Description (N-back): Participants are shown a sequence of stimuli (e.g., letters, locations) one at a time. For each stimulus, they must indicate if it matches the one presented N steps back in the sequence.

- Stimuli: Letters or spatial locations on a 3x3 grid.

- Task Levels: Include 1-back (low load) and 2-back (high load) conditions. These can be administered in separate mEMA prompts or blocked within a single session.

- Trial Structure: Each condition consists of a sequence of 20+ stimuli. The target rate (i.e., matches) should be approximately 30% of trials.

- Procedure:

- Instructions: "You will see a series of [letters/locations]. For each one, decide if it is the SAME as the one you saw [1/2] steps back."

- Each stimulus is presented for a fixed duration (e.g., 1500ms), followed by a response interval (e.g., 1500ms). The short presentation time prevents passive maintenance strategies.

- The task is highly structured, with inter-stimulus intervals controlled by the system.

- Data Output: Calculate d-prime (d') as the primary measure of sensitivity, which incorporates both hits and false alarms. Also, record mean reaction time on correct trials for each load condition.
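A minimal scoring sketch for the N-back task is shown below. It computes d' as z(hit rate) minus z(false-alarm rate) using the standard library's `NormalDist`. Two choices are assumptions that the protocol does not specify: the log-linear correction (adding 0.5 to counts and 1 to trial totals) to avoid infinite z-scores at rates of 0 or 1, and the exclusion of the first N stimuli, which cannot be targets, from scoring. Function names and the example sequence are hypothetical.

```python
from statistics import NormalDist


def n_back_targets(sequence, n):
    """True where a stimulus matches the one presented n steps earlier."""
    return [i >= n and sequence[i] == sequence[i - n] for i in range(len(sequence))]


def d_prime(hits, misses, false_alarms, correct_rejections):
    """d' = z(hit rate) - z(false-alarm rate), with a log-linear correction."""
    hit_rate = (hits + 0.5) / (hits + misses + 1)
    fa_rate = (false_alarms + 0.5) / (false_alarms + correct_rejections + 1)
    z = NormalDist().inv_cdf
    return z(hit_rate) - z(fa_rate)


def score_n_back(sequence, responses, n):
    """Count outcomes and compute d'. responses[i] is True if 'match' was pressed."""
    targets = n_back_targets(sequence, n)
    counts = {"hits": 0, "misses": 0, "false_alarms": 0, "correct_rejections": 0}
    for i in range(n, len(sequence)):  # the first n stimuli are not scored
        if targets[i]:
            counts["hits" if responses[i] else "misses"] += 1
        else:
            counts["false_alarms" if responses[i] else "correct_rejections"] += 1
    counts["d_prime"] = d_prime(
        counts["hits"], counts["misses"],
        counts["false_alarms"], counts["correct_rejections"],
    )
    return counts


# Hypothetical 2-back sequence with error-free responding.
letters = list("ABACACBB")
perfect = n_back_targets(letters, 2)
scored = score_n_back(letters, perfect, n=2)
print(scored)
```

Because d' separates sensitivity from response bias, a participant who presses "match" on every trial does not score well simply by accumulating hits.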

Protocol for Associative Memory Assessment

Objective: To evaluate the ability to form and recall new associations between unrelated pieces of information, a function critically dependent on the hippocampus and known to be vulnerable in early Alzheimer's disease.

- Task Description (Paired Associates Learning): Participants learn a set of unrelated word pairs (e.g., "dog - spoon"). In the recall phase, they are shown the first word (cue) and must recall the second (target).

- Stimuli: Use a pool of common, concrete nouns. For each mEMA session, select a novel set of 8 word pairs to measure task-specific learning—the improvement in acquiring new associations due to practice with the task procedure itself, rather than memory for specific items [16].

- Trial Structure (Study-Test Cycle):

- Study Phase: All 8 word pairs are presented sequentially, each for 3-5 seconds.

- Test Phase: The cue word from each pair is presented in a random order. The participant types or selects the target word from a set of distractors.

- The cycle can be repeated for a fixed number of trials (e.g., 3-4) or until a mastery criterion (e.g., 100% correct) is reached.

- Procedure:

- Instructions: "You will learn pairs of words. Try to remember which words go together. Later, you will be shown the first word and asked to recall the second."

- The task is administered once per day, as the learning trajectory across days is a key outcome [16].

- Data Output: The primary metrics are (1) the number of correct recalls per learning trial, and (2) the "trials to criterion" (if applicable). Performance is tracked across days to model the task-specific learning curve [16].
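The two metrics, and a simple across-day learning curve, can be sketched as follows. The data layout and function names are hypothetical, and using first-cycle recall as the daily index of task-specific learning is an illustrative assumption rather than a prescription from the cited work.

```python
def trials_to_criterion(correct_per_cycle, n_pairs=8, criterion=1.0):
    """1-based index of the first study-test cycle meeting the criterion, else None."""
    for cycle_number, n_correct in enumerate(correct_per_cycle, start=1):
        if n_correct / n_pairs >= criterion:
            return cycle_number
    return None


def learning_curve(sessions_by_day):
    """First-cycle recall count per day, ordered by day number."""
    return [(day, cycles[0]) for day, cycles in sorted(sessions_by_day.items())]


# Hypothetical correct recalls (out of 8 pairs) for each study-test cycle on three days.
sessions = {1: [3, 5, 8], 2: [4, 7, 8], 3: [5, 8]}
criterion_by_day = {day: trials_to_criterion(c) for day, c in sessions.items()}
curve = learning_curve(sessions)
print(criterion_by_day, curve)
```

Since each day uses a novel set of word pairs, a rising first-cycle score or a falling trials-to-criterion count across days reflects practice with the procedure itself.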

mEMA Implementation & Workflow

The successful deployment of these cognitive protocols relies on a robust mEMA implementation strategy.

Sampling Protocols & Compliance

- Sampling Modality: For cognitive tasks, a signal-contingent sampling approach is typically used, where the app prompts the participant to complete a task at random or fixed intervals within a time window [14]. This ensures data is collected at planned times, reducing self-selection bias.

- Feasibility & Compliance: A meta-analysis of youth studies reported a weighted average mEMA compliance rate of 78.3% [13]. The protocol parameters associated with good compliance are summarized in Table 2.

Table 2: mEMA Protocol Feasibility and Acceptability Metrics

| Protocol Parameter | Recommended Specification | Empirical Support |

|---|---|---|

| Daily Prompt Frequency | 2-5 times | Higher frequency (6+) can reduce compliance in non-clinical groups [13]. |

| Overall Compliance Rate | ~78% (Target) | Weighted average from youth studies; a benchmark for feasibility [13]. |

| Prompt Randomization | Within 2-4 hour blocks | Prevents anticipation and captures different times of day [14]. |

| Task Duration | < 3 minutes per prompt | Critical for maintaining long-term participant engagement and compliance. |
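Block randomization of prompts, as recommended in the table, can be sketched as follows: the day is divided into consecutive blocks and one prompt time is drawn uniformly within each block. The function name, the 08:00 start time, and the default of three 4-hour blocks are illustrative assumptions; a real deployment would also need to handle participant-specific waking hours and any minimum spacing between prompts.

```python
import random
from datetime import datetime, timedelta


def schedule_prompts(day_start, n_blocks=3, block_minutes=240, rng=None):
    """Draw one prompt time uniformly at random within each consecutive block."""
    rng = rng or random.Random()
    prompts = []
    for block in range(n_blocks):
        block_start = day_start + timedelta(minutes=block * block_minutes)
        offset = rng.randrange(block_minutes)  # minute offset in [0, block_minutes)
        prompts.append(block_start + timedelta(minutes=offset))
    return prompts


# Hypothetical day starting at 08:00 with three 4-hour blocks; seeded for reproducibility.
start = datetime(2024, 1, 1, 8, 0)
for prompt in schedule_prompts(start, rng=random.Random(42)):
    print(prompt.strftime("%H:%M"))
```

Passing a seeded `random.Random` instance makes a participant's schedule reproducible for auditing, while still preventing the participant from anticipating prompt times.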

The Researcher's Toolkit: Reagents & Materials

Table 3: Essential Research Reagent Solutions for mEMA Cognitive Monitoring

| Tool / Component | Function / Rationale | Implementation Example |

|---|---|---|

| Customizable mEMA Platform | Core software for deploying surveys and cognitive tasks, managing prompts, and collecting data. | Platforms like ilumivu [14] or custom apps built using research SDKs. |

| Mobile App Rating Scale (MARS) | A validated 23-item tool to objectively assess the quality of mHealth apps on engagement, functionality, aesthetics, and information [12]. | Used to ensure the developed mEMA app meets a high-quality standard (target mean score >3.57) [12]. |

| Psychometric Item Bank | A pre-validated library of test items and parallel forms for cognitive tasks to prevent practice effects. | Includes multiple sets of word pairs for associative memory [16] and stimuli for processing speed tasks [15]. |

| Data Processing Pipeline | Automated scripts for scoring cognitive tasks, calculating derived metrics (e.g., d-prime), and flagging invalid data. | Scripts in R or Python to compute primary outcomes from raw reaction time and accuracy data. |

Data Presentation & Analytical Visualization

Adherence to principles of effective data presentation is paramount for clear scientific communication.

- Visualization Best Practices: For presenting group data, dot plots are superior to bar graphs because they allow for precise judgment of average magnitudes without the perceptual distortion introduced by the "spatial extent" of bars [17].

- Table Design: In tables of numerical results, aid comparison by using right-flush alignment for numbers and their headers, employing a tabular font, and ensuring consistent precision [18].

Quality Assurance & Inclusive Design

Ensuring data integrity and app accessibility is critical for generating valid, generalizable results.

- App Quality: A recent review of cognitive training apps found mean quality scores of 3.57 (SD 0.43) on the MARS scale (range 1-5), with functionality scoring highest and engagement lowest [12]. Aim to exceed these benchmarks.

- Inclusive Design for Diverse Populations: To make the app usable by older adults and individuals with visual impairments, the following design principles should be prioritized [19] [20]:

- Simplicity & Customizability: Offer adjustable text sizes, high contrast modes, and customizable color schemes [19].

- Reduce Cognitive Load: Simplify navigation, use clear and consistent icons, and provide help and training within the app [20].

- Auditory Support: Provide audible explanations of health data and error feedback [19].

- Input Flexibility: Implement feasible data input methods beyond precise touch, such as voice input [19].

Application Notes

Mobile Ecological Momentary Assessment (mEMA) mHealth platforms have emerged as powerful tools for real-time, ecologically valid cognitive and health monitoring across diverse clinical populations. Their application is critical in aging, neurodegenerative disease, and cancer survivorship, where capturing subtle, fluctuating symptoms and functional status in daily life provides insights beyond traditional clinic-based assessments. The integration of mEMA with wearable sensors and artificial intelligence (AI) enables multidimensional remote monitoring, supporting early detection, personalized interventions, and comprehensive supportive care [21] [1] [7].

The table below summarizes the core quantitative findings and feasibility outcomes from key studies implementing mHealth cognitive monitoring across these populations.

Table 1: Quantitative Outcomes of mHealth Monitoring Across Populations

| Population | Primary mHealth Application | Key Quantitative Findings | Compliance/Feasibility |

|---|---|---|---|

| Cancer Survivors [21] | Multidimensional remote monitoring of patient-reported outcomes (PROs) and physiology via app and smartwatch. | Collection of clinically relevant PROs (e.g., Edmonton Symptom Assessment System) and objective measures (e.g., step counts). | Hypothesis: >50% participant engagement with app at least once/week in 8 of 16 study weeks. Study ongoing. |

| Older Adults (Cognitively Normal & Very Mild Dementia) [1] | Unsupervised daily cognitive assessments (processing speed, working memory, associative memory) via smartphone. | Minimal momentary effects of environmental distractions on performance across groups. Cognitively normal adults showed better visuospatial working memory at home (P=.001). Those with very mild dementia showed no location effect on this task (P=.36). | 12.4% (1194/9633) of all assessments had self-reported interruptions. Small distraction effects persisted after their removal. |

| Older Adults (Health Promotion) [22] | mHealth app (NeoMayor) for promoting healthy lifestyles and cardiovascular/brain health. | Global Cardiovascular Health (CVH) index score increased from 64 (SD 10) to 68 (SD 11); P<.001. Improvements in systolic BP, waist circumference, HDL cholesterol, and physical performance. | High engagement: mean use of 6.6 (SD 11.85) minutes per day, twice a week over 2 months. |

| General Adult (Clinical & Non-Clinical) [23] | mEMA for self-reported health behaviors and psychological constructs. | Meta-analysis of compliance rates across 105 unique datasets. | Overall compliance: 81.9% (95% CI 79.1-84.4). No significant difference between non-clinical and clinical datasets. |

Key Insights for Research and Drug Development

For researchers and drug development professionals, mEMA offers a methodology for collecting high-frequency, real-world data on cognitive function, symptom burden, and functional status that can serve as sensitive endpoints in clinical trials. The evidence suggests that remote, unsupervised cognitive testing provides valid data, though testing environment can have small, domain-specific effects, particularly in populations with very mild dementia [1]. Successful implementation hinges on robust compliance, which is achievable in both clinical and non-clinical adult populations, with an average compliance rate of approximately 82% [23]. Furthermore, a user-centered design is paramount for ensuring engagement, especially in older adult populations who may face barriers related to digital literacy and physical or cognitive limitations [24] [22].
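Proportions such as the interruption rate in Table 1 (1194 of 9633 assessments) are easier to compare across studies when reported with an interval. The sketch below computes a Wilson score interval for a single proportion. It is an illustration only: the function name is hypothetical, the Wilson method is not the pooling approach used in the cited meta-analysis [23], and it treats assessments as independent, which ignores the clustering of assessments within participants.

```python
import math

def wilson_interval(successes: int, total: int, z: float = 1.96):
    """Return (proportion, lower, upper) using the Wilson score interval.

    z = 1.96 corresponds to an approximate 95% interval.
    """
    if total <= 0:
        raise ValueError("total must be positive")
    p = successes / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half_width = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return p, center - half_width, center + half_width

# Interruption rate reported in Table 1: 1194 of 9633 assessments.
rate, lower, upper = wilson_interval(1194, 9633)
print(f"{rate:.1%} (95% CI {lower:.1%} to {upper:.1%})")
```

For clustered EMA data, a participant-level summary or a multilevel model would usually give a more defensible interval than this naive calculation.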

Experimental Protocols

Protocol 1: Multidimensional mHealth Monitoring in Cancer Survivorship

This protocol, derived from the GATEKEEPER pilot study, details a 16-week observational study for remote monitoring of cancer survivors [21].

- Objective: To assess the feasibility (primary) and collect clinically relevant subjective and objective measures (secondary) via an mHealth solution in survivors of cancer.

- Population: Adult survivors (N=100) of any solid malignancy, not receiving toxic anticancer treatment, with controlled disease and scheduled for active surveillance.

- mHealth Solution:

  - Dedicated Smartphone App: For self-reported behavioral data (nutrition, physical activity, sleep) and validated PRO questionnaires (e.g., Edmonton Symptom Assessment System).

  - Paired Smartwatch (Samsung Galaxy Watch): For automatic, objective collection of physiological data (e.g., step counts, actigraphy).

  - Data Integration: The mHealth solution anonymizes and integrates self-reported data, wearable sensor data, and electronic health records for AI-driven analysis.

- Primary Endpoint: Feasibility, defined as participant engagement (e.g., at least 50% using the app at least once per week in 8 of the 16 weeks).

- Ethical Considerations: Approved by NHS Research Ethics Committee. Participants provide informed consent, are gifted the wearable device, and may receive a replacement Android phone if needed. Comprehensive data protection measures are in place.
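The primary endpoint above can be evaluated directly from app usage logs. The following is a minimal sketch, assuming a hypothetical log format in which each participant has an enrollment date and a list of dates on which the app was opened; the function and variable names are illustrative and are not taken from the GATEKEEPER software. The threshold follows the "at least 50%" wording of the endpoint; change the comparison to a strict inequality if the stricter reading applies.

```python
from datetime import date, timedelta

STUDY_WEEKS = 16            # length of the observational period
MIN_ACTIVE_WEEKS = 8        # weeks with at least one app use
MIN_ENGAGED_FRACTION = 0.5  # share of participants who must be engaged

def active_weeks(start: date, usage_dates: list) -> int:
    """Count distinct study weeks (0 to 15) containing at least one app use."""
    weeks = set()
    for used_on in usage_dates:
        week = (used_on - start).days // 7
        if 0 <= week < STUDY_WEEKS:
            weeks.add(week)
    return len(weeks)

def feasibility_met(cohort: dict):
    """cohort maps participant ID to (enrollment date, list of usage dates)."""
    engaged = sum(
        1 for start, uses in cohort.values()
        if active_weeks(start, uses) >= MIN_ACTIVE_WEEKS
    )
    fraction = engaged / len(cohort)
    return fraction, fraction >= MIN_ENGAGED_FRACTION

# Hypothetical usage logs for three participants.
start = date(2024, 1, 1)
cohort = {
    "P01": (start, [start + timedelta(weeks=w) for w in range(10)]),
    "P02": (start, [start + timedelta(days=d) for d in range(14)]),
    "P03": (start, [start + timedelta(weeks=w, days=3) for w in range(8)]),
}
fraction, met = feasibility_met(cohort)
print(f"Engaged: {fraction:.0%}, endpoint met: {met}")
```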

Protocol 2: Unsupervised Digital Cognitive Assessment in Aging and Neurodegenerative Disease

This protocol, based on the Ambulatory Research in Cognition (ARC) study, outlines the use of smartphone-based mEMA for frequent cognitive testing in older adults, including those with very mild dementia [1].

- Objective: To examine the impact of environmental distractions on unsupervised remote cognitive assessments and compare performance between cognitively normal older adults and those with very mild dementia.

- Population: Older adults classified as cognitively normal (CDR 0, n=380) or having very mild dementia (CDR 0.5, n=37). Clinical status is determined within a year of mEMA via the Clinical Dementia Rating (CDR) scale.

- mEMA Platform: Custom smartphone app (ARC) delivering three cognitive tasks daily (up to 4 times/day) for one week.

- Cognitive Tasks & Measures:

  - Symbols: Processing speed task (median reaction time (RT) and RT variability for correct trials).

  - Grids: Visuospatial working memory task (accuracy).

  - Prices: Associative memory task (error rate during recognition).

- Contextual Data: Before/after each assessment, participants report testing location (home/not home), social context (alone/with others), and any interruptions.

- Statistical Analysis: Mixed-effect models test interactions between location, social context, interruptions, and clinical status on cognitive performance.

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential materials and tools for deploying mEMA in cognitive and health monitoring research.

Table 2: Essential Research Reagents and Tools for mEMA Studies

| Item/Solution | Function in mEMA Research | Exemplars & Key Considerations |

|---|---|---|

| mEMA Software Platform | Delivers cognitive tests, PROs, and contextual questions on a scheduled basis; manages data collection and storage. | Custom apps (e.g., ARC [1]); commercial platforms. Must support iOS/Android, push notifications, and secure data transfer. |

| Wearable Sensor | Passively and continuously collects objective physiological and behavioral data to complement self-report. | Samsung Galaxy Watch [21]; other consumer-grade or research-grade devices. Key metrics: step count, heart rate, sleep actigraphy. |

| Validated PRO Measures | Quantifies symptom burden, quality of life, and other patient-centric outcomes in an ecologically valid manner. | Edmonton Symptom Assessment System [21]; EuroQol Visual Analogue Scale [25]. Must be validated for ePRO administration. |

| Cognitive Test Battery | Assesses fluctuations in specific cognitive domains (e.g., processing speed, memory) in real-world settings. | ARC tasks: "Symbols" (processing speed), "Grids" (working memory), "Prices" (associative memory) [1]. Tasks must be brief and repeatable. |

| Data Integration & AI Analytics Framework | Harmonizes multimodal data streams (mEMA, wearables, EMR) and enables predictive modeling and pattern detection. | GATEKEEPER architecture [21]. Requires robust data anonymization, secure servers, and machine learning capabilities. |

| Participant Training & Support Protocol | Ensures participants, especially older adults, can use the technology effectively, maximizing compliance and data quality. | Includes in-person training [21], instructional materials, and ongoing technical support [24] [22]. Critical for geriatric populations. |

The Role of EMA in Capturing Subtle Cognitive Fluctuations in Early-Stage Dementia

Application Note: Principles and Value of EMA in Cognitive Monitoring

Ecological Momentary Assessment (EMA) is a powerful mHealth methodology for capturing fine-grained, longitudinal data on an individual's cognitive and behavioral well-being in their natural environment. By leveraging mobile devices, EMA minimizes recall bias and provides a more sensitive assessment of subtle cognitive fluctuations compared to traditional, infrequent lab-based assessments [8]. This capability is paramount in early-stage dementia, where the earliest signs are not outright forgetting, but a decline in the precision and quality of memories, which can begin decades before traditional tests like the MoCA (Montreal Cognitive Assessment) show problems [26].

The core strength of EMA lies in its ability to capture within-day and between-day fluctuations in cognitive performance, laying a foundation for timely, in-the-moment interventions. When combined with objective, sensor-based data representing digital phenotypes, EMA enables a powerful comparison of subjective self-reports with objective digital behavior markers [8]. This approach is particularly effective for longitudinally monitoring conditions like Alzheimer's disease and related dementias, as it reduces the burden on participants who would otherwise need to travel to a research lab [8].

Experimental Protocol: EMA for Digital Cognitive Assessment in Aging

This protocol outlines a procedure for using a smartphone-based EMA tool to assess the impact of environmental distractions on cognitive performance in older adults, comparing those who are cognitively normal with those showing very mild dementia [1]. The objective is to determine how testing location and social context affect performance on unsupervised digital cognitive tests and whether these distractions have a differential impact based on clinical status [1].

Participant Selection and Clinical Characterization

- Participants: Recruit older adults from studies of aging and dementia at a clinical research center.

- Inclusion Criteria: Participants must complete at least 10 testing sessions to ensure adequate engagement and have a recent clinical assessment for accurate cognitive classification [1].

- Clinical Status Classification: Cognitive status is determined using the Clinical Dementia Rating (CDR) scale based on semi-structured interviews with the participant and a collateral source. For this study, participants are grouped as cognitively normal (CDR 0) or as having very mild dementia (CDR 0.5) [1].

EMA Cognitive Assessment Procedure

- Platform: A custom-built smartphone application (e.g., the Ambulatory Research in Cognition (ARC) study app) [1].

- Assessment Schedule: Participants receive notifications to complete assessments pseudorandomly, up to 4 times per day for one week. They are instructed to complete the assessment as soon as possible within a 2-hour window [1].

- Contextual Data Collection: At each assessment, participants report their current location (home vs. not home) and social context (alone vs. with others) [1].

- Post-Assessment Report: After each session, participants indicate whether they experienced any interruptions during the testing period [1].
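A pseudorandom schedule of this kind can be sketched as follows. This is one possible scheme, not the ARC app's actual scheduler: it assumes a fixed waking day (08:00 to 22:00) split into four equal blocks, with one prompt drawn at random early enough in each block that its 2-hour completion window closes before the next block begins. The waking hours, block logic, and function name are all assumptions made for illustration.

```python
import random
from datetime import datetime, timedelta

def weekly_schedule(first_day, seed, days=7, prompts_per_day=4,
                    wake_hour=8, sleep_hour=22, window_hours=2):
    """Return a list of (prompt_time, window_close) pairs."""
    rng = random.Random(seed)  # seeded so each participant's schedule is reproducible
    block_minutes = (sleep_hour - wake_hour) * 60 // prompts_per_day
    window_minutes = window_hours * 60
    if block_minutes <= window_minutes:
        raise ValueError("blocks are too short for the completion window")
    schedule = []
    for day in range(days):
        day_start = first_day.replace(
            hour=wake_hour, minute=0, second=0, microsecond=0
        ) + timedelta(days=day)
        for block in range(prompts_per_day):
            # Draw the prompt so that its window ends inside the same block.
            offset = block * block_minutes + rng.randrange(block_minutes - window_minutes)
            prompt = day_start + timedelta(minutes=offset)
            schedule.append((prompt, prompt + timedelta(minutes=window_minutes)))
    return schedule

schedule = weekly_schedule(datetime(2024, 1, 1), seed=42)
for prompt, closes in schedule[:4]:
    print(prompt.strftime("%a %H:%M"), "window closes", closes.strftime("%H:%M"))
```

Keeping the windows from overlapping makes it unambiguous which prompt a completed session belongs to, at the cost of slightly less random timing.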

Cognitive Tasks and Key Metrics

Participants complete three primary cognitive tasks during each assessment session [1]:

- Processing Speed Task ("Symbols"): Participants match abstract shapes. Performance is measured by median reaction time (RT) for correct trials and the coefficient of variation (RT CoV) to capture variability. Higher scores indicate poorer performance.

- Associative Memory Task ("Prices"): Participants learn item-price pairs and are later tested on their recognition. The primary metric is the error rate during recognition.

- Visuospatial Working Memory Task ("Grids"): This task assesses spatial working memory. The primary metric is accuracy.
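The two processing speed metrics described above can be computed from trial-level data as in the sketch below. The trial format (reaction time in milliseconds plus a correctness flag) and the function name are assumptions, and the coefficient of variation here uses the sample standard deviation divided by the mean; the cited study may use a different estimator.

```python
from statistics import mean, median, stdev

def symbols_metrics(trials):
    """trials: list of (rt_ms, correct) tuples from one Symbols session."""
    correct_rts = [rt for rt, correct in trials if correct]
    if len(correct_rts) < 2:
        raise ValueError("need at least two correct trials")
    return {
        "median_rt": median(correct_rts),
        # Coefficient of variation: sample SD relative to the mean RT.
        "rt_cov": stdev(correct_rts) / mean(correct_rts),
    }

# Hypothetical session: the incorrect trial is excluded from both metrics.
trials = [(900, True), (1100, True), (1000, True), (2500, False), (1000, True)]
metrics = symbols_metrics(trials)
print(metrics)
```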

Data Analysis Plan

- Statistical Approach: Use mixed-effect models to test the interactions between location, social context, and clinical status (cognitively normal vs. very mild dementia) on cognitive task performance [1].

- Sensitivity Analysis: Conduct additional analyses by removing sessions where participants self-reported interruptions to isolate the effect of environmental distractions [1].
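The logic of the sensitivity analysis can be illustrated with a simple descriptive comparison. The sketch below is a stand-in, not a mixed-effect model: it computes the home-minus-away difference in mean score for each clinical group, first on all sessions and then after dropping sessions with self-reported interruptions. The session fields and values are hypothetical.

```python
from statistics import mean

def location_gap(sessions, exclude_interrupted=False):
    """Return {cdr: mean score at home minus mean score away from home}."""
    by_cell = {}
    for s in sessions:
        if exclude_interrupted and s["interrupted"]:
            continue
        by_cell.setdefault((s["cdr"], s["at_home"]), []).append(s["score"])
    gaps = {}
    for cdr in {key[0] for key in by_cell}:
        home = by_cell.get((cdr, True))
        away = by_cell.get((cdr, False))
        if home and away:  # a gap needs sessions in both locations
            gaps[cdr] = mean(home) - mean(away)
    return gaps

# Hypothetical sessions; "score" stands for any accuracy-type outcome.
sessions = [
    {"cdr": 0, "at_home": True, "interrupted": False, "score": 0.80},
    {"cdr": 0, "at_home": True, "interrupted": True, "score": 0.60},
    {"cdr": 0, "at_home": False, "interrupted": False, "score": 0.70},
    {"cdr": 0.5, "at_home": True, "interrupted": False, "score": 0.55},
    {"cdr": 0.5, "at_home": False, "interrupted": False, "score": 0.55},
]
all_gaps = location_gap(sessions)
clean_gaps = location_gap(sessions, exclude_interrupted=True)
print("all sessions:", all_gaps)
print("interruptions removed:", clean_gaps)
```

In a real analysis the same filter would be applied before refitting the mixed-effect model, so that repeated sessions within participants are handled properly.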

Table 1: Key Findings from Cognitive EMA Studies in Aging Populations

| Study Focus | Participant Group | Cognitive Domain / Factor | Key Finding | Statistical Result |

|---|---|---|---|---|

| Impact of Environmental Distractions [1] | Cognitively Normal (CDR 0) | Visuospatial Working Memory | Better performance when tested at home | P = .001 |

| Impact of Environmental Distractions [1] | Very Mild Dementia (CDR 0.5) | Processing Speed | Slightly faster when not at home | P = .04 |

| Impact of Environmental Distractions [1] | Very Mild Dementia (CDR 0.5) | Processing Speed Variability | Social context impacted variability | P = .04 |

| EMA Response Patterns [8] | Mixed (454 participants across 9 studies) | Overall EMA Response Rate (RR) | Average RR of 79.95% | N/A |

| EMA Response Patterns [8] | Mixed (454 participants across 9 studies) | Response Completeness | 88.37% of responses were fully completed | N/A |

| EMA Response Patterns [8] | Mixed (454 participants across 9 studies) | RR Correlation | Negative correlation with number of EMA questions | r = -0.433, P < .001 |

Table 2: The Scientist's Toolkit - Essential Reagents & Materials for EMA Cognitive Monitoring

| Item Name | Type | Function & Application Note |

|---|---|---|

| Smartphone EMA Platform | Software/Hardware | A custom or commercial app for delivering cognitive tests and surveys. Critical for in-the-wild data collection and participant notification [1]. |

| Clinical Dementia Rating (CDR) | Clinical Protocol | A standardized tool to characterize participant cohorts and ensure valid comparisons between cognitively normal and impaired groups [1]. |

| Digital Cognitive Test Battery | Software | A suite of brief, repeatable tests (e.g., processing speed, working memory) sensitive to momentary fluctuations and early decline [1]. |

| Contextual Questionnaire | Software (EMA) | Integrated questions on location and social context to model the impact of environmental distractions on cognitive scores [1]. |

| Sensor Data (Smartwatch/Home) | Data Stream | Optional objective data (e.g., activity level) to complement self-reports and provide digital markers of behavior [8]. |

Workflow and Conceptual Diagrams

Diagram 1: EMA Cognitive Assessment Workflow

Diagram 2: Factors Influencing EMA Data Quality

Designing and Implementing mHealth EMA Studies: Protocols, Platforms, and Practical Applications

Mobile Ecological Momentary Assessment (mEMA) for cognitive monitoring, delivered through mHealth platforms, represents a paradigm shift in neuropsychological research, enabling the collection of real-time, real-world data on cognitive function. This approach leverages smartphone applications and integrated digital systems to move assessment beyond the clinic, capturing dynamic cognitive processes within patients' natural environments [27]. For researchers and drug development professionals, these platforms offer unprecedented opportunities for measuring subtle treatment effects, monitoring disease progression, and understanding cognitive fluctuations in conditions like Mild Cognitive Impairment (MCI) and Alzheimer's disease [12] [27]. The integration of these mobile technologies into clinical research requires careful consideration of platform selection, implementation protocols, and system interoperability to ensure scientific validity, regulatory compliance, and meaningful patient engagement.

Current Evidence and Data Synthesis

Recent studies demonstrate the growing evidence base for mHealth cognitive assessment platforms across diverse clinical populations. The quantitative findings from current literature provide critical insights for platform selection.

Table 1: Key Evidence for mHealth Cognitive Assessment Platforms

| Study Focus | Population | Key Findings | Implications for Platform Selection |

|---|---|---|---|

| Cognitive Training App Quality [12] | Older adults with cognitive impairment (24 apps evaluated) | Mean MARS quality score: 3.57/5 (range: 2.38-4.13); Functionality scored highest (mean=3.91); Engagement scored lowest (mean=3.26) | Prioritize apps with proven engagement strategies; Brain HQ and Peak demonstrated highest quality scores (>4.0) |

| Chronic Disease App Preferences [28] | Adults with chronic heart disease (n=302) | Post-monitoring recommendations most valued (β=1.45); Adoption increased from 84% (basic) to 92% (preference-aligned) | Include clinical feedback loops; Personalization significantly increases adoption |

| EMR/EHR Integration Impact [29] | Mixed chronic conditions (19 studies, n=113,135) | 68% of studies reported improved patient outcomes; Key benefits: enhanced patient education (n=5), real-time data sharing (n=4), clinical decision support (n=3) | Prioritize platforms with EMR/EHR interoperability; Address technical compatibility challenges |

| Wearable Monitoring in AD [27] | Alzheimer's disease and MCI | Devices successfully monitored physical activity, sleep patterns, and cognitive function; Potential for early diagnosis identified | Consider multi-modal platforms combining active and passive assessment |

Table 2: mHealth Platform Feature Efficacy

| Feature Category | Specific Functionality | Evidence Strength | User Engagement Impact |

|---|---|---|---|

| Monitoring Capabilities | Vital sign tracking with clinical recommendations | β=1.45, 95% UI 1.26-1.64 [28] | Strong positive effect on sustained use |

| Educational Components | Tailored health information | β=0.50, 95% UI 0.36-0.64 [28] | Moderate positive effect |

| Symptom Tracking | Unrestricted diary entry | β=0.58, 95% UI 0.41-0.76 [28] | Moderate positive effect |

| EMR/EHR Integration | Automated data transfer to clinical systems | Support for clinical decision-making (n=3 studies) [29] | Enhances clinical utility and provider engagement |

| Accessibility Features | Appropriate touch target size, text contrast | Critical for stroke populations with motor impairments [30] | Essential for populations with cognitive-motor deficits |

Platform Selection Protocol

Assessment Platform Evaluation Framework

Selecting an appropriate mHealth cognitive assessment platform requires systematic evaluation across multiple domains. The following protocol provides a standardized approach for researchers and drug development professionals.

Phase 1: Technical and Scientific Validation

- Cognitive Assessment Validity: Verify that digital cognitive tasks have been validated against established neuropsychological measures. For cognitive training apps, only 20.8% currently offer user-tailored training modules, indicating a significant gap in personalization [12].

- Data Quality and Security: Ensure platforms comply with regulatory requirements (HIPAA, GDPR) and implement end-to-end encryption. Data accuracy concerns due to network connectivity have been identified in integrated systems [29].

- Technical Reliability: Assess system uptime, data loss protocols, and error handling. In app quality evaluations, functionality generally scores highest among the rated dimensions (mean=3.91/5), indicating relative technical maturity [12] [30].

Phase 2: Participant Experience and Accessibility

- Usability Optimization: Conduct pilot testing with target population. Current stroke apps demonstrate significant accessibility issues, with touch target size errors occurring 687 times across 16 apps [30].

- Engagement Strategy Implementation: Incorporate evidence-based engagement features. Engagement scores are currently the lowest-rated dimension (mean=3.26/5) in cognitive apps, indicating substantial room for improvement [12].

- Accessibility Compliance: Adhere to WCAG 2.1 guidelines, particularly for text contrast and interface navigation. Heuristic evaluations reveal that 100% of stroke apps violate the "Visibility of System Status" principle [30].

Phase 3: Integration and Implementation

- EMR/EHR Interoperability: Prioritize systems with demonstrated integration capabilities. Only 2 of 19 integrated systems used existing portal credentials for app access [29].

- Clinical Workflow Alignment: Ensure platform functionality supports rather than disrupts clinical workflows. Increased clinical workload in response to additional information was reported in 3 of 19 integration studies [29].

- Data Management and Analytics: Verify export capabilities, real-time monitoring, and analytical functions. Real-time data recorded and shared with clinicians demonstrated benefits in 4 of 19 integration studies [29].

Implementation Protocol for Clinical Trials

Participant Onboarding and Training

- Conduct digital literacy assessment using validated instruments before enrollment

- Provide structured training sessions with multimedia resources

- Implement competency verification through demonstration of core app functions

- Assign digital navigators for ongoing technical support, especially for older adults with limited technology experience [31]

Data Collection and Quality Control

- Establish automated data quality checks for missing, duplicate, or outlier data

- Implement compliance alerts for participants falling below engagement thresholds

- Schedule regular data validation against gold-standard measures throughout trial period

- Create data curation protocols for handling technical artifacts in cognitive measures
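The automated checks and compliance alerts listed above might look like the following sketch. The record format, thresholds, and function name are hypothetical. Outliers are flagged with a modified z-score based on the median absolute deviation, which is a technique swapped in here for illustration and not one named in the source; pooling reaction times across participants is also a simplification.

```python
from statistics import median

def quality_flags(records, expected_prompts, min_compliance=0.8, mad_cutoff=3.5):
    """Flag duplicate, missing, and outlying records, plus low-compliance participants."""
    seen, duplicates, missing, valid = set(), [], [], []
    for r in records:
        key = (r["participant"], r["prompt_id"])
        if key in seen:            # same prompt uploaded twice
            duplicates.append(key)
            continue
        seen.add(key)
        if r["rt_ms"] is None:     # prompt delivered but no data recorded
            missing.append(key)
        else:
            valid.append((key, r["rt_ms"]))

    outliers = []
    values = [v for _, v in valid]
    if values:
        med = median(values)
        mad = median([abs(v - med) for v in values])
        if mad > 0:
            outliers = [k for k, v in valid
                        if 0.6745 * abs(v - med) / mad > mad_cutoff]

    completed = {}
    for (participant, _), _ in valid:
        completed[participant] = completed.get(participant, 0) + 1
    low_compliance = sorted(
        p for p, n in expected_prompts.items()
        if completed.get(p, 0) / n < min_compliance
    )
    return {"duplicates": duplicates, "missing": missing,
            "outliers": outliers, "low_compliance": low_compliance}

# Hypothetical records from two participants, four scheduled prompts each.
records = [
    {"participant": "P01", "prompt_id": 1, "rt_ms": 950},
    {"participant": "P01", "prompt_id": 2, "rt_ms": 1000},
    {"participant": "P01", "prompt_id": 2, "rt_ms": 1000},
    {"participant": "P01", "prompt_id": 3, "rt_ms": 1050},
    {"participant": "P01", "prompt_id": 4, "rt_ms": 9000},
    {"participant": "P02", "prompt_id": 1, "rt_ms": None},
    {"participant": "P02", "prompt_id": 2, "rt_ms": 1020},
]
flags = quality_flags(records, expected_prompts={"P01": 4, "P02": 4})
print(flags)
```

Flagged records are better reviewed than silently deleted, since an unusually slow trial may reflect a genuine cognitive fluctuation and not a technical artifact.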

Clinical Integration and Safety Monitoring

- Develop clear pathways for clinical review of significant findings

- Implement automated alerts for safety concerns with defined response timelines

- Establish protocols for integrating mHealth data with other clinical assessments

- Create standardized reporting templates for regulatory submissions

Implementation Workflow

The integration of mHealth assessment platforms into clinical research requires a systematic approach that addresses both technical and human factors. The following workflow visualization outlines the key decision points and processes for successful implementation.

Figure 1: mHealth Platform Implementation Workflow. This diagram outlines the systematic process for selecting and implementing mobile cognitive assessment platforms, highlighting critical validation points and iterative refinement cycles essential for research-grade applications.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of mHealth cognitive monitoring requires specific tools and frameworks for development, evaluation, and integration.

Table 3: Essential Research Reagents and Solutions for mHealth Cognitive Monitoring

| Tool Category | Specific Tool/Platform | Primary Function | Key Considerations |

|---|---|---|---|

| Quality Assessment | Mobile App Rating Scale (MARS) [12] | Standardized evaluation of app quality across engagement, functionality, aesthetics, and information dimensions | Demonstrates high interrater reliability (k=0.88); Mean global scores for cognitive apps range 2.38-4.13/5 |

| EMR/EHR Integration | FHIR (Fast Healthcare Interoperability Resources) [29] | Standardized framework for exchanging healthcare information electronically | Addresses incompatibility challenges between mHealth apps and EMR/EHR systems (reported in 3 of 19 studies) |

| Implementation Framework | CFIR (Consolidated Framework for Implementation Research) [31] | Systematic assessment of implementation context across multiple domains | Adaptable to mHealth integration; Identifies critical patient, provider, app, and system factors |

| Cognitive Assessment | Digital cognitive task batteries [12] [27] | Mobile administration of standardized cognitive tests targeting specific domains (memory, attention, executive function) | Only 33% of cognitive apps involve medical professionals in development; Prioritize validated measures |

| Passive Monitoring | Wearable devices (activity trackers, smartwatches) [27] | Continuous collection of behavioral and physiological data in natural environments | Monitor physical activity, sleep patterns; Potential for early detection of cognitive decline |

| Usability Evaluation | Heuristic evaluation protocols [30] | Expert assessment of interface usability against established principles | Critical for identifying accessibility barriers; 100% of stroke apps violated "Visibility of System Status" heuristic |

| Data Analytics | Advanced statistical packages (R, Python) | Processing and analysis of intensive longitudinal data from mHealth platforms | Essential for handling temporal patterns, missing data, and deriving clinically meaningful metrics |

The selection of appropriate smartphone apps and integrated systems for mobile ecological momentary assessment in cognitive monitoring requires meticulous attention to scientific validity, participant engagement, and system interoperability. Evidence indicates that platforms aligning with user preferences demonstrate significantly higher adoption rates (increasing from 84% to 92%) and that integration with clinical systems enhances their utility for healthcare delivery and research [28] [29]. Future development should prioritize improved engagement strategies, standardized evaluation frameworks, and enhanced interoperability to maximize the potential of these innovative assessment platforms in cognitive research and therapeutic development.

Mobile Ecological Momentary Assessment (mEMA) represents a paradigm shift in cognitive and behavioral monitoring, enabling the collection of real-time, ecologically valid data in participants' natural environments. This approach minimizes recall bias and provides granular insights into the dynamic interplay between psychological processes, context, and health behaviors that traditional lab-based or retrospective methods cannot capture [32] [33]. The integration of mobile crowdsensing (MCS) technologies further enhances EMA by incorporating objective sensor data from smartphones and wearables, providing crucial contextual information alongside self-reported measures [33]. Effective protocol design must balance scientific rigor with participant burden to ensure sustainable engagement and high-quality data, particularly in studies targeting sensitive populations or requiring long-term assessment.

Quantitative Benchmarking of Current Practices

Table 1: Sampling Frequency and Study Duration in Recent mHealth Studies

| Study / Protocol | Primary Focus | Sampling Frequency | Study Duration | Overall Compliance/Adherence |

|---|---|---|---|---|

| TIME Study [32] | Physical activity & behaviors | ~12 prompts/day during biweekly 4-day "bursts" | 12 months | 77% (SD 13%) |

| Mezurio App [34] | Cognitive assessment (memory, executive function) | Daily tasks (episodic memory) | 36 days (Baseline) | 80% with daily learning tasks; 88% active engagement at endpoint |

| EMI for Rumination [7] | Experiential avoidance & rumination | Daily sampling | 4 weeks (Intervention) | Protocol-defined: Complete first 2 weeks + 5/6 exercises in weeks 3-4 |

| Factorial Design Study [35] | EMA Best Practices | 2 vs. 4 prompts/day | 28 days | 83.8% average completion |

Table 2: Key Predictors of EMA Compliance and Engagement

| Predictor Category | Specific Factor | Impact on Compliance |

|---|---|---|

| Demographic Factors | Employment status | Employed participants had lower odds of completion (OR 0.75) [32] |

| Demographic Factors | Ethnicity | Hispanic participants showed lower odds of completion (OR 0.79) [32] |

| Demographic Factors | Age | Older adults tended to complete more EMAs [35] |

| Contextual Factors | Phone screen status | Phone screen being "on" at prompt substantially increased completion (OR 3.39) [32] |

| Contextual Factors | Location | Being away from home reduced likelihood, particularly at sports facilities (OR 0.58) or restaurants/shops (OR 0.61) [32] |

| Behavioral & Psychological Factors | Sleep duration | Short sleep the previous night associated with lower completion odds (OR 0.92) [32] |

| Behavioral & Psychological Factors | Stress levels | Higher momentary stress predicted lower subsequent prompt completion (OR 0.85) [32] |

| Behavioral & Psychological Factors | Travel status | Traveling associated with lower completion odds (OR 0.78) [32] |

| Study Design Factors | Microinteraction approach (μEMA) | Higher adherence observed [33] |

| Study Design Factors | Use of sensors | Higher adherence observed [33] |

| Study Design Factors | Total number of prompts | Negative correlation with adherence [33] |

Experimental Protocols for mHealth Studies

Longitudinal Multiburst EMA Protocol (TIME Study)

Objective: To investigate factors influencing EMA completion rates in a 12-month intensive longitudinal study among young adults [32].

Methodology:

- Participants: 246 young adults (ages 18-29 years)

- Sampling Design: Biweekly measurement bursts consisting of 4-day periods of intensive sampling

- Prompting Strategy: Signal-contingent prompts delivered approximately once per hour during waking hours (average 12.1 prompts/day during bursts)

- Data Collection: Combined smartphone-based EMA with continuous passive data collection via smartwatches

- Measures: Multilevel logistic regression models examined effects of temporal, contextual, behavioral, and psychological factors on prompt completion

Key Findings: Completion odds declined over the 12-month study (OR 0.95) with significant interactions between time in study and various predictors, indicating changing engagement patterns over time [32].
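Odds ratios such as those reported for the TIME study are easier to interpret when translated into completion probabilities. The sketch below applies an odds ratio to a baseline probability. Using the overall 77% compliance as the baseline is an assumption made purely for illustration, since the model's actual reference-category probability is not given here, and odds ratios from multilevel models are conditional on the other predictors.

```python
def apply_odds_ratio(baseline_prob: float, odds_ratio: float) -> float:
    """Convert a baseline probability to the probability implied by an odds ratio."""
    if not 0 < baseline_prob < 1:
        raise ValueError("baseline_prob must be strictly between 0 and 1")
    odds = baseline_prob / (1 - baseline_prob)
    new_odds = odds * odds_ratio
    return new_odds / (1 + new_odds)

baseline = 0.77  # illustrative baseline: overall TIME study compliance
examples = {
    "screen on at prompt (OR 3.39)": 3.39,
    "higher momentary stress (OR 0.85)": 0.85,
    "at a sports facility (OR 0.58)": 0.58,
}
for label, odds_ratio in examples.items():
    print(f"{label}: {apply_odds_ratio(baseline, odds_ratio):.2f}")
```

Under these assumptions, an odds ratio of 3.39 moves a 77% completion probability to roughly 92%, which shows why large odds ratios translate into modest absolute gains when baseline compliance is already high.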

"Little but Often" Cognitive Assessment Protocol (Mezurio)

Objective: To evaluate the feasibility of frequent cognitive assessment using a smartphone app over an extended duration [34].

Methodology:

- Participants: 35 adults (aged 40-59 years) with elevated dementia risk

- Sampling Design: Daily cognitive tasks for 36 days

- Task Selection:

  - Gallery Game: Episodic memory task involving cross-modal paired-associate learning with subsequent tests of recognition and recall following ecologically relevant delays (1, 2, 4, 6, 8, 10, or 13 days)

  - Story Time: Connected language task

  - Tilt Task: Executive function measure

- Engagement Features: Schedule flexibility, clear user interface, and performance feedback

Key Findings: High compliance (80%) with daily learning tasks sustained over the extended assessment period, with 88% of participants still actively engaged by the final task [34].

Factorial Design Protocol for EMA Optimization

Objective: To identify optimal study design factors for achieving high completion rates for smartphone-based EMAs using a factorial design [35].

Methodology:

- Participants: 411 adults recruited nationwide (mean age 48.4 years)

- Experimental Design: 2×2×2×2×2 factorial design (32 conditions)

- Manipulated Factors:

  - Number of questions per EMA (15 vs. 25)

  - Number of EMAs per day (2 vs. 4)

  - Prompting schedule (random vs. fixed times)

  - Payment type ($1 per EMA vs. percentage-based payment)

  - Response scale type (slider vs. Likert-type; within-person factor)

- Study Duration: 28 days
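The design cells of this factorial experiment can be enumerated programmatically, which is useful for checking allocation tables or estimating participant burden per cell. The sketch below simply crosses the five factors as listed to reproduce the stated 32 cells; the dictionary keys and level labels are hypothetical names. Because response scale type is a within-person factor in the protocol, the number of between-person cells would be smaller than the full crossing shown here.

```python
from itertools import product

FACTORS = {
    "questions_per_ema": (15, 25),
    "emas_per_day": (2, 4),
    "prompt_schedule": ("random", "fixed"),
    "payment": ("per_ema", "percentage"),
    "response_scale": ("slider", "likert"),
}
STUDY_DAYS = 28

# One dict per design cell, e.g. {"questions_per_ema": 15, "emas_per_day": 2, ...}
conditions = [dict(zip(FACTORS, levels)) for levels in product(*FACTORS.values())]

def total_items(condition: dict) -> int:
    """Questions a fully compliant participant would answer over the study."""
    return STUDY_DAYS * condition["emas_per_day"] * condition["questions_per_ema"]

burdens = sorted({total_items(c) for c in conditions})
print(len(conditions), "cells; item burden ranges from", burdens[0], "to", burdens[-1])
```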

Key Findings: No significant main effects of design factors on compliance and no significant interactions, suggesting other participant and contextual factors may be more influential on adherence [35].

Visualization of mHealth Study Designs

Diagram 1: mHealth Study Design Decision Framework

Diagram 2: mHealth EMA Data Collection Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Components for mHealth Cognitive Monitoring

| Research Component | Function & Purpose | Example Implementations |

|---|---|---|

| Smartphone EMA Platforms | Delivery of surveys and cognitive tasks in natural environments; enables real-time data capture with minimal recall bias | Custom apps (Mezurio [34], Insight [35]); Commercial research platforms |

| Wearable Sensors | Passive collection of objective behavioral and physiological data; provides context for self-reported measures | Smartwatches with accelerometers [32]; Shimmer2 sensors [36] for motion and vital signs |

| Cognitive Task Batteries | Assessment of specific cognitive domains through brief, repeatable micro-assessments | Gallery Game (episodic memory) [34]; Story Time (language) [34]; Tilt Task (executive function) [34] |

| Multilevel Modeling Frameworks | Statistical analysis of nested EMA data (moments within days within persons); accounts for within-person variation | Multilevel logistic regression for completion predictors [32]; Mixed-effects models for cognitive performance [34] |

| Participant Engagement Features | Maintenance of long-term adherence through user-centered design and feedback mechanisms | Performance feedback (e.g., gold star animations [34]); Schedule flexibility; Personalized reminders [34] |

| MCS (Mobile Crowdsensing) Architecture | Integration of active (EMA) and passive (sensor) data collection for comprehensive contextual understanding | Combination of smartphone sensors (accelerometer, GPS) with self-report [33]; Context-aware prompting systems |

Effective protocol design for mHealth cognitive monitoring requires careful consideration of sampling frequency, study duration, and task selection in relation to specific research questions and target populations. The evidence suggests that multiburst designs with intensive sampling periods interspersed with rest periods can sustain engagement in long-term studies [32], while microinteraction approaches with brief, daily cognitive tasks maintain high compliance over intermediate durations [34]. Future research should explore adaptive sampling techniques that tailor prompt frequency and timing based on individual participant contexts and states [32], potentially leveraging passive sensor data to identify optimal moments for assessment [33]. The integration of multimodal assessment combining self-report, cognitive performance, and sensor data provides the most comprehensive approach for understanding cognitive function in real-world contexts, ultimately advancing the field of mobile cognitive monitoring in both clinical research and drug development.

Mobile cognitive testing moves neuropsychological assessment out of the laboratory. Through ecological momentary assessment (EMA), cognitive performance is sampled repeatedly in participants' natural environments, which makes within-person cognitive fluctuations observable in a way that single laboratory visits cannot. Research demonstrates that remote cognitive testing yields valid and reliable data in older adult populations, though environmental confounds require careful consideration [1]. The core cognitive domains of processing speed, executive function, and memory are particularly amenable to mobile assessment and serve as critical indicators of cognitive health and neurological impairment.

Core Mobile Cognitive Test Domains

Processing Speed

Processing speed measures assess the speed at which an individual can perform cognitive tasks, serving as a foundational element for higher-order cognitive functions.

Digit Symbol Substitution Tests: These established measures assess processing speed and short-term working memory, and they are sensitive to cognitive dysfunction and to changes in cognitive function [37]. The Digital Processing Speed Test (DPST) is an automated, multilingual adaptation that can be completed within 2 minutes on a mobile device. Its test performance was similar to that of traditional measures such as the Mini-Mental State Examination (MMSE) and Montreal Cognitive Assessment (MoCA), with an area under the receiver operating characteristic curve (AUROC) of 0.861 for identifying mild cognitive impairment (MCI) and dementia [37].

Symbol Matching Tasks: In the Cognitive Ecological Momentary Assessment study, participants completed a processing speed task in which they were shown 3 pairs of abstract shapes and selected which of 2 possible responses matched 1 of the 3 targets [1]. Performance was summarized as the median reaction time (RT) on correct trials and as RT variability (coefficient of variation, CoV); higher values on either measure indicate poorer performance [1].
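The two summary metrics described above can be sketched in a few lines of Python. This is a minimal illustration, not the scoring code of any cited study: the `(rt_ms, correct)` trial format and the function name `rt_summary` are hypothetical, and the sketch assumes that the CoV is computed on correct trials only using the sample standard deviation.

```python
from statistics import mean, median, stdev


def rt_summary(trials):
    """Summarize one session of a processing speed task.

    trials: list of (rt_ms, correct) tuples (hypothetical format).
    Returns the median RT of correct trials and the coefficient of
    variation (sample SD divided by mean) of those same RTs.
    """
    correct_rts = [rt for rt, correct in trials if correct]
    if len(correct_rts) < 2:
        raise ValueError("need at least two correct trials")
    return {
        "median_rt": median(correct_rts),
        "rt_cov": stdev(correct_rts) / mean(correct_rts),
    }


# Example session: the incorrect trial (1460 ms) is excluded.
session = [(812, True), (934, True), (1460, False), (768, True), (1010, True)]
summary = rt_summary(session)
print(summary["median_rt"], round(summary["rt_cov"], 3))
```

Because both metrics are scaled so that larger values mean slower or less consistent responding, they can be entered into later analyses without reverse coding.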

Table 1: Processing Speed Tests in Mobile Cognitive Assessment

| Test Name | Cognitive Domain | Administration Time | Key Metrics | Validation Population |
|---|---|---|---|---|
| Digital Processing Speed Test (DPST) | Processing Speed, Working Memory | ~2 minutes | Number of correct digits | 476 adults, MCI/dementia patients [37] |
| Symbols Task | Processing Speed | 20-60 seconds | Median RT, RT variability | Cognitively normal older adults, very mild dementia [1] |
| Matching Pair | Processing Speed | 60-90 seconds | Accuracy, Reaction time | Available in NeuroUX test battery [38] |

Executive Function

Executive function encompasses higher-order cognitive processes including working memory, cognitive flexibility, planning, and inhibition.

Spatial Working Memory Tasks: The Grids task, a spatial working memory measure used in mobile assessment, demonstrates sensitivity to environmental factors and cognitive status [1]. Cognitively normal older adults showed better visuospatial working memory performance when completing tests at home compared to away from home, while older adults with very mild dementia showed no effect of testing location on the same task [1].

Cognitive Flexibility and Inhibition Tasks: Mobile test batteries include tasks such as Hand Swype (assessing cognitive flexibility), Color Trick (executive function), and Quick Tap 2 (inhibition control) [38]. These gamified tests are derived from traditional pen-and-paper tests and are designed to be brief (60-90 seconds) while maintaining measurement accuracy [38].

Table 2: Executive Function Tests in Mobile Cognitive Assessment

| Test Name | Specific Executive Function | Administration Time | Key Metrics | Contextual Considerations |
|---|---|---|---|---|
| Grids Task | Spatial Working Memory | Not specified | Accuracy, Location effects | Performance differs by testing location for cognitively normal participants [1] |
| N-Back | Working Memory | 60-90 seconds | Accuracy, Reaction time | Available in NeuroUX test battery [38] |
| Hand Swype | Cognitive Flexibility | 60-90 seconds | Accuracy, Switching cost | Available in NeuroUX test battery [38] |
| Quick Tap 2 | Inhibition Control | 60-90 seconds | Commission errors, Reaction time | Available in NeuroUX test battery [38] |

Memory

Memory assessment in mobile cognitive testing focuses on both verbal and visual memory systems through specialized tasks.

Associative Memory Tasks: The Prices associative memory task presents subjects with a learning phase where they study 10 item-price pairs for 3 seconds per pair, followed by a recognition phase where they must select the correct price for each item [1]. This task takes approximately 60 seconds per administration and measures error rate during recognition, with higher scores indicating poorer recognition performance [1].

Verbal Memory Tests: Mobile word list tests have demonstrated validity in serious mental illness populations, with performance positively correlated with traditional Hopkins Verbal Learning Test (HVLT) scores (ρ = 0.52, P < .001) [39]. Performance remains valid even when completed during distraction, with low effort, or outside the home environment [39].

Spatial Short-term Memory: Tests such as the Matrix task assess spatial short-term memory, while Memory Path tasks evaluate visuospatial memory [38]. These brief assessments can be administered repeatedly to track fluctuations in memory performance over time.

Table 3: Memory Tests in Mobile Cognitive Assessment

| Test Name | Memory Type | Administration Time | Key Metrics | Validation Evidence |
|---|---|---|---|---|
| Prices Task | Associative Memory | ~60 seconds | Error rate during recognition | Used with cognitively normal older adults and those with very mild dementia [1] |
| Mobile Variable Difficulty List Memory Test (VLMT) | Verbal Memory | Not specified | Recall accuracy, Recognition | Correlated with HVLT (ρ = 0.52, P < .001) in schizophrenia (SZ) and bipolar disorder (BD) [39] |
| Verbal Memory Test | Verbal Memory | 60-90 seconds | Recall accuracy, Recognition | Available in NeuroUX test battery [38] |
| Memory Matrix | Spatial Short-term Memory | 60-90 seconds | Accuracy, Span length | Available in NeuroUX test battery [38] |

Experimental Protocols and Methodologies

Study Design and Participant Recruitment

Robust experimental protocols are essential for valid mobile cognitive assessment research. Participant recruitment should target well-characterized cohorts from clinical and community settings. The Ambulatory Research in Cognition (ARC) study protocol recruits participants from studies of aging and dementia at academic medical centers, with clinical assessments conducted within a year of starting mobile testing [1]. Inclusion criteria typically require completion of a minimum number of sessions (e.g., at least 10 sessions during baseline testing) to ensure adequate engagement and sufficient observations for comparisons across different environments [1].

Clinical status should be determined using standardized assessments such as the Clinical Dementia Rating (CDR), which rates cognitive and functional performance on a 5-point scale across 6 domains (memory, orientation, judgment and problem solving, community affairs, home and hobbies, and personal care) [1]. Participants can be classified as cognitively normal (CDR 0) or as having very mild dementia (CDR 0.5) based on semi-structured interviews with participants and collateral sources [1].
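The inclusion and classification rules described in this subsection can be expressed as a short filter. The sketch below is illustrative only: the `Participant` record, its field names, and the function names are hypothetical, and the 10-session threshold is the example value quoted above rather than a universal requirement.

```python
from dataclasses import dataclass

MIN_SESSIONS = 10  # example threshold from the text; adjust per protocol


@dataclass
class Participant:
    participant_id: str
    cdr: float               # global Clinical Dementia Rating score
    sessions_completed: int  # sessions finished during baseline testing


def clinical_group(cdr):
    """Map a global CDR score to the two groups discussed in the text."""
    if cdr == 0:
        return "cognitively normal"
    if cdr == 0.5:
        return "very mild dementia"
    return None  # higher CDR scores fall outside this sketch


def eligible_by_group(participants, min_sessions=MIN_SESSIONS):
    """Keep participants with enough sessions and group them by CDR status."""
    groups = {}
    for p in participants:
        group = clinical_group(p.cdr)
        if group is None or p.sessions_completed < min_sessions:
            continue
        groups.setdefault(group, []).append(p.participant_id)
    return groups


cohort = [
    Participant("P01", 0, 24),
    Participant("P02", 0.5, 12),
    Participant("P03", 0, 7),     # too few sessions
    Participant("P04", 1, 20),    # CDR outside the two groups
]
print(eligible_by_group(cohort))
```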

Mobile Assessment Protocols

Mobile cognitive testing protocols should implement several key design elements:

Assessment Frequency: The ARC protocol delivers assessment prompts at pseudorandom times through the native iOS or Android notification system, and participants are instructed to complete each assessment as soon as possible within a 2-hour window [1]. Participants complete 3 cognitive tasks up to 4 times per day over the course of a week, providing high-density data on cognitive fluctuations [1].

Environmental Context Recording: At each assessment, participants should be asked about their current location and social surroundings to quantify whether they are at home (or not) and by themselves (or not) [1]. After each assessment session, participants should report whether they experienced any interruptions during testing [1].

Task Administration: Each cognitive test should be designed for brief administration (typically 20-90 seconds per task) to facilitate compliance with intensive testing protocols [1] [38]. Tests should be presented with clear instructions and intuitive interfaces to minimize learning effects across repeated administrations.
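One way to generate a prompt schedule with the properties listed above (4 pseudorandom prompts per day for a week, each with a 2-hour response window) is sketched below. The source does not describe the actual ARC scheduling algorithm, so everything here is an assumption made for illustration: the 08:00 to 22:00 waking window, the choice to draw one prompt from each of 4 equal blocks of the day, and the function names.

```python
import random
from datetime import date, datetime, time, timedelta


def daily_prompts(day, rng, wake=time(8, 0), sleep=time(22, 0),
                  prompts_per_day=4, response_window=timedelta(hours=2)):
    """Return (sent_at, expires_at) pairs for one day.

    The waking period is split into equal blocks and one prompt time is
    drawn uniformly (to the minute) inside each block, so prompts are
    unpredictable but spread across the day.
    """
    start = datetime.combine(day, wake)
    end = datetime.combine(day, sleep)
    block = (end - start) / prompts_per_day
    block_minutes = int(block.total_seconds() // 60)
    schedule = []
    for i in range(prompts_per_day):
        offset = timedelta(minutes=rng.randrange(block_minutes))
        sent_at = start + i * block + offset
        schedule.append((sent_at, sent_at + response_window))
    return schedule


def weekly_schedule(first_day, seed, days=7):
    """Build a reproducible schedule for a burst of consecutive days."""
    rng = random.Random(seed)  # seeded so the schedule can be audited
    return {
        first_day + timedelta(days=d): daily_prompts(first_day + timedelta(days=d), rng)
        for d in range(days)
    }


schedule = weekly_schedule(date(2024, 1, 1), seed=42)
for sent_at, expires_at in schedule[date(2024, 1, 1)]:
    print(sent_at.strftime("%H:%M"), "->", expires_at.strftime("%H:%M"))
```

Stratifying prompts by block is a common design choice because purely uniform sampling can cluster several prompts close together; a real deployment would also need to respect each participant's own sleep and wake times.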

Data Analysis Approaches

Appropriate statistical methods are crucial for analyzing intensive longitudinal data from mobile cognitive assessments:

Mixed-Effects Modeling: This approach tests the interactions between location, social context, and clinical status while accounting for within-person dependencies across multiple assessments [1]. Mixed-effects models can examine how environmental distractions impact performance differently across clinical groups.

Handling Missing Data: Analytical approaches should account for missing data, which is common in intensive longitudinal designs. In one study, participants completed an average of 75.3% of ecological mobile cognitive tests over 30 days [39].

Contextual Factor Analysis: Analyses should examine whether scores obtained under self-reported distraction, low effort, or out-of-home testing differ from scores obtained under other conditions, and whether mobile test performance remains valid relative to in-lab assessments under those conditions [39].
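The mixed-effects approach described above can be written out as a random-intercept model. The specification below is a sketch under stated assumptions rather than the exact model of any cited study: the symbols, the dummy coding of the predictors, and the choice of a random intercept only are all illustrative. Here $y_{ti}$ is the score of participant $i$ at assessment $t$, $\mathrm{Away}_{ti}$ and $\mathrm{Others}_{ti}$ are 0/1 indicators for testing away from home and in the presence of others, and $\mathrm{CDR}_i$ is a 0/1 indicator for very mild dementia.

```latex
\begin{aligned}
y_{ti} &= \beta_0 + \beta_1\,\mathrm{Away}_{ti} + \beta_2\,\mathrm{Others}_{ti} + \beta_3\,\mathrm{CDR}_i \\
       &\quad + \beta_4\,(\mathrm{Away}_{ti} \times \mathrm{CDR}_i)
              + \beta_5\,(\mathrm{Others}_{ti} \times \mathrm{CDR}_i)
              + u_{0i} + \varepsilon_{ti}, \\
u_{0i} &\sim \mathcal{N}(0, \tau^2), \qquad \varepsilon_{ti} \sim \mathcal{N}(0, \sigma^2)
\end{aligned}
```

In this formulation the random intercept $u_{0i}$ absorbs stable between-person differences, so the dependence among repeated assessments from the same person is accounted for, and the interaction coefficients $\beta_4$ and $\beta_5$ test whether location and social context affect performance differently across clinical groups.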

Implementation Considerations for Mobile Cognitive Testing

Environmental and Contextual Factors

Environmental distractions significantly impact mobile cognitive test performance, particularly in vulnerable populations:

Location Effects: Cognitively normal older adults demonstrate better visuospatial working memory performance when completing tests at home compared to away from home, while those with very mild dementia show no such location effect [1]. Conversely, older adults with very mild dementia were slightly faster on processing speed tasks when not at home [1].

Social Context: The presence of others during testing was associated with greater variability in processing speed, but only among participants with very mild dementia (P=.04) [1].

Interruptions: Across all participants, approximately 12.4% of assessments involve self-reported interruptions [1]. When considering tests completed in the most distracting environments (away from home and in the presence of others), those with very mild dementia show larger differences specifically on visuospatial working memory measures [1].
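A first descriptive step toward the contextual comparisons above is to tabulate sessions by location and social context. The sketch below assumes a hypothetical session record with `at_home`, `alone`, `interrupted`, and `score` fields; it is not the data format of the cited studies, and it gives descriptive summaries only, not the inferential tests reported in [1].

```python
from collections import defaultdict
from statistics import mean


def summarize_by_context(sessions):
    """Group sessions by (at_home, alone) and summarize each cell.

    Each session is a dict with boolean 'at_home', 'alone', and
    'interrupted' fields and a numeric 'score' (hypothetical format).
    """
    grouped = defaultdict(list)
    for s in sessions:
        grouped[(s["at_home"], s["alone"])].append(s)
    return {
        context: {
            "n": len(rows),
            "mean_score": mean(r["score"] for r in rows),
            "interrupted_rate": sum(r["interrupted"] for r in rows) / len(rows),
        }
        for context, rows in grouped.items()
    }


sessions = [
    {"at_home": True, "alone": True, "score": 0.25, "interrupted": False},
    {"at_home": True, "alone": True, "score": 0.75, "interrupted": True},
    {"at_home": False, "alone": False, "score": 1.0, "interrupted": True},
]
summary = summarize_by_context(sessions)
# (False, False) is the "most distracting" cell: away from home, with others.
print(summary[(False, False)])
```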

Design Guidelines for Older Adult Populations

Mobile cognitive tests for older adults require specialized design considerations:

Simplify and Increase Size: Two guidelines, "Simplify" and "Increase the size and distance between interactive controls", cut across all design categories and carry the greatest significance for older adult users [20].

Comprehensive Design Categories: Design guidelines for older adults should address Help & Training, Navigation, Visual Design, Cognitive Load, and Interaction (with subcategories for Input and Output) [20].

Visual Design Principles: Text should maintain high contrast (a minimum contrast ratio of 4.5:1 for normal text) and use adequately large fonts; text counts as large at 18 point or larger, or at 14 point or larger when bold [40]. These design elements support older adults with potential visual declines affecting contrast sensitivity, acuity, and color discrimination [20].
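The 4.5:1 threshold can be checked programmatically when choosing interface colors. The sketch below uses the relative luminance and contrast ratio formulas from the WCAG 2.x definition; the function names are illustrative, and colors are assumed to be 8-bit sRGB triples.

```python
def _linearize(channel):
    """Convert one 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb):
    """Relative luminance of an (R, G, B) color, from 0 (black) to 1 (white)."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background):
    """Contrast ratio (lighter + 0.05) / (darker + 0.05), from 1 to 21."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_normal_text(foreground, background, minimum=4.5):
    """True if the color pair meets the minimum ratio for normal-size text."""
    return contrast_ratio(foreground, background) >= minimum


white = (255, 255, 255)
print(round(contrast_ratio((0, 0, 0), white), 1))   # black on white
print(passes_normal_text((118, 118, 118), white))   # mid-gray text on white
```

A check like this can be run over an app's color palette during design review, before any usability testing with older adult participants.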

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Research Materials for Mobile Cognitive Assessment Studies

| Tool/Resource | Function/Purpose | Example Implementation |
|---|---|---|
| Mobile Cognitive Testing Platforms | Enables deployment of cognitive tests to mobile devices | NeuroUX platform, ARC smartphone app [1] [38] |
| Clinical Assessment Tools | Provides reference standard for cognitive classification | Clinical Dementia Rating (CDR), MMSE, MoCA [1] [37] |
| Environmental Context Measures | Quantifies testing environment and distractions | Location (home/away), social context (alone/with others), interruption reporting [1] |
| Data Security & Compliance Frameworks | Ensures participant privacy and regulatory compliance | HIPAA, GDPR compliance protocols [38] [41] |
| Mixed-Effects Modeling Software | Analyzes intensive longitudinal data with nested structure | RStudio, specialized statistical packages [37] |