Mitigating Recall Bias in Social Interaction Measurement: A Researcher's Guide to Accurate Data Collection

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on identifying, mitigating, and validating measures against recall bias in social interaction data. Covering foundational theory, advanced methodological strategies like Ecological Momentary Assessment (EMA), practical troubleshooting, and rigorous validation techniques, it synthesizes current best practices to enhance the validity and reliability of research outcomes in clinical and biomedical settings.

Understanding Recall Bias: The Hidden Threat to Social Interaction Data

FAQs on Recall Bias

What is recall bias? Recall bias is a type of systematic error that occurs when study participants do not accurately remember or report past events or experiences [1] [2]. The accuracy and volume of memories can be influenced by subsequent events and experiences, leading to distorted data [1]. It is sometimes also referred to as response bias, responder bias, or reporting bias [2].

What causes recall bias? Recall bias stems primarily from the fallibility of human memory [3]. Key causes include:

- Time: Memories naturally fade or become distorted over time [3] [1].

- Emotional State: Strong emotions during an event or during recall can alter perceptions and lead to selective memory retrieval [3].

- Personal Perception: An individual's own beliefs, attitudes, and experiences can influence memory, leading to selective recall or exaggeration of details [3].

- External Influences: Media coverage or social interactions can shape memories over time, creating false narratives [3].

- Social Desirability: People may provide responses they believe are socially acceptable rather than accurate [3] [4].

Which study designs are most prone to recall bias? Recall bias is a particular problem in studies that rely on self-reporting after the fact [1]. The designs most prone to recall bias are:

- Case-control studies [3] [4] [1]

- Retrospective cohort studies [3] [4]

In these designs, participants are asked to evaluate exposure variables or past behaviors retrospectively, which can lead to inaccurate recollections [4].

What is the difference between recall bias and recall limitation?

- Recall Limitation refers to the natural human tendency to forget or distort information over time [3].

- Recall Bias involves the conscious or unconscious influence on memory recollection, often shaped by external factors like beliefs or emotions [3].

How does recall bias impact research findings? Recall bias can significantly impact the validity and reliability of research findings [3] [5]. It can cause certain events or behaviors to be under-reported or over-reported, leading to an inaccurate representation of their true prevalence or occurrence [3]. This can result in:

- Overestimation or underestimation of the strength of associations between variables [1].

- Skewed study results and incorrect conclusions [3].

Troubleshooting Guide: Mitigating Recall Bias in Your Research

Problem: Inaccurate recall of past behaviors or exposures in a case-control study.

Solution:

- Use a Prospective Design: Whenever feasible, opt for a prospective design in which information is collected in real time or shortly after events occur. Because exposures are recorded while the outcome is still unknown, participants' outcome status cannot shape what they report [5] [1].

- Shorten the Recall Period: Reduce the time between the event and its recall. Ask participants to report on recent activities (e.g., in the past week) rather than distant ones (e.g., in the past year) [4] [6].

- Utilize Memory Aids: Provide participants with diaries, timelines, or visual cues to help jog their memory and improve recall accuracy [3] [4] [6].

Problem: Participant memories are influenced by their current disease status (e.g., in a case-control study).

Solution:

- Blind Data Collection: Standardize the interviewer's interaction with the patient and blind the interviewer to the participant's exposure or disease status to prevent biased probing [5].

- Use Objective Data Sources: Corroborate subjective self-reports with objective data sources such as medical records, laboratory measurements, or biological testing whenever possible [5] [4].

- Validate Self-Report Instruments: Compare self-reported data with other validated data collection methods, such as laboratory measurements or medical record checks, to assess and improve validity [4].
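As a minimal sketch of this validation step, the Python function below compares a binary self-reported exposure with a reference source such as medical records and reports sensitivity, specificity, and raw agreement. The function and field names are illustrative assumptions, not part of any cited protocol. Running it separately for cases and controls is one way to check whether recall accuracy differs between groups.

```python
from dataclasses import dataclass


@dataclass
class Agreement:
    sensitivity: float        # reported exposed among record-confirmed exposed
    specificity: float        # reported unexposed among record-confirmed unexposed
    percent_agreement: float  # share of participants where both sources agree


def validate_self_report(self_report, record):
    """Compare paired binary self-reports against a reference source (e.g. medical records).

    Hypothetical helper for illustration; inputs are parallel lists of booleans.
    """
    if len(self_report) != len(record) or not record:
        raise ValueError("need non-empty, equal-length paired lists")
    pairs = list(zip(self_report, record))
    tp = sum(1 for s, r in pairs if s and r)
    fn = sum(1 for s, r in pairs if not s and r)
    tn = sum(1 for s, r in pairs if not s and not r)
    fp = sum(1 for s, r in pairs if s and not r)
    return Agreement(
        sensitivity=tp / (tp + fn) if tp + fn else float("nan"),
        specificity=tn / (tn + fp) if tn + fp else float("nan"),
        percent_agreement=(tp + tn) / len(pairs),
    )


# Usage with made-up data for six participants
result = validate_self_report(
    self_report=[True, True, False, False, True, False],
    record=[True, False, False, False, True, True],
)
print(result)
```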

Problem: Social desirability is leading to under-reporting of sensitive or undesirable behaviors.

Solution:

- Ensure Anonymity and Confidentiality: Create an environment conducive to honest recall by guaranteeing participant anonymity and reducing distractions [3] [4].

- Use Indirect Questioning Techniques: Employ methods like the Unmatched Count Technique, which provides greater anonymity and can reduce overreporting driven by social desirability [7].

- Careful Question Phrasing: Design clear, neutral, and easy-to-understand questionnaires. Avoid leading questions and use open-ended ones to encourage more genuine recollection [3] [6].
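The Unmatched Count Technique is usually analyzed with a difference-in-means estimator. One randomly assigned group sees a list of J non-sensitive items, the other sees the same list plus the sensitive item, and each respondent reports only how many items apply. The sketch below assumes that design and simple random assignment. The function name and the counts are hypothetical.

```python
from math import sqrt
from statistics import mean, variance


def uct_prevalence(control_counts, treatment_counts):
    """Difference-in-means estimate for an Unmatched Count Technique item.

    control_counts: items endorsed by respondents shown only the J baseline items
    treatment_counts: items endorsed by respondents shown the J items plus the sensitive one
    Returns (estimated prevalence of the sensitive behavior, standard error).
    """
    estimate = mean(treatment_counts) - mean(control_counts)
    # Standard error of a difference between two independent sample means
    std_error = sqrt(variance(treatment_counts) / len(treatment_counts)
                     + variance(control_counts) / len(control_counts))
    return estimate, std_error


# Usage with made-up counts from two small randomized groups
prevalence, se = uct_prevalence([1, 2, 2, 3, 1, 2], [2, 2, 3, 3, 2, 3])
print(f"estimated prevalence = {prevalence:.2f} (SE {se:.2f})")
```

Because no individual reveals which items apply, the sensitive behavior is only identified at the group level. This is the source of the added anonymity, and also the reason the estimate is noisier than a direct question.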

Experimental Protocols & Data

Quantitative Data on Self-Reporting Validity

The following table summarizes findings from a 2025 study comparing the validity of different self-reporting methods for physical activity (PA) and sedentary behavior (SB) against accelerometry as an objective reference standard [8].

Table 1: Criterion Validity of Self-Reported Physical Activity and Sedentary Behavior Against Accelerometry [8]

| Self-Report Method | Reporting Period | Response Scale | Outcome Compared with Accelerometry | Key Finding |
|---|---|---|---|---|
| Momentary Reports | Brief (5-120 min), aggregated over 7 days | Quantitative (minutes) | Sedentary Behavior (SB) Duration | Closer in magnitude to accelerometry than 1-week recall; correlation (r = .61) |
| 1-Week Recall | Retrospective, 7 days | Quantitative (minutes) | Sedentary Behavior (SB) Duration | Lower duration of SB reported; less accurate than momentary reports |
| All Self-Reports | Momentary & Recall | All Scales | Physical Activity (PA) Duration | Indicated greater duration of PA than accelerometry |
| All Self-Reports | Momentary & Recall | All Scales | Correlation with Accelerometry | Low to modest correlations for both momentary and retrospective reports |

Table 2: Construct Validity of Self-Reported Physical Activity Measures [8]

| Demographic Variable | Association with Objective Measure (Accelerometry) | Association with Self-Reports (All Methods) |
|---|---|---|
| Age | Step counts increased in younger age groups but were lowest in the 65+ age group. | Total activity duration showed a different pattern, highest in the 65+ age group. |
| Gender, Education, etc. | Specific patterns observed. | Associations often differed from accelerometry; in some cases, directions were opposite. |

Detailed Methodology: Ecological Momentary Assessment (EMA) vs. Retrospective Recall

This protocol is adapted from a study seeking to improve the validity of retrospective self-reports [8].

Objective: To compare the criterion and construct validity of self-reported physical activity (PA) and sedentary behavior (SB) using brief reporting periods (EMA) and quantitative response scales versus retrospective recall and verbal response scales.

Participants: 258 community-dwelling adults.

Procedure:

- Objective Measurement: All participants wore accelerometers throughout a 7-day period to provide objective measures of PA and SB.

- Momentary Reporting (EMA): Participants received prompts 5 times per day via a mobile application to report their current or very recent (last 5-120 minutes) PA and SB. They reported using:

- Quantitative Scales: Duration in minutes.

- Verbal Response Scales (VRS): Relativistic scales (e.g., "Slightly," "Moderately," "Extremely" active).

- Retrospective Reporting: At the end of the 7-day period, participants provided an overall recall of their PA and SB for the entire week, using both quantitative and VRS scales.

- Data Aggregation: Momentary reports were summarized to create a person-level value for the week, allowing direct comparison with the end-of-week retrospective reports and accelerometry data.

Analysis:

- Criterion Validity: Assessed by comparing mean levels of PA/SB from self-reports to accelerometry and by calculating correlations between self-reports and accelerometry.

- Construct Validity: Assessed by examining whether associations between self-reported PA/SB and demographic variables (e.g., age, gender) replicated the associations observed with the accelerometry-based measures.

Research Reagent Solutions: Essential Tools for Mitigating Recall Bias

Table 3: Key Materials and Tools for Recall Bias Prevention

| Tool / Solution | Function | Example Use Case |
|---|---|---|
| Accelerometer | Provides an objective, device-based measure of physical activity and sedentary behavior for validation. | Used as a reference standard to validate self-reported physical activity data [8]. |
| Electronic Diary / Mobile EMA App | Enables real-time data collection through momentary assessments, drastically reducing the recall period. | Sending scheduled prompts to participants to log current activity, emotions, or symptoms [8] [6]. |
| Validated Self-Report Scales (e.g., Marlowe-Crowne Social Desirability Scale) | Identifies and measures the tendency of participants to provide socially desirable answers. | Administered alongside primary study questionnaires to quantify and control for social desirability bias [4]. |
| Unmatched Count Technique (UCT) | An indirect questioning method that provides greater anonymity, reducing overreporting of sensitive behaviors. | Measuring the prevalence of sensitive pro-environmental or health-related behaviors where social desirability is a concern [7]. |
| Digital Ethnography Platform (e.g., EthOS) | Supports diary studies, mobile ethnography, and multimedia data collection (photos, audio, video) to enrich real-time reporting. | Participants document experiences in the moment, providing visual and auditory cues that aid accurate recall and reduce reliance on memory [6]. |

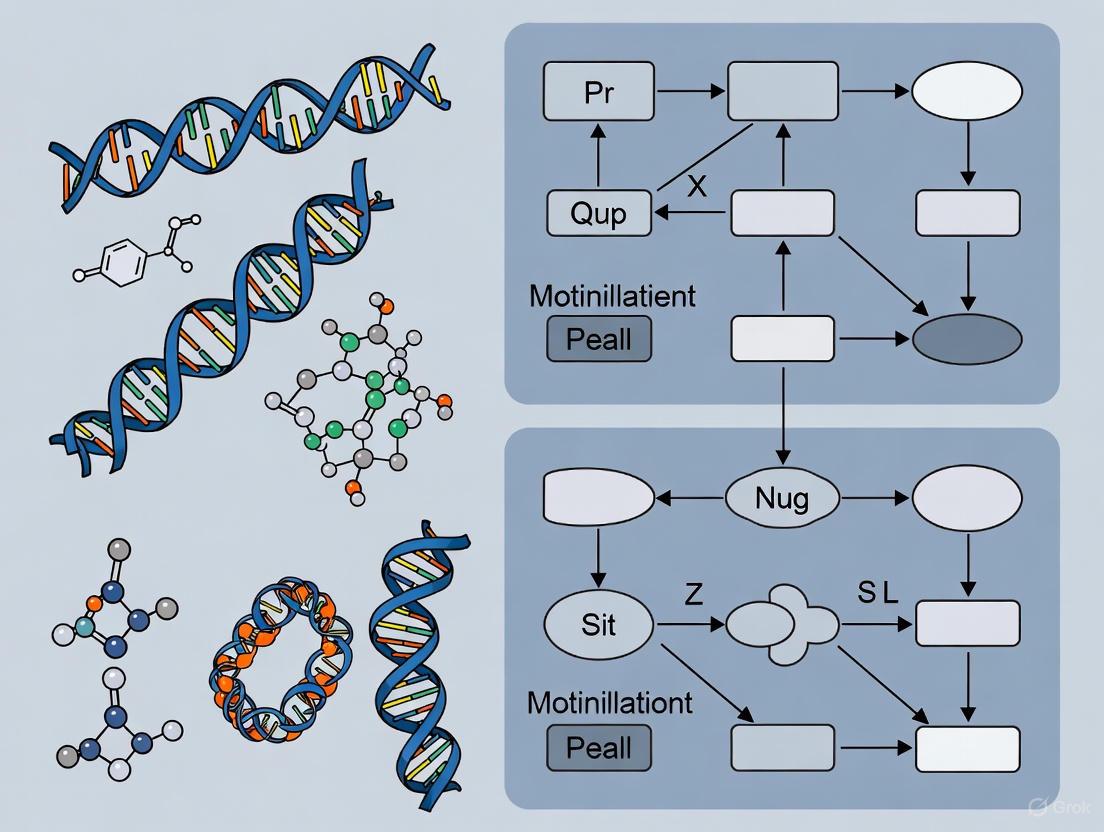

Experimental Workflow Diagram

The following diagram illustrates the key methodological decision points for designing a study to mitigate recall bias, contrasting a problematic retrospective approach with a recommended prospective one.

Recall bias is a systematic error that occurs when participants in a study inaccurately remember or report past events or exposures [3]. In the context of social interaction measurement research, this bias poses a significant threat to the validity and reliability of findings, as it can lead to the under- or over-reporting of specific social behaviors or feelings [3]. Understanding its key causes—time lapse, emotional factors, and social desirability—is the first critical step for researchers to design robust studies and develop effective mitigation strategies, thereby ensuring the collection of high-quality, actionable data.

Core Concepts and Definitions

What is Recall Bias?

Recall bias is a phenomenon in which a participant's ability to accurately remember and report past events becomes flawed over time [3]. It is not merely a passive process of forgetting: it can be actively influenced by a person's beliefs, current emotional state, or desire to present themselves favorably [3]. This distinguishes it from recall limitation, the natural human tendency to forget information over time; recall bias involves conscious or unconscious influences that distort recollection [3].

Key Causes of Recall Bias

The three primary causes introduced above are defined as follows:

- Time Lapse: As time passes, memories naturally fade and become more susceptible to distortion. The longer the interval between a social event and its subsequent recall, the greater the chance of inaccuracy [3].

- Emotional Factors: An individual's emotional state during the event or at the time of recall can significantly alter memory. Strong emotions can either enhance or hinder accurate recollection, depending on the individual and context [3].

- Social Desirability: This is a specific type of bias where participants modify their responses to be more socially acceptable or to conform to perceived expectations of the researcher, often leading to an over-reporting of "positive" social behaviors and an under-reporting of "negative" ones [3].

Troubleshooting Guide: Identifying and Resolving Recall Bias

This guide helps researchers diagnose and address common recall bias issues in their study designs.

Problem: Social interaction data appears inconsistent or does not align with other objective measures.

Impact: Compromised data validity, leading to inaccurate conclusions about the relationship between social factors and health outcomes [3] [9].

Context: Most prevalent in retrospective study designs (e.g., case-control studies) and any research relying on self-reported past behavior [3].

| Symptom | Likely Cause | Recommended Solution | Verification Method |
|---|---|---|---|
| Participants with a negative health outcome (cases) report more past social isolation than healthy controls. | Differential recall bias; cases are more motivated to search for and recall exposures they believe caused their condition [3]. | Shift to a prospective study design where social interaction is recorded before outcomes are known. | Compare odds ratios before and after methodology change. |
| Consistent over-reporting of socially desirable activities (e.g., group participation) across all study groups. | Social desirability bias; participants want to present themselves in a positive light [3]. | Use objective measures (e.g., electronic behavioral logs) and assure anonymity. | Triangulate self-report data with objective data to quantify the discrepancy. |
| High levels of inconsistency in participant reports of the same event across multiple data collection waves. | Time lapse and natural memory degradation [3]. | Minimize the delay between events and data collection. Use memory aids like diaries or real-time experience sampling [3] [9]. | Calculate test-retest reliability scores for key social interaction variables. |
| Participant recall is highly detailed for emotionally charged events but vague for neutral ones. | Emotional state selectively enhancing or impairing memory encoding and retrieval [3]. | Calibrate data by combining self-report with collateral reports from friends/family. Use experience sampling to capture feelings closer to the event [9]. | Assess correlation between the emotional valence of reported events and the level of recalled detail. |
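The test-retest check in the table can be computed as an intraclass correlation. The sketch below implements ICC(2,1) in plain Python. In the Shrout and Fleiss notation, this is two-way random effects, absolute agreement, single measurement. Choosing this ICC form is an assumption, and other forms may suit a given design better.

```python
def icc_2_1(scores):
    """ICC(2,1): two-way random effects, absolute agreement, single measurement.

    scores: one row per participant, one column per data-collection wave.
    """
    n = len(scores)     # participants
    k = len(scores[0])  # waves
    grand = sum(map(sum, scores)) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]
    # Partition total variability into participants, waves, and residual error
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_error = ss_total - ss_rows - ss_cols
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


# Usage: made-up weekly counts of social contacts reported in two waves
waves = [[1, 2], [2, 2], [3, 3]]
print(f"ICC(2,1) = {icc_2_1(waves):.2f}")
```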

Frequently Asked Questions (FAQs) for Researchers

Q1: Why is recall bias considered a major limitation in social interaction research? Recall bias is a significant limitation because it can systematically distort the accuracy of collected data [3]. This misclassification can skew the observed associations between social variables (e.g., loneliness) and health outcomes (e.g., cognitive decline, mortality risk), potentially leading to incorrect conclusions about cause and effect [3] [9].

Q2: Which study designs are most vulnerable to recall bias? Case-control studies are considered the most prone to recall bias [3]. In these studies, individuals with a specific condition (cases) may recall past exposures or social interactions differently than those without the condition (controls). Retrospective cohort studies that rely on self-reported past data are also highly susceptible [3].

Q3: What is the difference between recall bias and confirmation bias? Recall bias pertains to the distortion of individual memories of past events. In contrast, confirmation bias is the tendency to selectively seek out or favor information that confirms one's pre-existing beliefs or hypotheses [3]. A researcher with confirmation bias might unconsciously design a questionnaire that leads participants to report social interactions in a way that supports the researcher's initial theory.

Q4: How can experience sampling help mitigate recall bias? Experience sampling (or Ecological Momentary Assessment) involves collecting data about participants' current experiences in real-time and in their natural environment [9]. This methodology drastically reduces the time lapse between a social interaction and its recording, thereby minimizing the opportunity for memory decay or distortion. A 2025 study used this method to effectively capture momentary loneliness and social interactions across different age groups [9].

Q5: Is recall bias always differential? No, recall bias can be non-differential if the degree of misremembering is approximately the same across all groups being compared in a study [3]. However, in social interaction research involving groups with different health statuses (e.g., cognitively unimpaired vs. impaired), the bias is often differential, which can have a more severe impact on the study's validity [3] [9].
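To see why differential recall is more damaging, the sketch below applies recall sensitivity and specificity to hypothetical 2x2 counts. Sensitivity is the share of truly exposed participants who report exposure, and specificity is the share of truly unexposed participants who report none. All numbers are invented for illustration. With identical error rates in both groups, the odds ratio shrinks from 2.5 toward 1 (to about 1.81). When cases recall better than controls, it is inflated instead (to about 3.80).

```python
def observed_counts(exposed, unexposed, sensitivity, specificity):
    """Expected reported (exposed, unexposed) counts given recall accuracy."""
    reported_exposed = sensitivity * exposed + (1 - specificity) * unexposed
    reported_unexposed = (1 - sensitivity) * exposed + specificity * unexposed
    return reported_exposed, reported_unexposed


def odds_ratio(case_exp, case_unexp, ctrl_exp, ctrl_unexp):
    return (case_exp * ctrl_unexp) / (case_unexp * ctrl_exp)


# Hypothetical true counts: (truly exposed, truly unexposed)
CASES = (100, 200)
CONTROLS = (50, 250)

true_or = odds_ratio(*CASES, *CONTROLS)
# Non-differential: same recall accuracy in cases and controls
nondiff_or = odds_ratio(*observed_counts(*CASES, 0.80, 0.90),
                        *observed_counts(*CONTROLS, 0.80, 0.90))
# Differential: cases search memory harder (higher sensitivity, more false positives)
diff_or = odds_ratio(*observed_counts(*CASES, 0.95, 0.85),
                     *observed_counts(*CONTROLS, 0.70, 0.95))
print(f"true OR={true_or:.2f}  non-differential={nondiff_or:.2f}  differential={diff_or:.2f}")
```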

Experimental Protocols for Mitigating Recall Bias

Protocol: Experience Sampling for Real-Time Social Interaction Data

This protocol is adapted from a 2025 study on social interactions and loneliness [9].

Objective: To collect high-fidelity, momentary data on social interactions and associated feelings of loneliness, minimizing reliance on retrospective recall.

Methodology:

- Participant Prompting: Program smartphones or dedicated devices to signal participants at random intervals throughout the day (e.g., 5-8 times per day for one week).

- Data Collection: Upon each prompt, present a short electronic survey asking:

- Since the last prompt, have you had a social interaction? (Y/N)

- If yes, what was the mode of interaction? (e.g., Face-to-face, Phone, Digital)

- How close are you to this interaction partner? (e.g., Stranger, Acquaintance, Close Friend/Family)

- Right now, how lonely do you feel? (e.g., on a 5-point Likert scale)

- Compliance Checks: Monitor participant compliance in real-time and send reminders if needed.

Justification: This protocol captures experiences as they occur or shortly thereafter, thereby directly addressing the key cause of time lapse [3] [9].
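The prompting and compliance steps can be sketched as follows. The scheme places one prompt at a random time inside each of several equal blocks between a start and end time. This stratified-random scheme, the 09:00-21:00 window, and the 80% compliance threshold are illustrative assumptions, not values taken from the cited study.

```python
import random
from datetime import date, datetime, time


def daily_prompt_times(day, n_prompts=6, start=time(9, 0), end=time(21, 0), rng=None):
    """Return n_prompts datetimes: one at a random point inside each of
    n_prompts equal-length blocks between start and end on the given day.

    The window and prompt count are assumed defaults, not study parameters.
    """
    rng = rng or random.Random()
    window_start = datetime.combine(day, start)
    block = (datetime.combine(day, end) - window_start) / n_prompts
    return [window_start + i * block + rng.random() * block for i in range(n_prompts)]


def low_compliance(responses, threshold=0.8):
    """responses maps participant_id -> list of booleans (True = prompt answered).

    Returns IDs whose response rate is below the (hypothetical) threshold,
    e.g. to trigger a reminder.
    """
    return sorted(
        pid for pid, answered in responses.items()
        if answered and sum(answered) / len(answered) < threshold
    )


# Usage: a reproducible one-week schedule, then a compliance check
rng = random.Random(7)
week = [daily_prompt_times(date(2025, 3, d), rng=rng) for d in range(3, 10)]
print(low_compliance({"p01": [True] * 5, "p02": [True, False, False, True, True]}))
```

Drawing one prompt per block keeps prompts unpredictable to the participant while still spreading them across the day. A fully uniform draw could leave long unsampled stretches.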

Protocol: Validating Self-Reports with Objective Measures

Objective: To triangulate self-reported social data with objective metrics to quantify and correct for social desirability bias.

Methodology:

- Parallel Data Streams: For a sub-sample of participants, collect two streams of data concurrently:

- Self-Report: Standard questionnaires on weekly social activity.

- Objective Measures: Use anonymized, privacy-preserving data from mobile phones (e.g., call logs, Bluetooth proximity sensing to estimate co-location) or community center swipe-card logs.

- Data Comparison: Statistically compare the self-reported frequency of social interactions with the frequency indicated by the objective measures.

- Calibration: Develop a calibration algorithm to adjust the self-report data from the larger cohort based on the discrepancy found in the sub-sample.

Justification: This provides a concrete method to identify the presence and magnitude of social desirability bias, moving beyond pure reliance on potentially flawed self-reports.

Research Reagent Solutions: Essential Materials for Robust Social Measurement

The following table details key "reagents" or tools for designing studies resistant to recall bias.

| Research Reagent | Function & Application | Key Benefit in Mitigating Bias |
|---|---|---|
| Experience Sampling App (e.g., custom-built or commercial platforms) | A digital tool for administering real-time surveys on participants' mobile devices [9]. | Directly counters time lapse by capturing data proximal to the event and emotional state. |
| Electronic Diaries / Social Interaction Logs | Digital platforms for participants to manually log their social activities at the end of each day. | Reduces memory decay compared to weekly or monthly questionnaires, lessening the effect of time lapse. |
| Objective Data Logs (e.g., anonymized Bluetooth proximity, validated community use data) | Provides a behavioral metric against which to validate self-reported social interaction data. | Serves as a validation tool to identify and correct for social desirability bias. |
| Validated Ecological Momentary Assessment (EMA) Scales | Brief, psychometrically validated scales designed for repeated real-time measurement of constructs like loneliness [9]. | Ensures that momentary data is reliable and valid, capturing the impact of emotional factors accurately. |
| Structured Interview Protocols with Neutral Wording | Pre-written interview scripts that use open-ended, non-leading questions to elicit recall of social history [3]. | Minimizes the introduction of bias through researcher prompting or suggestion, reducing distortions from social desirability. |

Visualizing Workflows: From Study Design to Data Validation

Study Design Comparison for Recall Bias Risk

Figure: Study Design Impact on Recall Bias Risk

Real-Time Social Interaction Data Collection Workflow

Figure: Real-Time Data Collection Workflow

Frequently Asked Questions (FAQs)

Q1: What is internal validity and why is it critical for my research? Internal validity is the extent to which you can be confident that a cause-and-effect relationship established in your study cannot be explained by other factors. It makes the conclusions of a causal relationship credible and trustworthy. Without high internal validity, an experiment cannot demonstrate a causal link between your treatment and response variables [10].

Q2: What is recall bias and how does it threaten my study's internal validity? Recall bias is a common phenomenon where a participant’s ability to accurately remember and report past events becomes flawed over time. This leads to a distorted or inaccurate memory of past events, experiences, or exposures. It is a significant threat to internal validity because it can systematically skew results, causing under- or over-reporting of events and leading to an inaccurate representation of the true prevalence or occurrence, which ultimately jeopardizes the validity of your research findings [3].

Q3: Which study designs are most vulnerable to recall bias? Case-control studies are the most prone to recall bias. In such studies, individuals with a disease (cases) might be more motivated to recall past exposures they believe caused their illness than individuals without the disease (controls). This can lead to an overestimation of associations between exposures and diseases. Retrospective cohort studies that rely on self-reported data about past lifestyle factors (e.g., diet) are also highly susceptible [3].

Q4: How can I objectively measure social interaction to avoid biases like recall? Using electronic sensors like sociometers can provide objective measurement. Sociometers are wearable devices that use a high-frequency radio transmitter to gauge physical proximity and a microphone to track speech duration. This method removes the human observer, reducing the risk of social desirability bias and the inaccuracies inherent in self-reported or observer-recorded data [11]. Systematic observation protocols like SOSIP also offer valid and reliable objective assessment [12].

Q5: What's the difference between a recall limitation and recall bias? Recall limitation refers to the natural human tendency to forget or distort information over time. Recall bias, on the other hand, is more about the conscious or unconscious influence on memory recollection. Bias occurs when external factors, such as personal beliefs or emotions, shape how you remember specific events [3].

Troubleshooting Guides

Problem: Low Internal Validity Due to Confounding Factors

Your study may have low internal validity if you cannot rule out other explanations for your results [10].

- Step 1: Identify Potential Threats. Common threats include [10] [13]:

- History: An unrelated event occurs during the study that influences outcomes.

- Maturation: Natural changes in participants over time affect results.

- Testing: Taking a test influences scores on subsequent tests.

- Selection Bias: Groups are not comparable at the start of the study.

- Attrition: Differential dropout rates between study groups skew results.

- Step 2: Implement Countermeasures.

- Use a Control Group: A comparable control group counters many threats to single-group studies [10].

- Random Assignment: Randomly assign participants to groups to make them comparable at the baseline, countering selection bias and regression to the mean [10].

- Blinding: Blind participants to the study's aim to counter social interaction effects and demand characteristics [10].
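Random assignment is often done in permuted blocks so that group sizes stay balanced as participants enroll. The sketch below shows one such scheme, a swapped-in example rather than a method prescribed by the sources. The arm labels, block size, and seed are illustrative assumptions.

```python
import random


def block_randomize(participant_ids, arms=("intervention", "control"), block_size=4, seed=None):
    """Permuted-block randomization: within each full block every arm appears equally often.

    Arm names and block size are hypothetical defaults for illustration.
    """
    if block_size % len(arms):
        raise ValueError("block_size must be a multiple of the number of arms")
    rng = random.Random(seed)
    assignments = {}
    for start in range(0, len(participant_ids), block_size):
        block = list(arms) * (block_size // len(arms))
        rng.shuffle(block)  # random order of arms within this block
        for pid, arm in zip(participant_ids[start:start + block_size], block):
            assignments[pid] = arm
    return assignments


# Usage: eight enrolled participants, reproducible with a fixed seed
assignment = block_randomize([f"P{i:03d}" for i in range(1, 9)], seed=2025)
print(assignment)
```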

Problem: Recall Bias in Self-Reported Data

Participants provide inaccurate or distorted information when asked about past events [3].

- Step 1: Minimize Reliance on Memory.

- Use prospective study designs where possible.

- Collect data more frequently to reduce the time between an event and its recall.

- Use diaries or real-time reporting tools instead of retrospective interviews [3].

- Step 2: Improve Interview and Survey Design.

- Use open-ended questions and avoid leading questions.

- Use memory aids like photos or calendars to help participants recall events accurately [3].

- Step 3: Use Objective Measures.

- Where possible, supplement or replace self-reports with objective data. In social interaction research, this could involve using sociometers to measure proximity and talkativeness instead of asking participants to estimate their social activity [11].

Problem: Social Desirability Bias in Interaction Research

Participants alter their behavior or reported behaviors to present themselves in a more favorable light, especially when an observer is present [11].

- Step 1: Reduce Observer Intrusion.

- Use unobtrusive sensors (e.g., sociometers) instead of human observers where ethically and practically possible [11].

- Step 2: Ensure Anonymity and Confidentiality.

- Create a private and comfortable environment for data collection to encourage honest responses [3].

Quantitative Data on Measurement Approaches

The table below summarizes different methods for measuring social interaction, highlighting their validity and susceptibility to bias.

Table 1: Comparison of Social Interaction Measurement Methods

| Method | Key Measures | Internal Validity & Objectivity | Primary Biases / Threats |
|---|---|---|---|
| Self-Report Surveys [12] | Sense of contact with neighbors, number of friends, loneliness. | Lower; subjective and indirect assessment. | Recall bias, social desirability bias [3]. |
| Systematic Human Observation (e.g., early methods) [12] | Counts of individuals, functional activity categories (e.g., sitting, socializing). | Moderate; direct observation but can be intrusive. | Reactivity (observer effect), instrumentation if coding is inconsistent [10] [11]. |
| Electronic Sociometers [11] | Physical proximity duration, speech time (in seconds), group size. | Higher; provides objective, quantitative data less prone to participant manipulation. | Potential perception of surveillance; requires technical validation [11]. |
| Structured Observational Protocol (e.g., SOSIP) [12] | Levels of social interaction based on a defined scale (e.g., Parten's scheme), group size. | Established as valid and reliable through psychometric testing; systematic and objective [12]. | Requires trained observers; potential for instrumentation bias if not consistently applied [10]. |

Experimental Protocols

Protocol 1: Systematically Observing Social Interaction in Parks (SOSIP)

SOSIP is a validated protocol for objectively assessing social interactive behaviors within urban outdoor environments [12].

- Objective: To systematically evaluate human interactive behaviors based on their levels of social interaction and group size.

- Materials: Observation tool (e.g., checklist, app) based on the Social Interaction Scale (SIS), which is derived from Parten's scheme of social activities [12].

- Procedure:

- Training: Observers must be trained to reliably identify and code the different levels of social interaction.

- Observation: In a defined area (e.g., a park), observers scan and record the behavior of individuals or groups.

- Coding: Each observed individual/group is coded for:

- Group Size: The number of people in the immediate social unit.

- Level of Social Interaction: Based on the SIS, which categorizes the degree of bonds and interactivities between individuals [12].

- Data Analysis: Use statistical models (e.g., Hierarchical Linear Models) to explore relationships between environmental features and observed social interaction [12].
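Observer training is usually checked with an inter-rater agreement statistic. The sketch below computes Cohen's kappa for two observers who coded the same scans. The category labels in the example are loosely modeled on Parten's categories and are placeholders, not the published SIS levels.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa: agreement between two observers, corrected for chance."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("need two non-empty code lists of equal length")
    n = len(coder_a)
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    # Agreement expected if both observers assigned codes independently at their own rates
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / n ** 2
    if expected == 1:
        return float("nan")  # both observers used one identical category throughout
    return (observed - expected) / (1 - expected)


# Usage: placeholder category labels for six observed groups
observer_1 = ["solitary", "onlooker", "parallel", "associative", "associative", "solitary"]
observer_2 = ["solitary", "parallel", "parallel", "associative", "associative", "solitary"]
kappa = cohens_kappa(observer_1, observer_2)
print(f"kappa = {kappa:.2f}")
```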

Protocol 2: Using Sociometers to Quantify Social Patterns

This protocol uses wearable sensors to collect objective data on social behavior in naturalistic settings [11].

- Objective: To quantify social interaction patterns (proximity and talkativeness) without the bias of human observation.

- Materials: Sociometers for all participants. These are wearable devices containing a radio transmitter to measure proximity, a microphone to track speech, and an accelerometer to confirm the device is worn [11].

- Procedure:

- Device Distribution: Participants are given sociometers to wear for the duration of the study period (e.g., 12 hours).

- Data Collection: The devices automatically collect:

- Proximity: Inferred from radio signal strength between devices (e.g., within 3 meters).

- Speech: Computed from audio features (raw audio is not stored).

- Movement: Accelerometer data confirms device usage [11].

- Data Processing:

- Divide data into time windows (e.g., 5-minute segments).

- Construct dynamic networks where individuals are linked if proximate for a full time window.

- Analyze tie persistence and strength based on duration of interactions [11].

- Statistical Analysis: Compare mean degrees of interaction, tie strengths, and talkativeness across different groups (e.g., by gender) and contexts [11].
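The network-construction steps can be sketched as below. The sketch assumes proximity detections arrive as (time in integer seconds, person_a, person_b) records sampled once per minute. Both the record format and the 60-second sampling interval are assumptions; the 5-minute window follows the protocol. A pair is linked in a window only if it was detected in every sampling slot of that window, and tie strength is the number of linked windows.

```python
from collections import defaultdict

WINDOW_S = 300  # 5-minute windows, as in the protocol
SAMPLE_S = 60   # assumed proximity sampling interval (hypothetical)


def windowed_networks(detections):
    """detections: iterable of (t_seconds, person_a, person_b) proximity hits.

    Returns {window_index: set of pairs detected in every sampling slot of that window}.
    """
    seen = defaultdict(lambda: defaultdict(set))  # window -> pair -> slots with a hit
    for t, a, b in detections:
        window, offset = divmod(t, WINDOW_S)
        seen[window][frozenset((a, b))].add(offset // SAMPLE_S)
    slots_needed = WINDOW_S // SAMPLE_S
    return {
        window: {pair for pair, slots in pairs.items() if len(slots) == slots_needed}
        for window, pairs in seen.items()
    }


def tie_strength(networks):
    """Tie persistence: number of windows in which each pair was linked."""
    strength = defaultdict(int)
    for pairs in networks.values():
        for pair in pairs:
            strength[pair] += 1
    return dict(strength)


def degrees(networks):
    """Number of linked partners per person in each window."""
    result = {}
    for window, pairs in networks.items():
        counts = defaultdict(int)
        for pair in pairs:
            for person in pair:
                counts[person] += 1
        result[window] = dict(counts)
    return result


# Usage: A and B are co-located for two full windows; A and C only briefly
hits = [(t, "A", "B") for t in range(0, 600, 60)] + [(0, "A", "C"), (60, "C", "A")]
nets = windowed_networks(hits)
print(tie_strength(nets))
```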

Research Reagent Solutions

Table 2: Essential Materials for Objective Social Interaction Research

| Item | Function |
|---|---|
| Sociometer | A wearable sensor that objectively quantifies key aspects of social interaction, including physical proximity to others and individual talkativeness, without storing identifiable audio data [11]. |
| Social Interaction Scale (SIS) | A psychometrically established scale or coding scheme used to categorize observed social behaviors into different levels of interaction (e.g., from solitary to cooperative play), providing a structured framework for systematic observation [12]. |
| Systematic Observation Protocol (e.g., SOSIP) | A standardized methodology that guides researchers on how to consistently observe, record, and code social behaviors in a field setting, ensuring strong internal validity and reliability across different observers and sessions [12]. |

Methodological Visualizations

Conceptual Definitions and Core Differences

This section clarifies the fundamental concepts of recall bias and recall limitation, providing a foundation for understanding their distinct impacts on research.

What is Recall Bias?

Recall bias is a systematic error that occurs when participants in a study do not remember previous events or experiences accurately or omit details. It is not a random error: its direction is often predictable, because it tends to produce over- or under-reporting in ways directly related to the research hypothesis or to a participant's personal experiences [1]. For example, in a case-control study, individuals with a specific disease (cases) may be more motivated to recall and report past exposures they believe contributed to their illness, compared to healthy controls [3]. This systematic difference in recall between compared groups threatens the internal validity of a study by skewing the observed associations between exposures and outcomes [3] [14] [4].

What is Recall Limitation?

Recall limitation refers to the natural constraints and fallibility of human memory [3] [14]. Unlike the systematic nature of recall bias, recall limitation involves more random errors that do not consistently favor one outcome over another [14]. It encompasses the innate decline in memory's precision and accessibility over time, often due to passive processes like decay [3] [15]. Recall limitation is a broader concept that acknowledges the inherent imperfections of memory as a cognitive system, without implying a directional influence on research findings [14].

Key Conceptual Distinction

The core difference lies in the nature of the memory error.

- Recall Bias is a systematic error influenced by external factors, beliefs, or emotions, leading to skewed data in a particular direction [3] [14].

- Recall Limitation is a random error stemming from the inherent, natural limitations of human memory capacity and its tendency to decay over time [3] [14].

Table 1: Core Conceptual Differences Between Recall Bias and Recall Limitation

| Feature | Recall Bias | Recall Limitation |
|---|---|---|
| Nature of Error | Systematic, non-random [14] | Random, non-systematic [14] |
| Primary Cause | Influence of beliefs, emotions, disease status, or social desirability [3] [1] | Natural memory decay, capacity constraints, and passive forgetting [3] [15] |
| Effect on Data | Can overestimate or underestimate associations; threatens internal validity [3] [4] | Reduces overall precision and accuracy of data [14] |
| Specificity to Groups | Often affects study groups differently (e.g., cases vs. controls) [3] [14] | Tends to affect all participants more uniformly [14] |
| Potential for Mitigation | Can often be reduced through careful study design [14] [4] | More challenging to overcome as it is inherent to human memory [14] |

Quantitative Evidence and Data Presentation

This section presents empirical data demonstrating the effects of recall bias and memory decay, highlighting their quantifiable impact on research outcomes.

Evidence from a large-scale health services study provides a clear example of recall bias in practice. The study compared self-reported general practitioner (GP) visits against national insurer claims data over a 12-month period [16]. The results demonstrated not only an overall under-reporting but also that the direction of the error changed depending on the recall period, indicating a complex pattern of bias beyond simple forgetting [16].

Table 2: Empirical Evidence of Recall Bias in Self-Reported Health Service Use

| Recall Period | Self-Reported GP Visits (Mean) | Administrative Data GP Visits (Mean) | Direction of Error | Percentage Discrepancy |
|---|---|---|---|---|
| 0-6 Months | 7.1 | 5.5 | Over-reporting [16] | +35% over-reporting [16] |
| 7-12 Months | 5.4 | 8.4 | Under-reporting [16] | -36% under-reporting [16] |
| Full 12 Months | 12.5 | 14.5 | Overall under-reporting [16] | -14% under-reporting (requires 16% inflation to match claims) [16] |

Research on episodic memory further illuminates how memory fades over time, contributing to recall limitation. A study investigating memory recall over a week found that while the gist or central details of an event are retained, peripheral details are forgotten more rapidly [17]. This time-dependent decay is a hallmark of natural memory limitation.

Table 3: Memory Decay and Detail Retention Over Time

| Detail Type | Definition | Recall Stability Over Time |
|---|---|---|
| Central Details | Information essential to the storyline or event's core meaning [17] | Higher stability; retained over a week [17] |
| Peripheral Details | Contextual and perceptual information that enriches the narrative [17] | Lower stability; forgotten more rapidly over a week [17] |

Experimental Protocols for Investigating Memory Phenomena

This section outlines established experimental paradigms used in cognitive psychology to study the mechanisms of memory, including those relevant to recall bias and limitation.

The Think/No-Think (TNT) Paradigm

The TNT paradigm investigates intentional forgetting, a cognitive control mechanism where individuals voluntarily suppress the retrieval of specific memories [18].

- Procedure: Participants first study cue-target word pairs (e.g., ordeal-roach). They are then repeatedly trained to produce the target (roach) when shown the cue (ordeal). In the critical TNT phase, cues are presented again, but participants are instructed to either think about the target ("Think" items) or actively avoid thinking about the target ("No-Think" items) when they see the cue [18].

- Outcome Measurement: In a final memory test, recall for "No-Think" items is typically worse than for "Think" items and baseline items, demonstrating suppression-induced forgetting [18].

- Application: This paradigm models how individuals might actively avoid recalling unpleasant or traumatic memories, a potential mechanism in recall bias [18]. Impaired ability to suppress memories has been linked to conditions like post-traumatic stress disorder [18].
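The outcome measure amounts to comparing recall proportions across conditions. This is a minimal sketch that assumes final-test results are coded as hypothetical (condition, recalled) pairs. Real studies may use several test types and score them separately.

```python
from statistics import mean

# Hypothetical final-test outcomes: (condition, recalled?)
trials = [
    ("think", True), ("think", True), ("think", False), ("think", True),
    ("no-think", False), ("no-think", True), ("no-think", False), ("no-think", False),
    ("baseline", True), ("baseline", False), ("baseline", True), ("baseline", False),
]


def recall_rates(records):
    """Proportion of targets recalled in each condition."""
    by_condition = {}
    for condition, recalled in records:
        by_condition.setdefault(condition, []).append(1 if recalled else 0)
    return {c: mean(hits) for c, hits in by_condition.items()}


def suppression_induced_forgetting(rates):
    """Baseline minus No-Think recall; positive values mean No-Think items fell below baseline."""
    return rates["baseline"] - rates["no-think"]


rates = recall_rates(trials)
print(rates, suppression_induced_forgetting(rates))
```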

The Retrieval-Induced Forgetting (RIF) Paradigm

The RIF paradigm examines incidental forgetting that occurs as a side effect of retrieving related information [18].

- Procedure: The experiment involves three phases:

  - Study: Participants learn category-exemplar pairs (e.g., Fruit-Orange, Fruit-Lemon, Drink-Vodka).

  - Retrieval Practice: For half of the categories, participants practice retrieving half of the exemplars (e.g., Fruit: Or_ for Orange, making them Rp+ items). The other exemplars from practiced categories (e.g., Lemon, Rp- items) and all exemplars from unpracticed categories (e.g., Vodka, Nrp items) are not practiced.

  - Final Test: Participants are tested on all studied exemplars [18].

- Outcome Measurement: The classic RIF effect is observed when recall for Rp- items (Lemon) is worse than for Nrp items (Vodka). This is theorized to occur because retrieving Orange inhibits the competing memory of Lemon [18].

- Application: RIF demonstrates how the act of remembering certain facts can actively and incidentally weaken the recall of other, related information, illustrating a cognitive mechanism behind recall limitation [18].
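Both effects described above reduce to differences in recall proportions. This minimal sketch uses hypothetical scores. Facilitation is Rp+ minus Nrp recall, and the RIF effect is Nrp minus Rp- recall; positive values on both indicate the classic pattern.

```python
from statistics import mean

# Hypothetical final-test scores (1 = recalled, 0 = not recalled) by item type
scores = {
    "Rp+": [1, 1, 1, 0],  # practiced exemplars, e.g. Orange
    "Rp-": [0, 1, 0, 0],  # unpracticed exemplars of practiced categories, e.g. Lemon
    "Nrp": [1, 0, 1, 0],  # exemplars of unpracticed categories, e.g. Vodka
}


def rif_effects(item_scores):
    """Facilitation (Rp+ minus Nrp) and retrieval-induced forgetting (Nrp minus Rp-)."""
    rates = {item_type: mean(hits) for item_type, hits in item_scores.items()}
    return {
        "facilitation": rates["Rp+"] - rates["Nrp"],
        "rif": rates["Nrp"] - rates["Rp-"],
    }


print(rif_effects(scores))
```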

Visualization of Memory Processes and Research Designs

The following diagrams illustrate the key processes and study designs discussed in this guide.

Diagram 1: Pathways to Memory Error. This diagram contrasts how systematic influences lead to Recall Bias, while natural cognitive constraints lead to Recall Limitation.

Diagram 2: Recall Bias in a Case-Control Study. This diagram shows how differential recall between case and control groups leads to a systematic skewing of the study's results.

The Researcher's Toolkit: Mitigation Strategies and Reagents

This section provides a practical set of strategies and considerations for designing research that is robust against recall bias and limitation.

Study Design and Data Collection Solutions

Table 4: Strategies to Mitigate Recall Bias and Limitation

| Tool / Strategy | Primary Function | Application Context |
|---|---|---|
| Prospective Cohort Design | Eliminates long-term recall by collecting exposure data before outcomes occur [1]. | Gold standard for avoiding recall bias when studying disease etiology. |
| Shorter Recall Periods | Minimizes natural memory decay (limitation) and reduces opportunity for systematic distortion (bias) [4]. | Preferable in surveys and questionnaires; more accurate for frequent events. |
| Memory Aids & Prompts | Uses visual aids, photos, or diaries to trigger more accurate recall [3] [4]. | Useful in retrospective interviews to improve accuracy of event dating and details. |
| Validated Self-Report Instruments | Ensures questions are phrased to minimize social desirability bias and have been tested for reliability [4]. | Critical for any study relying on questionnaires or surveys. |
| Objective Measures | Replaces self-report with biological assays, administrative data, or electronic records [3] [16]. | Provides a gold-standard comparison; used to validate self-reported data. |
| Blinded Interviewing | Prevents interviewers from influencing participants based on the interviewer's knowledge of the hypothesis or participant's group [1]. | Essential in case-control studies to prevent eliciting biased responses. |

Frequently Asked Questions (FAQs) for Troubleshooting

Q1: Our study must be retrospective. What is the single most important thing we can do to reduce recall bias? A1: Meticulously design your data collection instrument. Use blinded interviewing so the interviewer does not know the participant's case/control status, and employ neutral, non-leading questions that are phrased identically for all participants [3] [1]. Where possible, use memory aids like calendars or event histories to structure the recall task [3].

Q2: Is recall bias always differential? A2: No. While recall bias is often differential—meaning the error is different between study groups (e.g., cases vs. controls)—it can also be non-differential. Non-differential recall bias occurs when the degree of misclassification is similar across all groups, which typically biases results toward the null (underestimation of an association) [3].
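You can compute the expected 2x2 table directly from recall sensitivity and specificity. Doing so shows why non-differential error tends to pull estimates toward the null, while differential error can push them away from it. This worked sketch uses invented counts and accuracy values, not data from the cited studies. It assumes a binary exposure, and it assumes recall accuracy depends only on group membership.

```python
def observed_counts(exposed, unexposed, sensitivity, specificity):
    """Expected (reported exposed, reported unexposed) counts given recall accuracy.

    sensitivity: probability that a truly exposed person reports the exposure
    specificity: probability that a truly unexposed person reports no exposure
    """
    reported_exposed = exposed * sensitivity + unexposed * (1 - specificity)
    reported_unexposed = exposed + unexposed - reported_exposed
    return reported_exposed, reported_unexposed


def odds_ratio(case_exp, case_unexp, ctrl_exp, ctrl_unexp):
    return (case_exp * ctrl_unexp) / (case_unexp * ctrl_exp)


# Hypothetical true counts: (exposed, unexposed)
CASES = (100, 100)
CONTROLS = (50, 150)

true_or = odds_ratio(*CASES, *CONTROLS)

# Non-differential: identical recall accuracy in both groups
nd_or = odds_ratio(*observed_counts(*CASES, 0.8, 0.9),
                   *observed_counts(*CONTROLS, 0.8, 0.9))

# Differential: cases recall (and over-report) exposure more than controls do
diff_or = odds_ratio(*observed_counts(*CASES, 0.95, 0.9),
                     *observed_counts(*CONTROLS, 0.7, 0.95))

print(f"true OR {true_or:.2f}, non-differential {nd_or:.2f}, differential {diff_or:.2f}")
```

With these hypothetical inputs the true odds ratio is 3.0. The non-differential estimate falls to about 2.16, and the differential estimate rises to about 4.10.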

Q3: How does the time lapse between an event and its recall affect memory? A3: The passage of time is a primary driver of both recall bias and limitation. Memories naturally fade and become less detailed (decay), leading to recall limitation [3] [15]. Furthermore, a longer time lapse allows for more influence from subsequent experiences, beliefs, and emotions, which can systematically distort memory (recall bias) [3] [1]. Therefore, shorter recall periods are generally more reliable [4].

Q4: We are using self-reported data for an economic evaluation. How should we handle potential inaccuracies? A4: The empirical evidence suggests conducting a sensitivity analysis [16]. For example, if self-reported service use is known to be under-reported by approximately 14% over 12 months, you should inflate your self-reported data by this factor (e.g., 16%) in a sensitivity analysis to test the robustness of your cost-effectiveness results [16]. Where crucial and possible, seek to use administrative data as the primary source [16].
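The 14% and 16% figures describe the same 12-month gap in the self-reported health service use table. Self-reports fall about 14% short of the claims data (12.5 vs. 14.5 visits), so they must be multiplied by 14.5 / 12.5 = 1.16 to match. Here is a minimal sketch of the sensitivity calculation, assuming a hypothetical flat cost per GP visit:

```python
# Full 12-month means from the self-reported health service use table [16]
SELF_REPORTED_MEAN = 12.5
ADMIN_MEAN = 14.5

DISCREPANCY = (SELF_REPORTED_MEAN - ADMIN_MEAN) / ADMIN_MEAN  # about -0.138, i.e. ~14% under-reporting
INFLATION_FACTOR = ADMIN_MEAN / SELF_REPORTED_MEAN           # 1.16, i.e. inflate by ~16%


def sensitivity_costs(visits_per_person, cost_per_visit, factor=INFLATION_FACTOR):
    """Return (base-case cost, inflated cost) from self-reported visit counts.

    cost_per_visit is a hypothetical flat unit cost supplied by the analyst.
    """
    base = sum(visits_per_person) * cost_per_visit
    return base, base * factor


print(f"{DISCREPANCY:.1%}", sensitivity_costs([2, 0, 5], 40.0))
```

For example, with invented visit counts and unit cost, `sensitivity_costs([2, 0, 5], 40.0)` gives a base case of 280.0 and an inflated scenario of about 324.8.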

Q5: What is the key difference between "recall bias" and "confirmation bias"? A5: Recall bias pertains to the accuracy of a participant's memory of past events [3]. Confirmation bias, in contrast, is a cognitive bias that primarily affects researchers, who may selectively seek or interpret information in ways that confirm their pre-existing hypotheses [3]. Both are detrimental, but they operate at different stages of the research process and affect different people.

Frequently Asked Questions (FAQs)

1. What makes case-control and retrospective cohort studies "vulnerable" designs? These observational study designs are considered "vulnerable" primarily because they are retrospective in nature, meaning they look back in time after the outcome has already occurred. This makes them highly susceptible to several biases, most notably recall bias and selection bias, which can threaten the validity of their findings [19] [20] [21]. They offer less control over how original data was collected, as this data was often recorded for clinical rather than research purposes [22].

2. What is the key difference in how participants are selected for these two study designs? The fundamental difference lies in how the study population is grouped.

- Case-Control Studies: Researchers start by identifying individuals based on their outcome status (those with the disease, the "cases," and those without, the "controls") and then look backward to compare their past exposures [20] [23].

- Retrospective Cohort Studies: Researchers start by identifying individuals based on their exposure status in the past (those exposed to a risk factor and those unexposed) and then look forward in time (using existing data) to see which group developed the outcome [19] [22].

3. How does recall bias specifically affect these studies? Recall bias is a systematic error that occurs when participants' ability to remember past exposures is flawed [3]. It is a dominant concern, especially in case-control studies [20] [3]. Individuals who have developed a disease (cases) may recall past exposures differently or more vividly than healthy controls because they are motivated to find a cause for their illness [20]. For example, a mother who has given birth to a child with a birth defect may scrutinize and recall every medication she took during pregnancy more carefully than a mother who gave birth to a healthy child. This can lead to an overestimation of the association between an exposure and an outcome [20].

4. What are some common confounding biases in these study designs? Confounding is a situation where a third, unaccounted-for variable is associated with both the exposure and the outcome, creating a false impression of a relationship between them [24] [23]. For instance, if a study finds an association between coffee drinking and lung cancer, smoking could be a confounder because it is associated with both coffee drinking and lung cancer. Failure to measure and adjust for known confounders during the analysis is a major limitation of these designs [23].

5. Can these studies prove causation? Generally, no. While they are powerful for identifying associations and generating hypotheses, case-control and retrospective cohort studies cannot definitively establish causation on their own [20] [21]. Their retrospective nature makes it difficult to prove that the exposure definitively preceded the outcome, and they are more vulnerable to unmeasured confounding compared to prospective experimental designs [20].

Troubleshooting Guides

Issue 1: Managing Recall Bias in Data Collection

Problem: Data on exposures relies on participants' imperfect memories, leading to inaccurate or differentially reported information between cases and controls [20] [25].

Solutions:

- Minimize Time Lapse: Shorten the delay between the event of interest and data collection as much as possible [3].

- Use Objective Measures: Whenever feasible, use pre-existing objective data sources (e.g., medical records, pharmacy records, employment records) instead of relying solely on participant self-reporting [24] [22].

- Design Neutral Questionnaires: Use carefully phrased, open-ended questions and avoid leading questions that suggest a particular answer [3].

- Implement Memory Aids: In prospective data collection, use tools like diaries, logs, or visual prompts (photos, videos) to help participants record events in real-time or trigger more accurate recall [25] [3].

- Blind Interviewers: If interviews are conducted, keep the interviewers "blinded" to the participant's case or control status to prevent unconsciously influencing responses [20].

Issue 2: Selecting an Appropriate Control Group

Problem: An inappropriate control group can introduce severe selection bias, making the results uninterpretable [20] [23].

Solutions:

- Source Population: Ensure that both cases and controls are selected from the same underlying source population (e.g., the same hospital, community, or practice) [19].

- Clear Eligibility Criteria: Apply specific inclusion and exclusion criteria so that controls are people who would have been included as cases had they developed the outcome, and who are selected independently of their exposure status [23].

- Matching: Consider matching controls to cases on key characteristics (e.g., age, gender, socioeconomic status) to ensure comparability. However, avoid "over-matching" on factors that might be part of the exposure pathway [23].

- Multiple Control Groups: If uncertainty exists, using two different types of control groups (e.g., one from a hospital and one from the community) can strengthen the study's validity. If results are consistent across both groups, confidence in the findings increases.

Issue 3: Handling Incomplete or Poor-Quality Pre-Existing Data

Problem: Retrospective studies often rely on data not designed for research (e.g., clinical charts, billing codes), which can be incomplete, inaccurate, or inconsistently recorded [21] [24] [22].

Solutions:

- Pilot Validation Study: Before full-scale data extraction, pilot your data collection methods on a small sample of records to check for data availability and consistency [24].

- Create a Detailed Manual of Operations: Develop a rigorous protocol that explicitly defines every variable, specifies where to find it in the source, and provides clear rules for handling ambiguous or missing information [24].

- Train Data Abstractors: Conduct standardized training sessions for all personnel involved in data extraction and perform inter-rater reliability checks to ensure consistency across different abstractors [24].

- Leverage Technology: Use standardized data collection tools like REDCap to structure the abstraction process. Where possible, use automated electronic health record (EHR) queries, but ensure they are validated and adapted for each site's unique EHR environment [24].
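The Manual of Operations defines required fields, allowed values, and ranges. These can be expressed as explicit rules and applied to every abstracted record. REDCap configures such checks through its own field validation settings. The Python below is not REDCap's API; it is only a platform-neutral sketch, and the variable names and limits are hypothetical.

```python
from datetime import date

# Hypothetical Manual of Operations entries: variable -> type and allowed range/values
MOO = {
    "age_years": {"type": int, "min": 18, "max": 110},
    "sex": {"type": str, "values": {"F", "M", "Unknown"}},
    "admission_date": {"type": date},
}


def validate(record, moo=MOO):
    """Return a list of problems for one abstracted record; an empty list means it passes."""
    problems = []
    for name, spec in moo.items():
        value = record.get(name)
        if value is None:
            problems.append(f"{name}: missing")
            continue
        if not isinstance(value, spec["type"]):
            problems.append(f"{name}: expected {spec['type'].__name__}")
            continue
        if "min" in spec and value < spec["min"]:
            problems.append(f"{name}: below {spec['min']}")
        if "max" in spec and value > spec["max"]:
            problems.append(f"{name}: above {spec['max']}")
        if "values" in spec and value not in spec["values"]:
            problems.append(f"{name}: not an allowed value")
    return problems


print(validate({"age_years": 7, "sex": "X"}))
```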

Issue 4: Managing Confounding Variables

Problem: An observed association is distorted by a third variable (confounder) that is related to both the exposure and the outcome [20] [24].

Solutions:

- Study Design Stage: Use restriction (only including subjects with certain characteristics) or matching to make groups more comparable at the outset.

- Data Analysis Stage: Use statistical techniques to adjust for confounders, such as:

  - Stratification: Analyzing the data within separate layers (strata) of the confounder.

  - Multivariable Regression Models: Using models that estimate the effect of the exposure while holding the confounders constant.
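Stratification can be illustrated with the coffee, smoking, and lung cancer example from the FAQ above. The counts below are invented so that coffee has no effect within either smoking stratum. The Mantel-Haenszel estimator, one standard way to pool stratum-specific odds ratios, recovers that null effect, while the crude (collapsed) odds ratio does not.

```python
# Hypothetical counts per stratum: (a, b, c, d) =
#   (exposed cases, unexposed cases, exposed controls, unexposed controls)
# Exposure = coffee drinking; stratifying variable (confounder) = smoking
strata = {
    "smokers": (80, 20, 40, 10),
    "non-smokers": (10, 40, 30, 120),
}


def odds_ratio(a, b, c, d):
    return (a * d) / (b * c)


def crude_table(tables):
    """Collapse strata into one 2x2 table by summing each cell."""
    return tuple(sum(cells) for cells in zip(*tables))


def mantel_haenszel_or(tables):
    """Mantel-Haenszel pooled odds ratio across strata."""
    numerator = sum(a * d / (a + b + c + d) for a, b, c, d in tables)
    denominator = sum(b * c / (a + b + c + d) for a, b, c, d in tables)
    return numerator / denominator


crude = odds_ratio(*crude_table(strata.values()))
adjusted = mantel_haenszel_or(strata.values())
print(f"crude OR {crude:.2f}, Mantel-Haenszel OR {adjusted:.2f}")
```

Here the crude odds ratio is about 2.79. Each stratum-specific odds ratio and the Mantel-Haenszel summary equal 1.0, showing that the apparent coffee effect comes entirely from smoking.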

Experimental Protocols for Key Mitigation Strategies

Protocol 1: Designing a High-Frequency Data Collection Diary to Minimize Recall Decay

Objective: To obtain accurate data on highly variable exposures or outcomes (e.g., dietary intake, symptom severity, social interactions) by minimizing the reliance on long-term memory.

Materials:

- Mobile data collection platform (e.g., ODK, REDCap)

- Smartphones or tablets for participants

- Incentive structure (e.g., mobile data credit, small payments)

Methodology:

- Define Variables: Identify the specific behaviors or experiences to be measured (e.g., "number of social interactions lasting >5 minutes per day").

- Develop Short-Form Survey: Create a very brief questionnaire focused only on the essential variables. The survey should take less than 5 minutes to complete.

- Randomize Frequency: To assess the impact of recall period, randomize participants to receive the survey at different frequencies (e.g., daily, weekly, monthly) [25].

- Pilot and Train: Pilot the survey with a small group to ensure clarity. Train participants on how to use the mobile platform.

- Deploy and Monitor: Send surveys at the prescribed frequency. Use automated reminders to maximize response rates.

- Data Validation: Compare data collected at different frequencies to quantify recall decay and establish the optimal recall period for the variable of interest [25].
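The randomization and validation steps can be sketched as follows. The arm labels and the fixed seed are assumptions for illustration. So is the convention of converting each report to a per-day count (for example, dividing a weekly total by 7).

```python
import random
from statistics import mean

ARMS = ("daily", "weekly", "monthly")  # hypothetical recall-period arms


def randomize(participant_ids, arms=ARMS, seed=2024):
    """Balanced allocation: shuffle IDs with a fixed seed, then deal them to arms in turn."""
    rng = random.Random(seed)  # fixed seed keeps the allocation reproducible and auditable
    ids = list(participant_ids)
    rng.shuffle(ids)
    return {pid: arms[i % len(arms)] for i, pid in enumerate(ids)}


def relative_to_daily(per_day_counts_by_arm):
    """Mean per-day count in each arm divided by the daily arm's mean (1.0 = no apparent decay)."""
    reference = mean(per_day_counts_by_arm["daily"])
    return {arm: mean(counts) / reference for arm, counts in per_day_counts_by_arm.items()}


allocation = randomize(f"P{i:02d}" for i in range(30))
print(relative_to_daily({"daily": [4.0, 6.0], "weekly": [3.0, 5.0], "monthly": [2.0, 3.0]}))
```

Ratios below 1.0 for the weekly and monthly arms would be consistent with recall decay. Because arms are randomized, such differences are more plausibly attributable to the recall period than to who was assigned to each arm.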

Protocol 2: Standardizing Multi-Site Data Abstraction from Medical Records

Objective: To ensure consistent, high-quality, and reliable data extraction from medical records across multiple research sites.

Materials:

- Electronic data capture system (e.g., REDCap)

- Data collection form with branching logic and validation checks

- Manual of Operations (MoO) document

Methodology:

- Develop the Manual of Operations (MoO): Create a comprehensive document that defines every variable, its data type (e.g., integer, string, date), possible values, and, crucially, its precise location in the EHR or chart (e.g., "Vital Signs flowsheet, 24 hours post-admission").

- Build the Data Collection Tool: Program the electronic form based on the MoO. Use features like required fields, range checks, and branching logic to minimize data entry errors.

- Train Site Investigators and Abstractors:

  - Conduct a centralized training webinar for all site personnel.

  - Have all abstractors review the same 5-10 practice records and compare results to resolve discrepancies in interpretation.

- Perform Inter-Rater Reliability (IRR) Checks: Mandate that a subset of records (e.g., 5-10%) at each site is abstracted independently by two reviewers. Calculate a kappa statistic or percent agreement to ensure consistency [24].

- Ongoing Quality Assurance: Hold regular meetings with site PIs to troubleshoot issues. Perform periodic central audits of submitted data to identify outliers or systematic errors.
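For the IRR check, Cohen's kappa corrects percent agreement for the agreement expected by chance: kappa = (p_o - p_e) / (1 - p_e). Here is a minimal sketch for two abstractors coding the same records on a nominal variable. Library implementations, such as scikit-learn's `cohen_kappa_score`, also exist.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two abstractors who coded the same records on a nominal variable."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two non-empty code lists of equal length")
    n = len(rater_a)
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of the raters' marginal proportions, summed over categories
    p_expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if p_expected == 1:
        raise ValueError("kappa is undefined when both raters use one identical category")
    return (p_observed - p_expected) / (1 - p_expected)


abstractor_1 = ["yes", "yes", "no", "no", "yes", "no", "yes", "yes", "no", "no"]
abstractor_2 = ["yes", "no", "no", "no", "yes", "no", "yes", "yes", "yes", "no"]
print(cohens_kappa(abstractor_1, abstractor_2))
```

In this hypothetical example the abstractors agree on 8 of 10 records and each uses "yes" and "no" five times. So p_o = 0.8, p_e = 0.5, and kappa = 0.6.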

Research Reagent Solutions

Table: Essential Materials for Robust Retrospective Research

| Item | Function in Research |
|---|---|
| REDCap (Research Electronic Data Capture) | A secure web platform for building and managing online surveys and databases that can be configured to support HIPAA compliance. It helps standardize data collection across multiple sites [24]. |
| Manual of Operations (MoO) | A detailed protocol document that ensures all researchers define and collect data in a consistent manner, which is critical for data reliability [24]. |
| Structured Query Language (SQL) | A query language used to write scripts for automated data extraction from electronic health records, reducing manual abstraction time and errors [24]. |
| PheKB (Phenotype KnowledgeBase) | A publicly available online repository of electronic health record algorithms that can be used or adapted for standardized case ascertainment across sites [24]. |
| Inter-Rater Reliability (IRR) Metrics | Statistical measures (e.g., Cohen's Kappa) used to quantify the agreement between different data abstractors, providing a measure of data quality and consistency [24]. |

Diagrams of Methodological Relationships

Diagram: Bias Pathways in Vulnerable Study Designs

Diagram: Mitigation Strategies Workflow

Advanced Data Collection Methods to Minimize Memory Reliance

Ecological Momentary Assessment (EMA) is a research method that involves collecting real-time data on participants' experiences, behaviors, and moods as they occur in their natural environments [26]. This approach, also known as the Experience Sampling Method (ESM), minimizes recall bias and provides a more dynamic and accurate picture of an individual's subjective experiences compared to traditional retrospective reports [27]. By capturing data within the context of daily life, EMA allows researchers to study the micro-processes that unfold over time, such as the triggers and antecedents of specific behaviors or emotional states [26] [27].

In the specific context of mitigating recall bias in social interaction measurement, EMA's strength lies in its ability to capture the nuances of social contexts and subjective social experiences as they happen, rather than relying on summaries that may be distorted by memory or beliefs [28].

Essential EMA Protocols & Methodologies

EMA employs distinct data collection protocols, each suited to different research questions. The following workflow outlines the core stages of implementing these methodologies, from protocol selection to data analysis.

Core Sampling Protocols

Table: EMA Data Collection Protocols

| Protocol Type | Description | Best Use Cases | Example |
|---|---|---|---|
| Event-Contingent [26] [27] | Participant initiates report when a predefined event occurs. | Studying specific, identifiable events or behaviors. | Recording details after every social interaction exceeding 5 minutes [27]. |
| Signal-Contingent (Random) [26] [27] | Participant responds to random signals ("beeps") throughout the day. | Obtaining a representative sample of experiences and estimating risk of antecedents [26]. | Random prompts to report current mood, stress, and social context [26]. |
| Time-Contingent [26] [27] | Participant reports at predetermined times (fixed or stratified). | Capturing experiences at predictable times or ensuring coverage across the day. | Beginning-of-day and end-of-day reports [26]. |
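A common approach for signal-contingent designs is to divide the waking day into equal blocks and draw one random prompt per block. This keeps prompts unpredictable while still covering the whole day. The function below is a hypothetical sketch of that logic, since most EMA platforms provide their own scheduler. The block scheme and the 30-minute minimum gap are assumptions.

```python
import random
from datetime import datetime, timedelta


def stratified_random_prompts(day_start, day_end, n_prompts, min_gap_minutes=30, seed=None):
    """Signal-contingent schedule: one random prompt inside each of n equal time blocks.

    Redraws the whole schedule until consecutive prompts are at least min_gap_minutes apart.
    """
    rng = random.Random(seed)
    block = (day_end - day_start) / n_prompts
    min_gap = timedelta(minutes=min_gap_minutes)
    for _ in range(1000):
        prompts = [day_start + i * block + rng.random() * block for i in range(n_prompts)]
        if all(later - earlier >= min_gap for earlier, later in zip(prompts, prompts[1:])):
            return prompts
    raise RuntimeError("could not satisfy the minimum gap; reduce n_prompts or min_gap_minutes")


schedule = stratified_random_prompts(datetime(2024, 5, 1, 9), datetime(2024, 5, 1, 21), 6, seed=1)
print([t.strftime("%H:%M") for t in schedule])
```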

Advanced Implementation: μEMA and GEMA

To further reduce participant burden and increase data density, consider these advanced methodologies:

- Microinteraction-based EMA (μEMA): This method uses smartwatches to deliver prompts that can be answered with a single tap in just a few seconds. A pilot study found that despite an 8x increase in interruptions, μEMA had higher compliance rates and was perceived as less distracting than smartphone-based EMA [28].

- Geographic EMA (GEMA): This integrates EMA with GPS to capture real-time emotional states alongside precise location-based environmental exposure data. Research shows GEMA effectively mitigates recall bias inherent in methods like the Day Reconstruction Method (DRM), which can underestimate factors like short-term happiness and environmental exposure [29].

The Researcher's Toolkit: Essential Materials & Solutions

Table: Key Reagents and Solutions for an EMA Study

| Item / Solution | Function / Rationale | Technical Notes |
|---|---|---|
| Smartphone Application [30] | Primary platform for signal delivery and data collection; offers ubiquity and user familiarity. | Select apps that provide full control over sampling schedules, data security, and export options. |
| Smartwatch (for μEMA) [28] | Enables microinteractions; minimizes device access time and perceived burden, allowing for higher-density sampling. | Ensure the device platform (e.g., Android) allows for precise timing and reliable logging [26]. |
| Web Server & Database [26] | Backend infrastructure for receiving, storing, and managing the high volume of longitudinal EMA data. | A 3-tiered design (client, web server, database) is common. Test for synchronous communication and data integrity [26]. |
| Pilot Participants | Critical for testing the entire system (technology, question clarity, and participant burden) before main study launch. | Use pilot feedback to optimize the frequency and timing of prompts to maximize data collection without overburdening participants [26]. |
| Validated Question Scales | Ensures the reliability and validity of measured constructs (e.g., mood, stress, social connectedness). | Adapt questions for the momentary context and small screen; pre-test for clarity [27]. |
| Incentive Structure | A strategy to enhance and maintain participant adherence over the study duration. | Can include compensation, feedback, or gamification elements [26] [30]. |

Troubleshooting Common EMA Challenges

Low Participant Compliance and Adherence

- Problem: Participants are not responding to prompts, leading to missing data and potential bias.

- Solutions:

- Optimize Burden: Balance sampling frequency and survey length. Use μEMA for high-density sampling [28]. Pilot studies are essential to find the optimal frequency [26].

- User-Friendly Design: Employ an intuitive interface with clear questions. A positive user experience significantly impacts engagement [30].

- Motivational Strategies: Incorporate incentives, provide feedback on progress, and use motivational messaging to encourage participation [30].

- Regular Monitoring: Actively monitor compliance rates so you can identify and re-engage struggling participants quickly [26].
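You can automate compliance monitoring from the platform's prompt log. This minimal sketch assumes a hypothetical log of (participant_id, answered) pairs, one for every delivered prompt. The 80% follow-up threshold is illustrative only.

```python
from collections import defaultdict


def compliance_report(prompt_log, threshold=0.8):
    """prompt_log: iterable of (participant_id, answered) pairs, one per delivered prompt.

    Returns (rates, flagged): per-participant response rates and the sorted IDs
    of participants whose rate falls below the threshold.
    """
    delivered = defaultdict(int)
    answered = defaultdict(int)
    for pid, was_answered in prompt_log:
        delivered[pid] += 1
        answered[pid] += int(was_answered)
    rates = {pid: answered[pid] / delivered[pid] for pid in delivered}
    flagged = sorted(pid for pid, rate in rates.items() if rate < threshold)
    return rates, flagged


log = [("A", True)] * 9 + [("A", False)] + [("B", True)] * 5 + [("B", False)] * 5
print(compliance_report(log))
```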

Technical Failures and Data Loss

- Problem: Smartphones, servers, or software malfunction, resulting in lost signals or data.

- Solutions:

- Robust Infrastructure: Implement a reliable 3-tiered architecture (smartphone, web server, database server) and conduct thorough in-house testing [26].

- Offline Functionality: Use a system that stores data locally on the device when network connectivity is lost and syncs when a connection is restored [26].

- Proactive Monitoring: Set up system alerts for server-side failures or unusual data patterns. Regular data backup is critical [26].
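The offline behavior described above follows a store-and-forward pattern. Each response is written to local storage first, then uploaded and cleared only after the server accepts it. This minimal sketch assumes a hypothetical `send` callable that raises `ConnectionError` when the network is unavailable. A production app would also need atomic file writes and server-side de-duplication.

```python
import json
from pathlib import Path


class OfflineQueue:
    """Store-and-forward buffer: responses are saved locally, then uploaded when possible."""

    def __init__(self, path):
        self.path = Path(path)

    def add(self, response):
        # Append one JSON record per line so each response is persisted immediately
        with self.path.open("a", encoding="utf-8") as f:
            f.write(json.dumps(response) + "\n")

    def pending(self):
        if not self.path.exists():
            return []
        with self.path.open(encoding="utf-8") as f:
            return [json.loads(line) for line in f if line.strip()]

    def flush(self, send):
        """Try to upload every pending response; keep failures for the next attempt."""
        remaining = []
        for response in self.pending():
            try:
                send(response)
            except ConnectionError:
                remaining.append(response)
        with self.path.open("w", encoding="utf-8") as f:
            for response in remaining:
                f.write(json.dumps(response) + "\n")
        return len(remaining)
```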

Participant Reactivity and Design Bias

- Problem: The act of repeated measurement alters the participant's natural behavior or experience.

- Solutions:

- Acknowledge and Measure: While research suggests reactivity is often small, it should be considered [28]. You can measure it by looking for changes in reporting patterns over time.

- Minimize Intrusiveness: The less burdensome and disruptive the protocol, the less likely it is to cause reactivity. The μEMA method was specifically designed for this purpose [28].

- Blinding: Where possible, blind participants to specific study hypotheses to reduce the potential for biased reporting.

Ensuring Accessibility and Inclusivity

- Problem: The EMA design excludes participants with varying abilities, technological literacy, or device access.

- Solutions:

- Color Contrast: Ensure all text and interactive elements have sufficient contrast ratios (for text, at least 4.5:1 at normal sizes and 3:1 for large text, per WCAG 2.x level AA). Use tools like WebAIM's Color Contrast Checker [31] [32].

- Beyond Color: Do not use color alone to convey meaning. Use icons, bold text, or underlines to reinforce information [31] [32].

- Typography and Layout: Use a legible font size, clear heading hierarchy, and a logical reading order to aid those with low vision or attention deficits [31].

- Input Methods: Consider the accessibility of touch targets and input methods for participants with visual or motor impairments [27].
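You can also script contrast checks during app development. This sketch implements the WCAG 2.x definitions of relative luminance and contrast ratio. It applies the level AA thresholds: 4.5:1 for normal text and 3:1 for large text. Colors are given as `#RRGGBB` strings.

```python
def _linear_channel(value):
    """Convert an 8-bit sRGB channel to linear light, as in the WCAG 2.x luminance definition."""
    c = value / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color):
    hex_color = hex_color.lstrip("#")
    r, g, b = (int(hex_color[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linear_channel(r) + 0.7152 * _linear_channel(g) + 0.0722 * _linear_channel(b)


def contrast_ratio(foreground, background):
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground, background, large_text=False):
    return contrast_ratio(foreground, background) >= (3.0 if large_text else 4.5)


print(round(contrast_ratio("#767676", "#FFFFFF"), 2), passes_aa("#777777", "#FFFFFF"))
```

For example, gray `#767676` on white is about 4.54:1 and passes for normal text. The slightly lighter `#777777` is about 4.48:1 and passes only for large text.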

Frequently Asked Questions (FAQs)

What is the optimal number of prompts per day to ensure good compliance without overburdening participants? There is no universal number, as it depends on the research question, population, and survey length. Studies have used frequencies ranging from a few prompts per day to multiple prompts per hour [27]. The key is to pilot-test your protocol. One longitudinal study achieved an 88% completion rate with a mix of random and time-contingent prompts [26]. For very frequent sampling, the μEMA method has been used successfully with significantly increased interruption rates [28].

How does EMA specifically mitigate recall bias in social interaction research? Recall bias occurs when memories of past events are distorted or summarized inaccurately. EMA captures social experiences (e.g., mood, conflict, feelings of connection) close to when they occur, which limits the opportunity for memory decay and reconstruction [28] [29]. For example, a study comparing EMA with the Day Reconstruction Method (DRM) found that the DRM underestimated short-term happiness, which supports EMA's greater accuracy for momentary states [29].

What are the key statistical considerations for analyzing EMA data? EMA data has a hierarchical (multilevel) structure, with repeated observations (Level 1) nested within individuals (Level 2). This requires statistical techniques like multilevel modeling (also known as hierarchical linear modeling) to account for the non-independence of data points and to partition variance within and between persons [27]. Standard methods that assume independent observations, such as ordinary regression or ANOVA applied to pooled prompts, are inappropriate for this data structure.
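A full analysis would use mixed-model software, for example `lme4` in R or `MixedLM` in Python's statsmodels. The sketch below shows only the underlying idea of partitioning variance. Person-mean centering splits each prompt into a between-person part and a within-person deviation. The intraclass correlation ICC(1) then estimates the share of variance that lies between persons. The sketch assumes balanced data, meaning the same number of answered prompts per person, which real EMA data rarely have.

```python
from statistics import mean


def person_mean_center(data):
    """Split each person's prompts into (person mean, within-person deviations)."""
    centered = {}
    for pid, values in data.items():
        m = mean(values)
        centered[pid] = (m, [v - m for v in values])
    return centered


def icc1(data):
    """ICC(1) from a one-way ANOVA; assumes every person has the same number of prompts k."""
    groups = list(data.values())
    k = len(groups[0])
    if any(len(g) != k for g in groups):
        raise ValueError("this simple estimator needs balanced data")
    n = len(groups)
    grand = mean(v for g in groups for v in g)
    ms_between = k * sum((mean(g) - grand) ** 2 for g in groups) / (n - 1)
    ms_within = sum((v - mean(g)) ** 2 for g in groups for v in g) / (n * (k - 1))
    return (ms_between - ms_within) / (ms_between + (k - 1) * ms_within)


# Hypothetical momentary ratings: participant -> ratings across prompts
ratings = {"A": [1, 2, 3], "B": [4, 5, 6], "C": [7, 8, 9]}
print(person_mean_center(ratings), icc1(ratings))
```

For these toy ratings, ICC(1) = 26/29 ≈ 0.90, meaning most of the variance lies between persons rather than within them.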

Our research budget is limited. Can we use participants' own smartphones (BYOD) for an EMA study? While using participants' own devices (Bring Your Own Device) reduces costs, it introduces challenges. You may encounter variability in operating systems, device capabilities, and data plan coverage, which can affect the consistency of signal delivery and data collection. A safer, though more costly, approach is to provide standardized devices to all participants to ensure a uniform technical environment [26].

Actigraphy provides an objective, continuous method for collecting sleep and physical movement data in a participant's natural environment. Unlike self-reported sleep diaries or questionnaires, which are susceptible to recall bias and subjective interpretation, actigraphy produces quantitative data that does not depend on participants' memory. This is crucial in social interaction and neuropsychological research, where accurate measurement of behavioral biomarkers like sleep and activity is essential. By using actigraphy, researchers can obtain more reliable data on parameters such as total sleep time and wake after sleep onset, thereby reducing the measurement error that can compromise study validity [33].

Understanding Actigraphy and Core Sleep Parameters

Actigraphs are small, watch-shaped devices containing accelerometers to monitor and record movement. The device is typically worn on the non-dominant wrist for extended periods, collecting movement data multiple times per second. This data is processed by specialized algorithms to infer sleep and wake states, generating a range of objective sleep parameters [33].
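Published scoring algorithms classify each epoch from the activity in a window of surrounding epochs. The sketch below shows the simplest form of that idea, a weighted sum of counts compared with a threshold (the structure used by Cole-Kripke-style algorithms). The function `score_sleep_wake` and the values in `DEMO_WEIGHTS` and `DEMO_THRESHOLD` are hypothetical placeholders, not a validated algorithm; real analyses should use the published parameters for the device and epoch length.

```python
def score_sleep_wake(counts, weights, threshold):
    """Label each epoch 'S' (sleep) or 'W' (wake) from a weighted sum of
    activity counts in a window centred on that epoch.

    counts    -- activity counts per epoch (e.g., 1-minute epochs)
    weights   -- dict mapping epoch offset (e.g., -2..+2) to a weight
    threshold -- epochs whose weighted activity is below this are sleep
    Epochs outside the recording contribute zero activity."""
    labels = []
    for i in range(len(counts)):
        activity = 0.0
        for offset, w in weights.items():
            j = i + offset
            if 0 <= j < len(counts):
                activity += w * counts[j]
        labels.append("S" if activity < threshold else "W")
    return labels

# Hypothetical placeholder parameters for illustration only (NOT validated).
DEMO_WEIGHTS = {-2: 0.1, -1: 0.2, 0: 0.4, 1: 0.2, 2: 0.1}
DEMO_THRESHOLD = 50.0
```

With these demo values, a single burst of 300 counts surrounded by stillness marks that epoch and its immediate neighbors as wake, showing how the window smooths isolated movements.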

The table below summarizes the key sleep parameters derived from actigraphy data, which are essential for objective measurement in research settings.

Table: Key Sleep Parameters Derived from Actigraphy

| Parameter | Technical Definition | Research Significance |
|---|---|---|
| Total Sleep Time (TST) | The total amount of time scored as sleep during the sleep period. | A primary measure of sleep quantity; linked to cognitive function and health outcomes [33]. |
| Sleep Efficiency (SE) | The percentage of time spent asleep during the total sleep period. | A key indicator of sleep quality; lower efficiency is associated with various health risks [33]. |
| Wake After Sleep Onset (WASO) | The total amount of awake time after initially falling asleep. | Measures sleep fragmentation; important for studies on sleep quality and mood disorders [33] [34]. |
| Sleep Latency | The amount of time it takes to fall asleep after the start of the sleep period. | Can be an indicator of hyperarousal or sleep initiation difficulties. |
| Sleep Fragmentation Index (SFX) | A measure of the restlessness of sleep based on the frequency of wake bouts. | Provides a consolidated view of sleep continuity; underutilized in many studies [33]. |
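Given epoch-level sleep/wake labels, the parameters in the table reduce to simple counting. The sketch below uses a hypothetical `sleep_parameters` function and assumes the labels cover exactly the sleep period (for example, lights-out to final rise time). It counts WASO between the first and last sleep epoch, which is one of several operational definitions. The SFX is left out because its exact formula depends on the software used; a plain count of wake bouts is returned instead.

```python
def sleep_parameters(labels, epoch_minutes=1.0):
    """Derive core parameters from scored epochs of the sleep period.

    labels -- sequence of 'S'/'W' epochs covering the sleep period only
    Returns TST, sleep latency (SL) and WASO in minutes, SE in percent,
    and the number of wake bouts after sleep onset."""
    n = len(labels)
    sleep_idx = [i for i, lab in enumerate(labels) if lab == "S"]
    if n == 0 or not sleep_idx:
        raise ValueError("no sleep epochs in the period")
    first, last = sleep_idx[0], sleep_idx[-1]
    tst = len(sleep_idx) * epoch_minutes
    latency = first * epoch_minutes                      # wake epochs before onset
    waso = sum(1 for lab in labels[first:last + 1] if lab == "W") * epoch_minutes
    se = 100.0 * len(sleep_idx) / n                      # share of period asleep
    # A wake bout starts wherever a 'W' follows an 'S' after sleep onset.
    bouts = sum(1 for i in range(first + 1, last + 1)
                if labels[i] == "W" and labels[i - 1] == "S")
    return {"TST": tst, "SL": latency, "WASO": waso, "SE": se, "wake_bouts": bouts}
```

For a 10-epoch period scored `WWSSSWWSSW`, this gives 5 minutes of TST, 2 minutes of latency, 2 minutes of WASO, 50% efficiency, and one wake bout.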

FAQs and Troubleshooting Guides

Q1: What are the most common actigraphy data issues and how can I resolve them?

Data quality issues can compromise your research findings. The table below outlines common problems and their solutions.

Table: Common Actigraphy Data Issues and Solutions

| Issue | Description | Resolution Steps |
|---|---|---|
| Abnormally High or Low Activity | Actigraphy data appears implausibly high or low, interfering with accurate sleep scoring [35]. | 1. Recalibrate the device according to manufacturer instructions; 2. Verify device placement on the non-dominant wrist; 3. If issues persist, contact technical support with details of steps taken [35]. |
| Invalid or "Blocky" Sleep Data | Sleep data appears distorted or is flagged as invalid, often due to signal loss or device malfunction. | 1. Manually review the uploaded sleep data for obvious anomalies; 2. Check the device's physical condition and battery level; 3. Ensure the device firmware is up to date [36]. |
| Sync and Bluetooth Pairing Failures | Inability to sync data from the device to the analysis software. | 1. Verify Bluetooth pairing between the device and computer; 2. Ensure the device is sufficiently charged; 3. Restart both the device and the computer software [36]. |
| Excessive Non-Wear Time | Large periods of missing data, which is a common challenge in longitudinal studies [34]. | 1. Implement a robust non-wear detection algorithm during data processing; 2. Cross-reference with a participant wear-time diary if available; 3. Define a valid day threshold for analysis (e.g., a minimum of 16 hours of wear time) [34]. |
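The non-wear and valid-day steps in the last row can be sketched as follows. This is a deliberately simplified detector that assumes 1-minute epochs: it flags any run of at least 60 consecutive zero-count minutes as non-wear, whereas validated algorithms such as Choi or Troiano tolerate short activity spikes inside a run. The function names are hypothetical; the 16-hour threshold is the example given in the table.

```python
def nonwear_mask(counts, min_run=60):
    """Flag epochs inside runs of >= min_run consecutive zero counts.
    Simplified: no spike tolerance, unlike published algorithms."""
    mask = [False] * len(counts)
    i = 0
    while i < len(counts):
        if counts[i] == 0:
            j = i
            while j < len(counts) and counts[j] == 0:
                j += 1                      # j ends one past the zero run
            if j - i >= min_run:
                for k in range(i, j):
                    mask[k] = True
            i = j
        else:
            i += 1
    return mask

def valid_days(counts, min_wear_hours=16, min_run=60):
    """Return (day_index, wear_hours, is_valid) for each full day of
    1-minute epochs (1440 per day); partial trailing days are ignored."""
    epochs_per_day = 1440
    mask = nonwear_mask(counts, min_run)
    results = []
    for d in range(len(counts) // epochs_per_day):
        day = mask[d * epochs_per_day:(d + 1) * epochs_per_day]
        wear_hours = day.count(False) / 60
        results.append((d, wear_hours, wear_hours >= min_wear_hours))
    return results
```

Running non-wear detection on the whole recording before splitting into days means a run that crosses midnight is still recognized as a single non-wear period.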

Q2: How do I handle missing data in long-term longitudinal actigraphy studies?

Long-term studies often face declining compliance. Here is a standardized workflow to manage missing data:

- Pre-processing and Trimming: Define rules for data inclusion, such as a minimum number of valid wear days per week [34].

- Non-Wear Detection: Use validated algorithms (e.g., Choi, Troiano, van Hees) to automatically identify periods when the device was not worn. A "majority algorithm" that combines several methods has been shown to outperform single methods and hardware-based wear sensors [34].

- Sensitivity Analysis: Conduct analyses to demonstrate how your chosen valid-day threshold affects the relationship between sleep variables and your key outcomes (e.g., depressive symptoms). This shows whether your results are robust to pre-processing choices [34].
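Combining detectors by vote, and the first step of a sensitivity analysis, can be sketched as below. The per-epoch masks are assumed to come from existing implementations of algorithms such as Choi, Troiano, or van Hees (not shown), and the simple more-than-half vote is an assumption that may differ from the majority algorithm described in [34]. The hypothetical `sensitivity_table` reports how many days survive each candidate threshold and how a daily summary (here, nightly TST) shifts; a full sensitivity analysis would refit the outcome models at each threshold.

```python
from statistics import mean

def majority_vote(*masks):
    """Combine per-epoch non-wear flags from several detectors; an epoch is
    non-wear when more than half of the detectors flag it."""
    if not masks or len({len(m) for m in masks}) != 1:
        raise ValueError("need one or more masks of equal length")
    return [sum(flags) > len(masks) / 2 for flags in zip(*masks)]

def sensitivity_table(days, thresholds=(10, 12, 14, 16)):
    """days: list of (wear_hours, daily_value), e.g. nightly TST in minutes.
    For each candidate valid-day threshold, return (days kept, mean value),
    so drift across thresholds is visible at a glance."""
    table = {}
    for t in thresholds:
        kept = [v for h, v in days if h >= t]
        table[t] = (len(kept), mean(kept) if kept else None)
    return table
```

If the summary barely moves across thresholds, the choice of threshold is unlikely to drive the findings; a large shift is worth reporting.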

Q3: My actigraphy-based sleep parameters differ from patient self-reports. Which is correct?

Discrepancies between objective actigraphy data and subjective patient reports are common and expected. They are not necessarily errors: they often reflect recall bias in the self-reports, which actigraphy avoids, as well as a genuine difference in what the two instruments measure. Actigraphy provides an objective, movement-based measure of sleep patterns, while self-reports capture perceived sleep quality. The discrepancy can be a valuable research finding in itself, potentially indicating conditions such as sleep state misperception. Which measure to prioritize depends on your research question: actigraphy for behavioral data and self-reports for perceived sleep experience.
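One standard way to quantify the discrepancy is a Bland-Altman analysis of paired nights: the mean difference (bias) between actigraphy and self-report, plus 95% limits of agreement. The minimal sketch below (hypothetical function name) treats nights as independent; when several nights come from each participant, a repeated-measures variant of the limits is more appropriate.

```python
from statistics import mean, stdev

def bland_altman(objective, subjective):
    """Bias and 95% limits of agreement between paired measurements,
    e.g., actigraphy TST vs. diary TST (minutes) for the same nights.
    Differences are objective minus subjective."""
    if len(objective) != len(subjective) or len(objective) < 2:
        raise ValueError("need at least two paired observations")
    diffs = [o - s for o, s in zip(objective, subjective)]
    bias = mean(diffs)
    sd = stdev(diffs)                     # sample SD of the differences
    return bias, bias - 1.96 * sd, bias + 1.96 * sd
```

A consistently negative bias here would mean participants report more sleep than the device scores; wide limits indicate that agreement varies a lot from night to night.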

The Researcher's Toolkit: Essential Materials and Reagents

Table: Essential Actigraphy Research Equipment and Software

| Item | Function / Application |
|---|---|
| Actigraph Device (e.g., ActiGraph GT9X Link, Motionlogger Sleep Watch) | A wrist-worn accelerometer to continuously monitor and record movement data in free-living conditions [33] [34]. |
| Charging Dock & USB Cable | For regular recharging of the device to ensure continuous data collection over long-term studies [34]. |
| Data Analysis Software (e.g., Action-W, ActiLife, open-source R packages) | Specialized software to download data from the device, score sleep/wake states using validated algorithms, and derive sleep parameters [33] [34]. |
| Participant Wear-Time Log | A diary for participants to record off-wrist periods, which helps validate and refine automated non-wear detection [34]. |
| Cloud-Based Data Management Platform (e.g., CentrePoint) | A system for secure data upload, storage, and monitoring of participant compliance during a study [34]. |

Standardized Workflow for Actigraphy Data Processing

A reproducible and standardized workflow is critical for ensuring the quality and reliability of actigraphy data, especially in long-term studies. The following diagram visualizes the key stages of this process, from raw data collection to the final analytic dataset.

This workflow, adapted for longitudinal research, highlights the critical importance of automated quality control steps, particularly non-wear detection and sensitivity analysis, to ensure the resulting data is valid and the findings robust [34].

Leveraging Wearable Technology and Digital Phenotyping for Passive Data Collection

Troubleshooting Guides

Data Quality and Signal Integrity

Issue: Missing Data or Gaps in Sensor Streams

- Problem: Data streams from wearables (e.g., heart rate, steps) are incomplete.

- Solution:

- Ensure device firmware and companion applications are updated.

- Verify consistent Bluetooth synchronization protocols; implement automated alerts for disconnections.

- For analysis, quantify how much data is missing and apply appropriate statistical imputation techniques to preserve dataset integrity [37].
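Gaps in a timestamped stream can be located by comparing consecutive timestamps with the expected sampling interval. This is one way to drive the disconnection alerts mentioned above and to measure missingness before imputation. In this sketch, `find_gaps` and the default tolerance of twice the expected interval are hypothetical choices.

```python
from datetime import datetime, timedelta

def find_gaps(timestamps, expected_interval, tolerance=2.0):
    """Return (last_sample_before, first_sample_after) pairs wherever
    consecutive samples are more than tolerance * expected_interval apart.
    Timestamps must be sorted in ascending order."""
    limit = expected_interval * tolerance
    return [(a, b) for a, b in zip(timestamps, timestamps[1:]) if b - a > limit]
```

Summing `b - a` over the returned pairs gives total gap time per participant, a simple figure to report alongside any imputation.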

Issue: Poor Heart Rate (HR) or Heart Rate Variability (HRV) Signal Quality

- Problem: Noisy or physiologically implausible HR/HRV readings.

- Solution:

- Check device fit: optical sensors need consistent skin contact, so the device should be snug but comfortable.

- Identify and flag data from periods of high-motion activity for separate processing, as movement can introduce artifact noise [38].

- Use validated, study-specific data cleaning pipelines to filter out noise from raw signals [37].
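For beat-to-beat data, a common cleaning pattern is to drop intervals outside a physiological range and intervals that deviate sharply from their neighbors, then compute HRV only from adjacent clean beats. The sketch below assumes RR intervals in milliseconds; the 300-2000 ms range (about 30-200 bpm), the 20% deviation rule against a local median, and the function names are illustrative assumptions rather than a validated pipeline. Motion flagging would add a separate accelerometer-based mask.

```python
from math import sqrt
from statistics import median

def flag_rr(rr_ms, min_ms=300, max_ms=2000, max_rel_dev=0.2, half_window=5):
    """Replace implausible RR intervals with None.
    An interval is rejected if it lies outside [min_ms, max_ms] or deviates
    by more than max_rel_dev from the median of in-range neighbors."""
    in_range = [rr if min_ms <= rr <= max_ms else None for rr in rr_ms]
    out = []
    for i, rr in enumerate(in_range):
        if rr is None:
            out.append(None)
            continue
        lo, hi = max(0, i - half_window), i + half_window + 1
        neighbors = [x for x in in_range[lo:hi] if x is not None]  # includes rr
        ref = median(neighbors)
        out.append(rr if abs(rr - ref) <= max_rel_dev * ref else None)
    return out

def rmssd(flagged):
    """RMSSD over pairs of adjacent intervals that both survived cleaning,
    so no difference is taken across a removed beat."""
    diffs = [b - a for a, b in zip(flagged, flagged[1:])
             if a is not None and b is not None]
    if not diffs:
        raise ValueError("no valid successive differences")
    return sqrt(sum(d * d for d in diffs) / len(diffs))
```

Comparing against a local median rather than the previous beat avoids the failure mode where one artifact causes its clean neighbor to be rejected too.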

Issue: Inconsistent Sleep or Activity Classification

- Problem: Device-recorded sleep stages or activity types do not match user logs.

- Solution:

- Use a research-grade actigraphy algorithm for analysis, as consumer algorithms are often proprietary and can vary.

- Collect simple participant diaries (e.g., sleep/wake times) for ground-truth validation of automated classifications [38].
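Diary sleep/wake times can be turned into epoch labels and compared epoch by epoch with the device's classification. The sketch below (hypothetical names) reports accuracy, sensitivity (sleep epochs detected as sleep), and specificity (wake epochs detected as wake). It assumes 1-minute epochs and treats the whole diary bed-to-wake interval as sleep, so it is only a coarse reference: diaries rarely record sleep latency or night wakings.

```python
from datetime import datetime, timedelta

def diary_to_epochs(start, n_epochs, bed_time, wake_time, epoch=timedelta(minutes=1)):
    """Label each epoch 'S' if it starts within [bed_time, wake_time), else 'W'."""
    return ["S" if bed_time <= start + i * epoch < wake_time else "W"
            for i in range(n_epochs)]

def epoch_agreement(device, reference):
    """Epoch-by-epoch agreement of device labels against diary labels."""
    if len(device) != len(reference) or not device:
        raise ValueError("label sequences must be non-empty and equal length")
    pairs = list(zip(device, reference))
    tp = sum(d == "S" and r == "S" for d, r in pairs)   # sleep scored as sleep
    tn = sum(d == "W" and r == "W" for d, r in pairs)   # wake scored as wake
    fp = sum(d == "S" and r == "W" for d, r in pairs)
    fn = sum(d == "W" and r == "S" for d, r in pairs)
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": tp / (tp + fn) if tp + fn else None,
        "specificity": tn / (tn + fp) if tn + fp else None,
    }
```

Low specificity with high sensitivity is the pattern to watch for, since it indicates that the device is scoring quiet wakefulness as sleep.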

Participant Compliance and Engagement

Issue: Low Participant Wear-Time Adherence

- Problem: Participants are not using the wearable device as instructed.

- Solution:

- Establish a clear wear-time monitoring dashboard with compliance thresholds.

- Implement automated, personalized reminders via SMS or application push notifications to encourage consistent use.

- For long-term studies, plan for periodic re-engagement touchpoints to maintain participation [38].
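The compliance threshold behind a monitoring dashboard can be as simple as the share of recent days meeting a wear-time criterion. In this sketch, the 16-hour criterion, the 80% target, the 7-day window, and `flag_low_compliance` are hypothetical. Its output would feed whatever SMS or push-notification reminder system the study uses (not shown).

```python
def flag_low_compliance(daily_wear_hours, min_hours=16, min_valid_fraction=0.8, last_n_days=7):
    """daily_wear_hours: dict participant_id -> list of wear hours per day,
    oldest first. Returns {participant_id: valid-day fraction} for those whose
    share of valid days in the most recent window is below min_valid_fraction."""
    flagged = {}
    for pid, hours in daily_wear_hours.items():
        recent = hours[-last_n_days:]
        if not recent:
            continue                      # no data yet; handle separately
        frac = sum(h >= min_hours for h in recent) / len(recent)
        if frac < min_valid_fraction:
            flagged[pid] = round(frac, 2)
    return flagged
```

Using a rolling window rather than the whole study lets reminders target participants whose adherence is dropping now, rather than those who struggled only early on.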

Issue: User-reported Data Inaccuracies

- Problem: Participants report that the data does not reflect their experience.

- Solution:

- Create a simple feedback mechanism for participants to log concerns.

- Triage issues to identify true sensor errors versus misunderstandings of data presentation.

- Use this feedback to improve participant communication and training materials [4].

Frequently Asked Questions (FAQs)

Q1: How does passive data collection with wearables specifically help mitigate recall bias in social interaction research?

- Answer: Recall bias is a distortion of memory in which participants inaccurately remember or report past events, such as social interactions [4] [3]. Wearables and smartphones passively and continuously collect data (e.g., step counts from wearables; location and communication logs from smartphones) without relying on participant memory. This provides an objective behavioral record that can substitute for or validate self-reported measures, thereby directly mitigating recall bias [38] [37].

Q2: What are the key passive sensing data streams for behavioral phenotyping, and what do they measure?

- Answer: The three primary wearable data streams considered here are movement, sleep, and pulse. The table below summarizes their key metrics and relevance.

| Data Stream | Key Metrics | Behavioral & Physiological Relevance |
|---|---|---|
| Movement/Physical Activity | Step count, activity time, intensity levels [38] | Physical engagement, restlessness, psychomotor retardation/agitation [38] |
| Sleep | Sleep duration, sleep variability, restlessness [38] | Sleep quality, circadian rhythm stability [38] |
| Pulse | Heart rate (HR), Heart rate variability (HRV) [38] [37] | Autonomic nervous system activity, stress arousal [38] |
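Raw samples from these streams are usually summarized into daily features before analysis. The sketch below, with hypothetical names and inputs, totals steps per calendar day and averages heart rate between midnight and 06:00 as a rough resting-HR proxy. That proxy is an assumption that presumes participants are asleep or at rest at those hours. Days with no samples for a stream are reported as `None` rather than zero, so missing data is not mistaken for inactivity.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean

def daily_features(step_samples, hr_samples, night_hours=range(0, 6)):
    """step_samples / hr_samples: lists of (datetime, value).
    Returns {date: {"steps": total steps or None,
                    "night_hr": mean HR during night_hours or None}}."""
    steps = defaultdict(int)
    for ts, n in step_samples:
        steps[ts.date()] += n
    night_hr = defaultdict(list)
    for ts, bpm in hr_samples:
        if ts.hour in night_hours:
            night_hr[ts.date()].append(bpm)
    days = sorted(set(steps) | set(night_hr))
    # .get() does not trigger defaultdict's factory, so absent days stay None.
    return {d: {"steps": steps.get(d),
                "night_hr": mean(night_hr[d]) if night_hr.get(d) else None}
            for d in days}
```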

Q3: Our study involves sensitive data. What are the primary ethical considerations?

- Answer: Key considerations include:

- Privacy and Data Security: Encrypt data both in transit and at rest. De-identify data as early as possible in the processing pipeline [37].

- Informed Consent: Clearly explain the type of data being collected (e.g., location, physiological signals), how it will be used, who will have access, and the measures in place to protect it [38].

- Transparency: Be open about the limitations of the data and algorithms used for analysis [37].
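One way to de-identify early is to replace participant IDs with keyed-hash pseudonyms before data leave the collection system. The sketch below uses HMAC-SHA256 from Python's standard library; the function name, the 16-character truncation, and the key handling are assumptions. The same ID and key always yield the same pseudonym, so records remain linkable, while anyone without the key cannot feasibly recompute the mapping by hashing candidate IDs. Keep the key separate from the data, and note that timestamps or location traces can still be identifying.

```python
import hashlib
import hmac

def pseudonymize(participant_id: str, secret_key: bytes) -> str:
    """Replace a direct identifier with a truncated HMAC-SHA256 pseudonym.
    Deterministic for a given key, so datasets can still be joined."""
    digest = hmac.new(secret_key, participant_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]        # 64 bits; ample for study-sized cohorts
```

A plain unkeyed hash would not be enough here: participant IDs usually come from a small, guessable space, so they could be recovered by hashing every candidate.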

Q4: We are planning a long-term study. How can we manage battery life and device durability?

- Answer:

- Provide participants with clear charging guidelines and consider supplying extra charging cables.

- During the study design phase, factor in the battery life of devices under your specific data collection settings (e.g., continuous HR monitoring drains battery faster).

- Have a protocol for replacing malfunctioning devices with minimal data loss.
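Battery-life planning is simple arithmetic once you have measured runtime under your study's settings. The sketch below, with a hypothetical function and a 25% safety margin chosen for illustration, converts that runtime into a charging interval in whole days and the number of recharges needed, assuming devices start the study fully charged.

```python
import math

def charging_plan(battery_hours, study_days, safety_margin=0.25):
    """Return (charge_interval_days, recharges_needed).
    battery_hours -- measured runtime under the study's sensor settings
    safety_margin -- fraction of runtime held in reserve"""
    usable_hours = battery_hours * (1 - safety_margin)
    interval_days = math.floor(usable_hours / 24)
    if interval_days < 1:
        raise ValueError("battery will not last a full day under these settings")
    periods = math.ceil(study_days / interval_days)
    return interval_days, periods - 1      # no recharge needed after the last period
```

For example, a device measured at 120 hours of runtime gives a 3-day charging interval with this margin, or 9 recharges over a 28-day study.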

Experimental Protocols & Methodologies

Protocol 1: Validating Digital Phenotypes Against Clinical Scales

This methodology outlines the process for associating passive sensing data with clinical questionnaire items to create validated digital biomarkers [38].

1. Objective: To model associations between passively collected features (e.g., pulse, movement, sleep) and individual items on a validated depression scale (CES-D) to move beyond monolithic sum-scores and understand symptom-level signals [38].

2. Materials and Equipment:

- Wearable devices capable of continuous data collection (e.g., Fitbit, Garmin).

- A secure data server for aggregating sensor data.

- Electronic platforms for administering validated clinical questionnaires (e.g., CES-D).

3. Procedure:

- Step 1: Data Collection

- Step 2: Data Preprocessing

- Process raw sensor data into summary features (e.g., average nightly sleep, daily step count, resting heart rate).

- Synchronize the timing of sensor-derived features with the corresponding questionnaire administration window.

- Step 3: Statistical Modeling

- Use mixed ordinal logistic regression models (or other appropriate ML techniques) to quantify the contribution of each passive sensing feature to the prediction of each individual questionnaire item [38].

- This tests, for example, whether reduced step count is more strongly associated with reported fatigue than with reported feelings of sadness.

4. Analysis: