Mapping the Neural Circuitry of Uncertainty: From Foundational Mechanisms to Clinical Translation in Drug Development


Abstract

This article synthesizes contemporary neuroscience research to elucidate the complex neural networks that underpin decision-making under uncertainty. It explores foundational brain structures like the anterior insula, anterior cingulate cortex, and striatum, detailing their specialized roles in processing risk, ambiguity, and delay. The content progresses to examine methodological approaches, including fMRI and EEG, and their application in parsing distinct uncertainty types. It further addresses challenges in the field, such as conceptual clarity and individual differences, and validates computational frameworks like Active Inference for modeling these processes. Finally, the article discusses the translational potential of these insights for developing novel therapeutic strategies in neuropsychiatry and addiction, providing a comprehensive resource for researchers and drug development professionals.

The Brain's Uncertainty Network: Core Regions and Functional Specialization

The anterior insular cortex (AIC), once a poorly understood region hidden deep within the lateral sulcus, has emerged as a critical neural hub for integrating cognitive, affective, and interoceptive processes. This triangular region, which serves as a limbic integration cortex, shows a marked functional gradient along its posterior-anterior axis [1]. The posterior insula mainly processes primary interoceptive signals (somatosensory, vestibular, and motor information), while the anterior insula integrates this physiological data with emotional, cognitive, and motivational signals [1]. This posterior-to-anterior progression of information processing enables the AIC to support conscious feeling states by re-representing interoceptive information in a form accessible to awareness [2]. The AIC also contains specialized neuroanatomical features, including von Economo neurons (large spindle-shaped cells thought to facilitate rapid long-range information integration), which are disproportionately abundant in humans compared with other primates [1].

Within the context of decision-making under uncertainty, the AIC serves as a central node in the brain's salience network, orchestrating the dynamic interplay between the central executive network (CEN) and the default-mode network (DMN) [3]. This positioning enables the AIC to mark stimuli as salient by referring to subjective feeling states, thereby initiating appropriate cognitive processes and motivational behaviors [1]. The AIC's unique capacity to integrate internal bodily states with external sensory information makes it particularly crucial for processing uncertainty and generating arousal responses that guide decision-making in unpredictable environments—a fundamental challenge in both normal cognitive function and neuropsychiatric disorders.

Functional Neuroanatomy of the Anterior Insula

Cytoarchitectonic Organization and Connectivity

The human insular cortex demonstrates a clear cytoarchitectonic progression from granular posterior regions to agranular anterior regions. The posterior granular insula receives ascending sensory inputs from thalamic nuclei and connects with parietal, occipital, and temporal association cortices, positioning it as a primary region for somatosensory and vestibular integration [1]. In contrast, the agranular anterior insula maintains robust reciprocal connections with limbic and prefrontal structures, including the anterior cingulate cortex, amygdala, ventral striatum, and dorsolateral prefrontal cortex [1]. This connectivity profile enables the AIC to serve as a limbic sensory area that integrates autonomic and visceral information with emotional and cognitive processes [1].

The AIC's extensive connectivity supports its role as a critical hub in several large-scale brain networks. As a core node of the salience network, the AIC, particularly in the right hemisphere, functions as a causal outflow hub that orchestrates the switching between the central executive network (engaged during goal-directed tasks) and the default-mode network (active during self-referential thought) [3]. This switching mechanism allows the AIC to prioritize stimuli for cognitive resources based on their salience, fundamentally shaping how individuals allocate attention and process information in uncertain environments.

The Anterior Insula as an Interoceptive Hub

A fundamental function of the AIC is its role in interoception (the sense of the physiological condition of the body), which provides a critical foundation for subjective emotional experience [2]. The insula implements a posterior-to-anterior progression of interoceptive processing: primary interoceptive signals first arrive in the posterior insula for initial processing of sensory features and are then passed anteriorly for integration with emotional, cognitive, and motivational signals [1]. This hierarchical processing enables the conscious perception of interoceptive information, allowing bodily states to influence subjective feeling states [1].

The AIC's interoceptive function is thought to provide a neurobiological basis for the "somatic marker hypothesis," which proposes that bodily states associated with emotional experiences influence decision-making processes [1]. Through this mechanism, the AIC supports the generation of subjective feeling states that underlie conscious awareness of both the physical self as a feeling entity and the emotional significance of external stimuli [2]. This role in conscious feeling states positions the AIC as a potential neural correlate of awareness itself, with different subjective feelings represented by distinct AIC activation patterns [2].

The Anterior Insula in Uncertainty Processing

Neural Correlates of Decision-Making Under Uncertainty

The AIC demonstrates consistent activation during decision-making under uncertainty, functioning as a domain-general region for processing uncertainty across perceptual and value-based domains. Empirical evidence indicates that the AIC responds to both perceptual uncertainty (e.g., when viewing ambiguous stimuli like the Necker cube) and financial outcome uncertainty during gambling tasks [4]. This common activation pattern suggests the brain employs inferential processes to resolve uncertainty across different domains, with the AIC serving as a central component of this system [4].

A particularly revealing fMRI study examined neural activity during a card game with parametrically varying degrees of outcome uncertainty [5]. Participants were shown cue cards numbered 2-10 and asked to predict whether a feedback card would be higher or lower, with cue cards in the middle of the range (5, 6, 7) creating maximal uncertainty. Although the AIC was activated during all decisions regardless of uncertainty level, individuals with higher neuroticism scores showed significantly increased AIC activity specifically during 'certain decisions', that is, trials on which the more probable outcome was clearly evident [5]. This finding suggests that higher neuroticism shifts neural activation so that objectively certain situations are processed as if they were uncertain, highlighting the AIC's role in subjective uncertainty appraisal.
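The parametric uncertainty in this card game can be made concrete with Shannon entropy. The sketch below assumes (the source does not say) that the feedback card is drawn uniformly from the values 2 to 10 other than the cue. Under that assumption, outcome entropy peaks at cue 6 and the three highest-entropy cues are 5, 6, and 7, matching the range treated as maximally uncertain. Function names are illustrative.

```python
import math


def binary_entropy(p: float) -> float:
    """Shannon entropy in bits of a two-outcome event with probability p."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log2(p) + (1.0 - p) * math.log2(1.0 - p))


def p_higher(cue: int, low: int = 2, high: int = 10) -> float:
    """P(feedback card > cue), ASSUMING the feedback card is drawn uniformly
    from the values in [low, high] other than the cue (illustrative only)."""
    others = [v for v in range(low, high + 1) if v != cue]
    return sum(v > cue for v in others) / len(others)


# Outcome uncertainty (bits) for each possible cue card.
uncertainty = {cue: binary_entropy(p_higher(cue)) for cue in range(2, 11)}

for cue, h in uncertainty.items():
    print(f"cue {cue:2d}: P(higher) = {p_higher(cue):.3f}, entropy = {h:.3f} bits")
```

Cues 2 and 10 have zero entropy because the outcome is fully determined. In this toy model, those are the clearest examples of "certain" trials.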

Table 1: AIC Activation Patterns During Different Decision-Making Conditions

| Condition | AIC Activation Pattern | Functional Interpretation | Study Reference |
|---|---|---|---|
| Uncertain decisions (card game) | Significant bilateral AIC activation | General involvement in decision conflict | [5] |
| Certain decisions in high neuroticism | Elevated right AIC activation | Misinterpretation of certainty as uncertainty | [5] |
| Perceptual uncertainty (Necker cube) | Significant AIC activation | Domain-general uncertainty processing | [4] |
| Financial uncertainty (gambling task) | Significant AIC activation | Outcome uncertainty and risk prediction | [4] |
| Flow state during mental arithmetic | Inverted U-shaped AIC activation | Optimal challenge/salience detection | [3] |

Signaling Pathways and Neurobiological Mechanisms

The AIC contributes to uncertainty processing through specific molecular pathways and network dynamics. Research in mouse models demonstrates that the AIC regulates risk decision-making through glutamatergic signaling and functional connectivity with limbic structures, particularly the basolateral amygdala (BLA) [6]. Pharmacological manipulation of N-methyl-D-aspartate (NMDA) receptors in the AIC significantly alters risk-taking behavior, indicating the importance of glutamatergic transmission in AIC-mediated decision processes [6].

Additionally, estrogen receptors in the AIC appear to modulate risk decision-making, particularly in females, potentially through regulation of synaptic plasticity [6]. This mechanism may explain sex differences in decision-making strategies under stress, with female mice demonstrating lower risk preference than males after stress exposure—a difference that can be altered by estrogen receptor antagonism [6]. The AIC-BLA circuit appears to form a dedicated cortico-limbic network for effective decision-making, with the AIC creating bodily representations that the amygdala uses to modulate emotional responses to uncertain stimuli [6].

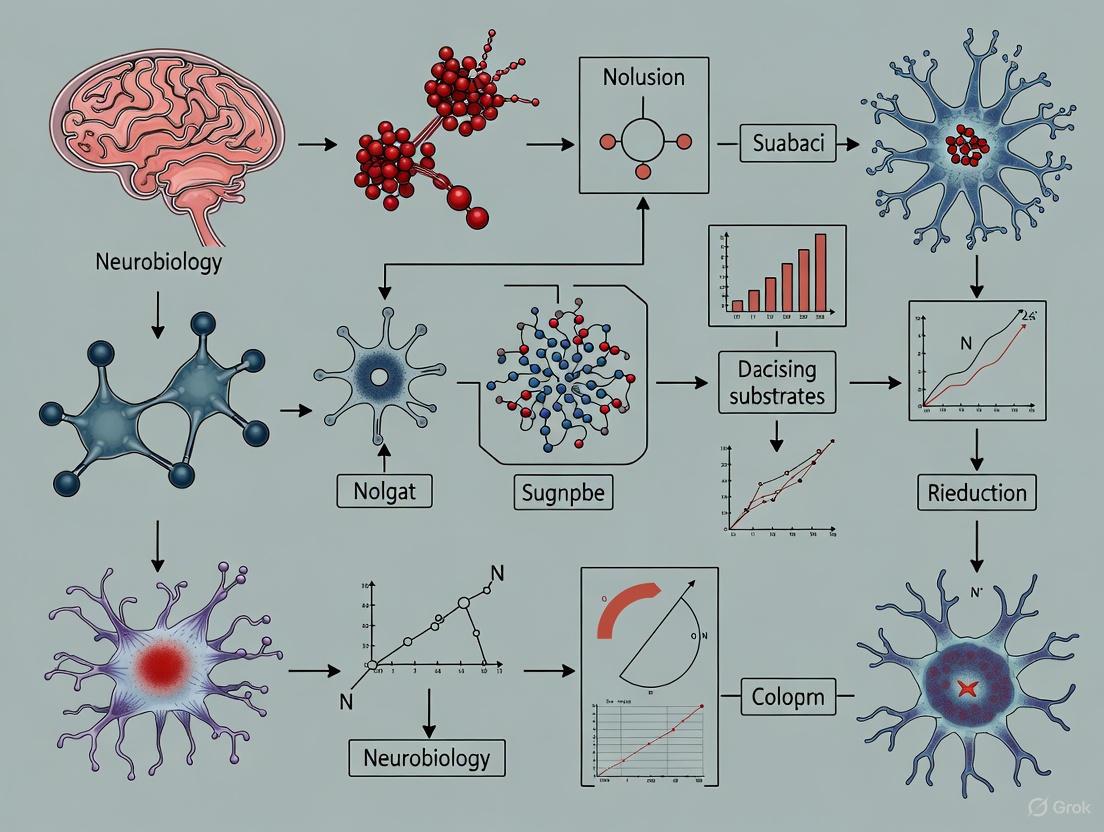

Figure 1: AIC Signaling Pathway in Uncertainty Processing. The AIC integrates sensory and interoceptive information to generate uncertainty signals, which trigger arousal responses through the AIC-BLA pathway and prefrontal engagement, ultimately influencing decision outputs.

The Anterior Insula in Arousal and Salience Detection

Network Dynamics and Flow States

The AIC serves as a causal outflow hub of the salience network, dynamically orchestrating brain network interactions in response to behaviorally relevant stimuli. This function becomes particularly evident during flow states—optimal experience conditions characterized by high attention, reduced self-referential processing, and intrinsic reward [3]. During flow induction through mental arithmetic tasks with balanced challenge-skill levels, the right AIC demonstrates an inverted U-shaped activation pattern, with maximal activation during the flow condition compared to boredom or overload conditions [3].
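An inverted U across three ordered conditions is often tested with a quadratic contrast that weights the middle condition against the mean of the two flanking conditions. The sketch below applies the weights (-1, 2, -1) to per-participant activation estimates ordered as boredom, flow, overload. The example values are toy numbers for illustration, not data from the cited study, and the source does not state which statistical test was used.

```python
import numpy as np

# Quadratic contrast over (boredom, flow, overload); weights sum to zero.
INVERTED_U = np.array([-1.0, 2.0, -1.0])


def inverted_u_scores(betas: np.ndarray) -> np.ndarray:
    """Per-participant contrast values; positive means flow exceeds
    the average of the boredom and overload conditions."""
    betas = np.asarray(betas, dtype=float)
    if betas.ndim != 2 or betas.shape[1] != 3:
        raise ValueError("expected an (n_participants, 3) array")
    return betas @ INVERTED_U


def one_sample_t(x: np.ndarray) -> float:
    """t statistic for the mean of x against zero."""
    x = np.asarray(x, dtype=float)
    return float(x.mean() / (x.std(ddof=1) / np.sqrt(len(x))))


# Toy activation estimates (arbitrary units), one row per participant.
toy_betas = np.array([
    [0.2, 0.9, 0.4],
    [0.1, 0.7, 0.3],
    [0.3, 1.1, 0.5],
    [0.2, 0.6, 0.4],
])
scores = inverted_u_scores(toy_betas)
print(scores, one_sample_t(scores))
```

A flat profile across conditions gives a contrast of zero. A positive group-level t statistic is consistent with, but does not by itself prove, an inverted U.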

This flow-related AIC activation pattern reflects its role in enhancing externally oriented attention while decreasing internally oriented self-referential cognition [3]. During flow, the right AIC shows increased functional connectivity with dorsolateral prefrontal regions (central executive network) and decreased coupling with ventral striatum and medial prefrontal regions (default-mode network) [3]. This network reconfiguration prioritizes task-relevant cognitive resources while minimizing distracting self-referential thought, creating the neural conditions for optimal task engagement and performance.

Interoceptive Awareness and Subjective Feeling States

The AIC plays a fundamental role in generating conscious emotional feelings through its interoceptive function, providing the neurobiological substrate for subjective awareness of bodily states associated with arousal [2]. Functional neuroimaging studies consistently demonstrate AIC activation during diverse subjective feeling states—from bowel distension and orgasm to cigarette craving and maternal love [2]. This common activation pattern across diverse experiences suggests the AIC implements a general mechanism for conscious feeling states rather than specializing in specific emotions.

The AIC's role in interoceptive awareness provides a mechanism through which bodily arousal states influence decision-making under uncertainty. By making interoceptive information accessible to conscious awareness, the AIC allows physiological arousal to be incorporated into decision processes, particularly in situations with emotional outcomes [2]. This mechanism aligns with the somatic marker hypothesis, which proposes that bodily states associated with previous emotional experiences bias decision-making toward advantageous choices—a function critically dependent on the AIC [7].

Table 2: AIC Functional Connectivity Patterns Across Different States

| Brain Region | Flow State Connectivity | Normal State Connectivity | Functional Significance |
|---|---|---|---|
| Dorsolateral Prefrontal Cortex | Increased | Moderate | Enhanced cognitive control |
| Medial Prefrontal Cortex | Decreased | Moderate | Reduced self-referential thought |
| Ventral Striatum | Decreased | Moderate | Reduced extrinsic reward processing |
| Amygdala | Decreased/Unaffected | Moderate | Reduced emotional arousal |
| Inferior Parietal Lobule | Increased | Moderate | Enhanced attention reorientation |

Experimental Approaches and Methodologies

Behavioral Paradigms for Assessing AIC Function

Several well-validated behavioral paradigms have been developed to investigate AIC function in uncertainty processing and arousal. The card prediction task represents one established approach, where participants view cue cards (values 2-10) and predict whether a feedback card will be higher or lower [5]. This paradigm creates parametric uncertainty levels, with middle values (5-7) generating maximal uncertainty. During task performance, fMRI data acquisition focuses on the action selection phase, with separate regressors for uncertain and certain trials based on individual response patterns [5].

The flow induction paradigm provides another method for investigating AIC network dynamics [3]. This approach uses mental arithmetic tasks with adaptive difficulty levels to create boredom (low challenge/high skill), flow (balanced challenge/skill), and overload (high challenge/low skill) conditions. During fMRI, participants solve calculations for 30-second blocks, with task difficulty automatically adjusted to maintain target conditions. This paradigm specifically probes the AIC's role in salience detection and network switching during optimal engagement states [3].
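The source does not say how difficulty was adjusted. One standard way to hold a participant near a target accuracy is a weighted up/down staircase, sketched below under that assumption. Difficulty rises by `up` after a correct answer and falls by `down` after an error, so the average level stops drifting when accuracy equals down / (up + down). The class, its default step sizes, and the simulated participant are all hypothetical.

```python
import math
import random
from dataclasses import dataclass


@dataclass
class Staircase:
    """Weighted up/down difficulty rule (hypothetical settings)."""
    level: float = 1.0
    up: float = 1.0     # step after a correct answer
    down: float = 3.0   # step after an error
    floor: float = 1.0  # easiest allowed level

    @property
    def target_accuracy(self) -> float:
        # Expected drift is zero when acc * up == (1 - acc) * down.
        return self.down / (self.up + self.down)

    def update(self, correct: bool) -> float:
        if correct:
            self.level += self.up
        else:
            self.level = max(self.floor, self.level - self.down)
        return self.level


def simulate(n_trials: int = 300, skill: float = 8.0, seed: int = 0):
    """Run the staircase against a toy participant whose accuracy
    falls off logistically once difficulty exceeds `skill`."""
    rng = random.Random(seed)
    stair = Staircase()
    n_correct = 0
    for _ in range(n_trials):
        p_correct = 1.0 / (1.0 + math.exp(stair.level - skill))
        correct = rng.random() < p_correct
        n_correct += correct
        stair.update(correct)
    return stair.level, n_correct / n_trials


print(simulate())
```

Boredom and overload blocks could then be set by fixing difficulty well below or well above the level the staircase settles on. That mapping is likewise an assumption rather than the published procedure.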

Risk decision-making tasks in animal models offer complementary approaches for investigating AIC function with higher mechanistic resolution. The radial maze-based gambling test for mice presents subjects with choices between low-risk/low-reward and high-risk/high-reward arms, with varying probabilities of positive (sucrose water) and negative (quinine water) outcomes [6]. This paradigm allows researchers to quantify risk preference and assess how AIC manipulations alter decision-making under uncertainty.
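The sketch below shows how arm-level risk and a simple risk-preference index might be computed for a two-option version of this task. It assumes (this is not stated in the source) that each outcome can be scored on a single value scale, with sucrose as a positive value and quinine as a negative one. The probabilities and payoffs are placeholders, chosen so that the risky arm has the higher mean and a much higher variance.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Arm:
    """One maze arm; payoffs are hypothetical value units
    (reward ~ sucrose delivery, penalty ~ quinine delivery)."""
    name: str
    p_reward: float
    reward: float
    penalty: float

    def expected_value(self) -> float:
        return self.p_reward * self.reward + (1.0 - self.p_reward) * self.penalty

    def variance(self) -> float:
        ev = self.expected_value()
        return (self.p_reward * (self.reward - ev) ** 2
                + (1.0 - self.p_reward) * (self.penalty - ev) ** 2)


def risk_preference(choices: list[str], risky_arm: str) -> float:
    """Fraction of choices directed to the risky arm (0 = fully risk-averse)."""
    if not choices:
        raise ValueError("no choices recorded")
    return sum(c == risky_arm for c in choices) / len(choices)


safe = Arm("safe", p_reward=0.9, reward=1.0, penalty=-1.0)
risky = Arm("risky", p_reward=0.5, reward=3.0, penalty=-1.0)

for arm in (safe, risky):
    print(arm.name, arm.expected_value(), arm.variance())
print(risk_preference(["safe", "risky", "safe", "safe"], "risky"))
```

Comparing this index before and after an AIC manipulation (or between sexes) is one way to express a change in risk preference as a single number.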

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents for Investigating AIC Function

| Reagent/Resource | Primary Function | Example Application | Experimental Outcome |
|---|---|---|---|
| fMRI BOLD Imaging | Measures neural activity indirectly via hemodynamic changes | Mapping AIC activation during uncertainty processing | Identifies AIC involvement in uncertain decision-making [5] [4] |
| Chemogenetic Tools (DREADDs) | Selective manipulation of neural activity in specific pathways | Testing causal role of AIC-BLA circuit in risk decision-making | Chemogenetic inhibition alters risk preference in mice [6] |
| Estrogen Receptor Antagonists | Pharmacological blockade of estrogen signaling | Investigating sex differences in AIC-mediated decision-making | ER antagonism increases risk-taking in female mice [6] |
| GABA Receptor Agonists | Neuronal inhibition through enhanced GABAergic transmission | Assessing necessity of AIC activity for risk adjustment | AIC inactivation reduces risk-taking in rat gambling task [6] |
| NMDA Receptor Antagonists | Blockade of glutamatergic transmission | Testing role of synaptic plasticity in AIC function | NMDA antagonism decreases social behavior in rats [6] |

Clinical Implications and Pathological Contexts

The AIC in Neuropsychiatric Disorders

Dysfunction of the AIC represents a transdiagnostic feature across multiple neuropsychiatric conditions, particularly those involving impaired decision-making under uncertainty. In drug addiction, the AIC plays a critical role in conscious craving and drug-seeking behavior, with insula damage dramatically disrupting tobacco addiction [8]. Interestingly, patients with AIC lesions who quit smoking report that their "body forgot the urge to smoke," suggesting the AIC mediates the interoceptive representations of drug effects that maintain addictive behavior [7].

In Parkinson's disease, the AIC demonstrates significant functional alterations, particularly in relation to nonmotor symptoms [9]. Quantitative meta-analyses reveal consistent convergence of pathology-related activation maxima in both anterior and posterior insular regions, with the AIC contributing to cognitive, affective, and autonomic disturbances that significantly impact quality of life [9]. The AIC's role as an integrative hub interacting with multiple brain networks makes it particularly vulnerable to distributed neuropathology like alpha-synuclein deposition in Parkinson's disease [9].

The AIC also shows structural and functional abnormalities in mood and anxiety disorders. Patients with major depressive disorder show both reduced AIC gray matter and altered AIC activity during emotional processing [1]. Similarly, anxiety disorders involve AIC hyperactivity, and right AIC activity correlates positively with neuroticism, a core risk factor for developing anxiety [5]. This AIC hyperactivity may reflect misinterpretation of certainty as uncertainty, creating a pervasive sense of unpredictability that characterizes anxiety pathology [5].

Therapeutic Implications and Future Directions

The AIC represents a promising therapeutic target for interventions aimed at improving decision-making under uncertainty in neuropsychiatric disorders. Both pharmacological and neuromodulation approaches that normalize AIC function could potentially ameliorate maladaptive uncertainty processing in conditions like anxiety disorders and addiction [6]. The sex differences in AIC function, particularly regarding estrogen modulation, suggest the potential for personalized treatment approaches that account for hormonal influences on decision-making circuitry [6].

Future research should focus on developing more precise models of AIC subregional contributions to uncertainty and arousal, particularly using high-resolution neuroimaging and causal manipulation approaches in both animal models and humans. The development of tasks that better dissociate different forms of uncertainty (perceptual, outcome, social) will help clarify whether the AIC implements domain-general or domain-specific uncertainty processes [4]. Additionally, longitudinal studies examining AIC development and aging could reveal critical periods for intervention in neurodevelopmental and neurodegenerative conditions characterized by uncertainty processing deficits.

Figure 2: Experimental Workflow for Investigating AIC Function. Research typically begins with paradigm selection appropriate to the research question (human imaging for cognitive/clinical studies; animal models for mechanistic studies), proceeds through parallel data collection methods, and concludes with integrated data analysis to draw mechanistic conclusions about AIC function.

The anterior insular cortex serves as a critical neural hub for processing uncertainty and generating appropriate arousal responses, functioning through its unique position as an integrator of interoceptive information with external sensory cues. Its domain-general role in uncertainty processing, network-switching capabilities, and generation of subjective feeling states make it essential for adaptive decision-making in unpredictable environments. Understanding the precise mechanisms through which the AIC contributes to uncertainty and arousal processing provides crucial insights for developing interventions for neuropsychiatric conditions characterized by maladaptive decision-making, offering promise for more effective treatments for addiction, anxiety disorders, and other conditions involving disrupted uncertainty processing.

The anterior cingulate cortex (ACC) is a critical hub in the primate brain, orchestrating cognitive control and emotional responses to guide behavior. Situated in the medial frontal lobe, this region integrates information about reward, punishment, conflict, and error to facilitate adaptive decision-making, particularly in uncertain environments [10] [11]. Its strategic anatomical position, with extensive connections to prefrontal, limbic, and motor systems, enables the ACC to monitor ongoing actions, evaluate outcomes, and adjust behavioral strategies when contingencies change [10]. This whitepaper synthesizes current research on the ACC's functional architecture, focusing on its principal role in processing uncertainty—a fundamental aspect of complex decision-making with significant implications for understanding both normal cognitive function and disorders of behavioral control.

Anatomical and Functional Organization of the Cingulate Cortex

The cingulate cortex is not a uniform entity but comprises distinct subregions with specialized functions. A fundamental division exists between the anterior cingulate cortex (ACC) and the posterior cingulate cortex. The ACC itself can be further subdivided based on cytoarchitecture and connectivity:

- Dorsal ACC (dACC)/Mid-cingulate Cortex: This region, encompassing Brodmann areas 24' and 32', has strong connections to the lateral prefrontal cortex, parietal cortex, and supplementary motor area. It is primarily involved in cognitive processes such as conflict monitoring, error detection, and effort-based decision making [10] [12].

- Rostral/Ventral ACC (rACC/vACC): This area, with connections to the amygdala, orbitofrontal cortex (OFC), and autonomic brainstem nuclei, is more closely tied to affective processes, including assessing emotional salience and reward value [10] [13].

Functional neuroimaging and tractography studies support this dichotomy, revealing distinct white matter pathways linking the dACC to cognitive control networks and the vACC to the limbic system [10]. This parallel processing architecture allows the ACC to integrate cognitive and emotional signals simultaneously, a capacity crucial for navigating uncertain environments where decisions carry potential costs and rewards.

The Cingulate Cortex as a Neural Substrate for Uncertainty

Uncertainty is a multi-faceted concept in decision neuroscience, encompassing expected uncertainty (known stochasticity in outcomes), unexpected uncertainty (rare changes in environmental contingencies), and volatility (the frequency of such changes over time) [14]. The ACC is critically engaged in processing all these forms of uncertainty, acting as a key node in the brain's decision-making network under incomplete information.
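These three notions can be separated in a toy generative model. In the sketch below, which illustrates the definitions rather than reproducing a model from the cited work, three quantities are tracked:

- the per-trial outcome variance p(1 - p) stands for expected uncertainty;
- a reversal of the reward probability stands for an unexpected change;
- the hazard rate, the per-trial probability of such a reversal, sets the volatility.

The reward probabilities 0.2 and 0.8 are arbitrary choices.

```python
import random


def simulate_environment(n_trials: int, hazard: float, seed: int = 0) -> list[dict]:
    """Toy two-outcome environment with reversals (hypothetical parameters).

    expected_uncertainty: outcome variance p * (1 - p) under the current p
    changed: True on trials where the contingency reversed (unexpected change)
    hazard: per-trial reversal probability, i.e. the environment's volatility
    """
    rng = random.Random(seed)
    p = rng.choice([0.2, 0.8])  # current probability of a rewarded outcome
    trials = []
    for t in range(n_trials):
        changed = t > 0 and rng.random() < hazard
        if changed:
            p = 1.0 - p  # reversal: the rich option becomes the poor one
        trials.append({
            "p": p,
            "changed": changed,
            "expected_uncertainty": p * (1.0 - p),
            "outcome": int(rng.random() < p),
        })
    return trials


for hazard in (0.02, 0.2):
    run = simulate_environment(200, hazard)
    n_changes = sum(trial["changed"] for trial in run)
    print(f"hazard={hazard}: {n_changes} reversals in {len(run)} trials")
```

Both settings share the same expected uncertainty on every trial (about 0.16). Only the rate of unexpected changes, and hence the volatility, differs.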

Evidence from Neuroimaging and Meta-Analyses

A large-scale meta-analysis of 76 fMRI studies (N = 4,186 participants) provides strong evidence for a core uncertainty-processing network. This analysis identified consistent activations across studies, with the anterior insula and ACC being the most prominent hubs [12]. The table below summarizes the key brain regions involved.

Table 1: Neural Correlates of Uncertainty Processing from fMRI Meta-Analysis [12]

| Brain Region | Brodmann Area(s) | Key Associated Function in Uncertainty |
|---|---|---|
| Anterior Cingulate Cortex (ACC) | 24, 32 | Conflict monitoring, cost-benefit assessment, performance adjustment |
| Anterior Insula | 13, 47 | Interoceptive awareness, emotional and motivational anticipation |
| Inferior Frontal Gyrus | 45, 47 | Impulse control, motor planning, behavioral adaptation |
| Medial Frontal Gyrus | 6, 32 | Assessment of potentially threatening stimuli |
| Cingulate Gyrus | 24, 32 | Evaluation of ongoing strategy reliability |

The meta-analysis revealed functional specialization within this network. For instance, the left anterior insula was more active during reward evaluation and anticipation, whereas the right anterior insula was engaged during learning and cognitive control [12]. This suggests a hemispheric asymmetry in how uncertainty is processed, with the left hemisphere more involved in motivational aspects and the right in adaptive control.

Quantitative Encoding of Reward Probability and Uncertainty

The ACC does not merely respond to the presence of uncertainty; it also appears to encode its parameters quantitatively. Research using event-related potentials (ERPs) has isolated a component called the feedback-related negativity (FRN), which is thought to originate in the ACC and peaks approximately 300 milliseconds after outcome presentation [11].
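ERP components such as the FRN are commonly scored as the mean voltage within a fixed post-feedback window. The sketch below computes that measure. The 250 to 350 ms window around the ~300 ms peak, the synthetic waveforms, and the function name are all assumptions for illustration, and the cited study may have used a different window or a peak-based measure.

```python
import numpy as np


def mean_window_amplitude(erp, times_ms, window=(250.0, 350.0)) -> float:
    """Mean amplitude of an ERP waveform between window[0] and window[1] ms
    (inclusive). Works on a single condition or on a difference wave."""
    erp = np.asarray(erp, dtype=float)
    times_ms = np.asarray(times_ms, dtype=float)
    if erp.shape != times_ms.shape:
        raise ValueError("erp and times_ms must have the same shape")
    mask = (times_ms >= window[0]) & (times_ms <= window[1])
    if not mask.any():
        raise ValueError("no samples fall inside the window")
    return float(erp[mask].mean())


# Synthetic waveforms sampled every 2 ms from -100 to 600 ms.
times = np.arange(-100, 601, 2)
win_erp = np.zeros(times.shape)  # flat baseline for illustration
loss_erp = -4.0 * np.exp(-(((times - 300) / 40.0) ** 2))  # negativity near 300 ms

difference_wave = loss_erp - win_erp
print(mean_window_amplitude(difference_wave, times))
```

The same function can score the win-related and loss-related waveforms separately, or their difference, depending on how the FRN is defined in a given study.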

In a gambling task where reward probability was parametrically manipulated, FRN amplitude was systematically modulated. The win-related FRN increased as the probability of a win decreased, demonstrating the ACC's sensitivity to reward probability. The win-related FRN was also modulated by reward uncertainty (measured as variance), with the largest amplitudes at a probability of 0.5, where uncertainty is maximal [11]. These results suggest that the ACC performs a rapid, quantitative computation of both the expected value and the risk associated with a choice.

Table 2: Modulation of Feedback-Related Negativity (FRN) by Reward Parameters [11]

| Reward Probability | Reward Uncertainty (Variance, normalized to maximum) | Win-Related FRN Amplitude | Loss-Related FRN Amplitude |
|---|---|---|---|
| 1.0 (Certain) | 0.00 | Smallest | Not Applicable |
| 0.75 | 0.75 | Moderate | Moderate |
| 0.5 (Most Uncertain) | 1.00 | Largest | Largest |
| 0.25 | 0.75 | Moderate | Moderate |
| 0.0 (Certain) | 0.00 | Not Applicable | Smallest |
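For a win/no-win outcome with win probability p, outcome variance is p(1 - p), which peaks at 0.25 when p = 0.5. The uncertainty values in Table 2 match this quantity divided by its maximum, as the short check below shows. Reading the column as normalized variance is an inference from the numbers, not something the source states.

```python
def bernoulli_variance(p: float) -> float:
    """Variance of a win (1) / no-win (0) outcome with win probability p."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must lie in [0, 1]")
    return p * (1.0 - p)


MAX_VARIANCE = bernoulli_variance(0.5)  # 0.25, the most uncertain case

probabilities = [1.0, 0.75, 0.5, 0.25, 0.0]
normalized = [bernoulli_variance(p) / MAX_VARIANCE for p in probabilities]
for p, v in zip(probabilities, normalized):
    print(f"p = {p:.2f}: raw variance = {bernoulli_variance(p):.4f}, normalized = {v:.2f}")
```

Variance is symmetric around p = 0.5. This is why probabilities 0.75 and 0.25 share the same uncertainty value even though their expected values differ.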

Key Experimental Paradigms and Findings

Feature Uncertainty and Divided Attention

An early PET study investigated "feature uncertainty," a paradigm where subjects do not know which visual feature (orientation or spatial frequency) will be relevant for discrimination until after stimulus offset. This creates a state of divided attention and expectancy. Results showed that the feature uncertainty condition, compared to simple discrimination tasks, evoked robust and consistent activation in the dorsal ACC (Brodmann area 32). The study concluded that the dACC is critical in conditions that involve divided attention, expectancy under uncertainty, and cognitive monitoring [15].

Feedback-Driven Value Updating and Behavioral Adaptation

Recent research employing calcium imaging in mice performing a reversal learning task provides granular insight into how individual ACC neurons drive adaptation. When stimulus-reward contingencies were reversed, ACC neurons integrated outcome information to update the value representation of the task-relevant stimulus in subsequent trials [16]. This process forms an internal feedback loop in which the difference between expected and actual outcomes (prediction error) is used to iteratively update value representations and guide future decisions. Dynamic recruitment of different neuronal populations in the ACC determined the learning rate of this error-guided value iteration, ultimately controlling the decision to switch behavioral strategies [16]. Optogenetic suppression of the ACC significantly slowed feedback-driven decision switching without affecting the execution of an established strategy, indicating that the ACC is necessary for behavioral flexibility [16].
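The error-guided value iteration described above resembles a delta-rule (Rescorla-Wagner-style) update, V ← V + α(r - V), where α is the learning rate. The sketch below is a generic version of that idea, not the model fitted in the cited study. It assumes two stimuli, full feedback (so both values are updated on every trial), and a fixed α, whereas the study reports that the effective learning rate depended on which ACC populations were recruited. The function counts how many post-reversal trials pass before the previously unrewarded stimulus is valued higher, that is, before a greedy chooser would switch.

```python
def update(value: float, reward: float, alpha: float) -> float:
    """Delta-rule update: move value toward reward by alpha * prediction error."""
    prediction_error = reward - value
    return value + alpha * prediction_error


def trials_to_switch(alpha: float, pre_trials: int = 30, max_post: int = 500) -> int:
    """Post-reversal trials needed before stimulus B's value exceeds A's.

    Hypothetical task: A is rewarded (r = 1) and B is not (r = 0) for
    `pre_trials` trials; then the contingencies reverse. Both values are
    updated every trial (full-feedback assumption)."""
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    v_a, v_b = 0.0, 0.0
    for _ in range(pre_trials):  # acquisition phase
        v_a, v_b = update(v_a, 1.0, alpha), update(v_b, 0.0, alpha)
    for trial in range(1, max_post + 1):  # reversal phase
        v_a, v_b = update(v_a, 0.0, alpha), update(v_b, 1.0, alpha)
        if v_b > v_a:
            return trial
    return max_post


for alpha in (0.05, 0.1, 0.3, 0.5):
    print(f"alpha = {alpha}: switch after {trials_to_switch(alpha)} trials")
```

Larger α values produce faster switches. In this toy model, that is the sense in which a learning rate controls the decision to change strategy.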

Cost-Weighted Decision Making in Ecological Contexts

The role of the ACC in processing potential costs has been explored in ecological settings like simulated driving. An fMRI study placed participants in a scenario where their view was occluded, creating uncertainty about oncoming traffic when turning. Resolving this uncertainty (via an assist system) reduced activity in the ACC and amygdala [13]. This suggests that under conditions of potential high cost, the ACC, in concert with the amygdala, is involved in assessing risk and ambiguity. This supports models of cost-weighted decision making, differentiating it from the reward-weighted processing more commonly associated with the ventromedial prefrontal cortex [13].

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential reagents, tools, and methodologies used in contemporary research on the cingulate cortex, as derived from the cited literature.

Table 3: Essential Reagents and Methodologies for Cingulate Cortex Research

| Reagent / Method | Function / Application | Example Use Case |
|---|---|---|
| GCaMP6f (Genetically Encoded Ca²⁺ Indicator) | Monitoring activity of specific neuronal populations via two-photon calcium imaging. | Tracking value representation drift in mouse ACC layer 2/3 excitatory neurons during reversal learning [16]. |
| Optogenetic Inhibitors (e.g., eNpHR) | Temporally precise inhibition of neural activity in a cell-type-specific manner. | Establishing causal role of ACC by suppressing its activity and observing slowed decision switching [16]. |
| Probabilistic Tractography (DWI) | Non-invasive mapping of white matter fiber tracts and structural connectivity in humans. | Differentiating functional roles of ACC and OFC based on their distinct connectivity patterns [10]. |
| Feedback-Related Negativity (FRN) | An ERP component serving as a non-invasive proxy for ACC activity during outcome evaluation. | Quantifying rapid encoding of reward probability and uncertainty in the human ACC [11]. |
| Psycho-Physiological Interaction (PPI) | fMRI analysis method to identify context-dependent changes in functional connectivity. | Mapping how functional connectivity between pre-SMA and associative areas changes with uncertainty [17]. |
| Shannon Entropy | Information-theoretic measure to quantify decision uncertainty in an economic task. | Correlating task-configuration entropy with BOLD signal to identify uncertainty-coding regions [17]. |
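A psycho-physiological interaction model adds a regressor to the GLM, formed by multiplying the seed region's time series by the psychological (task) variable. A reliable weight on that product indicates that seed-target coupling changes with context. The sketch below builds that design matrix on synthetic data and fits it with NumPy least squares.

It is a simplification. Standard implementations (for example in SPM) deconvolve the seed BOLD signal to an estimated neural signal before forming the product and then reconvolve it with a hemodynamic response function; that step is omitted here. All variable names and signal parameters are hypothetical.

```python
import numpy as np


def ppi_design(seed_ts, psych) -> np.ndarray:
    """Columns: [task, seed, task x seed interaction, intercept].

    Task and seed are mean-centered before forming the product so the
    interaction is less collinear with the main effects. No deconvolution
    or HRF convolution is applied (simplifying assumption)."""
    seed_c = np.asarray(seed_ts, dtype=float)
    psych_c = np.asarray(psych, dtype=float)
    seed_c = seed_c - seed_c.mean()
    psych_c = psych_c - psych_c.mean()
    interaction = seed_c * psych_c
    return np.column_stack([psych_c, seed_c, interaction, np.ones_like(seed_c)])


def fit_glm(target_ts, design: np.ndarray) -> np.ndarray:
    """Ordinary least-squares betas for the target time series."""
    betas, *_ = np.linalg.lstsq(design, np.asarray(target_ts, dtype=float), rcond=None)
    return betas


rng = np.random.default_rng(0)
n_scans = 400
# Block design alternating low (0) and high (1) uncertainty every 20 scans.
psych = np.tile(np.r_[np.zeros(20), np.ones(20)], n_scans // 40)
seed = rng.standard_normal(n_scans)  # synthetic seed-region signal
# Synthetic target whose coupling with the seed is stronger in the task blocks.
target = 0.5 * seed + 0.8 * seed * psych + 0.1 * rng.standard_normal(n_scans)

design = ppi_design(seed, psych)
betas = fit_glm(target, design)
print("PPI (interaction) beta:", round(float(betas[2]), 3))
```

Because the synthetic target was built with a seed-by-task coupling of 0.8, the interaction beta should land close to that value. A trial-wise entropy regressor could stand in for the binary block variable in the same design.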

Integrated Neural Workflow of Uncertainty Processing

The following diagram synthesizes findings across studies to illustrate the proposed neural workflow for uncertainty processing in hierarchical decision-making, highlighting the integrative role of the cingulate cortex.

The cingulate cortex, particularly its anterior division, serves as a critical integration center for cognitive and emotional signals, enabling adaptive decision-making under uncertainty. Converging evidence from neuroimaging, electrophysiology, and causal manipulations establishes that the ACC quantitatively encodes uncertainty parameters, monitors outcomes, and drives behavioral adaptation through iterative updating of value representations. Its function is supported by a distributed network including the anterior insula, amygdala, and basal ganglia, with distinct subregions of the ACC specializing in cost-benefit analysis and performance adjustment. Understanding these mechanisms provides a solid foundation for future research aimed at developing interventions for psychiatric disorders characterized by impaired decision-making, such as addiction, obsessive-compulsive disorder, and schizophrenia, where the ACC and its associated networks are frequently dysregulated [18].

The prefrontal cortex (PFC) is widely regarded as the pinnacle of brain evolution in humans and serves as the central hub for higher-order cognitive processes [19]. Research over recent decades has systematically investigated its functional organization, moving beyond the simplistic view of a unitary "central executive" to reveal a remarkable functional-anatomical specificity within distinct PFC subregions [19] [20]. This whitepaper synthesizes current evidence on the dissociable networks within the PFC that support two fundamental processes: cognitive control and value-based decision-making. We explore how this functional specialization enables complex decision-making under uncertainty, with particular attention to implications for psychiatric research and therapeutic development. Within the broader thesis of neural substrates of decision-making under uncertainty, understanding these PFC subsystems provides a foundational framework for elucidating how the brain evaluates options, resolves conflict, and selects actions in dynamic environments.

Functional-Anatomical Dissociation in the Prefrontal Cortex

Cognitive Control Network

Cognitive control (CC), often synonymous with executive function, refers to a set of processes that optimize goal-directed behavior and counter automatic responses [20]. These processes include task switching, response inhibition, conflict monitoring, working memory updating, and set shifting [19] [20]. Lesion-symptom mapping studies with large patient samples (N=344, with 165 PFC lesions) have shown that cognitive control functions depend primarily on dorsal sectors of the medial PFC and on both ventral and dorsal sectors of the lateral PFC [19].

The core network for cognitive control comprises:

- Dorsolateral Prefrontal Cortex (dlPFC): Critical for response inhibition and maintaining task context [19] [21]. Damage to left dlPFC specifically impairs performance on the Stroop task, which measures response inhibition [19].

- Anterior Cingulate Cortex (ACC): Essential for response/set switching and conflict monitoring [19]. The rostral ACC is particularly important for set shifting, as demonstrated by impairments on the Trail-Making Test and Wisconsin Card Sorting Test following damage to this region [19].

- Frontoparietal Networks: Extensive sectors of the left frontoparietal cortices support complex executive functions like verbal fluency and divergent thinking [19].

The psychometric structure of cognitive control exhibits both "unity and diversity" – with a common component shared across CC tasks alongside specific components for mental set shifting, working memory updating, and response inhibition [20].

Value-Based Decision-Making Network

Value-based decision-making involves evaluating potential rewards and punishments to guide choices [19]. Unlike cognitive control, this function relies predominantly on ventral and medial sectors of the PFC [19] [21].

Key regions include:

- Orbitofrontal Cortex (OFC) and Ventromedial PFC (vmPFC): Central to reward learning, valuation, and decision-making [19] [21]. Patients with vmPFC lesions show marked impairments on the Iowa Gambling Task, which measures value-based decision-making and reward learning [19].

- Frontopolar Cortex: Contributes to complex decision processes, particularly when integrating multiple sources of information [19].

- Ventral Striatum and Posterior Cingulate Cortex: These subcortical and medial parietal regions are preferentially engaged during intertemporal choices involving delayed outcomes [22].

Notably, lesion-deficit mapping reveals essentially zero anatomical overlap between regions critical for cognitive control versus value-based decision-making, indicating these systems are functionally and anatomically dissociable [19].

Integrated Neural Network for Decision-Making

While dissociable, the cognitive control and valuation networks interact within a broader neural architecture that supports decision-making under uncertainty. Current evidence indicates that this integrated system includes both cortical and subcortical structures [21]:

- Cortical Structures: OFC, ACC, and dlPFC

- Subcortical Structures: Amygdala, thalamus, hippocampus, and cerebellum

- Connections: Complex neural network of cortico-cortical and cortico-subcortical connections [21]

This integrated network enables the brain to evaluate options, consider potential outcomes, and select appropriate actions despite uncertainty about future consequences.

Table 1: Key Prefrontal Subregions and Their Cognitive Functions

| Prefrontal Subregion | Primary Cognitive Functions | Associated Tasks | Lesion Effects |
|---|---|---|---|
| Dorsolateral PFC (dlPFC) | Response inhibition, working memory, maintaining task context | Stroop Test, Controlled Oral Word Association Test | Impaired response inhibition, reduced verbal fluency |
| Anterior Cingulate Cortex (ACC) | Response/set switching, conflict monitoring, error detection | Trail-Making Test (Part B - A), Wisconsin Card Sorting Test | Increased perseverative errors, impaired set shifting |
| Ventromedial PFC (vmPFC) | Value representation, reward learning, emotional decision-making | Iowa Gambling Task | Poor decision-making, impaired reward learning |
| Orbitofrontal Cortex (OFC) | Outcome expectation, value-based choice | Probabilistic choice tasks | Altered risk perception, impulsive choices |
| Frontopolar Cortex | Complex decision integration, multi-source evaluation | Complex decision-making tasks | Impaired integration of competing decision factors |

Neurophysiological Mechanisms of Cognitive Control

Adaptive Coding in the PFC

The PFC exhibits remarkable representational flexibility, which underlies its role in cognitive control. The adaptive coding model proposes that PFC neurons dynamically adapt their tuning profiles to represent information according to current task demands [23]. Rather than being hardwired for specific functions, PFC neurons temporarily reconfigure their response properties based on behavioral context [23].

Neurophysiological studies in non-human primates demonstrate that PFC populations undergo rapid state transitions when processing task instructions, eventually settling into stable low-activity states that maintain task-relevant rules [23]. This temporary configuration of network states enables the same neuronal populations to process identical stimuli differently depending on context.

Dynamic Population Coding

Time-resolved population-level analyses reveal how PFC networks support flexible decision-making:

- Instruction Processing: Task instructions trigger a rapid series of state transitions in PFC populations before establishing a stable rule-representation state [23].

- Context Maintenance: During delay periods, PFC maintains a low-activity state that differentially encodes task context, despite similar overall firing rates [23].

- Flexible Decision Making: Stimulus responses evolve through different trajectories in state space depending on current task rules, demonstrating context-dependent processing [23].

This dynamic coding mechanism allows the PFC to rapidly reconfigure information processing based on changing behavioral demands, providing a neural basis for cognitive flexibility.
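The claim that population states can differ even when overall firing rates match can be made concrete with a toy calculation. The sketch below uses invented firing rates for three hypothetical neurons responding to the same stimulus under two task rules; these are not data from [23], and the helper names are illustrative. It contrasts the population-averaged rate, which is identical across rules, with the Euclidean distance between the two population vectors at each time bin, which grows as the trajectories diverge in state space.

```python
import math

def mean_rate(traj):
    """Population-averaged firing rate per time bin (what a single 'overall rate' measure sees)."""
    return [sum(bin_) / len(bin_) for bin_ in traj]

def population_distance(traj_a, traj_b):
    """Euclidean distance between two population trajectories at each time bin.

    Each trajectory is a list of time bins; each bin is a list of firing rates, one per neuron.
    """
    return [math.dist(a, b) for a, b in zip(traj_a, traj_b)]

# Hypothetical 3-neuron responses (spikes/s) to the same stimulus under two task rules.
rule_a = [[2.0, 2.0, 2.0], [5.0, 1.0, 3.0], [8.0, 0.0, 4.0]]
rule_b = [[2.0, 2.0, 2.0], [1.0, 5.0, 3.0], [0.0, 8.0, 4.0]]

print(mean_rate(rule_a))                     # [2.0, 3.0, 4.0]
print(mean_rate(rule_b))                     # [2.0, 3.0, 4.0]: overall rates are indistinguishable
print(population_distance(rule_a, rule_b))   # approx. [0.0, 5.66, 11.31]: the patterns diverge
```

Real analyses work with many more neurons and usually reduce dimensionality (for example with PCA) before visualizing trajectories; the distance used here is only the simplest possible read-out of context-dependent divergence.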

Decision-Making Under Uncertainty: Risk vs. Delay

Distinct Neural Substrates for Different Uncertainties

Decision-making under uncertainty involves both probabilistic (risky) and intertemporal (delayed) outcomes. While classical models suggested similar psychological mechanisms for both domains, neuroimaging evidence reveals distinct neural substrates [22].

Table 2: Neural Substrates of Risky vs. Intertemporal Choice

| Neural Region | Risky Choice | Intertemporal Choice | Functional Significance |
|---|---|---|---|
| Posterior Parietal Cortex | ↑ Activation | - | Probability calculation, numerical processing |
| Lateral Prefrontal Cortex | ↑ Activation | - | Executive control, risk evaluation |
| Posterior Cingulate Cortex | - | ↑ Activation | Value representation for delayed outcomes |
| Striatum | - | ↑ Activation | Reward processing, immediate reward bias |
| Anterior Cingulate Cortex | Moderate activation | Moderate activation | Conflict monitoring, choice difficulty |

Behavioral and Neural Dissociations

Direct comparisons between risky and intertemporal choice reveal fundamental differences:

- Neural Activation Patterns: Risky choices preferentially activate the posterior parietal and lateral prefrontal cortices, while intertemporal choices more strongly engage the posterior cingulate cortex and striatum [22].

- Prediction of Choice Behavior: Activation of reward-related regions predicts choices of more-risky options, whereas activation of control regions predicts choices of more-delayed or less-risky options [22].

- Pharmacological Dissociations: Nicotine deprivation affects both risk and delay discounting, while serotonin depletion specifically affects delay discounting without altering risk preferences [22].

These findings indicate that different forms of uncertainty (risk vs. delay) engage at least partially distinct cognitive and neural processes, despite superficial similarities in behavioral patterns.
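Behaviorally, the risk/delay contrast is often formalized with two separate hyperbolic discounting functions: one over delay, V = A / (1 + kD), and one over the odds against receiving a probabilistic reward, V = A / (1 + h*theta) with theta = (1 - p) / p. The sketch below assumes these widely used descriptive models; they are not necessarily the models fitted in [22], and the parameter values are hypothetical.

```python
def delay_discounted_value(amount, delay, k):
    """Hyperbolic delay discounting: V = A / (1 + k * D)."""
    return amount / (1.0 + k * delay)

def probability_discounted_value(amount, p, h):
    """Hyperbolic probability discounting on the odds against: V = A / (1 + h * theta)."""
    if not 0.0 < p <= 1.0:
        raise ValueError("p must be in (0, 1]")
    odds_against = (1.0 - p) / p  # theta
    return amount / (1.0 + h * odds_against)

# Hypothetical parameters: k per day of delay, h dimensionless.
print(delay_discounted_value(100.0, delay=4, k=0.25))      # 50.0
print(probability_discounted_value(100.0, p=0.25, h=1.0))  # 25.0
```

Because k and h are separate free parameters, a manipulation can shift one without the other; this is the kind of dissociation reported above for serotonin depletion, which altered delay discounting but not risk preferences.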

Experimental Methodologies and Protocols

Key Neuropsychological Assessment Tools

Research on prefrontal subregions relies on standardized neuropsychological tasks that selectively measure specific cognitive functions:

Wisconsin Card Sorting Test (WCST)

- Purpose: Measures set shifting and cognitive flexibility

- Methodology: Participants must sort cards according to changing rules (color, shape, number)

- Key Metrics: Perseverative errors (continuing to use a previously correct rule after it has changed)

- Neural Substrate: Damage to ACC and left medial superior frontal gyrus impairs performance [19]
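Perseverative errors can be counted from a trial log once each trial records the rule in force, the rule that preceded the last switch, and the dimension the participant's placement matched. The sketch below uses a deliberately simplified definition (an error is perseverative if the card was sorted by the immediately preceding rule); standard clinical scoring manuals apply more detailed criteria, and the `Trial` record is a hypothetical format.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Trial:
    correct_rule: str             # rule currently in force: "color", "shape", or "number"
    previous_rule: Optional[str]  # rule in force before the last switch (None before any switch)
    sorted_by: str                # dimension the participant's placement matched

def count_errors(trials):
    """Return (total_errors, perseverative_errors) under the simplified scoring rule."""
    total = perseverative = 0
    for t in trials:
        if t.sorted_by != t.correct_rule:
            total += 1
            if t.previous_rule is not None and t.sorted_by == t.previous_rule:
                perseverative += 1
    return total, perseverative

trials = [
    Trial("color", None, "color"),
    Trial("shape", "color", "color"),   # perseverative: still sorting by the old rule
    Trial("shape", "color", "color"),   # perseverative
    Trial("shape", "color", "number"),  # error, but not perseverative
    Trial("shape", "color", "shape"),
]
print(count_errors(trials))  # (3, 2)
```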

Trail-Making Test (TMT)

- Purpose: Assesses task switching and cognitive flexibility

- Methodology: Part A requires connecting numbers in sequence; Part B requires alternating between numbers and letters

- Key Metrics: TMT B - A difference score isolates executive switching

- Neural Substrate: Associated with focal regions of left rostral ACC [19]
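The difference score is a simple subtraction: Part A already demands visual search and motor speed, which Part B shares, so subtracting Part A leaves the additional cost of alternating between sequences. A minimal sketch with hypothetical completion times:

```python
def tmt_difference(part_a_seconds, part_b_seconds):
    """TMT B - A: extra completion time attributable to alternating numbers and letters."""
    if part_a_seconds <= 0 or part_b_seconds <= 0:
        raise ValueError("completion times must be positive")
    return part_b_seconds - part_a_seconds

print(tmt_difference(30.0, 75.0))  # 45.0 seconds of switching cost
```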

Stroop Color-Word Test

- Purpose: Measures response inhibition and conflict monitoring

- Methodology: Participants name the ink color of color words printed in an incongruent color (e.g., "RED" printed in blue ink)

- Key Metrics: Color-word interference score (difference between naming colors of incongruent words vs. neutral stimuli)

- Neural Substrate: Dependent on left dlPFC integrity [19]
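For computerized versions of the task, where naming times are recorded trial by trial, the interference score can be computed as a difference of condition means. This sketch assumes a hypothetical (condition, reaction time in ms, correct) trial format and excludes error trials, a common but not universal choice; paper-and-pencil versions are scored differently.

```python
from statistics import mean

def stroop_interference(trials):
    """Mean naming time on incongruent trials minus mean on neutral trials (correct trials only).

    trials: iterable of (condition, reaction_time_ms, correct) tuples, where condition is
    "incongruent", "neutral", or "congruent". Congruent trials are ignored here.
    """
    rts = {"incongruent": [], "neutral": []}
    for condition, rt, correct in trials:
        if correct and condition in rts:
            rts[condition].append(rt)
    return mean(rts["incongruent"]) - mean(rts["neutral"])

trials = [("neutral", 600, True), ("neutral", 620, True),
          ("incongruent", 700, True), ("incongruent", 740, True),
          ("incongruent", 900, False), ("congruent", 580, True)]
print(stroop_interference(trials))  # 110 (720 ms - 610 ms)
```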

Iowa Gambling Task (IGT)

- Purpose: Assesses value-based decision-making and reward learning

- Methodology: Participants choose cards from four decks with different reward/punishment schedules

- Key Metrics: Net score (advantageous minus disadvantageous choices)

- Neural Substrate: Critically dependent on vmPFC [19]
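The net score is usually tracked across blocks of trials so that learning over the session is visible. The sketch below assumes the conventional deck labels (A and B disadvantageous, C and D advantageous) and 20-trial blocks; the choice sequence is invented for illustration.

```python
ADVANTAGEOUS = {"C", "D"}     # smaller gains, even smaller losses: net gain over time
DISADVANTAGEOUS = {"A", "B"}  # larger gains, larger losses: net loss over time

def igt_net_scores(choices, block_size=20):
    """Net score (advantageous minus disadvantageous selections) for each block of trials."""
    unknown = set(choices) - ADVANTAGEOUS - DISADVANTAGEOUS
    if unknown:
        raise ValueError(f"unexpected deck labels: {sorted(unknown)}")
    scores = []
    for start in range(0, len(choices), block_size):
        block = choices[start:start + block_size]
        good = sum(deck in ADVANTAGEOUS for deck in block)
        bad = sum(deck in DISADVANTAGEOUS for deck in block)
        scores.append(good - bad)
    return scores

# Hypothetical 40-trial session: mostly disadvantageous decks early, advantageous decks later.
choices = ["A"] * 8 + ["B"] * 6 + ["C"] * 6 + ["C"] * 10 + ["D"] * 8 + ["B"] * 2
print(igt_net_scores(choices))       # [-8, 16]
print(sum(igt_net_scores(choices)))  # 8, the whole-session net score
```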

Lesion-Symptom Mapping Methodology

Voxel-based lesion-symptom mapping (VLSM) provides causal evidence for brain-behavior relationships:

- Participants: Large samples of patients with focal brain lesions (e.g., N=344 with 165 PFC lesions) [19]

- Lesion Mapping: Individual lesions plotted onto reference brain template

- Statistical Analysis: Nonparametric VLSM compares performance scores at each voxel between patients with vs. without damage to that voxel

- Covariate Control: Statistical removal of variance attributable to basic cognitive skills (verbal abilities, spatial abilities, verbal memory, spatial memory) [19]
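The voxelwise comparison can be illustrated with a small permutation test. This is a minimal sketch, assuming binary lesion maps, a one-tailed deficit statistic (mean score of spared patients minus mean of lesioned patients), a minimum number of lesioned patients per tested voxel, and family-wise correction via the maximum statistic across voxels; it is not the exact pipeline of [19], and the data are hypothetical.

```python
import random
from statistics import mean

def voxel_statistics(lesion_maps, scores, min_lesioned=2):
    """Deficit statistic per voxel: mean score of spared patients minus mean of lesioned patients.

    lesion_maps[i][v] is 1 if patient i has damage at voxel v, else 0.
    Voxels with fewer than `min_lesioned` patients in either group are not tested (None).
    """
    stats = []
    for v in range(len(lesion_maps[0])):
        lesioned = [s for s, m in zip(scores, lesion_maps) if m[v]]
        spared = [s for s, m in zip(scores, lesion_maps) if not m[v]]
        if len(lesioned) < min_lesioned or len(spared) < min_lesioned:
            stats.append(None)
        else:
            stats.append(mean(spared) - mean(lesioned))
    return stats

def vlsm_permutation(lesion_maps, scores, n_perm=500, min_lesioned=2, seed=0):
    """Permutation test using the maximum statistic across voxels (family-wise correction)."""
    observed = voxel_statistics(lesion_maps, scores, min_lesioned)
    rng = random.Random(seed)
    null_max = []
    for _ in range(n_perm):
        shuffled = list(scores)
        rng.shuffle(shuffled)  # break the link between lesion pattern and score
        tested = [x for x in voxel_statistics(lesion_maps, shuffled, min_lesioned) if x is not None]
        null_max.append(max(tested) if tested else float("-inf"))
    p_values = [
        None if x is None else (1 + sum(m >= x for m in null_max)) / (n_perm + 1)
        for x in observed
    ]
    return observed, p_values

# Hypothetical data: 10 patients, 3 voxels. Voxel 0 is damaged in the five low scorers,
# voxel 1 in an unrelated subset, voxel 2 in only one patient (too few to test).
scores = [10, 11, 9, 10, 12, 30, 31, 29, 30, 32]
lesion_maps = [[int(i < 5), int(i % 2 == 0), int(i == 0)] for i in range(10)]
observed, p_values = vlsm_permutation(lesion_maps, scores)
print(observed)
print(p_values)
```

Covariate control of the kind described above could be approximated by regressing the behavioral score on the covariates first and passing the residuals in as `scores`; that step is an assumption and is not shown here.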

Neuroimaging Approaches

Functional Magnetic Resonance Imaging (fMRI)

- Application: Measures brain activation during decision-making tasks

- Analysis Methods: Univariate activation comparisons, multivariate pattern analysis, functional connectivity

- Strengths: Whole-brain coverage, good spatial resolution

Neurophysiological Recording

- Application: Records single-unit or population activity in non-human primates

- Analysis Methods: Time-resolved population-level pattern analyses, state space visualization

- Strengths: Excellent temporal resolution, direct neural measurement

Research Reagent Solutions

Table 3: Essential Research Tools for Investigating Prefrontal Function

| Research Tool | Function/Application | Key Features |
|---|---|---|
| Voxel-Based Lesion-Symptom Mapping (VLSM) | Causal brain-behavior mapping | Nonparametric statistical analysis; provides causal evidence unlike fMRI |
| Standardized Neuropsychological Battery | Multi-domain cognitive assessment | Includes TMT, WCST, Stroop, COWA, IGT; enables dissociation of cognitive functions |
| fMRI-Compatible Decision Tasks | Neural activation during decision-making | Presents risky and intertemporal choices; measures BOLD response |
| Population Neural Recording | Neurophysiological mechanisms | Single-unit and multi-unit recording in non-human primates; high temporal resolution |
| Dynamic Pattern Analysis | Neural population dynamics | Time-resolved analysis of population coding; state space trajectory visualization |

Visualization of Prefrontal Functional Organization

[Figures: Hierarchical Organization of Prefrontal Networks; Dynamic Coding in Prefrontal Cortex]

Implications for Psychiatric Research and Drug Discovery

Understanding the neural substrates of cognitive control and value-based decision-making has significant implications for psychiatric disorders and therapeutic development.

Clinical Implications

Deficits in cognitive control and value-based decision-making are transdiagnostic features across multiple psychiatric conditions:

- Addiction: Compromised value representation in vmPFC/OFC combined with reduced cognitive control from dlPFC/ACC contributes to compulsive drug-seeking [20].

- Obsessive-Compulsive Disorder: Impaired cognitive control circuits fail to inhibit intrusive thoughts and compulsive behaviors [20].

- Mood Disorders: Altered value processing and reward anticipation in ventral PFC networks contribute to anhedonia in depression [20].

- Impulsivity Disorders: Dysfunctional interactions between cognitive control and valuation systems lead to poor decision-making and failure to delay gratification [22] [20].

Drug Discovery Applications

Advanced computational approaches are being applied to therapeutic development for disorders involving PFC dysfunction:

- Deep Learning Applications: DL-based tools (DeepCPI, DeepDTA, WideDTA, PADME, DeepAffinity, DeepPocket) are being applied to identify drug targets and predict drug-target interactions [24].

- ADMET Prediction: AI and DL models enable early prediction of absorption, distribution, metabolism, excretion, and toxicity properties, reducing late-stage failures in drug development [24].

- De Novo Drug Design: DL approaches can generate novel chemical scaffolds, which may be directed at neural mechanisms implicated in PFC dysfunction [25] [24].

The integration of cognitive neuroscience with computational drug discovery holds promise for developing more targeted interventions for psychiatric disorders characterized by PFC dysfunction.

The prefrontal cortex exhibits a remarkable functional-anatomical specialization, with distinct networks supporting cognitive control (dorsolateral PFC and ACC) versus value-based decision-making (orbitofrontal, ventromedial, and frontopolar cortex). These networks operate through dynamic coding mechanisms that adapt to behavioral context and enable flexible decision-making under uncertainty. The dissociation between neural systems processing risky versus delayed outcomes further refines our understanding of decision-making under different forms of uncertainty. This knowledge provides a foundation for understanding the neural basis of psychiatric disorders and developing targeted therapeutic interventions. Future research should focus on characterizing the interactions between these systems and developing computational models that bridge neural mechanisms with cognitive function and clinical applications.

Decision-making under uncertainty is a complex cognitive process that relies on the intricate coordination of multiple neural systems. While the prefrontal cortex often garners significant attention for its role in executive control, subcortical structures are now recognized as fundamental contributors to evaluating options, processing emotions, and guiding actions, particularly in ambiguous or risky situations. This whitepaper synthesizes current research on the distinct and interactive roles of the striatum, amygdala, and broader limbic system in decision-making under uncertainty. Framed within a broader thesis on the neural substrates of this critical cognitive function, we detail the specific computational roles of these regions, present quantitative findings in structured formats, describe key experimental paradigms, and visualize the underlying neural circuitry. Understanding these subcortical contributions provides vital insights for developing novel therapeutic strategies for psychiatric and neurological disorders characterized by decision-making deficits.

The Striatum: Core of Reward and Action Selection

The striatum, a key component of the basal ganglia, is central to learning action-outcome associations, value representation, and motor response selection. Its function is best understood not in isolation, but as part of broader corticostriatal circuits [26].

Functional Anatomy and Corticostriatal Circuits

Evidence from rodent, nonhuman primate, and human studies consistently demonstrates that the dorsal striatum can be partitioned into functionally distinct territories that mediate different forms of decision-making [26]:

- Dorsomedial Striatum (Caudate in primates): This region, interconnected with medial prefrontal, orbitomedial, premotor, and anterior cingulate cortices, mediates goal-directed actions. Choices are flexible, sensitive to changes in the value of the outcome, and rely on action-outcome encoding.

- Dorsolateral Striatum (Putamen in primates): This region, connected to sensorimotor cortices, mediates habitual actions. Behavior is more rigid, stimulus-bound, and operates via a process of sensorimotor association rather than sensitivity to the current value of the goal [26].

The transition from goal-directed to habitual control with overtraining is associated with a shift in neural activity from the dorsomedial to the dorsolateral striatum. Lesions or inactivation of the dorsolateral striatum can render habitual performance sensitive to outcome devaluation once more, reverting it to a goal-directed mode [26].
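The behavioral signature that separates the two modes, sensitivity to outcome devaluation, can be illustrated with a toy dual-system calculation in the spirit of model-based versus model-free accounts. This is a hypothetical sketch, not a model of striatal physiology: the goal-directed value is recomputed from the current worth of the outcome an action produces, whereas the habitual value is a cached number that changes only through further experience. All names and numbers are illustrative.

```python
def goal_directed_value(action_outcome, outcome_value, action):
    """Action-outcome value: look up the outcome the action produces and its *current* worth."""
    return outcome_value[action_outcome[action]]

def update_habit(cached, action, reward, lr=0.1):
    """Habitual (cached) value: slow incremental update toward the reward actually experienced."""
    cached[action] += lr * (reward - cached[action])
    return cached

action_outcome = {"press_lever": "sucrose"}
outcome_value = {"sucrose": 1.0}
cached = {"press_lever": 0.0}

# Extended training: the cached value approaches the reward the lever used to earn.
for _ in range(200):
    update_habit(cached, "press_lever", outcome_value["sucrose"])

# Outcome devaluation (e.g., sucrose paired with illness): only the outcome's worth changes.
outcome_value["sucrose"] = 0.0

print(goal_directed_value(action_outcome, outcome_value, "press_lever"))  # 0.0: adjusts immediately
print(round(cached["press_lever"], 3))  # 1.0: unchanged until new experience accrues
```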

The Striatum in Uncertainty Processing

The striatum is critically involved in processing the uncertainty inherent in many decisions. During anticipatory anxiety, which involves uncertainty about potential aversive events, the bilateral striatum shows increased activation. The amplitude of the BOLD signal change in this region generally parallels the subjective rating of anxiety, suggesting a role in signaling the intensity of an uncertain threat [27].

Furthermore, the striatum is implicated in the explore-exploit dilemma. In a study where monkeys performed a task analogous to a casino decision, neurons in the ventral striatum, along with the amygdala, signaled the value of exploring new opportunities versus exploiting known rewards [28]. A more recent computational model, the CogLink architecture, posits that the basal ganglia handle lower-level uncertainties—such as outcome uncertainty and associative uncertainty—through a quantile population code that represents a distribution of action-value beliefs, thereby guiding the exploration-exploitation trade-off [18].
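A quantile population code can be sketched with the generic quantile-regression update used in distributional reinforcement learning: each unit holds an estimate for one quantile level tau and is nudged up by lr*tau when a reward lands at or above it, and down by lr*(1 - tau) when it lands below. This is an assumed, textbook form of the technique and not the published CogLink implementation; the reward distribution, learning rate, and function names are hypothetical.

```python
import random

def update_quantiles(thetas, taus, reward, lr=0.005):
    """One quantile-regression step over all quantile units."""
    return [th + lr * (tau - (1.0 if reward < th else 0.0)) for th, tau in zip(thetas, taus)]

def learn_value_distribution(sample_reward, n_trials, taus, lr=0.005, seed=0):
    """Repeatedly sample rewards for one option and update its quantile estimates."""
    rng = random.Random(seed)
    thetas = [0.0] * len(taus)
    for _ in range(n_trials):
        thetas = update_quantiles(thetas, taus, sample_reward(rng), lr)
    return thetas

taus = [0.1, 0.3, 0.5, 0.7, 0.9]
# Hypothetical option whose rewards are uniform on [0, 1): its true quantiles equal taus.
q = learn_value_distribution(lambda rng: rng.random(), 20000, taus)
spread = q[-1] - q[0]  # width of the belief distribution, a crude uncertainty read-out
print([round(x, 2) for x in q], round(spread, 2))
```

The spread between the outer quantiles gives a natural uncertainty signal; one hypothetical way to bias the explore-exploit trade-off would be to add a bonus proportional to this spread when comparing options.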

Table 1: Key Striatal Functions in Decision-Making Under Uncertainty

| Striatal Region | Primary Function | Role in Uncertainty | Key Supporting Evidence |
|---|---|---|---|
| Dorsomedial Striatum (Caudate) | Goal-directed action; action-outcome encoding | Evaluates actions based on estimated value under uncertainty [26] | Lesions disrupt sensitivity to outcome devaluation [26] |
| Dorsolateral Striatum (Putamen) | Habitual, stimulus-bound action | Promotes rigid, automatic responses despite outcome uncertainty [26] | Inactivation restores sensitivity to action-outcome contingency [26] |
| Ventral Striatum (NAc) | Motivation, reward processing | Signals value of exploring uncertain options [28] | Neuron activity correlates with exploratory choices [28] |

The Amygdala: A Hub for Emotional Valuation and Uncertainty

Traditionally viewed as a fear center, the amygdala is now recognized as a critical node for assigning emotional and motivational significance to stimuli, a function that extends directly to decision-making under uncertainty.

Acquiring Value and Somatic States

The amygdala is essential for associating stimuli with their innate or learned emotional value. During the Iowa Gambling Task (IGT), a classic decision-making paradigm under uncertainty, patients with bilateral amygdala damage fail to generate autonomic (skin conductance) responses after receiving a reward or punishment. This suggests the amygdala is necessary for acquiring the value of stimuli and for inducing somatic states linked to primary inducers (direct rewards/punishments) [29]. Without these somatic signals, decision-making becomes impaired, as individuals lack the emotional cues that normally guide choices away from disadvantageous options.

Driving Risky Choice and Encoding Uncertainty

Recent studies have further elucidated the amygdala's specific role in risk-taking. In a 2025 study, participants made riskier choices when receiving performance feedback from avatars compared to real human faces. This behavioral shift was linked to a more favorable valuation of the uncertainty of which facial expression would be shown. fMRI analysis revealed that this valuation of uncertainty was associated with activity in the amygdala [30]. Specifically, a lower amygdala response to uncertainty in the avatar condition was correlated with increased risk-taking, indicating that the amygdala modulates risk preference by processing social and feedback uncertainty.

Furthermore, the amygdala works in concert with other regions to bias decisions. A disconnection study in rats demonstrated that a circuit from the basolateral amygdala (BLA) to the nucleus accumbens (NAc) biases choice toward larger, uncertain rewards. Disrupting this subcortical circuit reduced risky choice on a probabilistic discounting task [31].

Table 2: Amygdala-Centric Findings in Decision-Making Studies

| Experimental Paradigm | Key Finding Related to Amygdala | Implication for Uncertainty Processing |
|---|---|---|
| Iowa Gambling Task (IGT) [29] | Patients with amygdala damage fail to generate reward/punishment SCRs and choose disadvantageously. | Amygdala is critical for learning stimulus value and generating somatic markers for guidance under uncertainty. |
| Probabilistic Discounting in Rats [31] | A BLA → NAc circuit promotes choice of larger, uncertain rewards. | Amygdala drives risky choice via direct influence on the ventral striatum. |
| Avatar Feedback Task (fMRI) [30] | Reduced amygdala activity to feedback uncertainty predicts increased risk-taking. | Amygdala activity encodes the subjective value of uncertainty, influencing risk preference. |
| Monkey Explore-Exploit Task [28] | Amygdala neurons signal the value of exploring novel opportunities. | Amygdala contributes to resolving explore-exploit dilemmas by valuing uncertain options. |

Integrated Limbic Circuits and the PFC

Decision-making under uncertainty emerges from the dynamic interaction of the striatum and amygdala with the prefrontal cortex (PFC). Separate yet interconnected neural pathways mediate different decision biases [31].

A Tripartite Circuit for Risk-Based Decision Making

Research has identified a core cortico-limbic-striatal circuit involving the medial PFC, basolateral amygdala (BLA), and nucleus accumbens (NAc) that mediates decision-making about probabilistic outcomes [31]. Disconnection studies reveal the distinct contributions of these pathways:

- The BLA-NAc Circuit: This subcortical pathway biases choice toward larger, uncertain rewards. Disrupting communication between these structures reduces risky choice [31].

- The BLA-PFC Circuit: This pathway, particularly the top-down influence from the medial PFC to the BLA, biases choice away from risk. Disrupting this circuit increases the selection of the risky option, suggesting the PFC tempers the urge for riskier rewards as they become less profitable [31].

This demonstrates a dynamic competition between circuits, where the BLA-NAc pathway promotes risk and the PFC-BLA pathway exerts inhibitory control.

The Critical Role of the Hippocampus

The hippocampus, a central limbic structure, also plays a context-sensitive role. A 2024 study of patients with autoimmune limbic encephalitis (which focally affects the hippocampus) revealed a specific deficit: while their sensitivity to uncertainty itself was intact, they showed blunted sensitivity to reward and effort specifically when uncertainty was present. By contrast, their valuation of reward and effort was normal on uncertainty-free tasks [32]. This suggests that the hippocampus is needed not to process uncertainty per se, but to evaluate other decision attributes within an uncertain context, possibly by supplying rich episodic context through mental time travel into past experiences or projected futures.

Experimental Protocols & Methodologies

To investigate the neural substrates of decision-making, researchers employ carefully designed behavioral tasks paired with techniques like fMRI and lesion studies.

Key Behavioral Paradigms

- Anticipatory Anxiety Task with fMRI: This paradigm induces anxiety using a classical conditioning approach with painful thermal stimuli. A visual conditioned stimulus (CS; e.g., a square) signals the potential for an unpredictable, painful unconditioned stimulus (US). Neural response is assessed with fMRI while subjects provide real-time ratings of their subjective anxiety upon each CS presentation. This allows direct correlation of BOLD signal with the intensity of anticipatory anxiety [27].

- Probabilistic Discounting Task (Rodents): Rats are trained in operant chambers to choose between a Small/Certain reward and a Large/Risky reward. The probability of receiving the large reward decreases across a session (e.g., from 100% to 50%, 25%, 12.5%). This measures the subject's tolerance for reward uncertainty. The task is often combined with intracerebral muscimol infusions to reversibly inactivate specific brain regions and probe their necessity [31].

- Iowa Gambling Task (IGT): Participants select cards from four decks. Two "disadvantageous" decks offer large immediate gains but larger long-term punishments, resulting in net loss. Two "advantageous" decks offer smaller immediate rewards but even smaller punishments, resulting in net gain. The task measures the ability to forego immediate, risky rewards for long-term benefit. It is often used with skin conductance response (SCR) recording to measure somatic states [29].

- Avatar Feedback Task (fMRI): Participants perform a risk-taking task where they choose between a safe option and a risky option. When they select the risky option, success is followed by an admiring facial expression and failure by a contemptuous expression, shown via either a real human face or an avatar. This paradigm tests how social feedback type modulates risk-taking and its neural correlates [30].
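In the probabilistic discounting task, the expected value of each option shows when a shift away from the risky lever is economically appropriate. The reward magnitudes below (1 pellet delivered for certain versus 4 pellets delivered with probability p) are hypothetical; with this 4:1 ratio the risky option is better in the 100% and 50% blocks, equal at 25%, and worse at 12.5%, so continued risky choice in the last block indicates risk-seeking rather than value maximization.

```python
def expected_values(small, large, probabilities):
    """(p, EV of Small/Certain, EV of Large/Risky) for each probability block."""
    return [(p, float(small), large * p) for p in probabilities]

def better_option(ev_small, ev_large):
    """Name the option with the higher expected value."""
    if ev_large > ev_small:
        return "large/risky"
    if ev_large < ev_small:
        return "small/certain"
    return "equal"

# Hypothetical magnitudes: 1 pellet (certain) vs 4 pellets (probabilistic).
for p, ev_small, ev_large in expected_values(1, 4, [1.0, 0.5, 0.25, 0.125]):
    print(f"p={p:<6} EV small={ev_small}  EV large={ev_large:<4} -> {better_option(ev_small, ev_large)}")
```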

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for Decision-Making Research

| Item | Function/Application | Example Use Case |
|---|---|---|
| Functional MRI (fMRI) | Non-invasive measurement of brain activity via Blood-Oxygen-Level-Dependent (BOLD) signal. | Correlating neural activity in striatum/amygdala with subjective anxiety [27] or risk-taking [30]. |
| Intracerebral Muscimol Infusions | GABA_A receptor agonist used for reversible, temporary neural inactivation. | Disconnecting neural pathways (e.g., BLA-NAc) to establish causal roles in probabilistic discounting [31]. |
| Skin Conductance Response (SCR) | Measure of autonomic arousal via changes in skin's electrical conductivity. | Quantifying somatic states during the IGT; absent in patients with amygdala damage [29]. |
| Pathway Pain & Sensory Evaluation System | Computerized thermal stimulator for calibrated application of painful/unpleasant stimuli. | Delivering precise unconditioned stimuli (US) in anticipatory anxiety paradigms [27]. |
| Operant Conditioning Chambers | Controlled environments for rodent behavior, equipped with levers, food dispensers, and sensors. | Training and testing rats on probabilistic discounting and other decision-making tasks [31]. |

The striatum, amygdala, and interconnected limbic structures are not primitive emotional centers but sophisticated computational hubs essential for adaptive decision-making under uncertainty. The striatum provides a mechanism for both flexible and habitual action selection, while the amygdala is crucial for assigning emotional value and processing the uncertainty of potential outcomes. Their function is defined by their position within integrated circuits, such as the PFC-BLA-NAc network, where a balance between subcortical drives for risk and cortical control determines behavioral output. The hippocampus further contributes by providing an episodic context for valuation under uncertainty. Disruptions within these specific subcortical circuits or their balance with cortical control can manifest as the maladaptive decision-making observed in conditions like addiction, OCD, and anxiety disorders. Future research and therapeutic development must therefore target the dynamic interactions within these networks rather than isolated brain regions.

Functional Specialization and Hemispheric Asymmetries in Uncertainty Processing

Understanding the neural architecture that supports decision-making under uncertainty represents a fundamental challenge in cognitive neuroscience. This whitepaper synthesizes current research on how the human brain, particularly through hemispheric asymmetries and functional specialization, processes incomplete information during decision formation. The capacity to navigate uncertain environments relies on a distributed network of cortical and subcortical regions that exhibit both lateralized and complementary functions. Recent large-scale neuroimaging studies, meta-analyses, and computational modeling have significantly advanced our understanding of these mechanisms, revealing consistent patterns of hemispheric asymmetry across multiple uncertainty types and decision contexts. This document provides an in-depth technical analysis of these neural substrates, focusing on their implications for research and drug development targeting decision-making pathologies.

The significance of this research extends beyond basic science to clinical applications, as disruptions in uncertainty processing networks are observed across numerous psychiatric and neurological conditions. By mapping the consistent neural correlates and their specialized roles, we establish a foundation for developing targeted interventions that can modulate specific components of the decision-making architecture.

Quantitative Synthesis of Hemispheric Asymmetry Findings

Large-scale studies reveal consistent patterns of functional asymmetry during cognitive tasks. The following tables synthesize key quantitative findings from recent research on hemispheric specialization, particularly in contexts involving uncertainty processing.

Table 1: Network-Level Asymmetry Patterns Across Cognitive Domains (HCP Data, n=989) [33]

| Cognitive Domain | Task Contrast | Left-Hemisphere Networks | Right-Hemisphere Networks | Association with Task Accuracy |
|---|---|---|---|---|
| Language | Story > Math | LAN, FPN, AUD, DMN, PMM, VMM | VIS, SMM | Strong positive in LAN & FPN |
| Motor | Right Hand/Foot > Baseline | Contralateral SMM | - | Strong positive in SMM |
| Motor | Left Hand/Foot > Baseline | - | Contralateral SMM | Strong positive in SMM |
| Social Cognition | Social > Random | LAN, FPN, AUD, DMN | VIS, SMM | Moderate positive |
| Relational Processing | Relation > Match | LAN, FPN, AUD, DMN | VIS, SMM | Strong positive in FPN |
| Working Memory | 2-back > 0-back | VIS, SMM | DMN | Moderate positive |
| Emotion | Face Matching > Shapes | - | DAN, FPN, PMM | Moderate positive |
| Gambling | Reward > Punishment | Minimal asymmetry | Minimal asymmetry | Weak association |
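A common way to summarize hemispheric asymmetry of this kind is a lateralization index, LI = (L - R) / (L + R), which runs from +1 (fully left-lateralized) to -1 (fully right-lateralized). The sketch below assumes non-negative inputs such as suprathreshold voxel counts; the asymmetry measure actually used in [33] may differ, and the numbers are hypothetical.

```python
def lateralization_index(left, right):
    """LI = (L - R) / (L + R): +1 fully left, -1 fully right, 0 symmetric.

    left, right: non-negative activation measures, e.g. suprathreshold voxel counts per hemisphere.
    """
    if left < 0 or right < 0:
        raise ValueError("inputs must be non-negative")
    total = left + right
    if total == 0:
        return 0.0  # no activation in either hemisphere: treat as symmetric
    return (left - right) / total

print(lateralization_index(300, 100))  # 0.5, left-lateralized
print(lateralization_index(100, 100))  # 0.0, symmetric
```

The non-negativity requirement is why counts or thresholded extents are typically used rather than raw contrast estimates, which can be negative and make the ratio unstable.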

Table 2: Meta-Analysis of Uncertainty Processing Clusters (76 fMRI studies, n=4,186) [12]

| Cluster | Volume (mm³) | Primary Regions | Hemispheric Distribution | Brodmann Areas | Proposed Functional Role |
|---|---|---|---|---|---|
| 1 | 4,664 | Anterior Insula (63.7%), IFG (16.2%), Claustrum (16.2%) | 100% Left | 13 (59.8%), 47 (10.6%), 45 (3.9%) | Motivational anticipation, reward evaluation |
| 2 | 3,736 | Cingulate Gyrus (52.9%), Medial FG (27.6%), Superior FG (19.5%) | 54.9% Left, 45.1% Right | 32 (43.6%), 6 (33.5%), 24 (21.8%) | Threat assessment, anxiety processing |
| 3 | 3,152 | Anterior Insula (61.3%), Claustrum (22.6%), IFG (12.3%) | 100% Right | 13 (53.8%), 47 (11.3%), 45 (2.8%) | Behavioral adaptation, feedback learning |
| 4 | 3,040 | Inferior Parietal Lobule (78.1%), Supramarginal Gyrus (14.7%) | 62.3% Left, 37.7% Right | 40 (78.1%), 2 (9.2%), 7 (4.6%) | Cognitive control, attentional shifting |
| 5 | 2,232 | Middle Frontal Gyrus (42.8%), Precentral Gyrus (34.9%) | 71.8% Left, 28.2% Right | 6 (58.3%), 4 (24.8%), 8 (9.7%) | Motor planning, action selection |
| 6 | 1,992 | Cerebellar Tonsil (58.3%), Inferior Semi-Lunar Lobule (29.4%) | 100% Right | - | Sensorimotor integration |
| 7 | 1,848 | Middle Temporal Gyrus (57.8%), Inferior Temporal Gyrus (32.6%) | 100% Left | 21 (57.8%), 20 (32.6%), 37 (7.1%) | Semantic processing, memory retrieval |
| 8 | 1,720 | Cuneus (48.9%), Middle Occipital Gyrus (31.2%) | 53.1% Left, 46.9% Right | 17 (48.9%), 18 (31.2%), 19 (17.4%) | Visual processing, uncertainty perception |
| 9 | 1,424 | Medial Frontal Gyrus (41.3%), Anterior Cingulate (38.7%) | 68.9% Left, 31.1% Right | 10 (41.3%), 32 (38.7%), 9 (17.5%) | Conflict monitoring, strategy switching |

Neural Architecture of Uncertainty Processing

Hemispheric Specialization in Uncertainty Networks

The neural processing of uncertainty during decision-making engages a distributed network with distinct hemispheric asymmetries. Meta-analytic evidence from 76 fMRI studies shows that the anterior insula contributes through two separate, fully lateralized clusters with distinct proposed roles: the left anterior insula (Cluster 1 in Table 2) is predominantly associated with reward evaluation and motivational anticipation during uncertainty, while the right anterior insula (Cluster 3) is more involved in behavioral adaptation and feedback-based learning [12]. This functional dissociation extends to prefrontal regions, where the right inferior frontal gyrus contributes to impulse control during uncertain choices, while the left counterpart supports more deliberate motor planning [12].

The cascade model of prefrontal executive function provides a theoretical framework for understanding these asymmetries, suggesting that the prefrontal cortex supports hierarchical control processes during decision-making under uncertainty [12]. According to this model, medial structures including the dorsal anterior cingulate cortex evaluate ongoing strategy reliability, while lateral prefrontal regions generate and maintain alternative strategies. This division of labor appears to respect hemispheric boundaries, with the left hemisphere specializing in sequential, analytical processing of uncertain outcomes, and the right hemisphere contributing to global, integrative assessment of uncertain contexts.

Decision-Making Phases and Hemispheric Contributions

Decision-making under uncertainty unfolds across distinct temporal phases, each engaging specialized hemispheric resources. The Iowa Gambling Task illustrates these temporal dynamics, with early trials (1-50) assessing decision-making under uncertainty (unknown payoffs) and later trials (51-100) assessing decision-making under risk (known payoffs) [34]. Evidence from patients with unilateral hemispheric damage demonstrates that the right prefrontal cortex is crucial for intertemporal decision-making, particularly in weighing long-term against short-term outcomes [34]. Frequency-based decision-making (choices based on reward-punishment frequency rather than long-term payoffs), by contrast, shows more complex patterns that may depend on intact interhemispheric communication.
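The two-phase split above can be scored with the net score conventionally used for the IGT, (C + D) - (A + B), in which decks A and B are the disadvantageous decks and C and D the advantageous ones. The sketch below is illustrative only: the 20-trial block size, the function names, and the example choice sequence are assumptions rather than details of the cited study [34].

```python
from typing import List, Sequence, Tuple

ADVANTAGEOUS = {"C", "D"}
DISADVANTAGEOUS = {"A", "B"}


def net_score(choices: Sequence[str]) -> int:
    """(C + D) - (A + B): positive values indicate a preference for advantageous decks."""
    good = sum(1 for c in choices if c in ADVANTAGEOUS)
    bad = sum(1 for c in choices if c in DISADVANTAGEOUS)
    return good - bad


def block_net_scores(choices: Sequence[str], block_size: int = 20) -> List[int]:
    """Net score per consecutive block of trials (hypothetical 20-trial blocks)."""
    return [net_score(choices[i:i + block_size]) for i in range(0, len(choices), block_size)]


def phase_net_scores(choices: Sequence[str], split: int = 50) -> Tuple[int, int]:
    """Net scores for trials 1-50 (uncertainty phase) and 51-100 (risk phase)."""
    return net_score(choices[:split]), net_score(choices[split:])


# Hypothetical participant: disadvantageous deck A early, advantageous deck C later.
choices = ["A"] * 50 + ["C"] * 50
print(phase_net_scores(choices))  # (-50, 50)
print(block_net_scores(choices))  # [-20, -20, 0, 20, 20]
```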

A single-case study of a patient with left-hemispheric atrophy and subsequent hemispherotomy revealed that unilateral right-hemisphere function supports basic intertemporal decision-making but produces altered patterns in phase-specific decisions [34]. Specifically, after disconnection of the left hemisphere, disadvantageous deck choices in the IGT became contingent on task progression immediately after surgery, but independent of progression after 12 months, suggesting that the right hemisphere can subserve decision-making but with qualitatively different strategic approaches compared to the intact bilateral system.

Experimental Paradigms and Methodologies

Standardized Protocols for Investigating Uncertainty Processing

Human Connectome Project (HCP) Asymmetry Protocol

The HCP employs a comprehensive multi-task fMRI battery to quantify functional asymmetry across cognitive domains [33]. The standardized protocol includes:

- Participants: 989 healthy adults with rigorous quality control (RMS head displacement < 2 mm)

- Imaging Parameters: High-resolution fMRI (3T) with surface-based analysis focusing on 91,281 grayordinates

- Task Battery: Seven domains (motor, language, social cognition, relational processing, working memory, gambling, emotion) with 17 contrasts

- Asymmetry Quantification: Calculation of a normalized asymmetry index (Δ) at 32,492 cortical vertices:

  Δ = (L - R) / (|L| + |R|), where L and R represent fMRI signal amplitudes at homologous vertices in the left and right hemispheres [33]; Δ therefore ranges from -1 (fully rightward) to +1 (fully leftward)

- Analysis Pipeline: Surface-based alignment using symmetrical templates to minimize anatomical variability and enhance detection of subtle asymmetry patterns

- Validation: Split-sample reproducibility analysis (Discovery n=504, Replication n=485) matched for age, sex, and BMI
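The asymmetry index above can be computed vertex-wise in a few lines. This is a minimal NumPy sketch assuming the left and right arrays are already aligned to homologous vertices on a symmetrical template; the function name, the handling of vertices where both amplitudes are zero, and the example values are assumptions, not part of the HCP pipeline [33].

```python
import numpy as np


def asymmetry_index(left, right):
    """Normalized asymmetry index, delta = (L - R) / (|L| + |R|), per homologous vertex.

    Positive values indicate leftward and negative values rightward asymmetry.
    Where both amplitudes are zero the index is undefined; this sketch assigns 0
    there (an assumption).
    """
    left = np.asarray(left, dtype=float)
    right = np.asarray(right, dtype=float)
    denom = np.abs(left) + np.abs(right)
    delta = np.zeros_like(denom)
    # Divide only where the denominator is non-zero; other entries keep the 0 default.
    np.divide(left - right, denom, out=delta, where=denom > 0)
    return delta


# Hypothetical amplitudes at four homologous vertices.
left = [2.0, 1.0, -1.0, 0.0]
right = [1.0, 1.0, 1.0, 0.0]
print(asymmetry_index(left, right))  # approximately 0.333, 0, -1, 0
```

Because |L - R| equals |L| + |R| whenever L and R have opposite signs, any vertex that activates in one hemisphere and deactivates in the other receives Δ = ±1 regardless of magnitude (the third vertex above), which is worth keeping in mind when maps include deactivations. For the split-sample check, the same function would be applied separately to the discovery and replication groups before comparing the resulting Δ maps.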

Decision-Based Payoff Uncertainty Measurement

A novel quantitative approach measures uncertainty based on observed decisions and outcomes rather than subjective beliefs [35]:

- Concept: Decision-based payoff (DBP) uncertainty quantifies how far observed decisions fall short of the decision that would be optimal with full information

- Calculation: DBP = Average relative regret = Average [(Optimal payoff - Actual payoff) / Optimal payoff]

- Properties: Ranges over [0, 1]; compatible with first- and second-order stochastic dominance; enables cross-problem comparisons

- Application: Demonstrated through investment in European call options under uncertain asset prices
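The relative-regret formula can be made concrete with a small payoff table. This is a minimal sketch under stated assumptions: payoffs are strictly positive (so each relative regret lies in [0, 1)), each column is a possible state of the world, and the action is chosen before the state is revealed. The function name, the optional state probabilities, and the cash-versus-option numbers are hypothetical and do not reproduce the European call option application in [35].

```python
import numpy as np


def dbp_uncertainty(payoffs, chosen, probs=None):
    """Decision-based payoff uncertainty as the average relative regret (sketch).

    payoffs: array of shape (n_actions, n_states) with strictly positive payoffs.
    chosen:  index of the action actually taken before the state was known.
    probs:   optional state probabilities (hypothetical; default = equally likely).
    """
    payoffs = np.asarray(payoffs, dtype=float)
    if np.any(payoffs <= 0):
        raise ValueError("relative regret assumes strictly positive payoffs")
    optimal = payoffs.max(axis=0)  # best payoff in each state with full information
    actual = payoffs[chosen]       # payoff of the decision actually made
    regret = (optimal - actual) / optimal
    return float(np.average(regret, weights=probs))


# Hypothetical problem: hold cash (row 0) or buy an option-like position (row 1)
# across three equally likely terminal asset prices (low, flat, high).
payoffs = [[100.0, 100.0, 100.0],
           [50.0, 100.0, 200.0]]
print(dbp_uncertainty(payoffs, chosen=0))  # approximately 0.1667 (1/6)
```

Either action here yields the same DBP of 1/6: holding cash forgoes the rally, while the option-like position underperforms in the low-price state. Weighting states by probability (for example `probs=[0.0, 0.0, 1.0]`) shifts the score toward the scenarios that matter most.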

Florida-And-Georgia (FLAG) Gambling Task

The FLAG task addresses limitations of traditional paradigms like the Iowa Gambling Task by isolating specific cognitive components [36]:

- Structure: 100 trials, each with Sampling phase (5 examples from deck) and Choice phase (deck vs. sure-thing offer)

- Design Advantages: Removes stimulus-response contingency; symmetrically varies magnitude/frequency of gains and losses; enables modeling of multiple cognitive biases

- Computational Modeling: Prospect Theory-inspired framework with parameters for:

  - Sensitivity to outliers (η+, η-): Utility function curvature for gains and losses

  - Primacy-recency bias (α): Weighting of early vs. recent outcomes

  - Loss aversion (λ): Asymmetric weighting of losses versus gains (though not consistently observed)

  - Inverse temperature (βr): Choice stochasticity in the decision rule

- Validation: 170 young adults; parameter recovery analyses support task's computational properties
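The parameters listed above can be combined into a single choice rule. The exact equations of the FLAG model are not given here, so the following is a hypothetical Prospect Theory-style parametrization chosen for illustration: power utility with separate curvatures for gains and losses (η+, η-) and a loss multiplier (λ), exponential position weights standing in for the primacy-recency bias (α), and a logistic choice between the deck and the sure-thing offer governed by the inverse temperature (βr). It should not be read as the model fitted in [36].

```python
import math
from typing import Sequence


def utility(x: float, eta_pos: float, eta_neg: float, lam: float) -> float:
    """Power utility: curvature eta_pos for gains, eta_neg for losses; losses scaled by lam."""
    if x >= 0:
        return x ** eta_pos
    return -lam * (-x) ** eta_neg


def deck_value(samples: Sequence[float], eta_pos: float, eta_neg: float,
               lam: float, alpha: float) -> float:
    """Weighted mean utility of the sampled outcomes.

    Hypothetical primacy-recency weighting: w_i = exp(alpha * i) over sample position i,
    so alpha > 0 favors recent samples, alpha < 0 favors early ones, alpha = 0 is uniform.
    """
    weights = [math.exp(alpha * i) for i in range(len(samples))]
    total = sum(weights)
    return sum(w * utility(x, eta_pos, eta_neg, lam)
               for w, x in zip(weights, samples)) / total


def p_choose_deck(samples: Sequence[float], sure_thing: float, eta_pos: float,
                  eta_neg: float, lam: float, alpha: float, beta_r: float) -> float:
    """Logistic (two-option softmax) choice between the deck and the sure-thing offer."""
    v_deck = deck_value(samples, eta_pos, eta_neg, lam, alpha)
    v_sure = utility(sure_thing, eta_pos, eta_neg, lam)
    return 1.0 / (1.0 + math.exp(-beta_r * (v_deck - v_sure)))


# Hypothetical trial: five sampled outcomes from the deck, then a sure-thing offer of 2.
samples = [10, -10, 10, -10, 10]
print(p_choose_deck(samples, 2, eta_pos=1.0, eta_neg=1.0, lam=1.0, alpha=0.0, beta_r=1.0))  # 0.5
```

Setting `lam=2.0` in the example lowers the deck's value below the sure thing, and a positive `alpha` makes the final samples dominate the valuation.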

CogLink Architecture for Hierarchical Uncertainty

The CogLink framework provides a biologically grounded neural architecture for hierarchical decision-making under uncertainty [18] [37]. This computational model bridges neural mechanisms with cognitive function through several key components:

- Basic Network: Models premotor cortico-thalamic-basal ganglia loops for reinforcement learning and efficient exploration

- Augmented Network: Incorporates associative cortico-thalamic-basal ganglia loops with mediodorsal thalamus and prefrontal cortex interactions for contextual inference and strategy switching

- Quantile Population Coding: Basal ganglia neurons encode action-value distributions using quantile codes, enabling uncertainty representation

- Neural Implementation: Rate-based neurons with dopamine-dependent plasticity mechanisms for online learning

- Specialization: Different circuits handle distinct uncertainty types (outcome uncertainty vs. associative uncertainty)

The CogLink architecture successfully reproduces animal behavior in hierarchical tasks and provides insight into neural mechanisms underlying complex decision-making, including perturbations relevant to schizophrenia [18].
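The quantile population code described above can be illustrated with the standard quantile-regression update used in distributional reinforcement learning. This is a generic sketch, not CogLink's implementation [18]: each hypothetical unit i tracks quantile level τ_i of the reward distribution by stepping up by lr·τ_i when a reward is at or above its estimate and down by lr·(1 - τ_i) otherwise, and the spread across units then serves as a readout of outcome uncertainty. The unit count, learning rate, and uniform reward source are assumptions, and no dopamine-dependent plasticity is modeled.

```python
import random
from typing import Callable, List, Tuple


def learn_quantiles(sample_reward: Callable[[random.Random], float],
                    n_units: int = 9, lr: float = 0.005,
                    n_steps: int = 40000, seed: int = 0) -> Tuple[List[float], List[float]]:
    """Each hypothetical unit learns one quantile of the reward distribution online."""
    rng = random.Random(seed)
    taus = [(i + 0.5) / n_units for i in range(n_units)]  # evenly spaced quantile levels
    theta = [0.0] * n_units                                # each unit's current estimate
    for _ in range(n_steps):
        r = sample_reward(rng)
        for i, tau in enumerate(taus):
            # Asymmetric step: +lr*tau if r >= estimate, -lr*(1 - tau) if r < estimate.
            below = 1.0 if r < theta[i] else 0.0
            theta[i] += lr * (tau - below)
    return taus, theta


def quantile_spread(theta: List[float]) -> float:
    """Range between the outermost learned quantiles: a simple uncertainty readout."""
    return max(theta) - min(theta)


# Rewards drawn uniformly from [0, 1): each learned quantile should approach its tau.
taus, theta = learn_quantiles(lambda rng: rng.random())
print([round(t, 2) for t in theta])
```

Replacing the uniform reward with a wider or bimodal distribution increases `quantile_spread`, which is how a population of such units can signal outcome uncertainty without a separate variance estimate.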

The Scientist's Toolkit: Research Reagents and Methodologies

Table 3: Essential Research Tools for Investigating Uncertainty Processing

| Tool/Reagent | Specifications | Primary Function | Example Applications |
|---|---|---|---|
| HCP Task fMRI Battery | 7 domains, 17 contrasts, 989 participants | Quantifying functional asymmetry across cognitive domains | Mapping network-specific lateralization patterns [33] |
| Activation Likelihood Estimation (ALE) | GingerALE 3.0.2, cluster-level p<0.05 correction | Voxel-wise meta-analysis of neuroimaging foci | Identifying consistent neural correlates across studies [12] |
| Asymmetry Index (Δ) | Δ = (L - R) / (\|L\| + \|R\|) | Normalized difference in hemispheric activation | Threshold-independent asymmetry quantification [33] |
| FLAG Task Computational Model | Prospect Theory framework with 4+ parameters | Decomposing decision-making into cognitive biases | Isolating sensitivity to outliers, primacy-recency effects [36] |
| CogLink Neural Architecture | Rate neurons, quantile population codes | Modeling hierarchical decision-making under uncertainty | Linking neural dysfunction to computational psychiatry [18] |
| Decision-Based Payoff Uncertainty | DBP = Average relative regret [0,1] | Quantifying informational uncertainty from observed payoffs | Evaluating decision quality across uncertain environments [35] |
| Iowa Gambling Task (IGT) | 100 trials, uncertainty/risk phases | Assessing decision-making under ambiguity | Studying ventromedial PFC and right hemisphere contributions [34] |
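The ALE approach listed in Table 3 can be sketched as follows. Each reported focus is blurred with a 3D Gaussian whose values are normalized to the probability that the focus lies in each voxel; an experiment's modeled activation (MA) map takes the voxel-wise maximum over its foci, and the ALE score is the probabilistic union across experiments, 1 - Π(1 - MA). This is a toy NumPy sketch, not GingerALE: the fixed 10 mm FWHM, the small grid in millimeter coordinates, and the example foci are assumptions, and GingerALE additionally uses sample-size-dependent kernel widths and permutation-based cluster-level thresholding, both omitted here.

```python
import numpy as np


def modeled_activation(foci_mm, grid_shape, voxel_mm, fwhm_mm):
    """MA map for one experiment: voxel-wise max over Gaussian-blurred foci."""
    sigma = fwhm_mm / (2.0 * np.sqrt(2.0 * np.log(2.0)))          # FWHM -> standard deviation
    centers = np.indices(grid_shape).reshape(3, -1).T * voxel_mm  # voxel centers in mm
    ma = np.zeros(centers.shape[0])
    for focus in foci_mm:
        d2 = np.sum((centers - np.asarray(focus, dtype=float)) ** 2, axis=1)
        p = np.exp(-d2 / (2.0 * sigma ** 2))
        p /= p.sum()            # probability that this focus lies in each voxel
        ma = np.maximum(ma, p)  # max, not sum, so nearby foci of one experiment do not stack
    return ma.reshape(grid_shape)


def ale_map(experiments, grid_shape, voxel_mm=2.0, fwhm_mm=10.0):
    """ALE = 1 - prod_j (1 - MA_j): probabilistic union across experiments."""
    not_active = np.ones(grid_shape)
    for foci in experiments:
        not_active *= 1.0 - modeled_activation(foci, grid_shape, voxel_mm, fwhm_mm)
    return 1.0 - not_active


# Hypothetical: three experiments, two reporting nearby foci (grid mm, not MNI coordinates).
experiments = [[(20.0, 20.0, 20.0)], [(22.0, 20.0, 20.0)], [(6.0, 30.0, 10.0)]]
ale = ale_map(experiments, grid_shape=(21, 21, 21))
print(np.unravel_index(np.argmax(ale), ale.shape))
```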

The neural architecture supporting uncertainty processing demonstrates remarkable functional specialization and hemispheric asymmetry. Consistent patterns emerge across multiple methodologies: the left hemisphere shows dominance in reward evaluation and motivational anticipation, particularly through the anterior insula and inferior frontal gyrus, while the right hemisphere specializes in behavioral adaptation and integrative assessment. These asymmetries are not absolute but represent complementary processing strengths that together support flexible decision-making in uncertain environments.

The research tools and methodologies outlined in this whitepaper provide a foundation for advancing both basic science and clinical applications. Future research should focus on elucidating the dynamic interactions between these specialized systems, their development across the lifespan, and their disruption in clinical populations. For drug development professionals, these findings highlight potential targets for modulating specific components of the decision-making architecture in conditions characterized by uncertainty processing deficits, including anxiety disorders, depression, and schizophrenia.

Methodological Approaches and Translational Applications in Biomedicine

Understanding the neural substrates of decision-making under uncertainty represents a fundamental challenge in cognitive neuroscience, with significant implications for developing interventions for neurological and psychiatric conditions. This whitepaper provides an in-depth technical examination of contemporary neuroimaging paradigms, focusing on the complementary strengths of functional magnetic resonance imaging (fMRI) and electroencephalography (EEG) for elucidating brain mechanisms during task-based activation studies. The integration of these multimodal techniques offers researchers a powerful approach to capture both the spatial precision of hemodynamic responses and the temporal dynamics of electrical neural activity, enabling comprehensive mapping of the complex networks governing uncertain decision processes. We synthesize current methodological approaches, experimental findings, and analytical frameworks to equip researchers and drug development professionals with the technical knowledge necessary to design robust neuroimaging studies that can identify biomarkers and evaluate therapeutic interventions targeting decision-making pathologies.

Recent meta-analytic evidence indicates that decision-making under uncertainty engages a distributed neural network encompassing prefrontal, striatal, and insular regions, with hemispheric specialization in cognitive and emotional processing [12]. The anterior cingulate cortex (ACC) and anterior insula serve as integrative hubs for cognitive and emotional signals during uncertainty, forming a core system that evaluates the reliability of ongoing strategies and generates alternative approaches when predictability breaks down [12]. Furthermore, well-designed task-based fMRI paradigms have been shown to significantly outperform resting-state protocols in predicting behavioral outcomes, underscoring the importance of paradigm selection in research and clinical trial design [38].

Neuroimaging Modalities: Technical Foundations and Comparative Analysis

fMRI and EEG: Principles and Complementarity