Breaking Sensitivity Barriers: Advanced Strategies for Detecting Low-Abundance Signaling Targets in Drug Discovery


Abstract

Detecting low-abundance signaling proteins like cytokines and transcription factors is a monumental challenge in biochemical assay development, directly impacting the success of target identification and drug discovery. This article provides a comprehensive guide for researchers and drug development professionals, exploring the foundational challenges of the plasma proteome's dynamic range, evaluating advanced methodological solutions from affinity-based probes to targeted mass spectrometry, offering practical troubleshooting for optimization, and establishing a framework for rigorous cross-platform validation. By synthesizing the latest technological advancements and comparative performance data, this resource aims to equip scientists with the knowledge to select, optimize, and validate highly sensitive assays for the most elusive targets.

The Low-Abundance Challenge: Understanding the Dynamic Range and Complexity of Signaling Targets

The human plasma proteome is an immense reservoir of biological information that reflects an individual's physiological and pathological states. However, its comprehensive analysis is complicated by an extreme dynamic range of protein concentrations that spans 10 to 11 orders of magnitude [1] [2] [3]. This range extends from high-abundance proteins such as albumin (roughly 35-50 mg/mL, within a total plasma protein concentration of about 60-80 mg/mL) to rare signaling proteins and tissue leakage products present at picogram-per-milliliter levels or lower [2] [3]. Because of this disparity, a handful of highly abundant proteins account for approximately 99% of the total protein mass, obscuring clinically significant, low-abundance biomarkers [2]. The troubleshooting guidance and FAQs below are intended to help researchers overcome these challenges and improve the sensitivity of their assays for low-abundance signaling targets.
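To make that span concrete, the Python sketch below converts two concentrations to pg/mL and counts the base-10 orders of magnitude between them. The inputs (albumin at 40 mg/mL, a cytokine at 1 pg/mL) are illustrative assumptions, and the helper names are hypothetical rather than part of any library.

```python
import math

# Multipliers that convert each unit to picograms per millilitre (pg/mL).
TO_PG_PER_ML = {"mg/mL": 1e9, "ug/mL": 1e6, "ng/mL": 1e3, "pg/mL": 1.0}


def to_pg_per_ml(value, unit):
    """Convert a concentration expressed in `unit` to pg/mL."""
    return value * TO_PG_PER_ML[unit]


def orders_of_magnitude(high, low):
    """Base-10 orders of magnitude separating two concentrations."""
    return math.log10(high / low)


# Illustrative (assumed) values, not measurements.
albumin = to_pg_per_ml(40, "mg/mL")
cytokine = to_pg_per_ml(1, "pg/mL")
span = orders_of_magnitude(albumin, cytokine)
print(f"Albumin vs. cytokine: {span:.1f} orders of magnitude")
```

A span of roughly 10.6 orders is more than twice the 4-5 order window typically quoted for a mass spectrometer, which is why depletion or enrichment is needed before low-abundance signals become visible.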

Frequently Asked Questions (FAQs)

1. What exactly is meant by the "dynamic range problem" in plasma proteomics? The dynamic range problem refers to the technical challenge of detecting and quantifying low-abundance proteins in plasma when they are dwarfed by a few highly abundant proteins. The concentration difference between the most abundant and least abundant proteins can exceed 10 billion-fold (10 orders of magnitude), which is beyond the intrinsic detection range of most analytical instruments [1] [2]. This makes it difficult to observe low-abundance signaling proteins, cytokines, and potential disease biomarkers without specialized sample preparation or enrichment techniques.

2. Why can't mass spectrometry alone detect low-abundance biomarkers in neat plasma? In standard bottom-up mass spectrometry (MS) workflows, the majority of detected peptide signals originate from the most abundant plasma proteins. The signals from low-abundance proteins can be lost in the chemical noise or simply not triggered for sequencing due to their low intensity [2]. While MS instruments themselves have a dynamic range of around 4-5 orders of magnitude, this is insufficient to cover the full range of plasma proteins without pre-fractionation or enrichment strategies [2].

3. What are the key advantages of affinity-based platforms like Olink or SomaScan for detecting low-abundance proteins? Affinity-based platforms use targeted binders (antibodies or aptamers) to specifically capture and amplify the signal from proteins of interest. Key advantages include:

- High Sensitivity: Technologies like NULISA have demonstrated particularly high sensitivity and a low limit of detection [4].

- Multiplexing: They allow for the simultaneous measurement of hundreds to thousands of proteins from small sample volumes [4].

- Specificity: Platforms like Olink that use a proximity extension assay require two different antibodies to bind the target, mitigating issues of non-specific binding and improving specificity [4].

4. My Western blot signals for a low-abundance signaling protein are faint or non-existent. What should I check first? Begin with these fundamental checks [5]:

- Antibody Specificity: Confirm your primary antibody is validated for Western blotting and recognizes the specific target epitope.

- Sample Preparation: Ensure efficient protein extraction and use protease inhibitors to prevent degradation of your low-abundance target.

- Detection System: Switch to a high-sensitivity chemiluminescent substrate, which can detect proteins down to the attogram level, offering a significant sensitivity boost over conventional ECL substrates.

Troubleshooting Guides

Problem: Inconsistent or Failed Detection of Low-Abundance Proteins in Mass Spectrometry

Background: This is a common issue in discovery proteomics where the goal is to identify novel, low-level biomarkers.

Step-by-Step Diagnosis:

Assess Sample Quality:

- Check: Review sample collection and storage conditions. Improper handling can degrade low-abundance proteins faster than abundant ones [3].

- Action: Implement standard operating procedures (SOPs) for plasma collection that specify a single, consistent anticoagulant (e.g., EDTA or citrate), rapid processing, and freezing at -80°C.

Evaluate Dynamic Range Compression Strategy:

- Check: Determine if high-abundance protein depletion or other enrichment methods were used. Without them, your MS runs are likely dominated by albumin, immunoglobulins, and other high-abundance proteins [6] [2].

- Action: Incorporate an immunoaffinity depletion column that removes the 14 to 20 most abundant proteins (e.g., MARS-14, which targets the top 14) [6]. Alternatively, consider nanoparticle-based enrichment methods to increase coverage of the low-abundance proteome [4].

Verify MS Instrument and Method Performance:

- Check: Look at the total number of proteins identified and the coefficient of variation (CV) between replicate runs. High CVs and low protein counts indicate technical issues [2].

- Action: Benchmark your system using a standardized sample set. A recent multicenter study demonstrated that Data-Independent Acquisition (DIA) methods provide superior reproducibility (CVs of 3.3-9.8% at the protein level) and quantification accuracy compared to Data-Dependent Acquisition (DDA) for complex plasma samples [2].
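A minimal sketch of the replicate CV check described above, assuming protein-level intensities have already been exported as a mapping from protein ID to replicate values. The protein names, the intensities, and the 20% flag threshold are hypothetical choices, not values from the cited study.

```python
import statistics


def coefficient_of_variation(values):
    """Percent CV: sample standard deviation divided by the mean, times 100."""
    return statistics.stdev(values) / statistics.mean(values) * 100


def flag_high_cv(replicates, threshold=20.0):
    """Return per-protein CVs and the sorted IDs whose CV exceeds `threshold` (%)."""
    cvs = {protein: coefficient_of_variation(vals) for protein, vals in replicates.items()}
    flagged = sorted(protein for protein, cv in cvs.items() if cv > threshold)
    return cvs, flagged


# Hypothetical protein-level intensities from three replicate injections.
replicates = {
    "PROT_A": [1.00e6, 1.05e6, 0.97e6],
    "PROT_B": [2.0e4, 3.1e4, 1.4e4],
}
cvs, flagged = flag_high_cv(replicates)
print(flagged)
```

Low-intensity proteins such as the hypothetical PROT_B typically show the highest CVs, so plotting CV against intensity is a quick way to see where quantification stops being reliable.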

Preventive Measures:

- Always include a standardized control plasma sample in your MS batches to monitor platform performance over time.

- For the most accurate quantification, use targeted MS workflows with heavy-labeled internal standards (e.g., SureQuant PRM) [4].
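One common way to track a standardized control plasma over time is a Levey-Jennings-style control chart: estimate a mean and standard deviation from baseline runs, then flag later runs outside mean ± 3 SD. The sketch below assumes a single summary metric per run (here, hypothetical protein identification counts); the 3 SD limit is a conventional choice, not one prescribed by the cited sources.

```python
import statistics


def control_limits(baseline, k=3.0):
    """Lower and upper limits at mean +/- k sample standard deviations."""
    mean = statistics.mean(baseline)
    sd = statistics.stdev(baseline)
    return mean - k * sd, mean + k * sd


def out_of_control(runs, limits):
    """Indices of runs whose metric falls outside the control limits."""
    low, high = limits
    return [i for i, value in enumerate(runs) if not low <= value <= high]


# Hypothetical protein identifications per QC plasma run.
baseline = [612, 605, 598, 620, 609, 615]
new_runs = [607, 611, 540, 603]
limits = control_limits(baseline)
print(out_of_control(new_runs, limits))
```

The same function works for any per-run QC metric (median CV, retention-time drift, intensity of a sentinel peptide); tracking several metrics separately helps distinguish chromatography problems from sample-preparation problems.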

Problem: High Background or Low Signal-to-Noise in Immunoassays

Background: This problem affects both Western blotting and multiplex affinity assays, reducing confidence in the quantification of low-abundance targets.

Step-by-Step Diagnosis:

Investigate Antibody Performance:

- Check: Confirm the specificity and titer of your primary and secondary antibodies.

- Action: Run a positive control sample known to express the target protein. For Western blots, verify that the antibody recognizes a single band at the expected molecular weight [5]. For multiplex assays, consult the vendor's validation data.

Optimize Assay Conditions:

- Check: Evaluate blocking conditions and wash stringency. Inadequate blocking causes high background, while over-washing can elute your target signal [7].

- Action: Test different blocking buffers (e.g., BSA, non-fat milk, commercial blockers) and systematically adjust the number and duration of wash steps, changing only one variable at a time [7].

Confirm Target Accessibility:

- Check: In Western blotting, ensure complete transfer of the target protein from the gel to the membrane, especially for high or low molecular weight proteins [5].

- Action: Use a gel chemistry appropriate for your target's size (e.g., Tris-Acetate for high MW, Tricine for low MW) and a transfer method (e.g., dry electroblotting) that ensures high efficiency [5].

Preventive Measures:

- Perform a pilot experiment with a serial dilution of your sample to determine the optimal loading amount and antibody concentration.

- Document all optimization steps meticulously in your lab notebook to create a reliable protocol for future use [7].
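For the pilot serial dilution, it helps to plan concentrations and pipetting volumes up front. This sketch assumes a simple serial scheme with a constant dilution factor and a constant volume per tube; `serial_dilution` is a hypothetical helper, and the starting concentration is a placeholder.

```python
def serial_dilution(start_conc, factor, steps, final_volume):
    """Plan a serial dilution with a constant dilution factor.

    Each tube is prepared by adding `transfer` from the previous tube to
    `diluent`, giving `final_volume` before the next transfer is removed.
    Concentrations keep the units of `start_conc`; volumes keep the units
    of `final_volume`. Returns a list of (concentration, transfer, diluent).
    """
    transfer = final_volume / factor
    diluent = final_volume - transfer
    plan = []
    conc = start_conc
    for _ in range(steps):
        conc /= factor
        plan.append((conc, transfer, diluent))
    return plan


# Hypothetical: lysate at 2.0 mg/mL, 2-fold steps, 100 uL per tube.
for conc, transfer, diluent in serial_dilution(2.0, 2, 4, 100):
    print(f"{conc:.3f} mg/mL: {transfer:.0f} uL previous + {diluent:.0f} uL diluent")
```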

Comparative Performance of Proteomics Platforms

The following table summarizes the key characteristics of modern platforms used to tackle the plasma proteome dynamic range, based on a recent large-scale comparison [4].

Table 1: Comparison of Plasma Proteomics Platforms for Low-Abundance Protein Detection

| Platform Type | Example Platforms | Approximate Protein Coverage | Key Advantages | Key Limitations / Considerations |
|---|---|---|---|---|
| Affinity-Based | SomaScan 11K | 10,776 assays | High throughput, large multiplexing capacity | Specificity depends on single aptamer binder; can be matrix-sensitive [4] |
| Affinity-Based | Olink Explore 3072/5416 | 2,925 / 5,416 assays | High specificity via proximity extension assay | Limited to pre-selected target panels [4] |
| Affinity-Based | NULISA | 377 assays (combined panels) | Very high sensitivity and low limit of detection | Lower proteome coverage than larger panels [4] |
| MS-Based (Discovery) | MS-Nanoparticle (Seer) | ~6,000 proteins | Unbiased, can detect novel proteins and isoforms | Higher cost, limited throughput, requires specialized expertise [4] |
| MS-Based (Discovery) | MS-HAP Depletion (Biognosys) | ~3,500 proteins | Unbiased, reduced complexity via depletion | Depth of coverage less than nanoparticle enrichment [4] |
| MS-Based (Targeted) | MS-IS Targeted (SureQuant) | ~500 proteins | "Gold standard" for quantification; high reliability with internal standards | Low multiplexing capacity; targets must be pre-defined [4] |

Essential Research Reagent Solutions

Table 2: Key Reagents for Enhancing Assay Sensitivity

| Reagent / Kit | Function | Application Context |
|---|---|---|
| Immunoaffinity Depletion Columns (e.g., MARS-14) | Removes the top 14 highly abundant plasma proteins (e.g., albumin, IgG) to reveal the lower-abundance proteome [6]. | Sample preparation for deep plasma MS analysis. |
| Surface-Modified Magnetic Nanoparticles (e.g., Seer Proteograph) | Enriches for a broader range of low-to-medium abundance proteins based on physicochemical properties, significantly increasing proteome coverage [4]. | Sample preparation for deep plasma MS analysis. |
| High-Sensitivity Chemiluminescent Substrates (e.g., SuperSignal West Atto) | Provides ultra-sensitive detection for Western blotting, capable of detecting target proteins down to the attogram level [5]. | Final detection step in Western blotting for low-abundance targets. |
| Micro/Nanofluidic Preconcentration Chips | Physically concentrates charged biomolecules (enzymes, substrates) from a larger volume into a much smaller one, enhancing local concentration and reaction rates [8]. | Enhancing reaction kinetics and sensitivity for low-concentration enzyme assays. |
| Tandem Mass Tag (TMT) Reagents | Allows multiplexed quantitative analysis of multiple samples (e.g., 10-plex) in a single MS run, reducing instrument time and improving quantitative precision [6]. | Multiplexed quantitative proteomics. |

Experimental Workflow Visualization

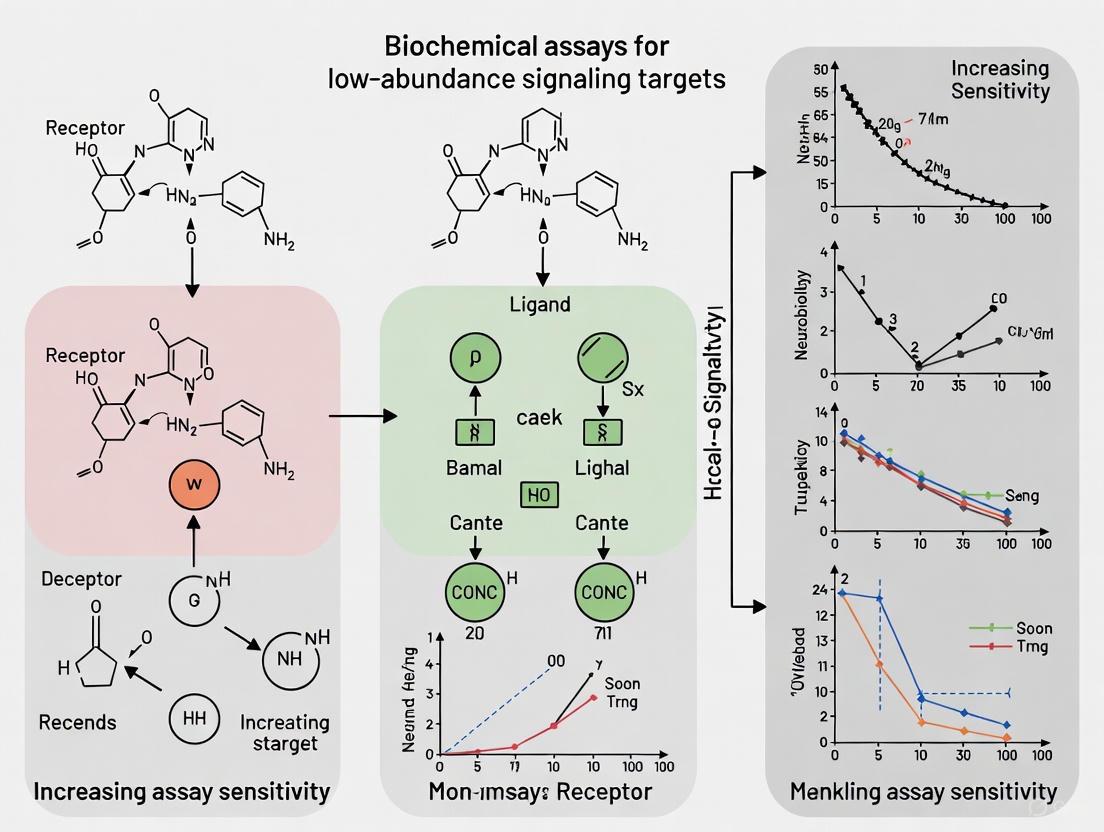

The following diagram illustrates a generalized, optimized workflow for the detection of low-abundance proteins in plasma, integrating strategies from multiple platforms.

Optimized Workflow for Low-Abundance Protein Detection

Advanced Methodology: Microfluidic Preconcentration for Enzyme Assays

For researchers focusing on low-abundance enzymes, a detailed protocol for enhancing reaction rates and sensitivity is provided below [8].

Objective: To significantly increase the reaction rate and lower the limit of detection for low-abundance enzyme assays by preconcentrating both the enzyme and its substrate using a micro/nanofluidic chip.

Materials:

- PDMS Preconcentration Chip: A poly(dimethylsiloxane) device with a surface-patterned ion-selective membrane (e.g., Nafion resin) [8].

- Enzyme and Substrate: e.g., Trypsin and BODIPY FL casein.

- Standard buffers and equipment for microfluidics.

Protocol:

- Chip Fabrication: Fabricate the microchannel in PDMS using replica molding. Pattern a thin planar Nafion film (acting as the ion-selective membrane) on a glass slide via microcontact printing or microflow patterning. Bond the PDMS chip to the glass slide via plasma bonding [8].

- Sample Loading: Introduce the low-concentration mixture of the enzyme and its fluorogenic substrate into the microfluidic device.

- Preconcentration: Apply an electric field. The ion-selective membrane allows the passage of small ions but blocks large charged molecules like proteins and peptides. This results in the continuous accumulation and trapping of the enzyme and substrate molecules in a small volume within the device [8].

- On-Chip Reaction: Allow the enzymatic reaction to proceed within the concentrated plug. The significantly increased local concentrations of both reactants lead to a dramatically enhanced reaction rate.

- Detection: Measure the resulting fluorescent signal from the reaction products. The preconcentration step also increases the concentration of the fluorescent products, leading to a higher signal-to-noise ratio.

Expected Outcomes: This method has been shown to reduce the reaction time required to turn over substrates at 1 ng/mL from ~1 hour to ~10 minutes. Furthermore, it can enhance the sensitivity of detection by ~100-fold, allowing for the measurement of trypsin activity down to 10 pg/mL [8].
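The faster turnover can be rationalized with basic enzyme kinetics. If the substrate is well below K_m, substrate decays with a pseudo-first-order rate constant (k_cat/K_m)[E], so the time needed to convert a given fraction scales as 1/[E], and concentrating the enzyme C-fold shortens it roughly C-fold. The sketch below illustrates only this idealized model. The k_cat/K_m value, enzyme concentration, and 6-fold enrichment are hypothetical, and the model ignores the time needed to build the concentrated plug and any change in enzyme activity inside it.

```python
import math


def time_to_convert(fraction, kcat_over_km, enzyme_conc):
    """Seconds to convert `fraction` of substrate, assuming [S] << Km.

    In that regime d[S]/dt = -(kcat/Km)[E][S], so
    [S](t) = [S]0 * exp(-(kcat/Km)[E] t). Units: kcat/Km in M^-1 s^-1,
    enzyme_conc in M. Substrate concentration cancels out of the
    fractional conversion time; concentrating it raises product signal.
    """
    k_obs = kcat_over_km * enzyme_conc  # pseudo-first-order rate constant, s^-1
    return -math.log(1.0 - fraction) / k_obs


# Hypothetical parameters, for illustration only.
kcat_over_km = 1e5        # M^-1 s^-1
enzyme = 1e-9             # M, enzyme in the bulk sample
enrichment = 6            # assumed on-chip concentration factor for the enzyme

t_bulk = time_to_convert(0.5, kcat_over_km, enzyme)
t_chip = time_to_convert(0.5, kcat_over_km, enzyme * enrichment)
print(f"bulk: {t_bulk / 60:.0f} min, preconcentrated: {t_chip / 60:.0f} min")
```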

The accurate detection of low-abundance signaling targets such as cytokines, transcription factors, and cell surface receptors is pivotal for advancing research in immunology, oncology, and drug development. These molecules often exist at minute concentrations but exert critical regulatory functions, making their reliable measurement essential for understanding disease mechanisms and therapeutic efficacy. Traditional detection methods frequently encounter limitations in sensitivity, specificity, and dynamic range when targeting these biomolecules. The troubleshooting guides and detailed protocols in this section are designed to overcome these barriers and improve the sensitivity and reliability of assays for low-abundance targets.

Troubleshooting Common Assay Limitations

Flow Cytometry Troubleshooting for Cell Surface Receptors

Q: What should I do if I detect no signal or weak fluorescence intensity when analyzing low-abundance cell surface receptors?

A: Weak or absent signal in flow cytometry for low-abundance targets can stem from multiple technical factors. The table below summarizes common causes and solutions.

Table: Troubleshooting Weak Signal in Flow Cytometry

| Potential Cause | Recommended Solution |
|---|---|
| Suboptimal antibody concentration | Titrate antibody concentration for your specific cell type; use bright fluorochromes for rare proteins [9]. |
| Target inaccessibility | Verify protein location; use appropriate fixation/permeabilization; keep cells on ice to prevent antigen internalization [9]. |
| Improper laser/filter configuration | Check excitation/emission spectra for your fluorochrome; ensure proper laser alignment using calibration beads [9]. |
| Fluorochrome degradation | Protect samples from light exposure; minimize fixation time for tandem dyes [9]. |

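For low-abundance intracellular targets such as transcription factors, make sure the permeabilization method gives antibodies access to the relevant compartment (for nuclear proteins, a nuclear permeabilization protocol). Cytokines are normally secreted, so for intracellular cytokine staining use secretion inhibitors such as Brefeldin A to retain them within cellular compartments for detection [9].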
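The titration advice in the table can be made quantitative with the stain index, a widely used metric defined as (median of positive population - median of negative population) / (2 x SD of the negative population). The sketch assumes you have exported those three statistics for each antibody amount; the titration numbers are invented for illustration, and `best_titer` is a hypothetical helper.

```python
def stain_index(median_pos, median_neg, sd_neg):
    """Stain index: separation of populations relative to negative-population spread."""
    return (median_pos - median_neg) / (2.0 * sd_neg)


def best_titer(titration):
    """Antibody amount with the highest stain index.

    `titration` maps antibody amount (e.g. ug per test) to a tuple of
    (median_pos, median_neg, sd_neg).
    """
    scores = {amount: stain_index(*stats) for amount, stats in titration.items()}
    return max(scores, key=scores.get), scores


# Hypothetical titration of an antibody against a dim receptor.
titration = {
    0.125: (1800, 150, 60),
    0.25: (3200, 180, 70),
    0.5: (4100, 260, 110),
    1.0: (4500, 420, 190),
}
best, scores = best_titer(titration)
print(best, round(scores[best], 1))
```

Note how the highest antibody amount gives the brightest positives but not the best separation: rising background and spread on the negative population lower its stain index.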

Q: How can I reduce high background fluorescence that is masking signals from rare cell populations?

A: High background can significantly compromise detection sensitivity for low-abundance targets.

- Cell Quality: Use fresh cells or briefly fixed cells to minimize autofluorescence. Always include unstained controls and employ viability dyes to distinguish dead cells that exhibit nonspecific binding [9].

- Non-specific Binding: Use Fc receptor blocking reagents to prevent antibody binding via Fc regions rather than antigen-specific Fab regions [9].

- Spillover Spreading: High background can result from poor compensation or spillover spreading in multicolor panels. Use single-color controls and fluorescence-minus-one (FMO) controls to accurately set gates and assess background [9].
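One way to apply an FMO control is to set the positive gate at a high percentile of the FMO distribution, so that only a small, known fraction of background events can fall inside it. The 99.5th percentile below is a common convention rather than a value from the cited source, and the events are simulated Gaussian intensities, not real cytometry data.

```python
import math
import random


def percentile(values, pct):
    """Nearest-rank percentile of `values` (pct between 0 and 100)."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100.0 * len(ordered)))
    return ordered[rank - 1]


def percent_positive(events, gate):
    """Percentage of events strictly above the gate."""
    return 100.0 * sum(value > gate for value in events) / len(events)


# Simulated intensities (assumption): the FMO contains only negative events;
# the fully stained sample is 90% negative plus 10% dimly positive events.
rng = random.Random(0)
fmo = [rng.gauss(100, 30) for _ in range(10_000)]
stained = [rng.gauss(100, 30) for _ in range(9_000)]
stained += [rng.gauss(400, 60) for _ in range(1_000)]

gate = percentile(fmo, 99.5)
print(f"gate = {gate:.0f}, positive = {percent_positive(stained, gate):.1f}%")
```

Because the gate comes from the FMO rather than an unstained tube, it already includes spillover from the other fluorochromes in the panel, which is exactly what inflates background for dim targets.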

ELISA Sensitivity Optimization for Cytokine Detection

Q: How can I improve the sensitivity of my ELISA to detect low-abundance cytokines?

A: Achieving high sensitivity in ELISA is critical for detecting low-abundance cytokines. Key strategies include optimizing reagent preparation, incubation conditions, and detection parameters.

Table: Troubleshooting Low Sensitivity in ELISA

| Potential Cause | Recommended Solution |
|---|---|
| Target present below detection limit | Decrease the sample dilution factor or pre-concentrate your samples [10]. |
| Incompatible sample type or assay buffer | Include a known positive control; ensure assay buffer is compatible with your target [10]. |
| Inactive or insufficient substrate | Increase substrate concentration or amount; ensure enzyme reporter is active [10]. |
| Interfering buffer components | Check for sodium azide (inhibits HRP) or EDTA in samples; avoid mixing reagents from different kits [10]. |
| Improper reagent storage | Store all reagents as recommended; use fresh aliquots to avoid repeated freeze-thaw cycles [10]. |
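When a cytokine reads near the bottom of the standard curve, it helps to see how the reported concentration is derived. Many ELISA analyses fit a four-parameter logistic (4PL) standard curve and invert it; this sketch assumes the 4PL parameters were already fitted elsewhere (the values shown are hypothetical), rejects readings below a user-supplied lower limit of quantification (LLOQ), and only then multiplies by the dilution factor.

```python
def four_pl(x, a, b, c, d):
    """4PL curve: a = response at zero, d = response at saturation,
    c = inflection concentration, b = slope factor."""
    return d + (a - d) / (1.0 + (x / c) ** b)


def inverse_four_pl(y, a, b, c, d):
    """Concentration that gives response y (defined only strictly between a and d)."""
    if not min(a, d) < y < max(a, d):
        raise ValueError("response outside the fitted curve range")
    return c * ((a - d) / (y - d) - 1.0) ** (1.0 / b)


def sample_concentration(od, dilution_factor, params, lloq):
    """Back-calculate the in-well concentration, check the LLOQ, then undo the dilution."""
    in_well = inverse_four_pl(od, *params)
    if in_well < lloq:
        return None  # below the quantifiable range: try a lower dilution factor
    return in_well * dilution_factor


# Hypothetical fitted parameters (OD versus pg/mL): a, b, c, d.
params = (0.05, 1.2, 150.0, 2.8)
print(sample_concentration(0.40, 2, params, lloq=7.8))  # quantifiable
print(sample_concentration(0.08, 2, params, lloq=7.8))  # None: below LLOQ
```

Checking the LLOQ before applying the dilution factor matters: a sample diluted 2-fold that reads below the LLOQ should be re-run at a lower dilution, not reported as twice an unreliable number.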

Q: My ELISA results show high background. How can I improve the signal-to-noise ratio?

A: High background is a common issue that obscures detection of low-abundance targets.

- Washing and Blocking: Ensure sufficient washing between steps and optimize blocking conditions. Use PBS or TBS containing 0.05% Tween-20 to reduce nonspecific interactions [10].

- Antibody Specificity: Use affinity-purified, pre-adsorbed antibodies to minimize cross-reactivity [10].

- Contamination: Prepare fresh, uncontaminated buffers and substrates. Always use clean containers and pipette tips to prevent cross-contamination [10].
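The detergent concentration in the wash buffer is a simple volume/volume calculation. A minimal sketch, assuming Tween-20 as the detergent and an arbitrary final volume:

```python
def detergent_volume_ml(final_volume_ml, percent_v_v):
    """Volume of neat detergent needed for a given % (v/v) in the final buffer."""
    return final_volume_ml * percent_v_v / 100.0


# 1 L of PBS-T or TBS-T at 0.05% (v/v) Tween-20.
print(detergent_volume_ml(1000, 0.05))
```

Because neat Tween-20 is viscous and hard to pipette accurately, many labs prepare an intermediate stock (for example 10% v/v) and dilute from that instead.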

PCR-Based Detection for Low-Abundance Transcripts

Q: For low-abundance transcription factors, should I use qPCR or ddPCR, and how can I improve data quality?

A: The choice between qPCR and Droplet Digital PCR (ddPCR) depends on your target abundance and sample purity.

- Digital PCR (ddPCR) Superiority for Low-Abundance Targets: For sample/target combinations with low nucleic acid levels (Cq ≥ 29) and/or variable contaminants, ddPCR produces more precise and reproducible data. It partitions reactions into thousands of droplets, allowing absolute quantification without a standard curve and reducing the impact of inhibitors that can cause artifactual results in qPCR [11].

- qPCR Best Practices: If using qPCR, ensure rigorous validation. Primer efficiency must be consistent (90-110%) across all samples, and contaminants must be adequately diluted. Ignoring these factors leads to variable, non-reproducible data, especially for low-abundant targets with small expression differences [11].

- Technical Replicates: A large-scale analysis of RT-qPCR data suggests that for many applications, moving from technical triplicates to duplicates can save resources without compromising data quality, particularly when pipetting is consistent. However, biological replicates remain non-negotiable for capturing true biological variation [12].
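Both checks above reduce to short calculations: qPCR efficiency comes from the slope of a standard curve (Cq versus log10 input), E = (10^(-1/slope) - 1) x 100, and ddPCR applies a Poisson correction, λ = -ln(1 - positive/total), before dividing by droplet volume. In the sketch below the dilution series and droplet counts are hypothetical, and the 0.85 nL droplet volume is an assumed placeholder that should be replaced with your instrument's specified value.

```python
import math
import statistics


def standard_curve_slope(log10_input, cq):
    """Least-squares slope of Cq versus log10(template input)."""
    mean_x, mean_y = statistics.mean(log10_input), statistics.mean(cq)
    num = sum((x - mean_x) * (y - mean_y) for x, y in zip(log10_input, cq))
    den = sum((x - mean_x) ** 2 for x in log10_input)
    return num / den


def amplification_efficiency(log10_input, cq):
    """Percent efficiency: E = (10 ** (-1 / slope) - 1) * 100."""
    slope = standard_curve_slope(log10_input, cq)
    return (10 ** (-1.0 / slope) - 1.0) * 100.0


def ddpcr_copies_per_ul(positive, total, droplet_volume_nl=0.85):
    """Poisson-corrected target concentration in the reaction (copies/uL).

    droplet_volume_nl is an assumed placeholder value.
    """
    if positive >= total:
        raise ValueError("all droplets positive: dilute the sample")
    lam = -math.log(1.0 - positive / total)  # mean copies per droplet
    return lam / (droplet_volume_nl * 1e-3)  # 1 nL = 1e-3 uL


# Hypothetical 10-fold dilution series and droplet counts.
eff = amplification_efficiency([5, 4, 3, 2, 1], [15.0, 18.3, 21.6, 24.9, 28.2])
print(f"efficiency: {eff:.1f}% (within 90-110%: {90 <= eff <= 110})")
print(f"{ddpcr_copies_per_ul(1500, 15000):.1f} copies/uL of reaction")
```

The ddPCR result is per microliter of reaction; multiply by the ratio of reaction volume to template volume to express it per microliter of input.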

Advanced Methodologies for Enhanced Detection

Mass Spectrometry-Based Workflow for Biomarker Discovery

Mass spectrometry (MS), particularly Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS), has emerged as a powerful platform for unbiased, high-sensitivity discovery and validation of low-abundance biomarkers in complex samples like blood plasma [13] [14]. The following workflow diagram illustrates a typical MS-based proteomic analysis.

Experimental Protocol: LC-MS/MS Biomarker Discovery [13] [14]

Sample Preparation and Enrichment: Begin with biological samples (e.g., plasma, bone marrow). Deplete high-abundance proteins (e.g., albumin) to unmask low-abundance targets. Use enrichment techniques or fractionation to reduce sample complexity and improve detection depth.

Discovery Phase (Untargeted Proteomics): Use Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) for high-throughput profiling to identify differentially expressed proteins across patient cohorts (e.g., responders vs. non-responders). Quantification can be label-free or, alternatively, based on isobaric tagging (TMT, iTRAQ), which enables accurate, multiplexed quantification.

Bioinformatics Analysis: Process high-dimensional data with advanced pipelines. This includes normalization, batch-effect correction, and differential expression analysis. Integrate proteomic data with clinical metadata (e.g., survival outcomes) to prioritize biomarker candidates with diagnostic or prognostic significance.

Validation Phase (Targeted MS): Validate shortlisted biomarkers using targeted MS techniques like Multiple Reaction Monitoring (MRM) or Parallel Reaction Monitoring (PRM). These assays use stable isotope-labeled internal standards for highly precise, absolute quantification of candidate biomarkers in large patient cohorts, which is essential for clinical translation.
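With a stable isotope-labeled standard, absolute quantification reduces to a ratio: the endogenous (light) amount equals the light/heavy peak-area ratio times the amount of heavy peptide spiked in. The sketch assumes light and heavy forms behave identically in the instrument and that digestion releases the peptide completely; the peak areas, spike amount, and plasma volume are hypothetical.

```python
def endogenous_fmol(light_area, heavy_area, heavy_spike_fmol):
    """Endogenous (light) peptide amount from the light/heavy peak-area ratio.

    Assumes identical ionization/fragmentation of light and heavy forms and
    complete release of the peptide during digestion.
    """
    return light_area / heavy_area * heavy_spike_fmol


def concentration_nm(light_area, heavy_area, heavy_spike_fmol, sample_volume_ul):
    """Concentration in the original sample; fmol/uL is numerically equal to nM."""
    return endogenous_fmol(light_area, heavy_area, heavy_spike_fmol) / sample_volume_ul


# Hypothetical PRM peak areas: 50 fmol heavy standard in a digest of 10 uL plasma.
print(concentration_nm(1.2e4, 6.0e5, 50.0, 10.0))
```

In practice the heavy spike is usually chosen so that the light/heavy ratio falls within roughly the linear range of the assay; a ratio far from 1 is a sign to adjust the spike amount.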

Innovative Sensing Platforms

Emerging technologies are pushing the boundaries of sensitivity and throughput for detecting extracellular secretions. The MOMS platform (Molecular Sensors on the Membrane surface of Mother yeast cells) uses aptamers selectively anchored to mother cells to detect secreted metabolites with high sensitivity (limit of detection: 100 nM) and ultra-high throughput (screening over 10^7 single cells) [15]. This exemplifies how novel material and assay designs can overcome limitations of conventional methods.

The Scientist's Toolkit: Essential Research Reagents

Success in detecting low-abundance targets relies on a carefully selected toolkit of reagents and materials. The following table details key solutions for various experimental approaches.

Table: Research Reagent Solutions for Low-Abundance Targets

| Reagent/Material | Function/Application | Key Considerations |
|---|---|---|
| Bright Fluorochrome-Conjugated Antibodies (e.g., PE, APC) [9] | Flow cytometry detection of low-density cell surface receptors. | Match brightest fluorochromes to the lowest expressing antigens in your panel. |
| Secretion Inhibitors (Brefeldin A, Monensin) [9] | Intracellular cytokine staining for flow cytometry; traps secreted proteins in cellular compartments. | Required for assessing cytokines and other secreted molecules. |
| Affinity-Purified/Preadsorbed Antibodies [10] | ELISA and immunoassays; reduces non-specific binding and high background. | Critical for improving signal-to-noise ratio. |
| Stable Isotope-Labeled Internal Standards (e.g., AQUA peptides) [13] | Targeted mass spectrometry (MRM/PRM); enables absolute quantification of proteins. | Essential for precise and reproducible biomarker validation. |
| DNA Aptamers [15] | Flexible molecular recognition elements for cytokines, metabolites; used in novel sensors (e.g., MOMS). | Offer high specificity and stability; can be engineered for various targets. |
| Fc Receptor Blocking Reagents [9] | Flow cytometry; blocks non-specific antibody binding via Fc receptors on immune cells. | Reduces background staining, crucial for high-sensitivity detection. |
| TaqMan Probes vs. SYBR Green [12] | qPCR/RT-qPCR; probe-based chemistry generally shows less variability than dye-based for low-abundance transcripts. | Probe-based assays offer higher specificity, which is beneficial for complex samples. |

FAQs on Experimental Design and Best Practices

Q: How many technical replicates are necessary for reliable qPCR data for low-abundance transcription factors? A: While traditional protocols often default to technical triplicates, recent large-scale evidence suggests that for well-optimized assays with consistent pipetting, duplicates may be sufficient without significant loss of data quality. This can save substantial resources in high-throughput settings. The key is to maintain a high level of technical precision and to prioritize an adequate number of biological replicates to account for true biological variation [12].

Q: What are the main advantages of mass spectrometry over immunoassays for detecting low-abundance proteins? A: MS offers several key advantages: 1) Multiplexing: It can profile thousands of proteins simultaneously in an unbiased manner, unlike single-analyte immunoassays [13] [14]. 2) Specificity: It can distinguish protein isoforms and post-translational modifications with high accuracy, avoiding the cross-reactivity issues that can affect antibodies [13] [14]. 3) Dynamic Range: Advanced MS platforms can detect low-abundance analytes in complex mixtures without requiring a specific antibody for each target, which makes MS well suited to discovery [13].

Q: My flow cytometry panel has many colors. How can I ensure I can detect my low-abundance target? A: Panel design is critical. Use tools like spectral viewers to minimize spillover spreading. Follow the "antigen density" rule: assign the brightest fluorochromes to the lowest abundance targets (like many cytokines and transcription factors), and use dimmer fluorochromes for highly expressed antigens. Always include FMO controls for the low-abundance target to correctly set your gates and distinguish true positive events from background [9].
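The antigen-density rule amounts to pairing two sorted lists: antigens from lowest to highest expression, fluorochromes from brightest to dimmest. This sketch uses hypothetical relative scores for both and deliberately ignores spillover spreading, which a real panel design must also weigh.

```python
def assign_fluorochromes(antigen_density, fluor_brightness):
    """Pair the lowest-density antigens with the brightest fluorochromes."""
    antigens = sorted(antigen_density, key=antigen_density.get)  # lowest first
    fluors = sorted(fluor_brightness, key=fluor_brightness.get, reverse=True)  # brightest first
    if len(fluors) < len(antigens):
        raise ValueError("panel has more antigens than fluorochromes")
    return dict(zip(antigens, fluors))


# Hypothetical relative scores (1 = lowest); replace with vendor brightness
# data and your own expression estimates.
antigen_density = {"CD3": 5, "CD4": 4, "FOXP3": 2, "IL-17A": 1}
fluor_brightness = {"PE": 5, "APC": 4, "FITC": 2, "PerCP": 1}
print(assign_fluorochromes(antigen_density, fluor_brightness))
```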

The pursuit of detecting low-abundance signaling targets places immense importance on understanding and controlling biological and technical confounders. These variables, if unaccounted for, can introduce significant noise and bias, obscuring true biological signals and compromising the validity of experimental results. Biological confounders are inherent characteristics of the study subjects, such as age, sex, and Body Mass Index (BMI), which naturally influence molecular readouts. For instance, research has demonstrated that the plasma proteome exhibits significant variability linked to age, sex, and BMI [16]. Similarly, studies on frailty have revealed that biomarkers such as myostatin and galectin-1 in females, and cathepsin B and thrombospondin-4 in males, are expressed in a sex-specific manner, highlighting the profound effect of biological factors [17].

By contrast, technical confounders are introduced during the experimental workflow, from sample collection and processing to instrumental analysis. In proteomic studies, factors such as sample storage duration, temperature, blood collection timing, anticoagulant used, and processing protocols are known sources of variation [16]. A detailed analysis of SWATH-MS data found that sample preparation was a major source of technical variation, differentially affecting the quantification of hundreds of proteins, while instrument reproducibility was generally high [18]. This technical noise is particularly detrimental when measuring low-abundance targets, as the signal of interest may be drowned out by non-biological variation. A systematic approach to identifying, controlling, and correcting for these confounders is therefore a critical prerequisite for successful and reproducible research on low-abundance signaling molecules.

Guide to Identifying Key Confounders

The first step in robust experimental design is recognizing the most common sources of confounding. The table below categorizes key biological and technical variables, their potential impact on assays, and the underlying reasons.

Table 1: Key Biological and Technical Confounders in Assay Development

| Category | Confounding Variable | Potential Impact on Assays | Rationale |
|---|---|---|---|
| Biological | Age | Alters protein and metabolite expression profiles [16]. | Physiological processes and disease risks change with age. |
| Biological | Sex | Causes significant differences in biomarker levels (e.g., frailty biomarkers) [17]. | Hormonal and genetic differences between sexes. |
| Biological | BMI / Metabolic Health | Influences plasma proteome [16] and specific metabolites [19]. | Obesity and metabolic state are linked to chronic inflammation and altered signaling. |
| Technical | Sample Processing Time & Temperature | Affects protein stability and degradation [16]. | Delays or improper temperatures can lead to biomolecule breakdown. |
| Technical | Anticoagulant Used in Blood Collection | Impacts the composition of the plasma proteome [16]. | Different anticoagulants (e.g., EDTA, heparin) can interfere with assays. |
| Technical | Sample Storage Duration | Influences protein integrity and quantification [16]. | Long-term storage, even at low temperatures, can lead to gradual degradation. |
| Technical | Sample Preparation Batch | Major source of variation in quantitative proteomics, affecting hundreds of proteins [18]. | Reagent lots, technician skill, and day-to-day environmental differences. |
| Technical | Assay Plate & Washing Efficiency | In ELISA, poor mixing and inefficient washing increase background noise and variability [20]. | Non-specific binding and reliance on passive diffusion reduce sensitivity. |
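For the sample-preparation batch effect in the table, a simple first correction is to median-center log-transformed intensities within each batch. The sketch below shows this for one protein with hypothetical values. It is only appropriate when biological groups are balanced across batches; otherwise centering can remove real biology. Biological confounders such as age, sex, and BMI are usually handled differently, as covariates in a regression model (not shown).

```python
import math
import statistics
from collections import defaultdict


def batch_median_center(batches, intensities):
    """Median-center log2 intensities within each batch.

    Each value becomes log2(x) - median(log2 values of its batch) + overall
    median, so every batch ends up with the same median.
    """
    logs = [math.log2(x) for x in intensities]
    by_batch = defaultdict(list)
    for batch, value in zip(batches, logs):
        by_batch[batch].append(value)
    batch_medians = {b: statistics.median(v) for b, v in by_batch.items()}
    overall = statistics.median(logs)
    return [v - batch_medians[b] + overall for b, v in zip(batches, logs)]


# Hypothetical intensities for one protein across two preparation batches.
batches = ["prep1", "prep1", "prep1", "prep2", "prep2", "prep2"]
intensities = [1000, 2000, 4000, 4000, 8000, 16000]
corrected = batch_median_center(batches, intensities)
print([round(v, 2) for v in corrected])
```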

Workflow for Confounder Identification

A systematic approach to confounder management begins with its identification in the experimental planning phase. The following workflow outlines the key steps to map out the variables relevant to your study.

Diagram 1: A workflow for identifying potential confounders in an experimental plan, based on established experimental design principles [21].

Troubleshooting Guides & FAQs

Frequently Asked Questions (FAQs)

FAQ 1: Our Western blot results for a low-abundance signaling protein are inconsistent, with high background. What are the key steps to improve this?

- Answer: Detecting low-abundance proteins via Western blot requires enhanced sensitivity and optimized conditions. Key steps include:

- Sample Preparation: Use a higher sample load (50–100 µg per lane). Incorporate a broad-spectrum protease inhibitor cocktail and phosphatase inhibitors (for phosphorylated proteins) to prevent degradation. For membrane proteins, avoid boiling samples to prevent aggregation [22].

- Membrane & Transfer: Use PVDF membranes instead of nitrocellulose due to their higher protein-binding capacity, which is more suitable for low-abundance targets [22].

- Antibody Incubation: Use a higher concentration of both primary and secondary antibodies than standard protocols recommend. Reduce the concentration of blocking agents (e.g., 0%-5% non-fat dry milk) or shorten blocking time to avoid masking weak signals [22].

- Validation: Always include a positive control to confirm the accuracy of the process and antibody effectiveness [22].
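Loading 50-100 µg per lane only works if the lysate plus sample buffer fits in the well. This sketch assumes the lysate concentration comes from a protein assay such as BCA, a 4x Laemmli-type sample buffer, no extra diluent, and a user-specified well capacity; `loading_volumes` is a hypothetical helper.

```python
def loading_volumes(target_ug, lysate_ug_per_ul, well_capacity_ul, buffer_x=4.0):
    """Volumes for one lane with no extra diluent.

    lysate + sample buffer = total and buffer = total / buffer_x,
    so total = lysate * buffer_x / (buffer_x - 1).
    """
    lysate_ul = target_ug / lysate_ug_per_ul
    total_ul = lysate_ul * buffer_x / (buffer_x - 1.0)
    buffer_ul = total_ul - lysate_ul
    if total_ul > well_capacity_ul:
        raise ValueError(
            f"{total_ul:.1f} uL exceeds the {well_capacity_ul} uL well; "
            "concentrate the lysate or use a higher-capacity comb"
        )
    return {"lysate_ul": lysate_ul, "buffer_ul": buffer_ul, "total_ul": total_ul}


# Hypothetical: 80 ug per lane from a 4 ug/uL lysate into a 30 uL well.
print(loading_volumes(80, 4.0, 30))
```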

FAQ 2: We are designing a plasma proteomics study. How can we estimate the required sample size to account for biological and technical variability?

- Answer: Yes. A statistical power analysis before the large-scale study is recommended. This involves:

- Pilot Data: Run a pilot experiment with a small number of samples that incorporates the main biological variables of interest (e.g., disease status) and includes both technical and sample preparation replicates.

- Variance Analysis: Use ANOVA on the pilot SWATH-MS or other quantitative data to partition the total variance into components attributable to biological factors, sample preparation, and instrumental analysis [18].

- Power Calculation: Use the estimated variances to determine the number of biological replicates needed to have sufficient statistical power (e.g., 80%) to detect a specific fold-change in protein expression across the dynamic range of your assay [18].
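
Once the ANOVA has produced variance components, the power calculation takes only a few lines. The sketch below is a minimal illustration and rests on several assumptions: log2-transformed abundances, a nested design (n_prep preparations per biological sample, n_ms injections per preparation), a two-group comparison, and a normal approximation. The variance values are hypothetical and are not taken from [18].

```python
import math
from statistics import NormalDist


def replicate_variance(var_bio, var_prep, var_ms, n_prep=1, n_ms=1):
    """Variance of one biological sample's averaged log2 abundance.

    Technical components shrink when averaged over n_prep independent
    sample preparations and n_ms injections per preparation.
    """
    return var_bio + var_prep / n_prep + var_ms / (n_prep * n_ms)


def replicates_per_group(log2_fold_change, variance, alpha=0.05, power=0.80):
    """Biological replicates per group for a two-sided, two-group comparison
    (normal approximation; a t-based calculation gives slightly more)."""
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_beta = z.inv_cdf(power)
    n = 2 * variance * (z_alpha + z_beta) ** 2 / log2_fold_change ** 2
    return math.ceil(n)


# Hypothetical pilot estimates (log2 units) from a variance-component ANOVA.
var = replicate_variance(var_bio=0.25, var_prep=0.09, var_ms=0.01)
print(replicates_per_group(math.log2(1.5), var))  # prints 17
```

With these hypothetical values, duplicate sample preparations (n_prep=2) lower the requirement from 17 to 14 per group. Duplicate injections alone (n_ms=2) only lower it to 16. This mirrors the observation in [18] that sample preparation, rather than instrumental runs, dominates technical variance.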

FAQ 3: Our ELISA sensitivity is insufficient for a low-concentration biomarker. What strategies can we use to enhance it without changing the core platform?

- Answer: Enhancing ELISA sensitivity involves optimizing both the capture and detection steps.

- Improve Surface Coating: Move beyond passive adsorption. Use surface modification with PEG-grafted copolymers or chitosan to reduce non-specific binding. Employ strategies for oriented antibody immobilization, such as using Protein A/G or biotin-streptavidin systems, to increase the number of functionally active capture antibodies [20].

- Enhance Signal Amplification: Integrate cell-free synthetic biology concepts. Emerging techniques like CRISPR-linked immunoassays (CLISA) or T7 RNA polymerase–linked immunosensing assays (TLISA) use programmable nucleic acid and protein synthesis systems to greatly amplify the detection signal, surpassing the sensitivity of conventional enzyme-based detection [20].

- Improve Washing/Mixing: Implement microfluidic systems or other methods to ensure efficient mixing and washing, which minimizes background and improves the signal-to-noise ratio [20].

FAQ 4: How do we validate that a newly identified biomarker is robust and not an artifact of technical variation or confounding biological factors?

- Answer: Robust validation requires internal and external testing.

- Internal Validation: Use cross-validation within your dataset. Split your data into training and test sets to check for overfitting. A model that performs well on the training set but poorly on the test set is likely overfitted and not robust [23].

- External Validation: Test your biomarker or model in a completely independent cohort. This cohort should have different biological and technical characteristics (e.g., collected at a different site, with different demographics) to truly assess generalizability. High variance in model performance (e.g., AUC) between datasets indicates poor transportability and that the biomarker may be sensitive to unaccounted confounders [23].

- Control for Covariates: Statistically adjust for key biological confounders like age, sex, and BMI in your analysis to ensure the biomarker's association is independent of these factors [16] [17].
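
A concrete AUC calculation makes the cross-cohort comparison described above easier to see. The following is a minimal sketch. It assumes a single continuous biomarker for which higher values indicate disease, and the cohort values are invented for illustration.

```python
def auc(case_scores, control_scores):
    """Rank-based (Mann-Whitney) AUC: the probability that a randomly chosen
    case scores higher than a randomly chosen control; ties count as 0.5."""
    wins = 0.0
    for c in case_scores:
        for k in control_scores:
            if c > k:
                wins += 1.0
            elif c == k:
                wins += 0.5
    return wins / (len(case_scores) * len(control_scores))


# Hypothetical biomarker levels (arbitrary units) in two cohorts.
discovery_auc = auc([5.1, 6.3, 7.0, 5.8], [3.2, 4.1, 5.0, 4.4])
external_auc = auc([4.9, 5.2, 4.0, 6.1], [4.5, 5.0, 4.8, 3.9])
print(discovery_auc, external_auc)  # 1.0 0.75
```

In this example, the marker separates cases perfectly in the discovery set but only partially in the external cohort. With cohorts this small, the gap would need confidence intervals before it could be interpreted. Still, a consistent drop of this kind is the pattern that signals overfitting or unaccounted confounders [23].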

Troubleshooting Common Experimental Issues

Table 2: Troubleshooting Guide for Common Confounding Issues

| Problem | Potential Cause | Solution | Preventive Measures |
|---|---|---|---|
| High technical variation in quantitative proteomics data. | Sample preparation is a major source of variation, more so than instrumental runs [18]. | Apply batch correction algorithms during data analysis. | Standardize protocols meticulously. Include technical replicates (sample prep and MS) in study design to quantify this variance [18]. |
| Failure to detect a low-abundance protein in Western blot. | Low expression level and/or suboptimal experimental conditions [22]. | Enrich the target (e.g., extract nuclear/membrane fractions). Increase sample load, use PVDF membrane, optimize antibody concentration [22]. | Follow a protocol specifically designed for low-abundance proteins from the start [22]. |
| Biomarker performance declines in an independent cohort. | Overfitting of the initial model and/or influence of cohort-specific confounders (e.g., age, sex, sample processing) not present in the discovery cohort [23]. | Re-calibrate the model with the new data or develop a new model that includes all relevant categories and confounders. | Use internal cross-validation and perform external validation in multiple independent cohorts during development [23]. |
| Poor sensitivity and high background in ELISA. | Random antibody orientation and non-specific binding [20]. | Use oriented immobilization (e.g., Protein G) and nonfouling surface coatings (e.g., PEG). | Implement advanced surface engineering and signal amplification strategies in the assay development phase [20]. |
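
The "batch correction" in the first row can take many forms. Dedicated methods such as ComBat model both the location and the scale of each batch. The simplest version recenters each batch on the overall median. The sketch below shows that approach and assumes log-transformed intensities for a single protein with known batch labels.

```python
from statistics import median


def median_center_by_batch(values, batches):
    """Shift each batch so its median equals the overall median.

    values: log-scale abundances of ONE protein across samples.
    batches: batch label for each sample, in the same order.
    """
    overall = median(values)
    by_batch = {}
    for v, b in zip(values, batches):
        by_batch.setdefault(b, []).append(v)
    batch_median = {b: median(vs) for b, vs in by_batch.items()}
    return [v - batch_median[b] + overall for v, b in zip(values, batches)]


# Hypothetical log2 intensities: batch B reads ~1 unit higher than batch A.
values = [10.0, 10.2, 9.8, 11.0, 11.2, 10.8]
batches = ["A", "A", "A", "B", "B", "B"]
corrected = median_center_by_batch(values, batches)
```

This correction is only safe when biological groups are spread evenly across batches, which is what the randomization and blocking steps in the protocol below are meant to ensure. If all cases sat in one batch, centering would erase the biological difference along with the batch effect.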

Essential Protocols for Confounder Control

Protocol: Controlled Experiment Design in 5 Steps

This protocol provides a framework for designing experiments that minimize the influence of confounders from the outset, ensuring high internal validity [21].

Define Your Variables:

- Independent Variable: The condition you manipulate (e.g., drug treatment, disease status).

- Dependent Variable: The outcome you measure (e.g., protein concentration, gene expression).

- Extraneous/Confounding Variables: Identify variables other than your independent variable that could affect the dependent variable (e.g., age, sex, sample processing batch) [21].

Write a Specific, Testable Hypothesis:

- Formulate a clear null hypothesis (H₀) and alternative hypothesis (H₁). For example, H₀: "Drug X does not change the plasma level of biomarker Y"; H₁: "Drug X increases the plasma level of biomarker Y" [21].

Design Experimental Treatments:

- Decide on the specific conditions and dosages for your independent variable. Ensure the manipulation is precise and reproducible [21].

Assign Subjects to Treatment Groups:

- Randomization: Randomly assign subjects to control and treatment groups. This helps distribute potential confounding factors (both known and unknown) evenly across groups [21] [24].

- Blocking: For known major sources of variation (e.g., sex, age group), use a randomized block design. Group subjects by the confounding factor (e.g., "males" and "females") and then randomly assign within each group to ensure balance [21].

- Include a Control Group: A group that does not receive the experimental treatment is essential as a baseline [21].
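
Scripting randomization and blocking makes the allocation reproducible and auditable. The following is a minimal sketch. It assumes each subject belongs to exactly one block (here, sex) and that there are two treatment arms. The subject IDs and the seed are hypothetical.

```python
import random
from collections import Counter


def randomized_block_assignment(subjects, block_of, treatments, seed=None):
    """Randomize treatment assignment separately within each block.

    subjects: list of subject IDs.
    block_of: dict mapping subject ID -> block label (e.g., sex).
    treatments: list of group labels, e.g. ["control", "drug"].
    """
    rng = random.Random(seed)
    blocks = {}
    for s in subjects:
        blocks.setdefault(block_of[s], []).append(s)
    assignment = {}
    for members in blocks.values():
        rng.shuffle(members)                       # random order within block
        order = list(treatments)
        rng.shuffle(order)                         # vary which arm comes first
        for i, s in enumerate(members):
            assignment[s] = order[i % len(order)]  # deal arms in rotation
    return assignment


subjects = [f"S{i}" for i in range(1, 9)]
sex = dict(zip(subjects, "FFFFMMMM"))
plan = randomized_block_assignment(subjects, sex, ["control", "drug"], seed=1)
print(Counter((sex[s], arm) for s, arm in plan.items()))
```

Recording the seed lets the exact allocation be regenerated later. When a block contains an odd number of subjects, one arm necessarily receives an extra subject. Shuffling the arm order per block keeps that extra subject from always landing in the same arm.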

Measure Your Dependent Variable:

- Plan how you will measure the outcome reliably and validly. Use calibrated instruments and standardized protocols to minimize measurement error [21].

Protocol: Sample Preparation for Low-Abundance Protein Analysis (Western Blot)

This protocol outlines critical modifications to standard procedures to enhance the detection of low-abundance proteins, thereby reducing technical noise [22].

Step 1: Cell Lysis and Protein Extraction

- Wash cells twice with cold PBS. Lyse cells in RIPA buffer on ice for 15 minutes.

- Crucial: Add a broad-spectrum protease inhibitor cocktail to prevent protein degradation. For phosphorylated proteins, add a phosphatase inhibitor cocktail.

- Use ultrasonication to break cell clusters and release proteins, especially nuclear proteins (e.g., 3 s on, 10 s off, 5–15 cycles). Centrifuge at 14,000–17,000 × g for 5 min at 4°C and collect the supernatant [22].

Step 2: Protein Quantification and Loading

- Determine protein concentration using a Bradford or BCA assay.

- Use a 5x loading buffer to avoid excessive dilution of the lysate.

- Load 50-100 µg of protein per lane. Use a 1.5 mm comb to increase loading volume.

- For most proteins, boil samples at 100°C for 10 min. Do not boil multi-transmembrane proteins; instead, incubate at room temperature or 70°C to prevent aggregation [22].
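
The loading step requires a small calculation: how much lysate and loading buffer to combine for a given protein mass. The following is a minimal sketch. It assumes the lysate concentration comes from the BCA or Bradford assay and that no water or reducing agent is added separately. The concentration and the optional max_well_ul capacity check are hypothetical inputs.

```python
def loading_volumes(target_ug, conc_ug_per_ul, buffer_x=5.0, max_well_ul=None):
    """Volumes (µL) of lysate and N x loading buffer giving a 1x final mix.

    With an N x buffer, buffer = lysate / (N - 1) keeps the final mix at 1x,
    so a more concentrated buffer adds less volume.
    """
    lysate = target_ug / conc_ug_per_ul
    buffer = lysate / (buffer_x - 1.0)
    total = lysate + buffer
    if max_well_ul is not None and total > max_well_ul:
        raise ValueError(f"{total:.1f} µL exceeds the well capacity")
    return lysate, buffer, total


# 80 µg from a lysate measured at 4 µg/µL by BCA (hypothetical numbers).
print(loading_volumes(80, 4.0))                # (20.0, 5.0, 25.0)
print(loading_volumes(80, 4.0, buffer_x=2.0))  # (20.0, 20.0, 40.0)
```

The second call shows why a 5x buffer is preferred. For the same 80 µg, a 2x buffer raises the loading volume from 25 µL to 40 µL, which may exceed the well.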

Step 3: Gel Electrophoresis and Transfer

- Run the gel under standard conditions.

- Transfer proteins to a PVDF membrane (pre-wetted in methanol) using semi-dry or wet transfer methods. PVDF has a higher binding capacity than nitrocellulose for low-abundance targets [22].

Step 4: Blocking and Antibody Incubation

- Block the membrane for 1 hour at room temperature with 5% blocking buffer. Note: Reducing blocking agent concentration or time can sometimes help prevent signal masking.

- Incubate with a higher concentration of primary antibody overnight at 4°C on a shaker. Use a higher concentration of HRP-conjugated secondary antibody for 1 hour at room temperature.

- Perform all washes with TBST [22].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Controlling Confounders and Enhancing Sensitivity

| Reagent / Kit | Function | Application Context |
|---|---|---|
| Protease & Phosphatase Inhibitor Cocktails | Prevents protein degradation and post-translational modification loss (e.g., dephosphorylation) during sample preparation [22]. | Cell and tissue lysis for Western blot, mass spectrometry. |
| PVDF Membrane | A high protein-binding capacity membrane for more efficient transfer and retention of low-abundance proteins compared to nitrocellulose [22]. | Western blot transfer step. |
| Protein A/G | Bacterial proteins used to immobilize antibodies via their Fc region, ensuring proper orientation and enhancing binding efficiency in immunoassays [20]. | ELISA surface coating, immunoaffinity purification. |
| PEG-grafted Copolymers | Synthetic polymers used to create nonfouling surfaces that minimize non-specific protein adsorption, improving signal-to-noise ratio [20]. | ELISA microplate coating, biosensor surfaces. |
| CRISPR-linked Immunoassay (CLISA) Components | Integrates CRISPR-based nucleic acid amplification with immunoassays for dramatic signal amplification, bridging the sensitivity gap with nucleic acid tests [20]. | Ultra-sensitive detection of low-abundance protein biomarkers. |
| Seer Proteograph XT / ENRICH Kits | Nanoparticle-based or bead-based kits for enriching low-abundance proteins from complex biofluids like plasma, expanding proteome coverage [16]. | Plasma proteomics by mass spectrometry. |
| AbsoluteIDQ p180 Kit | Standardized kit for the targeted mass spectrometry-based quantification of 186 metabolites, providing a controlled workflow for metabolomic studies [19]. | Metabolite biomarker discovery and validation. |

Visualization of a Robust Experimental Workflow

Integrating the control of confounders into every stage of the experimental process is key to success. The following diagram visualizes a robust end-to-end workflow for a study aiming to discover a low-abundance biomarker, highlighting critical control points.

Diagram 2: An end-to-end experimental workflow integrating controls for biological and technical confounders at each stage, based on principles from multiple sources [18] [21] [16].

Frequently Asked Questions (FAQs)

What fundamentally limits the sensitivity of conventional assays for low-abundance targets?

The primary limitation is the signal-to-noise ratio. In conventional immunoassays or Western blots, the faint signal from a truly low-abundance target is often indistinguishable from the inherent background noise of the assay system. At picogram-per-milliliter concentrations, the number of target molecules is so small that their collective signal fails to rise significantly above this background [25]. Furthermore, the extreme dynamic range of complex biological samples (such as blood plasma, where a few high-abundance proteins constitute over 90% of the total protein mass) masks the signals of rare, low-abundance proteins, making them virtually undetectable without prior enrichment [26].
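
A common way to quantify this signal-to-noise limit is to define the detection limit as the concentration whose signal sits a fixed number of standard deviations above the blank. The sketch below uses the frequently applied mean + 3 SD convention. Formal protocols such as CLSI EP17 define the limit of blank and the limit of detection more rigorously. The blank readings and calibration parameters are hypothetical.

```python
from statistics import mean, stdev


def lod_from_blanks(blank_signals, slope, intercept, k=3.0):
    """Concentration at which the calibration line reaches
    mean(blank) + k * SD(blank); slope/intercept come from a linear fit
    of the low end of the standard curve (signal = slope * conc + intercept)."""
    threshold = mean(blank_signals) + k * stdev(blank_signals)
    return (threshold - intercept) / slope


blanks = [100, 104, 96, 102, 98]  # hypothetical blank-well signals
print(round(lod_from_blanks(blanks, slope=5.0, intercept=100.0), 2))  # 1.9
```

The LOD scales with SD(blank) / slope. Halving the background noise therefore lowers the detection limit exactly as much as doubling the signal per unit concentration, which is why both background reduction and signal amplification appear among the strategies discussed here.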

Why do my negative controls show signal, and how does this impact low-level detection?

Signal in negative controls, or high background, is a common issue that drastically reduces assay sensitivity. This can be caused by multiple factors:

- Insufficient Blocking or Washing: Inadequate blocking leaves "sticky" sites on plates or membranes open for non-specific antibody binding, while insufficient washing fails to remove unbound reagents, both increasing background noise [27].

- Antibody Concentration Too High: Excessive antibody concentrations can promote non-specific binding and aggregation, leading to high uniform background across the assay [27].

- Contaminated Reagents: Trace contaminants, such as horseradish peroxidase (HRP) in reused plastics, can trigger signal generation even in the absence of the target [27].

For picogram-level detection, even minor background signals from any of these sources can obscure the faint true-positive signal.

My standard curve is good, but my sample signals are weak or absent. What could be wrong?

This typically indicates an issue specific to your sample or its interaction with the assay:

- Target Concentration Below Detection Limit: The most straightforward explanation is that the target in your sample is below the functional limit of detection for the conventional assay protocol [27].

- Matrix Interference: Components in your sample buffer (e.g., azide, which inhibits HRP) or general biological matrix effects can interfere with antibody binding or the detection chemistry, suppressing the signal [27].

- Target Degradation or Modification: The protein in your sample may be degraded, bound to other molecules, or modified in a way that prevents it from being recognized efficiently by the capture and detection antibodies in a sandwich assay format [27].
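
A spike-and-recovery test is a practical way to distinguish matrix interference from genuinely low abundance. This test is not described in the cited source, but it is widely used for immunoassays: add a known amount of purified standard to the sample and check how much of it the assay reports. The following is a minimal sketch. It assumes the results have already been interpolated from the standard curve. The 80–120% window is a commonly used acceptance range, not a universal rule.

```python
def percent_recovery(spiked_result, unspiked_result, spike_added):
    """Recovery of a known analyte spike added to the sample matrix."""
    return 100.0 * (spiked_result - unspiked_result) / spike_added


def interpret(recovery, low=80.0, high=120.0):
    # low/high are a commonly used acceptance window; adjust to your assay.
    if recovery < low:
        return "suppression: suspect matrix interference"
    if recovery > high:
        return "enhancement: suspect matrix interference or cross-reactivity"
    return "acceptable: a weak signal more likely reflects low abundance"


# Hypothetical: sample reads 2.0 pg/mL; spiking 50 pg/mL gives 31.0 pg/mL.
r = percent_recovery(31.0, 2.0, 50.0)
print(r, interpret(r))  # 58.0 suppression: suspect matrix interference
```

A recovery well below the window points to suppression by the matrix or by a buffer component such as azide. Acceptable recovery combined with a weak endogenous signal points elsewhere: either the concentration is below the detection limit, or the target is degraded or modified in a way the spike does not mimic.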

What are the most promising technologies for moving beyond these limits?

Several advanced technologies are pushing detection into the femtogram and attogram range:

- Digital Assays: Platforms like the Single Molecule Array (Simoa) digitize the detection by isolating individual immunocomplexes on beads in microwells, allowing for single-molecule counting. This can lower the limit of detection for proteins to attomolar concentrations (high-attogram per milliliter range) [25].

- Signal Enhancement Technologies: Metal Enhanced Fluorescence (MEF) uses plasmonic gold nanoparticles to amplify the emission of nearby fluorophores. This simple modification to a standard europium nanoparticle immunoassay boosted sensitivity ten-fold, achieving a limit of detection of 0.19 pg/mL for HIV p24 antigen [28] [29].

- Advanced Pre-fractionation and Enrichment: For mass spectrometry, techniques like immunodepletion of high-abundance proteins and hexapeptide ligand libraries (e.g., ProteoMiner) compress the dynamic range of samples, enriching low-abundance proteins for more effective detection [26].
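
The counting principle behind digital assays rests on Poisson statistics. At low concentrations, most beads carry either zero or one labeled immunocomplex, so the fraction of "on" beads can be converted into an average number of labels per bead. The following is a minimal sketch of that general relationship. It assumes labels are randomly distributed across beads. This is the textbook Poisson correction, not Simoa's proprietary analysis software.

```python
import math


def mean_labels_per_bead(active_beads, total_beads):
    """Poisson estimate of average labeled immunocomplexes per bead.

    If labels distribute randomly, P(bead has >= 1 label) = 1 - exp(-lam),
    so lam = -ln(1 - fraction_active).
    """
    f = active_beads / total_beads
    if f >= 1.0:
        raise ValueError("every bead is 'on': digital counting is saturated")
    return -math.log1p(-f)


print(mean_labels_per_bead(500, 50_000))     # ~0.01005, close to f = 0.01
print(mean_labels_per_bead(35_000, 50_000))  # ~1.204, well above f = 0.70
```

At 1% active beads the correction is negligible, but at 70% the estimated label count is well above the raw fraction. As the fraction approaches 100%, the estimate becomes unstable. This behavior is consistent with the upper dynamic-range limit of digital assays noted in the comparison table later in this section.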

Troubleshooting Guide: Common Scenarios and Solutions

| Symptom | Possible Cause | Recommended Solution |
|---|---|---|
| No or Weak Signal | Target abundance below assay detection limit [27]. | Use signal amplification (e.g., MEF) [28] or switch to a digital/counting assay [25]. |
| | Incompatible antibody pair (sandwich ELISA) [27]. | Verify antibodies recognize distinct epitopes; use a validated matched pair. |
| | Buffer contains sodium azide (inhibits HRP) [27]. | Use azide-free buffers or ensure thorough washing. |
| High Background | Inadequate blocking or washing [27]. | Increase blocking time/concentration; add more washes with Tween-20. |
| | Antibody concentration too high [27]. | Titrate antibodies to find optimal concentration. |
| | Non-specific antibody binding. | Include species-specific IgG or secondary antibody blockers. |
| High Well-to-Well Variability | Inconsistent pipetting or mixing [27]. | Calibrate pipettes; ensure solutions are mixed thoroughly before addition. |
| | Bubbles in wells during reading [27]. | Centrifuge plate before reading to remove bubbles. |
| | Evaporation during incubation [27]. | Use a plate sealer for long incubation steps. |

Enhancing Sensitivity: Protocols and Workflows

Detailed Protocol: Metal Enhanced Fluorescence (MEF) Immunoassay

This protocol details the single-step modification that can be applied to a standard europium nanoparticle immunoassay (ENIA) to achieve a ten-fold increase in sensitivity, as demonstrated for HIV p24 antigen detection [28].

Principle: The close proximity of excited fluorophores (on europium nanoparticles) to gold nanoparticles allows the fluorophore's emission to couple with the surface plasmons on the metal nanoparticles. This coupling results in reradiated, amplified fluorescence emission [28].

Research Reagent Solutions:

| Item | Function in the Protocol | Example & Specification |
|---|---|---|
| Europium Nanoparticles (EuNPs) | Fluorescent reporter particle; provides long-lived, specific signal for time-resolved detection. | 200 nm carboxyl-modified EuNPs (e.g., Thermo Scientific) [28]. |
| Gold Nanoparticles (AuNPs) | Plasmonic signal enhancer; amplifies the fluorescence signal from the nearby EuNPs. | 150 nm colloidal gold nanoparticles (e.g., Sigma-Aldrich) [28]. |
| Capture & Biotinylated Antibodies | Form the sandwich immunocomplex for specific target capture and detection. | Target-specific pair (e.g., ANT-152 capture antibody, Perkin Elmer biotinylated detector) [28]. |
| Streptavidin | Biotin-binding protein; acts as a bridge between the biotinylated detector antibody and the EuNP. | High-purity streptavidin (e.g., Scripps Lab) [28]. |
| EDC & NHS | Crosslinking agents; activate carboxyl groups on EuNPs for covalent conjugation to streptavidin. | Thermo Scientific EDC (1-Ethyl-3-(3-dimethylaminopropyl)carbodiimide) and NHS (N-Hydroxysuccinimide) [28]. |
| Casein Blocking Buffer | Blocks non-specific binding sites on the microplate to reduce background signal. | Ready-to-use solution (e.g., Thermo Scientific) [28]. |

Methodology:

- Plate Coating: Coat a Nunc MaxiSorp fluorescence microplate with 55 µL of capture antibody (2 µg/mL in carbonate-bicarbonate buffer, pH 9.6). Incubate for 24 hours at 4°C.

- Blocking: Wash the plate 5 times with wash buffer. Add 300 µL of casein blocking buffer to each well and incubate for 30 minutes at 37°C.

- Antigen Capture: Add 100 µL of your sample (or antigen standard diluted in blocking buffer) to each well. Incubate at 37°C with shaking for 1 hour. Wash the plate 5 times.

- Detection Antibody Binding: Add 100 µL of biotinylated detector antibody to each well. Incubate at 37°C for 60 minutes. Wash the plate 5 times.

- Europium Nanoparticle Labeling: Add 100 µL of streptavidin-conjugated EuNPs to each well. Incubate at 37°C with shaking for 30 minutes. Perform a final wash cycle (5 times).

- Baseline Signal Measurement: Place the microplate in a fluorescence plate reader (e.g., SpectraMax M5). Measure the fluorescent signal in time-resolved mode (excitation: 340 nm, emission: 615 nm). This is your signal before enhancement (S1).

- Signal Enhancement: Add 100 µL of 150 nm gold nanoparticle solution to each well.

- Enhanced Signal Measurement: Immediately measure the fluorescent signal again using the same instrument settings. This is your metal-enhanced signal (S2). The enhancement factor can be calculated as S2/S1 [28].
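
The enhancement factor in the last step is a simple ratio. Because adding gold nanoparticles may also change the background, it is worth computing the ratio on blank-corrected signals as well. The following is a minimal sketch. The blank subtraction is an optional addition to the published S2/S1 calculation [28], and all counts are hypothetical.

```python
def enhancement_factor(s1, s2, blank_s1=0.0, blank_s2=0.0):
    """S2/S1 for one well; optionally subtract blank-well signal measured at
    each read (blank_s1 before, blank_s2 after adding gold nanoparticles)."""
    net_before = s1 - blank_s1
    net_after = s2 - blank_s2
    if net_before <= 0:
        raise ValueError("pre-enhancement signal is not above blank")
    return net_after / net_before


# Hypothetical time-resolved counts for one p24 standard well and a blank.
raw = enhancement_factor(1_500, 13_000)
net = enhancement_factor(1_500, 13_000, blank_s1=300, blank_s2=1_000)
print(raw, net)  # ~8.67 10.0
```

Comparing the raw and net values shows that a change in background can make the apparent enhancement differ from the enhancement of the specific signal. The improvement in detection limit ultimately depends on the latter.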

Workflow for Low-Abundance Protein Detection in Complex Samples

This workflow is essential for mass spectrometry-based proteomics of samples like blood plasma, where high-abundance proteins overwhelm the signal of low-abundance targets [26].

Quantitative Comparison of Detection Technologies

The following table summarizes the performance of various assay technologies, highlighting the limitations of conventional methods and the advancements offered by newer platforms.

| Assay Technology | Typical Lower Limit of Detection (Proteins) | Key Limitation/Failure Point at Picogram Level |
|---|---|---|
| Conventional ELISA | 10-20 pg/mL [28] | Analog signal is averaged across the well, and low target concentration yields a signal indistinguishable from background noise [25]. |
| Western Blot (chemiluminescence) | Low nanogram range [30] | Poor transfer efficiency of proteins to membrane and non-specific antibody binding create high background, masking faint bands [30]. |
| Metal Enhanced Fluorescence | 0.19 pg/mL (demonstrated) [28] | Requires optimization of nanoparticle size and distance to fluorophore for maximum enhancement [28]. |
| Digital ELISA (Simoa) | ~50 aM (attomolar) [25] | Upper limit of dynamic range is constrained by the density of wells/beads; high concentration samples require dilution [25]. |
| Advanced MS with Enrichment | High-attogram level [30] | Without enrichment, the vast dynamic range of biological samples suppresses low-abundance signals; enrichment can be labor-intensive [26]. |
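
The table mixes mass concentrations (pg/mL) and molar concentrations (aM). Converting between them requires the analyte's molecular weight. The following is a minimal sketch. The 50 kDa protein is hypothetical, and the ~24 kDa mass for HIV p24 is implied by its name.

```python
def molar_to_pg_per_ml(conc_molar, mw_da):
    # mol/L x g/mol = g/L, and 1 g/L = 1e12 pg per 1000 mL = 1e9 pg/mL
    return conc_molar * mw_da * 1e9


def pg_per_ml_to_molar(conc_pg_per_ml, mw_da):
    return conc_pg_per_ml / (mw_da * 1e9)


# 50 aM of a hypothetical 50 kDa protein, expressed in pg/mL:
print(molar_to_pg_per_ml(50e-18, 50_000))  # ~0.0025 pg/mL (2.5 fg/mL)
# 0.19 pg/mL of HIV p24 (~24 kDa), expressed in molar units:
print(pg_per_ml_to_molar(0.19, 24_000))    # ~7.9e-15 M (about 8 fM)
```

For a 50 kDa protein, 50 aM corresponds to about 2.5 fg/mL, while the 0.19 pg/mL MEF result for p24 corresponds to roughly 8 fM. Once expressed in the same units, the two figures differ by more than two orders of magnitude.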

Key Takeaways for the Researcher

Conventional assays fail at picogram-level detection due to fundamental physical and chemical constraints related to signal-to-noise and sample complexity. Overcoming these limits requires a shift in strategy from simple protocol execution to a holistic approach involving:

- Sample Pre-processing to remove interfering high-abundance molecules [26].

- Signal Amplification using physical phenomena like MEF rather than just biochemical methods [28] [29].

- Digital or Counting Assays that detect individual molecules to eliminate the averaging effect that buries low-concentration signals in noise [25].

By understanding these failure modes and implementing the appropriate advanced solutions, researchers can reliably detect and quantify low-abundance signaling targets critical for drug development and clinical diagnostics.

Next-Generation Technologies: From Affinity-Based Probes to Targeted Mass Spectrometry

This section provides a technical comparison of SomaScan, Olink, and NULISA platforms to guide researchers in selecting the appropriate tool for their specific application, particularly in the context of detecting low-abundance signaling targets.

Table 1: Core Technology and Throughput Characteristics

| Feature | SomaScan | Olink PEA | NULISA |
|---|---|---|---|
| Core Technology | Slow Off-rate Modified Aptamers (SOMAmers) [31] | Proximity Extension Assay (PEA) [32] | Proximity Ligation Assay (PLA) [32] |
| Detection Mechanism | Single aptamer binding target protein [16] | Two antibodies required for DNA-tag extension [16] | Two antibodies required for DNA-tag ligation [32] |
| Assay Plexity | 7K - 11K proteins [16] | 3K - 5K proteins [16] | ~200-377 targets (focused panels) [16] |
| Throughput | High [31] | High [31] | Not reported in cited sources |

Table 2: Analytical Performance Metrics for Sensitivity and Reproducibility

| Performance Metric | SomaScan | Olink PEA | NULISA |
|---|---|---|---|
| Sensitivity / Detectability | Broad coverage for discovery [16] | High sensitivity [31] | Highest overall detectability [32] |
| Dynamic Range | Covers wide dynamic range [31] | Not reported in cited sources | Not reported in cited sources |
| Technical Precision (CV) | ~5.3% (median) [16] | Not reported in cited sources | Not reported in cited sources |
| Key Differentiator | Largest proteome coverage [16] | High specificity from dual antibodies [16] [32] | Designed for ultra-sensitive detection of low-abundance targets [32] |

Experimental Protocols for Sensitivity Optimization

Sample Preparation Protocol for Plasma/Serum

Consistent sample handling is critical for assay sensitivity and reproducibility.

- Collection: Collect plasma in tubes containing an appropriate anticoagulant, or serum in tubes without anticoagulant [16].

- Processing: Process samples and freeze within 6 hours of collection [32].

- Storage: Store samples at -80°C prior to analysis [32].

- Freeze-Thaw: Minimize freeze-thaw cycles. Olink assays typically use samples after 1 freeze-thaw cycle, while other platforms may tolerate more [32].

Platform-Specific Workflow Diagrams

Research Reagent Solutions

Table 3: Essential Materials for Affinity Proteomics

| Reagent / Material | Function in Experiment | Example Platforms |
|---|---|---|
| SOMAmers | Modified DNA aptamers that bind target proteins with high affinity and specificity [31]. | SomaScan |
| DNA-tagged Antibody Pairs | Pairs of antibodies that bind target protein; each conjugated to a unique DNA oligo for subsequent amplification and detection [32]. | Olink, NULISA |
| Paramagnetic Oligo-dT Beads | Beads used to capture immunocomplexes via poly(A)-tail hybridization for efficient washing and background reduction [32]. | NULISA |
| Streptavidin-coated Beads | Magnetic beads used for solid-phase capture of detection antibodies tagged with biotin [32]. | NULISA |
| Internal Control Spikes | Exogenous proteins or controls spiked into each sample for data normalization and removal of technical variation [32]. | NULISA, other platforms |

Technical Support and Troubleshooting FAQs

Q: Our data shows high background noise. What steps can we take to improve signal-to-noise? A: High background can stem from various sources. For NULISA, ensure the two-step purification with oligo-dT and streptavidin beads is performed correctly to remove unbound reagents [32]. For all platforms, verify that sample matrices are compatible and consider optimizing wash stringency. Re-evaluate sample quality, as contaminants can contribute to non-specific binding.

Q: What factors most significantly impact the sensitivity of these assays for low-abundance targets? A: Sensitivity is platform-dependent. NULISA's architecture is designed for highest detectability [32]. Olink's dual antibody requirement increases specificity, reducing false positives for low-level targets [16]. For SomaScan, the unique chemistry of its SOMAmers provides a wide dynamic range, aiding in the measurement of both high and low abundance proteins [31]. Proper sample handling to prevent protein degradation is universally critical.

Q: How do we validate a finding from a discovery platform like SomaScan? A: A common strategy is orthogonal validation. Use a different technology, such as an immunoassay (e.g., Olink or NULISA) or targeted mass spectrometry (e.g., PRM/SRM), to confirm the expression changes of your candidate biomarkers [16]. This cross-platform confirmation strengthens the biological validity of your results.

Q: Why might correlation between different affinity platforms be low for some analytes? A: Different platforms measure distinct protein characteristics (e.g., different epitopes, isoforms, or protein complexes) and use different calibration methods [16] [32]. This is a known phenomenon. Stronger correlations are often observed for abundant analytes, while low-abundance targets may show more platform-specific variation [32]. Always consult platform-specific information for expected performance.

DIA Workflow Troubleshooting and FAQs

Common DIA Pitfalls and Solutions

Q: My DIA experiment is yielding low peptide identification counts and poor quantification. What could be the root cause?

A: Low peptide yields often originate from issues in the initial sample preparation stage, which are then amplified by the comprehensive nature of DIA acquisition. Inadequate sample preparation is the most common point of failure [33].

Table: Common DIA Pitfalls and Fixes

| Pitfall Category | Specific Issue | Recommended Solution |
|---|---|---|
| Sample Preparation | Low peptide yield from challenging matrices (FFPE, low-input samples) [33] | Implement a three-tier QC: protein concentration check (BCA/NanoDrop), peptide yield assessment, and an LC-MS scout run [33]. |
| | Chemical interference (salts, detergents) causing ion suppression [33] | Use optimized extraction kits for specific matrices; include checklists for detergent residue screening [33]. |
| Spectral Library | Tissue or species mismatch between library and samples [33] | Use project-specific spectral libraries built from matched samples or hybrid (public + custom DDA) libraries [33]. |
| | Library created from low-quality DDA runs [33] | Build libraries from ≥2 replicate DDA runs under matching LC conditions with iRT standards for calibration [33]. |
| Acquisition | Wide SWATH windows (>25 m/z) causing chimeric spectra [33] | Use adaptive, dynamic window schemes based on peptide density; aim for windows <25 m/z on average [33]. |
| | Inadequate MS2 scan speed for LC peak width [33] | Calibrate cycle time to match LC peak width, ensuring ~8–10 data points per peak (cycle time ≤3 sec) [33]. |
| Data Analysis | Inappropriate software selection (e.g., library-based tool on library-free data) [33] | Match tool to design: use DIA-NN or MSFragger-DIA for library-free DIA, and Spectronaut or Skyline for library-based projects [33]. |
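
Both acquisition rows can be turned into quick calculations. The cycle-time ceiling follows from peak width and the desired number of points per peak. An adaptive window scheme can place boundaries so that each window holds a similar number of precursors. The following is a minimal sketch. It assumes a list of precursor m/z values from the spectral library. Real method-design tools also account for window overlaps, overhead time, and instrument limits.

```python
def max_cycle_time_s(peak_width_s, points_per_peak=9):
    """Longest cycle time that still samples each LC peak points_per_peak times."""
    return peak_width_s / points_per_peak


def equal_density_windows(precursor_mz, n_windows):
    """Variable-width isolation windows holding roughly equal numbers of
    library precursors (boundaries at precursor m/z quantiles).

    Real schemes usually also add a small overlap between windows.
    """
    mz = sorted(precursor_mz)
    edges = [mz[(i * len(mz)) // n_windows] for i in range(n_windows)]
    edges.append(mz[-1])
    return list(zip(edges[:-1], edges[1:]))


# Hypothetical: 27 s wide peaks -> at most 3.0 s per cycle for ~9 points/peak.
print(max_cycle_time_s(27.0))
# Hypothetical, evenly spread library precursors between m/z 400 and 999.
windows = equal_density_windows([400.0 + k for k in range(600)], 40)
print(len(windows), max(hi - lo for lo, hi in windows))  # 40 15.0
```

Real tryptic libraries are typically crowded in the lower-middle m/z range. Applied to such a library, the same function would return narrow windows where precursors are dense and wider windows elsewhere, which is the purpose of density-based schemes.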

Q: How can I improve the sensitivity of my DIA method for low-abundance targets?

A: Beyond optimizing standard DIA parameters, you can:

- Employ Immunocapture Clean-up: Use antibodies against the target protein (or its signature peptides) to capture it from a complex sample or sample digest before LC-MS/MS analysis. This significantly reduces sample complexity and can enable detection of low-abundance biomarkers in the pg mL−1 range [34].

- Downscale LC Systems: Using nanoLC columns (e.g., 75 μm ID or smaller) can greatly enhance sensitivity. Couple this with high-capacity solid-phase extraction (SPE) columns to maintain loading capacity [35].
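
Column geometry gives a rough estimate of the gain from downscaling. With concentration-sensitive electrospray and the same injected amount, peak concentration scales inversely with column cross-section, so the ideal gain is the square of the diameter ratio. The following is a minimal sketch of that idealized relationship. Observed gains are usually smaller because of extra-column volume and other losses. The 2.1 mm reference column and its flow rate are hypothetical.

```python
def theoretical_gain(id_reference_um, id_new_um):
    """Ideal concentration-sensitivity gain when the same sample amount is run
    on a narrower column: peak concentration scales with 1 / (column ID)^2."""
    return (id_reference_um / id_new_um) ** 2


def scaled_flow(flow_reference, id_reference_um, id_new_um):
    """Flow rate that keeps the same linear velocity on the new column."""
    return flow_reference * (id_new_um / id_reference_um) ** 2


print(theoretical_gain(2100, 75))           # 784.0 (2.1 mm -> 75 µm)
print(scaled_flow(400.0, 2100, 75) * 1000)  # ~510 nL/min from 400 µL/min
```

Loading capacity shrinks by the same squared ratio, so the narrow column holds far less material. This is why nanoLC is paired with high-capacity SPE columns [35].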

DIA Experimental Workflow

The following diagram outlines a robust DIA workflow incorporating critical quality control steps to prevent common failures.

PRM Workflow Troubleshooting and FAQs

PRM Fundamentals and Optimization

Q: What is the key advantage of using Parallel Reaction Monitoring (PRM) for quantifying low-abundance proteins?

A: PRM offers high sensitivity and accuracy without requiring antibodies, whose availability and quality are a major constraint for many protein targets. It enables simultaneous, precise measurement of dozens of proteins in a single run [36].

Q: My PRM assay lacks sensitivity. What parameters should I investigate?

A: Sensitivity in PRM is influenced by several factors. Focus on optimizing your sample preparation and instrument method.

Table: PRM Sensitivity Optimization Checklist

| Parameter | Consideration for Low-Abundance Targets |
|---|---|
| Peptide Selection | Choose proteotypic peptides that are unique to the target protein and avoid amino acids prone to modification (e.g., methionine, which oxidizes readily) [35]. Use resources such as UniProt and PeptideAtlas, and software such as Skyline, for selection [35]. |
| Internal Standards | Use heavy labelled peptides (AQUA peptides) for quantification. For highest accuracy, especially to correct for variation in enzymatic cleavage, use heavy labelled full-length proteins as internal standards [35]. |
| Chromatography | Use nanoLC systems (e.g., 75 μm ID columns) for enhanced sensitivity via electrospray ionization [35]. |
| Mass Analyzer | PRM is performed on high-resolution, accurate-mass (HRAM) instruments like Orbitraps, which provide high selectivity and less interference [36]. |
| Isolation Window | Use a narrow isolation window (e.g., 1-2 m/z) around the precursor to minimize co-isolation of background ions and improve S/N [37]. |
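
The peptide-selection rules in the first row can be applied programmatically before candidates are refined in Skyline. The following is a minimal sketch. It assumes fully tryptic peptides and a 7–25 residue length window (a common heuristic, not from the source), and it uses a toy background list. A real uniqueness check uses the full proteome FASTA.

```python
import re


def tryptic_digest(sequence):
    """Fully cleaved tryptic peptides: cut after K or R unless the next
    residue is P (the classic trypsin rule), no missed cleavages."""
    return [p for p in re.split(r"(?<=[KR])(?!P)", sequence) if p]


def candidate_peptides(target_seq, background_seqs, min_len=7, max_len=25,
                       avoid="M"):
    """Target peptides that meet length limits, lack residues in `avoid`,
    and do not occur in any background protein digest."""
    background = set()
    for seq in background_seqs:
        background.update(tryptic_digest(seq))
    return [
        p for p in tryptic_digest(target_seq)
        if min_len <= len(p) <= max_len
        and not any(aa in p for aa in avoid)
        and p not in background
    ]


# Hypothetical sequences for illustration only.
target = "LVNEVTEFAKMMSPEPTIDERYLGAVDPTTAQK"
background = ["YLGAVDPTTAQKGGGGGGR"]
print(candidate_peptides(target, background))  # ['LVNEVTEFAK']
```

In this example, the methionine-containing peptide is rejected, and the peptide shared with the background protein is rejected as non-unique, leaving one candidate.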

PRM Experimental Workflow

The workflow for a sensitive PRM assay involves careful planning from peptide selection through data analysis.

Low-Input Linear Ion Trap (LIT) Sensitivity and FAQs

Enhancing LIT Sensitivity

Q: What are the primary strategies for improving sensitivity in ion trap mass analyzers like the LIT?

A: The dominant strategy for enhancing sensitivity in ion traps is the selective enrichment of targeted ions [37]. This involves trapping and accumulating specific ions of interest over time, which increases the signal.

Q: Besides ion accumulation, how can the overall sensitivity of my LIT-based method be improved?

A: Sensitivity is a system-wide property. Key considerations include:

- Reducing Chemical Noise: Ion suppression from co-eluting matrix components is a major concern that reduces signal. Improved chromatographic separation and thorough sample clean-up (e.g., immunocapture) are critical [38].

- Optimizing Ion Transmission: Efficiently guiding ions from the source into the trap is vital. Using techniques like a "pre-filter" or delayed DC ramp can improve transmission efficiency and significantly boost sensitivity [37].

Table: Linear Ion Trap Sensitivity Factors

| Factor | Impact on Sensitivity | Technical Approach |
|---|---|---|
| Ion Enrichment | Directly increases signal for targeted ions. | Use longer ion accumulation/fill times for specific m/z ranges. |
| Ion Transmission | More ions entering the trap leads to a stronger signal. | Ensure ion optics (lenses, guides) are clean and optimally tuned [39]. |
| Chemical Noise | High background reduces signal-to-noise (S/N). | Implement extensive sample clean-up and optimal LC separation to reduce matrix effects [38]. |
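
Idealized arithmetic illustrates the relationship between fill time and signal in the first row: ions accumulate at their arrival rate until either the AGC target or the maximum injection time is reached. The following is a minimal sketch. It assumes a constant ion flux and shot-noise-limited detection. Real traps also lose ions in transmission and can be limited by chemical noise, as the third row notes.

```python
import math


def fill_time_ms(agc_target_ions, ion_flux_per_s, max_injection_ms):
    """Accumulation time to reach the AGC target at a given ion arrival
    rate, capped at the maximum injection time."""
    needed_ms = 1000.0 * agc_target_ions / ion_flux_per_s
    return min(needed_ms, max_injection_ms)


def ions_collected(ion_flux_per_s, fill_ms):
    return ion_flux_per_s * fill_ms / 1000.0


# Hypothetical low-abundance precursor arriving at 1e5 ions/s, AGC target 1e4.
for max_it in (50, 200):
    t = fill_time_ms(1e4, 1e5, max_it)
    n = ions_collected(1e5, t)
    # If counting (shot) noise dominates, S/N grows as sqrt(ions trapped).
    print(max_it, t, n, round(math.sqrt(n), 1))
```

For this slow-arriving precursor, raising the maximum injection time from 50 ms to 200 ms doubles the number of ions trapped, because the AGC target is now reached at 100 ms. Under the shot-noise assumption, this improves S/N by about √2.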

Ion Suppression Identification and Mitigation

Ion suppression is a critical challenge for sensitivity. The following workflow helps identify and address it.
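One common quantitative check for ion suppression is the post-extraction spike comparison, widely used in LC-MS bioanalysis. It is shown here as an illustrative sketch. The same amount of analyte is measured twice: once in neat solvent, and once spiked into a blank matrix extract after sample preparation. The ratio of the two peak areas isolates the effect of the matrix on ionization. The ±15 % acceptance band and the peak areas in the example are assumptions.

```python
def matrix_effect_percent(area_neat: float, area_post_spike: float) -> float:
    """Matrix effect (%) = 100 x (area spiked into extracted blank matrix) / (area in neat solvent).

    Both samples contain the same analyte amount; values below 100 % indicate suppression.
    """
    if area_neat <= 0:
        raise ValueError("neat-solvent peak area must be positive")
    return 100.0 * area_post_spike / area_neat

def classify_matrix_effect(me_percent: float, tolerance_percent: float = 15.0) -> str:
    """Label the result; the +/-15 % tolerance is an assumed acceptance band."""
    if me_percent < 100.0 - tolerance_percent:
        return "suppression"
    if me_percent > 100.0 + tolerance_percent:
        return "enhancement"
    return "acceptable"

if __name__ == "__main__":
    me = matrix_effect_percent(area_neat=1.0e6, area_post_spike=4.2e5)  # hypothetical peak areas
    print(f"matrix effect {me:.0f} % -> {classify_matrix_effect(me)}")
```

If suppression is confirmed, the mitigations listed above (more extensive clean-up, better chromatographic separation) can be re-tested with the same calculation. Re-testing shows whether the matrix effect has moved back toward 100 %.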

The Scientist's Toolkit

Essential Research Reagent Solutions

Table: Key Reagents and Materials for Sensitive Targeted Proteomics

| Item | Function | Application Note |
|---|---|---|
| Heavy-Labelled AQUA Peptides | Internal standards for precise, absolute quantification of target peptides. | Spiked into the sample digest to correct for ionization efficiency and instrument variability [35]. |
| Heavy-Labelled Full-Length Proteins | Superior internal standards that correct for variability in all steps, including protein extraction and digestion. | Ideal for highest quantification accuracy, though more costly than peptide standards [35]. |
| Anti-Protein Antibodies | For immunocapture sample clean-up; enrich target proteins or peptides from complex samples. | Critical for determining low-abundance protein biomarkers (e.g., in the pg/mL range) in plasma/serum [34]. |
| Indexed Retention Time (iRT) Kit | A set of synthetic peptides for consistent retention time calibration across all runs. | Essential for robust alignment in DIA and reliable scheduling in targeted PRM assays [33]. |
| Trypsin/Lys-C | Proteolytic enzymes for bottom-up proteomics; cleave proteins into analyzable peptides. | High-quality, sequencing-grade enzymes minimize missed cleavages, ensuring reproducible digestion [33]. |
| Multi-Affinity Removal System (MARS) | HPLC columns with antibodies to remove high-abundance proteins from serum/plasma. | Reduces dynamic range, allowing better detection of low-abundance proteins, but carries a risk of losing targets bound to the removed proteins [35]. |
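Quantification with heavy-labelled internal standards comes down to a peak-area ratio. This is a minimal sketch under the following assumptions:

- The heavy peptide is spiked at a known amount.
- The light and heavy forms ionize identically.
- For the unit conversion only, each protein molecule yields one copy of the peptide and digestion is complete.

The protein molecular weight and sample volume in the example are hypothetical.

```python
def endogenous_fmol(area_light: float, area_heavy: float, heavy_spike_fmol: float) -> float:
    """Endogenous (light) peptide amount = (light area / heavy area) x spiked heavy amount."""
    if area_heavy <= 0:
        raise ValueError("heavy internal standard not detected")
    return (area_light / area_heavy) * heavy_spike_fmol

def fmol_to_pg_per_ml(amount_fmol: float, protein_mw_g_per_mol: float, sample_volume_ml: float) -> float:
    """Convert a peptide amount to protein concentration in the original sample.

    Assumes 1:1 peptide:protein stoichiometry and complete digestion.
    1 fmol x MW (g/mol) = MW x 1e-15 g = MW x 1e-3 pg.
    """
    return amount_fmol * protein_mw_g_per_mol * 1e-3 / sample_volume_ml

if __name__ == "__main__":
    amount = endogenous_fmol(area_light=1.5e3, area_heavy=1.5e5, heavy_spike_fmol=50.0)
    conc = fmol_to_pg_per_ml(amount, protein_mw_g_per_mol=20_000.0, sample_volume_ml=0.1)
    print(f"{amount:.2f} fmol in the digest -> {conc:.0f} pg/mL in the original sample")
```

The ratio corrects only for variability that occurs after the standard is added. This is why full-length heavy proteins, spiked before digestion, capture more sources of error than peptide standards spiked into the digest.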

Recommended Software Tools

- Skyline: A free, open-source Windows application for designing MRM, PRM, and DIA experiments and analyzing the resulting data. It is vendor-agnostic and supports proteomics, metabolomics, and small molecule analyses [40].

- Panorama: A web-based repository for sharing and collaborating on Skyline documents and mass spectrometry data. Panorama Public is a ProteomeXchange resource for sharing datasets with the community [40].
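Scheduled PRM acquisition relies on predicted retention times. These predictions typically come from a linear calibration between the measured retention times of the iRT peptides and their reference iRT values. Skyline provides iRT calculators for this. The sketch below is a standalone illustration, not Skyline's implementation. The calibration iRT values, retention times, and ±2 min window are all hypothetical.

```python
def fit_irt(irt: list[float], observed_rt_min: list[float]) -> tuple[float, float]:
    """Ordinary least-squares fit of observed_RT = slope * iRT + intercept."""
    n = len(irt)
    if n < 2 or n != len(observed_rt_min):
        raise ValueError("need at least two paired calibration points")
    mean_x = sum(irt) / n
    mean_y = sum(observed_rt_min) / n
    sxx = sum((x - mean_x) ** 2 for x in irt)
    if sxx == 0:
        raise ValueError("calibration iRT values must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(irt, observed_rt_min))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def scheduling_window(target_irt: float, slope: float, intercept: float,
                      half_width_min: float = 2.0) -> tuple[float, float]:
    """Predicted RT for a target peptide +/- a fixed acquisition half-window (minutes)."""
    center = slope * target_irt + intercept
    return center - half_width_min, center + half_width_min

if __name__ == "__main__":
    slope, intercept = fit_irt([-20.0, 0.0, 50.0, 100.0], [16.0, 20.0, 30.0, 40.0])
    start, end = scheduling_window(35.0, slope, intercept)
    print(f"RT = {slope:.2f} * iRT + {intercept:.1f}; acquire target from {start:.1f} to {end:.1f} min")
```

Narrower windows let more targets fit into one run, but they raise the risk of missing a peak if retention times drift. Recalibrating against the iRT peptides in each run helps keep windows tight.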

Core Concepts and Strategic Importance

The detection and analysis of low-abundance biomarkers are often hindered by two fundamental challenges: the physical masking of trace targets by highly abundant proteins and the limitations of conventional assays in detecting minute signal differences. This technical support document outlines two powerful, complementary strategies to overcome these barriers: high-abundance protein depletion (HAPD) and nanoparticle technology.

High-Abundance Protein Depletion: In complex biofluids like plasma or serum, a small number of proteins, such as albumin and IgG, constitute the majority (~80-90%) of the total protein content [41] [42]. This creates an extreme dynamic range, often exceeding 10 orders of magnitude, which obscures low-abundance signaling proteins and potential disease biomarkers [42]. Depleting these top-tier proteins is a critical first step to "unmask" the deeper proteome for subsequent analysis [43].
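Simple mass-balance arithmetic gives a rough estimate of what depletion gains. This is a minimal sketch under the following assumptions:

- All non-depleted proteins are recovered with the same fractional yield.
- Downstream loading (gel lane or LC-MS injection) is limited by total protein.

The high-abundance fractions of 0.85 and 0.90 follow the ~80-90% range given above. The depletion efficiencies are hypothetical.

```python
def depletion_fold_enrichment(hap_fraction: float,
                              depletion_efficiency: float = 1.0,
                              lap_recovery: float = 1.0) -> float:
    """Fold increase in a low-abundance protein's share of total protein after depletion.

    Before depletion the LAP share is m / T. Afterwards the LAP mass is m * r and the
    total is T * [h * (1 - e) + (1 - h) * r], so the fold change is
    r / [h * (1 - e) + (1 - h) * r].
    """
    h, e, r = hap_fraction, depletion_efficiency, lap_recovery
    if not (0.0 <= h < 1.0 and 0.0 <= e <= 1.0 and 0.0 < r <= 1.0):
        raise ValueError("fractions must lie in valid ranges")
    return r / (h * (1.0 - e) + (1.0 - h) * r)

if __name__ == "__main__":
    for h, e in [(0.85, 1.0), (0.90, 1.0), (0.90, 0.95)]:
        fold = depletion_fold_enrichment(h, e)
        print(f"HAPs {h:.0%}, depletion {e:.0%}: {fold:.1f}x more LAP per ug of protein loaded")
```

Even complete removal gains only about one order of magnitude in this model, which is modest against a dynamic range exceeding 10 orders. Incomplete depletion erodes the gain quickly. This is why depletion serves as a first step rather than a complete solution.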

Nanoparticle-Enhanced Detection: Nanotechnology addresses the sensitivity limitations of traditional assays. Nanomaterials, owing to their small size and large surface area, serve as excellent platforms for biosensors [44]. They can be functionalized with ligands, antibodies, or probes to specifically capture and enrich low-abundance targets, and they can significantly amplify detection signals, enabling the ultrasensitive identification of targets like single-nucleotide polymorphisms (SNPs) and rare mutations [44].

The following table summarizes the purpose, mechanisms, and primary applications of these two core strategies.

Table 1: Comparison of Core Enrichment Strategies

| Strategy | Primary Purpose | Key Mechanism | Typical Applications |
|---|---|---|---|
| High-Abundance Protein Depletion | Reduce the dynamic range of protein concentrations | Immunoaffinity or dye-based removal of the 1-20 most abundant proteins (e.g., albumin, IgG) [41] [43] [42] | Proteomic discovery, biomarker validation, sample pre-fractionation for MS or 2D-GE [43] |
| Nanoparticle Technology | Enhance sensitivity & specificity of target detection | Signal amplification; magnetic enrichment; oriented immobilization of probes [44] [45] | Detection of SNPs, rare mutations, low-abundance pathogens, and extracellular targets [44] [45] |

High-Abundance Protein Depletion: A Practical Guide

FAQ: Depletion Kit Selection and Use

Q1: What are the main types of depletion kits, and how do I choose? The two primary types are immunoaffinity-based and immobilized dye-based kits. Immunoaffinity kits (e.g., ProteoPrep20, Agilent MARS) use antibodies to capture specific high-abundance proteins (HAPs) and are generally preferred for their high specificity and efficiency [43]. They can remove between 6 and 20 HAPs simultaneously. Dye-based kits (e.g., those using Cibacron Blue) are often less expensive but can be less efficient, particularly for non-standard samples like umbilical cord serum, and may suffer from nonspecific binding [43] [42]. For most sensitive applications, immunoaffinity-based depletion is recommended.

Q2: My sample is unique (e.g., from an animal model or cord blood). What should I consider? The efficiency of a depletion kit can vary significantly with the sample source. For instance, the structure of albumin in fetal or umbilical cord serum differs from that in adult serum, which can reduce the efficiency of some dye-based kits [43]. Always verify kit compatibility with your specific sample type by consulting the manufacturer's data or the scientific literature. When working with a new sample type, run a pilot experiment to confirm depletion efficiency, for example by SDS-PAGE.
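For a pilot SDS-PAGE check, densitometry gives an estimate of depletion efficiency for a given HAP band. This is a minimal sketch. It assumes that the volume of original plasma represented in each lane is known and that band intensity responds linearly to protein amount. The intensities and volumes in the example are hypothetical.

```python
def depletion_efficiency_percent(band_before: float, band_after: float,
                                 plasma_equiv_before_ul: float, plasma_equiv_after_ul: float) -> float:
    """Percent of a high-abundance protein removed, from SDS-PAGE band densitometry.

    Intensities are normalized to the volume of original plasma each lane represents,
    so dilution during depletion does not distort the comparison. Assumes intensities
    fall within the linear range of the stain and imager.
    """
    if band_before <= 0 or plasma_equiv_before_ul <= 0 or plasma_equiv_after_ul <= 0:
        raise ValueError("inputs must be positive")
    before_per_ul = band_before / plasma_equiv_before_ul
    after_per_ul = band_after / plasma_equiv_after_ul
    return 100.0 * (1.0 - after_per_ul / before_per_ul)

if __name__ == "__main__":
    # Hypothetical albumin band: 0.5 uL plasma-equivalent before, 1.0 uL plasma-equivalent after
    eff = depletion_efficiency_percent(8.0e4, 2.0e3, 0.5, 1.0)
    print(f"Albumin depletion efficiency: {eff:.2f} %")
```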

Q3: What are common pitfalls and how can I avoid them?

- Incomplete Depletion: This can occur due to column overloading. Adhere strictly to the manufacturer's recommended sample load volume [43].

- Nonspecific Binding of Low-Abundance Proteins (LAPs): Some LAPs may bind non-specifically to the depletion resin or to the HAPs themselves (the "albumin sponge" effect) [42]. Using a different kit or methodology can help confirm your results.

- Sample Loss and Dilution: The flow-through from depletion is often diluted. A concentration step (e.g., centrifugal ultrafiltration) is typically required before downstream analysis [43].

Troubleshooting Depletion Experiments

Table 2: Troubleshooting Guide for High-Abundance Protein Depletion

| Problem | Potential Cause | Solution |
|---|---|---|
| High-abundance proteins still visible post-depletion | Column overloaded; kit not suitable for sample type | Reduce sample load; verify kit compatibility with your sample type [43] |
| Low recovery of low-abundance proteins | Nonspecific binding to the depletion medium | Use a different depletion kit/strategy (e.g., switch from dye-based to immunoaffinity) [42] |
| High background or smearing in 2D gels | Incomplete removal of HAPs or their fragments | Perform a second round of depletion with a fresh column; optimize wash buffers [43] |
| Poor reproducibility between runs | Column exhaustion or inconsistent sample preparation | Do not exceed the column's recommended number of uses; standardize sample prep protocol [43] |

Experimental Protocol: Immunoaffinity Depletion of Human Plasma/Serum

This protocol outlines the general workflow for using a spin-column format immunoaffinity depletion kit, such as the ProteoPrep20.

- Sample Preparation: Dilute the plasma or serum sample using the kit's specified equilibration buffer (e.g., PBS). Filter the diluted sample through a 0.2 µm spin filter to remove particulates [41].

- Column Equilibration: Load the immunoaffinity spin column with the recommended volume of equilibration buffer. Centrifuge as specified to condition the column.

- Sample Depletion: Apply the prepared, filtered sample to the pre-equilibrated column. Incubate at room temperature for the specified time (e.g., 20 minutes) to allow for binding [41]. Centrifuge and collect the flow-through, which contains your depleted sample.

- Wash: Perform multiple wash steps by applying equilibration buffer to the column, centrifuging, and pooling the wash flow-through with the initial depleted sample to maximize yield [41].

- Concentration (if needed): Concentrate the pooled depleted sample using a centrifugal concentrator with an appropriate molecular weight cut-off to achieve the desired protein concentration for downstream applications [43].

- Column Regeneration (if applicable): For reusable columns, remove the bound HAPs by applying the provided elution buffer (e.g., low-pH glycine buffer). Re-equilibrate the column with storage buffer for future use [41].
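The dilution, pooling, and concentration steps above require some simple volume and concentration bookkeeping. This is a minimal planning sketch under the following assumptions:

- The flow-through volume equals the loaded volume.
- All washes are pooled in full.
- A known fraction of total protein remains after depletion.

Every numeric value in the example is a hypothetical placeholder and should be replaced with the values in your kit's instructions. This includes the plasma protein concentration, dilution factor, wash volumes, remaining fraction, and target concentration.

```python
def plan_concentration(plasma_ul: float, plasma_protein_mg_per_ml: float,
                       dilution_factor: float, wash_ul: float, n_washes: int,
                       remaining_fraction: float, target_mg_per_ml: float) -> dict[str, float]:
    """Estimate pooled volume, pooled concentration, and the final volume to concentrate to."""
    pooled_ul = plasma_ul * dilution_factor + wash_ul * n_washes  # flow-through + pooled washes
    protein_mg = (plasma_ul / 1000.0) * plasma_protein_mg_per_ml * remaining_fraction
    pooled_mg_per_ml = protein_mg / (pooled_ul / 1000.0)
    final_ul = protein_mg / target_mg_per_ml * 1000.0
    return {
        "pooled_volume_ul": pooled_ul,
        "pooled_mg_per_ml": pooled_mg_per_ml,
        "final_volume_ul": final_ul,
        "concentration_factor": pooled_ul / final_ul,
    }

if __name__ == "__main__":
    plan = plan_concentration(plasma_ul=10.0, plasma_protein_mg_per_ml=70.0, dilution_factor=10.0,
                              wash_ul=100.0, n_washes=2, remaining_fraction=0.15, target_mg_per_ml=1.0)
    for key, value in plan.items():
        print(f"{key}: {value:.2f}")
```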

Diagram 1: High-Abundance Protein Depletion Workflow

Nanoparticle Technology for Enhanced Sensitivity

FAQ: Leveraging Nanoparticles for Detection

Q1: How do nanoparticles improve the sensitivity of biochemical assays? Nanoparticles enhance sensitivity through several mechanisms:

- Signal Amplification: A single nanoparticle can carry hundreds of signal-generating molecules (e.g., enzymes, fluorophores), dramatically amplifying the signal from a single binding event [44] [46].

- Magnetic Enrichment: Magnetic nanoparticles (MNPs) allow for the physical separation and concentration of target-bound complexes from a complex sample matrix, effectively increasing the local concentration of the analyte before detection [45].

- Improved Probe Orientation: Functionalizing nanoparticles with proteins like Staphylococcal protein A (SPA) ensures antibodies are immobilized in an oriented manner (via their Fc region). This keeps more antigen-binding sites accessible and reduces steric hindrance, leading to significantly better detection limits [45].
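The first two mechanisms can be put into rough numbers. This is a minimal idealized sketch. It assumes that signal scales linearly with the number of reporters per binding event and with target concentration in the detection volume. It ignores background, steric limits, and nonspecific capture. The reporter count, volumes, and capture efficiency are hypothetical.

```python
def magnetic_enrichment_factor(sample_ul: float, resuspension_ul: float, capture_efficiency: float) -> float:
    """Concentration gain from capturing targets on MNPs and resuspending them in a smaller volume."""
    if not 0.0 <= capture_efficiency <= 1.0:
        raise ValueError("capture_efficiency must be between 0 and 1")
    if resuspension_ul <= 0:
        raise ValueError("resuspension volume must be positive")
    return capture_efficiency * sample_ul / resuspension_ul

def idealized_signal_gain(reporters_per_particle: int, enrichment_factor: float) -> float:
    """Signal relative to one reporter per binding event without enrichment (an upper bound)."""
    return reporters_per_particle * enrichment_factor

if __name__ == "__main__":
    enrich = magnetic_enrichment_factor(sample_ul=1000.0, resuspension_ul=50.0, capture_efficiency=0.8)
    print(f"enrichment ~{enrich:.0f}x, combined idealized gain ~{idealized_signal_gain(200, enrich):.0f}x")
```

Measured improvements in detection limit can be much smaller than this bound, because background and nonspecific binding also rise as signal is amplified.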

Q2: What are the key considerations when designing a nanoparticle-based assay?

- Surface Functionalization: The method used to attach recognition elements (e.g., antibodies, aptamers) is critical. Oriented immobilization (e.g., using SPA) is superior to random conjugation [45].

- Size and Material: The nanoparticle's size and core material (e.g., gold, magnetic iron oxide, silica) influence its optical properties, magnetic responsiveness, and biocompatibility. The optimal size depends on the application; for example, 40–60 nm gold nanoparticles showed the highest efficiency in degrading the HER2 receptor in one study [47].

- Minimizing Non-Specific Binding: A robust blocking strategy and optimized surface chemistry are essential to prevent the nanoparticle from interacting non-specifically with other sample components.
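Size and core material together determine how much surface is available for functionalization per unit mass. For solid spheres, the specific surface area is SSA = 6 / (ρ d), so halving the diameter doubles the surface per gram. The sketch below assumes ideal monodisperse spheres. It also uses approximate bulk densities: about 19.3 g/cm³ for gold, 5.2 g/cm³ for magnetite, and 2.2 g/cm³ for amorphous silica. Real nanoparticle densities may differ, especially for porous silica.

```python
import math

# Approximate bulk densities in g/cm^3 (assumed values)
DENSITY_G_PER_CM3 = {"gold": 19.3, "magnetite": 5.2, "silica": 2.2}

def specific_surface_area_m2_per_g(diameter_nm: float, density_g_per_cm3: float) -> float:
    """SSA of a solid sphere, 6 / (rho * d); with rho in g/cm^3 and d in nm this is 6000 / (rho * d) m^2/g."""
    return 6000.0 / (density_g_per_cm3 * diameter_nm)

def particles_per_mg(diameter_nm: float, density_g_per_cm3: float) -> float:
    """Number of spheres in 1 mg of material: mass / (rho * pi * d^3 / 6)."""
    volume_cm3 = math.pi * (diameter_nm * 1e-7) ** 3 / 6.0  # 1 nm = 1e-7 cm
    return 1e-3 / (density_g_per_cm3 * volume_cm3)

if __name__ == "__main__":
    for material, rho in DENSITY_G_PER_CM3.items():
        for d in (20.0, 50.0):
            print(f"{material:9s} {d:4.0f} nm: {specific_surface_area_m2_per_g(d, rho):6.1f} m^2/g, "
                  f"{particles_per_mg(d, rho):.2e} particles/mg")
```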

Q3: Can you provide a quantitative example of sensitivity improvement? Yes. In a lateral flow immunoassay for Mycoplasma pneumoniae, using SPA-functionalized MNPs for orientational labelling and magnetic enrichment lowered the visual limit of detection (LOD) from 10^6 CFU/mL (with conventional random probes) to 10^4 CFU/mL—a 100-fold improvement in sensitivity [45].

Troubleshooting Nanoparticle-Based Assays

Table 3: Troubleshooting Guide for Nanoparticle-Based Assays

| Problem | Potential Cause | Solution |
|---|---|---|
| High background signal | Insufficient blocking; non-specific binding of nanoparticles | Optimize blocking buffer (e.g., BSA concentration); include detergents (e.g., Tween-20) in wash buffers [45] |
| Weak or no signal | Poor antibody orientation; low coupling efficiency; nanoparticle aggregation | Use oriented conjugation (e.g., SPA); characterize conjugation yield; ensure monodisperse nanoparticles during synthesis and storage [45] |
| Poor reproducibility | Inconsistent nanoparticle synthesis or functionalization | Implement rigorous quality control (e.g., DLS for size, UV-Vis for concentration) for each batch [45] |
| Low enrichment efficiency (for MNPs) | Antibody density too high/low; magnetic separation time too short | Titrate antibody-to-nanoparticle ratio; optimize magnetic separation time and strength [45] |

Experimental Protocol: SPA-Functionalized Magnetic Nanoparticles for Pathogen Detection

This protocol is adapted from research on detecting Mycoplasma pneumoniae and demonstrates the synergy of oriented labelling and magnetic enrichment [45].

- Synthesis of Magnetic Nanoparticles (MNPs): Synthesize MNPs (e.g., via the microemulsion method using FeCl₂ and FeCl₃ in a CTAB/butanol/octane system). Wash the product thoroughly with ethanol and resuspend in ultrapure water [45].

- SPA Functionalization (Aggregation–Precipitation Crosslinking): Immobilize Staphylococcal protein A (SPA) onto the MNPs. This creates a stable, oriented surface that specifically binds the Fc region of antibodies.

- Oriented Antibody Conjugation: Incubate the SPA–MNP conjugate with your target-specific monoclonal antibody. The SPA will ensure the antibodies are correctly oriented for optimal antigen binding.

- Sample Incubation and Magnetic Enrichment:

  - Mix the antibody-conjugated SPA–MNPs with the sample containing the target pathogen.

  - Incubate to allow the formation of pathogen-MNP complexes.

  - Place the tube on a magnetic rack to separate the bound complexes from the solution.

  - Carefully aspirate and discard the supernatant.