Beyond Movement: Using Actigraphy Data as an Objective Biomarker for Social Interaction Monitoring in Clinical Research

Abstract

This article explores the innovative application of actigraphy, traditionally used for measuring sleep and physical activity, for the objective monitoring of social interaction in clinical and research settings. Aimed at researchers, scientists, and drug development professionals, it synthesizes current evidence on how machine learning models can extract social behavioral patterns from actigraphy data. The content covers the foundational relationship between activity rhythms and social isolation, details methodological approaches for data collection and analysis, addresses key implementation challenges, and validates actigraphy against self-reports and other digital tools. The synthesis provides a roadmap for leveraging this non-invasive, continuous monitoring tool to enhance outcomes in neurology, psychiatry, and geriatric care.

The Scientific Basis: Linking Activity Patterns to Social Isolation and Loneliness

In research that uses actigraphy data to monitor social interaction, precisely defining and distinguishing social isolation and loneliness is a prerequisite for robust study design and accurate data interpretation. Although often used interchangeably in lay discourse, the terms denote distinct, sometimes overlapping constructs. Social isolation is an objective state characterized by a quantifiable lack of social connections and interactions, whereas loneliness is the subjective, distressing feeling that results from a discrepancy between one's desired and actual social relationships [1] [2]. This section outlines the conceptual definitions, measurement approaches, and analytical considerations essential for researchers, scientists, and drug development professionals working in this field.

Conceptual Definitions and Distinctions

The core distinction lies in the objective versus subjective nature of the experiences.

- Social Isolation (Objective Disconnection): This construct refers to the structural aspect of an individual's social network. It is characterized by a low frequency of social contacts, a small social network, and limited social participation [3] [1]. It is a condition that can be observed and quantified, for instance, by counting social interactions or network ties.

- Loneliness (Subjective Perception): Loneliness is a perceptual state that does not always correlate with objective social metrics. It is defined as the unpleasant experience that occurs when a person's network of social relations is deficient in some important way, either quantitatively or qualitatively [1] [2]. A person can have numerous social contacts yet feel lonely, or have few contacts and feel satisfied.

Table 1: Core Conceptual Distinctions Between Social Isolation and Loneliness

| Feature | Social Isolation | Loneliness |
|---|---|---|
| Nature | Objective, quantifiable | Subjective, perceptual |
| Definition | Scarcity of social connections and interactions | Perception that social needs are not being met |
| Primary Dimension | Structural, external | Emotional, internal |
| Correlation Between the Two Constructs | Low (e.g., Spearman’s correlation = 0.20) [1] | Low (e.g., Spearman’s correlation = 0.20) [1] |
| Key Measurable | Social interaction frequency, network size | Feelings of loneliness, perceived social adequacy |
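
The low correlation reported in the table can be checked in any dataset that records both constructs. The following is a minimal sketch of a Spearman rank correlation using only the Python standard library; the function names and the example scores are hypothetical and are not taken from the cited studies.

```python
from statistics import mean


def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the run while the next sorted value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        tied_rank = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = tied_rank
        start = end + 1
    return ranks


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / (var_x * var_y) ** 0.5


def spearman(x, y):
    """Spearman correlation: Pearson correlation computed on average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical scores for eight participants (illustration only).
isolation_index = [0, 1, 1, 2, 3, 4, 4, 5]           # objective disconnection
loneliness_score = [22, 35, 20, 41, 28, 30, 45, 33]  # subjective rating
print(round(spearman(isolation_index, loneliness_score), 2))
```

A coefficient near zero in such a check would support analysing the two constructs as separate outcomes rather than combining them into a single score.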

Quantitative Differentiation in Research Findings

Empirical evidence underscores the necessity of measuring these constructs separately, as they correlate with different outcomes and potentially involve distinct biological or behavioral mechanisms.

Table 2: Differential Associations with Health and Behavioral Markers

| Parameter | Association with Social Isolation | Association with Loneliness |
|---|---|---|
| Actigraphy-Measured Sleep Quality | Associated with more disrupted sleep (e.g., higher WASO, lower percent sleep) [1] | Associated with more disrupted sleep (e.g., higher WASO, lower percent sleep) [1] |
| Self-Reported Sleep | Not associated with insomnia symptoms or shorter sleep duration [1] | Strongly associated with more insomnia symptoms and shorter sleep duration [1] |
| Time in Bed | Longer time in bed [1] | Not reported |
| Physical Activity (Actigraphy) | Key factor associated with low social interaction frequency [3] [4] | Less directly associated; relationship may be mediated by other factors [3] [2] |
| Sleep Quality (Actigraphy) | Not the primary related factor [3] | Key factor related to high loneliness levels [3] [4] |
| Digital Phenotyping (Social App Use) | Not the primary focus | Instant messenger and social media usage associated with increased momentary and daily loneliness [2] |

The Scientist's Toolkit: Research Reagents & Essential Materials

Table 3: Essential Materials and Tools for Actigraphy-Based Social Function Research

| Item | Function & Application in Research |
|---|---|
| Wrist-Worn Actigraph (e.g., GENEActiv, ActiGraph GT9X) | The primary tool for objective, continuous monitoring of physical activity and sleep-wake patterns in naturalistic settings. Provides data on activity counts, sleep parameters (TST, WASO, sleep efficiency), and circadian rhythms [2] [5]. |
| Ecological Momentary Assessment (EMA) Mobile App | A smartphone application used for real-time, in-the-moment self-reporting. It reduces recall bias and is ideal for capturing dynamic subjective states like momentary loneliness and the frequency of recent social interactions [3] [2] [6]. |
| Validated Self-Report Scales | Questionnaires administered at baseline or intermittently to provide trait-level measures. Examples include the UCLA Loneliness Scale for loneliness and social network indices for social isolation [1] [2]. |
| Data Integration & Analysis Platform | Software (e.g., R, Python with scikit-learn) capable of handling time-series data from actigraphy and EMA, and for applying machine learning models to identify complex patterns and predictors [3]. |
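
The sleep parameters named in the table above (TST, WASO, sleep efficiency) are usually derived from an epoch-by-epoch sleep/wake classification. The sketch below assumes that a scoring algorithm has already labelled each epoch of the in-bed period as sleep (1) or wake (0). The function name `sleep_metrics` and the dictionary keys are illustrative, and exact definitions (WASO in particular) vary slightly between software packages.

```python
def sleep_metrics(scores, epoch_min=1.0):
    """Summarize one in-bed period from epoch-level sleep (1) / wake (0) labels.

    Assumed definitions (packages differ in the details):
      TST        = total minutes scored as sleep
      WASO       = wake minutes between sleep onset and the final awakening
      efficiency = TST / time in bed * 100
    """
    if not scores:
        raise ValueError("scores must contain at least one epoch")
    time_in_bed = len(scores) * epoch_min
    if 1 not in scores:
        # No sleep detected during the in-bed period.
        return {"tib_min": time_in_bed, "tst_min": 0.0,
                "waso_min": 0.0, "efficiency_pct": 0.0}

    onset = scores.index(1)                            # first sleep epoch
    final_wake = len(scores) - scores[::-1].index(1)   # index after last sleep epoch
    tst = sum(scores) * epoch_min
    waso = scores[onset:final_wake].count(0) * epoch_min
    return {
        "tib_min": time_in_bed,
        "tst_min": tst,
        "waso_min": waso,
        "efficiency_pct": 100.0 * tst / time_in_bed,
    }


# Toy 10-epoch night: 2 epochs to fall asleep, 1 awakening, 2 epochs awake at the end.
night = [0, 0, 1, 1, 0, 1, 1, 1, 0, 0]
print(sleep_metrics(night))
```

The leading and trailing wake epochs count against efficiency but not toward WASO, which is why the two measures can diverge for participants who spend long periods in bed awake.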

Experimental Protocols for Concurrent Measurement

Protocol 1: Integrated EMA and Actigraphy Assessment

This protocol is designed to capture the dynamic interplay between objective behavior and subjective experience in a community-dwelling elderly population at risk for cognitive decline [3] [7].

Objective: To explore factors related to social interaction frequency and loneliness levels among older adults in the predementia stage using machine learning models.

Population: Community-dwelling older adults (e.g., >65 years) with Subjective Cognitive Decline (SCD) or Mild Cognitive Impairment (MCI). Sample size ~100 participants [3].

Procedure:

- Baseline Assessment: Collect demographic data, medical history, and administer baseline cognitive and psychological surveys (e.g., K-MMSE-2, MBI-Checklist) [3] [7].

- Device Deployment:

- EMA Data Collection:

- Data Processing:

  - Actigraphy Data: Process raw accelerometry data to derive metrics across domains: physical movement (e.g., activity counts), sedentary behavior, sleep quantity (Total Sleep Time - TST), and sleep quality (Wake After Sleep Onset - WASO, sleep efficiency) [3] [1].

  - EMA Data: Aggregate social interaction counts and loneliness ratings to create person-level averages (e.g., mean daily social interaction score) and within-person variability metrics [7] [6].

- Data Integration and Analysis:

  - Synchronize actigraphy and EMA data streams using timestamps.

  - Use machine learning models (e.g., Random Forest, Gradient Boosting Machine) to identify which objective actigraphy domains (physical movement, sleep quality, etc.) are most predictive of low social interaction frequency and high loneliness levels, treated as separate outcomes [3].
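
The EMA aggregation and timestamp synchronization steps above can be sketched as follows. This is an illustration under stated assumptions rather than the cited study's pipeline: the record fields (`pid`, `interactions`, `loneliness`), the function names, and the 60-minute look-back window are all hypothetical choices.

```python
from bisect import bisect_left
from collections import defaultdict
from datetime import datetime, timedelta
from statistics import mean, stdev


def person_level_summary(ema_rows):
    """Person-level averages plus within-person variability of loneliness."""
    by_person = defaultdict(list)
    for row in ema_rows:
        by_person[row["pid"]].append(row)
    summary = {}
    for pid, rows in by_person.items():
        loneliness = [r["loneliness"] for r in rows]
        interactions = [r["interactions"] for r in rows]
        summary[pid] = {
            "mean_interactions": mean(interactions),
            "mean_loneliness": mean(loneliness),
            # Sample SD needs at least two prompts; report 0.0 otherwise.
            "sd_loneliness": stdev(loneliness) if len(loneliness) > 1 else 0.0,
        }
    return summary


def activity_before_prompt(epoch_times, epoch_counts, prompt_time, window_min=60):
    """Sum activity counts in [prompt_time - window, prompt_time).

    epoch_times must be sorted and aligned index-by-index with epoch_counts.
    """
    start = prompt_time - timedelta(minutes=window_min)
    lo = bisect_left(epoch_times, start)
    hi = bisect_left(epoch_times, prompt_time)
    return sum(epoch_counts[lo:hi])


# Hypothetical data: two EMA prompts and two hours of 1-minute activity counts.
t0 = datetime(2024, 1, 1, 9, 0)
ema = [
    {"pid": "p1", "time": t0 + timedelta(minutes=90), "interactions": 2, "loneliness": 1},
    {"pid": "p1", "time": t0 + timedelta(minutes=110), "interactions": 4, "loneliness": 3},
]
times = [t0 + timedelta(minutes=i) for i in range(120)]
counts = [1] * 120

print(person_level_summary(ema))
print(activity_before_prompt(times, counts, ema[0]["time"]))
```

The resulting per-prompt activity sums and person-level summaries are the kind of feature table that could then be passed to a Random Forest or Gradient Boosting model, with low interaction frequency and high loneliness fitted as separate outcomes.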

Protocol 2: Social Actigraphy for Dyadic Analysis

This protocol assesses the "synchrony" or association in physical activity profiles between individuals in a close dyad (e.g., married couples), providing an objective metric of co-participation in daily life [5].

Objective: To quantify the association between motor activity profiles of two individuals living together and verify the partner's effect on one's physical activity pattern.

Population: Married, cohabiting, healthy retired couples (e.g., 20 dyads). The method is also applicable to other dyadic relationships (e.g., parent-child) [5].

Procedure:

- Device Setup and Synchronization:

  - Provide each member of the dyad with a wrist-worn actigraph (e.g., GENEActiv).

  - Instruct them to wear the device on their non-dominant wrist 24/7 for 7 consecutive days.

  - Synchronize the internal clocks of both devices prior to distribution [5].

- Data Collection:

  - Participants go about their normal, unsupervised daily lives.

- Data Analysis:

  - Motor Activity Index Calculation: Process raw acceleration data to calculate a Motor Activity (MA) index for each individual in 1-minute epochs over the 7-day period (10,080 data points per person). The MA index is the standard deviation of the acceleration vector magnitude per epoch [5].

  - Correlation Coefficient (CC): Calculate the correlation coefficient (zero lag) between the 10,080-point MA profiles of the two dyad members. This CCcouple quantifies the level of association or "synchrony" in their daily activity patterns [5].

  - Comparison: Compare the CCcouple against:

    - CCself24: The correlation of an individual's profile with their own profile shifted by 24 hours (controlling for daily routines).

    - CCbetween: The correlation between randomly paired, unrelated individuals from different dyads [5].

  - A significantly higher CCcouple versus CCbetween provides objective evidence that the partner influences one's daily activity pattern, a form of objective social connection.
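
The analysis steps of this protocol can be sketched in a few functions. The sketch makes several assumptions not specified in the text: the population form of the standard deviation is used for the MA index, the correlation is taken to be Pearson's, and CCbetween is computed as the mean over all cross-dyad pairings rather than over a random pairing. All function names are illustrative.

```python
import math
from itertools import combinations
from statistics import mean, pstdev

EPOCHS_PER_DAY = 1440  # 1-minute epochs, so 7 days give 10,080 points


def ma_index(samples, samples_per_epoch):
    """SD of the acceleration vector magnitude per epoch.

    samples: sequence of (x, y, z) accelerations; a trailing partial epoch is dropped.
    """
    magnitudes = [math.sqrt(x * x + y * y + z * z) for x, y, z in samples]
    n_epochs = len(magnitudes) // samples_per_epoch
    return [
        pstdev(magnitudes[i * samples_per_epoch:(i + 1) * samples_per_epoch])
        for i in range(n_epochs)
    ]


def pearson(x, y):
    """Zero-lag Pearson correlation between two equal-length MA profiles."""
    if len(x) != len(y):
        raise ValueError("profiles must have the same length")
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant profile")
    return cov / math.sqrt(var_x * var_y)


def cc_self24(profile, epochs_per_day=EPOCHS_PER_DAY):
    """Correlation of a profile with itself shifted by 24 hours."""
    return pearson(profile[:-epochs_per_day], profile[epochs_per_day:])


def cc_between(dyads):
    """Mean correlation over all pairs of individuals from different dyads.

    dyads: dict mapping dyad id -> (profile_member_a, profile_member_b).
    """
    values = []
    for (_, pair_1), (_, pair_2) in combinations(dyads.items(), 2):
        for p in pair_1:
            for q in pair_2:
                values.append(pearson(p, q))
    return mean(values)


# Toy profiles (4 epochs each) standing in for 10,080-point MA series.
dyads = {
    "couple_a": ([1, 2, 3, 4], [1, 2, 3, 5]),
    "couple_b": ([4, 3, 2, 1], [4, 3, 2, 0]),
}
cc_couple_a = pearson(*dyads["couple_a"])
print(cc_couple_a, cc_between(dyads))
```

In a real study the comparison of CCcouple against CCself24 and CCbetween would be made across all dyads with an appropriate statistical test, which this sketch does not include.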

Actigraphy, which uses wearable sensors to monitor movement, has become an indispensable tool for objectively capturing human behavior in naturalistic settings. By providing continuous, high-resolution data on physical activity and rest, actigraphy devices serve as a critical window into both individual behavioral patterns and socially synchronized rhythms. The technology has evolved significantly from simple motion detection to sophisticated multisensor platforms that can measure physiological parameters such as heart rate, skin temperature, and ambient light exposure, enabling researchers to investigate the complex interplay between biological rhythms, social influences, and environmental factors [8]. This methodological approach is particularly valuable for studying behavior across diverse contexts, from sleep-wake cycles and circadian rhythms to social synchronization phenomena in populations ranging from university students to clinical patients and older adults.

The application of actigraphy in research provides several distinct advantages over traditional observational methods or self-report measures. By collecting data passively as individuals go about their daily routines, actigraphy minimizes recall bias and offers unprecedented insight into real-world behaviors with high ecological validity. Furthermore, the longitudinal nature of actigraphy data collection—often spanning days, weeks, or even months—enables researchers to capture dynamic behavioral patterns and their variations over time, which is particularly valuable for understanding how social contexts shape individual and group behaviors [9] [10]. The emergence of open-source processing platforms like the Modular Actigraphy Platform (MAP) has further enhanced the rigor and reproducibility of actigraphy data analysis, supporting more robust investigations into the social dimensions of human behavior [11].

Theoretical Framework: Social Synchronization of Behavior

A growing body of research demonstrates that human behaviors are not merely individual phenomena but are profoundly shaped by social contexts and relationships. The theoretical foundation for understanding actigraphy as a window into social rhythms draws from two complementary mechanisms: homophilic selection (the tendency to form relationships with others who have similar characteristics) and peer influence (where individuals in close relationships directly affect each other's behaviors) [12]. This framework suggests that social ties can create synchronized behavioral patterns within groups, with closer relationships typically associated with stronger behavioral alignment.

Actigraphy provides an objective methodology to quantify these social synchronization effects by simultaneously monitoring daily activities and sleep-wake patterns across connected individuals. Research with university students has demonstrated that closer friendships show significantly more similar sleep timing and duration than more casual friendships, with sleep parameters positively covarying day-to-day irrespective of next-day class schedules [12]. These findings suggest that students' daily sleep patterns may be contingent on the behavior of their close friends, highlighting the powerful influence of social relationships on fundamental biological rhythms. The social synchronization of behaviors extends beyond sleep to encompass daily activity rhythms, with actigraphy data revealing how social constraints and opportunities shape the timing and intensity of physical activity across different age groups and populations [13] [10].

Quantitative Evidence: Actigraphy Data on Social Behavioral Patterns

Key Studies on Social Synchronization

Recent research has provided compelling quantitative evidence for the social synchronization of behaviors using actigraphy methodologies. The following table summarizes key findings from influential studies in this area:

Table 1: Key Actigraphy Studies on Social Synchronization of Behavior

| Study Population | Sample Characteristics | Monitoring Duration | Key Social Synchronization Findings | Citation |
|---|---|---|---|---|
| University Students | 150 friend pairs (300 students); close vs. casual friendships | 2 weeks | On non-school nights, close friends showed ~30 min smaller differences in sleep timing; daily sleep covaried positively in close friends only | [12] |
| Japanese University Students | 22 female students | 16 weeks (pre- and during pandemic) | Reduced social constraints during the pandemic delayed sleep timing by 20-40 min; individual responses varied substantially based on personality traits | [13] |
| Older Adults (NHANES) | 14,111 individuals from national database | 7 days | Strong age-dependent activity patterns; social and work constraints shape behavioral rhythms across lifespan | [10] |
| Stroke Rehabilitation Patients | 70 subacute stroke patients | 7 days | Interdaily stability (IS) of rest-activity rhythms predicted functional recovery (β=0.23, P=0.013), showing how social routines support rehabilitation | [14] |

Actigraphy Metrics for Social Behavior Research

Actigraphy provides numerous quantitative metrics that can illuminate social influences on behavior. The following table outlines key parameters particularly relevant for social behavior research:

Table 2: Key Actigraphy Metrics for Social Behavior Research

| Metric Category | Specific Parameters | Social Behavior Relevance | Analysis Considerations |
|---|---|---|---|
| Sleep-Wake Timing | Sleep onset, wake time, midpoint | Synchronization among social groups; social jetlag | Differences between school/work nights vs. free nights [12] [13] |
| Sleep Duration & Quality | Total sleep time, sleep efficiency, WASO | Shared sleep behaviors in relationships; social disruption of sleep | Covariation of daily sleep parameters in social dyads [12] |
| Circadian Rhythm Indicators | Interdaily stability (IS), intradaily variability (IV), relative amplitude (RA) | Regularity imposed by social schedules; rhythm synchronization | IS particularly sensitive to social constraints [14] |
| Physical Activity Patterns | Most active continuous hours (M10), least active hours (L5) | Socially facilitated activity; group exercise patterns | M10 timing and volume reflect socially structured activities [10] [14] |
| Chronotype Indicators | Sleep midpoint, morningness-eveningness | Social alignment of preferences; misalignment costs | Derived from free days without social constraints [12] [10] |

Experimental Protocols for Social Behavior Research

Protocol 1: Dyadic Social Synchronization Study

Objective: To investigate behavioral synchronization in friend pairs and compare closeness levels.

Materials:

- Two research-grade actigraphy devices (e.g., ActiGraph wGT3X-BT or Fibion Helix)

- Daily sleep diary forms or electronic data capture system

- Friendship assessment questionnaires (voluntary interdependence scale, friendship ranking)

- Chronotype assessment (Morningness-Eveningness Questionnaire)

- Class schedule/work schedule documentation

Procedure:

- Participant Recruitment and Screening: Recruit friend pairs from target population (e.g., university students, coworkers). Exclude individuals with shift work, medical conditions affecting sleep, or those taking medications significantly affecting sleep/wake patterns.

- Baseline Assessment: Administer friendship closeness measures (voluntary interdependence scale and friendship ranking), chronotype questionnaire (MEQ), and collect demographic information and class/work schedules.

- Device Initialization and Distribution: Initialize actigraphy devices with synchronized time settings. Instruct participants to wear devices continuously on the non-dominant wrist for 2 weeks, removing only for water-based activities.

- Daily Data Collection: Participants complete brief sleep diaries each morning, noting estimated bedtimes, wake times, and any notable events. Actigraphy devices record motion data at 30-60 second epochs.

- Data Retrieval and Quality Check: Collect devices after monitoring period. Verify data quality and sufficient wear time (typically ≥5 valid days including weekends). Exclude participants with insufficient data (<70% valid wear time).

- Data Processing: Process raw actigraphy data using validated algorithms (e.g., the Sadeh or Cole-Kripke algorithm, chosen to match the age range in which each was validated) to determine sleep-wake patterns. Calculate daily sleep parameters: onset, offset, midpoint, duration, and efficiency.

- Statistical Analysis: Calculate pairwise absolute differences in sleep parameters for each friend pair. Use linear mixed models to test associations between friendship closeness and sleep parameter differences, controlling for chronotype and class schedules. Test for daily covariation of sleep parameters using time-lagged analyses.

Analytical Considerations: Separate analyses for school/work nights versus free nights. Include appropriate covariates in models (chronotype differences, shared class schedules, same residence status). Consider actor-partner interdependence models for dyadic analyses [12].
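
The pairwise-difference step of the statistical analysis can be illustrated as follows. This sketch assumes that sleep onset and offset have already been scored for each night and are stored as datetimes keyed by a night label. The function names and data layout are hypothetical, and the mixed-model step itself is not shown.

```python
from datetime import datetime


def sleep_summary(onset, offset):
    """Return (midpoint datetime, duration in minutes) for one sleep period."""
    duration_min = (offset - onset).total_seconds() / 60
    midpoint = onset + (offset - onset) / 2
    return midpoint, duration_min


def pair_differences(nights_a, nights_b):
    """Absolute within-pair differences for nights recorded by both friends.

    nights_*: dict mapping a night label -> (onset, offset) datetimes.
    """
    diffs = {}
    for night in sorted(nights_a.keys() & nights_b.keys()):
        mid_a, dur_a = sleep_summary(*nights_a[night])
        mid_b, dur_b = sleep_summary(*nights_b[night])
        diffs[night] = {
            "midpoint_diff_min": abs((mid_a - mid_b).total_seconds()) / 60,
            "duration_diff_min": abs(dur_a - dur_b),
        }
    return diffs


# Hypothetical single night for one friend pair.
friend_a = {"2024-03-01": (datetime(2024, 3, 1, 23, 0), datetime(2024, 3, 2, 7, 0))}
friend_b = {"2024-03-01": (datetime(2024, 3, 2, 0, 30), datetime(2024, 3, 2, 8, 0))}
print(pair_differences(friend_a, friend_b))
```

Because midpoints are kept as full datetimes rather than clock times, sleep periods that cross midnight need no special handling. The nightly differences would then serve as the dependent variable in the mixed models, with school/work nights and free nights analysed separately.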

Protocol 2: Longitudinal Social Transitions Study

Objective: To examine how changes in social constraints affect behavioral patterns over time.

Materials:

- Waist-worn or wrist-worn actigraphy devices (e.g., ACOS models or Fibion SENS for extended monitoring)

- Environmental light sensors (if available)

- Subjective sleep and mood measures (e.g., OSA-MA sleep inventory)

- Personality assessment (e.g., NEO-FFI)

- Social schedule documentation

Procedure:

- Cohort Establishment: Recruit participants during stable social periods (e.g., regular academic schedule). Collect comprehensive baseline data including personality traits, chronotype, and typical social rhythms.

- Pre-Transition Monitoring: Conduct continuous actigraphy monitoring for 4-8 weeks during baseline period with monthly data downloads and device rotation.

- Transition Identification: Document anticipated social transitions (e.g., vacation periods, exam schedules, changes in work demands).

- Post-Transition Monitoring: Continue monitoring for equivalent period following social transition with identical assessment protocols.

- Contextual Data Collection: Record significant social events, schedule changes, and environmental factors throughout study period.

- Data Processing: Calculate rest-activity rhythm indicators including interdaily stability (IS), intradaily variability (IV), relative amplitude (RA), and sleep timing/duration metrics.

- Multi-Level Analysis: Use piecewise growth models to examine changes in behavioral patterns surrounding social transitions. Test moderation effects of individual characteristics (personality, chronotype) on adaptation to social changes.

Special Considerations: This protocol is particularly suited for natural experiments such as studying behavioral adaptations during pandemic-related restrictions [13] or seasonal changes in social demands.

Table 3: Research Reagent Solutions for Actigraphy Studies

| Resource Category | Specific Tools | Application in Social Behavior Research |
|---|---|---|
| Actigraphy Devices | ActiGraph wGT3X-BT, Fibion Helix, GENEActiv | Core movement sensing; device selection depends on monitoring duration, required parameters, and budget [12] [15] |
| Data Processing Platforms | Modular Actigraphy Platform (MAP), GGIR package, Sleep Sign Act software | Raw data processing; open-source platforms enhance reproducibility and standardization [11] [13] |
| Friendship Assessment Tools | Voluntary Interdependence Scale (ADF-F2), friendship ranking questionnaires | Quantifying relationship closeness as predictor variable in dyadic studies [12] |
| Chronotype Assessment | Morningness-Eveningness Questionnaire (MEQ), Munich Chronotype Questionnaire | Measuring individual timing preferences as potential moderators of social synchronization [12] [13] |
| Sleep Diaries | Consensus Sleep Diary, custom electronic diaries | Supplementary data for verifying actigraphy-derived sleep parameters and contextual information [12] |
| Statistical Packages for Dyadic Data | R with multilevel modeling packages, MLwiN, actor-partner interdependence model scripts | Analyzing non-independent data from social dyads or groups [12] |

Data Processing and Analytical Framework

The transformation of raw accelerometer data into meaningful behavioral indicators requires a sophisticated processing pipeline. The Modular Actigraphy Platform (MAP) represents a significant advancement in this domain, providing a cloud-based computational platform that processes high-resolution time series sensor data to derive sleep and physical activity metrics [11]. This platform integrates open-source scoring algorithms like GGIR and MIMS unit processing, enabling researchers to implement standardized processing workflows while maintaining flexibility for study-specific customization.

A critical consideration in actigraphy research is the selection of appropriate algorithms for sleep-wake scoring and physical activity analysis. Different algorithms have been validated for specific populations, and their performance can vary substantially, particularly for special populations like children or older adults with movement disorders [8] [16]. For social behavior research, the interdaily stability (IS) metric—which quantifies the regularity of rest-activity patterns across days—has proven particularly valuable as it reflects the consistency of social schedules and constraints [14]. Similarly, relative amplitude (RA) measures the distinction between active and rest periods, which often aligns with social routines.
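
The rhythm indicators discussed above can be computed directly from hourly-binned activity. The sketch below uses the commonly cited nonparametric definitions of IS, IV, and RA and assumes the input covers whole days at one value per hour. It is an illustration, not the implementation used by MAP or GGIR, and the function names are hypothetical.

```python
from statistics import mean


def hourly_profile(x, p=24):
    """Average 24-hour profile: mean activity at each hour across days."""
    if len(x) == 0 or len(x) % p != 0:
        raise ValueError("input must cover a whole number of days")
    return [mean(x[h::p]) for h in range(p)]


def interdaily_stability(x, p=24):
    """IS = N * sum_h (mean_h - mean)^2 / (p * sum_i (x_i - mean)^2)."""
    n, grand = len(x), mean(x)
    profile = hourly_profile(x, p)
    numerator = n * sum((m - grand) ** 2 for m in profile)
    denominator = p * sum((v - grand) ** 2 for v in x)
    return numerator / denominator


def intradaily_variability(x):
    """IV = N * sum_i (x_i - x_{i-1})^2 / ((N - 1) * sum_i (x_i - mean)^2)."""
    n, grand = len(x), mean(x)
    numerator = n * sum((x[i] - x[i - 1]) ** 2 for i in range(1, n))
    denominator = (n - 1) * sum((v - grand) ** 2 for v in x)
    return numerator / denominator


def window_means(profile, width):
    """Mean of every circular window of `width` consecutive hours."""
    p = len(profile)
    return [mean([profile[(s + k) % p] for k in range(width)]) for s in range(p)]


def relative_amplitude(x, p=24):
    """RA = (M10 - L5) / (M10 + L5), taken from the average daily profile."""
    profile = hourly_profile(x, p)
    m10 = max(window_means(profile, 10))  # most active 10 consecutive hours
    l5 = min(window_means(profile, 5))    # least active 5 consecutive hours
    if m10 + l5 == 0:
        raise ValueError("RA is undefined when there is no activity")
    return (m10 - l5) / (m10 + l5)


# Toy week: 8 inactive hours then 16 active hours, repeated identically.
day = [0] * 8 + [10] * 16
week = day * 7
print(interdaily_stability(week), intradaily_variability(week), relative_amplitude(week))
```

A perfectly repeated day yields the maximum IS, which is why irregular social schedules, which shift activity between days, lower the index.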

Implementation Considerations and Challenges

Successful implementation of actigraphy research for studying social behaviors requires careful attention to several methodological challenges. Device selection must balance data quality with participant burden, considering factors such as battery life, wearability, and form factor. Recent evidence suggests generally high adherence rates (81.6% pooled adherence) in primary school-aged children, though with substantial variability across studies [16]. Similar considerations apply to adult populations, where device comfort and usability significantly impact compliance.

The placement of actigraphy devices also warrants careful consideration. While wrist-worn devices have become standard for sleep monitoring, waist-worn devices may provide more accurate assessment of physical activity levels [13]. Researchers studying social behaviors must also establish clear protocols for handling missing data, as non-wear periods may not be random and could reflect socially significant behaviors (e.g., device removal for social events). Additionally, the monitoring duration must be sufficient to capture both typical patterns and variations—typically at least 7-14 days to account for weekly cycles in social routines [8] [10].

Statistical analysis of social actigraphy data presents unique challenges due to the non-independence of observations from socially connected individuals. Appropriate analytical approaches include multilevel modeling to account for the nested structure of data (days within individuals within dyads/groups), actor-partner interdependence models for dyadic data, and time-series approaches for assessing covariation and synchronization [12]. Furthermore, researchers must carefully consider how to control for potential confounders such as shared environments, parallel schedules, and selection effects in social relationships.

Actigraphy research into social behaviors is rapidly evolving, with several promising future directions. The integration of additional sensors—such as photoplethysmography for heart rate monitoring, ambient light sensors, and skin temperature monitors—will provide richer data streams to contextualize movement patterns and better understand the physiological correlates of social behaviors [15] [8]. Furthermore, the development of more sophisticated analytical approaches, including machine learning techniques for pattern recognition in large actigraphy datasets, will enable researchers to identify subtle social influences on behavior that may not be captured by traditional metrics [10].

The application of actigraphy in social behavior research also holds significant promise for clinical applications. Recent evidence that circadian rest-activity rhythms predict functional recovery in stroke rehabilitation patients [14] suggests that interventions targeting social rhythms may enhance recovery outcomes. Similarly, the finding that sleep patterns covary in close friend pairs [12] points to potential novel intervention approaches that target social networks rather than individuals for behavior change initiatives.

In conclusion, actigraphy provides a powerful methodology for investigating the social dimensions of human behavior. By objectively capturing daily rhythms of activity and rest in naturalistic settings, actigraphy data reveal how social relationships and constraints shape fundamental biological processes. The continued refinement of actigraphy technology and analytical approaches will further enhance our understanding of the complex interplay between social contexts and individual behaviors, with important implications for both basic research and applied interventions across diverse populations.

This application note synthesizes key research findings on the distinct and interconnected roles of physical activity (PA) and sleep quality as measurable components of social health. Leveraging advancements in actigraphy and digital phenotyping, we present a framework for quantifying these relationships in clinical and real-world settings. The data and protocols provided herein support researchers and drug development professionals in integrating objective behavioral measures into studies of social interaction, loneliness, and related therapeutic outcomes. Evidence from recent clinical studies, including research on autism spectrum disorder (ASD) and aging populations, demonstrates that actigraphy-derived measures of activity and sleep correlate significantly with caregiver-reported outcomes and self-reported loneliness [17] [2] [3]. This document provides structured data summaries, validated experimental protocols, and analytical toolkits to facilitate the adoption of these digital biomarkers in future research.

Social health, encompassing an individual's ability to form relationships and avoid detrimental loneliness, is increasingly recognized as a critical component of overall well-being. Its decline is linked to adverse outcomes, including cognitive impairment and increased mortality risk [2] [3]. Traditional assessment of social health relies heavily on subjective self-reports, which are susceptible to recall and social desirability biases.

The emergence of digital health technologies (DHTs), particularly actigraphy, marks a paradigm shift, enabling continuous, objective, and non-invasive monitoring of related behaviors. Physical activity and sleep quality are two such behaviors that both influence and are influenced by social health [17] [18] [2]. This note details how actigraphy data can be used to:

- Establish physical activity as a biomarker for social engagement and a protective factor against loneliness.

- Identify sleep quality as a critical factor correlated with emotional regulation and perceived loneliness.

- Develop targeted interventions and measure treatment efficacy in clinical trials for neuropsychiatric and neurodegenerative disorders.

Key Quantitative Findings

The following tables consolidate primary quantitative evidence from recent studies, highlighting the distinct associations of physical activity and sleep with various aspects of social health.

Table 1: Actigraphy-Based Associations in Autism Spectrum Disorder (ASD) Populations [17]

| Actigraphy Measure | Correlated Clinical Outcome (Caregiver-Reported) | Statistical Significance & Notes |
|---|---|---|
| Daytime Physical Activity | Self-Regulation (ABI Subscale) | Significant correlation (P < 0.05) |
| Sleep Disturbance (Activity during sleep period) | Sleep Quality (JAKE Daily Tracker) | Significant correlation (P < 0.05) |
| Sleep Disturbance | Baseline difference between ASD and Typically Developing (TD) populations | Significant difference (P < 0.05) |
| Daytime Physical Activity & Sleep Metrics | Anxiety (CASI-Anxiety), Social Responsiveness (SRS-2), Repetitive Behaviors (RBS-R) | Potentially relevant correlations reported |

Table 2: Distinct Links to Social Interaction and Loneliness in Predementia and General Populations [2] [3]

| Social Health Metric | Key Actigraphy/Behavioral Correlate | Model Performance / Association |
|---|---|---|
| Low Social Interaction Frequency | Reduced Physical Movement | Key identifying factor in ML models (Random Forest Accuracy: 0.849) [3] |
| High Loneliness Levels | Poor Sleep Quality | Key identifying factor in ML models (GBM Accuracy: 0.838) [3] |
| Momentary Loneliness | Increased Social Media & Instant Messenger Usage | B = 0.53, p = 0.001 (within-person) [2] |
| Daily & Momentary Loneliness | Increased Instant Messenger Usage | B = 2.83, p = 0.018 (daily); B = 2.95, p = 0.017 (momentary) [2] |
| Loneliness (Protective Factor) | Greater Physical Activity | Negative association observed [2] |

Table 3: The Interplay of Physical Activity and Sleep Quality in Mental and Social Health [18] [19] [20]

| Study Focus | Key Finding on Physical Activity (PA) | Key Finding on Sleep Quality |
|---|---|---|
| Older Adults during COVID-19 | Reduced PA levels negatively associated with sleep quality [18] [21] | Sleep quality associated with PA; PA recommended to mitigate isolation's negative effects [18] |
| Chinese College Students (Post-Pandemic) | Improvement post-pandemic not significantly associated with mental health after adjustment for confounders [19] | Improved sleep quality significantly associated with reductions in depression, anxiety, and stress [19] |
| College Students (Chain Mediation) | Reduces mobile phone dependence, indirectly improving sleep duration and quality [20] | Directly improved by PA, and indirectly via reduction of mobile phone dependence [20] |

Experimental Protocols for Actigraphy-Based Social Health Monitoring

This section outlines standardized protocols for employing actigraphy in studies investigating the physical activity-sleep-social health axis.

Protocol 1: Multi-Day Actigraphy for Sleep-Wake and Activity Rhythms

Application: Objective characterization of sleep quality and physical activity patterns in clinical and observational studies [17] [22].

Materials & Equipment:

- Actigraphy Device: Wrist-worn, tri-axial accelerometer (e.g., ActiGraph GT9X Link, GENEActiv).

- Data Management Platform: Vendor-specific software for data aggregation and initial processing (e.g., ActiGraph CentrePoint).

- Analysis Software: Open-source or commercial tools for sleep and activity algorithm application (e.g., R, Python, vendor-specific suites).

Procedure:

- Device Initialization: Configure devices with appropriate sampling frequencies (e.g., 30-100 Hz). Synchronize device clocks to a standard time.

- Participant Instruction: Instruct participants to wear the device on the non-dominant wrist continuously for a minimum of 7 days, removing only for charging or water-based activities. Encourage use of an event marker or sleep diary to log "lights out" and "get up" times.

- Data Collection: A minimum of 5 valid days (including weekdays and weekends) is typically required for reliable analysis.

- Data Preprocessing: Download raw acceleration data and calculate activity counts per epoch. Apply validated algorithms (e.g., Cole-Kripke) to the epoch-level counts to identify sleep periods.

- Feature Extraction: Calculate key metrics for each 24-hour period. Core metrics include:

  - Sleep Metrics: Sleep Onset Time, Wake Time, Total Sleep Time (TST), Sleep Efficiency (SE%), Wake After Sleep Onset (WASO).

  - Activity Metrics: Average daily activity counts, time spent in sedentary, light, and moderate-to-vigorous activity.

- Data Aggregation: Compute weekly averages for each metric to account for day-to-day variability.
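The sleep metrics listed in the feature-extraction step can be illustrated with a minimal sketch. It assumes the sleep period has already been scored into one label per epoch (`"S"` for sleep, `"W"` for wake) by an algorithm such as Cole-Kripke; the function name `sleep_metrics`, the label encoding, and the example night are hypothetical and not part of any vendor software.

```python
def sleep_metrics(epochs, epoch_minutes=1.0):
    """Summarize one in-bed period scored as 'S' (sleep) or 'W' (wake) per epoch.

    Assumption: `epochs` covers the interval from "lights out" to "get up".
    """
    if "S" not in epochs:
        return {"tst_min": 0.0, "se_pct": 0.0, "waso_min": 0.0}
    first_sleep = epochs.index("S")
    last_sleep = len(epochs) - 1 - epochs[::-1].index("S")
    # Total Sleep Time: all epochs scored as sleep.
    tst = epochs.count("S") * epoch_minutes
    # Sleep Efficiency: sleep time as a percentage of time in bed.
    time_in_bed = len(epochs) * epoch_minutes
    # WASO: wake epochs between the first and last sleep epoch.
    waso = epochs[first_sleep:last_sleep + 1].count("W") * epoch_minutes
    return {
        "tst_min": tst,
        "se_pct": 100.0 * tst / time_in_bed,
        "waso_min": waso,
    }


# Hypothetical 16-epoch "night" used only to show the calculation.
night = list("WWWSSSSWWSSSSSSW")
metrics = sleep_metrics(night)
print(metrics)
```

Weekly averages, as described in the aggregation step, would then be taken over the per-night dictionaries for all valid days.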

Protocol 2: Integrated Digital Phenotyping for Momentary Loneliness

Application: High-resolution assessment of real-time behavioral predictors (PA, sleep, smartphone use) and subjective loneliness states [2].

Materials & Equipment:

- Actigraphy Device: As in Protocol 1.

- Smartphone Application: For Ecological Momentary Assessment (EMA) and passive mobile sensing (e.g., movisensXS).

- Cloud Server: For secure, real-time data transfer from the smartphone app.

Procedure:

- EMA Configuration: Program the smartphone app to deliver brief, randomized prompts (e.g., 8 times per day for 7 days). Prompts should assess momentary loneliness (e.g., "During the last hour, to which extent did you feel lonely?" on a VAS 0-100) and recent social activity.

- Passive Sensing: Enable passive collection of smartphone metadata, including usage duration of social media and instant messenger applications.

- Actigraphy Synchronization: Ensure actigraphy data collection is synchronized with the EMA period.

- Data Integration: Align EMA responses and passive mobile sensing data with actigraphy-derived features (e.g., physical activity in the hour preceding an EMA prompt, sleep efficiency from the previous night).

- Statistical Analysis: Employ multi-level modeling to disentangle within-person from between-person effects. Temporal dynamics can be further explored via network analysis.
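A common way to separate within-person from between-person effects before fitting a multilevel model is person-mean centering. The sketch below shows that decomposition only; it is an illustration under assumed field names (`pid`, `activity`), not the analysis code of the cited study, and the model fitting itself would be done in a dedicated statistics package.

```python
from collections import defaultdict


def decompose(records, key):
    """Split a predictor into a between-person part (the person's mean)
    and a within-person part (the deviation from that mean)."""
    by_person = defaultdict(list)
    for rec in records:
        by_person[rec["pid"]].append(rec[key])
    means = {pid: sum(vals) / len(vals) for pid, vals in by_person.items()}

    out = []
    for rec in records:
        between = means[rec["pid"]]
        out.append({
            **rec,
            f"{key}_between": between,
            f"{key}_within": rec[key] - between,
        })
    return out


# Hypothetical EMA-linked activity values for two participants.
records = [
    {"pid": "A", "activity": 10.0},
    {"pid": "A", "activity": 30.0},
    {"pid": "B", "activity": 50.0},
    {"pid": "B", "activity": 70.0},
]
decomposed = decompose(records, "activity")
for row in decomposed:
    print(row)
```

Entering both derived columns as predictors lets the within-person coefficient describe momentary fluctuations while the between-person coefficient describes stable differences across participants.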

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials and Digital Tools for Actigraphy Research

| Item / Solution | Function / Application | Example Products / Models |

|---|---|---|

| Research-Grade Actigraph | The primary sensor for continuous, objective measurement of movement and sleep-wake patterns. | ActiGraph GT9X Link, GENEActiv, ActiGraph LEAP [17] [2] [8] |

| Consumer Wearables (for feasibility studies) | Lower-burden option for large-scale or remote studies; wellness tracking. | Oura Ring, Apple Watch, Samsung Galaxy Watch, Fitbit [8] |

| EMA & Mobile Sensing Platform | Software for real-time subjective data collection (EMA) and passive smartphone data acquisition. | movisensXS, Siuvo Intelligent Psychological Assessment Platform [2] |

| Data Processing & Analysis Suite | Software for applying sleep/activity algorithms, statistical analysis, and machine learning. | ActiLife, GGIR (R package), custom Python/R scripts [3] [22] |

| Validated Outcome Measures (for correlation) | Standardized clinical scales to validate and contextualize actigraphy findings. | Autism Behavior Inventory (ABI), UCLA Loneliness Scale, Pittsburgh Sleep Quality Index (PSQI) [17] [2] |

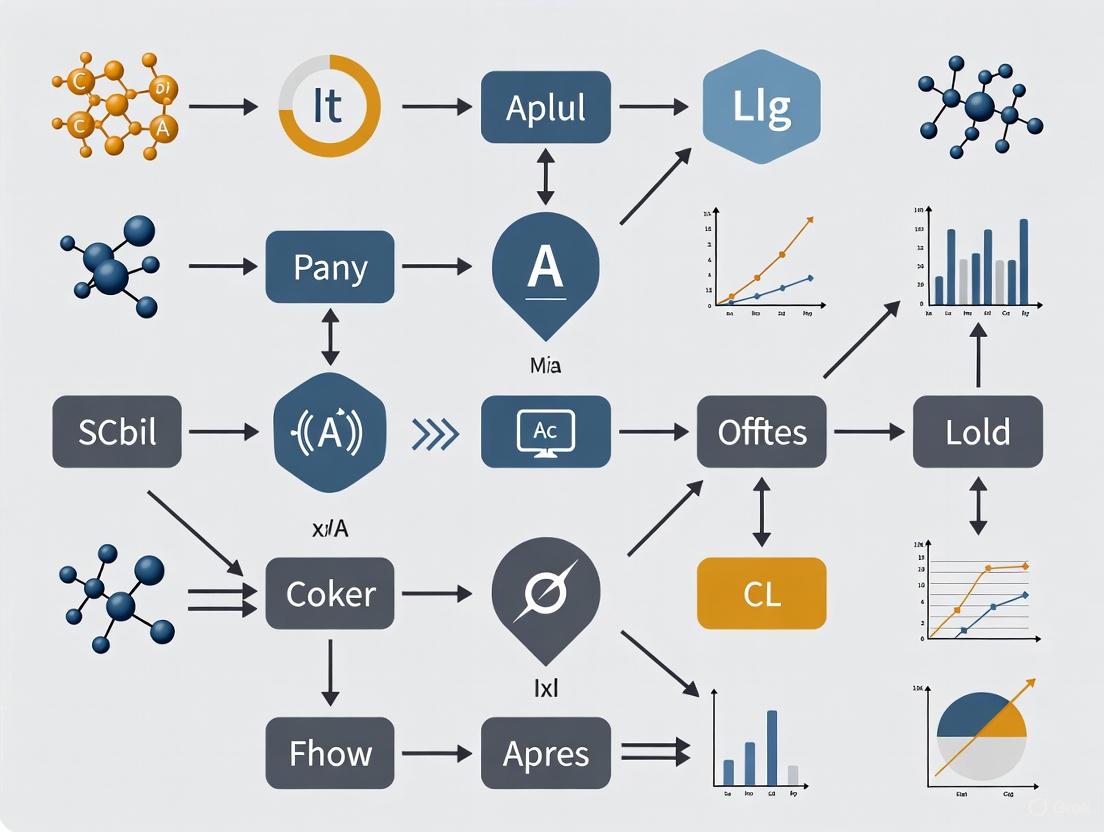

Analytical Framework and Pathway Visualization

The relationship between physical activity, sleep, and social health is not linear but operates through a dynamic system of mediating and moderating factors. The following diagram synthesizes insights from the cited research to map these complex interactions.

Pathway Interpretation:

- Distinct Primary Pathways: Machine learning models identify physical activity as the primary factor for predicting objective social interaction frequency, likely because it facilitates engagement in social activities and environments [3]. Conversely, sleep quality is the primary predictor for subjective loneliness levels, underscoring its role in emotional regulation and perception of social connectedness [3].

- Interconnecting Loops: These pillars are interconnected. Physical activity improves sleep quality, and better sleep provides energy for activity. Both contribute to a positive mental state, which further promotes social engagement and reduces loneliness [20] [23].

- The Role of Digital Behavior: Smartphone and app usage can act as a negative moderator. High usage, particularly of social media and instant messengers, is associated with increased loneliness and can displace both sleep and in-person socializing, creating a vicious cycle [2] [20]. Physical activity can help break this cycle by reducing phone dependence.

The escalating global prevalence of dementia represents one of the most significant public health challenges of our time: annual costs exceed $1.3 trillion, and the condition is projected to affect over 150 million people by 2050 [3]. Within this crisis lies a critical, often overlooked window of opportunity: the predementia stages of Subjective Cognitive Decline (SCD) and Mild Cognitive Impairment (MCI). Recent research reveals an alarming detection gap, with approximately 75% of cognitive impairment cases in primary care settings remaining undiagnosed and African American patients more than twice as likely to go undiagnosed [24]. This diagnostic delay has profound consequences, including medication errors, increased fall risk, and limited access to supportive care.

Digital health technologies, particularly actigraphy and ecological momentary assessment (EMA), are emerging as transformative tools for identifying at-risk individuals and monitoring disease progression. These technologies enable continuous, objective data collection in naturalistic environments, capturing subtle behavioral markers that often precede clinical diagnosis [25] [3] [2]. By focusing on vulnerable populations in these predementia stages, researchers and clinicians can target a period where interventions may still slow cognitive decline and preserve functional independence [3].

Quantitative Evidence: Actigraphy and Social Interaction Correlates in Predementia

Research has established significant correlations between digitally-derived biomarkers and cognitive and social health outcomes in vulnerable older adults. The tables below summarize key quantitative findings from recent studies.

Table 1: Machine Learning Model Performance for Predicting Social Isolation in Predementia Populations

| Prediction Target | Best Performing Model | Accuracy | Precision | Specificity | AUC-ROC |

|---|---|---|---|---|---|

| Low Social Interaction Frequency | Random Forest | 0.849 | 0.837 | 0.857 | 0.935 |

| High Loneliness Levels | Gradient Boosting Machine | 0.838 | 0.871 | 0.784 | 0.887 |

Source: Adapted from Hong et al., 2025 [3]
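For readers less familiar with how the threshold-dependent metrics in the table are defined, the following sketch computes accuracy, precision, and specificity from confusion-matrix counts. The counts are hypothetical and are not taken from Hong et al. [3]; the function name is illustrative.

```python
def classification_metrics(tp, fp, tn, fn):
    """Compute threshold-dependent metrics from confusion-matrix counts."""
    return {
        # Share of all cases classified correctly.
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
        # Share of predicted positives that are true positives.
        "precision": tp / (tp + fp),
        # Share of true negatives that are correctly identified.
        "specificity": tn / (tn + fp),
    }


# Hypothetical counts for a binary "low social interaction" classifier.
example = classification_metrics(tp=40, fp=10, tn=45, fn=5)
print(example)
```

AUC-ROC, by contrast, is threshold-independent and is computed from the ranked predicted probabilities rather than from a single confusion matrix.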

Table 2: Key Digital Phenotyping Predictors of Loneliness and Social Interaction

| Digital Marker | Association with Loneliness | Temporal Relationship | Effect Size / Statistical Significance |

|---|---|---|---|

| Instant Messenger Use | Positive (Risk Factor) | Both momentary & daily | Momentary: B=2.95, p=0.017; Daily: B=2.83, p=0.018 [2] |

| Social Media Use | Positive (Risk Factor) | Momentary (within-person) | B=0.53, p=0.001 [2] |

| Physical Activity (Actigraphy) | Negative (Protective Factor) | Associated with increased in-person interaction | Identified via network analysis [2] |

| Sleep Quality (Actigraphy) | Negative (Protective Factor) | Key factor for loneliness levels | Key factor identified in ML models [3] |

| Physical Movement (Actigraphy) | Negative (Protective Factor) | Key factor for social interaction frequency | Key factor identified in ML models [3] |

Experimental Protocols for Digital Monitoring in Predementia Research

Protocol 1: Comprehensive Digital Phenotyping for Loneliness and Social Interaction

Objective: To identify objective, real-time risk and protective factors for momentary and daily loneliness using smartphone sensing and wearable actigraphy in community-dwelling older adults with SCD and MCI [3] [2].

Participant Recruitment:

- Target Population: Adults aged 65+ with SCD or MCI.

- SCD Criteria: Self-reported sustained memory decline, no prior MCI/dementia diagnosis, and a score of ≥24 on the Korean Mini-Mental State Examination (K-MMSE-2) if recruited from community centers [3].

- MCI Criteria: Clinical diagnosis by a physician and a K-MMSE-2 score of ≥18 [3].

- Exclusion Criteria: Illiteracy, diagnosis of neurological (e.g., stroke, Parkinson's) or psychiatric disorders (e.g., schizophrenia), or treatment for critical illnesses [3].

Materials and Equipment:

- Actigraphy Device: GENEActiv or ActiGraph GT9X Link wrist-worn devices.

- Smartphone Application: For EMA and passive sensing (e.g., movisensXS).

- Data Processing Platform: Cloud-based computational resources for handling high-resolution time-series data.

Procedure:

- Baseline Assessment: Conduct clinical and cognitive assessments to confirm SCD/MCI status.

- Device Provision: Instruct participants to wear the actigraphy device on the non-dominant wrist 24 hours per day (except during charging/bathing) and install the EMA app on their smartphone.

- Ecological Momentary Assessment: The app delivers 8 randomized prompts daily between 8:00 AM and 10:00 PM for 7 consecutive days. Each prompt assesses momentary loneliness (e.g., on a 0-100 visual analogue scale) and recent social activity.

- Daily Evening Assessment: Administer the 3-item UCLA Loneliness Scale once per evening [2].

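- Passive Data Collection: Passively collect smartphone metadata, including usage duration of social media and instant messenger applications, alongside continuous actigraphy recording.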

- Data Integration and Analysis: Process data using open-source algorithms (e.g., GGIR for actigraphy) and employ multilevel modeling and temporal network analysis to examine within-person and between-person effects [11] [2].
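The integration step requires linking each EMA prompt to the actigraphy recorded just before it. A minimal sketch of that alignment follows, assuming epoch-level activity counts stored as `(timestamp, count)` pairs and a one-hour look-back window; the function name, data layout, and window length are assumptions for illustration.

```python
from datetime import datetime, timedelta


def activity_before_prompt(prompt_time, epochs, window=timedelta(hours=1)):
    """Mean activity count over epochs in [prompt_time - window, prompt_time).

    Returns None when no actigraphy epochs fall inside the window
    (for example, because the device was not worn).
    """
    start = prompt_time - window
    counts = [count for ts, count in epochs if start <= ts < prompt_time]
    return sum(counts) / len(counts) if counts else None


# Hypothetical 1-minute epochs from 09:00 to 10:59 with increasing counts.
base = datetime(2024, 1, 1, 9, 0)
epochs = [(base + timedelta(minutes=i), float(i)) for i in range(120)]

prompt = datetime(2024, 1, 1, 10, 30)
mean_activity = activity_before_prompt(prompt, epochs)
print(mean_activity)
```

Returning `None` for empty windows keeps missing actigraphy explicit, so that those prompts can be excluded or imputed deliberately rather than silently treated as zero activity.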

Protocol 2: Longitudinal Actigraphy Monitoring for Cognitive Decline Biomarker Discovery

Objective: To establish a standardized workflow for long-term longitudinal actigraphy data processing to identify digital biomarkers predictive of cognitive decline in high-risk populations [26].

Study Design:

- Type: Longitudinal observational study with a target duration of 12 months.

- Population: Individuals with a diagnosis of Major Depressive Disorder (MDD) in remission, as they represent a high-risk group for cognitive decline and dementia [26].

Device and Data Acquisition:

- Device: ActiGraph GT9X-BT Link worn on the non-dominant wrist.

- Data Collection: Raw data collected at 30 Hz, with continuous 24-hour wear instructed.

- Data Upload: Data is uploaded to a cloud-based system (e.g., ActiGraph's CentrePoint) during periodic in-person visits every 8 weeks [26].

Data Pre-processing Pipeline:

- Data Trimming: Remove data collected before the first and after the last device initialization.

- Non-Wear Detection: Implement a robust algorithm (e.g., a "majority algorithm" combining the Choi, Troiano, and van Hees methods) to identify periods when the device was not worn. This outperforms relying solely on built-in capacitive sensors [26].

- Sleep/Wake Scoring: Apply validated algorithms (e.g., Cole-Kripke, Tudor-Locke) to epoch data (e.g., 60-second epochs) to identify sleep intervals [26].

- Valid Day Criteria: Define a valid monitoring day based on a minimum wear time (e.g., >10 hours of wear during waking periods). Apply sensitivity analyses to determine the impact of this threshold on outcomes [26].

- Variable Extraction: Calculate key actigraphy-derived variables on a weekly or bi-weekly basis:

  - Sleep Parameters: Total sleep time, sleep maintenance efficiency, wake after sleep onset (WASO).

  - Activity Parameters: Total activity counts, physical activity energy expenditure, moderate-to-vigorous physical activity (MVPA).

  - Circadian Rhythms: Cosinor analysis to estimate rhythm amplitude, acrophase, and mesor.
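Cosinor analysis fits a single cosine curve to the activity time series. One standard formulation, sketched here under the assumption of a fixed 24-hour period, linearizes the model as y = M + b·cos(ωt) + g·sin(ωt) and solves it by ordinary least squares; the mesor is M, the amplitude is √(b² + g²), and the acrophase is reported as the clock hour of the fitted peak. The function names and the synthetic data are illustrative only.

```python
import math


def _solve3(a, b):
    """Gaussian elimination with partial pivoting for a 3x3 linear system."""
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    n = 3
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_cosinor(hours, values, period=24.0):
    """Least-squares single-component cosinor fit with a fixed period."""
    omega = 2 * math.pi / period
    rows = [(1.0, math.cos(omega * t), math.sin(omega * t)) for t in hours]
    # Normal equations (X^T X) beta = X^T y for the three linear parameters.
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    xty = [sum(r[i] * y for r, y in zip(rows, values)) for i in range(3)]
    mesor, b, g = _solve3(xtx, xty)
    return {
        "mesor": mesor,
        "amplitude": math.hypot(b, g),
        # Clock hour at which the fitted curve peaks.
        "acrophase_hour": (math.atan2(g, b) / omega) % period,
    }


# Synthetic two-day series: mesor 100, amplitude 40, peak at 15:00.
hours = [h / 2 for h in range(96)]
values = [100 + 40 * math.cos(2 * math.pi * (t - 15) / 24) for t in hours]
fit = fit_cosinor(hours, values)
print(fit)
```

The sketch does not handle degenerate inputs (for example, fewer than three distinct time points), and real activity data usually call for non-wear exclusion before fitting.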

Compliance Monitoring: Actively monitor wear compliance and address technical issues proactively to mitigate the natural decline in compliance observed over long-term studies [26].

Visualization of Research Workflows

Digital Phenotyping for Social Health Assessment

Longitudinal Actigraphy Data Processing

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Materials and Computational Tools for Digital Monitoring Research

| Item Name | Type | Specifications / Version | Primary Function in Research |

|---|---|---|---|

| ActiGraph GT9X Link | Wearable Device | 3-axis accelerometer, 30-100Hz sampling, capacitive wear sensor [25] [26] | Collects raw tri-axial acceleration data for activity and sleep monitoring in free-living environments. |

| GENEActiv | Wearable Device | 3-axis accelerometer, ±8g dynamic range, 10-100Hz sampling [2] | An alternative research-grade device for continuous wrist-worn actigraphy data collection. |

| GGIR | Open-Source Software | R package [11] [26] | Provides complete end-to-end processing for raw accelerometer data, including non-wear detection, sleep analysis, and physical activity estimation. |

| Modular Actigraphy Platform (MAP) | Computational Platform | Cloud-based, v2.0+ (integrates GGIR & MIMS) [11] | A standardized, scalable platform for processing high-resolution sensor data, enhancing reproducibility and collaboration. |

| movisensXS | Smartphone Application | EMA platform [2] | Deploys ecological momentary assessments, collects self-reported loneliness/social data, and passively gathers smartphone metadata. |

| Monitor Independent Movement Summary (MIMS) | Algorithm | Standardized non-proprietary method [11] | Pre-processes raw accelerometer data into a device-independent unit, enabling cross-device and cross-study comparisons of physical activity. |

| UCLA Loneliness Scale | Assessment Tool | 3-item (ULS-3) and 8-item (ULS-8) versions [2] | Validated self-report instrument for measuring subjective feelings of loneliness and social isolation. |

| Korean Mini-Mental State Examination (K-MMSE-2) | Cognitive Assessment | Standardized cut-off scores (≥24 for SCD, ≥18 for MCI) [3] | Screens for cognitive impairment and establishes participant eligibility in predementia studies. |

The convergence of actigraphy, EMA, and advanced analytics provides an unprecedented opportunity to transform the identification and monitoring of vulnerable populations in the predementia stage. The protocols and tools outlined herein offer a roadmap for generating high-quality, objective data on behavioral markers like social interaction and physical activity, which are critically linked to cognitive health [3] [2].

Future research must prioritize the development of equitable and accessible digital assessment tools to address the stark disparities in early diagnosis, particularly among underserved populations [24]. As these digital phenotyping approaches mature, they hold the potential to move the field toward a model of preemptive care, where lifestyle and therapeutic interventions can be deployed during the critical window of SCD and MCI to ultimately alter the trajectory of cognitive decline.

Actigraphy data, when processed through advanced machine learning pipelines, provides a powerful foundation for uncovering subtle behavioral phenotypes linked to mental health, neurodegenerative conditions, and social functioning. This protocol details standardized methodologies for collecting high-resolution activity data, engineering features related to sleep, physical activity, and circadian rhythms, and applying interpretable machine learning models to identify digital biomarkers. Framed within social interaction monitoring research, these application notes demonstrate how passive actigraphy phenotyping can predict depressive relapse, preterm birth, loneliness, and autism spectrum disorder symptoms, offering clinical researchers a validated framework for objective behavioral assessment in both observational studies and clinical trials.

Actigraphy, the practice of monitoring human rest/activity cycles using wrist-worn accelerometers, has evolved from measuring basic activity counts to enabling sophisticated digital phenotyping of complex behavioral patterns. The emergence of open-source processing platforms and machine learning algorithms has transformed actigraphy from a simple motion-tracking tool into a rich data source for identifying hidden behavioral phenotypes—multidimensional behavioral signatures that correlate with clinical outcomes. Within social interaction monitoring research, these phenotypes provide objective, continuous measures of social engagement, sleep quality, and circadian stability that are less susceptible to recall bias than self-reported measures.

Longitudinal actigraphy data presents unique computational challenges, including substantial missing data (increasing from approximately 5% in the first week to 24% after 12 months), non-wear time misclassification, and the need for standardized processing pipelines to ensure reproducibility across studies [27]. The protocols outlined below address these challenges through validated quality control measures, open-source computational frameworks, and interpretable machine learning approaches designed to extract clinically meaningful insights from high-resolution sensor data.

Core Concepts and Quantitative Evidence

Key Behavioral Domains Captured by Actigraphy

Actigraphy data enables quantification of several behavioral domains that form the basis for machine learning-derived phenotypes:

- Sleep-Wake Patterns: Total sleep time, sleep efficiency, wake after sleep onset (WASO), sleep latency, and sleep fragmentation index provide robust measures of sleep quality and continuity [28].

- Circadian Rhythms: Timing and regularity of sleep onset, sleep offset, and activity rhythms serve as markers of circadian stability, with increased variability associated with poorer health outcomes [29].

- Physical Activity: Activity counts, intensity distributions, and sedentary behavior patterns offer objective measures of daily activity that correlate with mental and physical health status [30].

- Social Engagement: While not directly measured, actigraphy-derived patterns (e.g., timing of daily activity, sleep-wake regularity) correlate with social functioning and can be combined with mobile sensing data for comprehensive social interaction monitoring [31].

Validated Actigraphy Features Predictive of Clinical Outcomes

Table 1: Actigraphy-Derived Features with Demonstrated Predictive Value for Health Outcomes

| Clinical Domain | Most Predictive Actigraphy Features | Algorithm Performance | Citation |

|---|---|---|---|

| Preterm Birth Prediction | Day-to-day variability in sleep start time, Variance in sleep cycle duration, Sleep start time consistency | AUROC: 0.70-0.85 (actigraphy + clinical features) | [29] |

| Depression Relapse | Sleep maintenance efficiency, Wake after sleep onset, Nighttime activity levels | Sensitivity analysis shows substantial impact on MADRS prediction | [27] |

| Loneliness | Physical activity levels, Sleep efficiency, Activity rhythm regularity | Reported coefficients are for smartphone use rather than actigraphy features. Momentary loneliness: B = 2.95, p = 0.017 (instant messaging); B = 0.53, p = 0.001 (social media) | [31] |

| Autism Spectrum Disorder | Sleep disturbance metrics, Daytime physical activity patterns, Stereotypical movement signatures | Significant correlations with caregiver-reported outcomes (p < 0.05) | [17] |

Comparative Performance of Wearable Devices for Feature Extraction

Table 2: Device-Specific Feature Utility in Digital Phenotyping Studies

| Device Type | Most Predictive Features | Coverage (Proportion of Studies Using) | Importance (Proportion Identifying as Predictive) |

|---|---|---|---|

| Actiwatch | Accelerometer data, Activity counts | 85% | 92% |

| Smart Bands | Heart rate, Steps, Sleep parameters, Phone usage | 78% | 88% |

| Smartwatches | Sleep metrics, Heart rate, GPS | 72% | 83% |

| Research Actigraphs (GT9X, GENEActiv) | Raw accelerometry, Sleep-wake patterns, Non-wear time | 91% | 95% |

Experimental Protocols

Protocol 1: Longitudinal Actigraphy Data Collection and Pre-processing

Purpose: To collect high-quality, raw accelerometry data suitable for machine learning applications while addressing challenges of long-term wear compliance and missing data.

Materials:

- ActiGraph GT9X Link or GENEActiv devices

- Charging docks and cables

- Cloud-based data storage system (e.g., CentrePoint Study Admin System)

- Computational resources for data processing

Procedure:

- Device Initialization: Configure devices to collect raw tri-axial accelerometer data at 30-50 Hz sampling frequency on the non-dominant wrist [27].

- Participant Instruction: Instruct participants to wear devices 24 hours/day for study duration, removing only for charging (1-2 hours weekly) and water-based activities. Provide written instructions and charging reminders.

- Data Upload: Schedule regular data uploads (e.g., every 8 weeks) during study visits or implement remote cloud-based upload capabilities.

- Data Pre-processing:

  - Convert proprietary file formats (.gt3x, .bin) to standardized .csv format using packages like GGIRread and read.gt3x [11].

  - Apply non-wear detection algorithms (e.g., Choi, Troiano, van Hees) to identify and flag non-wear periods [27].

  - Implement sleep-wake scoring using validated algorithms (Cole-Kripke, Tudor-Locke) applied to 60-second epochs [27].

- Quality Control:

  - Calculate weekly wear time compliance; exclude days with <10 hours of waking wear time [27].

  - Apply visual quality checks to verify algorithm performance, especially around sleep-wake transitions.

  - Document missing data patterns and implement multiple imputation techniques if needed.

Troubleshooting:

- For declining compliance over time (approximately 20% reduction over 12 months), implement regular reminder systems and monitor wear time remotely when possible [27].

- For capacitive wear sensor inaccuracies (sensitivity 93%, specificity 49%), use algorithmic non-wear detection instead of built-in sensors [27].
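The non-wear and quality-control steps above can be sketched as follows. The example assumes that per-epoch non-wear flags from three detectors (for instance, implementations of the Choi, Troiano, and van Hees methods) have already been computed elsewhere, and it combines them with a simple "at least two of three" vote. That voting rule is one plausible reading of a majority approach, not a confirmed description of the published algorithm, and all function names and the data layout are hypothetical.

```python
def majority_nonwear(choi, troiano, van_hees):
    """Flag an epoch as non-wear when at least two of three detectors agree.

    Each argument is a list of booleans (True = non-wear), one per epoch.
    """
    return [(a + b + c) >= 2 for a, b, c in zip(choi, troiano, van_hees)]


def valid_days(days, min_waking_wear_hours=10.0, epoch_minutes=1.0):
    """Return the dates whose waking wear time meets the threshold.

    `days` maps a date label to a list of (is_awake, is_nonwear) tuples,
    one tuple per epoch.
    """
    keep = []
    for date, epochs in days.items():
        wear_min = sum(
            epoch_minutes for awake, nonwear in epochs if awake and not nonwear
        )
        if wear_min >= min_waking_wear_hours * 60:
            keep.append(date)
    return keep


# Hypothetical detector outputs for four epochs.
flags = majority_nonwear(
    [True, True, False, False],
    [True, False, False, True],
    [False, True, False, False],
)

# Hypothetical days: 11 h of waking wear versus about 8.3 h of waking wear.
days = {
    "2024-01-01": [(True, False)] * 660 + [(False, False)] * 780,
    "2024-01-02": [(True, False)] * 500 + [(True, True)] * 200 + [(False, False)] * 740,
}
valid = valid_days(days)
print(flags, valid)
```

Because the 10-hour threshold is a parameter, the same function can be rerun with alternative values to check how sensitive downstream results are to the valid-day definition.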

Protocol 2: Feature Engineering for Behavioral Phenotyping

Purpose: To transform raw accelerometry data into interpretable features capturing sleep, activity, and circadian rhythm domains for machine learning applications.

Materials:

- Processed actigraphy data with scored sleep-wake periods

- Computational environment (R, Python) with appropriate packages (GGIR, MIMS)

- High-performance computing resources for large datasets

Procedure:

- Sleep Feature Extraction:

  - Calculate standard parameters: Total Sleep Time (TST), Sleep Efficiency (SE), Sleep Latency (SL), Wake After Sleep Onset (WASO), Number of Awakenings (NWAK) [28].

  - Derive advanced metrics: Sleep Fragmentation Index (SFX), Brief Wake Ratio (BWR), Short Burst Inactivity Index (SBIX) [28].

  - Compute intraindividual variability metrics: standard deviation of TST and SE across monitoring days.

- Circadian Rhythm Feature Extraction:

  - Calculate sleep mid-point and social jet lag (difference between weeknight and weekend sleep mid-points) [29].

  - Compute day-to-day variability in sleep start and sleep end times using standard deviation across valid days [29].

  - Derive cosinor analysis parameters: acrophase, amplitude, and mesor of activity rhythms.

- Physical Activity Feature Extraction:

  - Calculate average movement counts during waking periods.

  - Classify activity intensity levels (sedentary, light, moderate, vigorous) using validated cut-points [30].

  - Compute sedentary bout patterns and temporal distribution of activity throughout waking hours.

- Feature Aggregation:

  - Aggregate features into weekly averages for longitudinal analysis.

  - Compute both mean values and intraindividual variability metrics (standard deviation) for all features.
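Two of the circadian features above, day-to-day variability in sleep start time and social jet lag, can be sketched in a few lines. The example assumes each night is summarized by clock-hour sleep onset and offset plus a weekend flag, and it re-expresses times as hours since noon so that onsets on either side of midnight stay numerically adjacent. The field names and example nights are hypothetical.

```python
from statistics import mean, stdev


def clock_to_offset(hour):
    """Map a clock hour (0-24) to hours since noon, keeping times around
    midnight contiguous (23:00 -> 11.0, 01:00 -> 13.0)."""
    return (hour - 12.0) % 24.0


def circadian_features(nights):
    """`nights` is a list of dicts with 'onset' and 'offset' clock hours
    and an 'is_weekend' flag. Requires at least two nights overall and at
    least one weeknight and one weekend night."""
    onsets = [clock_to_offset(n["onset"]) for n in nights]
    midpoints = []
    for n in nights:
        duration = (n["offset"] - n["onset"]) % 24.0
        midpoints.append(clock_to_offset(n["onset"]) + duration / 2.0)
    week = [m for m, n in zip(midpoints, nights) if not n["is_weekend"]]
    weekend = [m for m, n in zip(midpoints, nights) if n["is_weekend"]]
    return {
        # Day-to-day variability in sleep start time (sample SD, in hours).
        "onset_sd_h": stdev(onsets),
        # Social jet lag: weekend minus weeknight mean sleep mid-point.
        "social_jet_lag_h": mean(weekend) - mean(week),
    }


# Hypothetical four-night record.
nights = [
    {"onset": 23.0, "offset": 7.0, "is_weekend": False},
    {"onset": 23.5, "offset": 7.0, "is_weekend": False},
    {"onset": 1.0, "offset": 10.0, "is_weekend": True},
    {"onset": 0.5, "offset": 9.5, "is_weekend": True},
]
features = circadian_features(nights)
print(features)
```

The noon-anchored representation is a simplification that works for typical nocturnal sleepers; shift workers or highly irregular sleepers would need circular statistics instead.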

Validation:

- Compare actigraphy-derived sleep parameters with daily sleep diaries when available [30].

- Validate feature distributions against known population norms for the target population.

- Test feature quality by examining correlations with clinical outcomes of interest.

Protocol 3: Machine Learning Model Development and Interpretation

Purpose: To develop interpretable machine learning models for identifying behavioral phenotypes associated with clinical outcomes.

Materials:

- Processed actigraphy features with linked clinical outcomes

- Machine learning environment (Python with scikit-learn, XGBoost, SHAP)

- Computational resources for model training and validation

Procedure:

- Data Preparation:

  - Split data into training (70%), validation (15%), and test (15%) sets, maintaining participant-level splits to avoid data leakage.

  - Standardize features to zero mean and unit variance, estimating the scaling parameters on the training set only, to facilitate model convergence without leaking information from held-out data.

  - Address class imbalance in outcome variables using SMOTE or weighted loss functions, applying any resampling to the training data only.

- Model Selection and Training:

  - Test multiple algorithm types: Gaussian Naïve Bayes, Logistic Regression, Random Forests, XGBoost, and Neural Networks [29].

  - Implement nested cross-validation to optimize hyperparameters and avoid overfitting.

  - For preterm birth prediction, consider Gaussian Naïve Bayes as a strong baseline due to feature independence properties [29].

- Model Interpretation:

  - Apply SHapley Additive exPlanations (SHAP) to quantify feature importance and direction of effects [29].

  - Generate individual-level explanations to identify which features most influenced specific predictions.

  - Examine interaction effects between key actigraphy features and clinical/demographic variables.

- Validation and Performance Assessment:

  - Evaluate models using area under the receiver operating characteristic curve (AUROC) and area under the precision-recall curve (AUPRC).

  - Calculate sensitivity, specificity, and predictive values at optimal classification thresholds.

  - Assess clinical utility through decision curve analysis and calibration plots.
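The data-preparation step hinges on splitting by participant rather than by row, so that repeated weekly observations from one person never appear in more than one partition. The standard-library sketch below illustrates that idea together with standardization fitted on the training partition only. In practice a library utility for grouped splitting would normally be used; the function names, the seed, and the toy data here are assumptions.

```python
import random
from statistics import mean, pstdev


def split_participants(pids, seed=0, train=0.70, val=0.15):
    """Assign whole participants to train/validation/test partitions."""
    unique = sorted(set(pids))
    random.Random(seed).shuffle(unique)
    n_train = round(train * len(unique))
    n_val = round(val * len(unique))
    return (
        set(unique[:n_train]),
        set(unique[n_train:n_train + n_val]),
        set(unique[n_train + n_val:]),
    )


def fit_scaler(rows):
    """Per-feature mean and population SD (SD of 0 is replaced by 1)."""
    return [(mean(col), pstdev(col) or 1.0) for col in zip(*rows)]


def apply_scaler(rows, params):
    return [[(x - m) / s for x, (m, s) in zip(row, params)] for row in rows]


# Toy data: 20 participants with 3 weekly feature rows each.
pids = [f"P{i:02d}" for i in range(20) for _ in range(3)]
features = [[float(i), float(i % 5)] for i in range(20) for _ in range(3)]

train_ids, val_ids, test_ids = split_participants(pids)
train_rows = [row for pid, row in zip(pids, features) if pid in train_ids]
val_rows = [row for pid, row in zip(pids, features) if pid in val_ids]

params = fit_scaler(train_rows)              # statistics from training data only
scaled_train = apply_scaler(train_rows, params)
scaled_val = apply_scaler(val_rows, params)  # reuse the training statistics
print(len(train_ids), len(val_ids), len(test_ids))
```

Reusing the training-set statistics for the validation and test partitions mirrors what would happen at deployment, where the distribution of new participants is unknown.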

Implementation Note: For high-dimensional actigraphy data, simpler models like Gaussian Naïve Bayes may outperform more complex architectures due to the independence structure of well-engineered features and limited sample sizes in clinical datasets [29].
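To make the independence assumption behind Gaussian Naïve Bayes concrete, here is a from-scratch sketch: each feature is modeled with its own class-conditional normal distribution, and the per-feature log-likelihoods are simply summed. This is an illustration written for this note, not the implementation used in [29]; the function names, the variance floor, and the toy data are assumptions.

```python
import math
from collections import defaultdict


def fit_gnb(X, y, var_floor=1e-9):
    """Estimate a log-prior and per-feature mean/variance for each class."""
    by_class = defaultdict(list)
    for row, label in zip(X, y):
        by_class[label].append(row)
    model = {}
    for label, rows in by_class.items():
        cols = list(zip(*rows))
        means = [sum(col) / len(rows) for col in cols]
        variances = [
            sum((v - m) ** 2 for v in col) / len(rows) + var_floor
            for col, m in zip(cols, means)
        ]
        model[label] = (math.log(len(rows) / len(X)), means, variances)
    return model


def predict_gnb(model, row):
    """Return the class with the highest log-posterior for one feature row."""
    def log_posterior(params):
        log_prior, means, variances = params
        # Independence assumption: per-feature log-likelihoods are added.
        log_lik = sum(
            -0.5 * math.log(2 * math.pi * v) - (x - m) ** 2 / (2 * v)
            for x, m, v in zip(row, means, variances)
        )
        return log_prior + log_lik
    return max(model, key=lambda label: log_posterior(model[label]))


# Toy two-feature data (e.g., sleep-onset SD and sleep efficiency, rescaled).
X = [[1.0, 2.0], [1.2, 1.8], [0.8, 2.2], [3.0, 0.5], [3.2, 0.4], [2.8, 0.6]]
y = [0, 0, 0, 1, 1, 1]
model = fit_gnb(X, y)
pred_a = predict_gnb(model, [1.1, 2.0])
pred_b = predict_gnb(model, [3.1, 0.5])
print(pred_a, pred_b)
```

With only two parameters per feature and class, the model has little capacity to overfit, which is one reason it can be competitive when sample sizes are small.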

Workflow Visualizations

ML Actigraphy Analysis Pipeline

Behavioral Domains & Clinical Applications

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Essential Resources for Actigraphy-Based Machine Learning Research

| Category | Specific Tools/Platforms | Primary Function | Key Features | Validation Status |

|---|---|---|---|---|

| Wearable Devices | ActiGraph GT9X Link | Raw tri-axial accelerometry data collection | Research-grade, capacitive wear sensor, 30Hz sampling | Validated against PSG (91-93% agreement) [27] |

| Wearable Devices | GENEActiv | Raw accelerometry data collection | ±8g dynamic range, 10-100 Hz sampling, waterproof | Used in EMA studies with loneliness assessment [31] |

| Data Processing Platforms | Modular Actigraphy Platform (MAP) | Cloud-based processing of raw sensor data | Containerized modules, GGIR and MIMS integration, scalable | Processed 686 files across 4 pediatric cohorts [11] |

| Data Processing Platforms | GGIR Open-Source Package | Raw data processing for sleep and physical activity | Non-wear detection, sleep scoring, feature extraction | Validated in multiple population studies [11] |

| Non-Wear Algorithms | Choi Algorithm | Non-wear time classification | 90-min zero-count windows with artifact allowance | Validated in room calorimeter study [32] |

| Non-Wear Algorithms | Troiano Algorithm | Non-wear time classification | NHANES-based criteria for waking periods | Widely implemented in population studies [32] |

| Non-Wear Algorithms | van Hees Algorithm | Non-wear detection using raw data | Raw acceleration-based, detects sleep non-wear | Superior to built-in capacitive sensors [27] |

| Sleep Scoring Algorithms | Cole-Kripke Algorithm | Sleep-wake scoring from actigraphy | Developed for adult populations, 1-minute epochs | Validated against PSG [27] |

| Sleep Scoring Algorithms | Tudor-Locke Algorithm | Sleep period identification | Identifies sleep intervals from activity patterns | Used in longitudinal depression studies [27] |

| Clinical Outcome Measures | Montgomery-Åsberg Depression Rating Scale (MADRS) | Depression symptom severity | 10-item clinician-rated scale | Primary outcome in Wellness Monitoring Study [27] |

| Clinical Outcome Measures | UCLA Loneliness Scale | Subjective loneliness assessment | 3-item and 8-item versions | Momentary and daily assessment in EMA [31] |

From Data to Insight: Methodological Frameworks for Social Interaction Assessment

Ecological Momentary Assessment (EMA) and longitudinal actigraphy are powerful methodological approaches for capturing dynamic human behaviors and physiological states in real-time within natural environments. These approaches are particularly valuable for monitoring complex, fluctuating phenomena such as substance use, sleep-wake patterns, physical activity, and mental health symptoms. EMA is designed to collect real-time data on behavior, thoughts, and feelings while minimizing retrospective recall bias [33]. Actigraphy provides objective, continuous monitoring of rest-activity cycles using wearable accelerometer-based devices [26] [34]. When integrated, these methods enable researchers to examine temporal relationships between psychological states, contextual factors, and behavioral or physiological outcomes over time, offering significant advantages over traditional retrospective assessments or laboratory-based measurements.

The integration of these methodologies is particularly relevant for drug development professionals and clinical researchers seeking to understand the real-world impact of treatments on daily functioning and symptom patterns. This application note provides detailed protocols and considerations for implementing these approaches in research studies, with particular emphasis on substance use and mental health applications where these methods have demonstrated significant utility.

EMA Methodological Protocols

Core EMA Design Configurations

EMA studies employ various assessment schedules to capture phenomena of interest, each with distinct advantages depending on research questions and target populations. The most common designs combine different sampling approaches to balance comprehensive assessment with participant burden.

Table 1: EMA Sampling Protocols and Applications

| Sampling Type | Description | Common Applications | Considerations |
|---|---|---|---|
| Event-Based | Participant-initiated recordings when specific events occur | Substance use episodes, craving episodes, pain flare-ups | Captures targeted behaviors but may miss contextual background |
| Time-Based (Random) | Random prompts throughout waking hours | Mood states, contextual factors, background symptoms | Provides representative sampling of experiences; cannot capture specific events |
| Time-Based (Fixed) | Assessments at predetermined times | Morning/evening routines, medication schedules | Ensures coverage of specific timepoints but may be anticipatory |
| Interval Reporting | Multiple assessments within predefined blocks | Daily activity patterns, symptom progression | Balances detail with structure; still involves some recall |
| Daily Diary | Single end-of-day retrospective report | Daily summaries, aggregate behaviors | Higher retrospective bias but lower participant burden |

The prototypical EMA design combines event-based reporting of target behaviors (e.g., substance use) with random time-based assessments to capture contextual background and state variables [33]. This approach allows researchers to compare moments when target behaviors occur with randomly sampled moments throughout participants' daily lives, enabling powerful within-subject analyses of behavioral precursors and consequences.
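As an illustration of the combined design described above, the following minimal sketch generates random time-based prompts within a waking window and merges them with participant-initiated event reports. The function names, the stratified sampling scheme (one prompt per equal-width block of the day), and the 30-minute minimum gap are assumptions made for this example; they are not taken from the cited studies or from any particular EMA platform.

```python
import random
from datetime import datetime, timedelta


def random_prompt_times(wake, sleep, n_prompts, min_gap=timedelta(minutes=30), seed=None):
    """Draw one random prompt time from each equal-width block of the waking day."""
    rng = random.Random(seed)
    block = (sleep - wake) / n_prompts
    if block <= min_gap:
        raise ValueError("waking window is too short for this many prompts and this gap")
    # Sampling only from the first (block - min_gap) of each block guarantees
    # that consecutive prompts are at least min_gap apart.
    usable_seconds = (block - min_gap).total_seconds()
    return [
        wake + i * block + timedelta(seconds=rng.uniform(0, usable_seconds))
        for i in range(n_prompts)
    ]


def merge_records(prompt_times, event_times):
    """Combine random prompts and participant-initiated event reports in time order."""
    records = [(t, "random_prompt") for t in prompt_times]
    records += [(t, "event_report") for t in event_times]
    return sorted(records)
```

Keeping the two record types in one time-ordered stream makes it straightforward to compare event moments with randomly sampled background moments for the same participant.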

Implementation Protocols and Compliance

Successful EMA implementation requires careful attention to participant training, technological infrastructure, and compliance monitoring. Evidence suggests that even challenging populations can successfully comply with EMA protocols when properly designed and supported.

Participant Training Protocol:

- Conduct in-person device orientation sessions with hands-on practice

- Provide simplified written instructions for reference

- Implement practice trials before formal data collection begins

- Establish clear guidelines for device care, charging, and troubleshooting

Compliance Enhancement Strategies:

- Utilize user-friendly interfaces with intuitive navigation

- Implement reminder systems for missed assessments

- Provide regular feedback on compliance performance

- Offer compensation structures that incentivize sustained participation

Studies have demonstrated good compliance across diverse populations, including those with substance use disorders and serious mental health conditions. In one study of community-dwelling adults with suicidal ideation, participants maintained an overall EMA response rate of 82.05% over 28 days, although the rate fell from 86.96% in the first half of the monitoring period to 76.31% in the second [35]. Actigraphy adherence in the same study remained high at 98.1%, suggesting that passive monitoring can sustain compliance even when active reporting declines.

Feasibility has also been shown in populations often assumed to be difficult to monitor. People experiencing homelessness who used crack cocaine showed a 77% response rate to telephone-based EMA prompts, with only 10% dropout and minimal equipment loss [33]. Similarly, individuals in treatment for heroin and cocaine use complied with EMA protocols for up to six months, with rare device loss or damage [33].

Longitudinal Actigraphy Methodology

Actigraphy Data Collection Protocols

Longitudinal actigraphy involves extended monitoring of rest-activity patterns using wrist-worn accelerometers. These devices collect high-frequency movement data that can be processed to estimate sleep parameters, physical activity levels, and circadian rhythms.

Table 2: Actigraphy Device Specifications and Processing Parameters

| Parameter | Recommended Settings | Alternative Options | Rationale |
|---|---|---|---|
| Device Placement | Non-dominant wrist | Dominant wrist, ankle | Standardization; minimizes movement artifacts |
| Sampling Frequency | 30-50 Hz | 10-100 Hz based on memory needs | Balances resolution with battery life |
| Epoch Length | 1-minute intervals | 10-second to 6-minute epochs | Standard for sleep scoring; adjust for activity |
| Data Collection Mode | Time Above Threshold (TAT) | Zero Crossing Mode (ZCM), Proportional Integration Mode (PIM) | Movement intensity quantification |
| Minimum Wear Time | 21+ hours/day for 5+ days | Varies by research question | Ensures representative data |
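To make the three data collection modes in Table 2 more concrete, the sketch below computes simplified per-epoch versions of each from a one-dimensional, zero-centred acceleration signal. The definitions used here are common textbook simplifications and are assumptions of this example: TAT as the number of samples whose magnitude exceeds a threshold, ZCM as the number of sign changes, and PIM as the summed rectified signal. Commercial devices apply their own filtering, thresholds, and scaling, so the output should not be expected to match any vendor's counts.

```python
def epoch_summaries(signal, samples_per_epoch, threshold):
    """Summarise a zero-centred 1-D acceleration signal per epoch in three simplified modes."""
    summaries = []
    last_start = len(signal) - samples_per_epoch
    for start in range(0, last_start + 1, samples_per_epoch):
        epoch = signal[start:start + samples_per_epoch]
        # TAT: how many samples exceed the threshold in magnitude.
        tat = sum(1 for x in epoch if abs(x) > threshold)
        # ZCM: how many times consecutive samples change sign.
        zcm = sum(1 for a, b in zip(epoch, epoch[1:]) if (a < 0) != (b < 0))
        # PIM: rectified sum, a discrete stand-in for the area under the curve.
        pim = sum(abs(x) for x in epoch)
        summaries.append({"tat": tat, "zcm": zcm, "pim": pim})
    return summaries
```

Any trailing samples that do not fill a complete epoch are dropped in this sketch.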

Device Selection and Validation: Research-grade actigraphs (e.g., ActiGraph GT9X Link, GENEActiv) should be selected over consumer wearables because they offer validated algorithms, research support, and regulatory acceptance [26] [36]. Devices should be tested for reliability and validity against gold standard measures (e.g., polysomnography for sleep parameters) before deployment in clinical trials.

Longitudinal Wear Protocol: Participants should be instructed to wear the device 24 hours per day throughout the monitoring period, removing only for water-based activities or when instructed by researchers [26]. Regular charging schedules should be established (typically 1-2 hours every 5-7 days, depending on device battery life), with participants maintaining wear logs to document removal periods and notable events.

Data Processing and Quality Control

Longitudinal actigraphy presents significant data processing challenges due to extended monitoring periods and inevitable non-wear time. Standardized processing pipelines are essential for ensuring data quality and comparability across studies.

Non-Wear Detection Algorithms: Multiple approaches exist for identifying periods when devices were not worn:

- Built-in wear sensors (e.g., capacitive sensors in the ActiGraph GT9X), although these may have limited specificity [26]

- Choi algorithm: developed for hip-worn devices but adaptable to wrist placement [26]

- Troiano algorithm: uses activity counts to detect prolonged inactivity [26]

- van Hees algorithm: processes raw acceleration data for improved accuracy [26]

Research comparing these methods has led to the development of consensus approaches such as the "majority algorithm" that combines multiple detection methods to improve accuracy [26]. Implementation of these algorithms in open-source packages (e.g., GGIR) has improved standardization across studies.
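The idea of combining several non-wear detectors can be sketched as a per-epoch majority vote. The source does not specify how the "majority algorithm" is implemented, so the code below is only an assumed, simplified illustration: each detector is represented as a list of boolean non-wear flags (one per epoch), and an epoch is labelled non-wear when more than half of the detectors agree. The function name and input layout are hypothetical.

```python
def majority_nonwear(*detector_flags):
    """Label an epoch as non-wear when more than half of the detectors flag it."""
    if not detector_flags:
        raise ValueError("at least one detector output is required")
    n_epochs = len(detector_flags[0])
    if any(len(flags) != n_epochs for flags in detector_flags):
        raise ValueError("all detector outputs must cover the same epochs")
    votes_needed = len(detector_flags) // 2 + 1
    # zip(*...) walks through the epochs, yielding one flag per detector.
    return [sum(epoch_flags) >= votes_needed for epoch_flags in zip(*detector_flags)]
```

With an even number of detectors, ties count as wear under this rule; a study could reasonably choose the opposite convention.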

Data Quality and Compliance Monitoring: In longitudinal studies, compliance with device wear typically decreases over time. One year-long study reported missing data proportions increasing from a mean of 4.8% in the first week to 23.6% after 12 months [26]. Establishing pre-processing thresholds for minimum wear time (e.g., ≥10-12 hours/day for ≥14 days) is essential for ensuring data quality [37].
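A pre-processing wear-time check of the kind described above might look like the following sketch. The input format (a mapping from day label to minutes of detected wear), the function name, and the default thresholds are assumptions for illustration; the defaults simply mirror the example thresholds in the text and should be set per protocol.

```python
def summarize_wear(wear_minutes_by_day, min_hours_per_day=10, min_valid_days=14):
    """Identify valid days and report the overall proportion of missing (non-wear) time."""
    threshold_minutes = min_hours_per_day * 60
    valid_days = [
        day for day, minutes in wear_minutes_by_day.items()
        if minutes >= threshold_minutes
    ]
    total_possible = 1440 * len(wear_minutes_by_day)  # 1440 minutes per day
    if total_possible:
        missing = 1 - sum(wear_minutes_by_day.values()) / total_possible
    else:
        missing = 1.0
    return {
        "valid_days": valid_days,
        "meets_minimum": len(valid_days) >= min_valid_days,
        "missing_proportion": missing,
    }
```

Tracking the missing proportion week by week, rather than once per participant, is one way to notice the gradual decline in wear compliance that longitudinal studies report.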

The Modular Actigraphy Platform (MAP) represents an advanced approach to processing high-resolution sensor data through containerized modules that can be updated or replaced independently [38]. This cloud-based system integrates open-source scoring algorithms (e.g., GGIR, MIMS) while maintaining version control and computational efficiency, addressing the significant data infrastructure challenges associated with large-scale actigraphy studies [38].

Integrated EMA-Actigraphy Applications

Substance Use Research Applications

EMA and actigraphy have proven particularly valuable in substance use research, where behaviors are episodic and strongly influenced by contextual factors, mood states, and physiological rhythms.

Opioid Use Disorder Protocol: A recent study demonstrated the application of integrated EMA and deep learning to predict critical outcomes in patients receiving medication for opioid use disorder (MOUD) [39]. The protocol included:

- Context-sensitive EMAs assessing stress, pain, social setting, and substance use

- 7-day sliding windows of EMA data to predict next-day outcomes

- Recurrent deep learning models with SHAP analysis for feature interpretation

This approach successfully predicted non-prescribed opioid use (AUC=0.97), medication nonadherence (AUC=0.68-0.79), and treatment retention (AUC=0.89) using EMA-derived features [39]. Recent substance use emerged as the strongest predictor of imminent opioid use, while life-contextual factors better predicted longer-term adherence and retention.
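The 7-day sliding-window setup used in this protocol can be sketched as a simple pairing of each window of daily features with the following day's outcome. This shows only the windowing step; the recurrent model and SHAP analysis are not reproduced here, and the function name and list-based data layout are assumptions made for this example.

```python
def sliding_windows(daily_features, daily_outcomes, window=7):
    """Pair each run of `window` consecutive days of features with the next day's outcome."""
    if len(daily_features) != len(daily_outcomes):
        raise ValueError("features and outcomes must cover the same days")
    samples = []
    for target_day in range(window, len(daily_features)):
        history = daily_features[target_day - window:target_day]
        samples.append((history, daily_outcomes[target_day]))
    return samples
```

Because consecutive windows overlap by six days, samples from the same participant are strongly correlated; splitting training and test sets by participant rather than by window helps avoid leakage.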

Tobacco and Alcohol Research: EMA designs in tobacco and alcohol research typically combine event-based recording of smoking/drinking episodes with random time-based assessments of mood, context, and cravings [33]. This enables examination of proximal precursors to substance use and assessment of real-world treatment effects.

Mental Health Monitoring

Integrated EMA-actigraphy approaches show particular promise for monitoring mental health conditions characterized by fluctuating symptoms and circadian disruptions.

Bipolar Disorder Applications: An evidence map of actigraphy studies in bipolar disorder identified rest-activity rhythm (RAR) metrics as potentially valuable markers of illness phase transitions and treatment response [36]. Key parameters include:

- Timing markers: sleep onset, mid-sleep point, acrophase

- Variability measures: day-to-day consistency in sleep-wake patterns

- Amount parameters: total sleep time, activity levels

While most studies have been small-scale (median sample size=15) with brief monitoring periods (median=7 days), the consistent association of RAR metrics with clinical outcomes supports their potential as digital biomarkers [36].
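As an example of how one timing marker can be derived, the sketch below estimates the acrophase (time of peak activity) with a single-component 24-hour cosinor model, y(t) = M + A cos(2π(t − t_peak)/24). It assumes evenly spaced samples with no gaps that cover a whole number of days and start at midnight; under that assumption the least-squares fit reduces to the closed-form projections used here. Real recordings with missing epochs would need a full regression, and the function name and return format are illustrative choices.

```python
import math


def cosinor_24h(activity, samples_per_day=24):
    """Fit a 24-hour cosine to evenly spaced activity covering whole days."""
    n = len(activity)
    if n == 0 or n % samples_per_day != 0:
        raise ValueError("data must cover a whole number of days")
    omega = 2 * math.pi / samples_per_day  # radians per sample
    mesor = sum(activity) / n
    # Projections onto cosine and sine give the regression coefficients
    # when sampling is even and spans complete cycles.
    beta = 2 / n * sum(y * math.cos(omega * i) for i, y in enumerate(activity))
    gamma = 2 / n * sum(y * math.sin(omega * i) for i, y in enumerate(activity))
    amplitude = math.hypot(beta, gamma)
    acrophase_hours = (math.atan2(gamma, beta) / (2 * math.pi) * 24) % 24
    return {"mesor": mesor, "amplitude": amplitude, "acrophase_hours": acrophase_hours}
```

The acrophase is returned in clock hours after the first sample, so the result is only interpretable as time of day if the series starts at midnight.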

Suicide Risk Monitoring: A recent feasibility study implemented a 28-day monitoring protocol with EMA surveys three times daily plus actigraphic event marking when participants experienced strong suicidal impulses [35]. This integrated approach revealed distinct temporal patterns in suicidal impulses, which peaked between 9 and 10 PM and were least frequent in the early morning (4-6 AM) [35]. The combination of active EMA and passive actigraphy provided complementary data streams for understanding dynamic risk factors.
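A time-of-day profile like the one reported above can be built by counting event-marker timestamps per clock hour. The sketch below assumes the event markers have already been exported as Python datetime objects in local time; the function names are hypothetical and no study data are reproduced.

```python
from collections import Counter
from datetime import datetime


def events_by_hour(timestamps):
    """Return a 24-element list of event counts, indexed by clock hour (0-23)."""
    counts = Counter(t.hour for t in timestamps)
    return [counts.get(hour, 0) for hour in range(24)]


def peak_hours(hourly_counts):
    """Return every clock hour that shares the maximum count (empty if no events)."""
    top = max(hourly_counts)
    if top == 0:
        return []
    return [hour for hour, count in enumerate(hourly_counts) if count == top]
```

Pooling counts across participants gives a group-level profile; computing the same profile per person first can show whether the group peak reflects most participants or only a few frequent reporters.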

Implementation Workflow and Data Integration

The successful integration of EMA and longitudinal actigraphy requires careful planning of data collection, processing, and analytical workflows. The following diagram illustrates a standardized pipeline for integrated data collection:

[Figure: Integrated EMA-Actigraphy Data Collection Workflow]

The analytical approach for integrated EMA-actigraphy data must account for the multilevel structure of the data (moments nested within days nested within persons) and the complex temporal dependencies between variables.

[Figure: Analytical Pipeline for Integrated EMA-Actigraphy Data]
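One common first step when modeling moments nested within persons is to split each predictor into a between-person component (the person's own mean) and a within-person component (the momentary deviation from that mean). The sketch below shows this person-mean centering on a list of records; it is offered as an assumed preprocessing example rather than the pipeline used in any cited study, and the record layout with a "person" key is hypothetical.

```python
from collections import defaultdict
from statistics import mean


def person_mean_center(records, key):
    """Add between-person mean and within-person deviation columns for records[key]."""
    values_by_person = defaultdict(list)
    for record in records:
        values_by_person[record["person"]].append(record[key])
    person_means = {person: mean(values) for person, values in values_by_person.items()}
    centered = []
    for record in records:
        between = person_means[record["person"]]
        centered.append({
            **record,
            f"{key}_between": between,               # stable level for this person
            f"{key}_within": record[key] - between,  # momentary deviation from it
        })
    return centered
```

Entering both components into a multilevel model lets the within-person effect (for example, sleeping less than usual) be estimated separately from the between-person effect (being a short sleeper in general).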

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Materials and Analytical Tools

| Tool Category | Specific Examples | Function | Implementation Considerations |
|---|---|---|---|
| Actigraphy Devices | ActiGraph GT9X-BT Link, GENEActiv | Raw tri-axial acceleration data collection | Research-grade vs. consumer devices; sampling rate; battery life |
| EMA Platforms | LogPad, custom smartphone apps, cell phones | Real-time subjective data collection | User interface design; scheduling flexibility; data security |
| Data Processing Algorithms | GGIR, Cole-Kripke, Tudor-Locke, Choi | Sleep scoring, non-wear detection, feature extraction | Open-source vs. proprietary; validation against gold standards |
| Non-Wear Detection | Choi algorithm, Troiano algorithm, van Hees method | Identifying device removal periods | Sensitivity to sleep vs. wake non-wear; validation methods |
| Cloud Data Platforms | CentrePoint, Brain-CODE, MAP | Secure data transfer, storage, and processing | HIPAA compliance; version control; computational efficiency |
| Analytical Frameworks | Multilevel modeling, recurrent neural networks, SHAP | Modeling hierarchical longitudinal data | Handling missing data; temporal dependencies; feature importance |

Regulatory and Ethical Considerations

The implementation of EMA and longitudinal actigraphy in clinical research requires careful attention to ethical and regulatory considerations, particularly when deployed in vulnerable populations or for regulatory endpoints.

Privacy and Data Security: Sensitive data collected through these methods—including detailed information about illegal behaviors, mental health symptoms, and daily patterns—requires robust protection [40]. Recommended protocols include:

- Data encryption both in transit and at rest

- Secure transmission protocols for wireless data transfer

- De-identification procedures that maintain temporal resolution while protecting identity

- Access controls with role-based permissions
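One way to approach de-identification that preserves temporal resolution is to replace participant identifiers with keyed hashes and shift each participant's timestamps by a fixed, participant-specific number of whole weeks. The sketch below illustrates that idea with Python's standard hmac and hashlib modules. The function names, the 16-character pseudonym length, and the 52-week maximum shift are assumptions for this example; it is not a complete de-identification procedure and does not by itself satisfy any regulatory standard.

```python
import hashlib
import hmac
from datetime import datetime, timedelta


def pseudonymize(participant_id, secret_key):
    """Derive a stable pseudonym from the participant ID using a keyed hash."""
    digest = hmac.new(secret_key, participant_id.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def shift_weeks(participant_id, secret_key, max_weeks=52):
    """Derive a fixed per-participant shift of 1..max_weeks whole weeks."""
    message = b"shift:" + participant_id.encode("utf-8")
    digest = hmac.new(secret_key, message, hashlib.sha256).digest()
    return int.from_bytes(digest[:4], "big") % max_weeks + 1


def deidentify(timestamp, participant_id, secret_key):
    """Return (pseudonym, shifted timestamp) for one record."""
    offset = timedelta(weeks=shift_weeks(participant_id, secret_key))
    return pseudonymize(participant_id, secret_key), timestamp - offset
```

Shifting by whole weeks keeps time of day, day of week, and all within-participant intervals intact, which matters for circadian and weekday-weekend analyses. The secret key must be stored separately from the data, since anyone holding it can recompute the pseudonyms and shifts.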

Regulatory Acceptance: Regulatory bodies including the U.S. Food and Drug Administration (FDA) and the European Medicines Agency have shown increasing interest in actigraphy-based endpoints, with approved use in specific contexts such as Duchenne muscular dystrophy [37]. For successful regulatory acceptance, measures must demonstrate:

- Content validity: concepts measured are meaningful to patients [37]

- Analytical validity: reliability, accuracy, and sensitivity of measurements

- Clinical validity: association with clinically meaningful outcomes

Patient-centered research has found that individuals with pulmonary arterial hypertension (PAH) and chronic thromboembolic pulmonary hypertension (CTEPH) value time spent in non-sedentary activity and moderate-to-vigorous physical activity over simpler metrics such as step count, highlighting the importance of engaging patients in endpoint selection [37].