Advanced Strategies for EEG Artifact Reduction: A Comprehensive Guide for Biomedical Research and Drug Development

Abstract

Clean electroencephalography (EEG) data is paramount for accurate analysis in neuroscience research and drug development. This article provides a comprehensive guide to reducing neural data artifacts in EEG recordings, tailored for researchers and drug development professionals. It begins by establishing a foundational understanding of diverse artifact types, from physiological sources like ocular and muscle activity to non-physiological technical noise. The core of the article explores a wide spectrum of artifact removal methodologies, from established signal processing techniques like Independent Component Analysis (ICA) to cutting-edge machine learning and deep learning models, including hybrid CNN-LSTM architectures. It further offers practical troubleshooting and optimization strategies for challenging recording environments, such as simultaneous EEG-fMRI, and provides a rigorous framework for the validation and comparative analysis of different denoising techniques. By synthesizing modern practices, this guide aims to enhance data integrity and reliability in pharmacokinetic/pharmacodynamic modeling and clinical neuroscience applications.

Understanding the Enemy: A Complete Guide to EEG Artifact Types and Origins

Frequently Asked Questions (FAQs)

Q1: What are the most common types of EEG artifacts I might encounter in my research? EEG artifacts are unwanted signals that originate from sources other than the brain's neuronal activity. They are broadly categorized as follows [1] [2]:

- Physiological Artifacts: Generated from the subject's own body.

- Ocular Artifacts: Caused by eye movements and blinks. They have high amplitude and are most prominent in frontal electrodes [1].

- Muscle Artifacts (EMG): Caused by tension in head, neck, or jaw muscles, such as talking or swallowing. They have a broad frequency range and can be very challenging to remove [1].

- Cardiac Artifacts (ECG): Caused by the electrical activity of the heart, often appearing as a periodic QRS-like pattern in the EEG [2].

- External/Environmental Artifacts: Arise from outside the subject.

Q2: My wearable EEG data is very noisy. Do standard artifact removal methods work for low-channel count, mobile setups? Wearable EEG presents specific challenges, including motion artifacts and signal degradation from dry electrodes. While standard methods are used, they require adaptation [3].

- Independent Component Analysis (ICA) is widely applied but its effectiveness can be limited by the reduced spatial resolution of low-density EEG arrays [3].

- Blind Source Separation (BSS) methods, including ICA, assume a sufficient number of channels to separate sources effectively, which is a limitation for low-channel count setups [4].

- Emerging deep learning approaches are showing promise for handling motion and muscular artifacts in real-time, wearable settings as they can learn features directly from the data without requiring a high channel count [3] [4].

- Auxiliary sensors, such as Inertial Measurement Units (IMUs), have great potential for detecting motion artifacts but are currently underutilized in practice [3].

Q3: How can I quickly check my raw EEG data for major artifacts before full processing? Visual inspection is a fundamental first step. You can use plotting functions in toolboxes like MNE-Python to browse your data [2]:

- Plot Raw Data: Scroll through the continuous data in a "vertical" viewmode to see all channels stacked. Look for large, abrupt deflections (eye blinks), high-frequency "bursts" (muscle noise), or regular, sharp patterns (cardiac artifacts) [2] [5].

- Check the Power Spectrum: Plot the power spectral density of your data. Look for sharp peaks at 50/60 Hz (power line noise) and its harmonics [2].

- Use a Databrowser: Tools like `ft_databrowser` in FieldTrip or the MNE browsing interface allow you to visually mark and annotate artifactual periods for later rejection [5].
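The spectrum check described above can also be approximated numerically. The following is a minimal NumPy-only sketch, not a replacement for the toolbox plots: the synthetic signal, the `line_noise_ratio` helper, and the bin widths are all assumptions chosen for illustration.

```python
import numpy as np

def line_noise_ratio(x, sfreq, line_freq=50.0, flank_hz=5.0):
    """Power at the line frequency divided by the median power of nearby bins."""
    freqs = np.fft.rfftfreq(x.size, d=1.0 / sfreq)
    power = np.abs(np.fft.rfft(x * np.hanning(x.size))) ** 2
    dist = np.abs(freqs - line_freq)
    peak = dist <= 0.5                          # bins at the line frequency
    flank = (dist > 1.5) & (dist <= flank_hz)   # neighbouring bins used as a baseline
    return power[peak].max() / np.median(power[flank])

# Synthetic single-channel example (hypothetical data, arbitrary units)
rng = np.random.default_rng(0)
sfreq = 500.0
t = np.arange(5000) / sfreq
clean = rng.standard_normal(t.size)
noisy = clean + 2.0 * np.sin(2 * np.pi * 50 * t)

ratio_clean = line_noise_ratio(clean, sfreq)
ratio_noisy = line_noise_ratio(noisy, sfreq)
```

A ratio far above that of a clean reference channel suggests mains contamination; the value that counts as "far above" depends on the recording and has to be chosen empirically.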

Q4: When should I reject data segments versus using a correction algorithm? The choice depends on your research question and the extent of contamination [2] [6].

- Reject Epochs: This is the safest method when artifacts are large, infrequent, and short-lived. It is recommended when you have a sufficient number of clean trials remaining for analysis. Simply discarding contaminated epochs prevents the artifact from influencing your results.

- Repair with Algorithms: Use correction methods (e.g., ICA, regression, deep learning) when artifacts are frequent and rejecting them would lead to an unacceptable loss of data, or when the artifact overlaps with the neural signal of interest (e.g., a blink occurring during a key event-related potential) [6].

Troubleshooting Guides

Problem: Persistent Ocular (Eye Blink and Movement) Artifacts

Symptoms: Large, low-frequency deflections in frontal EEG channels, time-locked to eye blinks or movements.

Solutions:

- Independent Component Analysis (ICA): This is the most common and effective method [6].

- Procedure: Apply ICA to your multi-channel data to decompose it into independent components. Inspect the topography and time course of each component. Components showing a frontal pole topography and a waveform that matches blinks should be selected for removal. Reconstruct the signal without these artifactual components [6].

- Considerations: ICA works best with a sufficient number of channels and high-quality data. It requires manual component selection or a validated automated classifier.

- Regression in Time or Frequency Domain: This method requires a recorded EOG reference channel [1].

- Procedure: A transmission factor is calculated between the EOG reference and the EEG channels. The estimated artifact contribution is then subtracted from the EEG data [1].

- Considerations: A key limitation is that the EOG channel itself contains brain signals, so this method can subtract relevant neural activity along with the artifact [1].
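The regression procedure above can be sketched as an ordinary least-squares fit per EEG channel. This is an idealized illustration on simulated data: the variable names are hypothetical, and the simulated EOG contains no brain signal, which is exactly the assumption that fails in real recordings.

```python
import numpy as np

rng = np.random.default_rng(1)
n_samples = 5000
brain = rng.standard_normal((3, n_samples))       # simulated neural activity, 3 channels
eog = 50.0 * rng.standard_normal(n_samples)       # simulated EOG reference channel
true_b = np.array([0.8, 0.3, 0.05])               # assumed propagation to each channel
eeg = brain + true_b[:, None] * eog

# Transmission factor per channel: b = cov(EEG, EOG) / var(EOG)
eog_c = eog - eog.mean()
eeg_c = eeg - eeg.mean(axis=1, keepdims=True)
b_hat = eeg_c @ eog_c / (eog_c @ eog_c)

# Subtract the estimated ocular contribution from every channel
corrected = eeg - b_hat[:, None] * eog_c
```

After correction the EEG channels are uncorrelated with the EOG reference by construction, which is also why any brain activity present in a real EOG channel would be subtracted along with the artifact.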

Problem: Muscle Artifact (EMG) Contamination

Symptoms: High-frequency, irregular, and low-voltage activity that can be widespread or localized over temporal muscles.

Solutions:

- Automated Detection and Rejection: Use algorithms to identify and mark periods of high muscle activity.

- Procedure: Calculate metrics like amplitude, variance, or frequency features (e.g., power in the 20-60 Hz band) on sliding windows of data. Epochs where these metrics exceed a predefined threshold are marked for rejection [3].

- Spatial Filtering and Source Separation: ICA can also separate and remove muscle artifacts [1] [6].

- Procedure: The decomposition process is similar to that for ocular artifacts. Muscle artifact components are often characterized by a high-frequency, "spiky" time course and a topography focused on the temporal areas. These components are removed before signal reconstruction [6].

- Advanced Deep Learning Methods: Newer models are highly effective for this difficult artifact.

- Procedure: Use a pre-trained model like CLEnet, which integrates Convolutional Neural Networks (CNN) and Long Short-Term Memory (LSTM) networks to extract both morphological and temporal features of EEG, effectively separating clean neural signals from EMG contamination [4].
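The automated detection step (band power in 20-60 Hz on sliding windows, compared with a threshold) might look like the sketch below. The window length, the robust z-score threshold, and the helper names are assumptions for illustration, and the windows here do not overlap.

```python
import numpy as np

def band_power(x, sfreq, fmin=20.0, fmax=60.0):
    """Mean periodogram power between fmin and fmax."""
    freqs = np.fft.rfftfreq(x.size, d=1.0 / sfreq)
    psd = np.abs(np.fft.rfft(x)) ** 2 / x.size
    return psd[(freqs >= fmin) & (freqs <= fmax)].mean()

def flag_muscle_windows(x, sfreq, win_s=1.0, z_thresh=6.0):
    """Return indices of windows whose log band power is a robust outlier."""
    n = int(win_s * sfreq)
    n_win = x.size // n
    log_p = np.log10([band_power(x[i * n:(i + 1) * n], sfreq) for i in range(n_win)])
    med = np.median(log_p)
    mad = 1.4826 * np.median(np.abs(log_p - med))   # robust spread estimate
    return np.flatnonzero((log_p - med) / mad > z_thresh)

# Synthetic channel: 10 Hz rhythm plus noise, with a broadband burst in window 5
rng = np.random.default_rng(2)
sfreq = 250.0
t = np.arange(5000) / sfreq
eeg = np.sin(2 * np.pi * 10 * t) + 0.5 * rng.standard_normal(t.size)
eeg[1250:1500] += 5.0 * rng.standard_normal(250)

flagged = flag_muscle_windows(eeg, sfreq)
```

A median-based threshold is used here so that the contaminated windows themselves do not inflate the baseline; a fixed absolute threshold, as the text describes, is an equally valid choice when a calibration recording is available.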

Problem: Power Line (50/60 Hz) and High-Frequency Noise

Symptoms: A persistent, oscillatory peak at 50 Hz or 60 Hz (and at its harmonics, e.g., 100 Hz and 150 Hz for 50 Hz mains, or 120 Hz and 180 Hz for 60 Hz mains) visible in the power spectrum.

Solutions:

- Notch Filtering: Apply a narrow band-stop filter centered precisely at the power line frequency (e.g., 50 Hz) [2].

- Caution: Notch filters can introduce ringing artifacts and may remove a small portion of neural signal in the same frequency band. Use them judiciously.

- SSP or SSPP: These projection-based methods (SSP stands for Signal-Space Projection) are effective for removing periodic noise.

- Procedure: The method identifies the topographical pattern of the environmental noise and creates a projector that removes this pattern from the data [2].
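The projection idea can be illustrated with plain linear algebra: estimate the spatial pattern of the noise, then apply the projector that removes that pattern from every sample. The sketch below is an assumption-laden toy example; in particular, it estimates the noise topography from the contaminated data itself, whereas in practice it is usually estimated from a noise-dominated or empty-room segment.

```python
import numpy as np

rng = np.random.default_rng(3)
n_ch, n_samples, sfreq = 8, 2000, 1000.0
t = np.arange(n_samples) / sfreq

# Simulated data: background activity plus 50 Hz noise with a fixed topography
pattern = rng.standard_normal(n_ch)
pattern /= np.linalg.norm(pattern)
brain = rng.standard_normal((n_ch, n_samples))
data = brain + 20.0 * np.outer(pattern, np.sin(2 * np.pi * 50 * t))

# Leading left singular vector approximates the noise topography (noise dominates here)
u, _, _ = np.linalg.svd(data, full_matrices=False)
noise_topo = u[:, :1]

# Orthogonal projector that removes the noise pattern from the sensor space
projector = np.eye(n_ch) - noise_topo @ noise_topo.T
cleaned = projector @ data
```

Because the projector removes one spatial dimension, any brain activity sharing that topography is removed as well, which is the usual trade-off of projection methods.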

Quantitative Data on Artifact Removal Techniques

Table 1: Performance Comparison of Modern Artifact Removal Algorithms (Based on Semi-Synthetic Data)

| Algorithm | Artifact Type | Key Metric: Signal-to-Noise Ratio (SNR) | Key Metric: Correlation Coefficient (CC) | Best For |
|---|---|---|---|---|
| CLEnet (CNN + LSTM) [4] | Mixed (EOG + EMG) | 11.50 dB | 0.925 | Multi-channel data with unknown artifacts |
| 1D-ResCNN [4] | Mixed (EOG + EMG) | Not Reported | ~0.90 (inferred) | Single-channel scale feature extraction |
| NovelCNN [4] | EMG | High Performance | High Performance | EMG-specific artifact removal |
| EEGDNet (Transformer) [4] | EOG | High Performance | High Performance | EOG-specific artifact removal |
| ICA (Traditional) [3] [6] | Ocular, Muscular | Varies with data | Varies with data | Multi-channel data with clear source topographies |

Table 2: Essential Research Reagent Solutions for EEG Experiments

| Item | Function / Purpose | Example Use-Case |
|---|---|---|
| 64-channel EEG cap (10-20 system) | High-density spatial sampling for source localization and effective ICA [7] | Auditory MMN studies in clinical populations [7] |
| Electrooculogram (EOG) electrodes | Provide reference signals for vertical and horizontal eye movements [1] [7] | Critical for regression-based ocular artifact correction or EOG-assisted ICA component identification [1] |
| Conductive Gel & Abrasive Prep Kits | Ensure low electrode-skin impedance (< 10 kΩ), reducing baseline noise and electrode artifacts [7] | Mandatory for all high-fidelity ERP studies, especially in clinical drug development [7] |
| Auditory Stimulation System | Precisely deliver standard and deviant tones for evoked potentials (e.g., MMN, P300) [7] | Investigating sensory processing deficits in schizophrenia or Alzheimer's disease [7] |
| Automated Artifact Detection Software (e.g., MNE, FieldTrip) | Perform filtering, epoching, and automated artifact rejection based on statistical thresholds [2] [5] | Standardizing preprocessing pipelines across a large cohort of subjects for consistent results [2] |

Detailed Experimental Protocols

Protocol 1: Mismatch Negativity (MMN) Paradigm for Translational Psychiatry

This protocol is adapted from a transnosographic study investigating MMN as a biomarker in schizophrenia and Alzheimer's disease [7].

Objective: To measure pre-attentive auditory sensory memory by eliciting the Mismatch Negativity (MMN) event-related potential (ERP).

Stimulus Presentation:

- Stimuli: Use a sequence of auditory tones binaurally presented via headphones.

- Standard Tone: 1000 Hz, 50 ms duration (80% probability).

- Deviant Tone 1 (Duration): 1000 Hz, 100 ms duration (10% probability).

- Deviant Tone 2 (Frequency): 1050 Hz, 50 ms duration (10% probability).

- Parameters: Sound level at 85 dB SPL, with 5-ms rise and fall times. The inter-stimulus interval (ISI) should be fixed at 600 ms. The total recording duration is approximately 13.5 minutes [7].

EEG Acquisition:

- Setup: Record using a 64-electrode cap configured according to the international 10-20 system.

- Settings: Sampling rate ≥ 2048 Hz; band-pass filter during acquisition: 0.1-70 Hz.

- Auxiliary Channels: Record two EOG channels to monitor eye blinks and movements.

- Impedance: Keep all electrode impedances below 20 kΩ.

- Subject Task: To control for attention, the subject should watch a silent, emotionally neutral video during the auditory stimulation [7].

Preprocessing & Analysis:

- Filtering: Apply a 50 Hz (or 60 Hz) notch filter to remove line noise.

- Epoching: Segment the continuous data into epochs from -200 ms to +500 ms relative to each stimulus onset.

- Baseline Correction: Apply a baseline correction using the pre-stimulus (-200 to 0 ms) period.

- Artifact Rejection: Automatically reject epochs containing amplitudes exceeding ±100 µV or with a peak-to-peak amplitude difference greater than 150 µV in the EOG channels.

- ERP Calculation: Average the standard and deviant epochs separately. Subtract the standard ERP from the deviant ERP to obtain the MMN difference wave.

- Metrics: Extract from the MMN wave (90-290 ms window) the peak amplitude (µV), peak latency (ms), and area under the curve (AUC) [7].
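The preprocessing and analysis steps above (epoching, baseline correction, amplitude-based rejection, difference wave, peak metrics) can be sketched on simulated data. Everything in this example is an assumption made for illustration: a single channel sampled at 1 kHz, stimulus onsets spaced 600 ms apart, a Gaussian-shaped deviant response, and only the ±100 µV criterion (the EOG peak-to-peak criterion is omitted).

```python
import numpy as np

sfreq = 1000.0
tmin, tmax = -0.2, 0.5
offsets = np.arange(int(tmin * sfreq), int(tmax * sfreq) + 1)
times = offsets / sfreq

def make_epochs(signal, onsets):
    """Cut epochs around sample onsets and subtract the pre-stimulus mean."""
    epochs = signal[onsets[:, None] + offsets[None, :]]
    baseline = epochs[:, times < 0].mean(axis=1, keepdims=True)
    return epochs - baseline

# Simulated recording in microvolts (hypothetical data)
rng = np.random.default_rng(4)
n_events = 500
onsets = 1000 + 600 * np.arange(n_events)
is_deviant = rng.random(n_events) < 0.2
signal = 2.0 * rng.standard_normal(int(onsets[-1]) + 1000)
post = times[times >= 0]
mmn_shape = -3.0 * np.exp(-0.5 * ((post - 0.15) / 0.03) ** 2)
for onset in onsets[is_deviant]:
    signal[onset:onset + post.size] += mmn_shape
signal[onsets[10] + 100:onsets[10] + 300] += 300.0   # one simulated blink

epochs = make_epochs(signal, onsets)
keep = np.abs(epochs).max(axis=1) <= 100.0            # reject epochs beyond +/-100 uV
standard_erp = epochs[keep & ~is_deviant].mean(axis=0)
deviant_erp = epochs[keep & is_deviant].mean(axis=0)
mmn = deviant_erp - standard_erp                      # MMN difference wave

window = (times >= 0.09) & (times <= 0.29)            # 90-290 ms search window
peak_idx = np.argmin(mmn[window])
peak_amplitude = mmn[window][peak_idx]
peak_latency = times[window][peak_idx]
```

The area under the curve mentioned in the protocol could be obtained by summing `mmn[window]` and dividing by the sampling rate; it is left out here to keep the sketch short.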

Protocol 2: ICA-Based Ocular and Muscle Artifact Removal

Objective: To separate and remove artifacts from EEG data using Blind Source Separation (BSS) without the need for reference channels.

Procedure:

- Preprocessing: Filter the raw, continuous data (e.g., 1-100 Hz band-pass) and optionally apply a notch filter. This prepares the data for ICA.

- ICA Decomposition: Run an ICA algorithm (e.g., Infomax, Extended-Infomax) on the preprocessed data. This produces an unmixing matrix `W` [6].

- The output is a set of independent components, each with a time course (`activations = W * data`) and a scalp topography (`Winv = inv(W)`).

- Component Classification:

- Ocular Artifacts: Look for components with a frontal pole topography and a low-frequency, high-amplitude time course containing large, punctate deflections corresponding to blinks [6].

- Muscle Artifacts: Look for components with a topography focused on the temporal areas and a high-frequency, "spiky" activation time course [6].

- Artifact Removal: Project the data back to the sensor space, excluding the artifactual components.

`clean_data = Winv(:, good_components) * activations(good_components, :);`

- Where `good_components` is a vector of indices for all non-artifactual components [6].
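For readers working in Python rather than MATLAB, the back-projection step translates almost literally to NumPy. In this sketch a random invertible matrix stands in for the unmixing matrix that an ICA algorithm would return, and the choice of component 0 as the artifact is purely hypothetical.

```python
import numpy as np

rng = np.random.default_rng(5)
n_ch, n_samples = 4, 1000
data = rng.standard_normal((n_ch, n_samples))
W = rng.standard_normal((n_ch, n_ch))      # stand-in for an ICA unmixing matrix

activations = W @ data                     # component time courses
Winv = np.linalg.inv(W)                    # columns are component scalp topographies

artifact_components = [0]                  # hypothetical artifact selection
good_components = [i for i in range(n_ch) if i not in artifact_components]

# Back-project only the retained components to sensor space
clean_data = Winv[:, good_components] @ activations[good_components, :]
# The part of the signal that was removed, useful for inspection
removed = Winv[:, artifact_components] @ activations[artifact_components, :]
```

Plotting `removed` next to the raw data is a quick way to check that the discarded components carry the artifact and little else.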

Experimental Workflows and Signaling Pathways

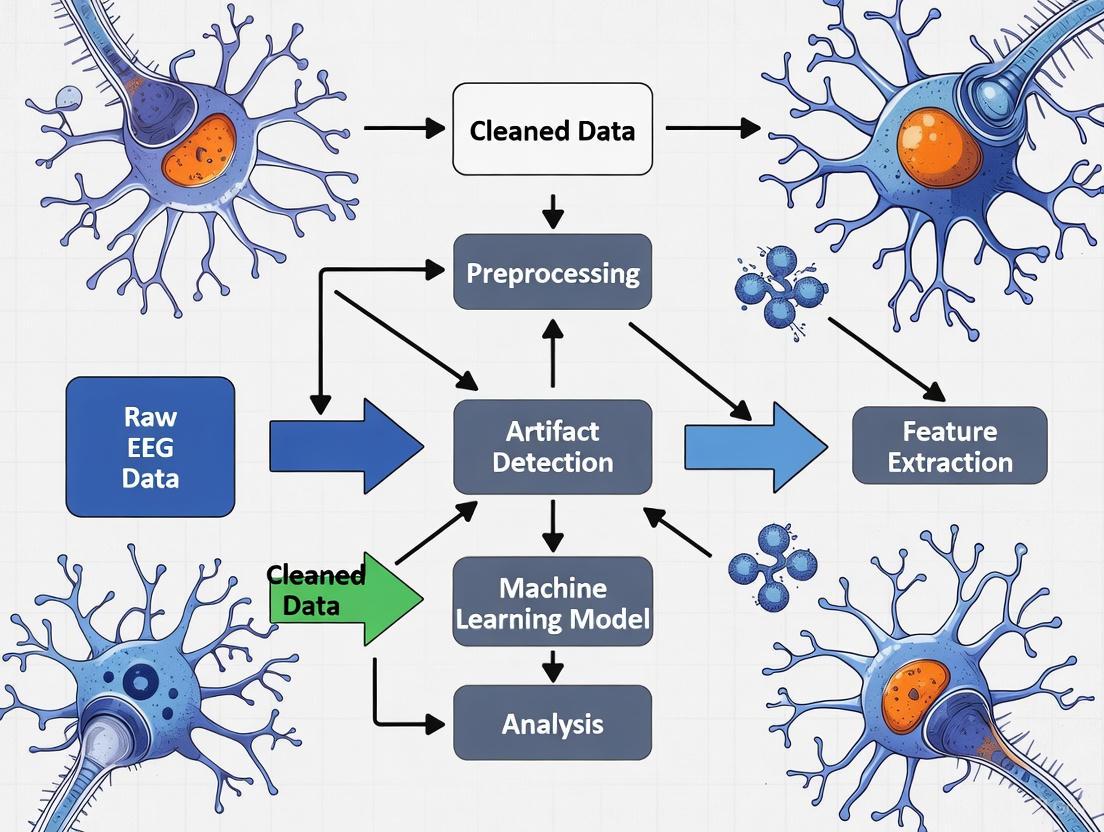

EEG Artifact Removal and Analysis Workflow

ICA-Based Artifact Separation Principle

FAQ: Understanding and Identifying Physiological Artifacts

Q1: What are physiological artifacts, and why are they a critical issue in EEG research? Physiological artifacts are unwanted signals in EEG recordings that originate from the body's own non-neural activities, such as eye movements, muscle contractions, or heartbeats [1] [8]. They are a primary concern because their amplitude is often much larger than neural signals, potentially masking brain activity, biasing analysis, and leading to misinterpretation or clinical misdiagnosis [1] [8]. Accurately identifying and removing them is a foundational step for ensuring data integrity in neuroscience research and drug development.

Q2: How can I distinguish between common physiological artifacts based on their appearance? Each major physiological artifact has a characteristic signature in the time and frequency domains. The table below summarizes key identifying features.

Table: Identification Guide for Common Physiological Artifacts

| Artifact Type | Origin | Time-Domain Effect | Frequency-Domain Effect | Most Affected Channels |
|---|---|---|---|---|
| Ocular (EOG) | Corneo-retinal dipole (eye blinks, movements) [8] | Sharp, high-amplitude deflections [8] | Dominant in low frequencies (Delta, Theta bands) [8] | Frontal (e.g., Fp1, Fp2) [8] |
| Muscle (EMG) | Muscle contractions (jaw, neck, face) [1] [8] | High-frequency, chaotic "noise" [1] | Broadband, dominates Beta/Gamma bands (>13 Hz) [8] | Temporal, Frontotemporal [9] |
| Cardiac (ECG) | Electrical activity of the heart [1] [10] | Rhythmic, recurring waveform (pulse artifact) [10] | Overlaps multiple EEG bands; fundamental at heart rate [10] | Central, neck-adjacent channels [8] |
| Sweat | Low-frequency shifts from sweat glands [8] | Very slow, large baseline drifts [8] | Contaminates Delta and Theta bands [8] | Widespread, often all channels [8] |

Q3: My analysis pipeline is automated. Are there quantitative detection methods I can use? Yes, several automated methods leverage statistical and spectral properties of the signal for detection [9]. These are often applied after a decomposition technique like Independent Component Analysis (ICA) to increase sensitivity [9].

Table: Quantitative Methods for Automated Artifact Detection

| Detection Method | Primary Principle | Best For Identifying |
|---|---|---|
| Spectral Thresholding | Identifies power exceeding a threshold in specific frequency bands [9] | Muscle (20-60 Hz), Ocular (1-3 Hz) artifacts [9] |
| Extreme Value | Flags data points exceeding a fixed voltage threshold [9] | Gross ocular artifacts and large movement transients [9] |
| Kurtosis | Measures how "peaked" or outlier-heavy the data distribution is [9] | Components with transient, high-amplitude peaks (e.g., eye blinks) [9] |
| Joint-Probability | Calculates the improbability of a data sample given the overall distribution [9] | Unusual or transient events that are statistical outliers [9] |
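Two of the detection methods in the table, kurtosis and extreme value, are simple enough to sketch directly. The thresholds below (excess kurtosis above 3, absolute value above 8 in standardized units) and the simulated blink component are assumptions for illustration, not recommended settings.

```python
import numpy as np

def excess_kurtosis(x):
    """Fourth standardized moment minus 3 (zero for a Gaussian signal)."""
    z = (x - x.mean()) / x.std()
    return np.mean(z ** 4) - 3.0

# Five simulated component time courses; component 2 contains blink-like transients
rng = np.random.default_rng(6)
n_samples = 10000
components = rng.standard_normal((5, n_samples))
for t0 in np.arange(500, n_samples, 1500):
    components[2, t0:t0 + 100] += 15.0 * np.hanning(100)

# Kurtosis criterion: heavy-tailed components stand out
kurt = np.array([excess_kurtosis(c) for c in components])
flagged_by_kurtosis = np.flatnonzero(kurt > 3.0)

# Extreme-value criterion: any sample beyond a fixed threshold
flagged_by_extreme = np.flatnonzero((np.abs(components) > 8.0).any(axis=1))
```

Both criteria flag the same component here because the simulated blinks are large; on real data they tend to disagree on borderline cases, which is one reason pipelines often combine several measures.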

Troubleshooting Guides

Issue 1: Persistent Ocular Artifacts Overwhelming Frontal Channels

Problem: Eye blinks and movements create large, recurring deflections in frontal EEG channels, obscuring cognitive signals of interest.

Solution:

- Pre-processing: Applying a high-pass filter with a cutoff of 0.5-1.0 Hz can reduce slow drifts from eye movements, but use caution, as it may also distort neural data [11].

- Advanced Correction: Use Independent Component Analysis (ICA) to separate and remove artifact components [3] [9]. This is the most common and effective method.

- Workflow: After standard filtering and epoching, run ICA on your data.

- Identification: Correlate independent components with a recorded EOG channel or visually inspect components for a frontal scalp topography and time course linked to blinks [9].

- Removal: Subtract the identified artifact components from the data.

- Experimental Control: Instruct participants to fixate on a point and minimize blinks during critical trial periods, if the protocol allows.
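The identification step that correlates independent components with a recorded EOG channel can be sketched as follows. The simulated sources stand in for ICA component time courses, and the 0.7 correlation threshold is an assumption that would need tuning on real data.

```python
import numpy as np

def correlate_with_reference(components, reference):
    """Pearson correlation of each component (row) with a reference channel."""
    comp = components - components.mean(axis=1, keepdims=True)
    ref = reference - reference.mean()
    return comp @ ref / (np.linalg.norm(comp, axis=1) * np.linalg.norm(ref))

# Simulated component time courses; the EOG channel mostly reflects component 4
rng = np.random.default_rng(7)
n_samples = 5000
sources = rng.standard_normal((6, n_samples))
eog = 3.0 * sources[4] + 0.5 * rng.standard_normal(n_samples)

r = correlate_with_reference(sources, eog)
ocular_components = np.flatnonzero(np.abs(r) > 0.7)
```

The absolute value is used because the sign of an independent component is arbitrary.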

Issue 2: Contamination from Muscle Activity (EMG) in Temporal Regions

Problem: High-frequency noise from jaw clenching, swallowing, or neck tension contaminates temporal channels, masking beta and gamma brain oscillations.

Solution:

- Spectral Analysis: Inspect the power spectrum of suspicious channels for elevated power in the 20-60 Hz range [9].

- ICA-Based Removal: ICA can effectively separate and remove muscle artifacts, as they are statistically independent from brain signals [3] [1].

- Alternative Methods: For wearable EEG with low channel counts, consider deep learning approaches (e.g., CNN-LSTM models) or ASR-based pipelines, which are emerging as powerful tools for muscular and motion artifacts [3] [12] [8].

- Protocol Adjustment: Ensure participants are relaxed and remind them to unclench their jaw and relax their face during recording.

Issue 3: Rhythmic Cardiac Artifact Mimicking Neural Activity

Problem: The QRS complex from the heartbeat appears as a rhythmic artifact in central or neck-adjacent EEG channels [10] [8].

Solution:

- Detection: Use an R-peak detection algorithm (e.g., `R_peak_detect.m` in MATLAB) on a simultaneously recorded ECG channel or an EEG channel showing the clearest artifact [10].

- Targeted Filtering: Instead of filtering the entire signal, apply a zero-phase filter only to the EEG segments time-locked to the detected QRS complexes. This preserves neural information outside of these brief windows [10].

- Component-Based Removal: ICA can also be used to isolate and remove cardiac components, which have a stable, periodic time course and a characteristic topography [1] [9].

Issue 4: Slow Baseline Drifts Caused by Perspiration

Problem: Slow, large-amplitude drifts in the signal caused by sweat, which can saturate amplifiers and distort event-related potentials.

Solution:

- Filtering: A high-pass filter with a very low cutoff (e.g., 0.1 Hz or 0.5 Hz) is often effective at removing these slow drifts [8].

- Linear Detrending: For shorter epochs, applying linear detrending can help remove slow, linear shifts in the baseline [11].

- Environmental Control: Record in a cool, temperature-controlled environment to minimize sweating.
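Linear detrending of short epochs amounts to fitting and subtracting a straight line per epoch. The sketch below uses a least-squares fit; the `detrend_linear` helper and the simulated drifts are hypothetical.

```python
import numpy as np

def detrend_linear(epochs, times):
    """Remove a least-squares line (slope and offset) from each epoch (row)."""
    design = np.column_stack([times, np.ones_like(times)])
    coef, *_ = np.linalg.lstsq(design, epochs.T, rcond=None)
    return epochs - (design @ coef).T

# Three simulated epochs with different linear drifts plus noise
rng = np.random.default_rng(8)
times = np.linspace(-0.2, 0.8, 501)
drift = np.outer([5.0, -12.0, 30.0], times) + np.array([[1.0], [2.0], [-3.0]])
epochs = drift + rng.standard_normal((3, times.size))

detrended = detrend_linear(epochs, times)
```

Because the fit uses the whole epoch, a slow genuine ERP component also contributes to the fitted line, so detrending is best reserved for epochs where drift clearly dominates.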

Experimental Protocols for Artifact Management

Protocol 1: Ocular Artifact Removal Using Independent Component Analysis (ICA)

This is a widely adopted methodology for correcting eye blinks and movements [3] [9].

Detailed Methodology:

- Preprocessing: Begin with raw, continuous EEG data. Apply a 1 Hz high-pass filter to remove slow drifts that can impede ICA performance.

- Epoching: Segment the data into epochs (e.g., -100 ms to 600 ms around a stimulus) if analyzing event-related potentials.

- ICA Decomposition: Use an ICA algorithm (e.g., Infomax, FastICA) to decompose the epoched data into independent components. Each component consists of a fixed scalp topography and an associated time course [9].

- Component Identification: Identify components representing ocular artifacts. Key indicators include:

- A scalp topography showing strong, focal projections to frontal electrodes.

- A time course containing large, infrequent deflections that correlate with known blink events or a recorded EOG channel [9].

- Artifact Removal & Reconstruction: Subtract the identified artifact components from the data. The remaining components are then back-projected to the sensor space to create the cleaned EEG dataset.

Protocol 2: Targeted Cardiac Artifact Removal via QRS Detection

This protocol is effective for removing pulse artifacts without distorting the entire EEG signal [10].

Detailed Methodology:

- Data Acquisition: Record a simultaneous ECG signal alongside the EEG.

- R-Peak Detection: Use an algorithm (e.g., the open-source `R_peak_detect.m` function for MATLAB) to accurately identify the R-peaks of the QRS complex in the ECG signal [10].

- Epoch Definition: Define short time windows around each detected R-peak that encompass the entire QRS complex.

- Targeted Filtering: Apply a zero-phase filter only to these short EEG segments that are time-locked to the cardiac cycle. This method prevents the loss of important neural information in the rest of the signal that would occur if the entire dataset were filtered [10].
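The steps above can be sketched in NumPy under several simplifying assumptions: a naive threshold-based peak picker replaces `R_peak_detect.m`, a symmetric moving average serves as the zero-phase filter, and the ECG, window length, and kernel width are invented for illustration. The source does not specify which filter is applied inside the windows.

```python
import numpy as np

def detect_r_peaks(ecg, sfreq, min_rr_s=0.4):
    """Naive picker: local maxima above 60% of the maximum, with a refractory period."""
    thresh, peaks, last = 0.6 * ecg.max(), [], -np.inf
    for i in range(1, ecg.size - 1):
        is_peak = ecg[i] > thresh and ecg[i] >= ecg[i - 1] and ecg[i] > ecg[i + 1]
        if is_peak and (i - last) / sfreq >= min_rr_s:
            peaks.append(i)
            last = i
    return np.array(peaks)

def filter_qrs_windows(eeg, r_peaks, sfreq, half_win_s=0.05, width=25):
    """Replace samples near each R-peak with a symmetric (zero-phase) moving average."""
    smoothed = np.convolve(eeg, np.ones(width) / width, mode="same")
    out = eeg.copy()
    half = int(half_win_s * sfreq)
    for p in r_peaks:
        lo, hi = max(p - half, 0), min(p + half + 1, eeg.size)
        out[lo:hi] = smoothed[lo:hi]      # samples outside the windows stay untouched
    return out

# Simulated data: 6 Hz rhythm plus a sharp cardiac artifact every 0.8 s
sfreq = 500.0
n = np.arange(5000)
true_peaks = np.arange(200, 5000, 400)
ecg = np.exp(-0.5 * ((n[:, None] - true_peaks[None, :]) / 5.0) ** 2).sum(axis=1)
brain = np.sin(2 * np.pi * 6 * n / sfreq)
eeg = brain + 3.0 * ecg

r_peaks = detect_r_peaks(ecg, sfreq)
cleaned = filter_qrs_windows(eeg, r_peaks, sfreq)
changed = cleaned != eeg
```

With this crude smoother the artifact is attenuated rather than removed; the point of the sketch is the locality of the operation, since every sample outside the QRS windows is returned unchanged.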

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Tools and Algorithms for Physiological Artifact Management

| Tool/Algorithm | Function | Application Notes |
|---|---|---|
| Independent Component Analysis (ICA) | Blind source separation; decomposes EEG into independent components for artifact identification and removal [3] [1] [9]. | Gold standard for ocular and cardiac artifacts. Less effective for non-stationary muscle noise. Requires multi-channel data. |
| Automated Trial Rejection (e.g., Autoreject) | Automatically detects and rejects bad trials or channels based on statistical thresholds [11]. | Useful for initial data cleaning. May reject useful data if not calibrated carefully. |
| Wavelet Transform | Time-frequency analysis that allows for localized denoising of specific artifact components [3] [1]. | Effective for non-stationary artifacts like EMG. Can be combined with other methods in hybrid pipelines. |
| Deep Learning Models (e.g., CNN-LSTM, GANs) | Learns complex patterns to separate clean EEG from artifacts in an end-to-end manner [3] [12]. | Emerging, powerful approach; promising for real-time applications and motion artifacts. Requires large datasets for training. |
| Artifact Subspace Reconstruction (ASR) | Statistical method that removes high-variance components in sliding windows [3]. | Widely applied for ocular, movement, and instrumental artifacts in wearable EEG. |
| Zero-Phase Filtering | Filters data in forward and reverse directions to eliminate phase distortion [10]. | Crucial for targeted filtering (e.g., cardiac artifact removal) to preserve temporal relationships. |

Troubleshooting Guides

Power Line Interference (Mains Noise)

Problem: High-frequency, monotonous noise at 50 Hz or 60 Hz is present across many or all channels, often obscuring the neural signal of interest. This interference originates from electromagnetic fields generated by alternating current (AC) in power lines and electronic equipment [13] [14].

Identification:

- Visual Pattern: Rhythmic, high-frequency oscillations that are very regular in appearance [15] [14].

- Spectral Profile: A sharp peak at exactly 50 Hz (e.g., in Europe) or 60 Hz (e.g., in the USA) in the frequency spectrum [13] [16].

Solutions:

| Troubleshooting Step | Description | Rationale |
|---|---|---|
| Preventive Measures | Use actively shielded cables and keep them short. Remove unnecessary electronics from the recording environment. Ensure the recording room is properly grounded [14] [17]. | Active shielding minimizes capacitive coupling from AC fields. Short cables reduce the antenna effect [14]. |
| Notch Filtering | Apply a notch filter at 50 Hz or 60 Hz during post-processing [13] [14]. | This directly attenuates power at the specific interference frequency. Caution is advised as it can cause signal distortions and ringing artifacts in the time domain [16]. |
| Advanced Processing | Use modern denoising algorithms like Spectrum Interpolation, CleanLine, or Discrete Fourier Transform (DFT) filtering [16]. | These methods can remove line noise with less signal distortion compared to traditional notch filters, especially when noise amplitude fluctuates [16]. |

Electrode Pop

Problem: A single channel shows a sudden, large, steep deflection (positive or negative) that quickly returns to baseline. This is caused by a sudden change in impedance at the electrode-skin interface [13] [18].

Identification:

- Visual Pattern: A single channel shows a sudden, large, steep deflection (positive or negative) that quickly returns to baseline. The artifact has no electrical field, meaning it is confined to one electrode [15] [18].

- Common Causes: A loose or drying electrode; poor initial contact; pressure or pull on the electrode cable; a dirty electrode [14] [18].

Solutions:

| Troubleshooting Step | Description | Rationale |
|---|---|---|
| Preventive Measures | Ensure all electrodes are firmly attached with good conductive contact before starting the recording. Check impedances to identify poor connections [14] [17]. | A stable, low-impedance connection prevents sudden shifts in conductivity that cause pops [13]. |
| Immediate Action | If pops occur during a recording, check and re-attach the offending electrode. Visually inspect for dried gel or physical displacement [18]. | Fixing the physical connection problem is the most direct solution. |
| Post-Processing | Mark the affected channel segment for rejection. For persistently bad channels, consider replacing (interpolating) the entire channel's data using signals from surrounding good channels [13] [17]. | This prevents the large, non-physiological spike from contaminating the analysis. Interpolation should be used cautiously [13]. |

Cable Movement

Problem: Sudden, high-amplitude, irregular deflections appear in the data, often correlated with participant movement. This is caused by triboelectric noise (friction within the cable) or conductor motion in a magnetic field [13] [14].

Identification:

- Visual Pattern: Sudden, high-amplitude, irregular deflections in the signal [14]. If the cable swings rhythmically, it may induce oscillations at the swing frequency [13].

- Context: The artifact is directly correlated with participant movement [13].

Solutions:

| Troubleshooting Step | Description | Rationale |
|---|---|---|
| Preventive Measures | Use high-quality, low-noise cables with active shielding. Secure cables to the participant's body or cap using Velcro or tape to minimize movement [14] [17]. | Active shielding eliminates capacitive coupling, and securing cables reduces triboelectric noise and physical strain [14]. |
| Hardware Setup | In wireless systems, ensure the transmitter is securely fixed to the cap. Keep cable lengths as short as practically possible [17]. | Minimizing moving parts and cable length directly reduces the source of the artifact [17]. |
| Post-Processing | Identify and mark movement-corrupted segments for artifact rejection. Filtering may attenuate slow drifts from cable sway, but overlapping artifacts are hard to separate from neural data [13]. | This excludes sections of data where the signal is irrevocably contaminated by motion [13]. |

Frequently Asked Questions (FAQs)

Q1: Why should I avoid using a notch filter as my first choice for removing power line noise? While effective at removing noise at a specific frequency, notch filters (especially IIR filters like Butterworth) can introduce ringing artifacts and distort the time-domain signal, which is critical for analyzing event-related potentials (ERPs) [16]. It is often preferable to use methods like Spectrum Interpolation or CleanLine, which have been shown to remove non-stationary line noise with less distortion [16].

Q2: My reference electrode has a poor connection. How does this affect my data? The reference electrode is crucial as it provides the baseline against which all other electrodes are measured. A bad reference connection will introduce artifacts into every single channel of your recording if you are using a common reference montage [14] [17]. Always ensure your reference electrode has a stable, low-impedance connection.

Q3: Can I use deep learning to remove these artifacts? Yes, deep learning is an emerging and powerful tool for EEG artifact removal. Models like Generative Adversarial Networks (GANs), sometimes combined with Long Short-Term Memory (LSTM) networks, are being developed to effectively separate artifacts from neural signals while preserving the underlying brain activity [12]. These methods can learn complex patterns and show promising results in handling various artifact types.

Q4: One of my electrodes keeps popping. I've re-applied it, but the problem continues. What should I do? First, check the cable and connector for damage. If the hardware is intact, the issue may be persistent poor contact or drying gel. Your best options are to:

- If the popping electrode serves as your reference, re-reference your data to a different, stable electrode (e.g., from the left to the right mastoid) if your montage allows [18].

- Exclude the bad channel from analysis and, for high-density arrays, interpolate its data from neighboring good channels [13].
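Re-referencing is a simple subtraction once the data are loaded. The sketch below assumes a channels-by-samples array originally referenced to a left-mastoid channel named `M1`; the channel names and the `rereference` helper are hypothetical.

```python
import numpy as np

def rereference(data, ch_names, new_ref):
    """Subtract the new reference channel from every channel."""
    return data - data[ch_names.index(new_ref)]

# Simulated recording referenced to M1 (left mastoid), so M1 itself is flat
rng = np.random.default_rng(10)
ch_names = ["Fz", "Cz", "Pz", "M1", "M2"]
data_m1_ref = rng.standard_normal((5, 1000))
data_m1_ref[ch_names.index("M1")] = 0.0

# Switch the reference to M2 (right mastoid)
data_m2_ref = rereference(data_m1_ref, ch_names, "M2")
```

Differences between any two scalp channels are unchanged by this operation; only the common term contributed by the reference electrode is replaced.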

Table 1: Performance Comparison of Power Line Noise Removal Methods [16]

| Method | Key Principle | Advantages | Disadvantages |
|---|---|---|---|
| Notch Filter | Bandstop filter attenuating a narrow frequency band. | Simple and widely available. | Can cause severe ringing artifacts and signal distortion in the time domain [16]. |
| DFT Filter | Fits and subtracts sine/cosine waves at the noise frequency. | Avoids corrupting frequencies away from the line noise. | Assumes constant noise amplitude; fails with fluctuating noise [16]. |
| CleanLine | Regression-based approach using multitapers. | Removes only deterministic line components, preserving background spectrum. | May fail with large, non-stationary artifacts [16]. |
| Spectrum Interpolation | Interpolates the noise frequency in the Fourier spectrum. | Less signal distortion than a notch filter; handles non-stationary noise well. | Requires transformation to frequency domain and back [16]. |
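The DFT filter row describes fitting and subtracting a sine and cosine at the noise frequency. A minimal least-squares version is sketched below on simulated data; the `dft_filter` helper is hypothetical, and the constant-amplitude noise in the example is precisely the case in which this method works well.

```python
import numpy as np

def dft_filter(x, sfreq, line_freq=50.0):
    """Fit a sine and a cosine at line_freq by least squares and subtract them."""
    t = np.arange(x.size) / sfreq
    design = np.column_stack([np.sin(2 * np.pi * line_freq * t),
                              np.cos(2 * np.pi * line_freq * t)])
    coef, *_ = np.linalg.lstsq(design, x, rcond=None)
    return x - design @ coef

# Simulated channel with stationary 50 Hz interference of arbitrary phase
rng = np.random.default_rng(9)
sfreq = 1000.0
t = np.arange(2000) / sfreq
brain = rng.standard_normal(t.size)
noisy = brain + 4.0 * np.sin(2 * np.pi * 50 * t + 0.7)

cleaned = dft_filter(noisy, sfreq)
```

If the interference amplitude drifts over the segment, a single sine/cosine pair can no longer match it, which corresponds to the disadvantage listed in the table.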

Table 2: Essential Research Reagent Solutions & Materials

| Item | Function in Artifact Mitigation |
|---|---|
| Active Electrode Systems | Amplify the signal at the electrode source, making it more resilient to cable movement and environmental interference [13] [14]. |
| Low-Noise, Actively Shielded Cables | Minimize the pickup of mains interference and reduce triboelectric noise caused by cable movement [14]. |
| High-Quality Electrolyte Gel | Ensures stable, low-impedance contact between electrode and skin, preventing electrode pops and slow drifts [17]. |
| Faraday Cage / Shielded Room | Electromagnetically isolates the recording setup, physically blocking external noise sources [17]. |

Experimental Protocols

Protocol: Removing Power Line Noise via Spectrum Interpolation

This protocol is adapted from methods shown to effectively remove non-stationary power line noise with minimal distortion [16].

- Data Segmentation: Divide the continuous EEG data into manageable, possibly overlapping, segments (e.g., 1-2 seconds in length).

- Fourier Transform: Apply a Discrete Fourier Transform (DFT) to each segment to convert the data from the time domain to the frequency domain.

- Identify Noise Bins: In the amplitude spectrum, identify the bin(s) corresponding to the power line frequency (e.g., 50 Hz) and its harmonics.

- Interpolate: Replace the amplitude values at these bins by interpolating from the neighboring frequency bins on either side. A linear or spline interpolation can be used.

- Inverse Transform: Apply an Inverse Discrete Fourier Transform (iDFT) to reconstruct the time-domain signal without the targeted line noise components.
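The steps above can be sketched for a single segment as follows. This is a minimal illustration under stated assumptions, not the reference implementation from [16]: the function name, the bin widths (`half_width`, `neighbor_width`), and the choice to keep the original phase while linearly interpolating only the amplitude are all assumptions made for the example.

```python
import numpy as np

def spectrum_interpolate(x, fs, line_freqs=(50.0,), half_width=1.0, neighbor_width=2.0):
    """Interpolate the amplitude spectrum around each line frequency (one segment)."""
    n = len(x)
    spec = np.fft.rfft(x)
    freqs = np.fft.rfftfreq(n, d=1.0 / fs)
    amp, phase = np.abs(spec), np.angle(spec)
    for f0 in line_freqs:
        dist = np.abs(freqs - f0)
        noise = dist <= half_width                              # bins to replace
        neighbours = (dist <= half_width + neighbor_width) & ~noise
        if noise.any() and neighbours.any():
            # Linear interpolation across the gap from bins on either side
            amp[noise] = np.interp(freqs[noise], freqs[neighbours], amp[neighbours])
    # Recombine the interpolated amplitude with the untouched phase
    return np.fft.irfft(amp * np.exp(1j * phase), n=n)
```

For continuous data, the function would be applied to each segment in turn (for example with `line_freqs=(50.0, 100.0, 150.0)` to include harmonics), and overlapping segments would need to be recombined afterwards.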

Protocol: A Multi-Channel Wiener Filter for Stimulation Artifact Removal

This advanced protocol uses a linear Wiener filter to predict and remove large artifacts caused by electrical stimulation, which is common in neural implant and BCI research [19].

- Record Stimulation Input and Artifact Output: Apply a known, varying electrical stimulation current (the input signal, x[n]) while recording the resulting large artifacts (the output signal, y[m]) on all recording channels in the absence of neural activity.

- Calculate Correlation Matrices: Compute the covariance matrix of the input signals (Cxx) and the cross-correlation matrix between the output and input signals (Ryx).

- Estimate Wiener Filter: Calculate the optimal multi-channel Wiener filter (ĥ) that maps the stimulation currents to the recorded artifacts using the Wiener-Hopf equation: ĥ = (Cxx)⁻¹Ryx.

- Apply Filter for Prediction: During the actual experiment with concurrent neural activity, use the derived filter (ĥ) to predict the artifact on each recording channel by convolving the stimulation current with the filter.

- Subtract Prediction: Subtract the predicted artifact from the recorded signal to reveal the underlying neural activity.
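The protocol above can be sketched with a finite-length (FIR) filter estimated by least squares. This is a minimal sketch under stated assumptions: the helper names, the number of lags, and the small ridge term added for numerical stability are illustrative choices and are not taken from [19]. Here Cxx and Ryx are computed from a matrix of delayed copies of the stimulation currents.

```python
import numpy as np

def lagged_matrix(x, n_lags):
    """Stack delayed copies of x (n_samples, n_stim) -> (n_samples, n_stim * n_lags)."""
    n_samples = x.shape[0]
    cols = []
    for lag in range(n_lags):
        delayed = np.zeros_like(x)
        delayed[lag:] = x[:n_samples - lag]
        cols.append(delayed)
    return np.hstack(cols)

def fit_wiener(x_cal, y_cal, n_lags, ridge=1e-8):
    """Estimate h = Cxx^-1 Ryx from calibration data recorded without neural activity."""
    X = lagged_matrix(x_cal, n_lags)
    Cxx = X.T @ X                      # input (auto)covariance, unnormalised
    Ryx = X.T @ y_cal                  # input-output cross-correlation
    # Small ridge term (an assumption) keeps the solve well conditioned
    Cxx += ridge * np.trace(Cxx) / Cxx.shape[0] * np.eye(Cxx.shape[0])
    return np.linalg.solve(Cxx, Ryx)

def remove_artifact(x, y, h, n_lags):
    """Predict the artifact from the stimulation current and subtract it."""
    return y - lagged_matrix(x, n_lags) @ h
```

The filter length `n_lags` should cover the duration of the artifact's impulse response; too short a filter leaves residual artifact, while a very long one may overfit the calibration data.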

FAQ: Understanding EEG Artifacts and Their Consequences

What is an EEG artifact and why is it a problem? An EEG artifact is any signal recorded by the EEG that does not originate from the brain's electrical activity [20] [8]. These unwanted signals contaminate the recording, obscuring genuine neural information. Because EEG measures very weak signals in the microvolt range, artifacts can easily mimic or mask true brain activity, leading to incorrect data interpretation and potentially severe clinical misdiagnosis, such as confusing an artifact with epileptiform activity [8].

What are the most common types of artifacts I might encounter? Artifacts are typically categorized by their origin. The table below summarizes the primary types, their causes, and their impact on the EEG signal [1] [13] [8].

Table 1: Common EEG Artifacts and Their Characteristics

| Artifact Type | Origin/Cause | Key Characteristics | Impact on EEG Signal |

|---|---|---|---|

| Ocular (EOG) | Eye blinks and movements [13] [8]. | Slow, high-amplitude deflections; most prominent over frontal electrodes [13]. | Obscures frontal delta/theta rhythms; can mimic cognitive processes [8]. |

| Muscle (EMG) | Head, jaw, or neck muscle contractions (e.g., clenching, talking) [13] [8]. | High-frequency, broadband noise; "spiky" morphology in time domain [13]. | Masks beta/gamma band activity; reduces clarity across entire spectrum [8]. |

| Cardiac (ECG/Pulse) | Electrical activity of the heart or pulse-induced electrode movement [13] [8]. | Rhythmic, recurring waveform synchronized with the heartbeat [13]. | Can be mistaken for a cerebral rhythm or epileptiform discharge [13]. |

| Electrode Pop | Sudden change in electrode-skin impedance (e.g., from drying gel) [13] [8]. | Very sharp, high-amplitude transient typically isolated to a single channel [13]. | Introduces large, non-physiological spikes that can be misinterpreted as pathological [8]. |

| Line Noise | Electromagnetic interference from AC power (50/60 Hz) [13] [8]. | Persistent high-frequency oscillation at 50 or 60 Hz [13]. | Obscures high-frequency neural oscillations and adds non-neural noise [8]. |

How can artifacts directly lead to misdiagnosis? Artifacts pose a direct risk to patient safety by mimicking genuine neurological phenomena. For instance [8]:

- A muscle artifact from head or neck tension can be misinterpreted as an epileptic spike or seizure activity.

- A pulse artifact, caused by the rhythmic movement of an electrode near a blood vessel, can resemble a cerebral rhythm or periodic discharge, potentially leading to an incorrect diagnosis of epilepsy [13].

The core challenge is that artifacts do not conform to a realistic head model of brain activity, but their appearance can be deceptively similar to real abnormalities [20].

What is the foundational concept for recognizing an artifact? The primary foundation for recognizing artifacts is identifying the mismatch between potentials generated by the brain and activity that does not conform to a realistic head model [20]. This involves assessing whether the signal's spatial distribution, frequency content, and timing are physiologically plausible for neural origins.

Troubleshooting Guides: Artifact Identification and Removal

Guide 1: Identifying Common Artifacts in Your Recording

Use this workflow as a decision tree to identify unknown artifacts in your EEG data. The diagram below outlines the logical steps for diagnosing common artifact types based on their visual characteristics.

Guide 2: Selecting an Artifact Removal Method

No single artifact removal method is optimal for all situations. The choice depends on the artifact type, analysis requirements, and available resources. The following table compares the most prevalent techniques used in the field [1] [21].

Table 2: Comparison of Prevalent EEG Artifact Removal Methods

| Method | Best For | Key Advantages | Key Limitations | Suitability for Online Use |

|---|---|---|---|---|

| Independent Component Analysis (ICA) | Ocular and large muscle artifacts [1] [13]. | Does not require reference channels; effective for separating sources [1]. | Requires multi-channel data; computationally intensive; manual component selection can be subjective [21]. | Limited [21]. |

| Regression (in Time/Frequency Domain) | Ocular artifacts when EOG reference is available [1]. | Simple principle and implementation [1]. | Requires reference channels (EOG); can lead to over-correction and removal of neural signals [1] [21]. | Possible [21]. |

| Wavelet Transform | Non-stationary artifacts like EMG and electrode pops [1]. | Good for analyzing transient signals and local features in time and frequency [1]. | Parameter selection (e.g., mother wavelet) can be complex; can alter the underlying EEG [21]. | Possible [21]. |

| Deep Learning (e.g., AnEEG, GANs) | Complex artifacts in high-density EEG; automated pipelines [12]. | High performance; can model complex patterns; potential for full automation [12]. | Requires large datasets for training; "black-box" nature reduces interpretability [22] [12]. | Yes (with pre-trained models) [12]. |

| Blind Source Separation (BSS) | Various artifacts, especially when reference channels are unavailable [1]. | Does not require reference channels; versatile [1]. | Can be computationally complex; may not separate all artifacts perfectly [1] [21]. | Limited [21]. |

The diagram below provides a structured workflow for selecting the most appropriate artifact removal strategy based on your specific context and constraints.

Guide 3: Experimental Protocol for Deep Learning-Based Artifact Removal

The following protocol outlines the methodology for implementing a deep learning-based artifact removal tool, such as the AnEEG model, which uses a Generative Adversarial Network (GAN) with LSTM layers [12].

Objective: To remove multiple types of artifacts from EEG signals while preserving the underlying neural information. Key Components of the AnEEG Model [12]:

- Generator: A network that takes artifact-contaminated EEG as input and generates cleaned EEG as output. It typically uses LSTM layers to capture temporal dependencies in the signal.

- Discriminator: A network that judges whether the signal produced by the Generator is "clean" (i.e., indistinguishable from a ground-truth clean EEG) or artificially generated.

- Adversarial Training: The Generator and Discriminator are trained simultaneously in a competitive process, where the Generator strives to produce cleaner signals, and the Discriminator becomes better at detecting artifacts, leading to overall improvement.

Procedure:

- Data Preparation:

- Acquire EEG datasets containing both artifact-contaminated signals and corresponding ground-truth clean signals. These can be semi-simulated (by linearly mixing clean EEG with EOG/EMG) or real datasets with clean segments identified by experts [12].

- Preprocess the data (e.g., band-pass filtering, normalization) and segment it into epochs.

- Split the data into training, validation, and test sets.

- Model Training:

- Train the GAN model on the contaminated EEG inputs with the goal of producing outputs that match the clean EEG targets.

- The loss function typically combines:

- Adversarial Loss: Measures the Discriminator's ability to distinguish real from generated signals.

- Content Loss (e.g., L1 or L2 loss): Ensures the generated signal is structurally similar to the ground-truth clean signal [12].

- Model Validation & Testing:

- Validate the model on a separate dataset using quantitative metrics (see below).

- Test the final model on a held-out test set to evaluate its performance.
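The combined loss described in the training step can be sketched numerically as follows. This is a framework-free illustration, not the AnEEG implementation: the function name, the binary cross-entropy form of the adversarial term, and the weight `content_weight` are hypothetical choices that would need tuning in practice.

```python
import numpy as np

def generator_loss(d_fake, generated, clean, content_weight=100.0, eps=1e-7):
    """Adversarial + L1 content loss for the Generator (illustrative only).

    d_fake: Discriminator probabilities in (0, 1) for generated epochs.
    generated, clean: arrays of the same shape.
    """
    d_fake = np.clip(d_fake, eps, 1.0 - eps)
    # The Generator is rewarded when the Discriminator scores its output as real
    adversarial = -np.mean(np.log(d_fake))
    # L1 content loss keeps the output close to the ground-truth clean signal
    content = np.mean(np.abs(generated - clean))
    total = adversarial + content_weight * content
    return total, adversarial, content
```

A larger `content_weight` pushes the Generator toward faithful reconstruction, while a smaller value gives the adversarial term more influence.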

Performance Metrics for Validation [12]:

- NMSE (Normalized Mean Square Error): Lower values indicate better agreement with the ground-truth clean signal.

- CC (Correlation Coefficient): Higher values mean a stronger linear relationship with the ground truth.

- SNR (Signal-to-Noise Ratio) & SAR (Signal-to-Artifact Ratio): Higher values indicate better artifact suppression.
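A minimal sketch of three of these metrics is given below, assuming one-dimensional arrays for the ground-truth clean signal and the denoised output. The function names and the exact normalizations are common conventions assumed for the example; [12] may define them slightly differently. SAR is omitted because its definition varies between studies.

```python
import numpy as np

def nmse(clean, denoised):
    """Normalized mean square error relative to the clean signal's energy."""
    return np.sum((clean - denoised) ** 2) / np.sum(clean ** 2)

def correlation_coefficient(clean, denoised):
    """Pearson correlation between the clean and denoised signals."""
    return np.corrcoef(clean, denoised)[0, 1]

def snr_db(clean, denoised):
    """SNR in dB, treating the residual (clean - denoised) as noise."""
    return 10.0 * np.log10(np.sum(clean ** 2) / np.sum((clean - denoised) ** 2))
```

With these definitions, NMSE and SNR carry the same information (SNR in dB equals -10·log10 of NMSE), so reporting CC alongside one of them is usually sufficient.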

The Scientist's Toolkit: Key Research Reagents & Solutions

This table details essential materials and computational tools referenced in the featured experiment and broader field of EEG artifact research [12] [23].

Table 3: Essential Research Tools for Advanced EEG Artifact Handling

| Tool / Reagent | Function / Description | Application Context |

|---|---|---|

| GAN with LSTM (AnEEG) | A deep learning architecture for generating artifact-free EEG signals from noisy inputs [12]. | Automated, high-performance artifact removal for standard EEG [12]. |

| TMS-Compatible EEG Amplifier | A specialized amplifier designed to handle the massive voltage spike induced by a TMS pulse without saturating [23]. | Essential for clean data acquisition in combined TMS-EEG studies [23]. |

| Carbon-Wire Loops (CWL) | Reference sensors placed on the head that exclusively capture MR-induced artifacts without neural signals [24]. | Critical for effective artifact removal in simultaneous EEG-fMRI recordings [24]. |

| Reference EOG/ECG Electrodes | Additional electrodes placed to specifically record eye movement and heart activity [1]. | Provides a reference signal for regression-based removal of ocular and cardiac artifacts [1] [21]. |

| ICA Algorithm (e.g., in EEGLAB) | A blind source separation algorithm that decomposes multi-channel EEG into independent components for manual or automatic artifact rejection [1] [13]. | Versatile tool for analyzing and removing various artifacts from standard EEG recordings [1]. |

Simultaneous Electroencephalography and functional Magnetic Resonance Imaging (EEG-fMRI) is a powerful multimodal technique that combines the high temporal resolution of EEG with the high spatial resolution of fMRI, providing unparalleled insights into brain dynamics. However, EEG signals recorded inside an MRI scanner are contaminated by severe artifacts that can be hundreds of times greater than the neural signals of interest. These artifacts originate from the MRI environment itself and pose significant challenges for researchers and clinicians. The three primary artifacts are gradient artifacts caused by switching magnetic field gradients during image acquisition, ballistocardiogram (BCG) artifacts resulting from cardiac-related movements in the static magnetic field, and motion artifacts from subject movement. Effective management of these artifacts is essential for obtaining reliable neural data and accurate interpretation of brain connectivity and function. This technical support center provides comprehensive troubleshooting guides and FAQs to address the specific issues researchers encounter during simultaneous EEG-fMRI experiments.

Frequently Asked Questions (FAQs)

What are the main types of artifacts in simultaneous EEG-fMRI?

- Gradient Artifacts (GA): These are the largest source of noise, induced by the rapid switching of magnetic field gradients during fMRI acquisition. The amplitude of GAs can be up to 100 times greater than the EEG signal and their frequency content overlaps with that of neural signals, making simple filtering ineffective [25] [26].

- Ballistocardiogram (BCG) Artifacts: These are caused by cardiac-related head and body movements, as well as the pulsatile flow of blood (a conductive fluid) in the static magnetic field. The BCG artifact is time-locked to the heartbeat and can have an amplitude 3-4 times that of the EEG signal [25] [26] [27].

- Motion Artifacts: These occur when the subject's head moves within the scanner, inducing currents in the EEG electrodes according to Faraday's law of induction. This movement can be caused by the subject themselves or by cardioballistic forces [25].

- Environmental Artifacts: This category includes interference from power lines, ventilation systems, lights in the MR room, and vibrations from the scanner's helium cooling pump [25].

Which BCG artifact removal method should I choose for my study?

The choice of method depends on your analysis goals, as different methods have distinct strengths and weaknesses. The table below summarizes the performance characteristics of common BCG artifact removal techniques, based on a 2025 systematic evaluation [28].

Table 1: Performance Comparison of BCG Artifact Removal Methods

| Method | Best Performance Metric | Key Characteristic | Impact on Network Topology |

|---|---|---|---|

| Average Artifact Subtraction (AAS) | Best signal fidelity (MSE = 0.0038, PSNR = 26.34 dB) [28] | Template-based subtraction; simple but can leave residuals [28] [25] | Affects functional connectivity patterns [28] |

| Optimal Basis Set (OBS) | Highest structural similarity (SSIM = 0.72) [28] | Uses PCA to capture artifact variations; better for temporal structure [28] [26] | Significantly affects network structure [28] |

| Independent Component Analysis (ICA) | Greater sensitivity in dynamic graph metrics [28] | Blind source separation; requires manual component selection [28] [25] | Shows frequency-specific patterns in dynamic graphs [28] |

| OBS + ICA (Hybrid) | Lowest p-values in dynamic connectivity (e.g., theta-beta bands) [28] | Combines strengths of OBS and ICA [28] [27] | Reveals pronounced frequency-specific effects [28] |

| Denoising Autoencoder (DAR) | High SSIM (0.8885) and SNR gain (14.63 dB) [29] [30] | Deep learning approach; learns direct mapping from noisy to clean signals [29] [30] | Not fully characterized for network topology [29] |

For signal quality, AAS or the deep learning-based DAR are strong candidates. If your focus is functional connectivity or network analysis, OBS or hybrid methods (e.g., OBS+ICA) may be more appropriate, as they better preserve the relationships between signals. ICA, while sometimes weaker on pure signal metrics, can be valuable for detecting frequency-specific patterns in dynamic analyses [28].

Can I use simultaneous EEG-fMRI for real-time applications like neurofeedback?

Yes, recent advances have made real-time artifact removal feasible. The EEG-LLAMAS platform is an open-source software specifically designed for low-latency BCG artifact removal. It has been validated for real-time use, introducing an average lag of less than 50 ms, which makes it suitable for closed-loop neurofeedback paradigms within the MRI environment [31] [32].

Why does my EEG data still contain artifacts after applying AAS?

Residual artifacts after Average Artifact Subtraction are common and are often due to temporal jitter. This jitter arises because the MRI machine and the EEG system typically operate on separate clocks, causing slight variations in the sampling of each artifact instance. This in turn degrades the accuracy of the averaged template [26]. To mitigate this, ensure your setup uses synchronized clocks between the EEG and MRI systems. Alternatively, consider using methods like the Optimal Basis Set (OBS) or FASTR, which are explicitly designed to account for this variability by modeling the principal components of the artifact residuals [26].

How do I effectively remove Gradient Artifacts?

The FASTR algorithm, an advanced form of OBS, is widely considered effective for gradient artifact removal. Unlike simple AAS, which uses one average template, FASTR constructs a unique artifact template for each slice in each EEG channel. It then supplements the average with a linear combination of basis functions derived from PCA on the artifact residuals, leading to more thorough cleanup [26]. Furthermore, the choice of fMRI sequence matters. Spiral sequences generate gradient artifacts an order of magnitude larger than Echo Planar Imaging (EPI) sequences. However, with accurate synchronization, AAS can suppress artifacts from both sequences effectively below 80 Hz [33].

Troubleshooting Guides

Guide 1: Systematic Artifact Removal Workflow

The following diagram provides a logical workflow for tackling artifacts in your EEG-fMRI data.

Guide 2: Addressing Poor BCG Artifact Removal

If BCG artifact removal is unsatisfactory, follow this troubleshooting guide.

Experimental Protocols for Key Artifact Removal Methods

Protocol 1: Optimal Basis Set (OBS) for BCG and Gradient Artifacts

The OBS method improves upon AAS by accounting for variability in the artifact shape over time [26].

- Artifact Epoching: Segment the continuous EEG data into epochs time-locked to each heartbeat (for BCG) or slice trigger (for gradient artifacts).

- Template Creation: Compute an average artifact template for each channel from the epochs.

- Principal Component Analysis (PCA): Perform PCA on the matrix of artifact epochs. The dominant principal components form the "Optimal Basis Set" that captures the main modes of artifact variation.

- Projection: For each artifact epoch, project it onto the optimal basis set to create a subject- and epoch-specific template.

- Subtraction: Subtract this tailored template from the original EEG signal in each epoch.

- Signal Reconstruction: Reconstruct the continuous, cleaned EEG signal from the processed epochs.
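The core of the protocol (template, PCA, projection, subtraction) can be sketched for one channel as follows. This is a minimal sketch, assuming the epochs have already been cut around each heartbeat or slice trigger and have equal length; the function name `obs_clean` and the default of three components are illustrative and not taken from [26].

```python
import numpy as np

def obs_clean(epochs, n_components=3):
    """epochs: (n_epochs, n_samples) artifact-locked segments from one channel."""
    template = epochs.mean(axis=0)               # average artifact template
    residuals = epochs - template                # epoch-to-epoch variation
    # PCA via SVD: rows of vt are the principal waveforms of the residuals
    _, _, vt = np.linalg.svd(residuals, full_matrices=False)
    basis = np.vstack([template, vt[:n_components]]).T   # (n_samples, 1 + k)
    # Least-squares fit of the basis to every epoch gives a tailored template
    coeffs, *_ = np.linalg.lstsq(basis, epochs.T, rcond=None)
    fitted = (basis @ coeffs).T
    return epochs - fitted, fitted
```

Any neural activity that happens to project onto the basis is removed as well, so the number of components is a trade-off between residual artifact and loss of signal.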

Protocol 2: Hybrid OBS-ICA Method

This protocol combines the template-based approach of OBS with the blind source separation of ICA, often yielding superior results for connectivity analysis [28] [27].

- Apply OBS: First, clean the data using the standard OBS protocol (steps 1-6 above). This will remove the bulk of the BCG artifact but may leave some residuals.

- Apply ICA: Perform Independent Component Analysis (e.g., using Infomax or FastICA algorithms) on the OBS-cleaned data.

- Component Identification: Manually inspect the resulting independent components and their topographies to identify any residual artifact components. These often have a stereotypical BCG shape or a non-brain-like topography.

- Component Removal: Remove the identified artifact components.

- Signal Reconstruction: Project the remaining components back to the sensor space to obtain the final cleaned EEG signal.
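The last two steps (component removal and back-projection) reduce to a few matrix operations once an ICA implementation has produced an unmixing matrix. The sketch below assumes that matrix is already available, for example from an Infomax or FastICA routine; the function name and argument layout are illustrative assumptions.

```python
import numpy as np

def remove_components(eeg, unmixing, artifact_idx):
    """Zero out selected independent components and back-project to sensor space.

    eeg: (n_channels, n_samples); unmixing: (n_components, n_channels).
    artifact_idx: indices of components judged to be residual artifact.
    """
    sources = unmixing @ eeg                  # component time courses
    mixing = np.linalg.pinv(unmixing)         # columns are component topographies
    keep = np.ones(sources.shape[0], dtype=bool)
    keep[list(artifact_idx)] = False
    # Reconstruct the sensors from the retained components only
    return mixing[:, keep] @ sources[keep]
```

The columns of `mixing` are the topographies inspected in the component identification step, so the same matrix serves both the visual check and the reconstruction.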

The following tables consolidate key performance metrics from recent studies to aid in method selection.

Table 2: Quantitative Performance of Artifact Removal Methods

| Method | Key Metric | Reported Value | Context / Frequency Band |

|---|---|---|---|

| AAS | Mean Squared Error (MSE) | 0.0038 [28] | Best performance for signal fidelity [28] |

| AAS | Peak Signal-to-Noise Ratio (PSNR) | 26.34 dB [28] | Best performance for signal fidelity [28] |

| OBS | Structural Similarity Index (SSIM) | 0.72 [28] | Best performance for structural similarity [28] |

| DAR (Deep Learning) | Root-Mean-Squared Error (RMSE) | 0.0218 ± 0.0152 [29] [30] | Outperforms traditional methods [29] |

| DAR (Deep Learning) | Structural Similarity Index (SSIM) | 0.8885 ± 0.0913 [29] [30] | Outperforms traditional methods [29] |

| DAR (Deep Learning) | SNR Gain | 14.63 dB [29] [30] | Significant improvement over noisy input [29] |

Table 3: Impact on Functional Connectivity (Graph Theory Metrics)

| Artifact Removal Method | Impact on Dynamic Connectivity | Notable Frequency Band Effects |

|---|---|---|

| AAS | Method-specific differences observed [28] | Affects network topology across bands [28] |

| OBS | Method-specific differences observed [28] | Affects network topology across bands [28] |

| ICA | Greater sensitivity in dynamic graphs [28] | Reveals frequency-specific patterns [28] |

| OBS + ICA | Lowest p-values across frequency pairs [28] | Pronounced effects in theta-beta & delta-gamma pairs [28] |

| All Methods | Dynamic analysis shows more pronounced effects than static analysis [28] | Beta and gamma bands show stronger differentiation [28] |

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Hardware and Software for EEG-fMRI Artifact Management

| Item | Type | Function / Application |

|---|---|---|

| MRI-Compatible EEG Amplifier | Hardware | Essential for safe operation inside the scanner; resistant to electromagnetic interference. |

| Synchronization Interface | Hardware | Synchronizes the EEG and MRI clocks to reduce temporal jitter in gradient artifacts [26] [33]. |

| Reference Layer / Carbon Wire Loops | Hardware | Active hardware solution that records artifact signals from a separate layer for subtraction, significantly reducing BCG artifacts [27]. |

| Piezoelectric Sensor / ECG Electrodes | Hardware | Provides a precise reference signal of cardiac activity (QRS complex) for BCG artifact removal algorithms [26]. |

| EEG-LLAMAS Software | Software | Open-source platform for real-time, low-latency (<50 ms) BCG artifact removal, enabling neurofeedback [31]. |

| FASTR Algorithm | Software | An advanced OBS method implemented in software (e.g., in FMRIB's EEGLAB plugin) for effective gradient and BCG artifact removal [26]. |

| Denoising Autoencoder (DAR) | Software (Algorithm) | A deep learning framework that learns to map artifact-contaminated EEG to clean signals, showing state-of-the-art performance [29] [30]. |

From Theory to Practice: A Survey of Modern EEG Artifact Removal Techniques

FAQ: System Setup and Selection

What are the key advantages of modern dry electrode systems over traditional gel-based electrodes? Dry electrode systems offer significant practical benefits for experimental setups. They eliminate the need for skin abrasion and conductive gel, reducing preparation time. Studies show the average setup time for dry electrodes is approximately 4 minutes, compared to over 6 minutes for wet systems [34]. Furthermore, dry electrodes maintain stable signal quality over longer recording periods because they avoid the signal degradation that occurs as conductive gel dries out [34]. Their design often includes features like ultra-high impedance amplifiers and mechanical isolation to stabilize against movement artifacts [34] [35].

My research involves movement. Should I choose a gel-based or dry EEG system? Dry EEG systems are often the more practical choice for studies involving participant movement, although not because they resist motion better: without a gel-based mechanical buffer, they can be more susceptible to motion artifacts. It is their shorter setup time and improved portability that make them well suited to dynamic, real-world settings [35]. For the highest signal fidelity in a fully controlled, stationary environment, a gel-based system might still be preferable.

How scalable are modern EEG acquisition systems for high-throughput research? Modular acquisition systems based on Field-Programmable Gate Array (FPGA) technology now provide high scalability. You can start with a single, compact 8-lead acquisition module and use a daisy-chain interface to expand to 16 leads [36]. For even greater channel counts, multiple basic modules can be connected in parallel to a central FPGA unit, constructing a high-density, high-throughput system suitable for large-scale studies [36].

Troubleshooting Guides

Issue: Poor Signal Quality Across Multiple Channels

Symptoms: Unusually high impedance readings, signals appear noisy or flatlined across several channels.

Resolution Steps:

- Isolate the Problem: Follow the signal chain to identify the faulty component: Recording Software -> Computer -> Amplifier -> Headbox -> Electrodes -> Participant [37].

- Check Electrode Connections: Ensure all electrodes are properly plugged in. Re-clean and re-apply electrodes, adding conductive gel or pressure as needed. Swap out electrodes to rule out a "dead" electrode [37].

- Test Hardware Components: Restart the recording software and amplifier. If the issue persists, try swapping the headbox with a known-working unit. If the problem disappears, the original headbox may be faulty [37].

- Investigate Participant-Specific Factors: If the issue remains after steps 1-3, the cause may be participant-specific. Remove all metal accessories from the participant. Check for hairstyle or skin products that might interfere. Try applying the ground electrode to a different location, such as the participant's hand or collarbone [37].

Issue: Persistent Artifacts in Dry EEG Recordings During Movement

Symptoms: Signal contains high-frequency noise or large amplitude shifts during participant movement.

Resolution Steps:

- Apply a Combined Cleaning Pipeline: Implement a multi-stage denoising strategy. A proven method involves first using ICA-based algorithms (like Fingerprint + ARCI) to remove physiological artifacts (eye, muscle, cardiac), followed by spatial filtering (like Spatial Harmonic Analysis - SPHARA) for general noise reduction [35].

- Validate with Quantitative Metrics: Assess the improvement by calculating standard deviation (SD), signal-to-noise ratio (SNR), and root mean square deviation (RMSD) pre- and post-processing. One study demonstrated that combining Fingerprint+ARCI with an improved SPHARA method reduced SD from 9.76 µV to 6.15 µV and improved SNR from 2.31 dB to 5.56 dB in dry EEG [35].

Performance Data for Hardware Selection

Table 1: Quantitative Comparison of EEG Artifact Removal Methods

| Method Category | Example Techniques | Key Performance Findings | Advantages | Limitations |

|---|---|---|---|---|

| Spatial & ICA-Based Combination | Fingerprint + ARCI + SPHARA [35] | Reduced SD to 6.15 µV; Improved SNR to 5.56 dB [35] | Effective for movement artifacts in dry EEG; complementary noise reduction. | Requires multi-step processing pipeline. |

| Advanced Deep Learning | CLEnet (Dual-scale CNN + LSTM) [38] | Achieved SNR of 11.50 dB and CC of 0.925 in mixed artifact removal [38] | End-to-end removal of multiple artifact types (EMG, EOG, ECG); suitable for multi-channel data. | Requires significant computational resources for training. |

| Generative Deep Learning | AnEEG (LSTM-based GAN) [12] | Lower NMSE/RMSE and higher CC vs. wavelet techniques [12] | Generates artifact-free signals; preserves original neural information. | Complex adversarial training process. |

Table 2: Technical Specifications of a Scalable EEG Acquisition Module

| Parameter | Specification | Research Implication |

|---|---|---|

| Core Chip | ADS1299 [36] | Provides high-quality acquisition with built-in pre-filtering and analog-to-digital conversion. |

| A/D Conversion | 24-bit [36] | Enables capture of microvolt-scale neural signals with high fidelity. |

| Sampling Rate | 250 - 4,000 SPS (for 8 leads) [36] | Offers flexibility for various paradigms, from slow cortical potentials to high-frequency activity. |

| Common-Mode Rejection | -110 dB [36] | Effectively suppresses ambient environmental noise. |

| Scalability | Daisy-chain stacking to 16 leads; parallel module connection [36] | Allows system to grow with research needs, from portable wearables to high-density setups. |

Experimental Protocols

Protocol 1: Validating a Combined Denoising Pipeline for Dry EEG

This protocol is designed to optimize artifact removal from dry EEG data collected during motor tasks [35].

Workflow: The experimental and data processing workflow is as follows:

Methodology Details:

- Equipment: 64-channel dry EEG cap with PU/Ag/AgCl electrodes [35].

- Paradigm: Use a motor execution task (e.g., left/right hand, feet, or tongue movements) with visual cues to generate reproducible cortical patterns [35].

- Processing - ICA-Based Cleaning: Apply the Fingerprint method to automatically identify and classify independent components containing physiological artifacts (ocular, muscular, cardiac). Follow this with the ARCI (Automatic Removal of Cardiac Interference) algorithm to remove these artifacts [35].

- Processing - Spatial Denoising: Apply SPHARA, a spatial filter that uses the harmonic components of the sensor geometry to reduce noise while preserving neural signal patterns across the scalp [35].

- Validation: Calculate the following metrics on the processed data and compare them to the preprocessed (but uncleaned) signal to quantify improvement [35]:

- Standard Deviation (SD): Measures signal variability; lower values indicate reduced noise.

- Signal-to-Noise Ratio (SNR): Measures the level of the desired signal relative to background noise; higher values are better.

- Root Mean Square Deviation (RMSD): Quantifies the difference between the cleaned signal and a reference; interpretation is context-dependent.
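The SD and RMSD comparisons can be sketched as below, assuming arrays of shape (channels, samples) for the preprocessed and the cleaned data. The function name and the choice to average SD across channels are assumptions for illustration; SNR is left out because [35] uses its own definition, which is not reproduced here.

```python
import numpy as np

def summarize_cleaning(pre, post):
    """Compare preprocessed and cleaned EEG, both shaped (n_channels, n_samples)."""
    return {
        # Mean across channels of the per-channel standard deviation
        "sd_pre": pre.std(axis=1).mean(),
        "sd_post": post.std(axis=1).mean(),
        # Overall deviation between the two versions of the signal
        "rmsd": np.sqrt(np.mean((pre - post) ** 2)),
    }
```

A drop in SD after cleaning suggests reduced noise, but it should be read together with RMSD, since an overly aggressive pipeline lowers SD by removing neural signal as well.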

Protocol 2: Implementing a Deep Learning-Based Artifact Removal Model

This protocol uses the CLEnet model for end-to-end removal of various artifacts from multi-channel EEG data [38].

Workflow: The deep learning pipeline for artifact removal involves the following stages:

Methodology Details:

- Model Architecture: CLEnet integrates two main branches [38]:

- Morphological Feature Extraction: Uses two Convolutional Neural Networks (CNNs) with different kernel sizes to identify and extract features at multiple scales. An improved one-dimensional Efficient Multi-Scale Attention (EMA-1D) module is embedded to enhance relevant temporal features during this process [38].

- Temporal Feature Extraction: Processes the features using a Long Short-Term Memory (LSTM) network to capture the temporal dependencies inherent in EEG signals [38].

- Training: The model is trained in a supervised manner using a mean squared error (MSE) loss function, which minimizes the difference between the model's output and the ground-truth clean EEG signals [38].

- Datasets: The model can be trained and validated on semi-synthetic datasets (where clean EEG is artificially mixed with EOG, EMG, or ECG) or on real datasets with labeled artifacts [38].
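Semi-synthetic training pairs of the kind described above are commonly built by scaling an artifact recording so that the mixture reaches a chosen signal-to-noise ratio. The sketch below shows one such construction; the function names and the RMS-based SNR definition are assumptions for illustration and may differ from the exact procedure in [38].

```python
import numpy as np

def rms(x):
    """Root mean square of a 1-D signal."""
    return np.sqrt(np.mean(np.square(x)))

def mix_at_snr(clean, artifact, snr_db):
    """Return clean + lam * artifact, with lam chosen to hit the target SNR (dB)."""
    # SNR = 20 * log10(rms(clean) / rms(lam * artifact))  ->  solve for lam
    lam = rms(clean) / (rms(artifact) * 10.0 ** (snr_db / 20.0))
    return clean + lam * artifact
```

Sweeping `snr_db` over a range of values yields contaminated and clean pairs at several contamination levels for supervised training with the MSE loss.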

The Scientist's Toolkit

Table 3: Essential Research Reagents and Hardware for EEG Acquisition

| Item | Function / Explanation |

|---|---|

| Scalable EEG Acquisition Module | A foundational hardware unit, often based on chips like the ADS1299, that provides the core functions of signal amplification, filtering, and analog-to-digital conversion for a set number of channels. It forms the basis for scalable systems [36]. |

| FPGA (Field-Programmable Gate Array) Central Module | A reconfigurable hardware processor that enables high-throughput data streaming, parallel processing of multiple acquisition modules, and real-time implementation of complex algorithms like artifact removal [36]. |

| Dry PU/Ag/AgCl Electrodes | Dry-contact electrodes made from Polyurethane with Silver/Silver Chloride coating. They enable rapid setup without gel and are suitable for wearable systems, though may be more prone to movement artifacts [35]. |

| SPHARA (Spatial Harmonic Analysis) | A spatial filtering algorithm used for denoising. It leverages the geometric structure of the EEG electrode array to separate signal from noise in the spatial domain and is particularly effective when combined with other methods [35]. |

| ICA-Based Cleaning Algorithms (Fingerprint, ARCI) | A set of algorithms that use Independent Component Analysis to blindly separate recorded EEG into statistically independent components. These can be automatically or manually classified and removed before signal reconstruction [35]. |

| CLEnet Model | A pre-trained or customizable deep learning model integrating CNN and LSTM networks designed for end-to-end artifact removal from multi-channel EEG data, capable of handling multiple artifact types [38]. |

Troubleshooting Guides and FAQs for EEG Artifact Reduction

Frequently Asked Questions (FAQs)

Q1: What is the primary value of using ICA over simple artifact rejection for EEG data?

ICA allows for the subtraction of artifacts embedded in the data without removing the affected data portions. This is superior to simply rejecting bad data segments because it preserves the original amount of data, which leads to a higher signal-to-noise ratio for subsequent analysis. Artifact rejection, in contrast, reduces the number of trials available, which can be detrimental for analyses like multivariate pattern analysis that benefit from larger data sets [39] [40].

Q2: My ICA results look different each time I run it on the same data. Is this a problem?

Slight variations are normal. When using algorithms like Infomax ICA (runica), decompositions start with a random weight matrix, so convergence is slightly different every time [41]. Features that do not remain stable across multiple runs on the same data should not be interpreted. For a rigorous assessment of reliability, you can use tools like the RELICA plugin, which performs ICA on bootstrapped versions of your data [41].

Q3: How much data do I need to compute a stable ICA decomposition?

ICA works best with a large amount of basically similar and mostly clean data [41]. A general rule is that you need more than kN^2 data sample points, where N is the number of channels and k is a multiplier that tends to increase with the number of channels [42]. For example, with a 32-channel dataset, having 30,800 data points gives about 30 points per weight, which is sufficient. For high-density arrays (e.g., 256 channels), significantly more data is required [42].
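The rule of thumb is simple arithmetic, shown here as a short sketch. The helper names, the choice of k = 30 for the 256-channel case, and the 500 Hz sampling rate are illustrative assumptions, not recommendations from [42].

```python
def points_per_weight(n_samples, n_channels):
    """Data points available per ICA weight (the unmixing matrix has N^2 weights)."""
    return n_samples / n_channels ** 2

def min_samples(n_channels, k):
    """Minimum samples under the k * N^2 rule of thumb; k is chosen by the analyst."""
    return k * n_channels ** 2

ratio_32 = points_per_weight(30_800, 32)   # the 32-channel example from the text
needed_256 = min_samples(256, k=30)        # k=30 is only an illustrative choice
seconds_256 = needed_256 / 500             # recording length at an assumed 500 Hz
```

For the 32-channel example this gives about 30.1 points per weight. Under the same k, a 256-channel recording would need 1,966,080 samples, roughly 65.5 minutes at 500 Hz, and since k tends to grow with channel count the real requirement is likely higher.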

Q4: What are the key criteria for identifying an artifact component versus a brain component?

You should evaluate multiple properties of each component [41]:

- Scalp Map: Artifacts have characteristic topographies. For example, eye blink components show strong frontal projections, while muscle artifacts can be more peripheral [41].

- Time Course: Inspect the component's activation for patterns like individual eye movements or high-frequency muscle noise [41].

- Power Spectrum: The power spectrum of an eye blink artifact typically shows a smoothly decreasing pattern, while muscle artifacts have broad-band high-frequency power [41].

- ERPimage: For epoched data, this can reveal if the component's activity is time-locked to events [41].

Q5: For single-channel EEG systems, can I still use ICA?

Standard multi-channel ICA is not applicable to single-channel data. However, alternative data-driven decomposition methods have been developed for single-channel EEG. These include techniques like Empirical Mode Decomposition (EMD), Singular Spectrum Analysis (SSA), and the more recent Fixed Frequency Empirical Wavelet Transform (FF-EWT), which can separate artifact sources from the single-channel signal [43].
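To make the single-channel idea concrete, here is a minimal sketch of basic Singular Spectrum Analysis in NumPy. It is a textbook-style SSA, not the method evaluated in [43]; the window length, the toy drift and rhythm, and the choice to treat the leading component as the artifact are assumptions for illustration.

```python
import numpy as np

def ssa_components(x, window):
    """Split a 1-D signal into `window` additive components via basic SSA."""
    n = len(x)
    k = n - window + 1
    # Trajectory (Hankel) matrix: column j holds x[j : j + window].
    traj = np.lib.stride_tricks.sliding_window_view(x, window).T
    u, s, vt = np.linalg.svd(traj, full_matrices=False)
    comps = np.empty((window, n))
    for i in range(window):
        elem = s[i] * np.outer(u[:, i], vt[i])
        # Diagonal averaging: average entries with equal row + column index.
        flipped = elem[::-1]
        comps[i] = [flipped.diagonal(d).mean() for d in range(-window + 1, k)]
    return comps

fs = 250
t = np.arange(1000) / fs
drift = 50.0 * t / t[-1]                     # slow electrode-drift-like ramp
rhythm = 5.0 * np.sin(2 * np.pi * 10 * t)    # toy 10 Hz rhythm
x = drift + rhythm

comps = ssa_components(x, window=50)
drift_estimate = comps[0]                    # leading component: dominant slow trend
cleaned = x - drift_estimate
```

The components sum back to the original signal, so removing an artifact amounts to leaving selected components out of the sum. Deciding which components are artifactual (by frequency content, amplitude, or another criterion) remains the analyst's call.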

Troubleshooting Common ICA Problems

Problem: Poor ICA Decomposition Quality

- Cause 1: Insufficient data. The dataset is too small for the number of channels.

- Solution: Ensure you have enough data points (see Q3 above). If data is limited, use the PCA option during ICA to reduce the number of components to be found [41].

- Cause 2: Presence of strong low-frequency drifts. Slow drifts reduce the independence of sources [44].

- Solution: Apply a high-pass filter before running ICA. A cutoff frequency of 1 Hz is recommended. Note that the ICA solution found from the filtered signal can be applied to the unfiltered raw signal [44].

- Cause 3: Incorrect channel types included.

- Solution: If your dataset contains both EEG and non-EEG channels (e.g., EMG), run ICA only on the EEG channels. ICA assumes that sources mix instantaneously (with zero delay) at the sensors, which may not hold for signals like EMG that involve propagation delays [41].

Problem: Unable to Identify Specific Artifacts

- Cause: Lack of reference for what artifacts look like in components.

- Solution: Use automated tools to flag potential artifacts. The MNE-Python package, for example, includes functions like `create_eog_epochs` and `create_ecg_epochs`, which automatically detect ocular and cardiac artifacts and help find the ICA components that best match them [44].

Key Experimental Protocols and Data

Protocol 1: Standard ICA for Ocular and Cardiac Artifact Removal in MNE-Python

This protocol outlines the steps for using ICA to remove eye blinks and heartbeats from EEG/MEG data within the MNE-Python framework [44].

- Filter the Data: High-pass filter the data at 1 Hz to remove slow drifts that can negatively impact the ICA solution. Keep a copy of the unfiltered data.

- Create ICA Object: Instantiate the ICA object, specifying the method (e.g., `fastica`, `picard`, or `infomax`) and the number of components (`n_components`).

- Fit ICA: Fit the ICA model to the filtered data.

- Detect Artifacts:

- Use `create_eog_epochs` to find segments of data containing eye blinks.

- Use `create_ecg_epochs` to find segments containing heartbeats.

- Find Artifact Components: For each artifact type, use the `find_bads_ecg` and `find_bads_eog` methods to identify which independent components best match the artifact.

- Visual Inspection: Plot the properties of the suspected components (topography, time course, spectrum) to confirm they are artifacts.

- Apply ICA: Specify the identified artifact components for exclusion and apply the ICA solution to the original, unfiltered data. This reconstructs the sensor signals without the artifact components.
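The last steps of this protocol (matching components to a reference channel, excluding them, and reconstructing the sensor signals) are plain linear algebra. The NumPy sketch below illustrates that logic only; it does not call MNE-Python and is not how MNE implements `find_bads_eog` or `apply`. The toy sources, the mixing matrix, the EOG reference, the 0.8 correlation threshold, and the use of the true inverse as a stand-in for a fitted unmixing matrix are all assumptions.

```python
import numpy as np

rng = np.random.default_rng(1)
fs, n_samples = 250, 5000
t = np.arange(n_samples) / fs

# Toy sources: two oscillations, broadband noise, and a blink-like pulse train.
blink = np.zeros(n_samples)
for onset in range(500, n_samples, 1000):
    blink[onset:onset + 50] += np.hanning(50) * 100.0
sources = np.vstack([
    np.sin(2 * np.pi * 10 * t),
    np.sin(2 * np.pi * 6 * t + 1.0),
    rng.normal(size=n_samples),
    blink,
])

mixing = rng.normal(size=(4, 4))      # hypothetical mixing matrix (channels x sources)
data = mixing @ sources               # "recorded" 4-channel EEG
unmixing = np.linalg.inv(mixing)      # stand-in for a fitted ICA unmixing matrix

# Hypothetical EOG reference channel: the blink plus sensor noise.
eog = blink + rng.normal(scale=5.0, size=n_samples)

# Find artifact components: correlate each component with the reference.
components = unmixing @ data
corr = np.array([abs(np.corrcoef(c, eog)[0, 1]) for c in components])
exclude = np.flatnonzero(corr > 0.8)  # threshold is an illustrative choice
keep = np.setdiff1d(np.arange(len(components)), exclude)

# Apply: back-project only the retained components to sensor space.
cleaned = mixing[:, keep] @ components[keep]
```

Because the unmixing matrix is just a linear map, the same matrix can be applied to a differently filtered copy of the data, which is why a solution fitted on 1 Hz high-passed data can clean the unfiltered recording.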

Protocol 2: Advanced Dry EEG Denoising with Combined Methods

A 2025 study introduced a pipeline that combines temporal and spatial methods for superior artifact reduction in dry EEG, which is particularly prone to noise [35].

- Apply ICA-based cleaning: Use the "Fingerprint" method followed by the "ARCI" method to remove physiological artifacts (eye, muscle, cardiac).

- Spatial Filtering: Apply Spatial Harmonic Analysis (SPHARA) to the output. The improved version of SPHARA includes an additional step of zeroing out artifactual jumps in single channels before applying the spatial filter.

- Validation: The performance of the combined method was quantitatively assessed using Signal-to-Noise Ratio (SNR), standard deviation (SD), and Root Mean Square Deviation (RMSD), showing superior results compared to either method alone [35].
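The idea behind spatial harmonic filtering can be sketched with a simplified stand-in. The code below is not the SPHARA implementation from [35]: it substitutes a k-nearest-neighbour graph Laplacian for the sensor-geometry operator, and the sensor positions, neighbour count, and number of retained harmonics are hypothetical.

```python
import numpy as np

def graph_laplacian(positions, n_neighbors=4):
    """Unnormalized graph Laplacian from a symmetric k-nearest-neighbour graph."""
    dist = np.linalg.norm(positions[:, None, :] - positions[None, :, :], axis=-1)
    n = len(positions)
    adj = np.zeros((n, n))
    for i in range(n):
        nearest = np.argsort(dist[i])[1:n_neighbors + 1]  # skip the sensor itself
        adj[i, nearest] = 1.0
    adj = np.maximum(adj, adj.T)                          # make the graph undirected
    return np.diag(adj.sum(axis=1)) - adj

def spatial_lowpass(data, laplacian, n_harmonics):
    """Project channels x time data onto the n lowest-frequency spatial harmonics."""
    _, vecs = np.linalg.eigh(laplacian)   # eigenvalues ascending: smooth modes first
    basis = vecs[:, :n_harmonics]
    return basis @ (basis.T @ data)

rng = np.random.default_rng(3)
pos = rng.normal(size=(32, 3))
pos /= np.linalg.norm(pos, axis=1, keepdims=True)
pos[:, 2] = np.abs(pos[:, 2])             # hypothetical sensors on the upper hemisphere

t = np.arange(500) / 250.0
smooth = np.outer(pos[:, 0], np.sin(2 * np.pi * 10 * t))  # left-right gradient pattern
noisy = smooth + rng.normal(size=smooth.shape)            # spatially white noise

lap = graph_laplacian(pos)
filtered = spatial_lowpass(noisy, lap, n_harmonics=8)
err_before = np.mean((noisy - smooth) ** 2)
err_after = np.mean((filtered - smooth) ** 2)
```

Keeping fewer harmonics removes more spatially uncorrelated noise but also blurs focal activity, so the number of retained harmonics is a trade-off that should be tuned per montage.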

Quantitative Performance of Dry EEG Denoising Techniques

The following table summarizes the results from a 2025 study comparing different denoising pipelines for dry EEG data [35].

| Denoising Method | Standard Deviation (μV) | Signal-to-Noise Ratio (dB) | Root Mean Square Deviation (μV) |

|---|---|---|---|

| Reference (Preprocessed) | 9.76 | 2.31 | 4.65 |

| Fingerprint + ARCI | 8.28 | 1.55 | 4.82 |

| SPHARA | 7.91 | 4.08 | 6.32 |

| Fingerprint + ARCI + SPHARA | 6.72 | 5.56 | 6.90 |
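The changes relative to the preprocessed reference are easier to compare once expressed as differences. The short sketch below only does arithmetic on the values in the table above; the dictionary layout and key names are illustrative.

```python
# Values copied from the table above: (SD in uV, SNR in dB, RMSD in uV).
results = {
    "Reference (Preprocessed)": (9.76, 2.31, 4.65),
    "Fingerprint + ARCI": (8.28, 1.55, 4.82),
    "SPHARA": (7.91, 4.08, 6.32),
    "Fingerprint + ARCI + SPHARA": (6.72, 5.56, 6.90),
}

ref_sd, ref_snr, ref_rmsd = results["Reference (Preprocessed)"]
summary = {}
for name, (sd, snr, rmsd) in results.items():
    summary[name] = {
        "sd_reduction_pct": round(100 * (ref_sd - sd) / ref_sd, 1),
        "snr_gain_db": round(snr - ref_snr, 2),
        "rmsd_change_uv": round(rmsd - ref_rmsd, 2),
    }
```

Read this way, the combined pipeline lowers SD by about 31% and raises SNR by 3.25 dB relative to the reference, whereas Fingerprint + ARCI alone lowers SD by about 15% but shows an SNR 0.76 dB below the reference in this table.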

ICA Algorithm Comparison for EEG Data

The table below compares common ICA algorithms available in toolboxes like EEGLAB and MNE-Python [41] [44] [42].

| Algorithm | Description | Best Use Case / Notes |

|---|---|---|

| Infomax (runica) | Default in EEGLAB; uses gradient ascent to maximize information transfer [41]. | General purpose; stable for up to hundreds of channels [42]. Use the extended option for subgaussian sources like line noise [41]. |

| FastICA | Uses fixed-point iteration to maximize non-Gaussianity [44]. | Fast for computing components one-by-one, but overall decomposition may not be faster than Infomax [42]. |

| Picard | A newer algorithm using accelerated optimization [44]. | Faster convergence and more robust for real EEG/MEG data where sources may not be completely independent [44]. |

| Jader | Uses 4th-order moments (kurtosis) [42]. | Impractical for high-density datasets (>50 channels) due to high memory demands [42]. |

Essential Visualizations

ICA-Based EEG Cleaning Workflow

ICA as a Blind Source Separation Model

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in ICA for EEG |

|---|---|

| EEGLAB | A MATLAB toolbox providing a comprehensive interactive environment for ICA analysis, including running decompositions, component inspection, and labeling [41]. |

| MNE-Python | An open-source Python package for exploring, visualizing, and analyzing human neurophysiological data. It includes implementations of FastICA, Picard, and Infomax algorithms, and automated tools for finding artifact components [44]. |

| BrainVision Analyzer | A commercial software package that integrates ICA into a user-friendly workflow for EEG data processing, including tools for unmixing and back-projecting components [40]. |

| RELICA Plugin | An EEGLAB plugin used to assess the reliability and stability of ICA decompositions by bootstrapping the data, helping to address the stochastic nature of ICA [41]. |

| ICLabel | An EEGLAB plugin that provides an automated classification of independent components into categories such as brain, muscle, eye, heart, line noise, and channel noise, aiding in objective component selection [41]. |

| Dry EEG Cap (e.g., waveguard touch) | A 64-channel dry electrode system used in mobile and ecological recording scenarios. Research indicates that combined methods (ICA + SPHARA) are particularly effective for denoising the more pronounced artifacts in dry EEG [35]. |

Blind Source Separation (BSS) is a powerful suite of unsupervised learning algorithms fundamental to modern electroencephalography (EEG) research. These techniques are designed to solve a core problem in neural signal processing: isolating unknown source signals from their mixtures recorded at the scalp without prior information about the sources or their mixing process [45]. In the context of EEG, these unknown sources represent a combination of neural activity originating from the brain and various artifacts from physiological (e.g., eye blinks, muscle activity, heartbeats) and non-physiological origins [46] [35]. The ability of BSS to disentangle these superimposed signals makes it an indispensable tool for reducing neural data artifacts, thereby enhancing the reliability and validity of neuroscientific findings and clinical applications, including drug development research [47].

The mathematical foundation of BSS models the multichannel EEG measurements, \( X \in \mathbb{R}^{M \times T} \) (where \( M \) is the number of electrodes and \( T \) is the number of time points), as a linear mixture of unknown source signals, \( S \in \mathbb{R}^{M \times T} \), such that \( X = A S \). Here, \( A \in \mathbb{R}^{M \times M} \) is an unknown mixing matrix that encapsulates the volume conduction properties of the head. The goal of any BSS algorithm is to estimate a demixing matrix, \( W \in \mathbb{R}^{M \times M} \), which inverts this process to recover the original sources: \( \hat{S} = W X \). The core challenge lies in estimating \( W \) based only on the observed data \( X \) and a statistical principle that defines "source independence" [45]. Different BSS algorithms employ different principles and optimization strategies to achieve this separation, each with distinct strengths and weaknesses for handling various types of EEG artifacts and preserving neural signals of interest.
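The model can be made concrete with a small NumPy experiment: mix known sources with a random matrix, estimate a demixing matrix from the mixtures alone, and check that the sources are recovered up to order and scale. The sketch uses a compact symmetric FastICA with a tanh nonlinearity as one possible independence principle; it is a teaching example rather than a replacement for the toolbox implementations, and the toy sources, seeds, and iteration settings are assumptions.

```python
import numpy as np

def whiten(x):
    """Center and whiten channels x time data; returns whitened data and the whitening matrix."""
    x = x - x.mean(axis=1, keepdims=True)
    vals, vecs = np.linalg.eigh(x @ x.T / x.shape[1])
    k = (vecs / np.sqrt(vals)).T          # diag(vals^-1/2) @ vecs.T
    return k @ x, k

def sym_decorrelate(w):
    """Symmetric orthogonalization: W <- (W W^T)^(-1/2) W."""
    vals, vecs = np.linalg.eigh(w @ w.T)
    return (vecs / np.sqrt(vals)) @ vecs.T @ w

def fastica(x, n_iter=200, tol=1e-8, seed=0):
    """Estimate a demixing matrix W such that S_hat = W @ (x - mean)."""
    z, k = whiten(x)
    n, t = z.shape
    w = sym_decorrelate(np.random.default_rng(seed).normal(size=(n, n)))
    for _ in range(n_iter):
        g = np.tanh(w @ z)
        g_prime = 1.0 - g ** 2
        # Fixed-point update: E[z g(w^T z)] - E[g'(w^T z)] w, for all rows at once.
        w_new = sym_decorrelate(g @ z.T / t - np.diag(g_prime.mean(axis=1)) @ w)
        converged = np.max(np.abs(np.abs(np.diag(w_new @ w.T)) - 1.0)) < tol
        w = w_new
        if converged:
            break
    return w @ k

rng = np.random.default_rng(7)
t = np.arange(5000) / 250.0
s = np.vstack([
    np.sin(2 * np.pi * 10 * t),            # oscillatory source
    np.sign(np.sin(2 * np.pi * 3 * t)),    # square-wave source
    rng.laplace(size=t.size),              # heavy-tailed noise source
])
a = rng.normal(size=(3, 3))                # unknown mixing matrix A
x = a @ s                                  # observed mixtures X = A S

w = fastica(x)
s_hat = w @ (x - x.mean(axis=1, keepdims=True))
# Best absolute correlation of each true source with any recovered component.
match = np.abs(np.corrcoef(np.vstack([s, s_hat]))[:3, 3:]).max(axis=1)
```

The recovered components come back in arbitrary order and with arbitrary sign and scale, which is why the check uses absolute correlations rather than comparing \( \hat{S} \) to \( S \) directly.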

Core BSS Algorithms and Their Underlying Principles

Independent Component Analysis (ICA) Variants