A Researcher's Guide to Troubleshooting Reproducibility in Biochemical Screening Assays

Abstract

This article provides a comprehensive framework for researchers and drug development professionals to diagnose, troubleshoot, and resolve reproducibility issues in biochemical screening assays. Covering foundational principles, methodological best practices, systematic troubleshooting of common pitfalls, and rigorous validation techniques, the guide synthesizes current industry standards and scientific literature. It aims to equip scientists with actionable strategies to enhance data quality, minimize false positives, and accelerate reliable hit identification in drug discovery.

Defining the Problem: Why Reproducibility is the Cornerstone of Reliable Screening

Key Terminology FAQ

Q1: What is the core difference between reproducibility and replicability?

The terms are often used interchangeably, but they describe distinct concepts. The most common definitions are contrasted below. Note that some fields, particularly computer science, use the opposing definitions [1] [2].

Table: Comparison of Common Terminology Frameworks

| Term | Claerbout & Karrenbach (Common in Computational & Biological Sciences) [2] | Association for Computing Machinery (ACM) [2] |

|---|---|---|

| Reproducible | A researcher can duplicate results using the original author's data, code, and materials [3] [2] [4]. | An independent group obtains the same result using artifacts they develop completely independently (different team, different setup) [1] [2]. |

| Replicable | A new study, collecting new data, arrives at the same scientific findings as a prior study [2] [5]. | An independent group obtains the same result using the original author's artifacts (different team, same setup) [1] [2]. |

In summary:

- Reproducibility generally concerns the ability to re-run the same analysis to confirm the original findings [4] [5].

- Replicability generally concerns the ability to confirm findings through a new, independent study [4] [5].

Q2: How do robustness and generalisability fit into this framework?

These terms describe related but more advanced stages of reliable research [2]:

- Robustness: A result is robust when the same dataset is subjected to different analysis workflows (e.g., one pipeline in R, another in Python) and produces a qualitatively similar answer. This shows the finding is not dependent on a specific analytical method [2].

- Generalisability: This combines replicable and robust findings. A generalisable result is one that is not dependent on a particular dataset nor a specific analysis pipeline, indicating it may be widely applicable [2].

Q3: Why is there a "reproducibility crisis" in science?

Surveys indicate that more than 70% of researchers have tried and failed to reproduce another scientist's experiments, and over half believe there is a significant crisis [3]. In machine learning, this is exacerbated by factors like code not being shared; one survey found only 6% of presenters at top AI conferences shared their algorithm's code [3]. Contributing factors include [1] [3] [4]:

- Poor documentation of methods, data analysis, and materials.

- Lack of transparency in available data, code, and raw results.

- Publication bias, where journals prefer novel, positive, statistically significant results.

- Misaligned incentives that prioritize new findings over confirmation.

Q4: What are the specific barriers to reproducibility in computational and machine learning assays?

In computational fields, achieving methods reproducibility is particularly challenging due to [3]:

- Non-determinism in hardware and software: GPU floating-point calculations and some functions in libraries like cuDNN are not guaranteed to be reproducible across runs.

- Randomness in algorithms: Random weight initialization, dataset shuffling, and layers with inherent randomness (e.g., dropout) can produce different results each time.

- Evolving frameworks and platforms: Frequent updates to machine learning frameworks can change behaviors, making it difficult to rerun old code.

- Complex, undocumented workflows: The research process often involves numerous iterative changes to code and data that are not fully captured or archived.

Q5: What is a key strategy to improve reproducibility in high-throughput screening (HTS) for drug discovery?

A foundational strategy is rigorous assay validation and process optimization before initiating a full HTS campaign. This involves [6] [7]:

- Statistical evaluation of the HTS process to ensure it can reliably distinguish active from non-active compounds.

- Using robust statistics to handle assay variability, such as employing medians instead of means for data that does not conform to a normal distribution [7].

- Treating cells as reagents by establishing and documenting standard procedures for cell culture preparation, handling, and authentication to ensure consistency [7].
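As a minimal sketch of the robust-statistics point above, the following scores each well against the plate median and scales by the median absolute deviation (MAD) rather than the mean and standard deviation. The function name and the example signals are hypothetical, and the 1.4826 constant assumes you want the MAD to approximate a standard deviation for normally distributed data.

```python
import statistics

def robust_z_scores(values):
    """Score each well against the plate median, scaled by the MAD."""
    med = statistics.median(values)
    mad = statistics.median(abs(v - med) for v in values)
    if mad == 0:
        raise ValueError("MAD is zero; robust z-scores are undefined")
    # 1.4826 rescales the MAD so it estimates the standard deviation
    # when the underlying data are normally distributed.
    scale = 1.4826 * mad
    return [(v - med) / scale for v in values]

# Hypothetical raw signals from one plate; the last well is an outlier.
signals = [100.0, 102.0, 98.0, 101.0, 99.0, 250.0]
scores = robust_z_scores(signals)
```

Because the median and MAD are barely moved by the single extreme well, the outlier stands out clearly while the remaining wells keep small scores. A mean-based z-score on the same data would be pulled toward the outlier.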

Troubleshooting Guides for Reproducibility Issues

Guide 1: Troubleshooting Non-Reproducible Computational Results

This guide addresses the common "It doesn't run!" problem when trying to reproduce a computational analysis.

Table: Troubleshooting Computational Reproducibility

| Symptom | Possible Cause | Solution |

|---|---|---|

| Code fails to execute or produces errors. | Missing software dependencies, incorrect versions, or outdated code. | Use containerization (e.g., Docker, Singularity) to package the exact operating system and software environment. For smaller projects, use virtual environments (e.g., Conda) with version-pinned packages. |

| Results are numerically slightly different. | Underlying non-determinism in hardware (GPUs) or software (random number generation, parallel processing). | Set all possible random seeds (Python, NumPy, TensorFlow, PyTorch) and use deterministic algorithms where available. Document all seed values used. |

| Results are drastically different. | Undocumented pre-processing steps, different data, or incorrect use of the provided code. | Demand access to the rawest form of the data and the full analysis pipeline, from raw data to final results. Check for discrepancies in data splitting or normalization procedures. |

| Performance metrics are much lower. | Hyperparameters were not adequately reported or tuned; the model is sensitive to small changes. | Report the hyperparameter search space, the method used for selection (e.g., random search, Bayesian optimization), and the final chosen values for every experiment [3]. |

Prevention Protocol: Adopt a reproducibility checklist for all projects. Key items include [3]:

- Code & Data: Share version-controlled code and link to a downloadable dataset.

- Dependencies: List all software dependencies and their versions.

- Hyperparameters: Specify the range of hyperparameters considered and the method for selecting the best ones.

- Computing Infrastructure: Describe the hardware and software environment used (e.g., GPU model, CUDA version).
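The checklist items on seeds, dependencies, and infrastructure can be captured in a small run manifest. The sketch below uses only the Python standard library; `seed_everything` and `run_manifest` are hypothetical helper names, and any framework you use (NumPy, PyTorch, TensorFlow) would need its own seeding call added.

```python
import json
import os
import platform
import random
import sys
from importlib import metadata

def seed_everything(seed):
    """Seed the stdlib RNG; add framework-specific seeding if you use one."""
    random.seed(seed)
    # PYTHONHASHSEED only affects Python processes started after this point.
    os.environ["PYTHONHASHSEED"] = str(seed)

def run_manifest(seed, packages):
    """Collect the facts a reader needs to rebuild the environment."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = "not installed"
    return {
        "seed": seed,
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "packages": versions,
    }

seed_everything(1234)
first = [random.random() for _ in range(3)]
seed_everything(1234)
second = [random.random() for _ in range(3)]  # same draws as `first`

# The second package name is deliberately fictitious to show the fallback.
manifest = run_manifest(1234, ["numpy", "definitely-not-a-real-package-xyz"])
manifest_json = json.dumps(manifest, indent=2, sort_keys=True)
```

Saving `manifest_json` next to the results gives later readers the seed and package versions without having to reconstruct them from memory.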

Guide 2: Troubleshooting Irreproducible Biochemical Assays

This guide helps diagnose and solve issues where experimental results in biochemical screens cannot be reproduced.

Table: Troubleshooting Experimental Reproducibility in Assays

| Symptom | Possible Cause | Solution |

|---|---|---|

| High well-to-well or plate-to-plate variability. | Unoptimized assay conditions, reagent instability, or pipetting inaccuracies. | Perform a full assay validation, determining key parameters like Z'-factor to assess assay robustness. Use liquid handlers with regular calibration and ensure reagents are properly stored and fresh. |

| In-cell assays show high variation. | Cell line misidentification, contamination, or inconsistent cell culture conditions (passage number, confluence, media). | Authenticate cell lines regularly and use good cell culture practice. Document passage numbers and ensure consistent handling. Treat cells as validated reagents [7]. |

| Inability to distinguish true positives from nuisance compounds. | Compound interference (e.g., aggregation, reactivity, fluorescence). | Run orthogonal assays and counter-screens to identify and filter out compounds with non-specific activity [7]. Follow guidelines like the Assay Guidance Manual (AGM). |

| Results from a new study do not replicate the original findings. | Differences in experimental conditions that were not fully documented (e.g., buffer composition, temperature, instrument settings). | Provide a detailed, step-by-step protocol in the methods section. Document all materials (source, catalog number, batch) and instrument settings. |

Prevention Protocol: Adhere to a structured checklist for reporting, such as the RIDGE checklist for segmentation models, which can be adapted for general assay development [8]. Key items include:

- Materials: Provide comprehensive details on biological reagents, compounds, and equipment (make, model, software version).

- Data Sources & Eligibility: Describe data sources, imaging modalities, and precise eligibility criteria for samples.

- Ground Truth: Detail the qualifications of annotators, the tools used for annotation, and methods for measuring and mitigating interobserver variability [8].

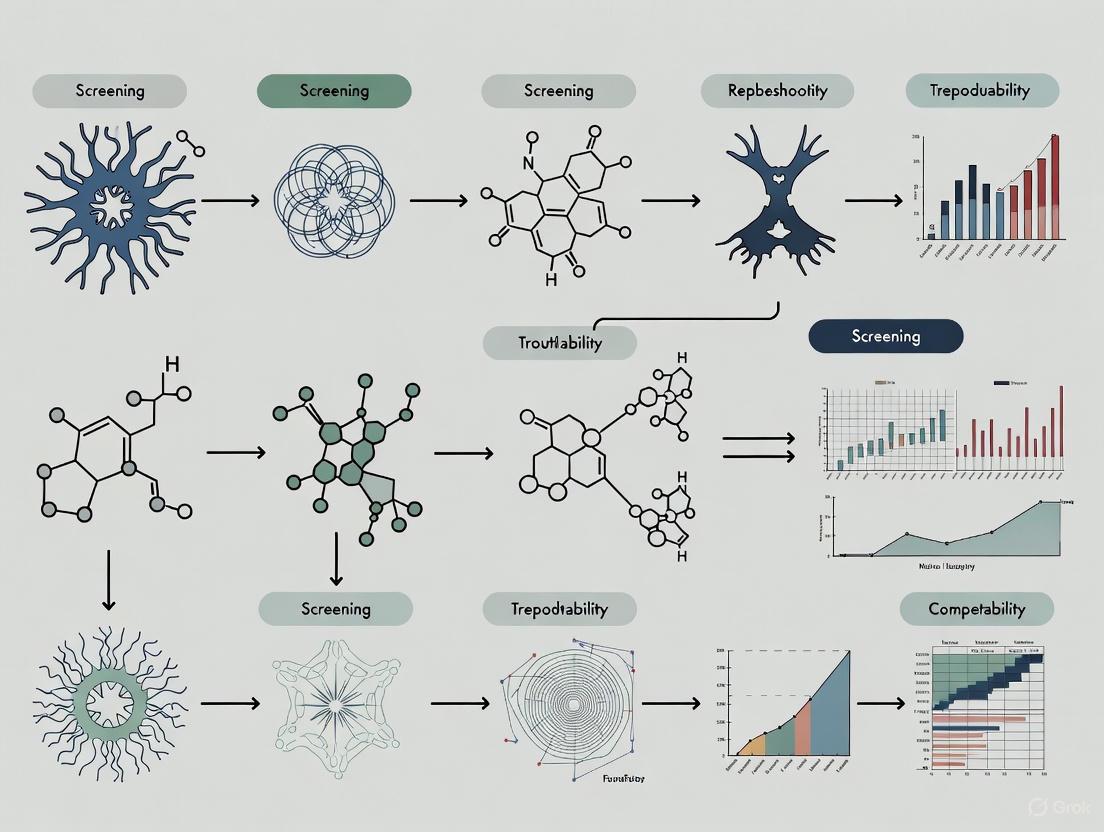

Conceptual Workflow Diagram

The following diagram illustrates the logical relationship between the key concepts of reproducibility, replicability, and robustness, and how they build toward generalisable knowledge.

The Scientist's Toolkit: Essential Research Reagent Solutions

This table details key materials and tools essential for ensuring reproducibility in experimental research, particularly in fields like biochemical screening.

Table: Essential Reagents and Tools for Reproducible Research

| Item | Function in Ensuring Reproducibility | Key Considerations |

|---|---|---|

| Authenticated Cell Lines | The foundational biological reagent for in vitro assays. Using misidentified or contaminated lines invalidates all subsequent results. | Regularly authenticate using STR profiling. Document source, passage number, and culture conditions. Treat cells as validated reagents, not just tools [7]. |

| Assay Guidance Manual (AGM) | A free, comprehensive eBook of best practices for developing robust and reproducible assays for drug discovery. | Provides disease-agnostic standards for assay design, validation, and implementation for HTS and structure-activity relationship (SAR) studies [7]. |

| Version-Controlled Code | Tracks all changes to computational analysis scripts, allowing anyone to recreate the exact analysis at any point in time. | Use systems like Git. Combine with containerization (e.g., Docker) to capture the full software environment. |

| Sample Management System | Ensures sample integrity (e.g., compounds, proteins) by tracking source, concentration, storage conditions, and freeze-thaw cycles. | A strong collaborative relationship between screening and sample handling groups is critical to identify the root cause of assay failure [7]. |

| Statistical Reproducibility Tools | Pre-registration of study design and analysis plans guards against p-hacking and other forms of analytical bias [2]. | Clearly define the choice of statistical tests, model parameters, and threshold values before conducting the analysis. |

Troubleshooting Guides

TR-FRET Assay Troubleshooting

Issue: No assay window.

- Cause & Solution: Incorrect instrument setup is the most common reason. Consult your instrument manufacturer's setup guides to ensure compatibility with TR-FRET assays and verify that the correct emission filters are installed. Unlike other fluorescence assays, TR-FRET is exceptionally sensitive to emission filter selection [9].

Issue: Inconsistent EC50/IC50 values between labs.

- Cause & Solution: Differences often originate from variations in compound stock solution preparation. Standardize the preparation of 1 mM stock solutions across all teams and laboratories [9].

Issue: High variability between sample replicates.

- Cause & Solution: Pipetting errors or reagent lot-to-lot variability. Use ratiometric data analysis (acceptor signal/donor signal) to account for small variances in reagent delivery and lot-to-lot differences. Always use a master mix for working solutions [9].

Luciferase Reporter Assay Troubleshooting

Issue: Weak or no signal.

- Cause & Solution: Non-functional reagents, low transfection efficiency, or a weak promoter. Check reagent functionality and plasmid DNA quality. Optimize transfection by testing different DNA-to-transfection reagent ratios. The sample signal must be above the background and negative control [10].

Issue: High background signal.

- Cause & Solution: Contamination or suboptimal plate selection. Use newly prepared reagents and fresh samples. For luminescence assays, use white plates with clear bottoms to reduce background [10].

Issue: High variability between experiments.

- Cause & Solution: Pipetting errors or using different reagent batches. Prepare a master mix, use a calibrated multichannel pipette, and employ a dual-luciferase assay system to normalize data with an internal control reporter [10].

Issue: Signal interference.

- Cause & Solution: Test compounds may inhibit the luciferase enzyme. Avoid known inhibitors like resveratrol, use proper controls, or lower the concentration of the interfering compound [10].

Biochemical Assay General Troubleshooting

Issue: Apparent IC50 value differs from published data.

- Cause & Solution: A 2- to 3-fold variation is typically within the expected range. Larger differences can arise from variations in assay sensitivity, enzyme-to-substrate ratio, reaction time, or whether a pre-incubation step with the enzyme was used. Adhere strictly to the published protocol, and note that values from different assay formats (e.g., colorimetric vs. mass spectrometry) are not directly comparable [11].

Issue: Colored compounds interfere with colorimetric readouts.

- Cause & Solution: The compound's intrinsic color absorbs light at the detection wavelength. For each concentration of the colored compound, prepare a separate blank and normalize the assay signal against it [11].

Frequently Asked Questions (FAQs)

Q: What is the difference between IC50 and EC50?

- A: The IC50 (Inhibitory Concentration 50) is the concentration of a compound required to inhibit a biological or enzymatic process by 50%. It is used for inhibitors. The EC50 (Effective Concentration 50) is the concentration required to produce 50% of a maximum desired effect and is used for agonists [11].

Q: How should I design a dose-response experiment to determine an accurate IC50?

- A: Use a serial dilution of your compound in 3-fold increments across 9-10 concentrations. The concentration range must be sufficient to capture the full curve, with the lowest concentrations showing no effect and the highest concentrations showing maximum effect, defining the upper and lower asymptotes of the graph [11].
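To see how much range such a design covers, the dilution series can be generated directly. This is a small sketch: `dilution_series` is a hypothetical helper, and the 10 µM top concentration is only an example value.

```python
def dilution_series(top_conc, fold=3.0, points=10):
    """Return concentrations from highest to lowest for a serial dilution."""
    if top_conc <= 0 or fold <= 1 or points < 2:
        raise ValueError("need top_conc > 0, fold > 1, and points >= 2")
    return [top_conc / fold ** i for i in range(points)]

# Hypothetical top concentration of 10 (units are whatever you pass in).
series = dilution_series(10.0, fold=3.0, points=10)
span = series[0] / series[-1]  # fold range covered by the series
```

A 10-point, 3-fold series spans 3^9, or roughly 4.3 log units, which is usually what makes it possible to observe both asymptotes of the curve.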

Q: Can I use cell lysates with my biochemical assay kit?

- A: It is generally not recommended. Biochemical assay kits are designed with validated, recombinant purified proteins to directly test compound effects on a specific target. Using complex cell lysates introduces many uncontrolled variables, making it impossible to confirm the signal's specificity [11].

Q: My assay has a large window but is still not robust. Why?

- A: Assay window alone is an insufficient measure of robustness. Use the Z'-factor, a statistical parameter that incorporates both the assay window size and the variability (standard deviation) of your data. An assay with a Z'-factor > 0.5 is considered excellent for screening. A large window with high noise can have a worse Z'-factor than a small, tight window [9].
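The comparison between a large, noisy window and a small, tight one can be checked numerically. The sketch below implements the Z'-factor formula using the sample standard deviation (an assumption; use whichever estimator your validation plan specifies), and all control values are hypothetical.

```python
import statistics

def z_prime(positive, negative):
    """Z'-factor from positive- and negative-control well signals."""
    mu_p, mu_n = statistics.mean(positive), statistics.mean(negative)
    sd_p, sd_n = statistics.stdev(positive), statistics.stdev(negative)
    if mu_p == mu_n:
        raise ValueError("control means are identical; no assay window")
    return 1 - 3 * (sd_p + sd_n) / abs(mu_p - mu_n)

# Hypothetical controls: a 10-fold window with high scatter ...
wide_noisy = z_prime([1000, 700, 1300, 850, 1150], [100, 40, 160, 70, 130])
# ... versus a 3-fold window with very tight replicates.
narrow_tight = z_prime([300, 305, 295, 302, 298], [100, 103, 97, 101, 99])
```

With these example numbers the 10-fold window falls well below the 0.5 threshold, while the 3-fold window clears it comfortably.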

Q: What is a homogeneous assay?

- A: A homogeneous assay requires no wash steps to remove unbound molecules, as unbound material does not interfere with the detection signal. This makes it simpler and more amenable to high-throughput screening (e.g., TR-FRET, AlphaScreen). A heterogeneous assay, like an ELISA, requires multiple wash steps [11].

Experimental Protocols

Protocol 1: TR-FRET Ratiometric Data Analysis

Ratiometric analysis is critical for minimizing pipetting and reagent variability in TR-FRET assays [9].

- Collect Raw Data: Obtain Relative Fluorescence Unit (RFU) values for both the donor and acceptor emission channels.

- Calculate Emission Ratio: For each well, divide the acceptor RFU by the donor RFU.

- For a Terbium (Tb) donor: Ratio = RFU (520 nm) / RFU (495 nm)

- For a Europium (Eu) donor: Ratio = RFU (665 nm) / RFU (615 nm)

- Normalize Data (Optional but Recommended): To express data as a normalized response ratio, divide all emission ratio values by the average emission ratio of the negative control (bottom of the curve). This sets the assay window starting at 1.0 and does not affect the IC50 calculation [9].

- Plot and Analyze: Graph the normalized ratio against the logarithm of the compound concentration to generate your dose-response curve.
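The ratio and normalization steps above can be sketched as follows. The helper names and RFU values are hypothetical; the example assumes a Tb donor, with the acceptor read at 520 nm and the donor at 495 nm.

```python
import statistics

def emission_ratios(acceptor_rfu, donor_rfu):
    """Per-well acceptor/donor emission ratio."""
    if len(acceptor_rfu) != len(donor_rfu):
        raise ValueError("acceptor and donor lists must be the same length")
    return [a / d for a, d in zip(acceptor_rfu, donor_rfu)]

def normalize_to_negative_control(ratios, negative_control_ratios):
    """Divide every ratio by the mean negative-control ratio."""
    baseline = statistics.mean(negative_control_ratios)
    return [r / baseline for r in ratios]

# Hypothetical readings for three sample wells and two negative-control wells.
ratios = emission_ratios([2000.0, 4000.0, 8000.0], [10000.0, 10000.0, 10000.0])
negative = emission_ratios([1000.0, 1000.0], [10000.0, 10000.0])
normalized = normalize_to_negative_control(ratios, negative)

# A well that received 10% less reagent: both channels drop together,
# so the ratio is unchanged even though the raw RFUs are lower.
under_dispensed = emission_ratios([1800.0], [9000.0])
```

The last line illustrates why the ratio is preferred over raw acceptor signal: a proportional dispensing error cancels out in the division.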

Protocol 2: Determining IC50 for an Enzyme Inhibitor

This protocol outlines the steps for a biochemical enzyme inhibition assay [11].

- Compound Preparation:

- Dissolve the compound in 100% DMSO at a high concentration (e.g., 10-100 mM).

- Perform a serial dilution in DMSO using 3-fold increments to create a 10-point dilution series.

- Further dilute the compound in assay buffer, ensuring the final concentration of DMSO is constant (e.g., 0.1-1%) across all wells, including controls.

- Assay Setup:

- In a low-binding 96-well plate, combine the diluted compound, enzyme, and substrate in assay buffer according to the kit protocol.

- Include critical controls: a "no compound" control (100% enzyme activity), a "no enzyme" control (background signal), and if available, a control with a known inhibitor.

- Reaction and Detection:

- Incubate the plate under the recommended conditions (time, temperature).

- Develop the assay and read the signal using a compatible plate reader (e.g., fluorescence, luminescence).

- Data Analysis:

- Calculate the percentage of inhibition for each compound concentration:

% Inhibition = [1 - (Signal_compound - Signal_background) / (Signal_no_compound - Signal_background)] * 100

- Plot the % Inhibition vs. the Log10 of the compound concentration.

- Fit the data using a four-parameter logistic (4PL) curve fit to determine the IC50 value.
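The Data Analysis step can be sketched in a few lines. The code below computes percent inhibition, defines the 4PL model, and estimates where the curve crosses 50% by log-linear interpolation between the two bracketing points. That interpolation is a quick sanity check under stated assumptions, not a substitute for a proper 4PL fit in your curve-fitting software; all function names and data are hypothetical.

```python
import math

def percent_inhibition(signal, background, no_compound):
    """% inhibition relative to the no-compound and no-enzyme controls."""
    return (1 - (signal - background) / (no_compound - background)) * 100

def four_pl(conc, bottom, top, ic50, hill):
    """4PL model: % inhibition rising from bottom to top with concentration."""
    return bottom + (top - bottom) / (1 + (ic50 / conc) ** hill)

def interpolate_ic50(concs, inhibitions):
    """Log-linear interpolation of the 50% crossing (not a 4PL fit)."""
    points = sorted(zip(concs, inhibitions))
    for (c_lo, y_lo), (c_hi, y_hi) in zip(points, points[1:]):
        if y_lo <= 50 <= y_hi and y_hi != y_lo:
            frac = (50 - y_lo) / (y_hi - y_lo)
            log_lo, log_hi = math.log10(c_lo), math.log10(c_hi)
            return 10 ** (log_lo + frac * (log_hi - log_lo))
    raise ValueError("curve does not cross 50% inhibition")

# Simulated, noise-free data from a 4PL curve with a true IC50 of 1.0.
concs = [100.0 / 3 ** i for i in range(10)]
inhibitions = [four_pl(c, bottom=0.0, top=100.0, ic50=1.0, hill=1.0) for c in concs]
estimate = interpolate_ic50(concs, inhibitions)

# A well halfway between background (100) and full activity (1000).
half = percent_inhibition(signal=550.0, background=100.0, no_compound=1000.0)
```

On this simulated series the interpolated value lands close to the true IC50; with real, noisy data the 4PL fit remains the appropriate method because it uses every point on the curve.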

Data Presentation

Table 1: Quantitative Metrics for Assay Validation and Reproducibility

| Metric | Formula / Description | Interpretation | Reference |

|---|---|---|---|

| Z'-factor | $1 - \dfrac{3(\sigma_{\text{positive}} + \sigma_{\text{negative}})}{\lvert \mu_{\text{positive}} - \mu_{\text{negative}} \rvert}$ | > 0.5: Excellent assay for screening. | [9] |

| Assay Window | (Signal at top of curve) / (Signal at bottom of curve) | A fold-change; however, a large window does not guarantee a good Z'-factor. | [9] |

| IC50 | Concentration causing 50% inhibition. | A measure of compound potency; lower value = greater potency. | [11] |

| EC50 | Concentration causing 50% of max effect. | A measure of agonist effectiveness; lower value = greater potency. | [11] |

Table 2: Research Reagent Solutions for Enhanced Reproducibility

| Reagent / Tool | Function in Assay | Impact on Reproducibility |

|---|---|---|

| Automation-ready Consumables (e.g., SpecPlate) | Meniscus-free, defined optical pathlength plates for UV/Vis. | Eliminates dilution steps and pipetting errors; ideal for HTS automation [12]. |

| Biomimetic Barrier Systems (e.g., PermeaPad) | Synthetic barrier for passive permeability studies. | Provides a consistent, animal-free model that is more reproducible than variable cell-based systems [12]. |

| Fluorescent Ligands (for HCS) | Non-radioactive probes for studying ligand-receptor interactions in live cells. | Enables real-time, high-content kinetic readouts with improved safety and subcellular resolution [13]. |

| Master Mixes | A single, homogeneous mixture of all assay reagents. | Reduces pipetting variation and well-to-well variability, standardizing reaction conditions [9] [10]. |

| Validated Reference Inhibitors/Agonists | Internal controls provided with or purchased for assay kits. | Serves as a benchmark for assay performance and cross-experiment comparison [11]. |

Workflow and Signaling Pathway Diagrams

Assay Troubleshooting Flow

HCS vs Traditional Assay Workflow

Reproducibility is a fundamental challenge in biochemical screening assays, with over 50% of preclinical results estimated to be irreproducible, costing billions annually in research funds [14]. For researchers and drug development professionals, identifying and controlling the root causes of variability is essential for generating reliable, decision-grade data. This guide addresses three major sources of variability—reagent stability, environmental factors, and protocol deviations—providing actionable troubleshooting and FAQs to enhance the rigor of your experimental workflows.

Troubleshooting FAQs

1. My assay shows high background. What should I check?

High background is frequently caused by insufficient washing, which fails to remove unbound components [15]. Ensure you are following the recommended washing procedure precisely. You can increase the number of washes or add a 30-second soak step between washes to improve stringency. Also, verify that you are using fresh plate sealers and reservoirs for each step, as reused materials can harbor residual HRP enzyme that causes high background [15].

2. I have achieved a standard curve, but my sample readings are inconsistent (poor duplicates). What are the likely causes?

Poor duplicates typically point to issues with liquid handling or plate condition [15]. First, check your washing process; if using an automatic plate washer, ensure all ports are clean and unobstructed. Second, assess your pipetting technique and ensure all reagents are at room temperature before use to minimize volumetric errors. Finally, uneven plate coating or a poor-quality plate that binds unevenly can also cause this issue [15].

3. My assay results are inconsistent from one run to the next (poor assay-to-assay reproducibility). How can I fix this?

This often stems from uncontrolled variables between runs [15]. Key areas to standardize include:

- Protocol Adherence: Strictly follow the same protocol from run to run, avoiding any modifications.

- Incubation Conditions: Adhere to the recommended incubation temperature and times. Avoid incubating plates in areas with environmental fluctuations [15].

- Reagent Preparation: Check calculations and prepare fresh standard curves and buffers for each run. Using internal controls can also help normalize data across runs [15].

4. I suspect a new lot of reagent is causing a shift in my results. What is the best way to investigate this?

Perform a reagent lot crossover study [16]. Run a set of patient samples or quality controls using both the old and new reagent lots in the same assay. Compare the results to determine if the difference is statistically and clinically significant. CLSI guidelines provide detailed frameworks for designing these studies [16]. If an unacceptable bias is found, you may need to request a replacement lot from the manufacturer or, after appropriate validation, apply a correction factor [16].

5. What environmental factors are most critical to control in a testing laboratory?

Several environmental factors must be monitored and controlled to ensure assay accuracy [17]:

- Temperature: Critical for reagent stability, enzyme kinetics, and proper operation of volumetric equipment.

- Humidity: High humidity can cause hygroscopic materials to absorb moisture, altering their weight and composition.

- Vibrations: Can disrupt sensitive equipment like analytical balances and cause settling or stratification in samples.

- Air Quality: Airborne particles and chemical vapors can contaminate samples or reagents.

Troubleshooting Guide: Common Problems and Solutions

Table 1: Common ELISA Issues and Solutions

| Problem | Possible Source | Recommended Test or Action |

|---|---|---|

| High Background | Insufficient washing [15] | Increase wash number; add 30-second soak step [15]. |

| High Background | Contaminated buffers or reused plate sealers [15] | Use fresh plate sealers and reservoirs; make fresh buffers. |

| No Signal | Incorrectly prepared or old reagents [15] | Check calculations; make new buffers/standards; use new standard vial. |

| No Signal | Reagents added in wrong order [15] | Review and repeat protocol, ensuring correct order. |

| Poor Duplicates | Uneven coating or poor plate quality [15] | Use a qualified ELISA plate; check coating volumes and method. |

| Poor Duplicates | Pipetting error [15] | Ensure reagents are at room temperature; check pipette calibration. |

| Poor Assay-to-Assay Reproducibility | Variations in incubation temperature/time [15] | Adhere strictly to recommended protocols; avoid areas with temperature fluctuations. |

| Poor Assay-to-Assay Reproducibility | Buffer contamination or improper standard prep [15] | Make fresh buffers and standard curves for each run. |

| Edge Effects | Uneven temperature across plate [15] | Use plate sealers; avoid incubating plates on uneven surfaces. |

Table 2: Environmental Factors Impacting Assay Performance

| Factor | Potential Impact on Assays | Control and Monitoring Method |

|---|---|---|

| Temperature | Alters reaction kinetics, reagent stability, and equipment performance [17]. | Use calibrated thermometers; record ambient and incubation temperatures [17]. |

| Humidity | Can cause condensation, alter sample concentration, or impact hygroscopic materials [17]. | Use dehumidifiers/humidifiers; record relative humidity levels [17]. |

| Vibrations | Causes noise in sensitive data and can disrupt equipment alignment [17]. | Use anti-vibration platforms; monitor equipment repeatability [17]. |

| Air Quality | Particulates or chemical vapors can contaminate samples and reagents [17]. | Maintain proper ventilation; use closed containers; track QC sample results [17]. |

| Electrical Supply | Surges or dips can damage instruments or cause reading errors [17]. | Use uninterruptible power supplies (UPS) and voltage stabilizers [17]. |

Experimental Protocols for Validation

Protocol 1: Reagent Stability Testing

Purpose: To determine the in-use and shelf-life stability of critical reagents, ensuring consistent performance over time.

Method (Isochronous Design) [18]:

- Aliquot and Storage: On Day 0, prepare multiple identical aliquots of the reagent and store them under the recommended conditions (e.g., -20°C).

- Scheduled Removal: On each scheduled test day (e.g., Day 1, 7, 30, etc.), remove one aliquot from storage and immediately transfer it to a presumed stable storage condition (e.g., -70°C or lower) to halt further degradation.

- Final Batch Testing: At the end of the study period, test all aliquots—including a freshly prepared one (Day 0 control)—in a single, randomized batch experiment. This minimizes inter-assay variability.

- Data Analysis: Compare the performance of each time-point aliquot against the Day 0 control. The stability limit is the longest duration for which the reagent's performance (e.g., measured signal, recovery of a known concentration) remains within pre-defined acceptance criteria (e.g., ±5% deviation) [18].
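The Data Analysis step can be expressed as a simple check of each time point against the Day 0 control. In this sketch the function name, the signals, and the conservative choice to stop at the first out-of-tolerance time point are assumptions; the ±5% default mirrors the example acceptance criterion above.

```python
def stability_limit(day0_value, timepoints, tolerance_pct=5.0):
    """Return the last day before the first out-of-tolerance time point.

    `timepoints` maps storage day -> measured value. Returns None if even
    the earliest time point fails the acceptance criterion.
    """
    limit = None
    for day, value in sorted(timepoints.items()):
        deviation = (value - day0_value) / day0_value * 100
        if abs(deviation) > tolerance_pct:
            break  # conservative: ignore later points that drift back in range
        limit = day
    return limit

# Hypothetical signals from one batch run, all aliquots tested together.
day0 = 100.0
results = {1: 99.0, 7: 97.5, 30: 96.0, 90: 92.0, 180: 95.5}
limit = stability_limit(day0, results)
```

Here the Day 90 aliquot deviates by 8%, so the reported limit is Day 30 even though the Day 180 value happens to fall back within tolerance.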

Protocol 2: Plate Uniformity Assessment

Purpose: To validate the performance and signal variability of an assay across the entire microplate before commencing high-throughput screening [19].

Method (Interleaved-Signal Format) [19]:

- Plate Layout: Create a plate layout that interleaves "Max," "Min," and "Mid" signal controls across the plate. For example, in a 96-well plate, assign wells for maximum signal (H), minimum signal (L), and a mid-point signal (M) in a patterned fashion (e.g., Figure 1 below).

- Assay Execution: Run the assay according to your protocol over multiple days (e.g., 3 days for a new assay) using independently prepared reagents each day.

- Data Analysis: Calculate the Z'-factor for each day to assess the assay's robustness and signal window. Analyze the data for spatial patterns (e.g., edge effects, row/column biases) that indicate environmental non-uniformity.

- Z'-factor: A statistical measure of assay quality. A Z'-factor > 0.5 is generally considered excellent for screening.
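A sketch of the layout and spatial-pattern check might look like the following. The specific interleaving rule (shifting the H/M/L pattern by one well per row) is a hypothetical choice for illustration, as are the function names and the simulated plate; the edge-versus-interior comparison is done within one control type so that signal level is not confounded with position.

```python
import statistics

def interleaved_layout(rows=8, cols=12):
    """Shift the H/M/L pattern by one well per row (a hypothetical pattern)."""
    labels = "HML"
    return [[labels[(r + c) % 3] for c in range(cols)] for r in range(rows)]

def edge_vs_interior(signals, layout, label):
    """Mean signal of edge wells vs. interior wells for one control type."""
    rows, cols = len(layout), len(layout[0])
    edge, interior = [], []
    for r in range(rows):
        for c in range(cols):
            if layout[r][c] != label:
                continue
            on_edge = r in (0, rows - 1) or c in (0, cols - 1)
            (edge if on_edge else interior).append(signals[r][c])
    return statistics.mean(edge), statistics.mean(interior)

layout = interleaved_layout()

# Hypothetical plate: interior wells read 100, edge wells read 10% low.
signals = [
    [90.0 if r in (0, 7) or c in (0, 11) else 100.0 for c in range(12)]
    for r in range(8)
]
edge_mean, interior_mean = edge_vs_interior(signals, layout, "H")
```

A consistent gap between the two means across days points toward an edge effect such as evaporation or uneven incubation temperature rather than random noise.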

Protocol 3: Reagent Lot Crossover Study

Purpose: To evaluate the equivalence of a new reagent lot against the current lot before implementation.

Method [16]:

- Sample Selection: Select a panel of 5-10 patient samples or quality control materials that span the assay's reportable range (low, mid, and high concentrations).

- Testing: Assay all selected samples with both the current (old) reagent lot and the new reagent lot in the same run, preferably in duplicate or triplicate.

- Statistical Analysis: Perform linear regression and Bland-Altman analysis to compare results. The laboratory must pre-define acceptance criteria for bias (e.g., mean difference < 5%) based on the medical requirements of the test [16].
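The statistical comparison can be sketched with the standard library alone. The code below computes a Bland-Altman style percent bias with 95% limits of agreement and an ordinary least-squares line; OLS is used here for simplicity and is an assumption, since some laboratories prefer other regression methods for method comparison. The paired values are hypothetical.

```python
import statistics

def bland_altman(old, new):
    """Mean percent difference (bias) and 95% limits of agreement."""
    pct_diffs = [(n - o) / ((n + o) / 2) * 100 for o, n in zip(old, new)]
    bias = statistics.mean(pct_diffs)
    sd = statistics.stdev(pct_diffs)
    return bias, (bias - 1.96 * sd, bias + 1.96 * sd)

def linear_fit(x, y):
    """Ordinary least-squares slope and intercept of y on x."""
    mx, my = statistics.mean(x), statistics.mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx

# Hypothetical paired results; the new lot reads 3% higher throughout.
old_lot = [10.0, 25.0, 50.0, 100.0, 200.0]
new_lot = [10.3, 25.75, 51.5, 103.0, 206.0]

bias, limits = bland_altman(old_lot, new_lot)
slope, intercept = linear_fit(old_lot, new_lot)
acceptable = abs(bias) < 5.0  # example criterion from the protocol above
```

A slope near 1 with an intercept near 0 suggests a purely proportional shift, while the bias figure is what gets compared against the pre-defined acceptance limit.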

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Managing Reagent Variability

| Item | Function | Best Practice Guidance |

|---|---|---|

| ELISA Plate (Qualified) | Solid phase for antibody binding. | Use plates designed for ELISA, not tissue culture [15]. |

| Reference Standards & Controls | Calibrate the assay and monitor performance. | Handle according to directions; use new vials for critical assays [15]. |

| Stable, Low-Background Substrate | Generates detectable signal (e.g., color, light). | Mix and use immediately; protect from light [15]. |

| Liquid Handling Equipment (Calibrated) | Accurate and precise dispensing of reagents. | Calibrate regularly; use correct pipettes and tips; ensure tips are sealed [14]. |

| Plate Sealer (Disposable) | Prevents evaporation and contamination during incubation. | Use a fresh sealer for each assay step; do not reuse [15]. |

| Documented Stability Data | Provides manufacturer's data on reagent shelf-life. | Follow storage and in-use stability claims; conduct in-house verification [18]. |

Assay Validation and Troubleshooting Workflows

Assay Troubleshooting Logic Flow

Reagent Lot Validation Decision Flow

Frequently Asked Questions (FAQs)

Q1: A recent survey suggested that over 70% of researchers have been unable to reproduce other scientists' experiments, and over 50% have been unable to reproduce their own. What are the primary factors contributing to this reproducibility crisis? [20] [21]

A1: The reproducibility crisis in life science research, including single-cell transcriptomics, stems from several interconnected factors [20] [21]:

- Lack of access to data and materials: Reproducibility is hindered when raw data, protocols, and key research materials like authenticated cell lines are not readily shared [20].

- Poor experimental design and research practices: Studies designed without a thorough review of existing evidence or with insufficient blinding and randomization are less likely to be reproducible [20].

- Inability to manage complex datasets: Many researchers lack the tools or training to properly analyze, interpret, and store the large, complex datasets generated by modern technologies like single-cell RNA sequencing (scRNA-seq) [20] [22].

- Cognitive and reporting biases: Confirmation bias and the under-reporting of negative or null results skew the scientific literature, making it difficult to assess the true state of knowledge [20].

- Competitive academic culture: The pressure to publish novel, positive findings in high-impact journals often de-incentivizes the careful, repetitive work required for reproducibility [20].

Q2: In single-cell RNA-seq analysis, my cluster results seem to change every time I re-run my analysis with slightly different parameters. How can I stabilize my findings?

A2: Cluster instability is a well-known source of irreproducibility in single-cell genomics [23]. It is common for reanalysis of the same dataset to find 20% fewer or more clusters, with only 50-70% equivalence in cell-type assignments [23]. This arises from numerous analytical decisions. To improve robustness:

- Transparent Reporting: Clearly document all criteria and parameters used for quality control, normalization, highly variable gene selection, and the clustering algorithm itself [23].

- Internal Reproducibility Evaluation: Assess the robustness of your clusters by performing permutations. For example, randomly remove 10% of cells and re-cluster the remainder; a meaningful fraction of cells will often reassign to a different cluster. Reporting a reproducibility metric, like a Rand Index, from such an exercise is recommended [23].

- Define Core Clusters: Consider designating only the cells that consistently cluster together across permutations as a "core" population for downstream analysis, while treating cells with ambiguous identity separately [23].
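
As a rough illustration of the reproducibility metric mentioned above, the sketch below computes a plain Rand Index between two sets of cluster labels for the same cells. The function name and the toy labels are hypothetical, and the pairwise loop is only practical for small examples; for real datasets a library implementation (for instance an adjusted Rand score) is likely a better choice.

```python
from itertools import combinations

def rand_index(labels_a, labels_b):
    """Fraction of cell pairs on which two clusterings agree.

    A pair "agrees" when both clusterings put the two cells together,
    or both put them apart. Label names do not need to match.
    """
    if len(labels_a) != len(labels_b):
        raise ValueError("both label lists must cover the same cells in the same order")
    if len(labels_a) < 2:
        raise ValueError("need at least two cells to form a pair")
    agree = 0
    total = 0
    for i, j in combinations(range(len(labels_a)), 2):
        same_a = labels_a[i] == labels_a[j]
        same_b = labels_b[i] == labels_b[j]
        agree += int(same_a == same_b)
        total += 1
    return agree / total

# Hypothetical labels for the same 8 cells from the original run and a re-run
original = ["A", "A", "A", "B", "B", "B", "C", "C"]
rerun = ["x", "x", "y", "y", "y", "y", "z", "z"]
ri = rand_index(original, rerun)
print(f"Rand Index = {ri:.3f}")
```

When cells are removed for a permutation, only the cells present in both runs should be compared, in the same order.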

Q3: My single-cell experiment has data from thousands of individual cells. Can I treat these individual cells as biological replicates for statistical testing when comparing conditions?

A3: No, this is a critical mistake that leads to a high false-positive rate. Treating cells as independent replicates is a statistical error known as sacrificial pseudoreplication, because it confounds variation within a sample with variation between samples [24]. Cells from the same biological sample are correlated. One study found that analyses ignoring sample variation had false-positive rates between 30% and 80%, while methods accounting for it had rates of 2-3% [24].

- Correct Approach: Pseudobulking. A standard correction is to use a "pseudobulk" approach. This involves summing or averaging read counts for each cell type within each biological sample first. Traditional bulk RNA-seq differential expression methods (e.g., DESeq2, limma) are then applied to these sample-level summaries [24].
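
The aggregation step can be sketched as follows, assuming each cell is represented as a small record with a sample label, a cell-type label, and a gene-to-count mapping. The record layout, gene names, and sample names are illustrative assumptions, not a format prescribed by any particular tool.

```python
from collections import defaultdict

def pseudobulk(cells):
    """Sum per-cell counts into one profile per (sample, cell_type) pair."""
    totals = defaultdict(lambda: defaultdict(int))
    for cell in cells:
        key = (cell["sample"], cell["cell_type"])
        for gene, count in cell["counts"].items():
            totals[key][gene] += count
    # Convert nested defaultdicts to plain dicts for easier downstream use
    return {key: dict(genes) for key, genes in totals.items()}

# Hypothetical cells from two biological samples
cells = [
    {"sample": "ctrl_1", "cell_type": "T", "counts": {"GeneA": 3, "GeneB": 0}},
    {"sample": "ctrl_1", "cell_type": "T", "counts": {"GeneA": 1, "GeneB": 2}},
    {"sample": "ctrl_1", "cell_type": "B", "counts": {"GeneA": 0, "GeneB": 5}},
    {"sample": "treat_1", "cell_type": "T", "counts": {"GeneA": 7, "GeneB": 1}},
]
profiles = pseudobulk(cells)
for key, genes in profiles.items():
    print(key, genes)
```

Each resulting profile corresponds to one biological sample and cell type, so the number of replicates in the downstream test equals the number of samples, not the number of cells.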

Q4: I am planning my first single-cell RNA-seq experiment. What are the most common pitfalls during sample preparation, and how can I avoid them?

A4: Success in scRNA-seq starts long before sequencing. Common pitfalls and their solutions include [25]:

- Inappropriate Cell Suspension Buffer: The presence of media, DEPC, RNases, magnesium, calcium, or EDTA can interfere with the reverse transcription reaction. Solution: Wash and resuspend cells in EDTA-, Mg2+-, and Ca2+-free 1x PBS or a recommended FACS collection buffer [25].

- Delayed Processing: RNA degradation begins immediately after cell collection. Solution: Process samples immediately after plating or snap-freeze them in dry ice and store at –80°C until processing [25].

- Low Viability and High Debris: Low viability can lead to sequencing mostly ambient RNA instead of cellular transcriptomes. Solution: Aim for cell preparations with >90% viability and minimal debris [24].

- Skipping Pilot Experiments: Different cell types have varying RNA content. Solution: Always run a pilot experiment with a few samples and controls to optimize parameters like PCR cycle numbers for your specific cell type [25].

Troubleshooting Guides

Issue 1: High Background in Negative Controls

- Potential Cause: Contamination from the environment, amplicons from previous PCR reactions, or reagents.

- Solution: Practice stringent RNA-seq lab techniques. This includes wearing a clean lab coat and gloves, changing gloves frequently, and maintaining physically separated pre- and post-PCR workspaces. Using a clean room with positive air flow for pre-PCR work greatly decreases contamination risk [25].

Issue 2: Low cDNA Yield After Reverse Transcription

- Potential Cause 1: The reverse transcription reaction is being inhibited by components in your sample buffer.

- Potential Cause 2: The starting RNA mass is too low or the protocol is not optimized for your cell type.

- Solution: Perform a pilot experiment. Use a positive control with a known amount of RNA (e.g., 10 pg for single cells) to confirm your technique. Adjust the number of PCR cycles based on the expected RNA content of your cells [25].

Issue 3: Inconsistent Clustering Results

- Potential Cause: The inherent variability and numerous decision points in the scRNA-seq analytical pipeline.

- Solution: Adopt a standardized workflow for internal validation [23].

- Documentation: Meticulously record all parameters for QC, normalization, and clustering.

- Permutation Test: Systematically remove a random subset (e.g., 10%) of cells or samples and re-run your clustering pipeline.

- Calculate a Reproducibility Metric: Use a metric like the Rand Index to quantify the agreement between original and new cluster assignments.

- Refine Clusters: Use the results to define a stable set of "core" clusters for robust downstream analysis.

Quantitative Data on Reproducibility

The following tables summarize key quantitative findings from studies on research reproducibility.

Table 1: Survey Data on Reproducibility Challenges

| Finding | Field | Source | Reference |

|---|---|---|---|

| Over 70% of researchers could not reproduce another scientist's experiments. | Biology | Nature Survey (2016) | [20] |

| Over 50% of researchers could not reproduce their own experiments. | Biology | Nature Survey (2016) | [20] [21] |

| Only 20-25% of validation studies were "completely in line" with original oncology reports. | Oncology Drug Development | Prinz et al. | [26] |

| Only 6 out of 53 "landmark" preclinical studies could be confirmed. | Oncology | Begley & Ellis | [26] |

| An estimated $28 billion per year is spent on non-reproducible preclinical research. | Preclinical Research | Meta-analysis (2015) | [20] |

Table 2: Single-Cell RNA-seq Sample Preparation Guidelines

| Parameter | Recommendation | Purpose | Source |

|---|---|---|---|

| Cell Concentration | 1,000 - 1,600 cells/µL | Optimal for cell capture in droplet-based systems (e.g., 10X Genomics). | [24] |

| Total Cell Number | 100,000 - 150,000 cells | Ensures sufficient material for loading and recovery of target cells. | [24] |

| Viability | >90% | Reduces sequencing of background RNA from dead/dying cells. | [24] |

| Buffer | Mg2+/Ca2+-free PBS, 0.04% BSA | Prevents inhibition of the reverse transcription reaction. | [25] [24] |

Experimental Protocols & Workflows

Detailed Protocol: Preparing a Single-Cell Suspension for 10X Genomics

Principle: To isolate a suspension of live, single cells free of contaminants that inhibit downstream enzymatic reactions [25] [24].

Materials:

- Tissue sample or cell culture

- Appropriate dissociation reagents (e.g., collagenase, trypsin)

- EDTA-, Mg2+- and Ca2+-free 1x Phosphate-Buffered Saline (PBS)

- Bovine Serum Albumin (BSA)

- Cell strainer (e.g., 40 µm)

- Trypan Blue or other viability dye

- Automated cell counter or hemocytometer

Procedure:

- Dissociation: Dissociate tissue using a validated mechanical and/or enzymatic protocol (consult resources like the Worthington Tissue Dissociation Database).

- Quenching & Washing: Quench enzymatic activity with a complete medium. Centrifuge the cell suspension and wash the pellet with 1x PBS + 0.04% BSA.

- Filtration & Counting: Pass the cell suspension through a 40 µm cell strainer to remove aggregates. Take an aliquot to count cells and assess viability using Trypan Blue exclusion.

- Resuspension: Centrifuge and resuspend the cell pellet in PBS + 0.04% BSA at the target concentration of 1,000 - 1,600 cells/µL. The total cell count should be >100,000 cells.

- Storage & Transport: Keep the cell suspension on ice and process it as quickly as possible to minimize RNA degradation and transcriptome changes.
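
The counting and resuspension arithmetic in the procedure can be sketched as below. The helper names and the example counts are hypothetical; the thresholds are the ones quoted in this guide (>90% viability, >100,000 cells, 1,000 - 1,600 cells/µL).

```python
def viability_percent(live, dead):
    """Percent viable cells from a Trypan Blue count (live = unstained)."""
    total = live + dead
    if total == 0:
        raise ValueError("no cells counted")
    return 100.0 * live / total

def resuspension_volume_ul(total_live_cells, target_cells_per_ul):
    """Volume of buffer (µL) needed to reach the target concentration."""
    if target_cells_per_ul <= 0:
        raise ValueError("target concentration must be positive")
    return total_live_cells / target_cells_per_ul

def meets_guidelines(viability, total_live_cells, cells_per_ul):
    """Check a preparation against the thresholds quoted in this guide."""
    return (
        viability > 90.0
        and total_live_cells > 100_000
        and 1_000 <= cells_per_ul <= 1_600
    )

# Hypothetical count: 188 unstained and 12 stained cells in the counted aliquot
viability = viability_percent(live=188, dead=12)
volume = resuspension_volume_ul(total_live_cells=150_000, target_cells_per_ul=1_200)
ok = meets_guidelines(viability, 150_000, 1_200)
print(f"viability = {viability:.1f}%, resuspend in {volume:.0f} µL, within guidelines: {ok}")
```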

Visual Guide: Single-Cell RNA-Seq Wet-Lab to Dry-Lab Workflow

The following diagram outlines the key steps in a single-cell RNA-seq experiment, highlighting critical decision points that impact reproducibility.

Single-Cell RNA-Seq Workflow and Reproducibility Checkpoints

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for scRNA-seq Experiments

| Item | Function | Critical Consideration |

|---|---|---|

| Authenticated Cell Lines | Source of biological material; ensures genotype and phenotype are as expected. | Using misidentified or cross-contaminated cell lines invalidates results. Use low-passage, authenticated materials [20]. |

| Unique Molecular Identifiers (UMIs) | Short nucleotide barcodes that label individual mRNA transcripts during reverse transcription. | Allows for accurate transcript counting by correcting for PCR amplification bias, crucial for quantitative accuracy [22] [24]. |

| Cell Barcodes | Short nucleotide barcodes that label all mRNA from a single cell. | Enables pooling of thousands of cells in a single reaction while retaining the ability to deconvolute data back to individual cells [24]. |

| RNase Inhibitors | Protects the fragile RNA template from degradation during sample preparation. | Essential for working with low-input RNA to preserve transcriptome integrity [25]. |

| Mg2+/Ca2+-Free Buffer | Suspension medium for cells during sorting and loading. | Avoids carrying divalent cations (or chelators such as EDTA) into the reaction, where they can interfere with reverse transcription [25]. |

Core Concepts: Reproducibility and Metrology

What does "reproducibility" mean in the context of biochemical screening?

Reproducibility is a multi-faceted concept. The scientific community often distinguishes between these key types [20] [27]:

- Methods Reproducibility: The ability to repeat the exact same experimental procedures using the same materials, data, and methodologies as the original study. This relies on complete disclosure of protocols, reagents, and analytical code [27].

- Results Reproducibility (Replicability): Obtaining similar results through an independent study that closely matches the procedures of the original work [27].

- Inferential Reproducibility: Drawing qualitatively similar conclusions from a re-analysis of the original data or an independent dataset [27].

What is a "metrology mindset" and why is it critical for assay development?

A metrology mindset is the formal application of the science of measurement to your experimental workflow. It involves understanding that every measurement result is an estimate, and its quality is defined by a rigorous assessment of its measurement uncertainty (MU) [28]. This mindset shifts the goal from simply getting a result to understanding the confidence and reliability of that result, which is the foundation of reproducible science [29].

What is Measurement Uncertainty (MU), and how is it different from error?

Measurement Uncertainty (MU) is a quantitative parameter that characterizes the dispersion of values that can be reasonably attributed to the analyte being measured [28]. Unlike "error," which is the difference from a true value, uncertainty acknowledges that a true value is often unknowable and instead establishes an interval (e.g., Confidence Interval) around your result where the true value is expected to lie with a given probability [28].

Table: Key Differences Between Error and Uncertainty

| Feature | Error | Uncertainty |

|---|---|---|

| Definition | Difference between measured and true value | Estimate of the dispersion of values attributable to the measurand |

| Concept | Theoretical, often unknowable | Practical, can be quantified |

| Systematic Components | Correctable if known | Accounted for in the uncertainty budget even after correction |

| Final Output | A single value | A value with a defined interval (e.g., ± U) |

Troubleshooting Guides: Common Reproducibility Issues

FAQ: My assay lacks a robust signal window (e.g., low Z'-factor). What should I check?

A poor assay window, quantified by a low Z'-factor (a key metric for assay robustness where >0.5 is suitable for screening), often stems from instrumental or reagent issues [9].

Troubleshooting Steps:

- Verify Instrument Setup: For fluorescent assays like TR-FRET, the single most common reason for failure is an incorrect choice of emission filters. Confirm your instrument's filter settings against the assay's requirements [9].

- Check Reagent Integrity: Ensure reagents are stored correctly and have not expired. Test new lots of critical reagents to rule out degradation or manufacturing variability.

- Confirm Protocol Execution: Review pipetting accuracy, incubation times, and temperatures. Small deviations can significantly impact the signal-to-noise ratio. Automated liquid handlers can minimize human error in these steps [30].

FAQ: I cannot reproduce a published IC₅₀ value. Where did I go wrong?

Differences in calculated potency (IC₅₀ or EC₅₀) between labs are frequently traced to foundational preparation steps [9].

Troubleshooting Steps:

- Stock Solution Preparation: This is the primary reason for EC₅₀/IC₅₀ discrepancies. Pay close attention to how compound stock solutions are prepared (e.g., solvent, concentration accuracy) and stored [9].

- Reagent Authentication: Are you using the same biological materials? Using misidentified, cross-contaminated, or over-passaged cell lines is a major contributor to irreproducible results. Always use authenticated, low-passage reference materials and document their source [20].

- Methodological Detail: Published methods may omit critical details like buffer preparation order ("20 mM HEPES pH 7.2, 150 mM NaCl" is not the same as "20 mM HEPES, 150 mM NaCl, pH 7.2") or specific instrument models. Contact the corresponding author for clarification if needed [31].

FAQ: My experimental data is noisy and inconsistent from day to day. How can I stabilize it?

Day-to-day variability points to a lack of procedural control and environmental stability.

Troubleshooting Steps:

- Control for Cognitive Bias: Implement blinding and randomize sample processing orders to prevent subconscious influences like confirmation bias [20].

- Thoroughly Document Methods: Create and adhere to detailed Standard Operating Procedures (SOPs) that report key parameters such as blinding, instrumentation, number of replicates, statistical analysis methods, and criteria for data inclusion/exclusion [20].

- Robust Data Management: Complex datasets require proper tools for analysis, interpretation, and storage. A lack of these tools can lead to inconsistencies in how data is processed between runs [20].
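
One way to implement the blinding and randomization step is to shuffle the sample list with a recorded seed and hand the operator only coded labels. The sketch below is a minimal example under that assumption; the function name, code format, and sample names are hypothetical.

```python
import random

def blinded_run_order(sample_ids, seed):
    """Shuffle samples reproducibly and assign blinded codes in run order.

    Returns the coded run order (what the operator sees) and the
    unblinding key (kept by someone not running the assay).
    """
    rng = random.Random(seed)  # a recorded seed makes the order auditable
    shuffled = list(sample_ids)
    rng.shuffle(shuffled)
    key = {f"S{position:03d}": sample for position, sample in enumerate(shuffled, start=1)}
    return list(key.keys()), key

samples = ["ctrl_1", "ctrl_2", "ctrl_3", "drug_1", "drug_2", "drug_3"]
run_order, unblinding_key = blinded_run_order(samples, seed=20240101)
print(run_order)
```

Recording the seed in the SOP or lab notebook lets the same processing order be regenerated later if the run needs to be audited.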

Experimental Protocols for Enhancing Reproducibility

Protocol: Assessing Assay Robustness with Z'-Factor

The Z'-factor is a standard statistical measure for assessing the quality and robustness of high-throughput screening assays [9].

Methodology:

- Run Controls: Perform your assay in a microtiter plate, designating wells for a positive control (e.g., 100% inhibition) and a negative control (e.g., 0% inhibition). Include a sufficient number of replicates for each (e.g., n≥16).

- Calculate Means and Standard Deviations: For both the positive control (PC) and negative control (NC), calculate the mean (μ) and standard deviation (σ) of the signal.

- Apply the Z'-Factor Formula:

Z' = 1 - [ 3*(σ_pc + σ_nc) / |μ_pc - μ_nc| ]

- Interpret the Result:

- Z' > 0.5: An excellent assay suitable for screening.

- 0 < Z' ≤ 0.5: A marginal assay that may need optimization.

- Z' ≤ 0: Unsuitable for screening. At Z' = 0 the assay can give only a "yes/no" readout, and below 0 the signal ranges of the two controls overlap.

Table: Z'-Factor Interpretation Guide

| Z'-Factor Value | Assay Quality Assessment | Suitability for HTS |

|---|---|---|

| Z' > 0.5 | Excellent | Excellent |

| 0 < Z' ≤ 0.5 | Marginal / Doable | May require optimization |

| Z' < 0 | Unacceptable | No (overlap between controls) |
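
The calculation above can be sketched in a few lines. This example assumes the sample standard deviation (n - 1 denominator) and uses made-up control readings; the function names and the category labels returned by `classify` are illustrative and simply mirror the table.

```python
from statistics import mean, stdev

def z_prime(positive, negative):
    """Z' = 1 - 3*(sd_pc + sd_nc) / |mean_pc - mean_nc|."""
    window = abs(mean(positive) - mean(negative))
    if window == 0:
        raise ValueError("control means are identical; Z' is undefined")
    return 1 - 3 * (stdev(positive) + stdev(negative)) / window

def classify(z):
    """Map a Z' value onto the categories used in the interpretation guide."""
    if z > 0.5:
        return "excellent"
    if z > 0:
        return "marginal"
    return "unacceptable"

# Hypothetical raw signals, 16 wells per control
positive_control = [98, 102] * 8     # e.g., 100% inhibition (low signal)
negative_control = [980, 1020] * 8   # e.g., 0% inhibition (high signal)
z = z_prime(positive_control, negative_control)
print(f"Z' = {z:.3f} ({classify(z)})")
```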

Workflow: A Metrology-Based Approach to Assay Development

The following diagram outlines a systematic workflow for developing assays with a metrology mindset, focusing on identifying and controlling for sources of uncertainty.

The Scientist's Toolkit: Essential Research Reagent Solutions

Using high-quality, well-characterized reagents is non-negotiable for reproducible research.

Table: Key Research Reagents and Their Functions in Ensuring Reproducibility

| Item | Function & Importance in Reproducibility | Best Practice |

|---|---|---|

| Authenticated Cell Lines | Embodies the biological system under study. Misidentified or contaminated lines render all data invalid [20]. | Use repositories that provide STR DNA profiling and regular mycoplasma testing [20] [32]. |

| Validated Antibodies | Key reagent for detection in assays like ELISA or Western Blot. Non-specific binding causes false results [31]. | Use application-validated antibodies and report the clone/catalog number. |

| Reference Standards | Provides a known baseline to calibrate instruments and validate assay performance across time and locations [29]. | Use traceable, certified reference materials where available. |

| High-Purity Biochemicals | Components of assay buffers and solutions. Impurities can introduce unexpected inhibition or activation. | Source from reputable suppliers and document lot numbers. |

| Automated Liquid Handlers | Performs repetitive liquid dispensing tasks. Minimizes human error and variability in pipetting, a major source of noise [30]. | Implement for critical reagent addition; ensure regular calibration. |

Uncertainty Budget: A Top-Down Model for Biochemical Assays

Given the complexity of biological systems, a full "bottom-up" uncertainty calculation as described in the Guide to the Expression of Uncertainty in Measurement (GUM) is often impractical [28]. A "top-down" model that uses existing quality control data is recommended.

Methodology:

- Identify Major Sources: List the main factors contributing to variability in your result (e.g., pipetting volume, temperature fluctuation, operator skill, reagent lot).

- Quantify Contributions: Use data from internal quality control (IQC) and method validation studies to assign a standard uncertainty (u) to each major source. For example, the long-term standard deviation of your IQC material can be a key contributor.

- Combine Uncertainties: Calculate the combined standard uncertainty (u_c) using the root sum of squares method:

u_c = √(u₁² + u₂² + u₃² + ...)

- Calculate Expanded Uncertainty: Multiply the combined standard uncertainty by a coverage factor (k), typically k=2 for a 95% confidence interval, to get the expanded uncertainty (U):

U = k * u_c [28]

This process creates an "uncertainty budget" that tells you which factors are most responsible for variability in your measurements, allowing you to focus optimization efforts where they matter most.
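
A minimal sketch of such a budget is shown below. It assumes the listed components are independent and expressed in the same units (here, percent of the measured value); the component names and numbers are hypothetical.

```python
from math import sqrt

def uncertainty_budget(components, k=2.0):
    """Combine standard uncertainties by root sum of squares.

    Returns the combined standard uncertainty u_c, the expanded
    uncertainty U = k * u_c, and each component's share of the variance.
    """
    variance_total = sum(u ** 2 for u in components.values())
    u_c = sqrt(variance_total)
    shares = {name: u ** 2 / variance_total for name, u in components.items()}
    return u_c, k * u_c, shares

# Hypothetical standard uncertainties, all in % of the measured value
components = {"calibrator": 3.0, "reagent_lot": 4.0, "iqc_long_term": 12.0}
u_c, U, shares = uncertainty_budget(components)
print(f"u_c = {u_c:.1f}%, U (k=2) = {U:.1f}%")
for name, share in sorted(shares.items(), key=lambda item: -item[1]):
    print(f"  {name}: {100 * share:.0f}% of variance")
```

Because contributions add as squares, the largest component dominates the budget, which is why reducing a small contributor rarely changes U noticeably.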

Building a Robust Foundation: Assay Development and Optimization Strategies

Reproducibility is a fundamental requirement in biochemical screening and drug discovery. A lack of it wastes resources, erodes trust in scientific findings, and significantly hampers the development of new therapies [20]. The selection of an appropriate detection platform is a critical decision that directly impacts the reliability and reproducibility of your data. This technical support center provides troubleshooting guides and FAQs for three prevalent technologies: Fluorescence Polarization (FP), Time-Resolved Förster Resonance Energy Transfer (TR-FRET), and Luminescence. The following sections are designed to help you identify, understand, and resolve common issues, ensuring your assays are robust and your results are reproducible.

Detection Platform Fundamentals and Selection Guide

Understanding the core principles of each technology is the first step in selecting the right platform and effectively troubleshooting problems. The table below summarizes the fundamental mechanisms and common applications of FP, TR-FRET, and Luminescence assays.

Table 1: Core Principles of FP, TR-FRET, and Luminescence Assays

| Platform | Detection Principle | Typical Assay Format | Key Advantages |

|---|---|---|---|

| Fluorescence Polarization (FP) | Measures the change in molecular rotation of a fluorescent tracer upon binding [33]. | Binding assays (protein-ligand, protein-DNA), enzymatic assays. | Homogeneous ("mix-and-read"), ratiometric, no separation steps required, real-time kinetics [33]. |

| TR-FRET | Measures energy transfer from a long-lifetime donor (e.g., Tb) to an acceptor when in close proximity [34]. | Protein-protein interactions, kinase activity, target engagement. | Reduced background due to time-gated detection, ratiometric, suitable for complex biological samples [34]. |

| Luminescence (e.g., ADP-Glo) | Measures light output from an enzymatic reaction (e.g., luciferase) proportional to analyte like ADP [35]. | Enzyme activity (kinases, ATPases), cell viability, reporter gene assays. | High sensitivity, large dynamic range, minimal background from compound autofluorescence. |

The following diagram illustrates the core signaling mechanism for each detection technology.

Troubleshooting Common Assay Issues: FAQs

Fluorescence Polarization (FP) Assays

Q: My FP assay has a very small assay window (low signal-to-noise ratio). What could be the cause? A: A small assay window often stems from an inappropriate tracer or issues with the detection system.

- Tracer Size: Ensure the molecular volume difference between the free and bound tracer is large enough. FP is most sensitive for interactions between a small molecule (<1.5 kDa) and a large partner (>10 kDa) [33].

- Fluorophore Choice: Traditional green dyes (e.g., FITC) can be affected by compound autofluorescence. Switching to red-shifted dyes (e.g., Cy3B, Cy5, BODIPY TMR) can minimize this background interference [33].

- Instrumentation: Verify that your microplate reader is properly configured for FP. Monochromators are not recommended due to low light transmission; optical filters are preferred. Also, ensure the instrument uses a xenon lamp for UV range excitation [33].

Q: I am observing high background or inconsistent polarization values. How can I resolve this? A: This can be caused by compound interference or light scattering.

- Compound Interference: Test compounds can be fluorescent themselves (autofluorescence) or can quench the tracer's signal. It is good practice to measure the fluorescence background of wells containing only the compound and buffer, and subtract this value from the final calculation [33].

- Light Scattering: Precipitated compounds or particles in the solution can scatter light, causing artifacts. Centrifuging assay plates before reading or using red-shifted dyes can help mitigate this issue [33].
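
The background correction described above can be sketched with the commonly used polarization definition, mP = 1000 * (I_par - G * I_perp) / (I_par + G * I_perp). This formula and the G-factor convention are not taken from the cited source, so check them against your reader's documentation; the function name and intensities are hypothetical.

```python
def polarization_mp(parallel, perpendicular,
                    bg_parallel=0.0, bg_perpendicular=0.0, g_factor=1.0):
    """Polarization in mP after subtracting compound-only background.

    Background intensities come from wells containing only compound and
    buffer, measured in the same two channels.
    """
    i_par = parallel - bg_parallel
    i_perp = g_factor * (perpendicular - bg_perpendicular)
    total = i_par + i_perp
    if total <= 0:
        raise ValueError("background-corrected intensity must be positive")
    return 1000.0 * (i_par - i_perp) / total

# Hypothetical well: an autofluorescent compound adds 2000 counts to each channel
raw = polarization_mp(12000, 8000)
corrected = polarization_mp(12000, 8000, bg_parallel=2000, bg_perpendicular=2000)
print(f"uncorrected = {raw:.0f} mP, corrected = {corrected:.0f} mP")
```

In this example the unpolarized background lowers the apparent polarization, which could be misread as tracer displacement if left uncorrected.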

TR-FRET Assays

Q: My TR-FRET assay has no assay window. What is the most common reason? A: The single most common reason for a failed TR-FRET assay is the use of incorrect emission filters on your microplate reader [9]. Unlike other fluorescence assays, TR-FRET requires specific filter sets to accurately capture the donor and acceptor signals while minimizing cross-talk and background. Always consult your instrument's compatibility guide and use the recommended filters for your specific TR-FRET reagent.

Q: The TR-FRET signal is weak, even with the correct filters. What should I check? A: A weak signal can be due to several factors related to reagent quality and assay conditions.

- Reagent Quality: Antibodies and other protein reagents can exhibit lot-to-lot variance (LTLV). Impurities like aggregates or fragments can lead to high background and reduced specific signal [36]. Always use authenticated, high-quality reagents and test new lots before full implementation.

- Donor-Acceptor Pair: Ensure spectral overlap is optimal. The donor's emission must efficiently excite the acceptor. For example, a Terbium (Tb) donor has characteristic emissions that should overlap with the acceptor's absorption spectrum [34].

- Assay Components: Confirm that all components, including tags, antibodies, and buffers, are compatible and at optimal concentrations.

Luminescence Assays

Q: My luminescence-based kinase assay (e.g., ADP-Glo) shows high variability and false positives/negatives. Why? A: Luminescence assays can be susceptible to compounds that interfere with the luciferase enzyme itself.

- Luciferase Inhibitors: Some compounds directly inhibit the firefly luciferase used in the detection step, leading to a false decrease in signal that can be mistaken for inhibition [35]. This was demonstrated in a screen where Gentian violet was a false positive in a scintillation assay but was correctly identified as a luminescence inhibitor [35].

- Troubleshooting Step: Implement a counterscreen or a confirmatory assay using a different detection technology (e.g., a radiometric method like SPA) to triage hits and eliminate these format-specific false positives [35].
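
A triage step of this kind can be sketched as simple bookkeeping over two result sets. The cutoffs, compound names, and the decision to flag untested compounds are all illustrative assumptions, not values from the cited study.

```python
def triage_hits(primary_inhibition, counterscreen_inhibition,
                hit_cutoff=50.0, interference_cutoff=30.0):
    """Split primary hits into confirmed hits and suspected luciferase interferers.

    Both inputs map compound ID -> % inhibition. Hits with no counterscreen
    result are flagged rather than confirmed.
    """
    confirmed, flagged = [], []
    for compound, inhibition in primary_inhibition.items():
        if inhibition < hit_cutoff:
            continue  # not a primary hit
        luciferase_effect = counterscreen_inhibition.get(compound)
        if luciferase_effect is None or luciferase_effect >= interference_cutoff:
            flagged.append(compound)
        else:
            confirmed.append(compound)
    return confirmed, flagged

# Hypothetical % inhibition values
primary = {"cmpd_1": 85.0, "cmpd_2": 62.0, "cmpd_3": 12.0, "cmpd_4": 71.0}
counterscreen = {"cmpd_1": 5.0, "cmpd_2": 78.0, "cmpd_3": 2.0}
confirmed, flagged = triage_hits(primary, counterscreen)
print("confirmed:", confirmed)
print("flagged:", flagged)
```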

Q: The luminescence signal is low across all wells, including controls. A: This typically indicates a problem with the assay reagents or protocol.

- Reagent Stability: Luciferase reagents can be unstable. Ensure reagents are fresh, have been stored properly, and are protected from light. Thaw and prepare them according to the manufacturer's protocol.

- ATP Contamination: In assays measuring ADP, trace amounts of ATP in buffers or from enzyme preparations can cause high background. Use ultrapure water and high-quality reagents.

- Protocol Timing: Luminescence signals can decay over time. Ensure a consistent and appropriate incubation time between reagent addition and reading on the plate reader.

Essential Research Reagent Solutions

The quality and consistency of core reagents are paramount for assay reproducibility. The following table outlines key materials and their functions, with a focus on mitigating lot-to-lot variance.

Table 2: Key Research Reagents and Their Roles in Assay Reproducibility

| Reagent Category | Specific Examples | Critical Function | Considerations for Reproducibility |

|---|---|---|---|

| Fluorescent Tracers | T2-BODIPY-FL, T2-BODIPY-589 [34] | Binds to the target of interest; generates the primary signal in FP/TR-FRET. | Purity, labeling efficiency, and spectral properties must be consistent. Cross-platform tracers can enhance data comparability [34]. |

| Antibodies | Anti-6xHis-Tb (for TR-FRET) [34] | Binds to tagged proteins; serves as a donor in TR-FRET. | Aggregates or fragments can cause high background [36]. Use SEC-HPLC and CE-SDS to monitor purity and stability across lots [36]. |

| Enzymes | Luciferase, Horseradish Peroxidase (HRP) [36] | Generates or modulates the detectable signal in luminescence/colorimetric assays. | Quality is measured in activity units, which can vary between batches. Source enzymes from reputable suppliers with consistent QC. |

| Antigens/Proteins | Recombinant kinases, calibrator peptides [36] | The target or standard for the assay. | Purity, activity, and stability are critical. SDS-PAGE and SEC-HPLC are key for assessing quality. Synthetic peptides should be checked for truncated by-products [36]. |

| Cell Lines | Engineered reporter lines, primary cells | Provides the cellular context for the assay. | Authenticate cell lines regularly (e.g., via STR DNA profiling) to avoid misidentification and cross-contamination, a major source of irreproducible data [20] [32]. |

Experimental Protocol: A Cross-Platform Tracer Evaluation

This protocol outlines how to evaluate a fluorescent tracer for cross-platform utility in both TR-FRET and NanoBRET (a luminescence-based technique), a strategy that can enhance data consistency in drug discovery campaigns [34].

Objective: To determine the performance of a fluorescent tracer (e.g., T2-BODIPY-FL or T2-BODIPY-589) in both TR-FRET and cellular NanoBRET target engagement assays.

Materials:

- Purified, tagged protein of interest (e.g., His-RIPK1)

- Tracer compound (e.g., T2-BODIPY-FL, T2-BODIPY-589)

- Anti-tag TR-FRET antibody (e.g., Anti-6xHis-Tb)

- TR-FRET assay buffer

- Cell line expressing the NanoLuc-fused protein of interest

- NanoBRET substrate (e.g., furimazine)

- Opti-MEM or other suitable assay medium

- White, solid-bottom microplates (e.g., 384-well)

- Multi-mode microplate reader capable of TR-FRET and BRET detection

Method: Part A: TR-FRET Assay Optimization

- Prepare Reagents: Dilute the His-tagged protein, anti-6xHis-Tb antibody, and tracer in TR-FRET assay buffer to their working concentrations.

- Titration: In a 384-well plate, serially dilute the tracer across a range of concentrations (e.g., 0.1 nM to 10 µM).

- Reaction Assembly: For each well, mix the protein, antibody, and diluted tracer. Include controls without protein (for background) and without tracer (for donor-only signal).

- Incubation: Incubate the plate in the dark at room temperature for 1-2 hours to reach equilibrium.

- Signal Detection: Read the plate on a TR-FRET-compatible microplate reader. Test different filter pairs (e.g., 520/490 nm, 640/490 nm) to find the optimal signal-to-background ratio for your tracer [34].

- Data Analysis: Calculate the TR-FRET ratio (Acceptor Emission / Donor Emission). Plot the ratio against the tracer concentration to determine the equilibrium dissociation constant (Kd) and the Z'-factor to assess assay robustness [34].
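
The ratio calculation and Kd estimate in the data analysis step could look roughly like the sketch below. It assumes a one-site binding model without ligand depletion, background-subtracted ratios, and a coarse grid search in place of a proper nonlinear fit; the function names and synthetic data are hypothetical.

```python
from math import log10

def tr_fret_ratio(acceptor, donor):
    """TR-FRET ratio = acceptor emission / donor emission."""
    return acceptor / donor

def fit_one_site(concentrations, signals, n_grid=400):
    """Estimate Kd and Bmax for signal = Bmax * c / (Kd + c).

    Scans Kd on a log grid spanning the tested concentrations; for each
    candidate Kd, Bmax follows from linear least squares.
    """
    lo, hi = log10(min(concentrations)), log10(max(concentrations))
    best = None
    for step in range(n_grid):
        kd = 10 ** (lo + (hi - lo) * step / (n_grid - 1))
        occupancy = [c / (kd + c) for c in concentrations]
        bmax = (sum(s * f for s, f in zip(signals, occupancy))
                / sum(f * f for f in occupancy))
        sse = sum((s - bmax * f) ** 2 for s, f in zip(signals, occupancy))
        if best is None or sse < best[0]:
            best = (sse, kd, bmax)
    return best[1], best[2]

# Synthetic tracer titration (nM), generated with Kd = 50 nM and Bmax = 2.0
tracer_nm = [0.1, 0.3, 1, 3, 10, 30, 100, 300, 1000, 3000, 10000]
specific_ratio = [2.0 * c / (50.0 + c) for c in tracer_nm]
kd_est, bmax_est = fit_one_site(tracer_nm, specific_ratio)
example_ratio = tr_fret_ratio(acceptor=5200, donor=26000)
print(f"Kd ≈ {kd_est:.1f} nM, Bmax ≈ {bmax_est:.2f}, example ratio = {example_ratio:.2f}")
```

For real data, a dedicated curve-fitting routine with confidence intervals is preferable; the grid search here only illustrates the shape of the calculation.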

Part B: NanoBRET Assay Evaluation

- Cell Preparation: Seed the NanoLuc-fused protein-expressing cells into a 384-well plate and culture until they reach the desired confluence.

- Tracer Treatment: Dilute the tracer in assay medium and add it to the cells. Include a control with a high concentration of an unlabeled inhibitor to define non-specific binding.

- Substrate Addition: Add the NanoBRET substrate (furimazine) according to the manufacturer's instructions.

- Signal Detection: Incubate briefly and read the plate on a luminescence-compatible reader capable of dual-emission detection (e.g., 460 nm and 610 nm LP filter). A monochromator can be used to optimize detection parameters [34].

- Data Analysis: Calculate the BRET ratio (Acceptor Emission / Donor Emission). A specific signal over background indicates successful cellular target engagement. The Z'-factor should be calculated to confirm assay quality [34].

The workflow for this cross-platform evaluation is summarized below.

Universal assay platforms promise a streamlined approach to analyzing multiple biological targets simultaneously, offering significant advantages in throughput, cost, and sample conservation. However, realizing this potential requires careful navigation of platform-specific challenges to ensure reliable and reproducible results. This technical support center is designed within the broader context of troubleshooting reproducibility issues in biochemical screening assays. It provides researchers, scientists, and drug development professionals with targeted FAQs and evidence-based guides to diagnose, understand, and resolve common experimental problems, transforming complex data into credible biological insights.

Understanding Reproducibility in Universal Assays

Reproducibility—the precision of measurements under varying conditions like different locations, operators, or measuring systems—is a fundamental challenge in assay development [37]. From a measurement science (metrology) perspective, confidence in research results is built not just on reproducibility, but on a systematic understanding of all sources of measurement uncertainty [37].

Universal platforms consolidate multiple experimental steps, but this integration can also combine and amplify sources of variability. Key concepts include:

- Reproducibility vs. Repeatability: Repeatability refers to precision under identical, short-term conditions, while reproducibility assesses precision across expected variations in the real world [37].

- Sources of Uncertainty: These can be diverse, including incomplete definition of the measurand, non-representative sampling, inadequate knowledge of environmental effects, and personal bias [37].

Frequently Asked Questions & Troubleshooting Guides

1. We observed high inter-assay variability in our multiplex immunoassay. What could be the cause?

High inter-assay variability, or poor reproducibility, often points to systematic issues in the assay platform or its execution.

- Possible Causes and Solutions:

- Plate-to-Plate Variability: Irregularities in the manufacturing process, such as inconsistent spotting of capture antibodies, can cause significant variability between lots or even plates from the same lot [38].

- Action: Request lot-validation data from the supplier and consider implementing a pre-rinse step with a PBS/Tween-20 solution to mitigate spotting irregularities [38].

- Insufficient Washing: Inadequate washing can lead to high background signal and poor precision [15].

- Action: Increase the number of wash steps, add a 30-second soak step between washes, and ensure automated plate washer ports are clean and unobstructed [15].

- Sample Matrix Effects: Components in patient samples (e.g., lipids, debris) can interfere with detection [39].

- Action: Clarify samples by centrifugation (5-10 minutes) and ensure at least a 1:1 ratio of sample to assay diluent. For cell lysates, dilute to reduce detergent concentration to ≤0.01% [39].

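For the cell-lysate action above, the minimum dilution follows from simple arithmetic. The sketch below assumes the detergent percentage of the lysis buffer is known and that dilution in detergent-free assay diluent is the only thing lowering it; the function name and the 1% example are illustrative, not taken from any kit manual.

```python
def min_dilution_factor(detergent_percent, max_percent=0.01):
    """Smallest fold-dilution that brings detergent down to max_percent or less."""
    if detergent_percent < 0 or max_percent <= 0:
        raise ValueError("percentages must be non-negative and the limit positive")
    # A factor of 1.0 means the sample is already at or below the limit.
    return max(1.0, detergent_percent / max_percent)


# Hypothetical lysate prepared in buffer containing 1% detergent.
print(f"Dilute at least {min_dilution_factor(1.0):.0f}-fold")
```

The result is a lower bound only; confirm that the analyte remains within the standard curve range after dilution.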

2. Our high-throughput screen yielded an unusually high number of active compounds. How can we identify false positives?

A high hit rate often signals interference from the compound library itself rather than true biological activity.

Common Types of Compound Interference [40]:

- Compound Aggregation: Molecules form colloids that non-specifically sequester proteins.

- Compound Fluorescence: Chemicals fluoresce at wavelengths used for detection.

- Luciferase Inhibition: Compounds directly inhibit the firefly luciferase reporter enzyme.

- Redox Reactivity: Compounds interfere with the assay's redox potential.

Strategies for Mitigation:

- Include Detergent: Adding 0.01–0.1% non-ionic detergent (e.g., Triton X-100) to the assay buffer can disrupt compound aggregates [40].

- Employ Orthogonal Assays: Confirm hits using a secondary assay with a different detection technology or reporter (e.g., switching from luminescence to fluorescence) [40].

- Utilize Counter-Screens: Run a parallel assay designed specifically to identify common interferers, such as a standalone luciferase inhibition assay [40].

3. We are getting no signal in our ELISA when one is expected. What should we check?

A lack of signal usually points to a failure somewhere in the chain of reagents, plate coating, protocol steps, or instrument settings.

- Troubleshooting Steps [15]:

- Verify Reagent Integrity: Ensure the standard has been handled correctly and has not expired. Make new buffers to rule out contamination.

- Check Protocol Adherence: Confirm that all reagents were added in the correct order and that the detection antibody and enzyme conjugate (e.g., streptavidin-HRP) were used at the recommended concentrations.

- Confirm Plate Coating: Use plates designed for ELISA, not tissue culture plates. Ensure the capture antibody was diluted in an appropriate buffer (e.g., PBS) without extraneous protein during the coating step.

- Review Instrument Settings: Check that the plate reader is using the correct wavelengths and is functioning properly.

Troubleshooting Guide at a Glance

The table below summarizes common issues, their potential origins, and recommended actions.

| Problem | Potential Cause | Recommended Action |

|---|---|---|

| High Background [15] | Insufficient washing | Increase wash steps; add a soak step; check plate washer. |

| Poor Duplicates [15] | Uneven coating or washing | Use fresh plate sealers; ensure consistent pipetting and reagent addition. |

| Poor Assay-to-Assay Reproducibility [38] [15] | Plate-to-plate variability; protocol drift | Adhere strictly to protocol; use internal controls; validate new reagent lots. |

| Low or No Signal [15] | Degraded reagents; incorrect procedure | Make fresh buffers/standards; check calculations; review protocol steps. |

| Apparent High Activity in HTS [40] | Compound interference (e.g., aggregation) | Add detergent to buffer; perform orthogonal/counter-screens. |

Experimental Protocols for Validation & Troubleshooting

Protocol 1: Validating a Multiplex Immunoassay Platform

This protocol is based on a systematic evaluation of the Searchlight platform and can be adapted for validating any multiplex assay [38].

- Objective: To assess the accuracy and the intra- and inter-assay reproducibility of a multiplex platform prior to use in a large clinical study.

- Materials:

- Multiplex assay kit (e.g., Human 9-plex cytokine array).

- Corresponding singleplex assay kits (e.g., from R&D Systems) for comparison.

- Recombinant protein standards.

- Pooled normal human plasma and relevant patient samples (e.g., EDTA plasma from critically ill patients).

- Plate washer and appropriate imaging or detection instrument.

- Methodology:

- Spike and Recovery: Spike recombinant proteins into normal plasma at concentrations within the standard curve range. Assay in triplicate on both the multiplex and singleplex platforms. Calculate percent recovery.

- Intra-Assay Variability: Measure identical patient samples in replicate on the same plate on the same day. Calculate the coefficient of variation (CV%).

- Inter-Assay Variability: Measure identical patient samples on different days using different plates from the same lot. Calculate the CV% for this comparison.

- Platform Comparison: Assay identical patient samples on both the multiplex and singleplex platforms to compare absolute values and correlation.

- Data Analysis: A well-validated platform should demonstrate efficient spike recovery (>70-80%) and low intra- and inter-assay CVs (e.g., <15%). High inter-assay CVs suggest significant plate-to-plate variability [38].
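The calculations in this protocol can be sketched as follows. This is an illustrative example under stated assumptions: the readings are invented, the function names are hypothetical, percent recovery is computed as (spiked reading - unspiked reading) / amount spiked, and inter-assay CV is taken as the CV of per-plate means. The source does not prescribe these exact formulas.

```python
from statistics import mean, stdev


def cv_percent(values):
    """Coefficient of variation (sample SD / mean), expressed as a percentage."""
    m = mean(values)
    if m == 0:
        raise ValueError("mean is zero; CV is undefined")
    return 100 * stdev(values) / m


def percent_recovery(spiked_reading, unspiked_reading, spike_added):
    """Share of the spiked amount that was measured, net of endogenous analyte."""
    return 100 * (spiked_reading - unspiked_reading) / spike_added


def inter_assay_cv(plates):
    """CV of per-plate means for one sample run on several plates or days."""
    return cv_percent([mean(replicates) for replicates in plates])


# Hypothetical readings (pg/mL): one patient sample, triplicates on two plates.
plates = [[100, 110, 90], [120, 130, 110]]
intra = [cv_percent(p) for p in plates]
inter = inter_assay_cv(plates)
recovery = percent_recovery(spiked_reading=180, unspiked_reading=100, spike_added=100)

print("intra-assay CV% per plate:", [round(v, 1) for v in intra])
print("inter-assay CV%:", round(inter, 1))
print("spike recovery %:", round(recovery, 1))
```

Comparing each value against the acceptance limits chosen for the study (for example, CV below 15%) then gives a simple pass/fail record per analyte.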

Protocol 2: Confirming Target Engagement in HTS

This protocol outlines steps to triage hits from a high-throughput screen to eliminate false positives [40].

- Objective: To distinguish genuine bioactive compounds from those causing assay interference.

- Materials:

- Hit compounds from primary HTS.

- Reagents for orthogonal and counter-screen assays.

- Non-ionic detergent (e.g., Triton X-100).

- Methodology:

- Retest in Primary Assay: Confirm initial activity.

- Test for Detergent Sensitivity: Re-test hits in the primary assay buffer supplemented with 0.01% Triton X-100. A significant loss of activity suggests the hit may be an aggregate [40].

- Orthogonal Assay: Test all confirmed hits in an assay that measures the same biology but uses a fundamentally different detection method (e.g., a cell-based functional assay vs. a biochemical binding assay).

- Counter-Screen: Test hits in an assay designed to detect the specific interference mechanism suspected (e.g., a luciferase inhibitor counter-screen for a luminescence-based primary assay).

- Data Analysis: Genuine hits will typically maintain activity in the orthogonal assay and show no activity in specific counter-screens. Compounds whose activity is abolished by detergent or that are active in counter-screens are likely false positives.
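The triage logic described above can be expressed as a small decision function. This is a sketch only: the `HitResult` record, its field names, and the category labels are hypothetical, and the order of checks simply mirrors the order of the methodology steps.

```python
from dataclasses import dataclass


@dataclass
class HitResult:
    compound_id: str
    retest_active: bool          # active again in the primary assay
    active_with_detergent: bool  # still active with 0.01% Triton X-100
    orthogonal_active: bool      # active with a different detection method
    counter_screen_active: bool  # active in the interference counter-screen


def triage(hit):
    """Classify a primary-screen hit following the four methodology steps."""
    if not hit.retest_active:
        return "not confirmed on retest"
    if not hit.active_with_detergent:
        return "likely false positive: possible aggregator"
    if hit.counter_screen_active:
        return "likely false positive: assay interference"
    if not hit.orthogonal_active:
        return "unconfirmed: inactive in orthogonal assay"
    return "candidate genuine hit"


# Hypothetical compounds for illustration.
hits = [
    HitResult("CMPD-001", True, True, True, False),
    HitResult("CMPD-002", True, False, True, False),
    HitResult("CMPD-003", True, True, True, True),
]
for hit in hits:
    print(hit.compound_id, "->", triage(hit))
```

In practice each boolean would come from an activity threshold applied to dose-response data, so borderline compounds may warrant manual review rather than automatic rejection.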

The Scientist's Toolkit: Essential Research Reagents

The table below lists key materials and their functions for ensuring robust and reproducible assay performance.

| Item | Function | Example & Notes |

|---|---|---|

| Control Probes & Slides [41] | Verifies sample RNA quality and assay performance. | RNAscope control slides (e.g., Human HeLa Cell Pellet); PPIB/UBC (positive), dapB (negative). |

| Universal Assay Buffer [39] | Provides a consistent matrix for diluting samples and standards. | Thermo Fisher Universal Assay Buffer (Cat. No. EPX-11110-000). Reduces matrix effects. |

| Non-Ionic Detergent [40] | Disrupts compound aggregates in HTS, reducing false positives. | Triton X-100, used at 0.01-0.1% in assay buffer. |

| Internal Controls [15] [42] | Monitors assay performance and reproducibility across runs. | Technopath Multichem IA Plus QC materials; in-house pooled controls with known analyte levels. |

| Plate Sealers [15] | Prevents evaporation and contamination during incubations; reusing a sealer can itself introduce contamination. | Use a fresh, non-reusable sealer for each incubation step. |

| ELISA Plates [15] | Optimized surface for antibody binding. | Use plates designed for ELISA, not tissue culture plates, for efficient capture antibody binding. |

Pathways & Workflows

Troubleshooting Pathway for HTS Hit Validation

This workflow provides a logical sequence for confirming that active compounds from a screen are genuine hits.

Multiplex Immunoassay Validation Workflow

A systematic approach to validating a new multiplex platform before committing precious clinical samples.

Reproducibility forms the cornerstone of scientific advancement, yet biomedical research faces a significant challenge, often termed a "reproducibility crisis." Evidence from meta-studies suggests that only 10% to 40% of published research is reproducible [27]. A 2016 survey of 1,576 researchers revealed that over 70% had tried and failed to reproduce another scientist's experiments, and more than 50% could not replicate their own findings [21] [14] [20]. This widespread issue erodes trust, wastes resources (an estimated $28 billion annually in the U.S. alone on non-reproducible preclinical research), and slows scientific progress [21] [14] [27].

A critical, often-overlooked factor contributing to this problem is the inadequate qualification and stability testing of research reagents. Variability in reagent performance, driven by improper storage, handling, or a simple lack of understanding of their stability profile, introduces a hidden variable that can invalidate experimental results. This technical support center is designed to provide researchers, scientists, and drug development professionals with targeted troubleshooting guides and FAQs, framed within a broader thesis on troubleshooting reproducibility issues. Our focus is on establishing systematic protocols for reagent qualification, stability testing, and storage optimization to ensure data integrity and experimental robustness.

Understanding Reproducibility: Definitions and Core Concepts

To effectively troubleshoot, it is essential to define key terms often used interchangeably. Based on guidelines from the Association for Computing Machinery and other scholarly efforts, we adopt the following definitions [27]:

- Repeatability: Obtaining consistent measurement results with stated precision by the same team using the same measurement procedure, system, and operating conditions in the same location on multiple trials.

- Replicability: A different group of researchers obtains consistent results with stated precision using the same measurement procedure and system, under the same operating conditions, in the same or a different location.

- Reproducibility: An independent group obtains consistent results using a different measuring system, in a different location on multiple trials.

Furthermore, Goodman et al. (2016) refine the concept of research reproducibility into three dimensions [27]:

- Methods Reproducibility: The ability to obtain sufficient detail about study procedures and data to repeat the exact same workflows.

- Results Reproducibility: The ability to obtain consistent results through an independent study closely matching the procedures of the original one.

- Inferential Reproducibility: The ability to draw qualitatively similar conclusions from an independent study or a re-analysis of the original research.

Systematic reagent qualification directly addresses the first two dimensions, ensuring that the foundational components of an experiment are consistent, reliable, and fully documented.

Troubleshooting Guides and FAQs

FAQ: Common Reagent and Assay Challenges

Q1: Our laboratory frequently obtains different EC50/IC50 values for the same compound in the same cell-based assay. What are the most likely sources of this variability?

- A: Differences in stock solution preparation are a primary culprit [9]. Ensure consistent solvent use, accurate concentration verification, and proper storage conditions for stock solutions. Furthermore, in cell-based assays, consider whether the compound is being pumped out of the cells or is targeting an inactive form of the protein (e.g., a kinase) when the assay requires the active form [9].

Q2: Why does our TR-FRET assay show no assay window, or why is the signal weaker than expected?